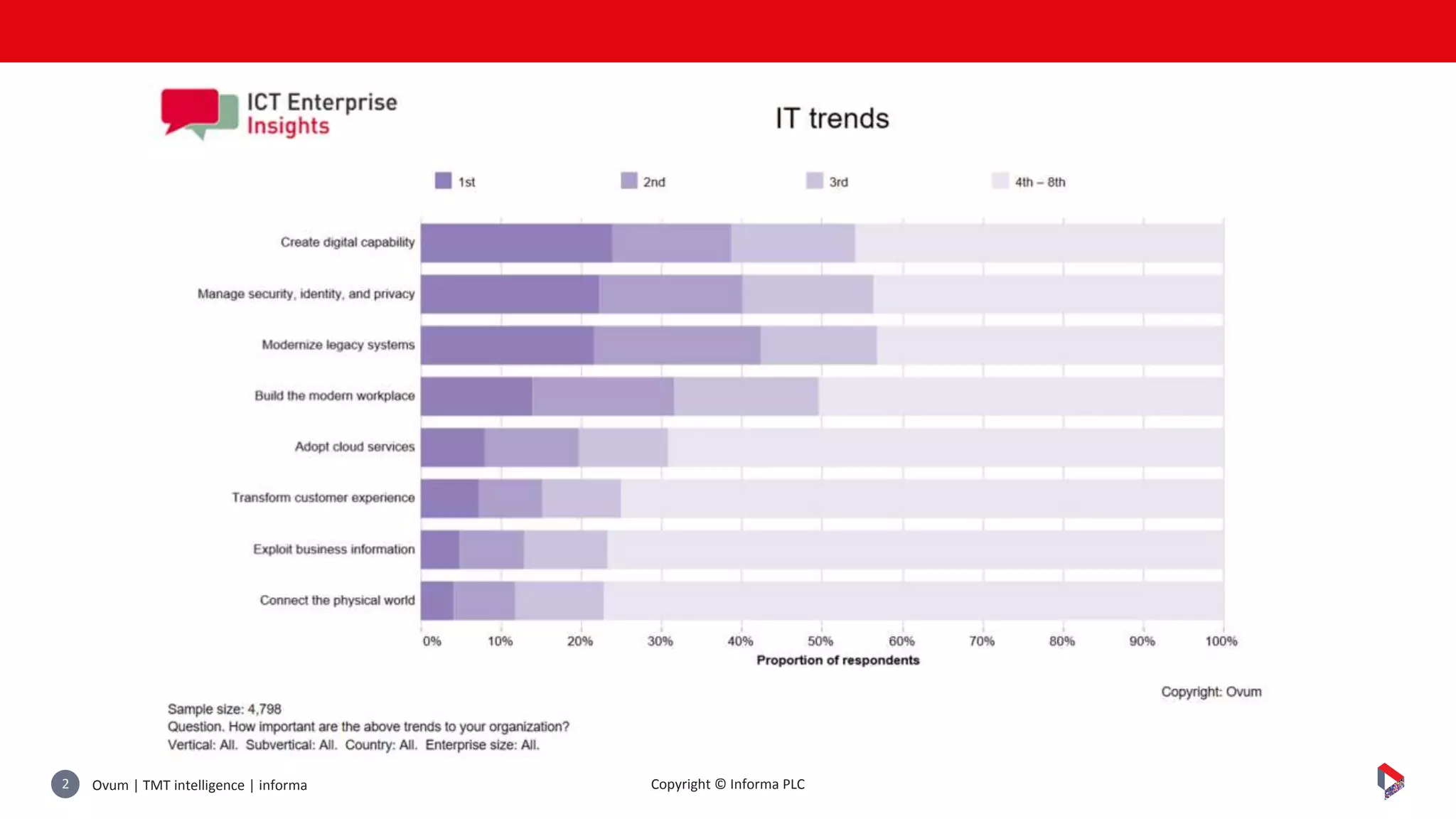

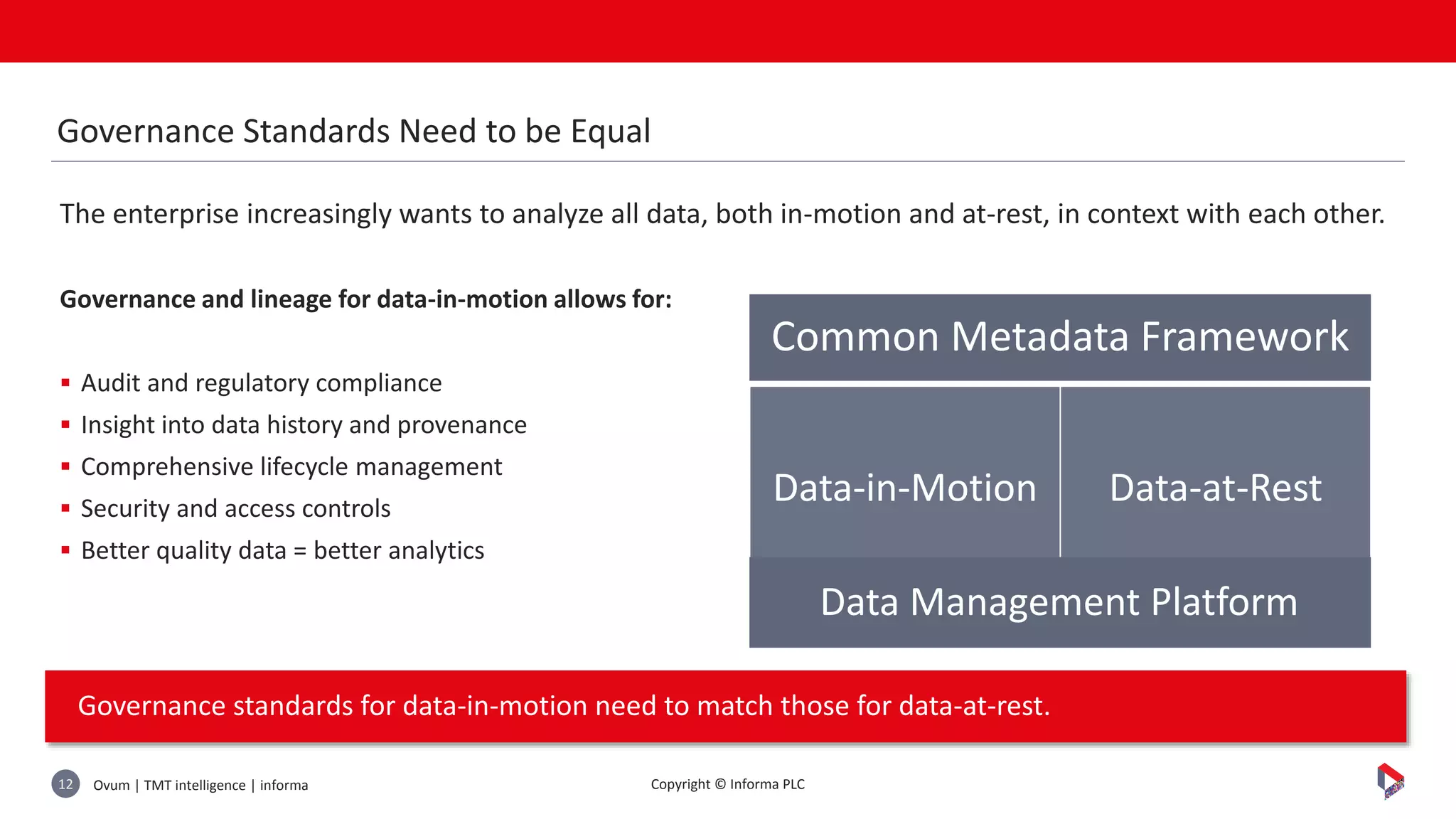

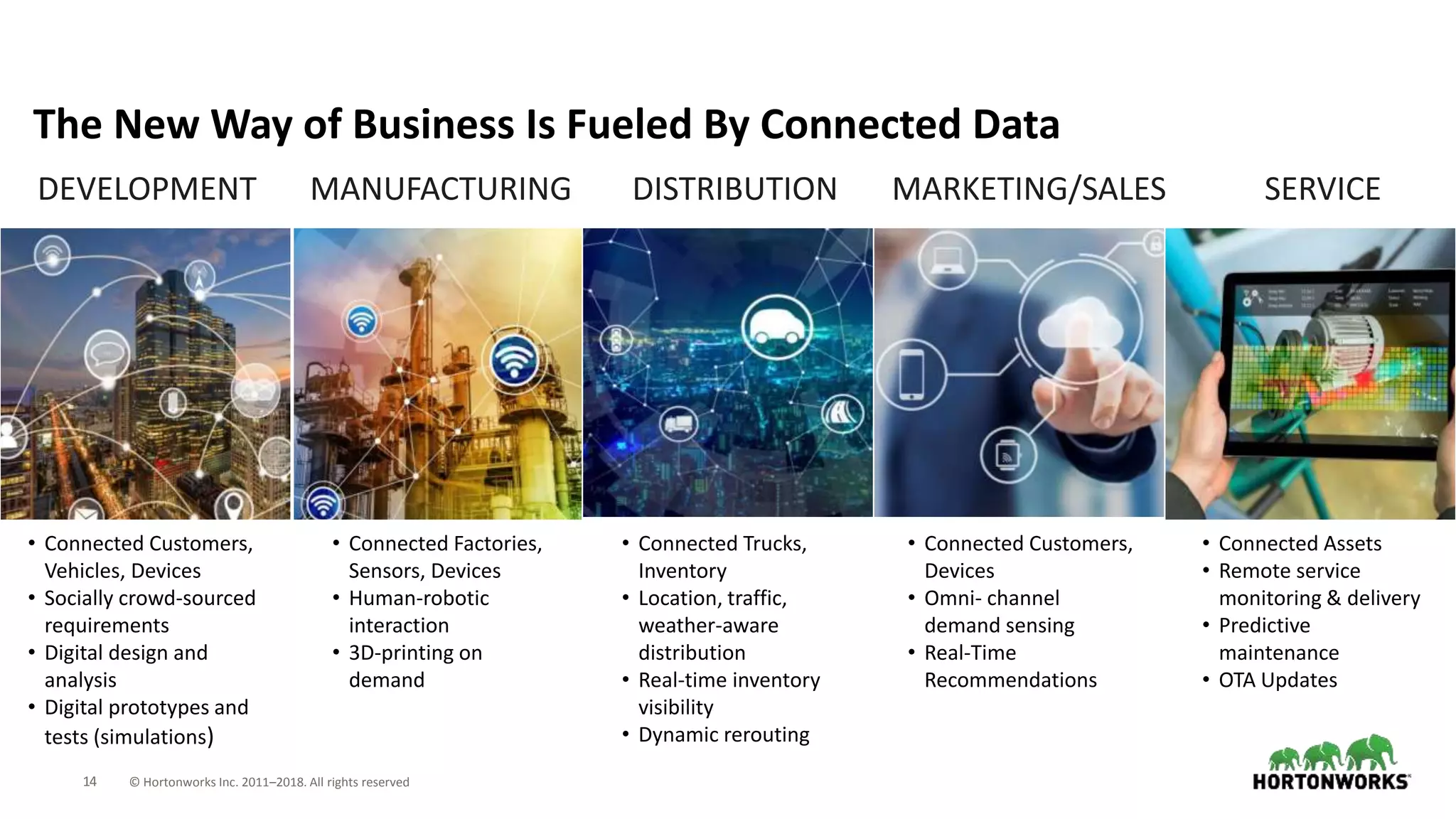

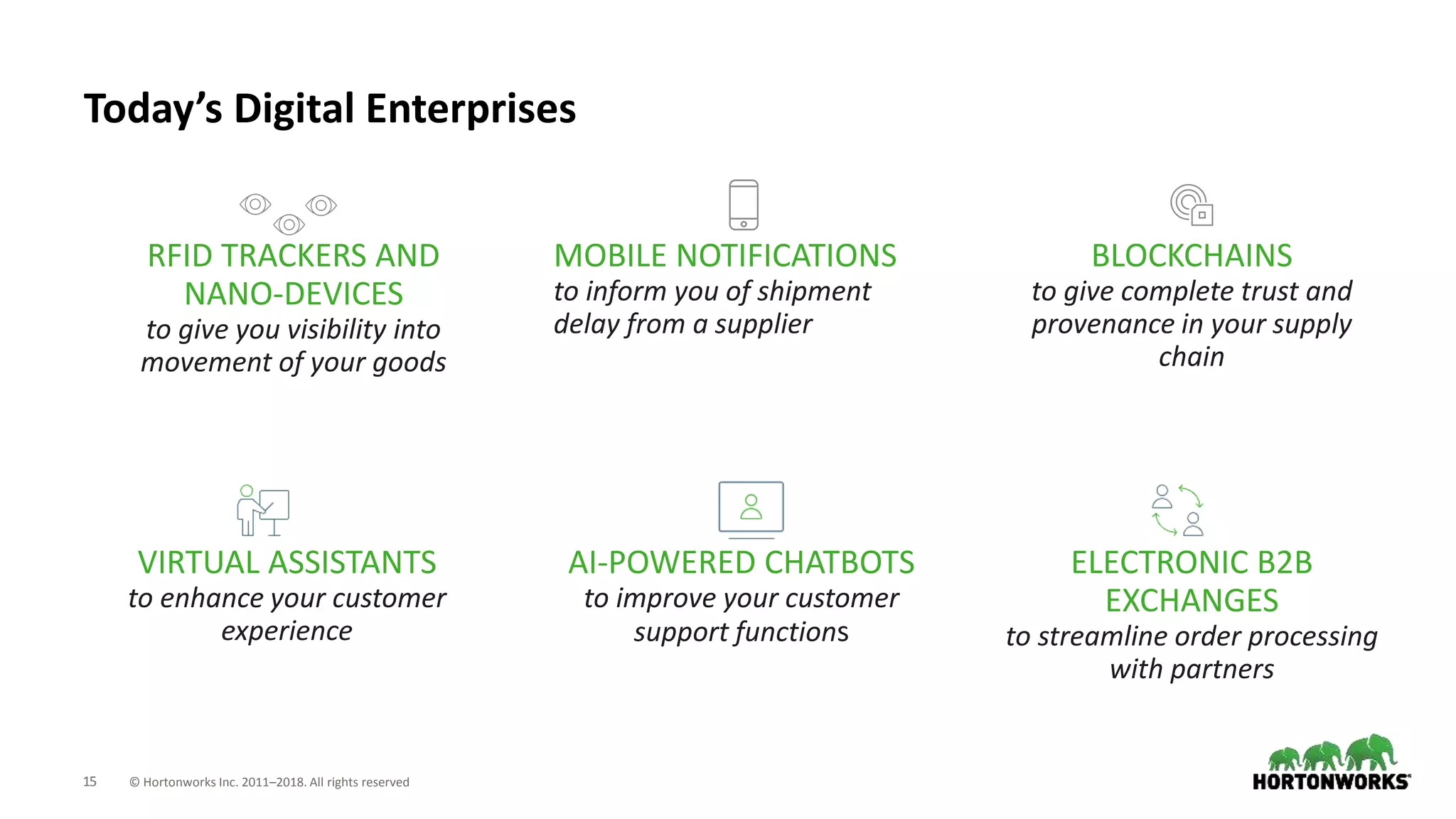

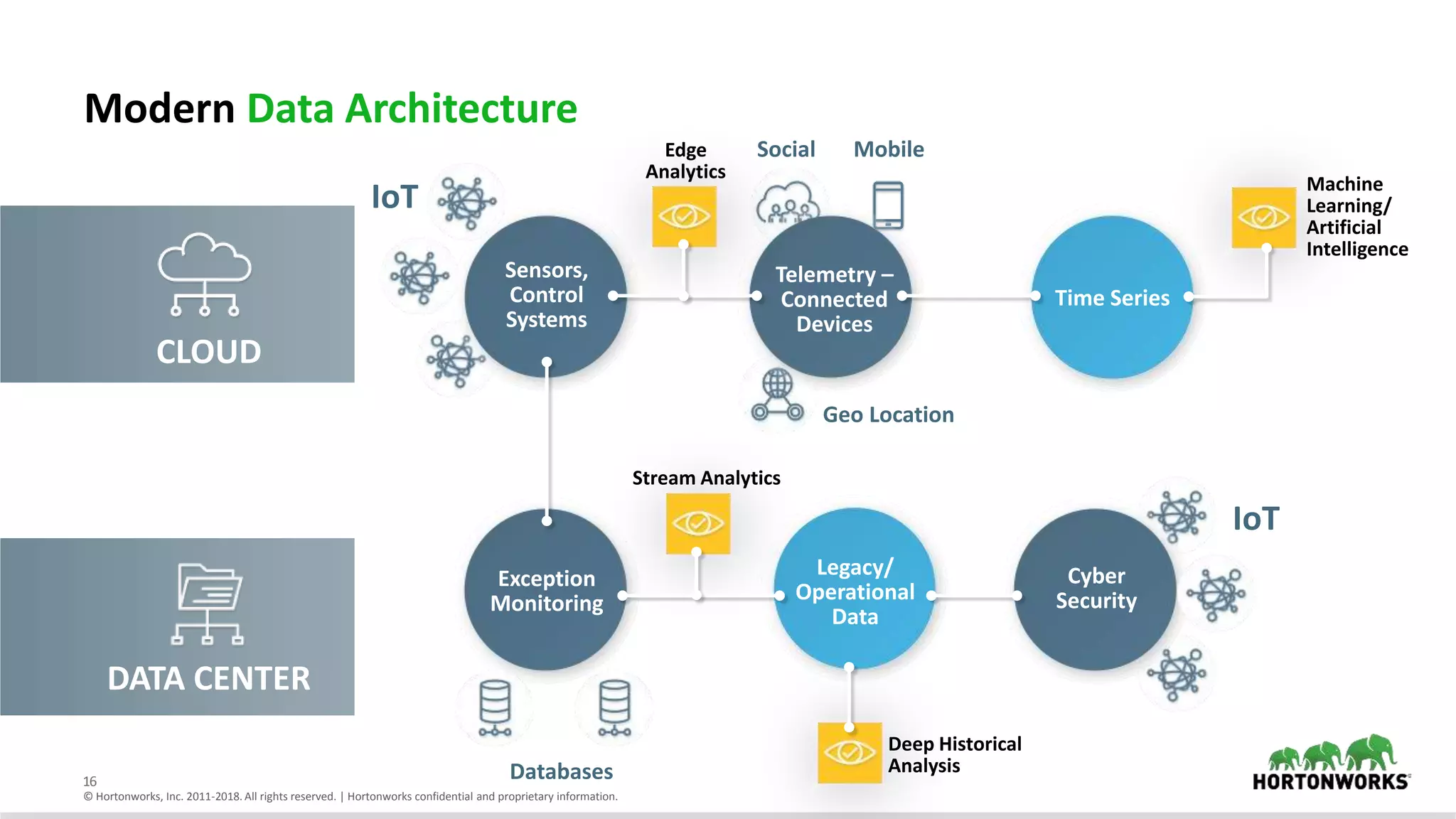

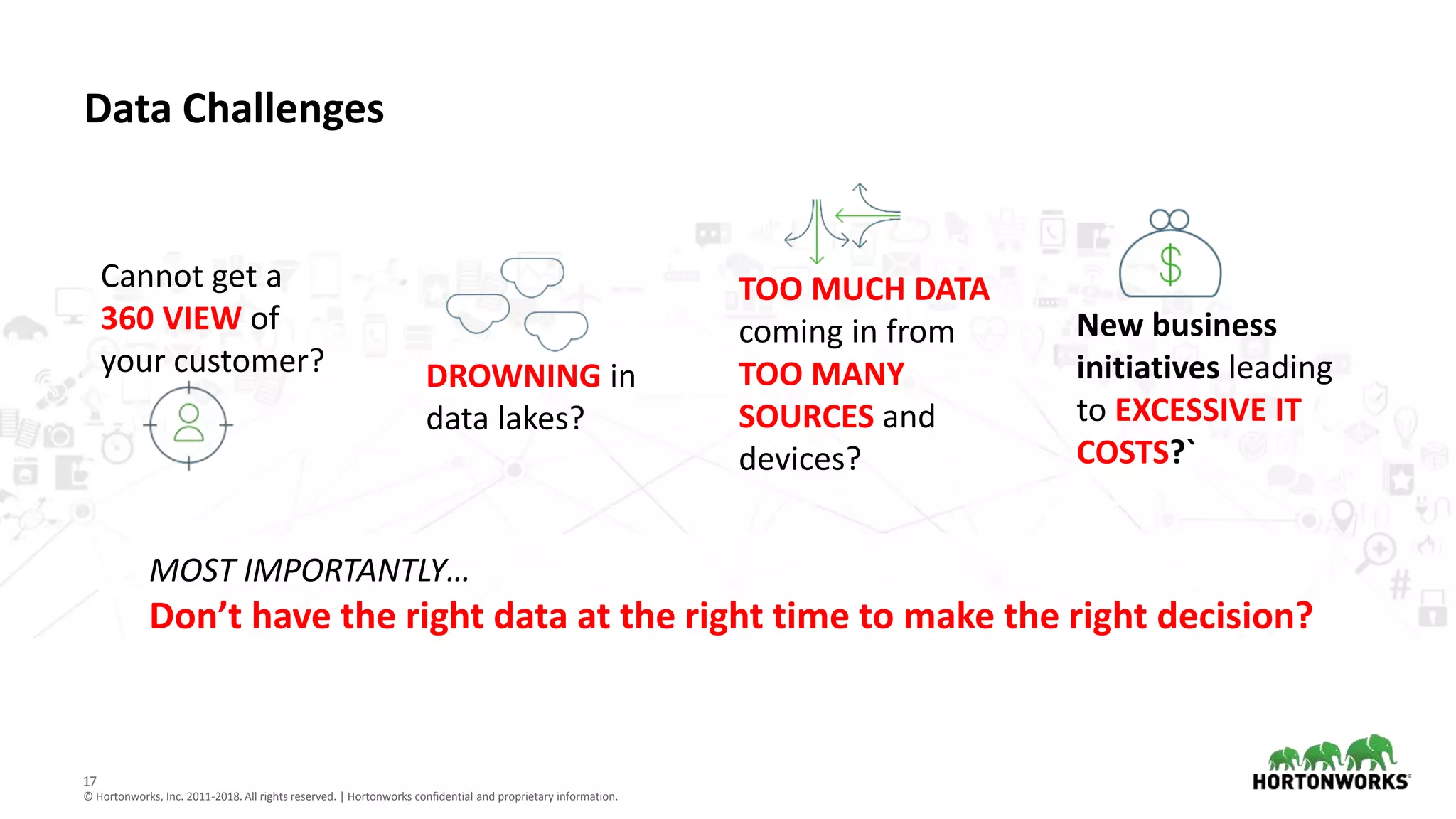

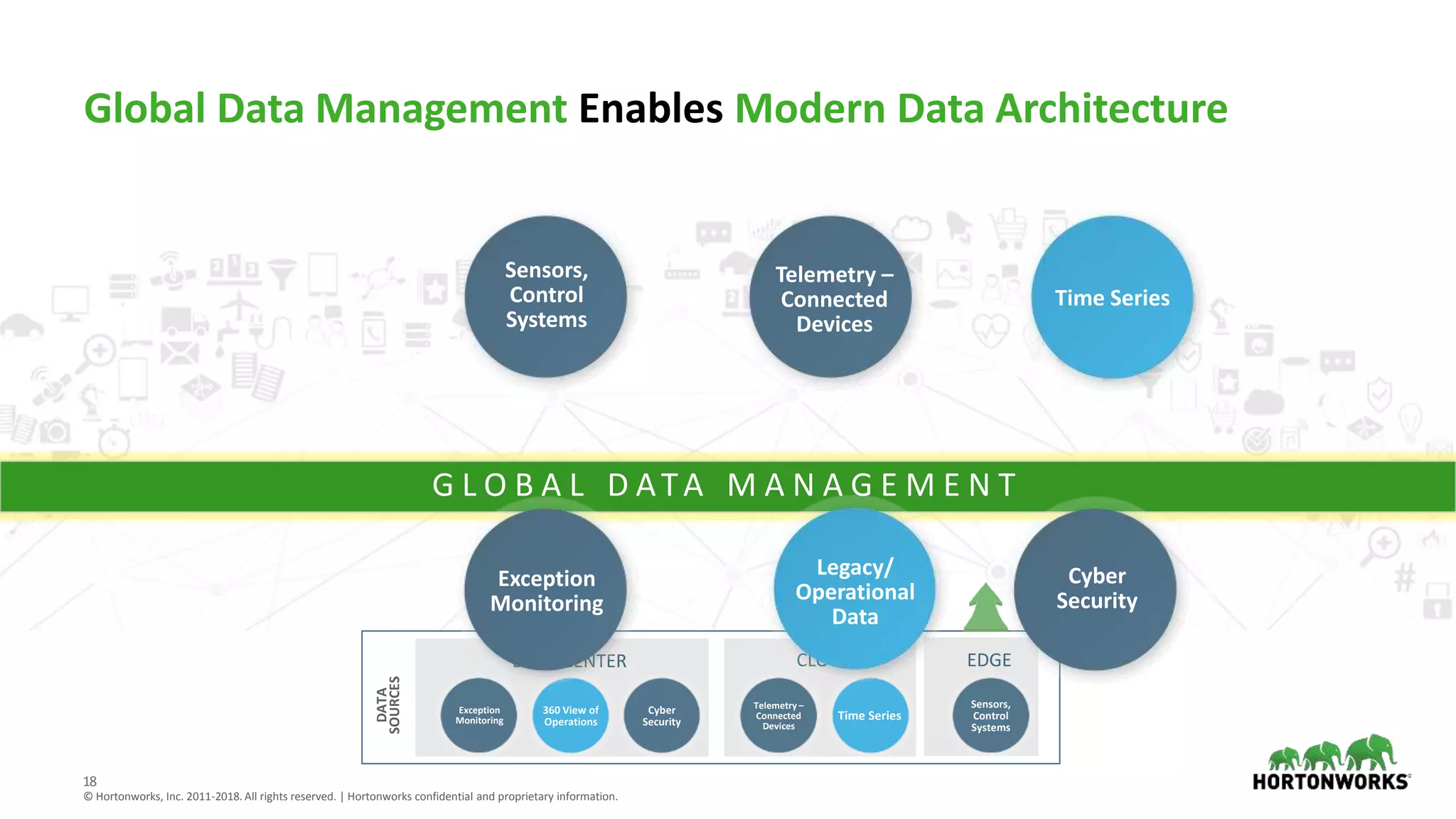

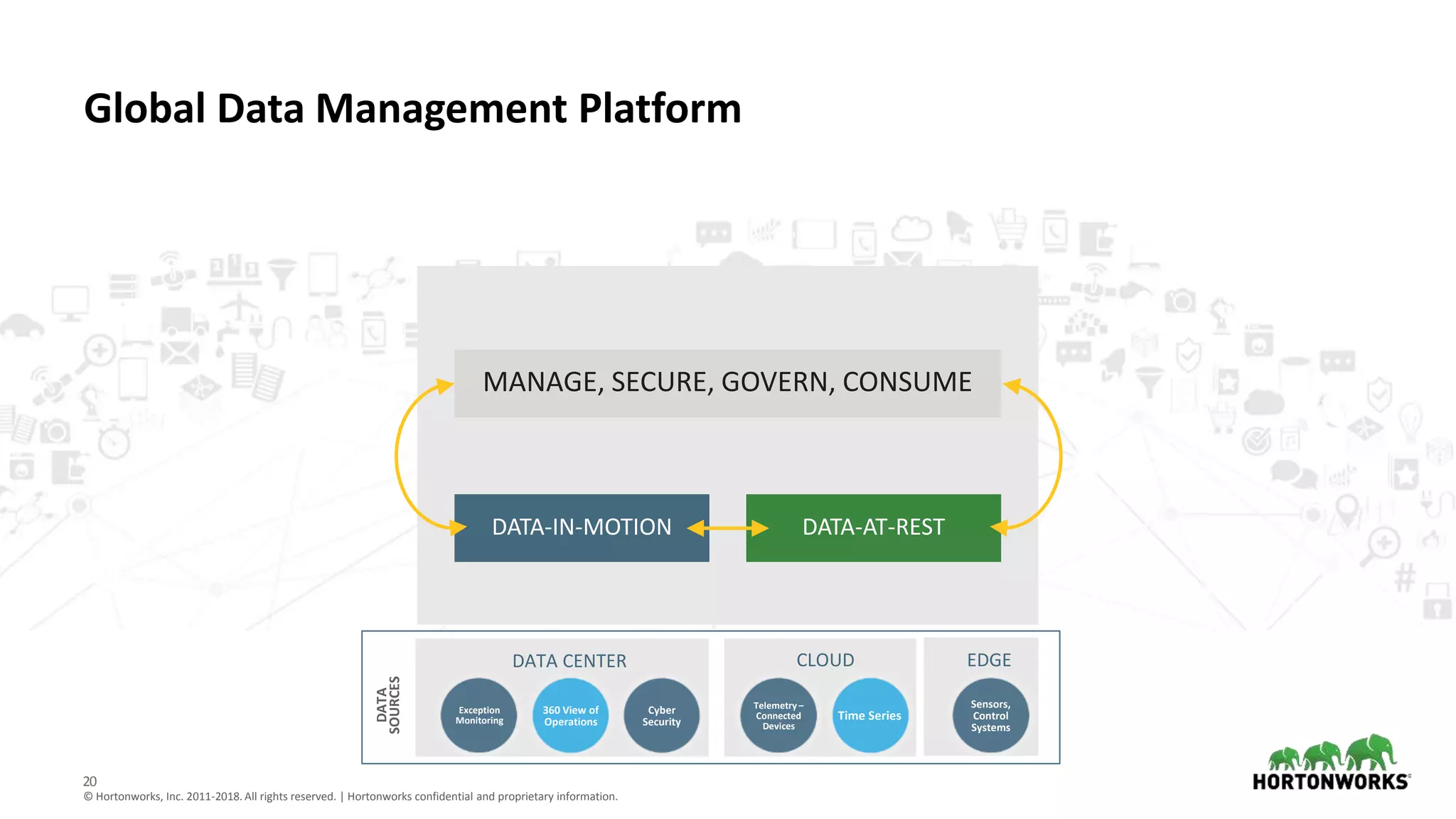

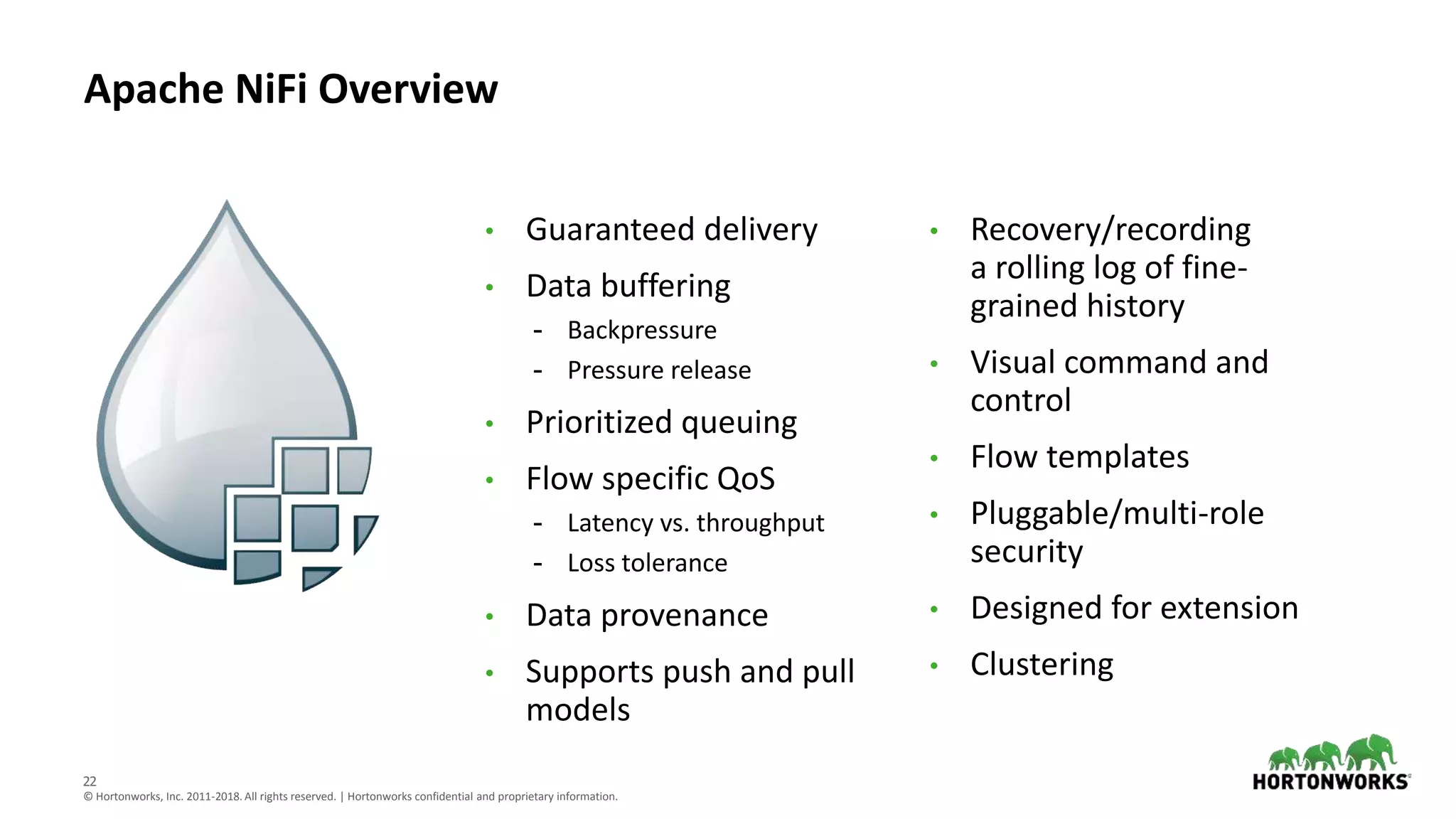

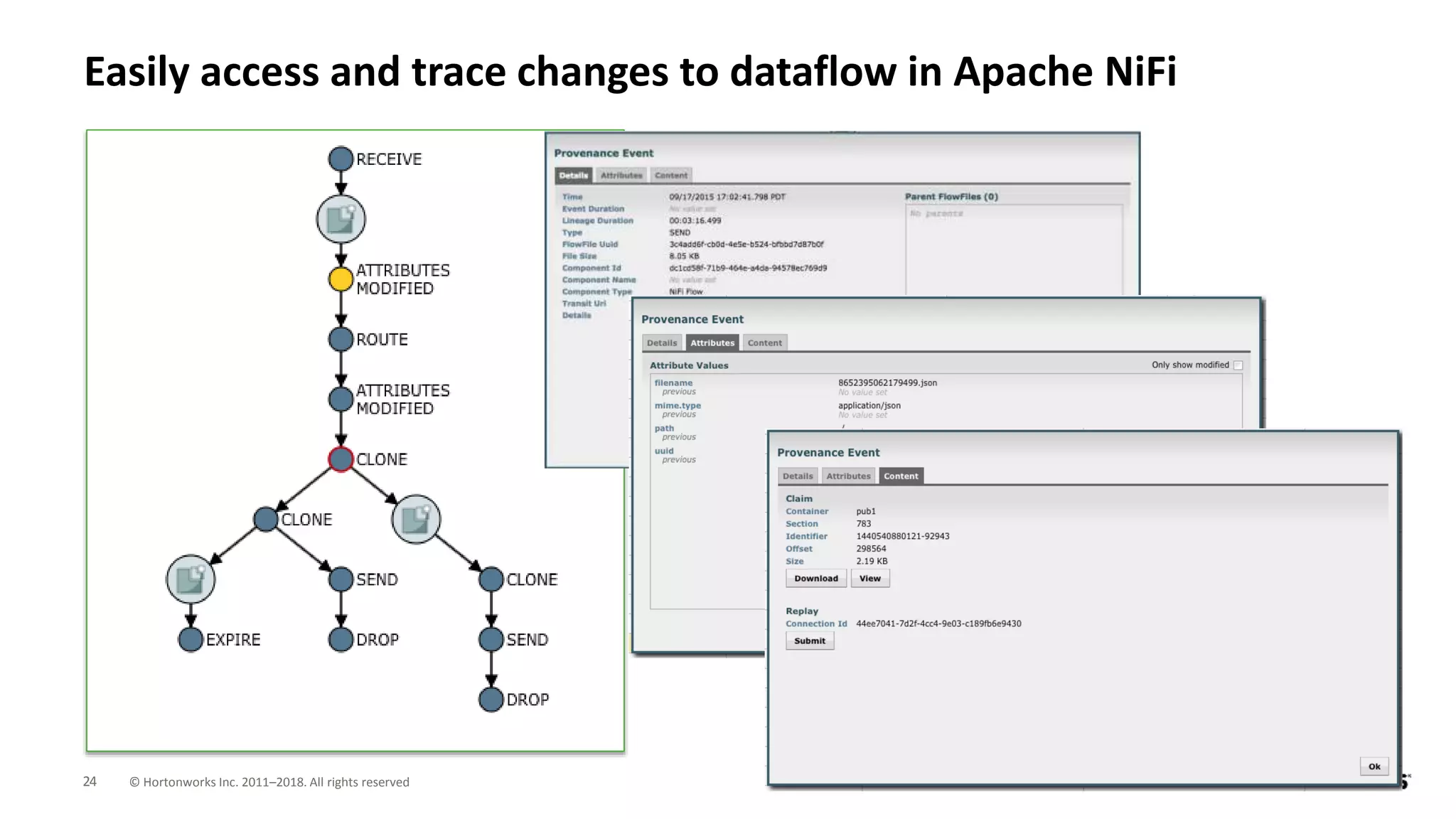

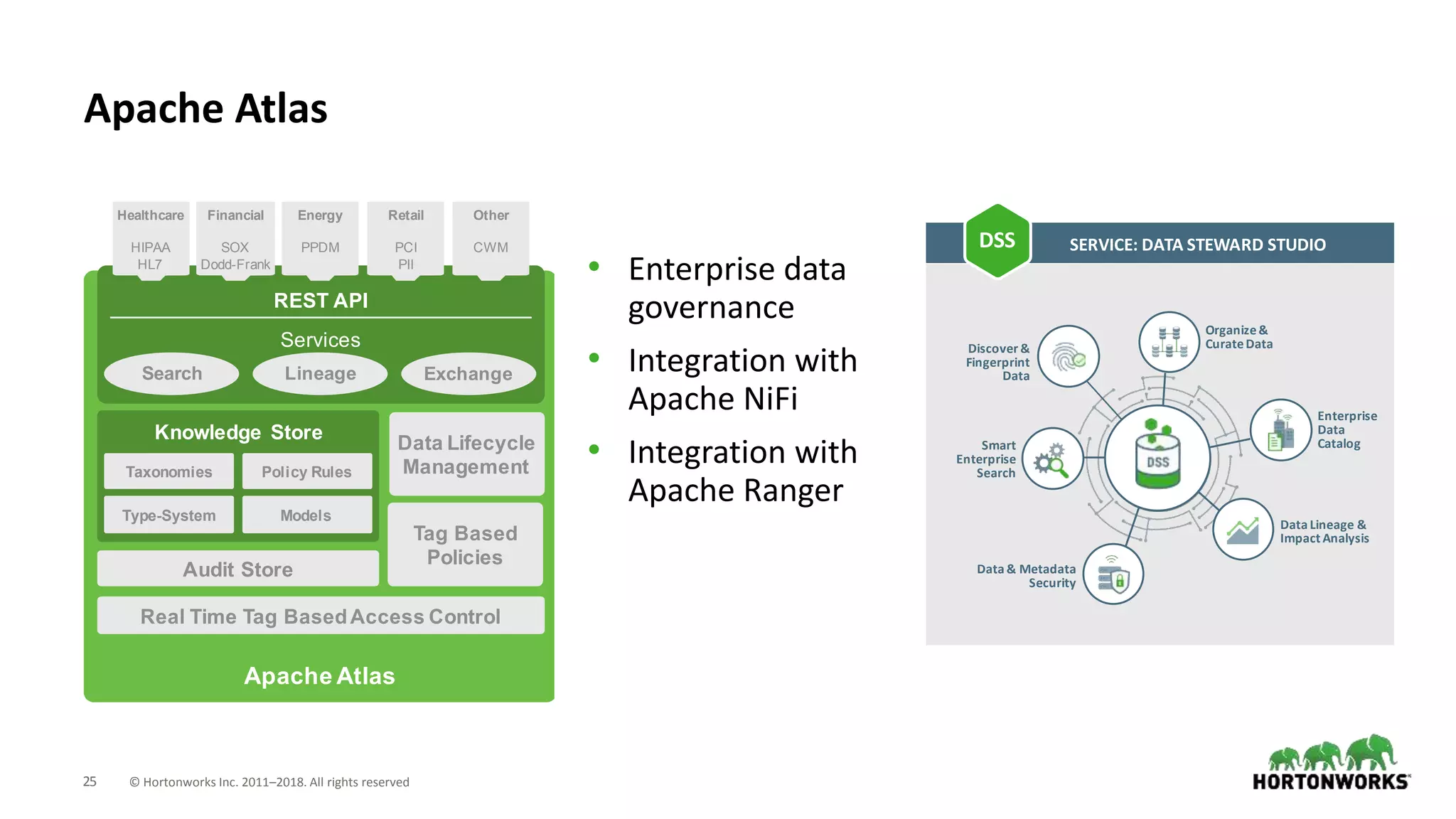

The document discusses the critical need for data governance and provenance in a digital environment, highlighting challenges such as reproducibility, regulatory compliance, and access rights. It emphasizes managing both data-in-motion and data-at-rest through a common metadata framework to improve data analytics and business outcomes. It also outlines how the data management landscape is evolving, driven by a growing number of data sources, rising user demands, and increasing regulatory pressure.