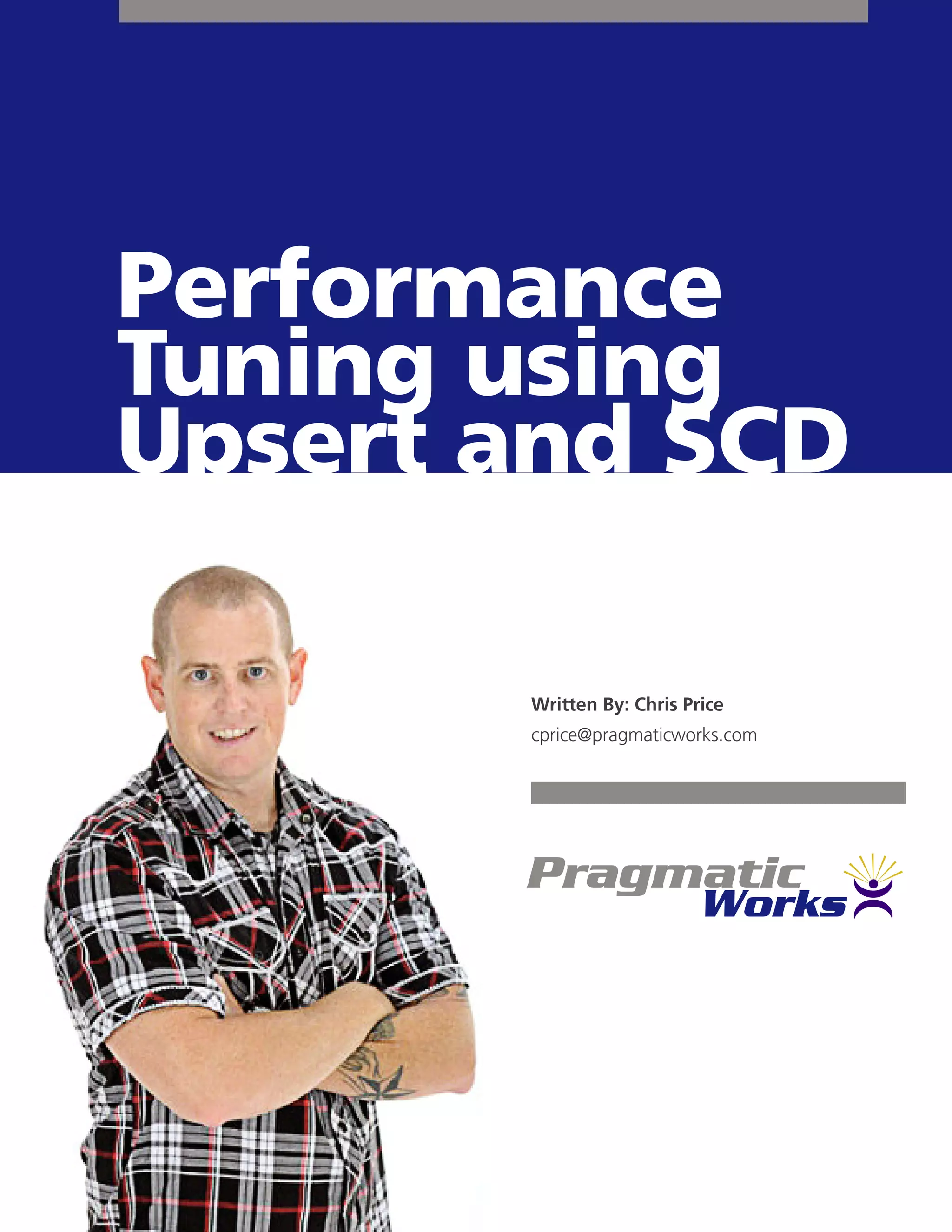

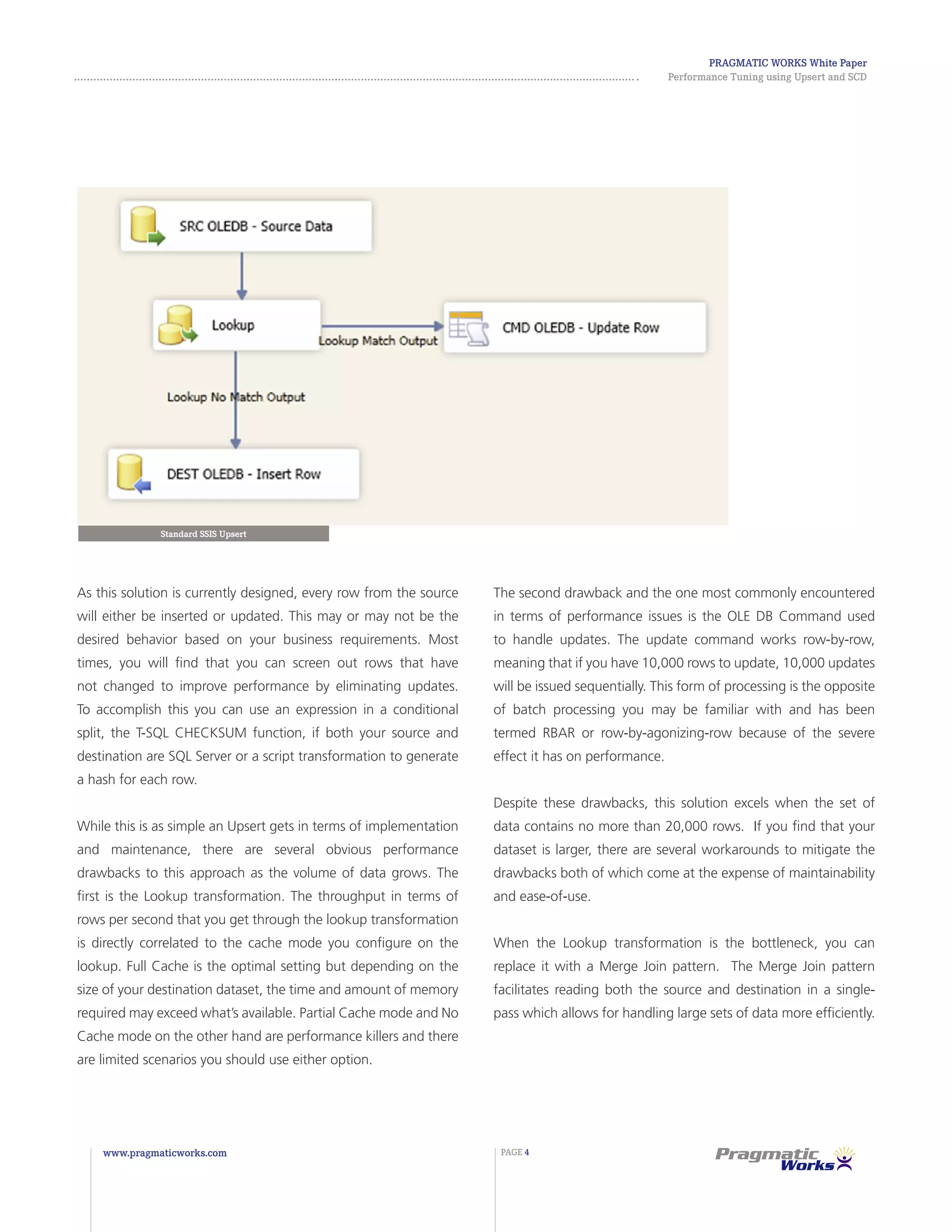

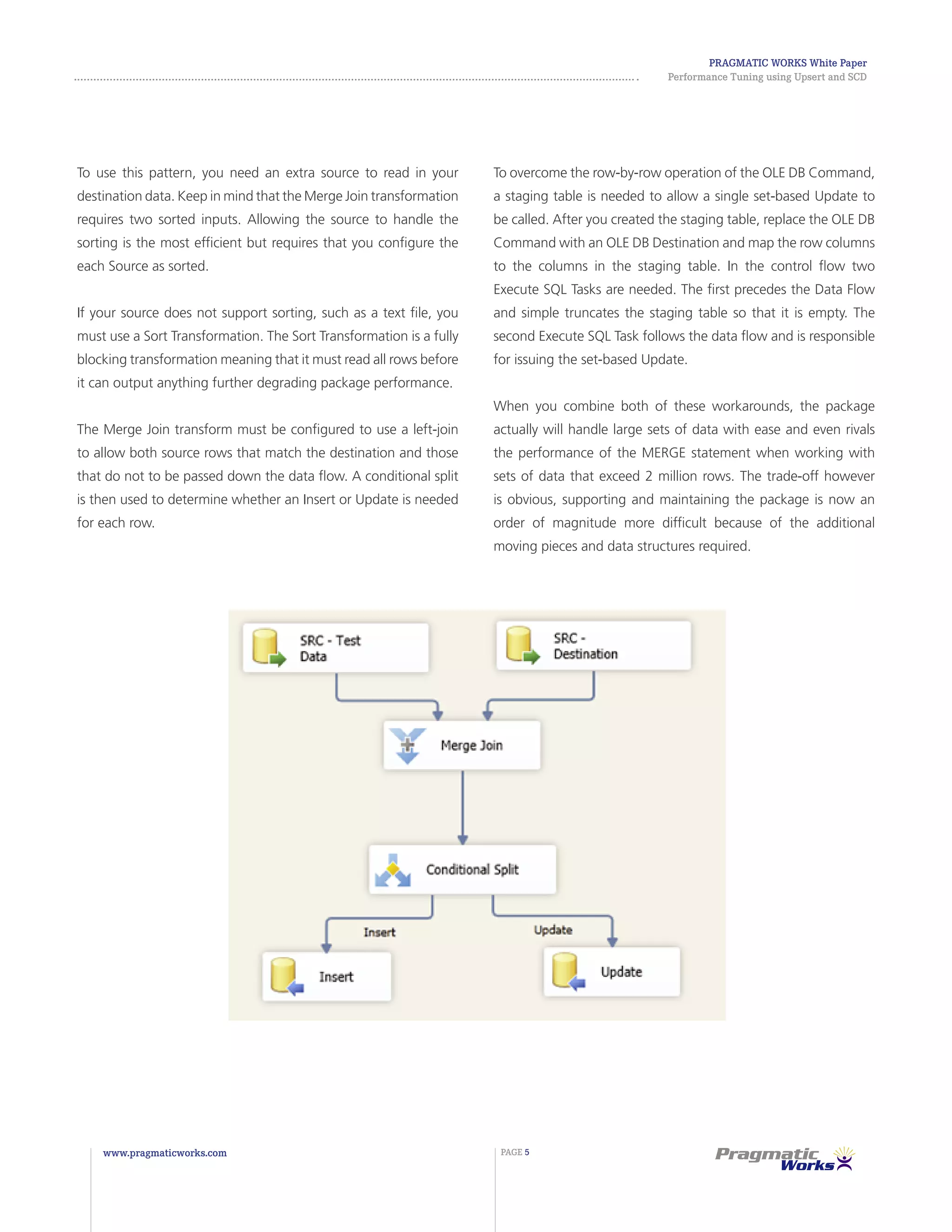

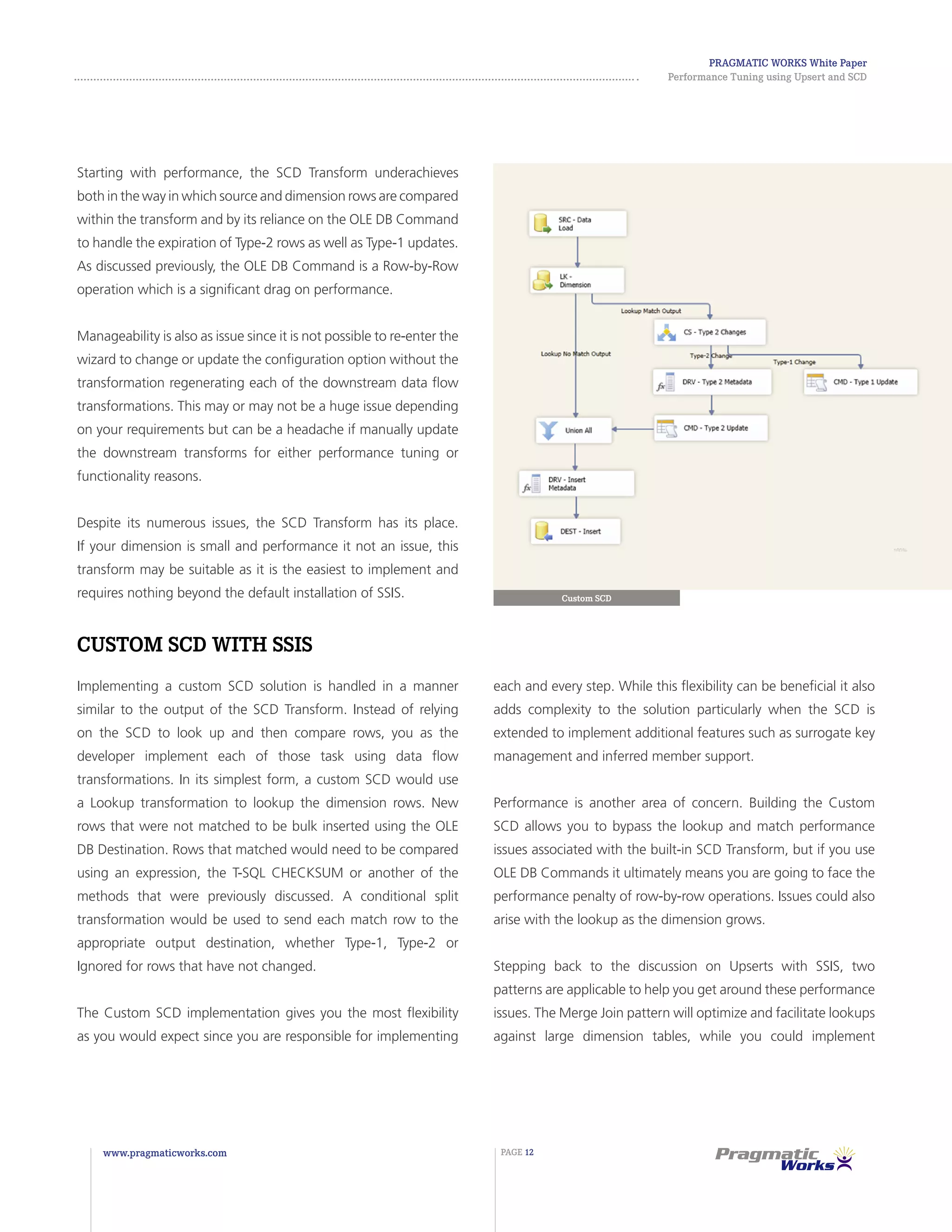

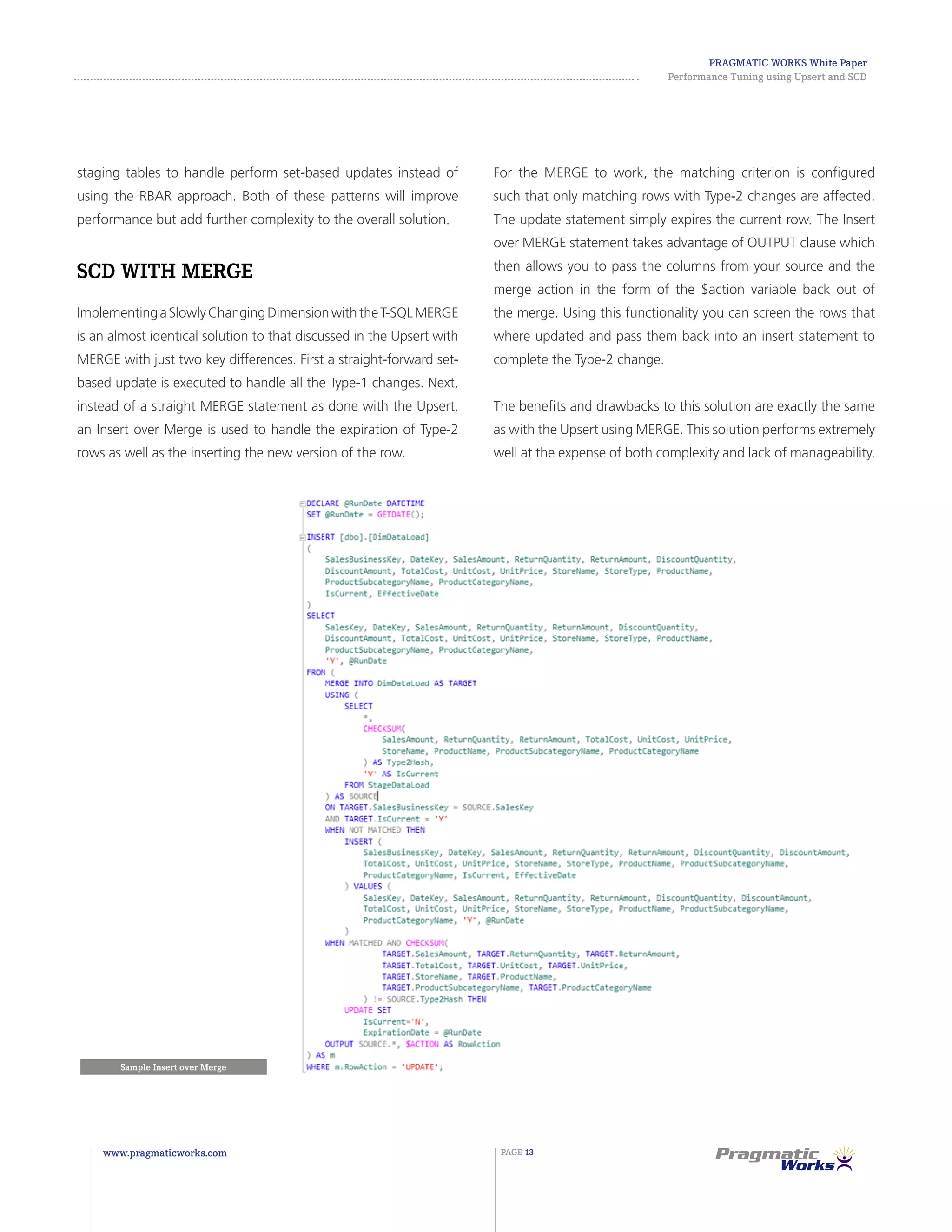

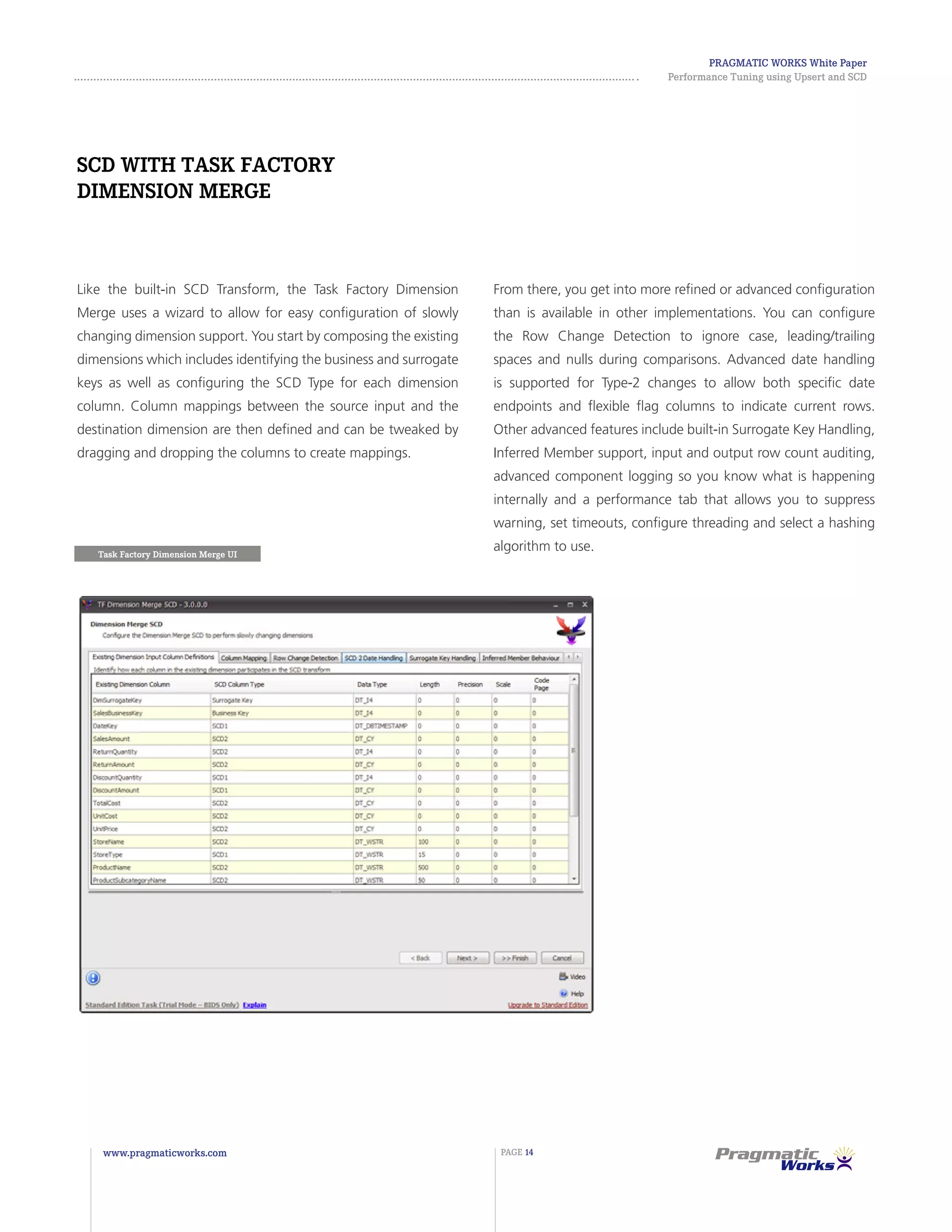

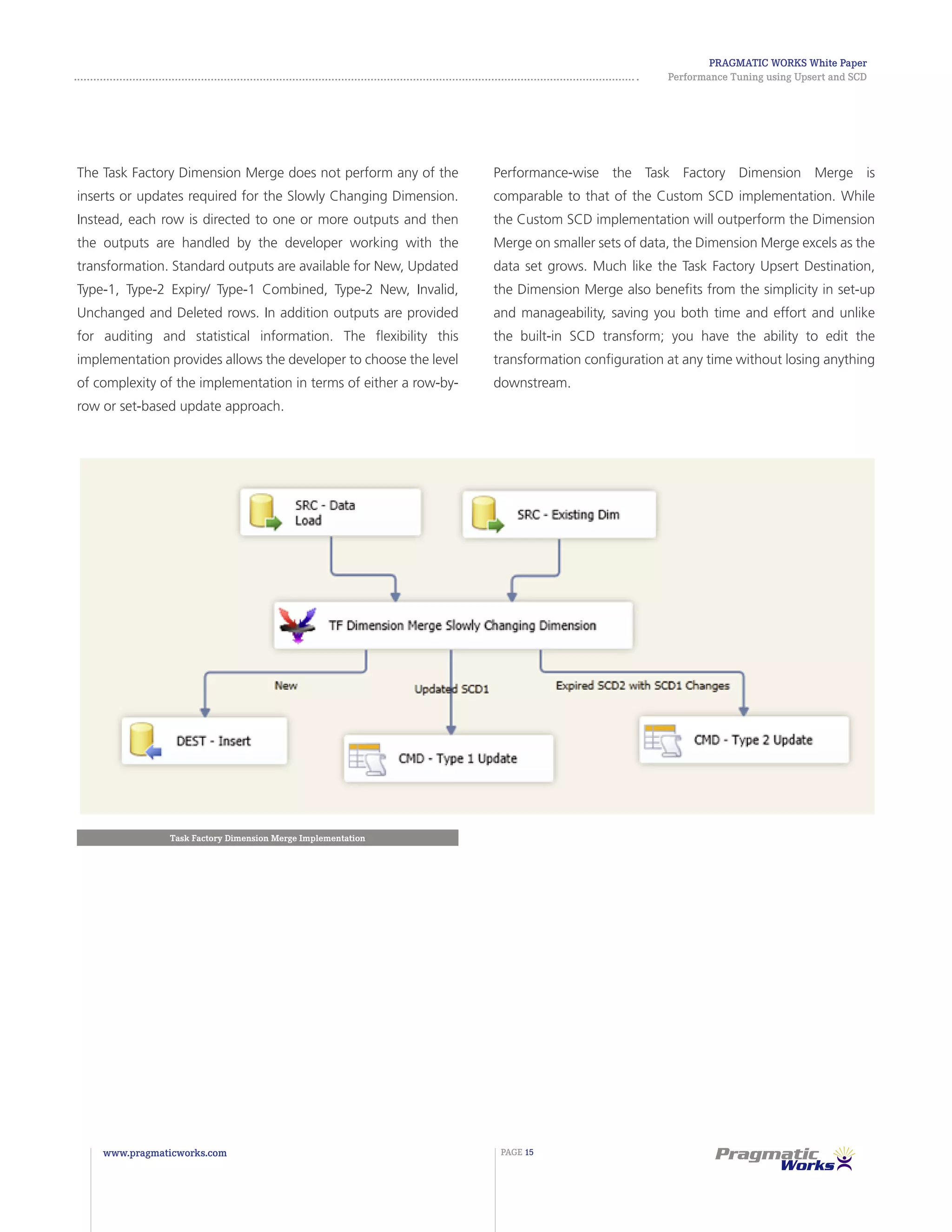

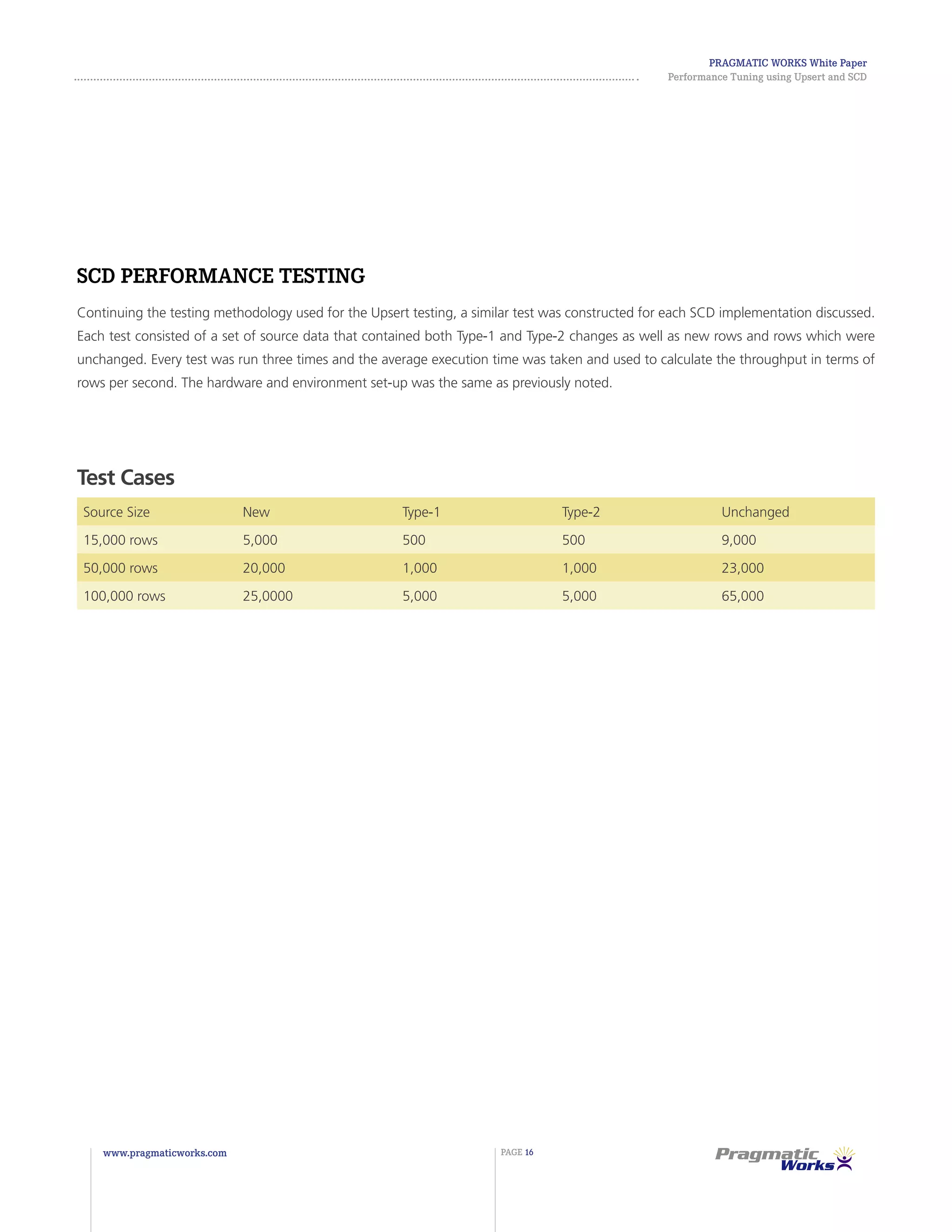

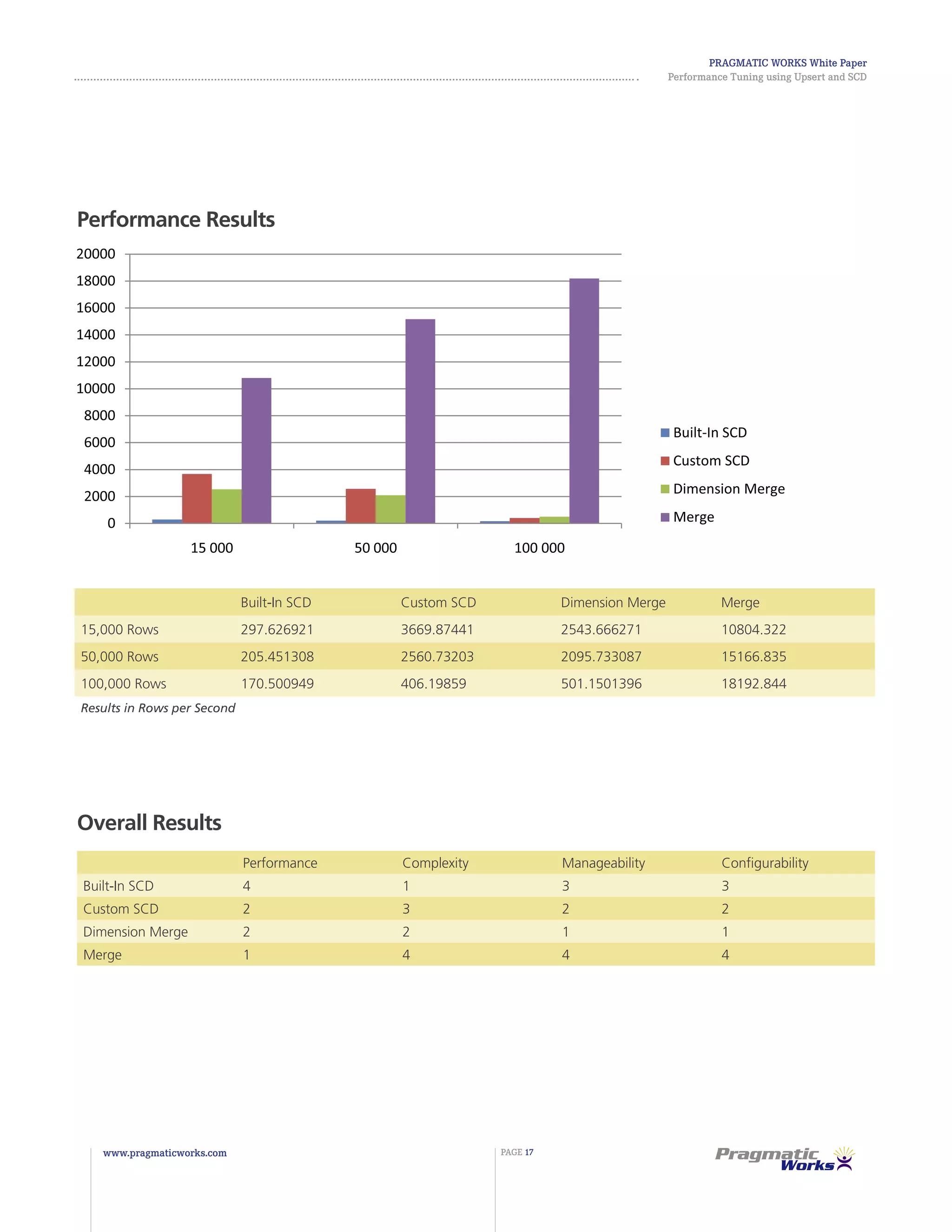

The document discusses options for implementing upserts and slowly changing dimensions (SCDs) in SQL Server Integration Services (SSIS). It compares four approaches on performance, complexity, manageability, and configurability: SSIS data flow components, T-SQL MERGE statements, the Task Factory Upsert Destination, and the SCD transform. It concludes that the Task Factory Upsert Destination offers the best balance of strong performance, low complexity, and high manageability.
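
For readers unfamiliar with the term, an upsert inserts rows whose key is new and updates rows whose key already exists. The sketch below is only an illustration of that behavior, not the approach taken in the slides: it uses Python's built-in `sqlite3` module and SQLite's `INSERT ... ON CONFLICT DO UPDATE` syntax as a stand-in for a T-SQL MERGE, and the table `dim_customer` and its columns are hypothetical names chosen for the example.

```python
import sqlite3


def upsert_customers(conn, rows):
    """Insert new rows and update existing ones, keyed on customer_id.

    Assumption: SQLite's ON CONFLICT clause stands in for T-SQL MERGE here;
    the table and column names are hypothetical.
    """
    conn.executemany(
        """
        INSERT INTO dim_customer (customer_id, name, city)
        VALUES (?, ?, ?)
        ON CONFLICT(customer_id) DO UPDATE SET
            name = excluded.name,
            city = excluded.city
        """,
        rows,
    )
    conn.commit()


# In-memory database so the example leaves nothing behind.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE dim_customer ("
    "customer_id INTEGER PRIMARY KEY, name TEXT, city TEXT)"
)

# First load: both keys are new, so both rows are inserted.
upsert_customers(conn, [(1, "Ada", "London"), (2, "Grace", "New York")])

# Second load: key 2 already exists (updated), key 3 is new (inserted).
upsert_customers(conn, [(2, "Grace", "Arlington"), (3, "Edsger", "Austin")])

result = conn.execute(
    "SELECT customer_id, name, city FROM dim_customer ORDER BY customer_id"
).fetchall()
print(result)
```

After the second load the table holds three rows, with the city for key 2 overwritten. This is the overwrite behavior usually associated with a Type 1 slowly changing dimension; preserving history (Type 2) would need additional columns and logic not shown here.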