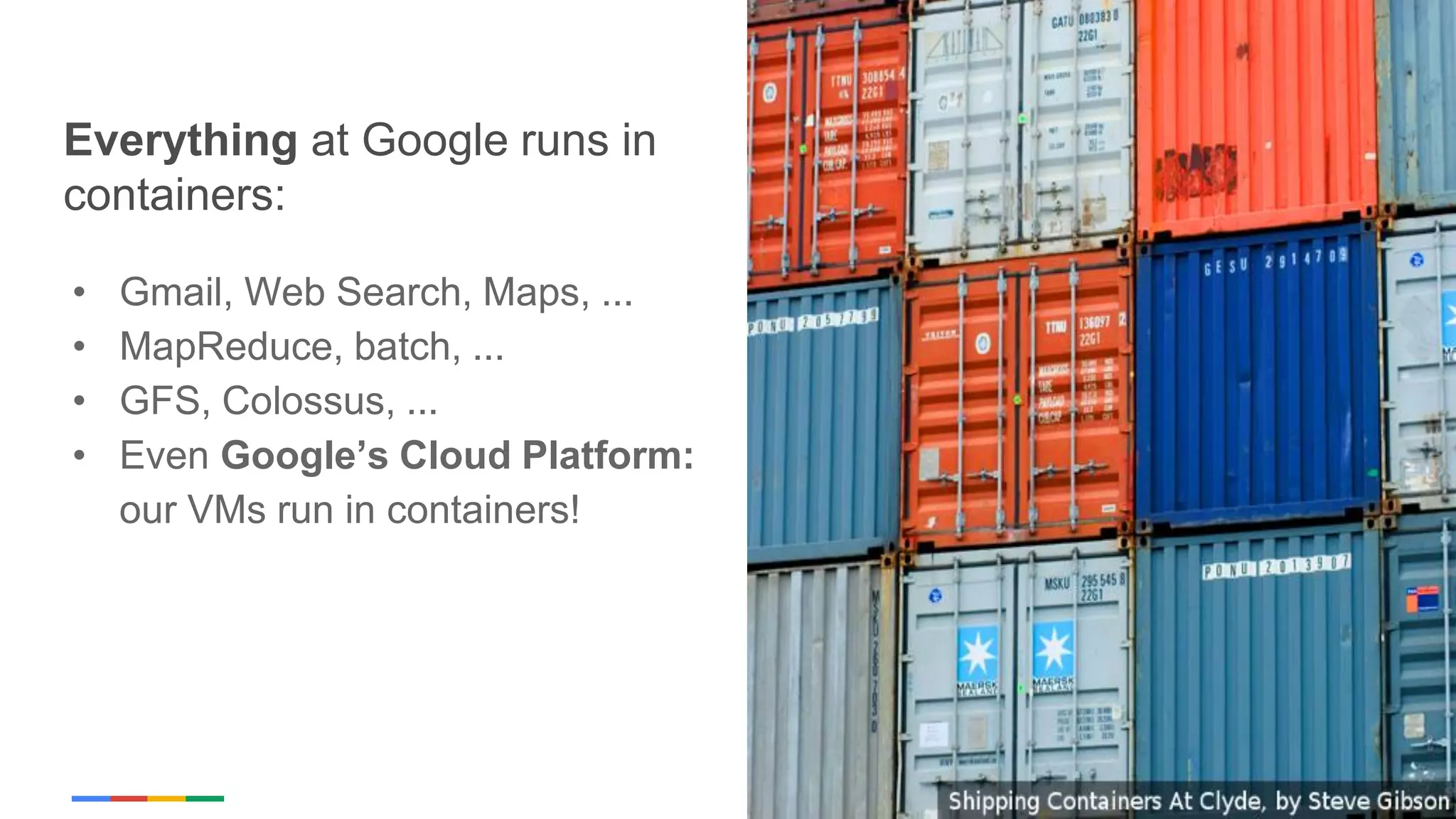

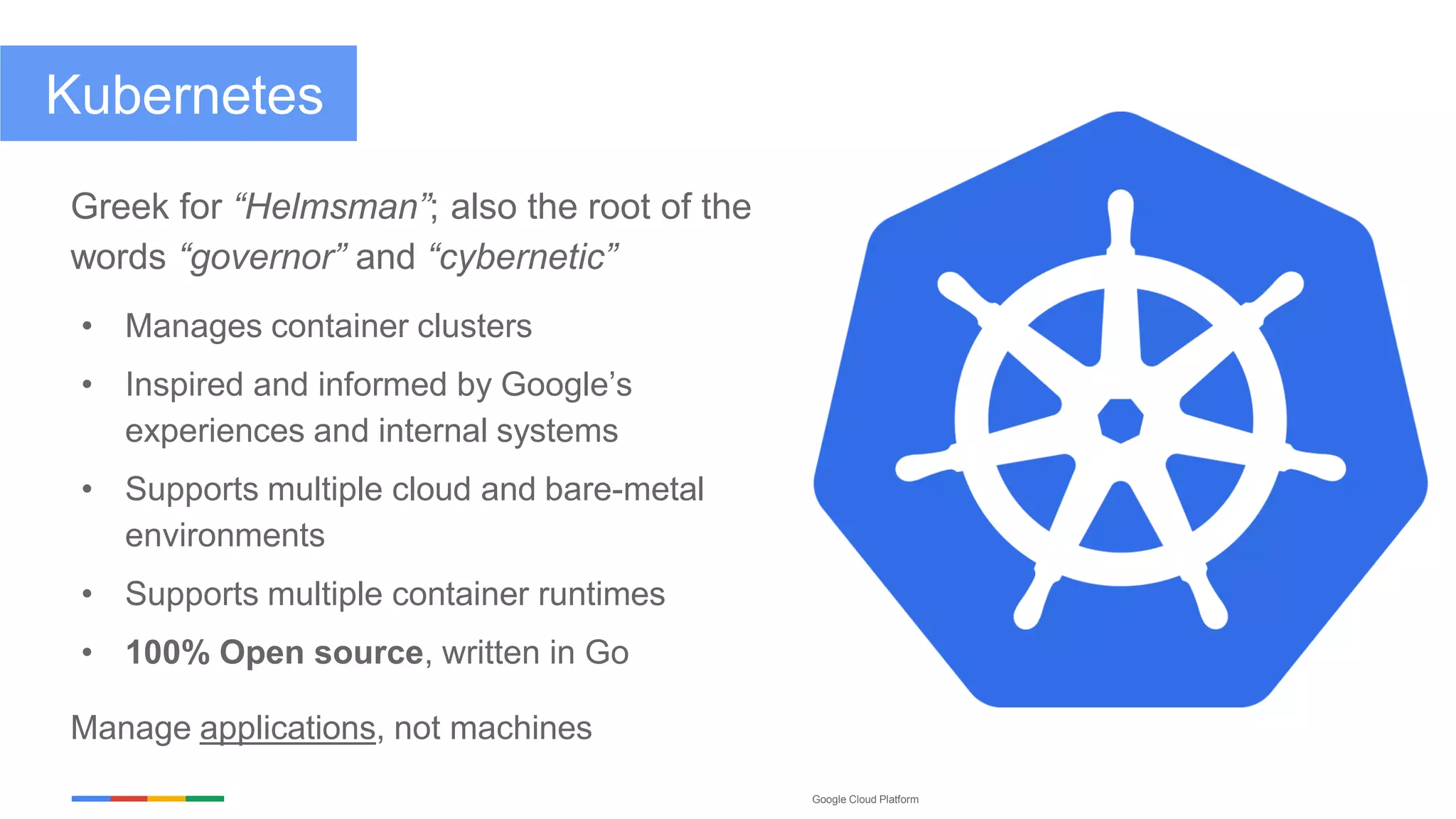

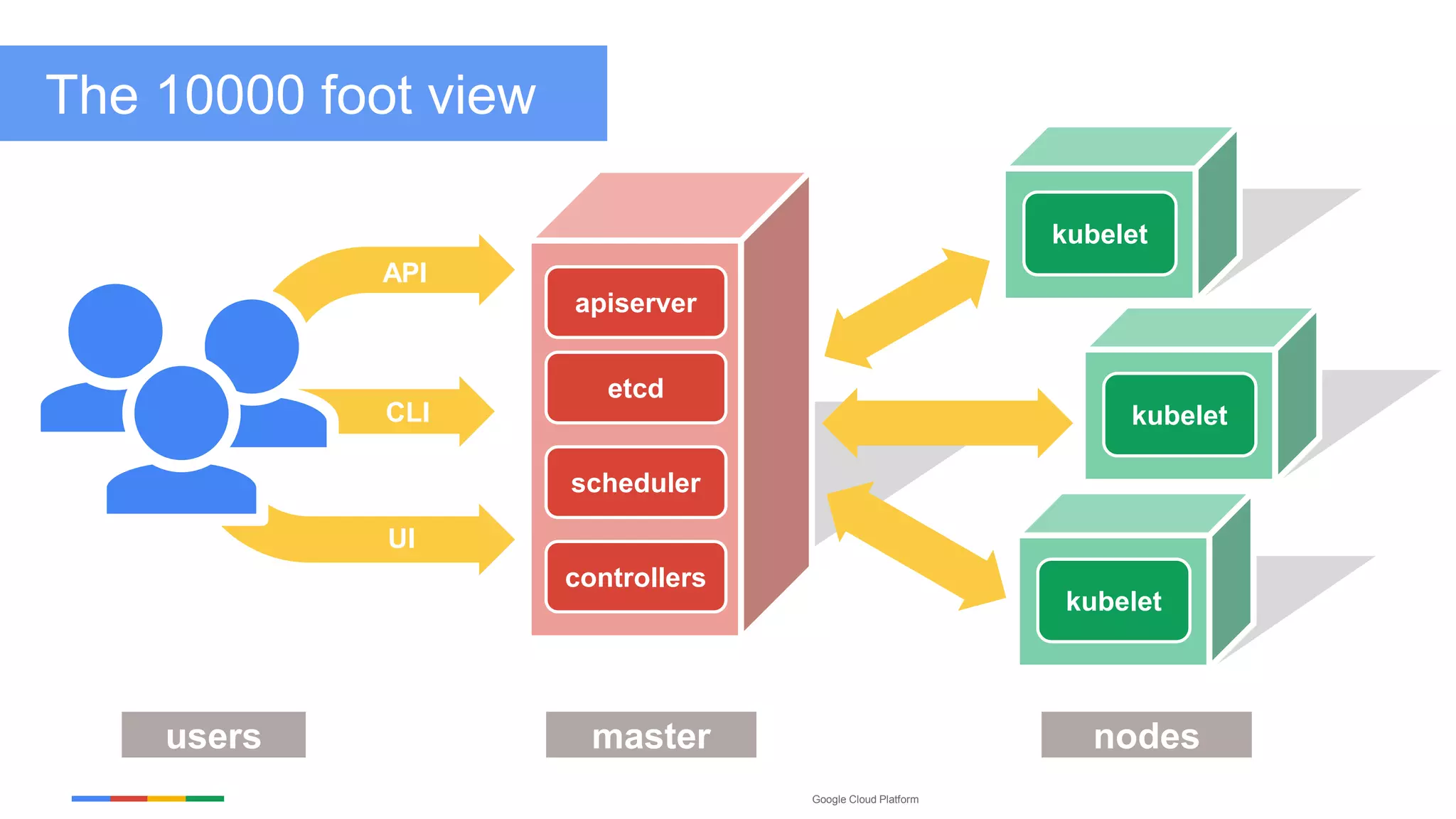

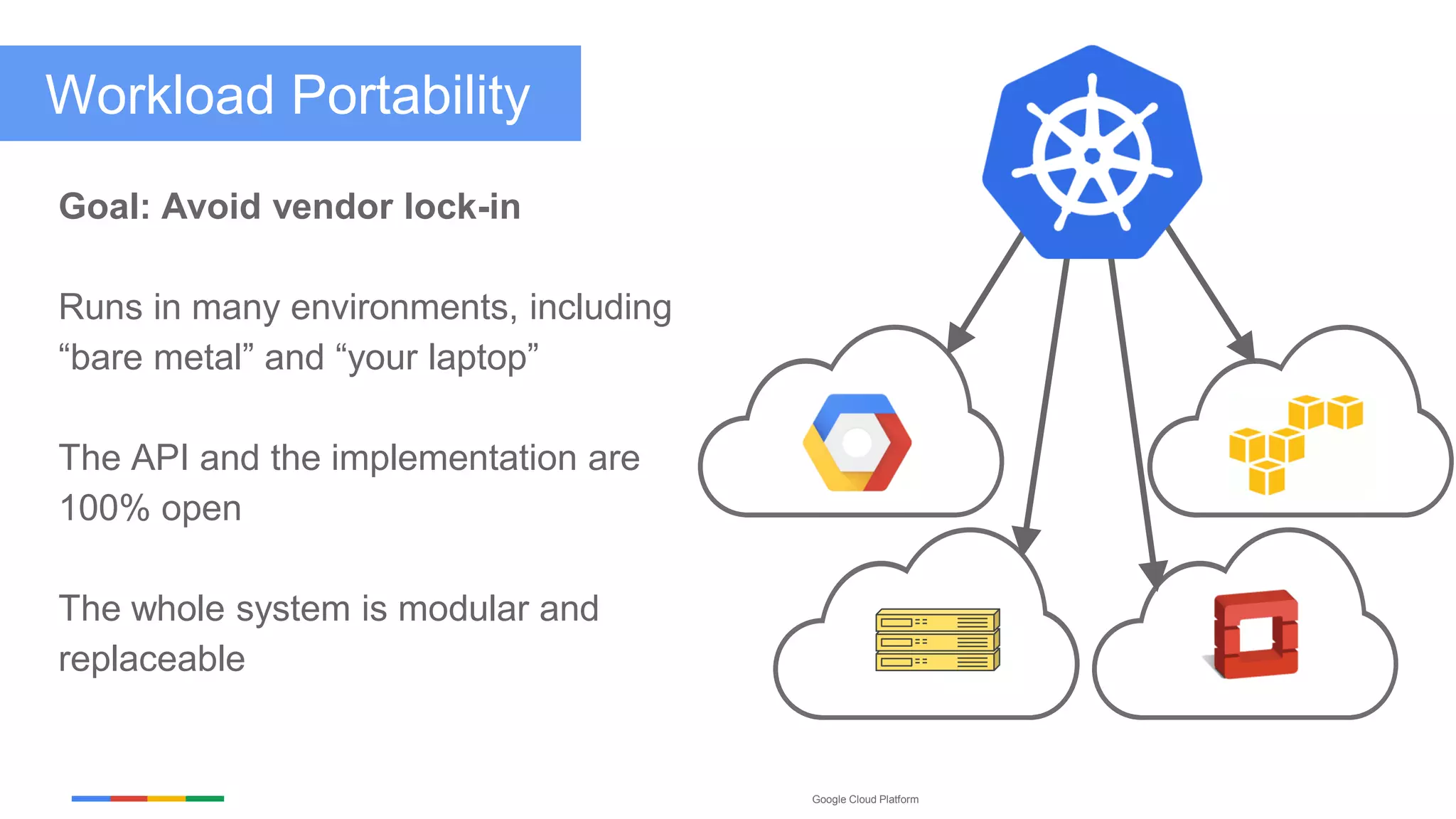

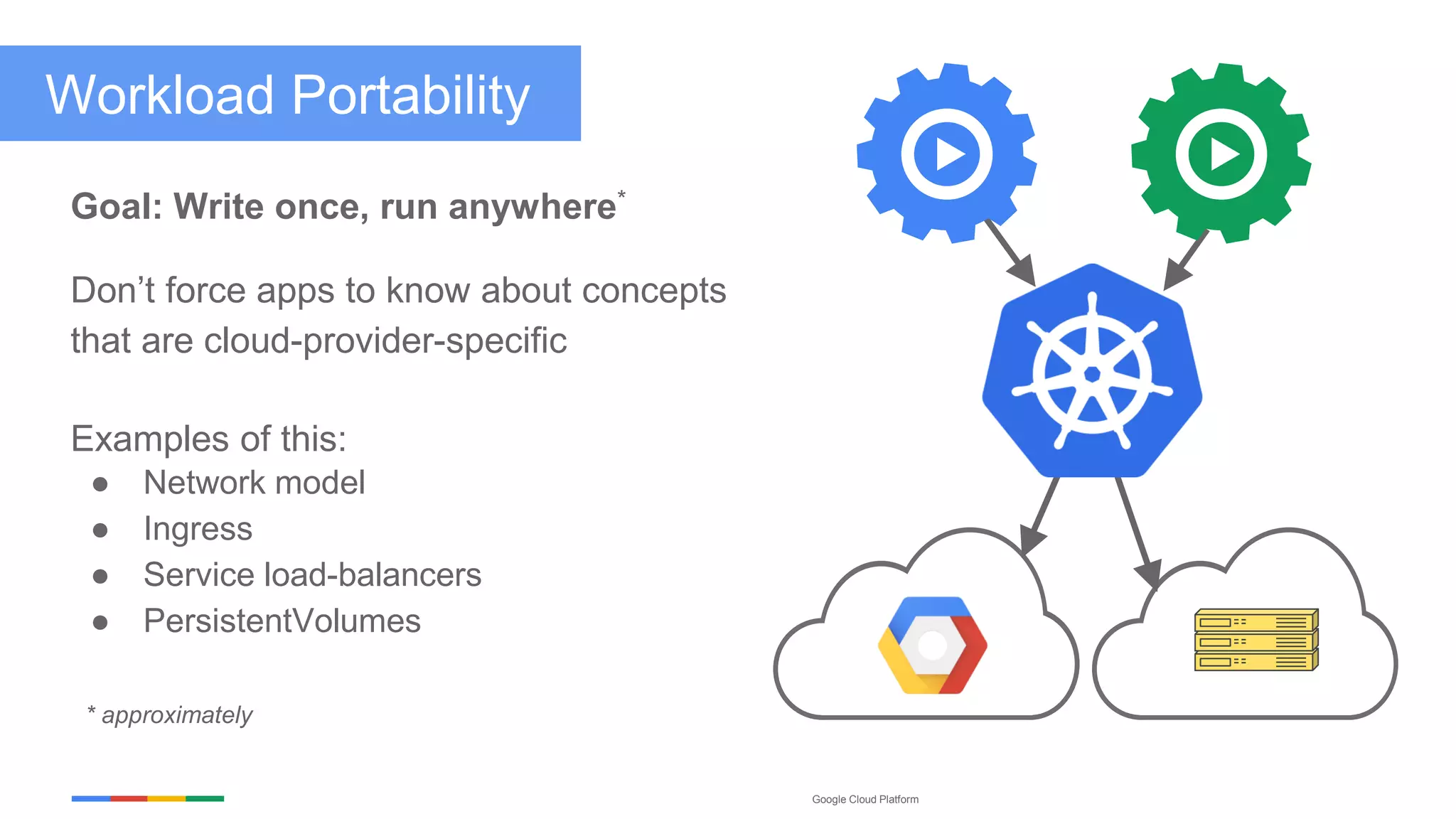

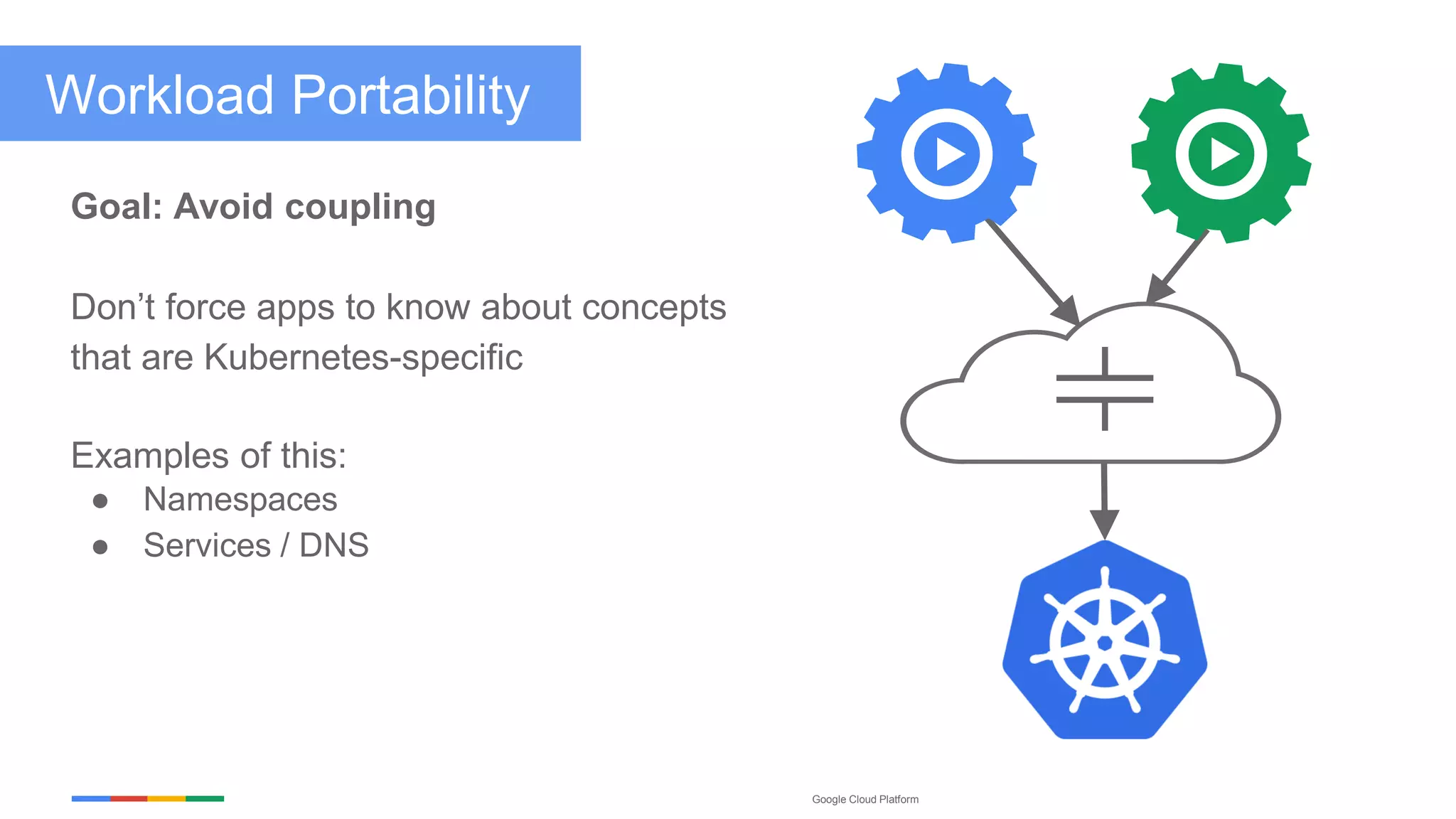

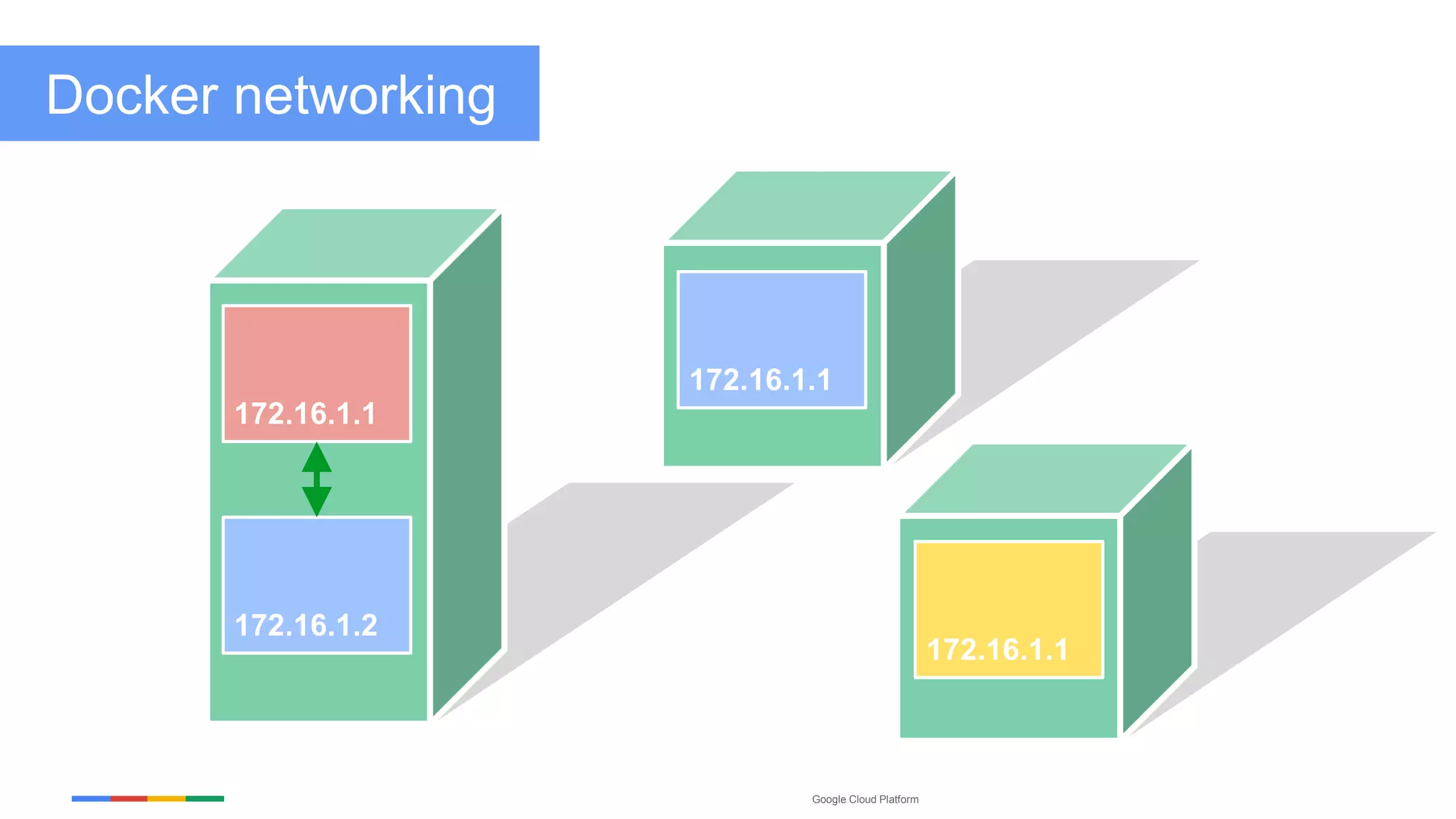

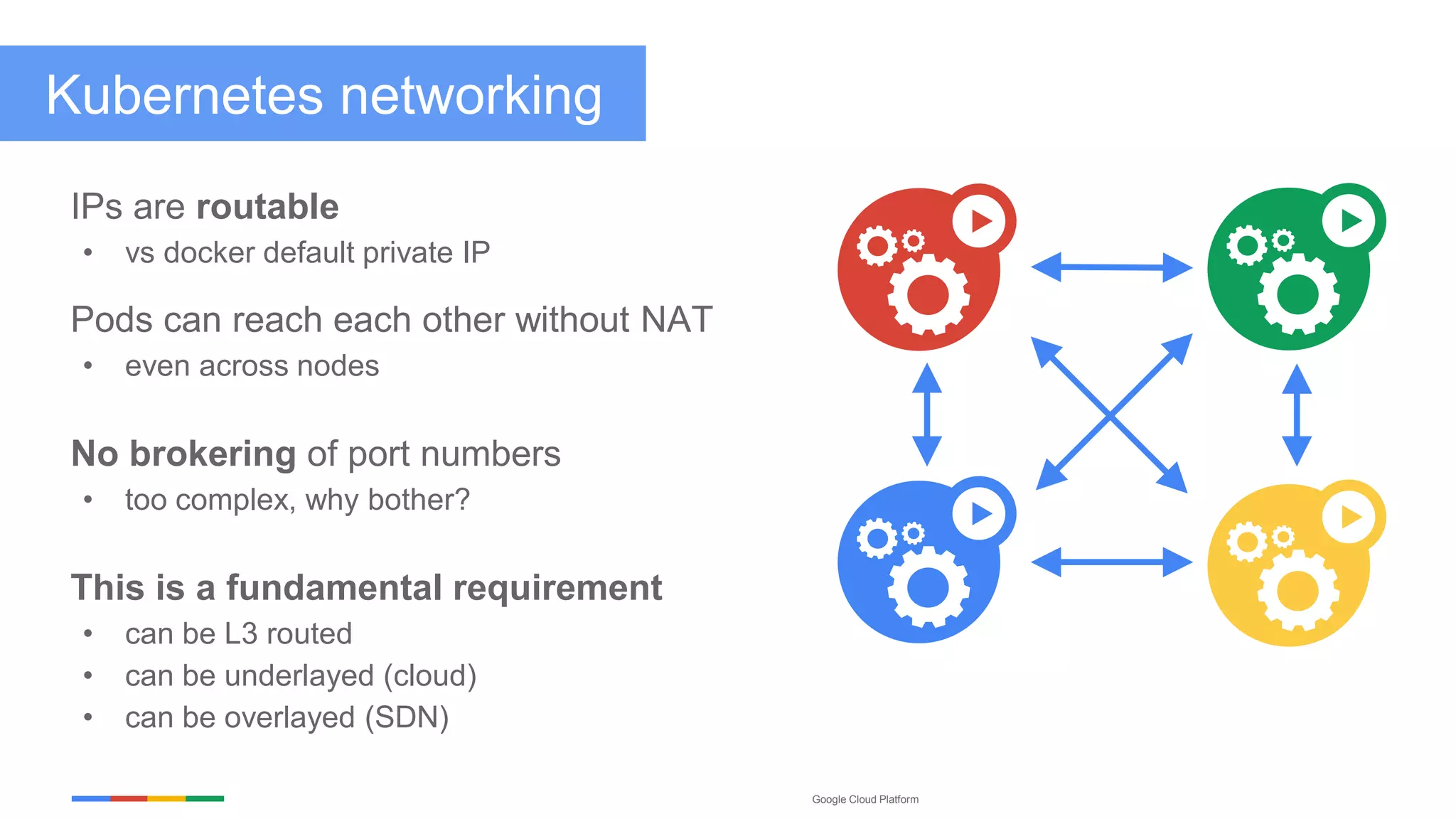

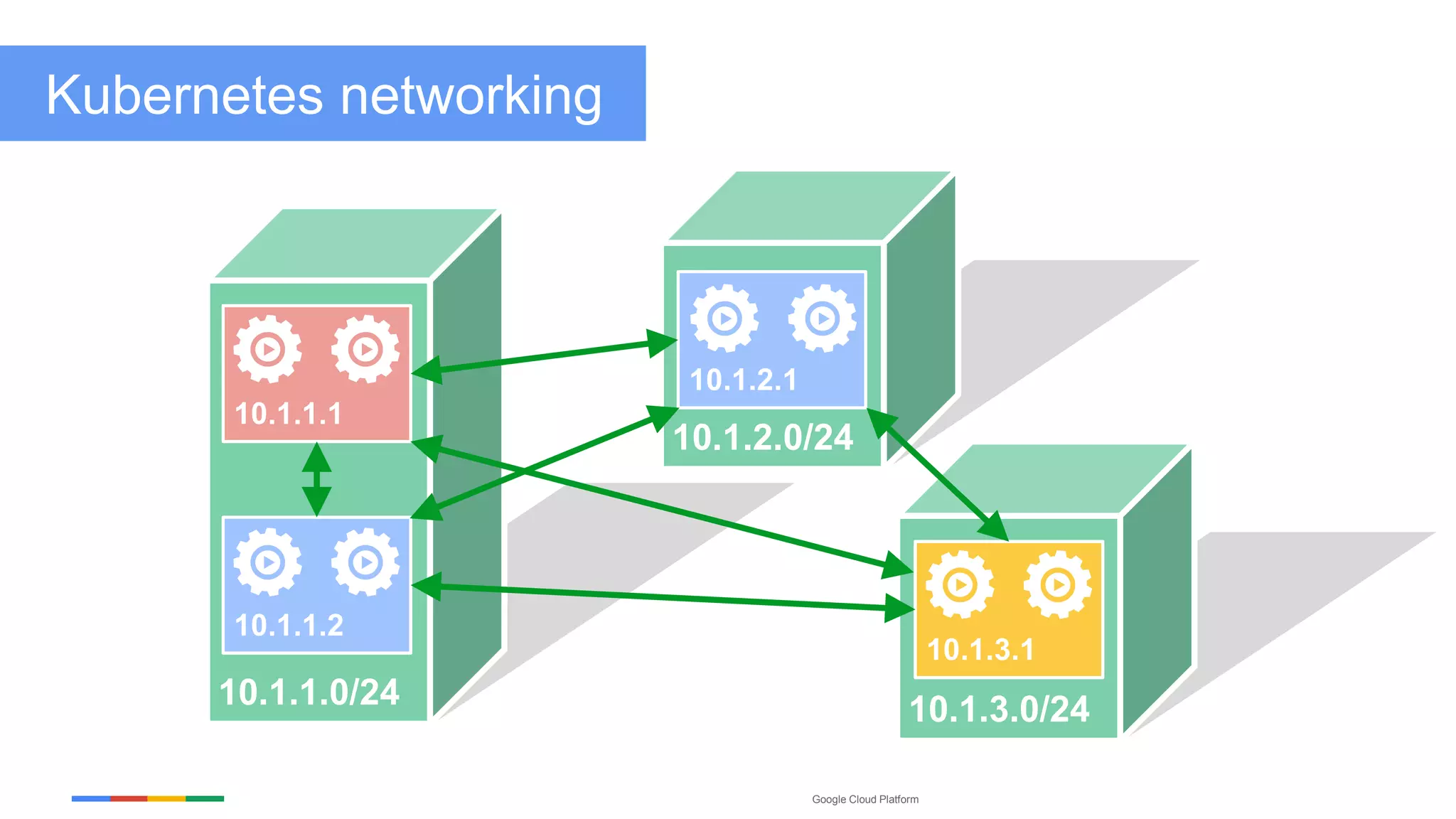

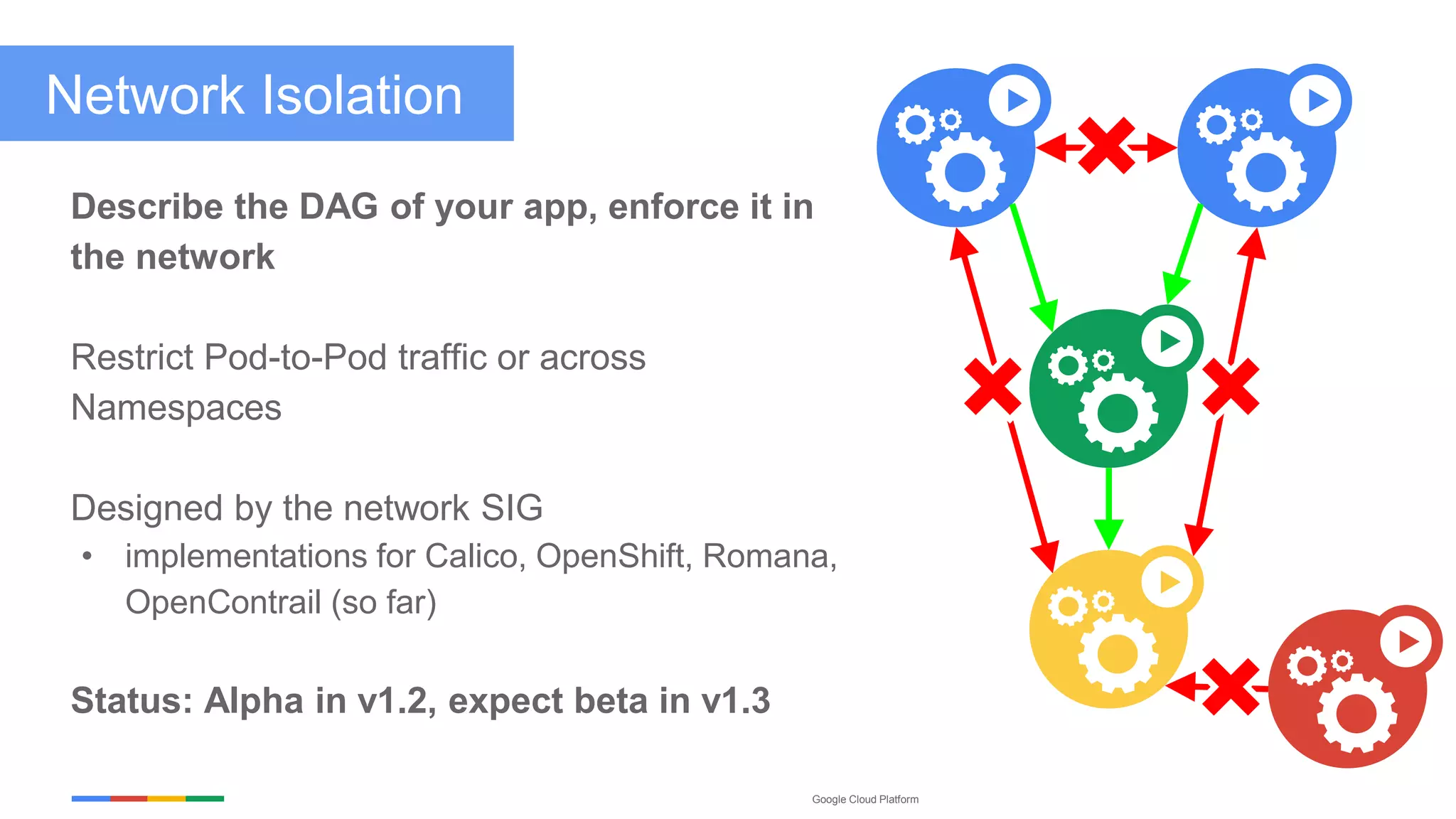

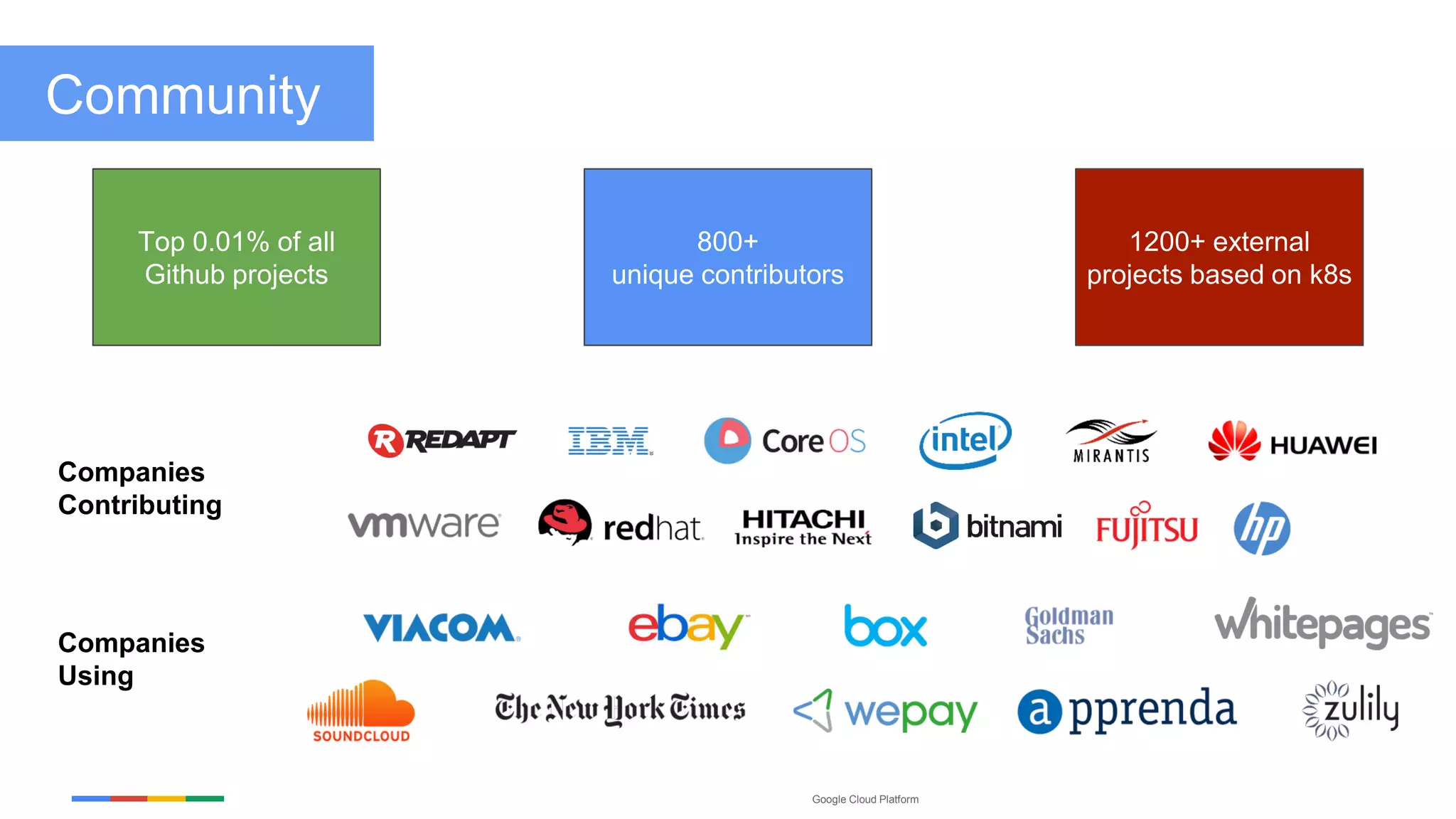

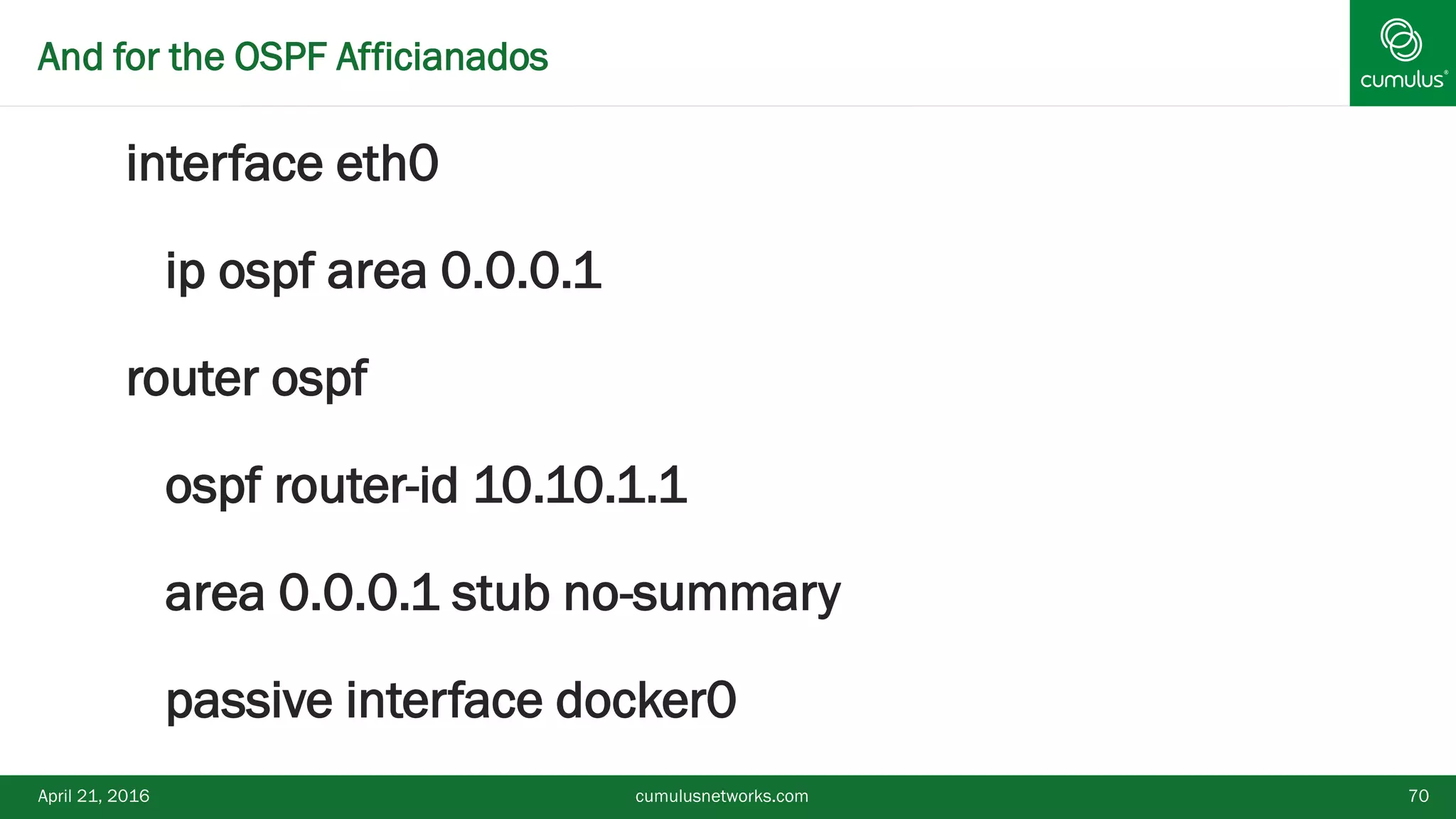

This document traces the evolution of application design and the role of Kubernetes in modern networking, and argues for a pure Layer 3 fabric for deploying applications. It highlights Kubernetes' ease of use, scalability, and native support for container networking, and emphasizes the simplicity that modern routing protocols bring. It also examines open networking solutions and underscores the importance of robust, scalable designs in contemporary data centers.