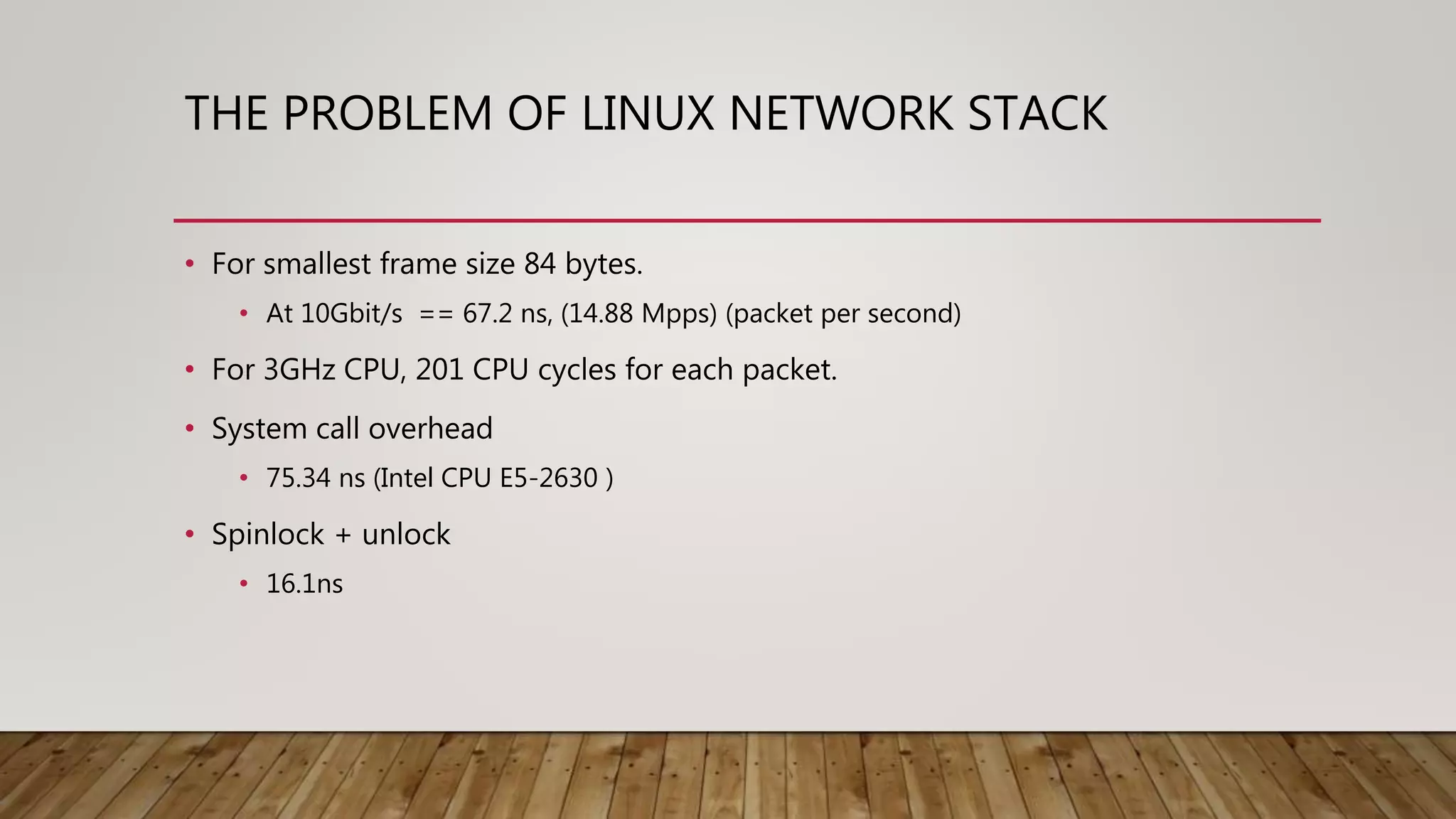

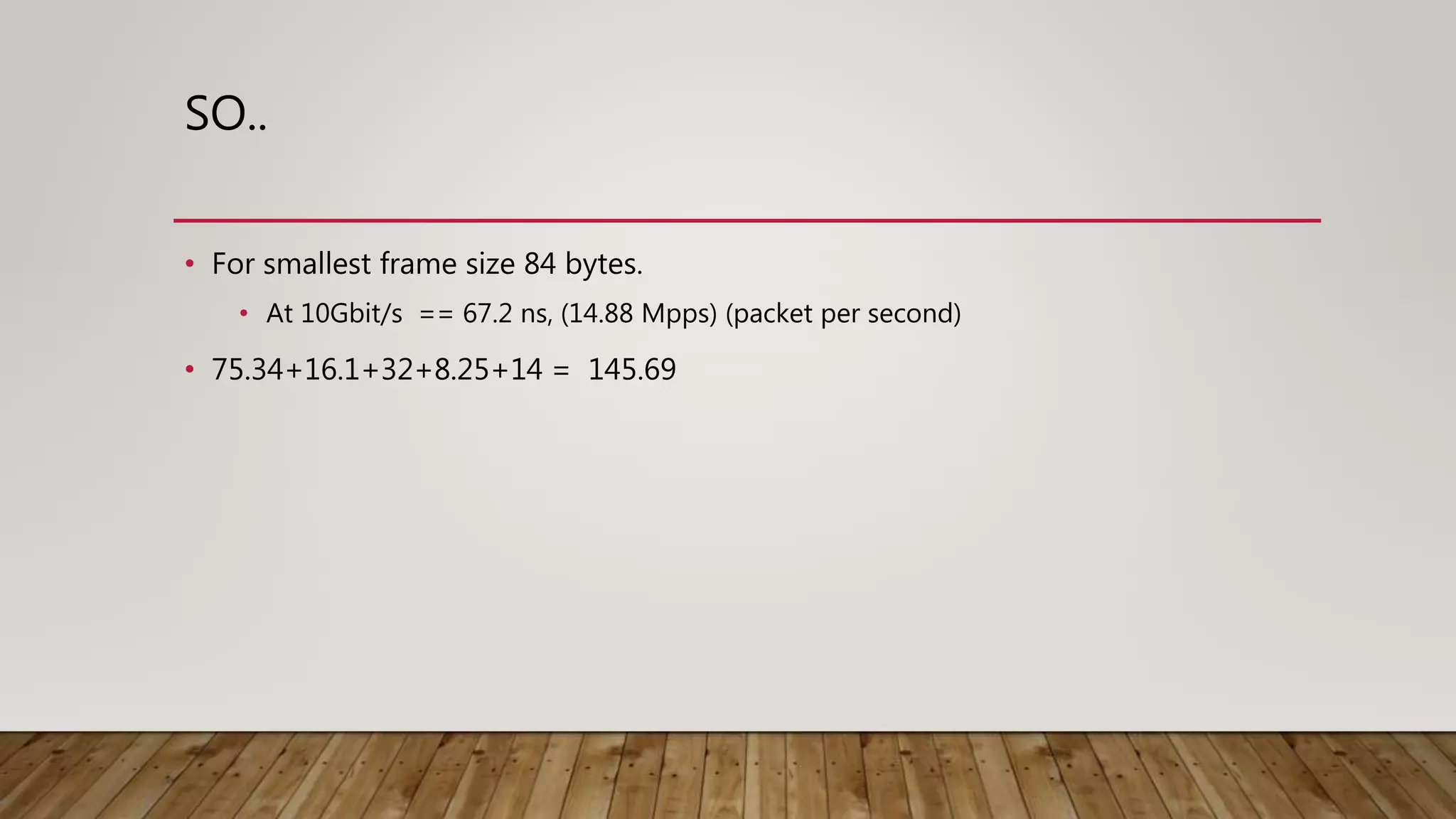

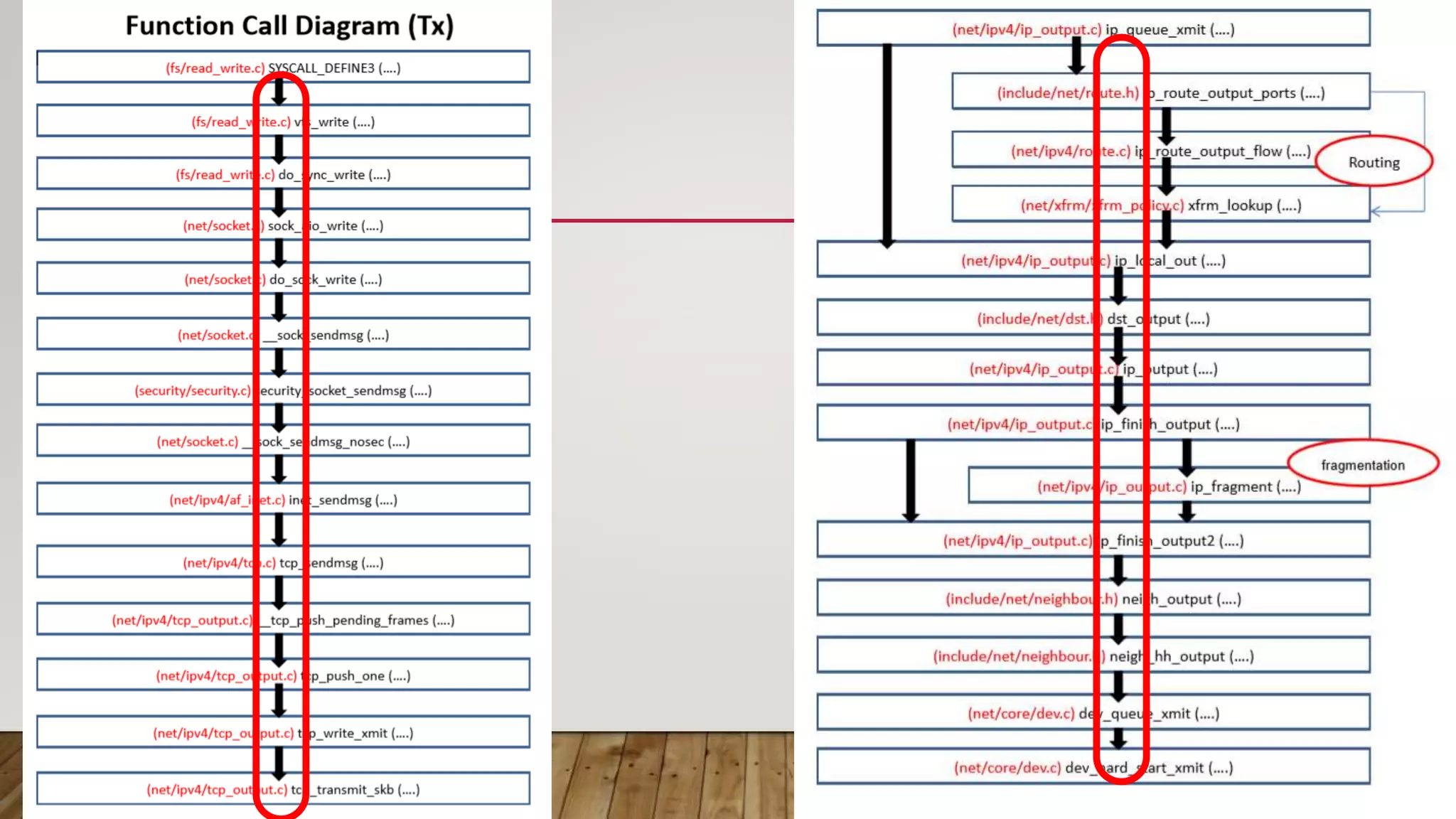

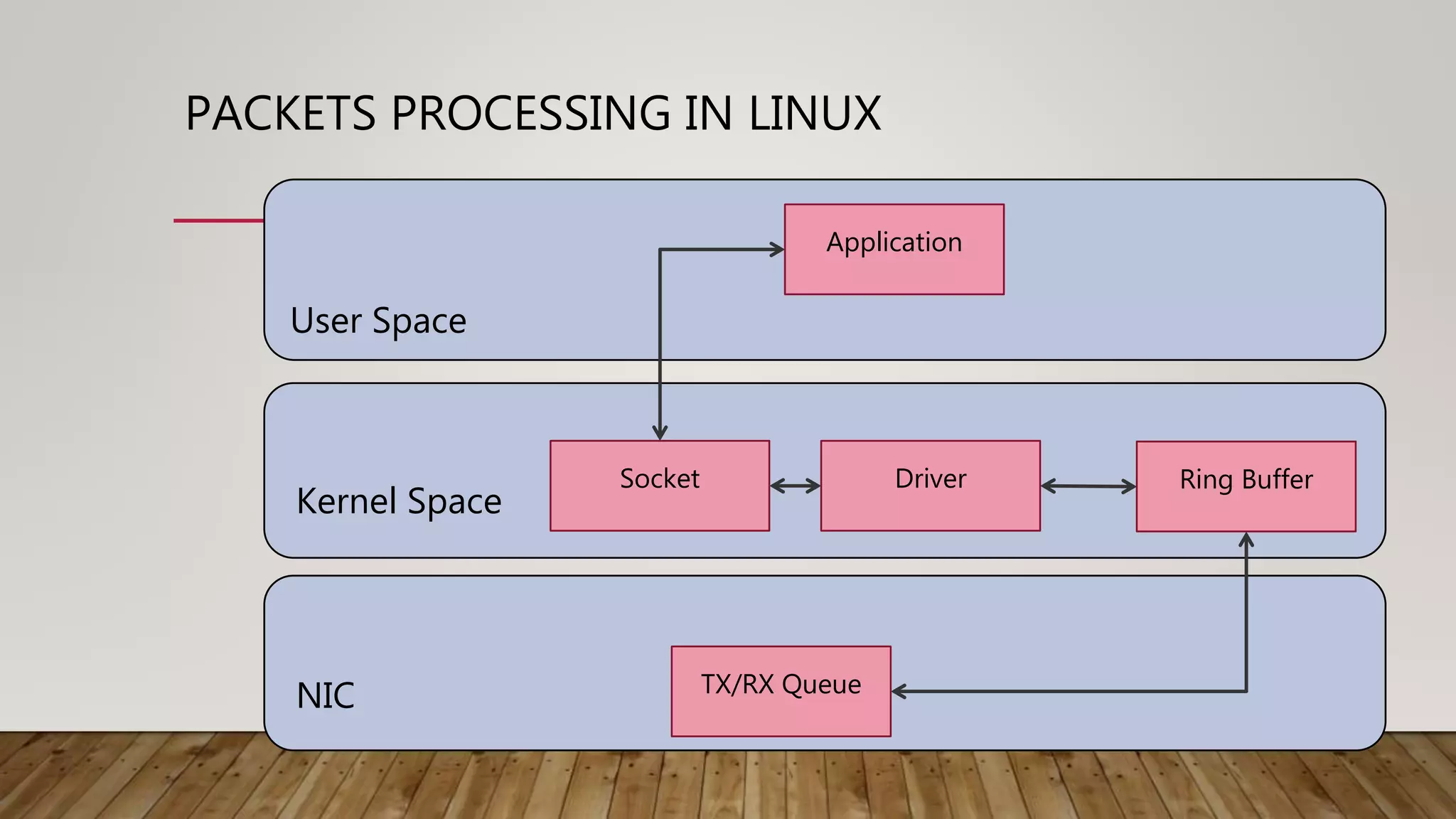

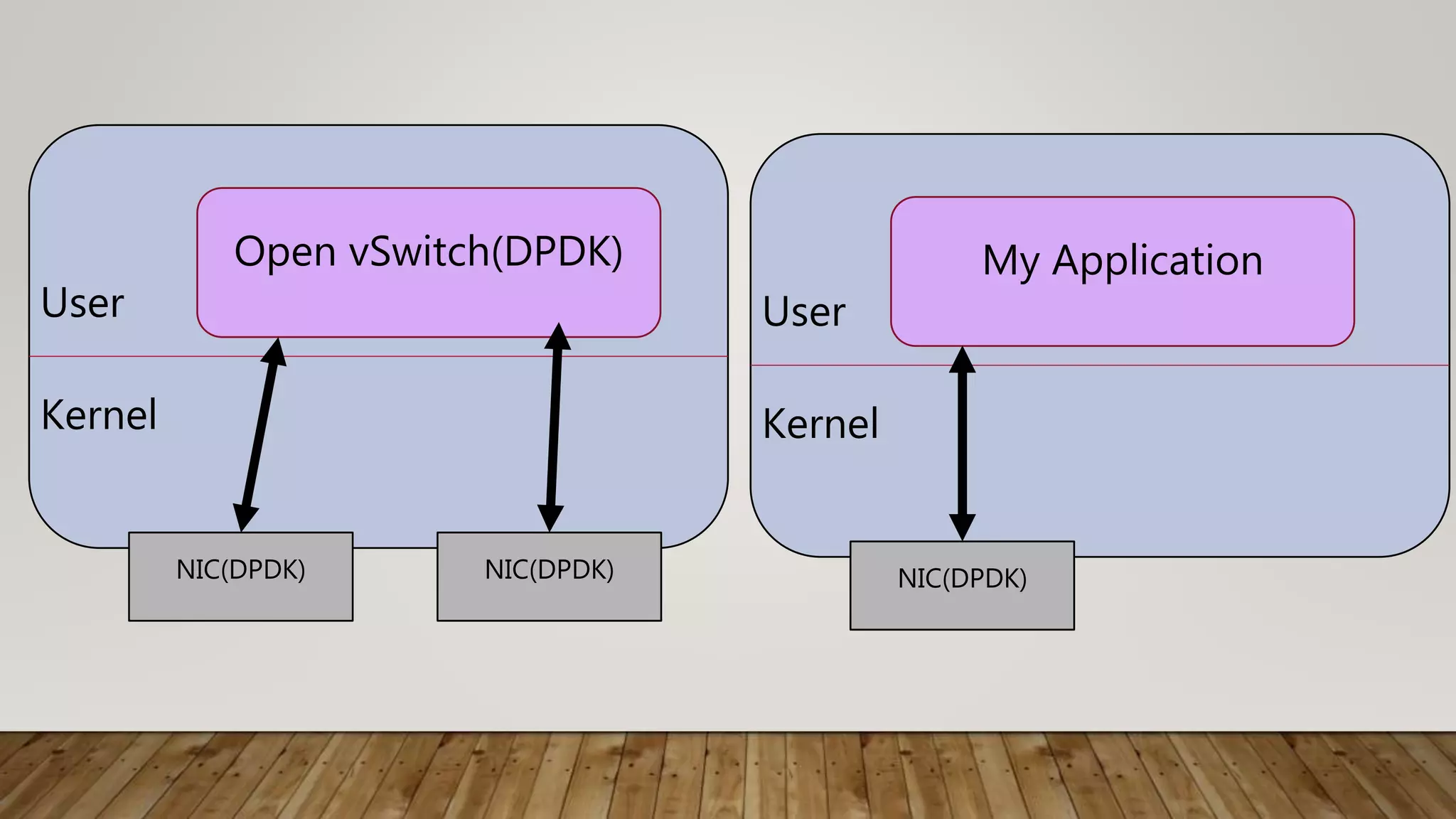

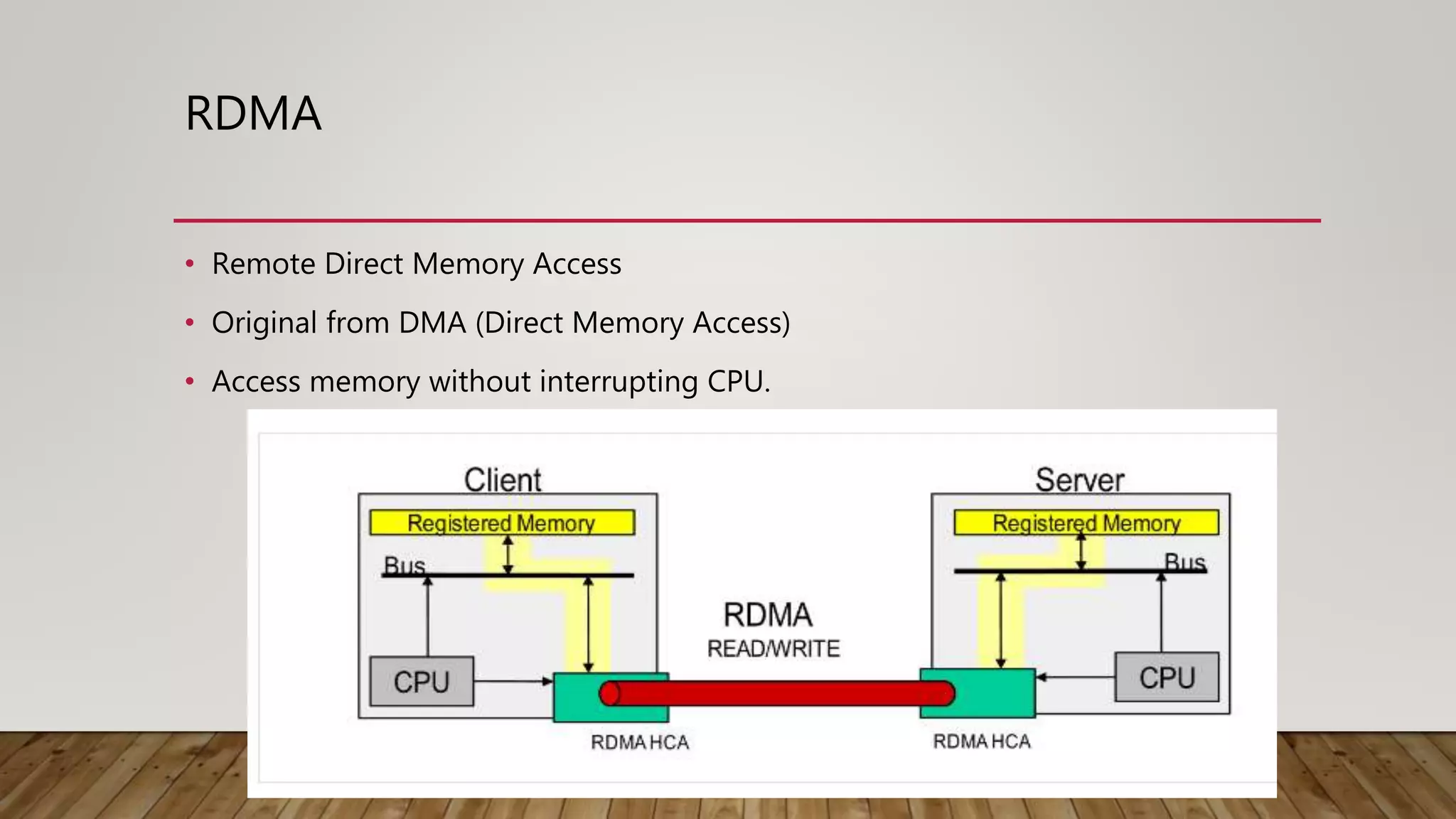

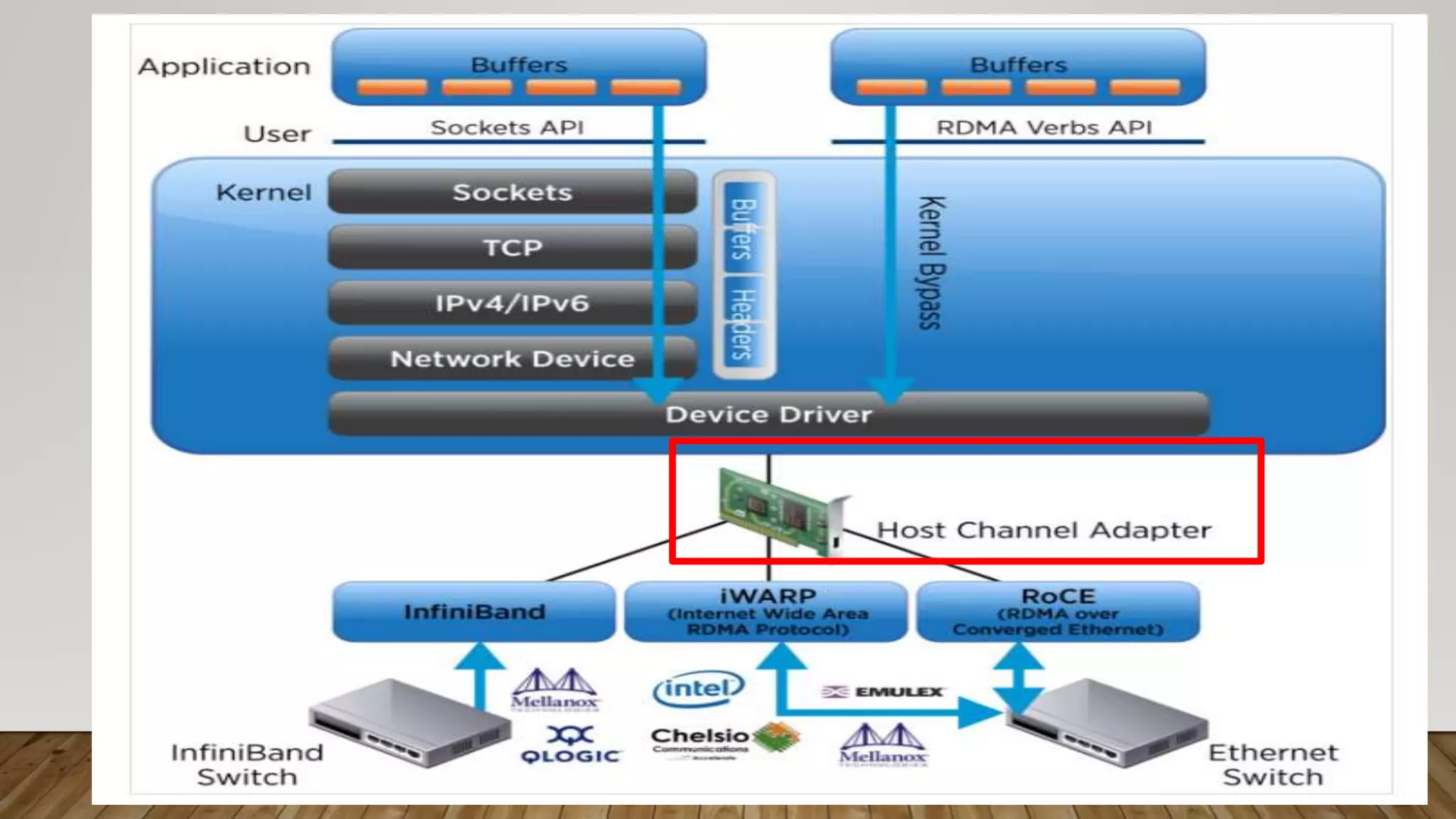

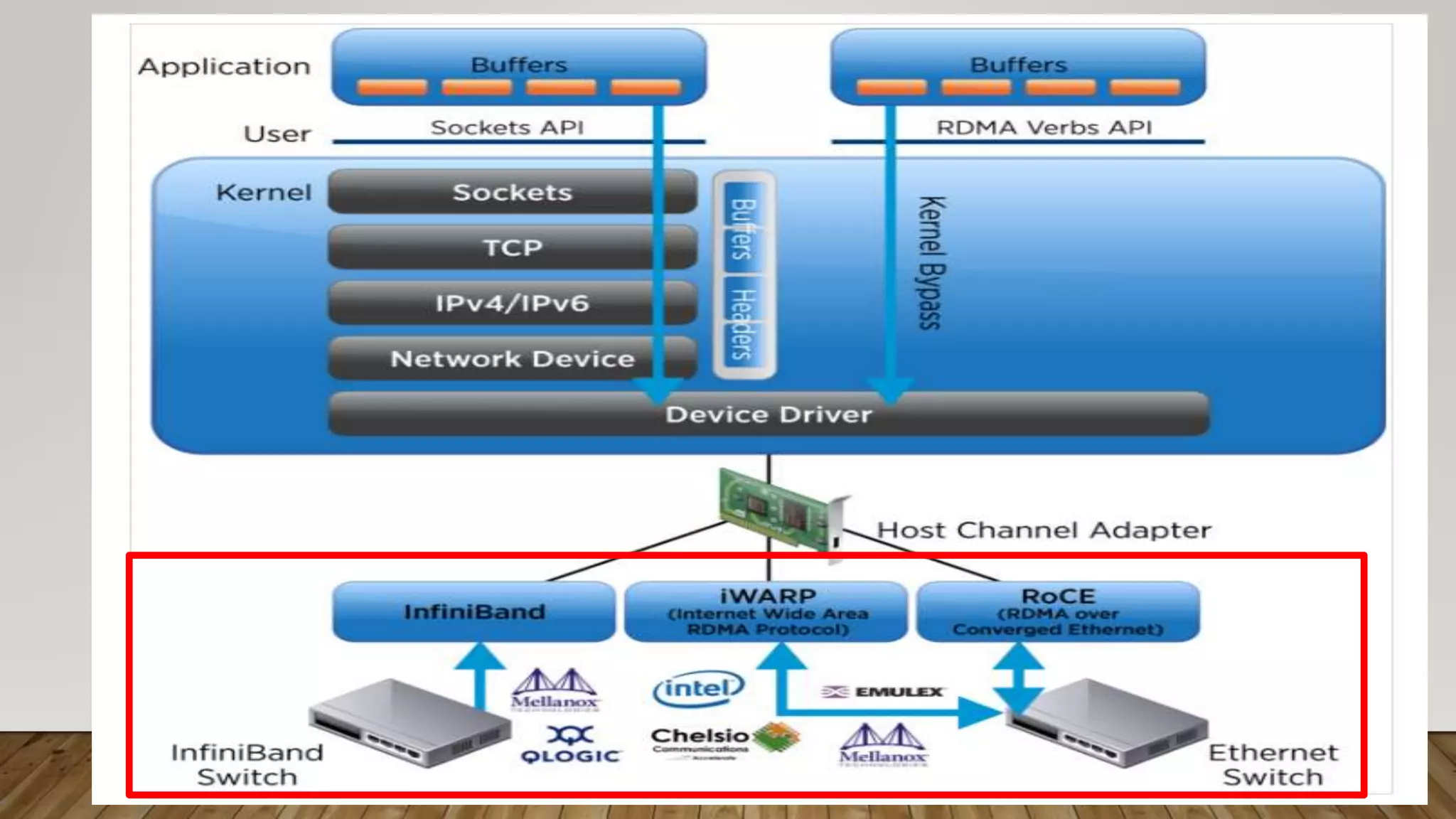

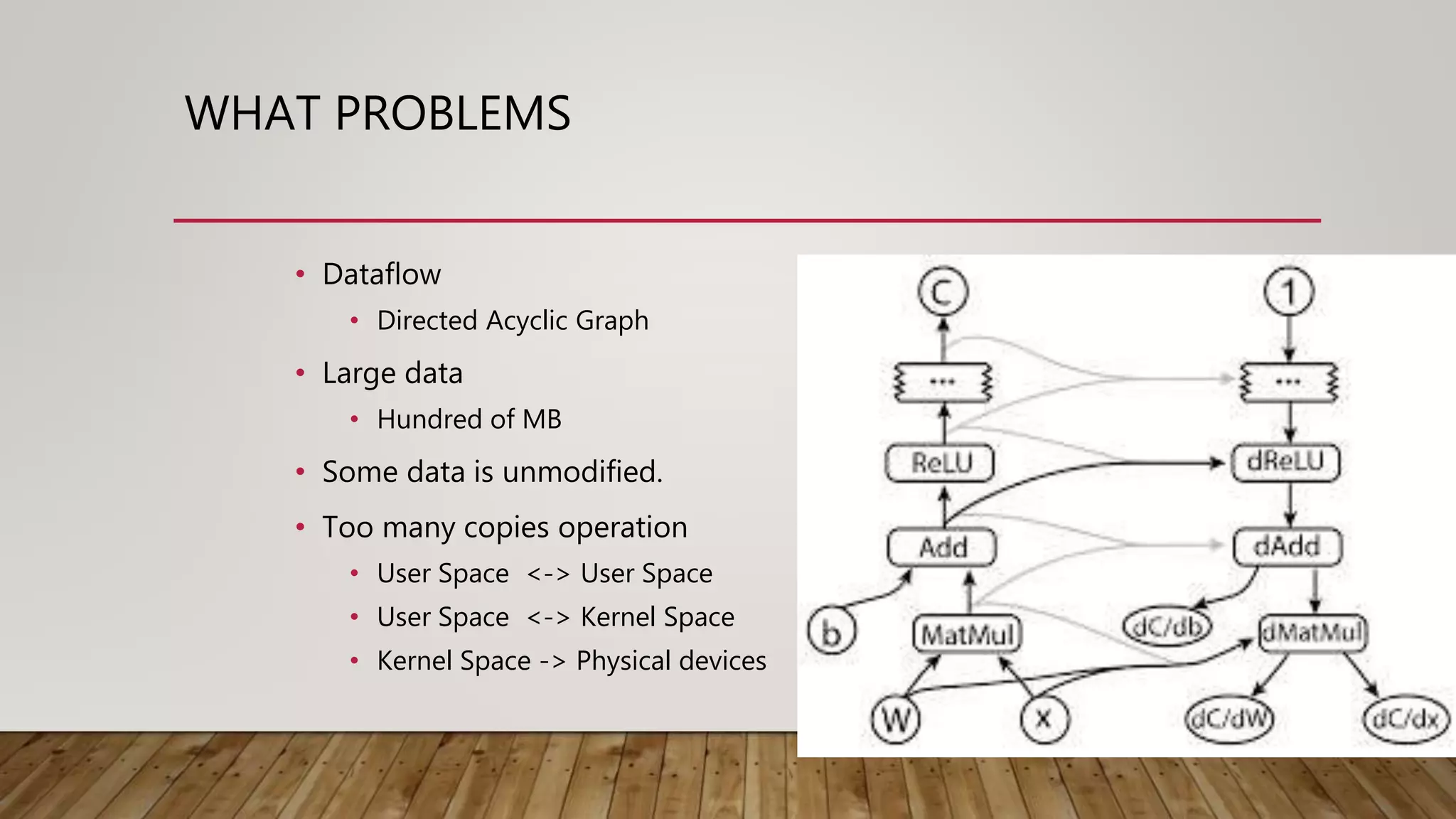

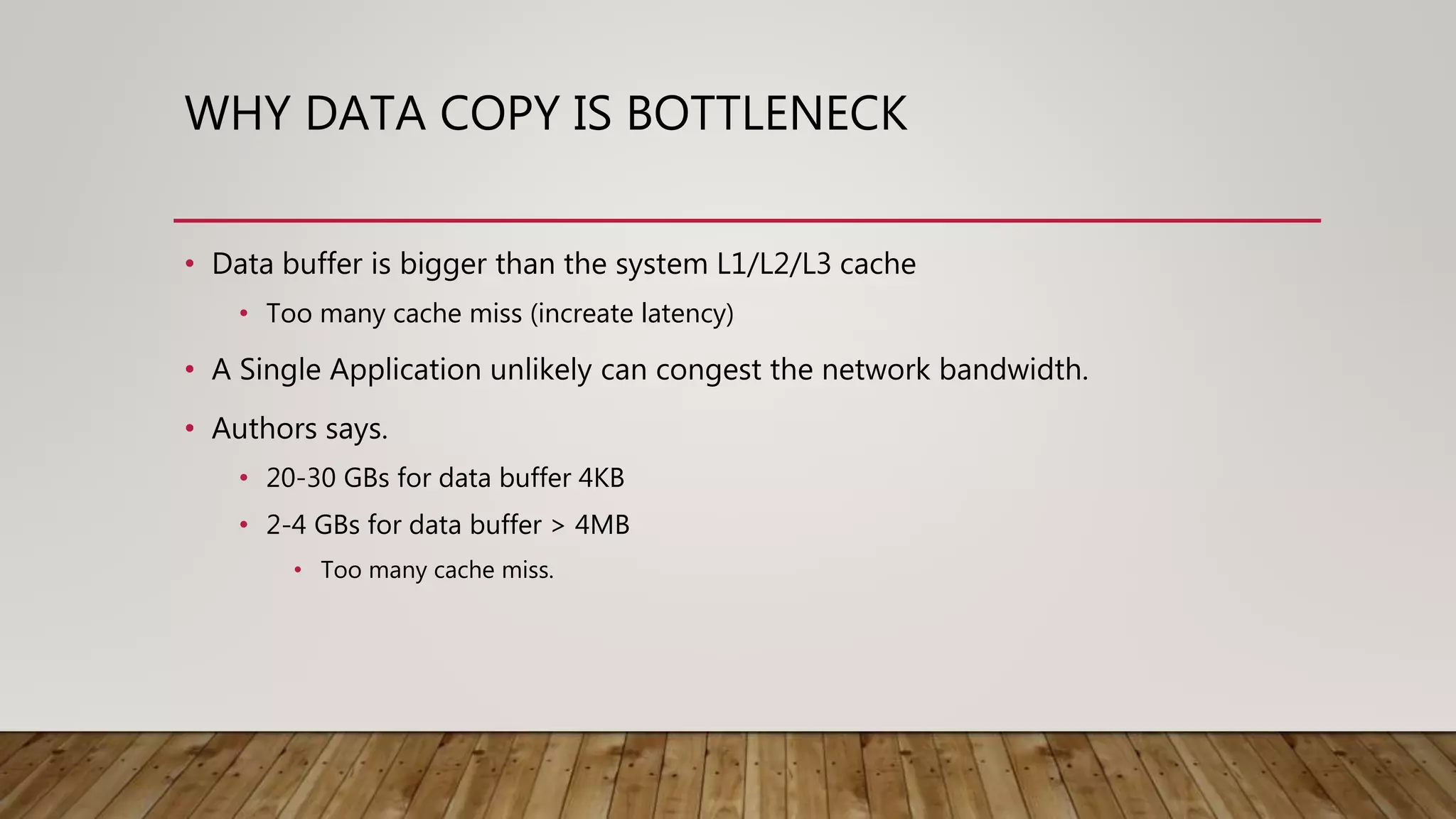

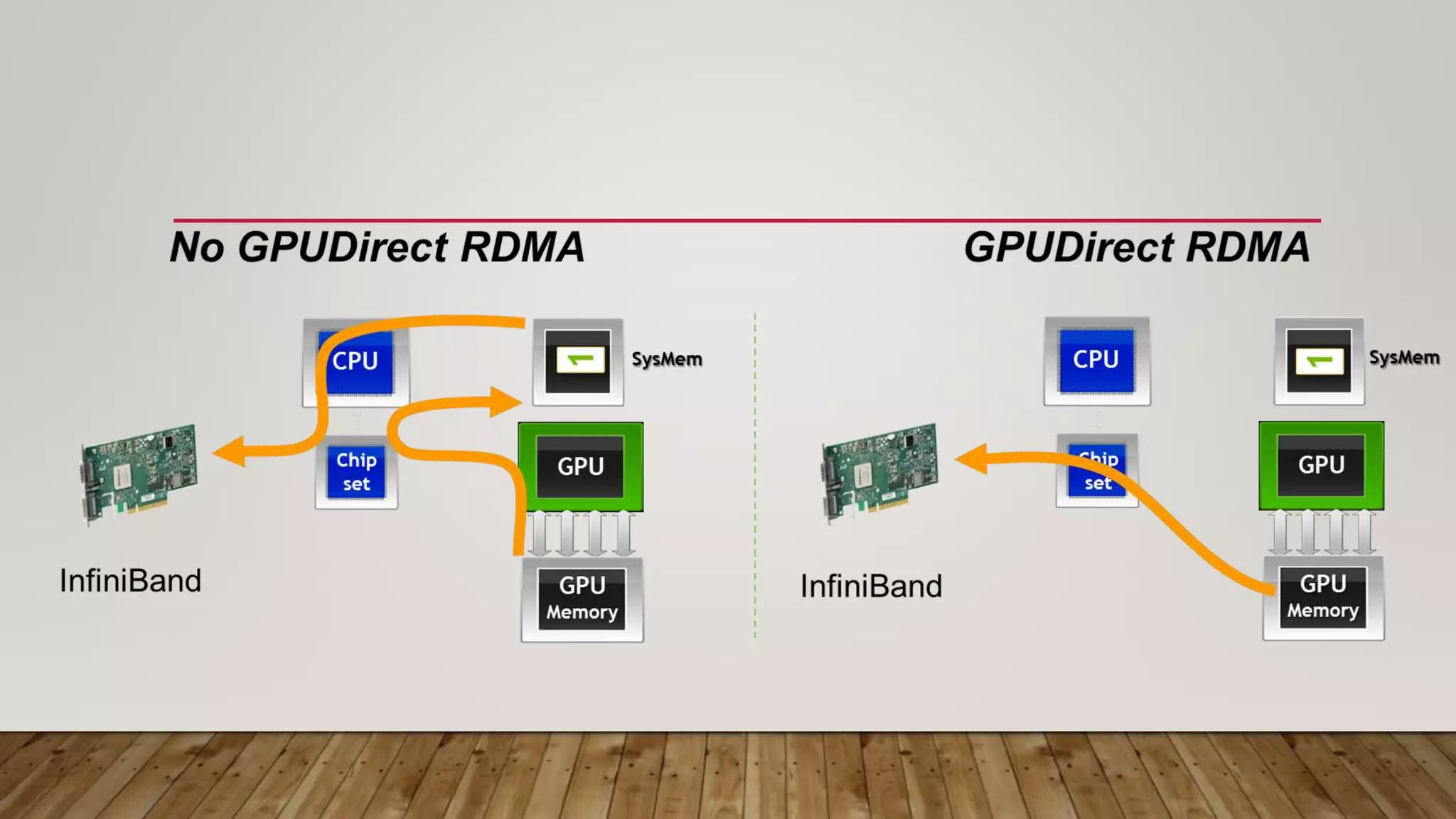

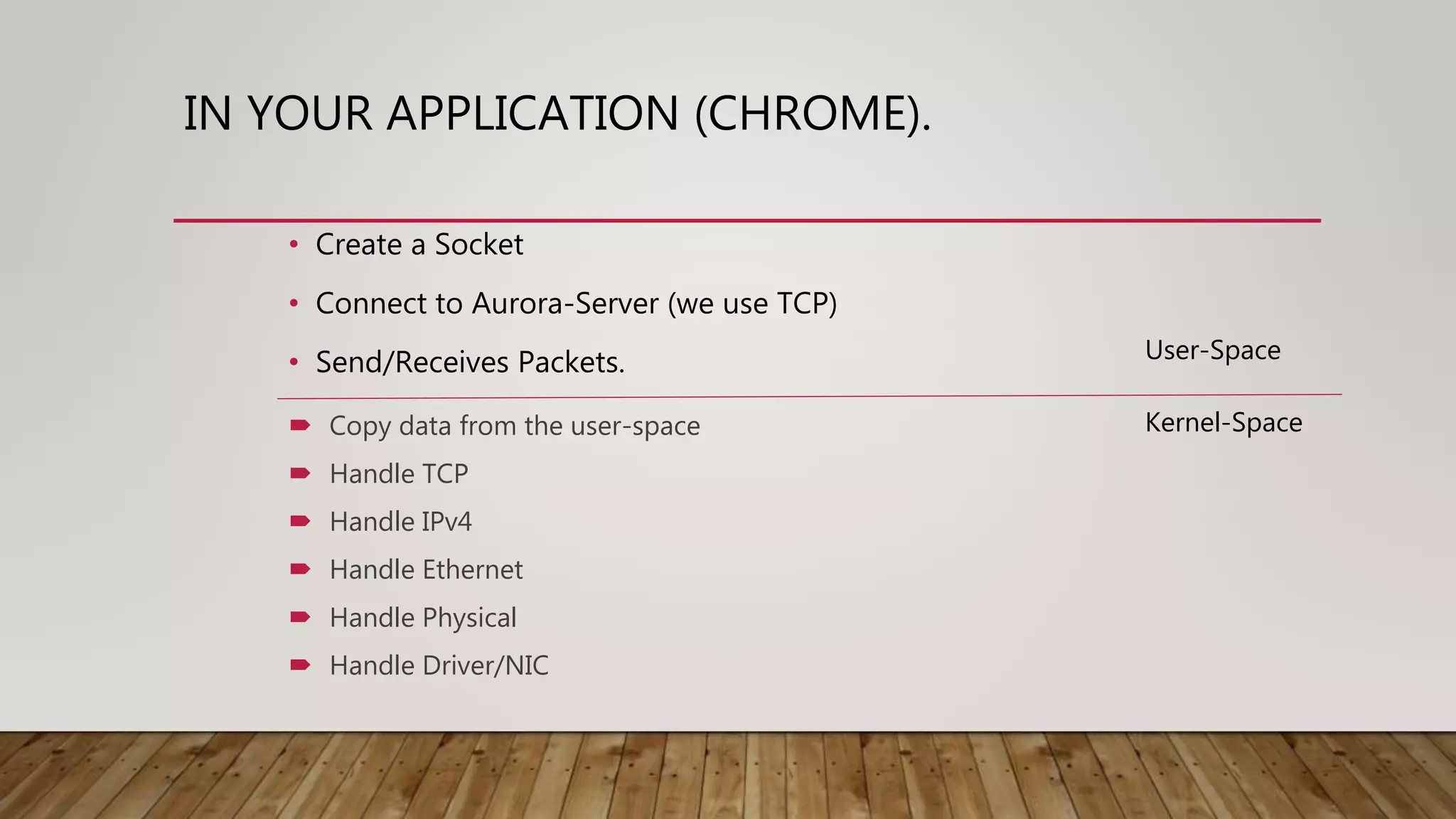

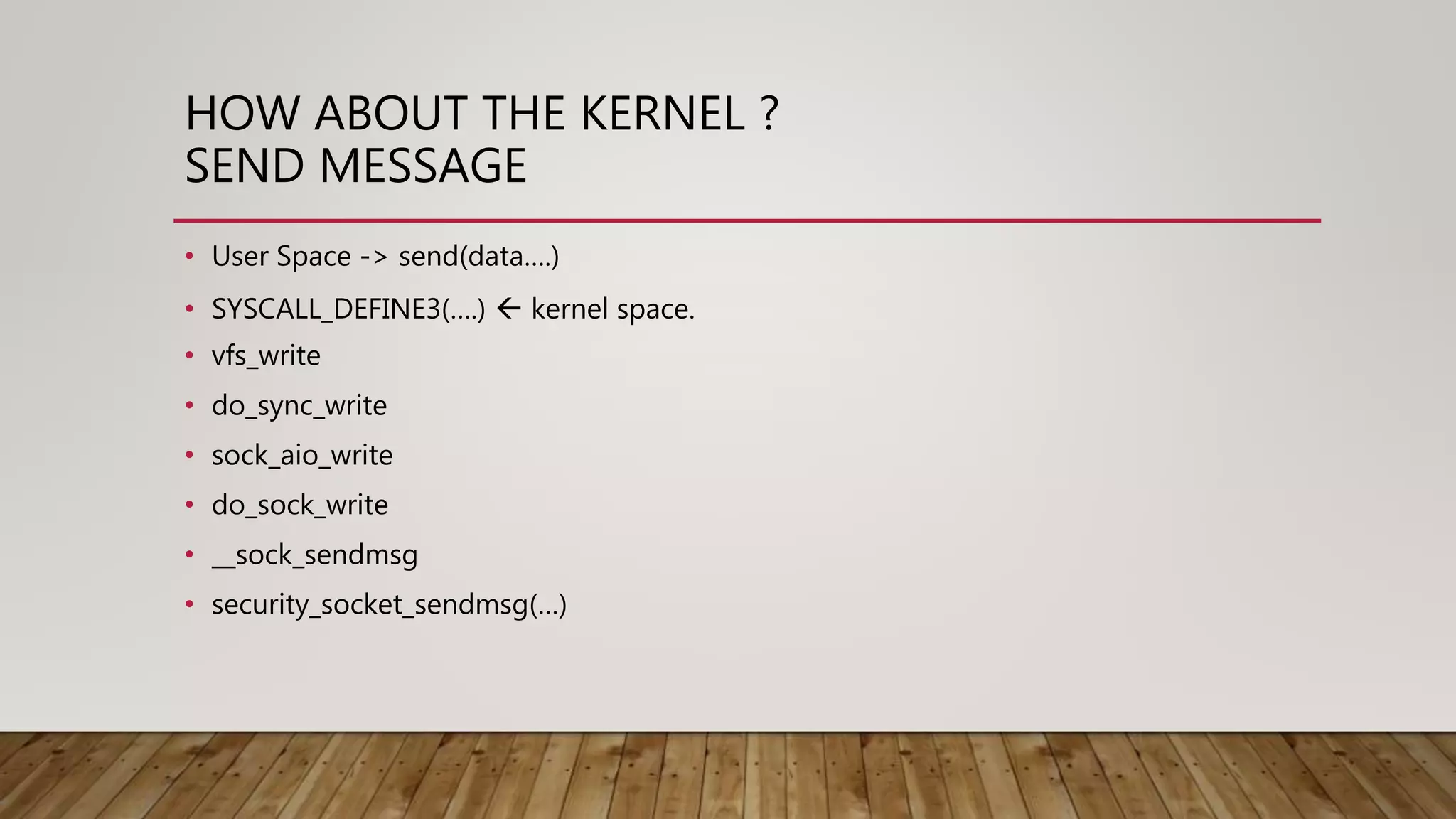

This document summarizes a presentation on high-performance networking. It describes the drawbacks of the current Linux network stack, including kernel overhead and data copying, and then discusses approaches such as DPDK and RDMA that improve performance by reducing that overhead and enabling zero-copy data transfers. It closes with a case study on using RDMA to improve TensorFlow performance by eliminating unnecessary data copies between devices.
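The data-copying cost mentioned above can be illustrated without DPDK or RDMA hardware. The sketch below is neither of those techniques: it uses the kernel's ordinary `sendfile` path through Python's `socket.sendfile()`, only to show what skipping the user-space copy looks like. The helper names (`send_file_with_copies`, `send_file_in_kernel`, `recv_exact`) are hypothetical and chosen for this illustration.

```python
import os
import socket
import tempfile


def send_file_with_copies(sock, path, chunk=4096):
    """Classic read/send loop: every chunk passes through a user-space buffer."""
    total = 0
    with open(path, "rb") as f:
        while True:
            buf = f.read(chunk)   # copy: kernel page cache -> user buffer
            if not buf:
                break
            sock.sendall(buf)     # copy: user buffer -> kernel socket buffer
            total += len(buf)
    return total


def send_file_in_kernel(sock, path):
    """Hand the file to the kernel; Python falls back to send() where
    os.sendfile is unavailable, so the saving is platform dependent."""
    with open(path, "rb") as f:
        return sock.sendfile(f)


def recv_exact(sock, n):
    """Read exactly n bytes from a stream socket."""
    parts = []
    while n > 0:
        data = sock.recv(n)
        if not data:
            raise ConnectionError("peer closed early")
        parts.append(data)
        n -= len(data)
    return b"".join(parts)


if __name__ == "__main__":
    payload = os.urandom(8192)
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(payload)
    a, b = socket.socketpair()  # connected stream sockets, no network needed
    try:
        for sender in (send_file_with_copies, send_file_in_kernel):
            sent = sender(a, tmp.name)
            assert recv_exact(b, sent) == payload
            print(sender.__name__, "sent", sent, "bytes")
    finally:
        a.close()
        b.close()
        os.unlink(tmp.name)
```

Both functions deliver the same bytes; the difference is only where the data travels. DPDK and RDMA go further by bypassing the kernel network stack itself, which this sketch does not attempt.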

![THE PROBLEM OF TCP

• Designed for WAN environments

• Today's hardware differs from the hardware TCP was designed for

• Modify the implementation of TCP to improve its performance

  • DCTCP (Data Center TCP)

  • MPTCP (Multipath TCP)

  • Google BBR (modified congestion control algorithm)

• New protocol

  • [Paper walkthrough]

  • Re-architecting datacenter networks and stacks for low latency and high performance](https://image.slidesharecdn.com/highperformacenetwork-180210021741/75/High-performace-network-of-Cloud-Native-Taiwan-User-Group-19-2048.jpg)
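As a rough illustration of the "modify TCP" options on the slide above, Linux lets a program choose the congestion control algorithm per socket through the `TCP_CONGESTION` socket option. The following is a minimal sketch, assuming a Linux host; whether `bbr` or `dctcp` is actually available depends on the kernel modules loaded and on the allowed-algorithm list, so the function falls back to whatever the system default is. The function name `set_congestion_control` is hypothetical.

```python
import socket


def set_congestion_control(sock, preferred=("bbr", "dctcp")):
    """Try each algorithm in order and return the name now in effect.

    Returns None where the TCP_CONGESTION option does not exist
    (it is Linux-specific).
    """
    opt = getattr(socket, "TCP_CONGESTION", None)
    if opt is None:
        return None
    for name in preferred:
        try:
            sock.setsockopt(socket.IPPROTO_TCP, opt, name.encode())
            break  # the kernel accepted this algorithm
        except OSError:
            continue  # not loaded or not permitted; try the next one
    # Read back the active algorithm; the kernel returns a NUL-padded name.
    raw = sock.getsockopt(socket.IPPROTO_TCP, opt, 16)
    return raw.split(b"\x00", 1)[0].decode()


if __name__ == "__main__":
    s = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        print("congestion control in effect:", set_congestion_control(s))
    finally:
        s.close()
```

Reading the option back matters because a failed `setsockopt` leaves the default (often `cubic`) in place, and the caller should know which algorithm the connection will really use.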