Web scraping with nutch solr part 2

Part 2 of a three-part presentation showing how Nutch and Solr may be used to crawl the web, extract data, and prepare it for loading into a data warehouse.

More from Mike Frampton

Apache Airavata

This presentation gives an overview of the Apache Airavata project. It explains Apache Airavata in terms of its architecture, data models and user interface.

Links for further information and connecting

http://www.amazon.com/Michael-Frampton/e/B00NIQDOOM/

https://nz.linkedin.com/pub/mike-frampton/20/630/385

https://open-source-systems.blogspot.com/

Apache MADlib AI/ML

This presentation gives an overview of the Apache MADlib AI/ML project. It explains Apache MADlib AI/ML in terms of its functionality, its architecture and its dependencies, and also gives an SQL example.

Apache MXNet AI

This presentation gives an overview of the Apache MXNet AI project. It explains Apache MXNet AI in terms of its architecture, ecosystem, languages and the generic problems that the architecture attempts to solve.

Apache Gobblin

This presentation gives an overview of the Apache Gobblin project. It explains Apache Gobblin in terms of its architecture, data sources/sinks and its work unit processing.

Apache Singa AI

This presentation gives an overview of the Apache Singa AI project. It explains Apache Singa in terms of its architecture, distributed training and functionality.

Apache Ranger

This presentation gives an overview of the Apache Ranger project. It explains Apache Ranger in terms of its architecture, security, audit and plugin features.

OrientDB

This presentation gives an overview of the OrientDB database project. It explains OrientDB in terms of its functionality, indexing and architecture. It examines the ETL functionality as well as the UI available.

Prometheus

This presentation gives an overview of the Prometheus project. It explains Prometheus in terms of its visualisation, time series processing capabilities and architecture. It also examines its query language, PromQL.

Apache Tephra

This presentation gives an overview of the Apache Tephra project. It explains Tephra in terms of Phoenix, HBase and HDFS. It examines the project architecture and configuration.

Apache Kudu

This presentation gives an overview of the Apache Kudu project. It explains the Kudu project in terms of its architecture, schema, partitioning and replication. It also provides an example deployment scale.

Apache Bahir

This presentation gives an overview of the Apache Bahir project. It explains the Bahir project in terms of its Spark and Flink extensions and why it is useful and important.

Apache Arrow

This presentation gives an overview of the Apache Arrow project. It explains the Arrow project in terms of its in-memory structure, its purpose, language interfaces and supporting projects.

JanusGraph DB

This presentation gives an overview of the JanusGraph DB project. It explains the JanusGraph database in terms of its architecture, storage backends, capabilities and community.

Apache Ignite

This presentation gives an overview of the Apache Ignite project. It explains Ignite in relation to its architecture, scalability, caching, data grid and machine learning abilities.

Apache Samza

This presentation gives an overview of the Apache Samza project. It explains Samza's stream processing capabilities as well as its architecture, users, use cases etc.

Apache Flink

This presentation gives an overview of the Apache Flink project. It explains Flink in terms of its architecture, use cases and the manner in which it works.

Apache Edgent

This presentation gives an overview of the Apache Edgent project. It explains Edgent in terms of edge-of-network IoT analytics. It also explains the Edgent API, cookbook and console.

Apache CouchDB

This presentation gives an overview of the Apache CouchDB project. It explains CouchDB architecture in relation to replication, usage, its UI and the platforms it is available for.


This talk is aimed at encouraging a more independent approach to using PHP frameworks, moving towards a more flexible and future-proof approach to PHP development.

Encryption in Microsoft 365 - ExpertsLive Netherlands 2024

In this session I delve into the encryption technology used in Microsoft 365 and Microsoft Purview. Including the concepts of Customer Key and Double Key Encryption.

SAP Sapphire 2024 - ASUG301 building better apps with SAP Fiori.pdf

Building better applications for business users with SAP Fiori.

• What is SAP Fiori and why it matters to you

• How a better user experience drives measurable business benefits

• How to get started with SAP Fiori today

• How SAP Fiori elements accelerates application development

• How SAP Build Code includes SAP Fiori tools and other generative artificial intelligence capabilities

• How SAP Fiori paves the way for using AI in SAP apps

Web scraping with nutch solr part 2

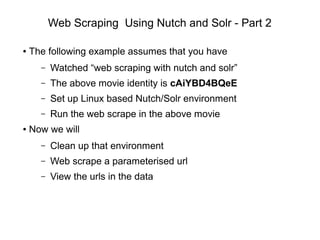

- 1. Web Scraping Using Nutch and Solr - Part 2
  - The following example assumes that you have
    - Watched "web scraping with nutch and solr" (video identity cAiYBD4BQeE)
    - Set up a Linux-based Nutch/Solr environment
    - Run the web scrape shown in that video
  - Now we will
    - Clean up that environment
    - Web scrape a parameterised url
    - View the urls in the data

- 2. Empty Nutch Database
  - Clean up the Nutch crawl database
    - We previously used apache-nutch-1.6/nutch_start.sh
    - This contained the `-dir crawl` option
    - This created the apache-nutch-1.6/crawl directory
    - Which contains our Nutch data
  - Clean this with
    - `cd apache-nutch-1.6; rm -rf crawl`
  - Only do this because the directory contained dummy data!
  - The next run of the script will create the directory again

- 3. Empty Solr Database
  - Clean the Solr database via its update url
    - Keep this command to hand
    - Only use it if you need to empty your data
  - Run the following with curl (with the Solr server running); the `-d` option posts the delete request to the url
    - `curl 'http://localhost:8983/solr/update?commit=true' -H 'Content-Type: text/xml' -d '<delete><query>*:*</query></delete>'`
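The delete request above can also be sketched in Python using only the standard library. This is a minimal sketch, not part of the original slides: the function names are hypothetical, and the `Content-Type` header is an assumption about what the Solr update handler expects for an XML body. The url and the delete query are taken from the slide.

```python
import urllib.request

# Both values are taken from the slide.
SOLR_UPDATE_URL = "http://localhost:8983/solr/update?commit=true"
DELETE_ALL_XML = "<delete><query>*:*</query></delete>"


def build_delete_all_request(update_url=SOLR_UPDATE_URL):
    """Build (but do not send) a POST request that deletes every document."""
    return urllib.request.Request(
        update_url,
        data=DELETE_ALL_XML.encode("utf-8"),
        # Assumption: the XML body is declared explicitly via Content-Type.
        headers={"Content-Type": "text/xml; charset=utf-8"},
        method="POST",
    )


def empty_solr_index(update_url=SOLR_UPDATE_URL):
    """Send the delete request. Requires a running Solr server."""
    request = build_delete_all_request(update_url)
    with urllib.request.urlopen(request, timeout=30) as response:
        return response.status
```

Building the request separately from sending it makes it possible to inspect exactly what would be posted before any data is removed.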

- 4. Set up Nutch
  - Now we will do something more complex
  - Web scrape a url that has parameters, i.e.
    - `http://<site>/<function>?var1=val1&var2=val2`
  - This web scrape will
    - Have the extra url characters `?`, `=` and `&`
    - Need greater search depth
    - Need better url filtering
  - Remember that you need to get permission to scrape a third-party web site
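To make the structure of a parameterised url concrete, the following minimal sketch splits one into the site, function and parameter parts named on the slide. The `describe` helper and the `example.com` host are hypothetical and used only for illustration.

```python
from urllib.parse import parse_qs, urlsplit


def describe(url):
    """Split a parameterised url into its site, function and parameters."""
    parts = urlsplit(url)
    # parse_qs returns a list per key; keep the first value of each.
    params = {key: values[0] for key, values in parse_qs(parts.query).items()}
    return {
        "site": parts.netloc,
        "function": parts.path.lstrip("/"),
        "params": params,
    }


if __name__ == "__main__":
    print(describe("http://example.com/Search?var1=val1&var2=val2"))
```

The `?` starts the parameter list, each `=` joins a name to its value, and `&` separates the pairs, which is why these three characters must be allowed through the url filter.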

- 5. Nutch Configuration
  - Change the seed file for Nutch
    - apache-nutch-1.6/urls/seed.txt
  - In this instance I will use a url of the form
    - `http://somesite.co.nz/Search?DateRange=7&industry=62`
    - (this is not a real url, just an example)
  - Change the conf/regex-urlfilter.txt entries, i.e.
    - `# skip URLs containing certain characters`
    - `-[*!@]`
    - `# accept anything else`
    - `+^http://([a-z0-9]*\.)*somesite\.co\.nz/Search`
  - The skip rule no longer lists `?` and `=` (the default is `-[?*!@=]`), so parameterised urls are not rejected
  - This will only consider somesite Search urls
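The effect of these filter rules can be checked before a crawl with a small sketch that imitates how Nutch reads regex-urlfilter.txt: rules are tried in file order, the first pattern found in the url decides, and a url that matches no rule is ignored. This is an approximation in Python rather than Nutch's own Java filter, the `accepts` helper is hypothetical, and the dots in the host name are escaped here so that they match literally.

```python
import re

# Rules in file order as (sign, pattern): "-" skips the url, "+" accepts it.
RULES = [
    ("-", r"[*!@]"),
    ("+", r"^http://([a-z0-9]*\.)*somesite\.co\.nz/Search"),
]

# The default skip rule, which also rejects "?" and "=".
DEFAULT_SKIP_RULE = ("-", r"[?*!@=]")


def accepts(url, rules=RULES):
    """Return True if the first matching rule accepts the url."""
    for sign, pattern in rules:
        if re.search(pattern, url):
            return sign == "+"
    return False  # no rule matched, so the url is ignored


if __name__ == "__main__":
    seed = "http://somesite.co.nz/Search?DateRange=7&industry=62"
    print(accepts(seed))
    print(accepts(seed, [DEFAULT_SKIP_RULE, RULES[1]]))
```

Running the seed url against both rule sets shows why the skip rule had to change: with the default rule the `?` and `=` in the seed url cause it to be rejected before the accept rule is reached.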

- 6. Run Nutch
  - Now run Nutch using the start script
    - `cd apache-nutch-1.6 ; ./nutch_start.bash`
  - Monitor the Solr admin log window for errors
  - The Nutch crawl should end with
    - `crawl finished: crawl`

- 7. Checking Data
  - The data should now have been indexed in Solr
  - In the Solr Admin window
    - Set 'Core Selector' = collection1
    - Click 'Query'
    - In the Query window set the fl field = url
    - Click Execute Query
  - The result (next slide) shows the filtered list of urls in Solr
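The same check can be scripted instead of clicked through. The sketch below builds a query that returns only the `url` field and extracts the urls from a JSON response. It assumes the core is reachable at `/solr/collection1/select` on the local server and that the response has the usual `response.docs` layout; the function names are hypothetical.

```python
import json
import urllib.request
from urllib.parse import urlencode

# Assumption: Solr runs locally and serves the collection1 core at this path.
SOLR_SELECT_URL = "http://localhost:8983/solr/collection1/select"


def build_url_query(rows=10):
    """Build a select url matching all documents, returning only 'url'."""
    params = {"q": "*:*", "fl": "url", "wt": "json", "rows": rows}
    return SOLR_SELECT_URL + "?" + urlencode(params)


def extract_urls(response_text):
    """Pull the url field out of each document in a JSON response."""
    docs = json.loads(response_text)["response"]["docs"]
    return [doc["url"] for doc in docs if "url" in doc]


def fetch_urls(rows=10):
    """Run the query against a running Solr server and list the urls."""
    with urllib.request.urlopen(build_url_query(rows), timeout=30) as response:
        return extract_urls(response.read().decode("utf-8"))
```

Calling `fetch_urls()` after the crawl should list only urls that passed the filter, which gives a quick way to confirm that the regex-urlfilter.txt change behaved as intended.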

- 9. Results
  - Congratulations, you have completed your second crawl
    - With parameterised urls
    - With more complex url filtering
    - With a Solr Query search

- 10. Contact Us
  - Feel free to contact us at
    - www.semtech-solutions.co.nz
    - info@semtech-solutions.co.nz
  - We offer IT project consultancy
  - We are happy to hear about your problems
  - You can pay for just the hours that you need to solve your problems