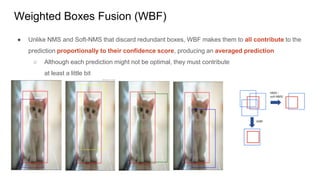

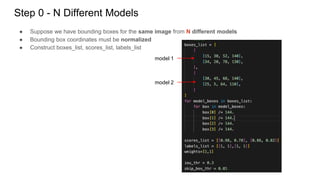

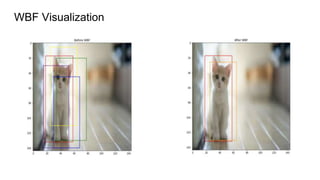

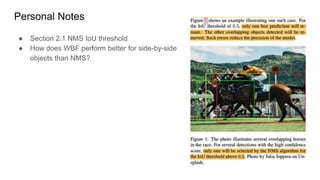

Weighted boxes fusion (WBF) is an ensemble method for object detection that averages bounding box predictions from multiple models rather than discarding redundant boxes. It clusters overlapping boxes, computes a confidence-weighted average box for each cluster, and rescales each fused confidence score by cluster size, so every prediction contributes to the final output. WBF has been shown to outperform non-maximum suppression (NMS) and soft-NMS when ensembling different object detection models or applying test-time augmentation.

![Step 1 - Merge bboxes to B

● Add each predicted box from each model to a single list B

○ Filter boxes with confidence < score_thr

● Sort in decreasing order of the confidence score C

B = [b1, b2, b3, b4]](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-8-320.jpg)
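
Step 1 can be sketched in a few lines of Python. The `(x1, y1, x2, y2, score)` tuple layout and the name `merge_boxes` are assumptions made for illustration; they do not come from the slides or from any particular library.

```python
from typing import List, Tuple

# Hypothetical box layout used only for this sketch: (x1, y1, x2, y2, score).
Box = Tuple[float, float, float, float, float]


def merge_boxes(per_model_boxes: List[List[Box]], score_thr: float) -> List[Box]:
    """Pool the boxes of all models into one list B, drop boxes whose
    confidence is below score_thr, and sort by decreasing confidence."""
    merged = [
        box
        for boxes in per_model_boxes
        for box in boxes
        if box[4] >= score_thr
    ]
    return sorted(merged, key=lambda box: box[4], reverse=True)


# Two models; the 0.05 box falls below the threshold and is dropped.
B = merge_boxes(
    [
        [(0.0, 0.0, 1.0, 1.0, 0.90), (0.0, 0.0, 1.0, 1.0, 0.05)],
        [(0.0, 0.0, 2.0, 2.0, 0.95)],
    ],
    score_thr=0.1,
)
```

Sorting first means that the most confident box of each object is the one that opens its cluster in the later steps.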

![Step 2 - Boxes cluster L / Fused Box F

● Declare an empty list L for boxes clusters - each position of L contains a set of boxes

● Declare an empty list F for fused boxes - each position of F contains a single box

B = [b1, b2, b3, b4]

L = [ ] (2D list)

F = [ ] (1D list)](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-9-320.jpg)

![Step 3 - Loop through B and find a match from F

● Iterate through each box in B and find the best matching box in F

○ Highest IoU with the current box b_i

○ best_iou > iou_thr

B = [b1, b2, b3, b4]

L = [ ]

F = [ ]

B = [b1, b2, b3, b4]

F = [f1, f2, f3]

best_f_idx

best_iou](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-10-320.jpg)
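
The matching in Step 3 might look roughly like the sketch below. The helper names `iou` and `find_best_match`, the tuple layout `(x1, y1, x2, y2, score)`, and the convention of returning `-1` for "no match" are assumptions of this example rather than part of the slides.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float, float]  # assumed: (x1, y1, x2, y2, score)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def find_best_match(box: Box, fused: List[Box], iou_thr: float) -> Tuple[int, float]:
    """Return (best_f_idx, best_iou) for the fused box that overlaps `box`
    the most. best_f_idx is -1 when the best IoU does not exceed iou_thr."""
    best_f_idx, best_iou = -1, 0.0
    for i, f in enumerate(fused):
        overlap = iou(box, f)
        if overlap > best_iou:
            best_f_idx, best_iou = i, overlap
    if best_iou > iou_thr:
        return best_f_idx, best_iou
    return -1, best_iou
```

An empty `F` always yields "no match", which is why the first box in `B` always starts a new cluster.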

![Step 4 - If no match found

● If no match found, add the box from B to the end of lists L and F

● Then, go back to Step 3

● If no box in B ever finds a match, Steps 5 and 6 never run and every box ends up in its own cluster

each position of L contains a set of boxes

B = [b1, b2, b3, b4]

L = [ ]

F = [ ]

B = [b1, b2, b3, b4]

L = [[b1]]

F = [b1]](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-11-320.jpg)

![Step 5 - If match found

● If match found,

○ Add b_i to L at position best_f_idx corresponding to the matching box in F

B = [b1, b2, b3, b4]

L = [[b1, b2]]

F = [b1]

best_f_idx

B = [b1, b2, b3, b4]

L = [[b1]]

F = [b1]

iou > iou_thr](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-12-320.jpg)

![Step 6 - Perform WBF

● Recompute the fused box from the cluster L[best_f_idx], which now has one more box, and store it in F[best_f_idx]

● Fused confidence score = average confidence of all boxes in the cluster

● Fused box coordinates = confidence-weighted average of the coordinates of all boxes in the cluster

B = [b1, b2, b3, b4]

L = [[b1, b2]]

F = [b1]

B = [b1, b2, b3, b4]

L = [[b1, b2]]

F = [f1]](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-13-320.jpg)
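
As a minimal sketch of the fusion in Step 6: coordinates are averaged with the confidence scores as weights, and the fused confidence is the plain mean. The name `fuse_cluster` and the `(x1, y1, x2, y2, score)` layout are assumptions of this example, and the code assumes every score in the cluster is positive.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float, float]  # assumed: (x1, y1, x2, y2, score)


def fuse_cluster(cluster: List[Box]) -> Box:
    """Fuse one cluster into a single box.

    Each coordinate is the average of the cluster's coordinates weighted by
    confidence; the fused confidence is the mean confidence of the cluster.
    """
    total_score = sum(box[4] for box in cluster)
    x1, y1, x2, y2 = (
        sum(box[k] * box[4] for box in cluster) / total_score for k in range(4)
    )
    return (x1, y1, x2, y2, total_score / len(cluster))


# The 0.9 box pulls the fused coordinates three times harder than the 0.3 box.
f1 = fuse_cluster([(0.0, 0.0, 10.0, 10.0, 0.9), (2.0, 2.0, 12.0, 12.0, 0.3)])
```

With these two boxes the fused left edge lands at about 0.5 rather than the unweighted midpoint 1.0, because the more confident box dominates the average.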

![Step 7 - Re-scale confidence scores in F

● After all boxes in B are processed, re-scale each confidence score in F:

○ multiply by min(len(L[i]), N), where len(L[i]) is the number of boxes in cluster i

○ divide by the number of models N

● A fused box supported by many boxes is more likely to be accurate

B = [b1, b2, b3, b4]

L = [[b1, b2, b3], [b4]]

F = [f1, f2]](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-14-320.jpg)
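
The re-scaling in Step 7 amounts to multiplying each fused confidence by `min(len(L[i]), N) / N`. The sketch below assumes the same `(x1, y1, x2, y2, score)` tuple layout as a convenience; `rescale_confidences` is a name made up for this example.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float, float]  # assumed: (x1, y1, x2, y2, score)


def rescale_confidences(
    fused: List[Box], clusters: List[List[Box]], n_models: int
) -> List[Box]:
    """Scale each fused confidence by min(cluster size, N) / N, so that boxes
    backed by few models are penalised."""
    rescaled = []
    for f, cluster in zip(fused, clusters):
        factor = min(len(cluster), n_models) / n_models
        rescaled.append((f[0], f[1], f[2], f[3], f[4] * factor))
    return rescaled


# N = 3 models: the first fused box is backed by three boxes, the second by one.
placeholder = (0.0, 0.0, 1.0, 1.0, 0.5)  # cluster contents do not matter here, only their count
F = [(0.0, 0.0, 1.0, 1.0, 0.8), (5.0, 5.0, 6.0, 6.0, 0.6)]
L = [[placeholder] * 3, [placeholder]]
F_rescaled = rescale_confidences(F, L, n_models=3)
```

Here the first box keeps its confidence of 0.8, while the second drops from 0.6 to about 0.2 because only one of the three models predicted it.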

![[No Match]

B = [b1, b2, b3, b4]

L = [ ]

F = [ ]](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-15-320.jpg)

![[No Match] - Add to L and F

B = [b1, b2, b3, b4]

L = [[b1]]

F = [b1]](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-16-320.jpg)

![[Match]

B = [b1, b2, b3, b4]

L = [[b1]]

F = [b1]

best_f_idx](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-17-320.jpg)

![[Match] - Add to L[best_f_idx]

B = [b1, b2, b3, b4]

L = [[b1, b2]]

F = [b1]

best_f_idx](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-18-320.jpg)

![[Match] - WBF

B = [b1, b2, b3, b4]

L = [[b1, b2]]

F = [f1]](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-19-320.jpg)

![[No Match]

B = [b1, b2, b3, b4]

L = [[b1, b2]]

F = [f1]](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-20-320.jpg)

![[No Match] - Add to L and F

B = [b1, b2, b3, b4]

L = [[b1, b2], [b3]]

F = [f1, b3]](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-21-320.jpg)

![[Match]

B = [b1, b2, b3, b4]

L = [[b1, b2], [b3]]

F = [f1, b3]

best_f_idx](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-22-320.jpg)

![[Match] - Add to L

B = [b1, b2, b3, b4]

L = [[b1, b2, b4], [b3]]

F = [f1, b3]

best_f_idx](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-23-320.jpg)

![[Match] - WBF

B = [b1, b2, b3, b4]

L = [[b1, b2, b4], [b3]]

F = [f1’, b3]](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-24-320.jpg)
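
The walkthrough above can be reproduced end to end with a compact sketch. This is a simplified, single-class illustration under stated assumptions, not the reference implementation: the function name `wbf_sketch`, the `(x1, y1, x2, y2, score)` layout, the concrete box coordinates, and the choice of `iou_thr=0.55` are all picked for this example.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float, float]  # assumed: (x1, y1, x2, y2, score)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def fuse(cluster: List[Box]) -> Box:
    """Confidence-weighted average coordinates, mean confidence."""
    total = sum(b[4] for b in cluster)
    x1, y1, x2, y2 = (sum(b[k] * b[4] for b in cluster) / total for k in range(4))
    return (x1, y1, x2, y2, total / len(cluster))


def wbf_sketch(per_model_boxes: List[List[Box]], iou_thr: float, score_thr: float = 0.0):
    """Single-class sketch of Steps 1-7. Returns (F, L)."""
    n_models = len(per_model_boxes)
    # Step 1: merge, filter, sort by decreasing confidence.
    B = sorted(
        (b for boxes in per_model_boxes for b in boxes if b[4] >= score_thr),
        key=lambda b: b[4],
        reverse=True,
    )
    # Step 2: empty cluster list L and fused-box list F.
    L: List[List[Box]] = []
    F: List[Box] = []
    for box in B:
        # Step 3: best match in F with IoU above the threshold.
        best_f_idx, best_iou = -1, iou_thr
        for i, f in enumerate(F):
            overlap = iou(box, f)
            if overlap > best_iou:
                best_f_idx, best_iou = i, overlap
        if best_f_idx == -1:
            # Step 4: no match, so the box opens a new cluster.
            L.append([box])
            F.append(box)
        else:
            # Steps 5 and 6: extend the cluster and recompute its fused box.
            L[best_f_idx].append(box)
            F[best_f_idx] = fuse(L[best_f_idx])
    # Step 7: re-scale confidences by min(cluster size, N) / N.
    F = [(*f[:4], f[4] * min(len(c), n_models) / n_models) for f, c in zip(F, L)]
    return F, L


# Made-up boxes chosen so that the clusters match the walkthrough.
b1 = (0.0, 0.0, 10.0, 10.0, 0.9)
b2 = (1.0, 1.0, 11.0, 11.0, 0.8)
b3 = (50.0, 50.0, 60.0, 60.0, 0.7)
b4 = (0.0, 1.0, 10.0, 11.0, 0.6)
fused, clusters = wbf_sketch([[b1, b3], [b2], [b4]], iou_thr=0.55)
# clusters == [[b1, b2, b4], [b3]]
```

Note that each candidate box is compared against the current fused boxes in `F`, not against the raw boxes in `L`, so a cluster's centre can drift as boxes are added.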

![References

[1] https://arxiv.org/pdf/1910.13302.pdf

[2] https://github.com/ZFTurbo/Weighted-Boxes-Fusion](https://image.slidesharecdn.com/wbf-220411124808/85/WBF-pptx-30-320.jpg)