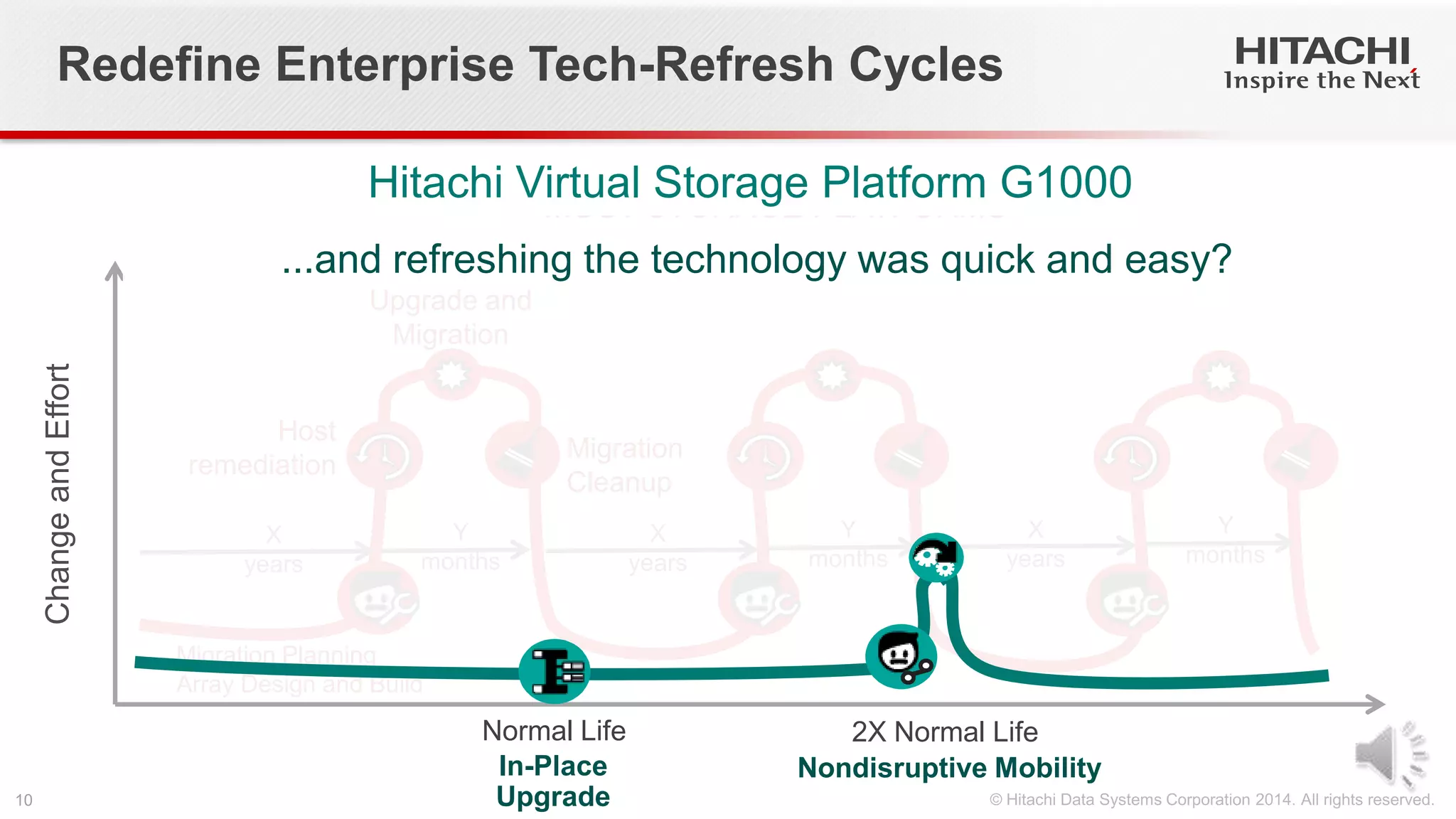

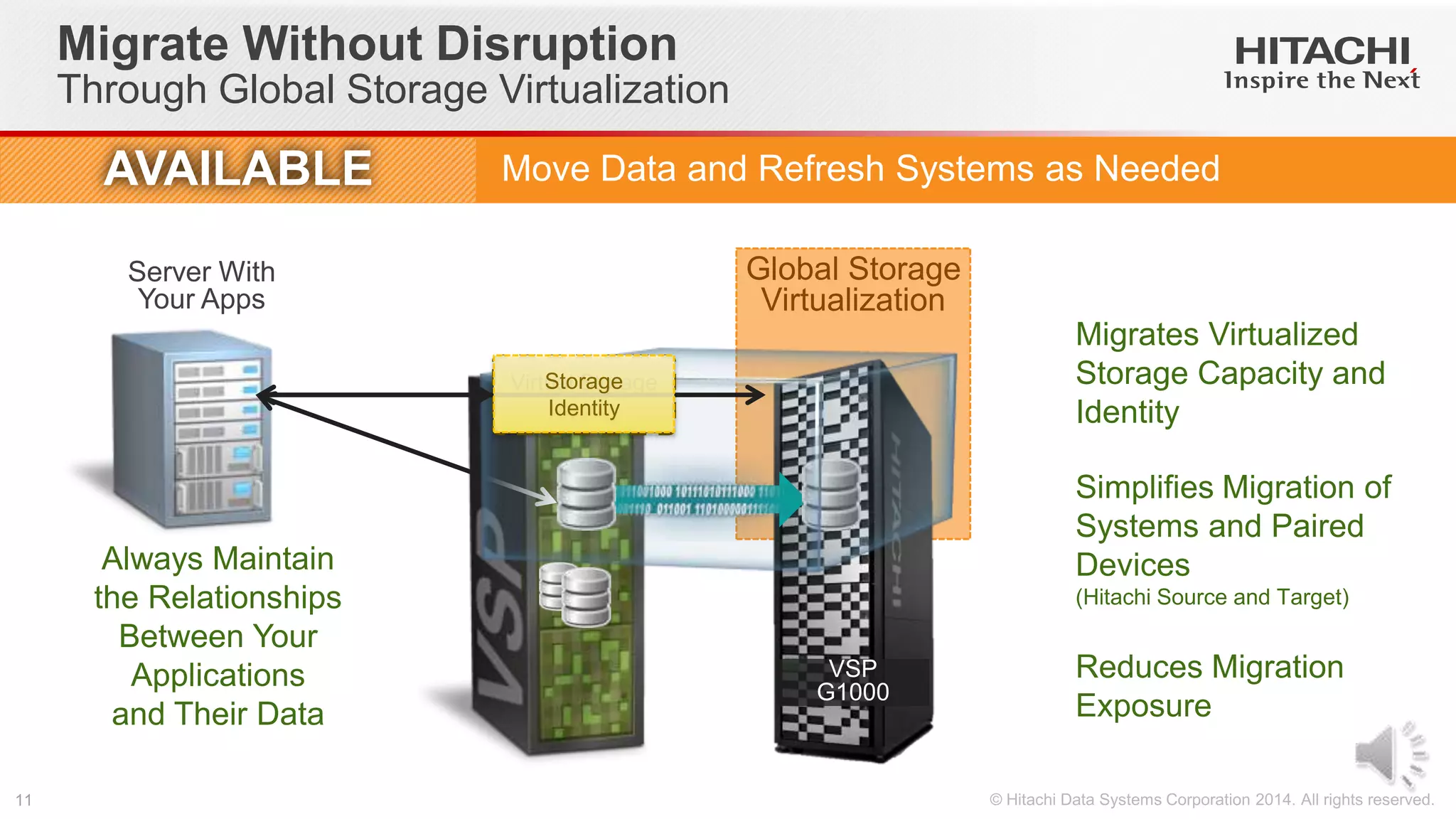

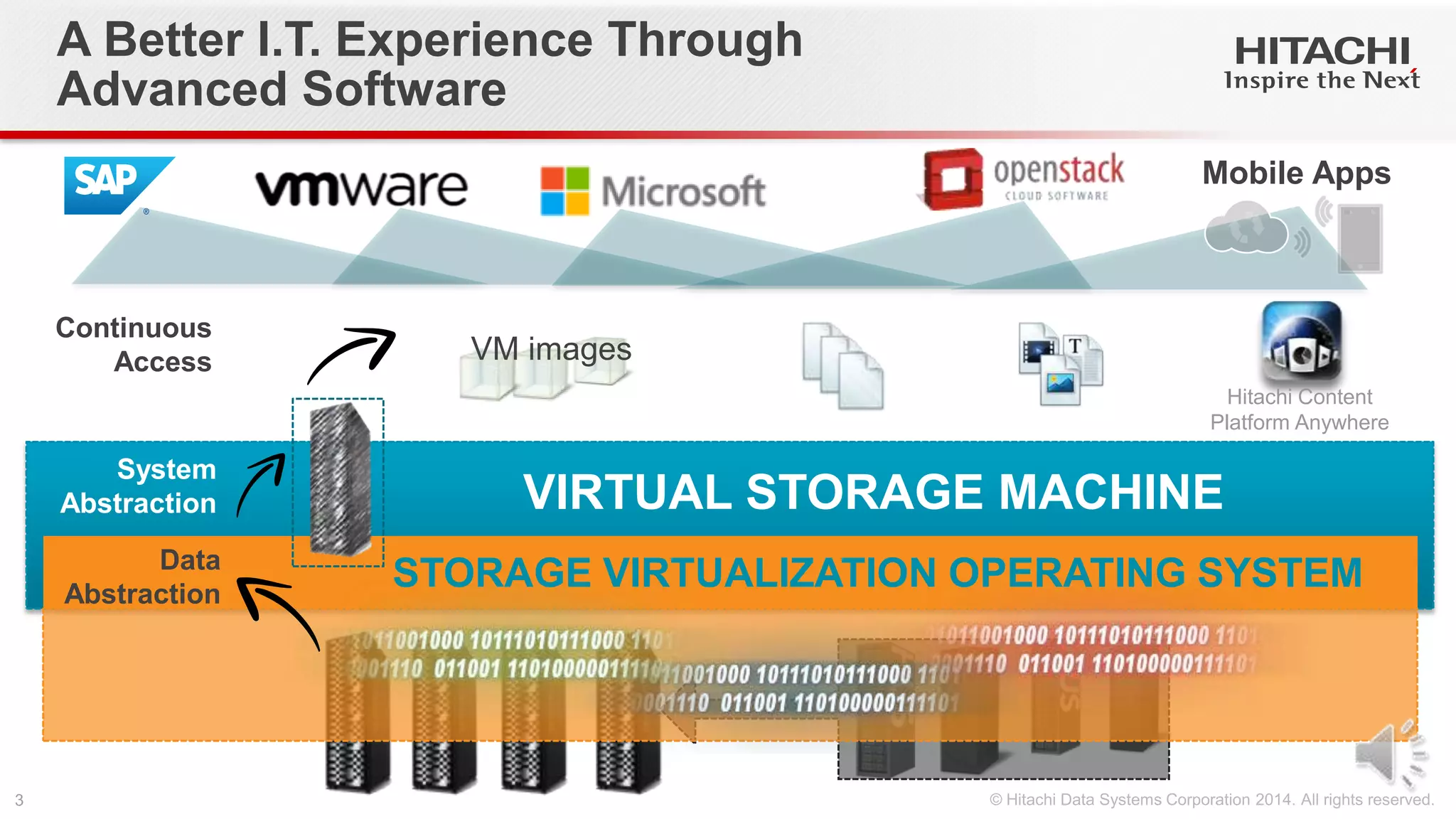

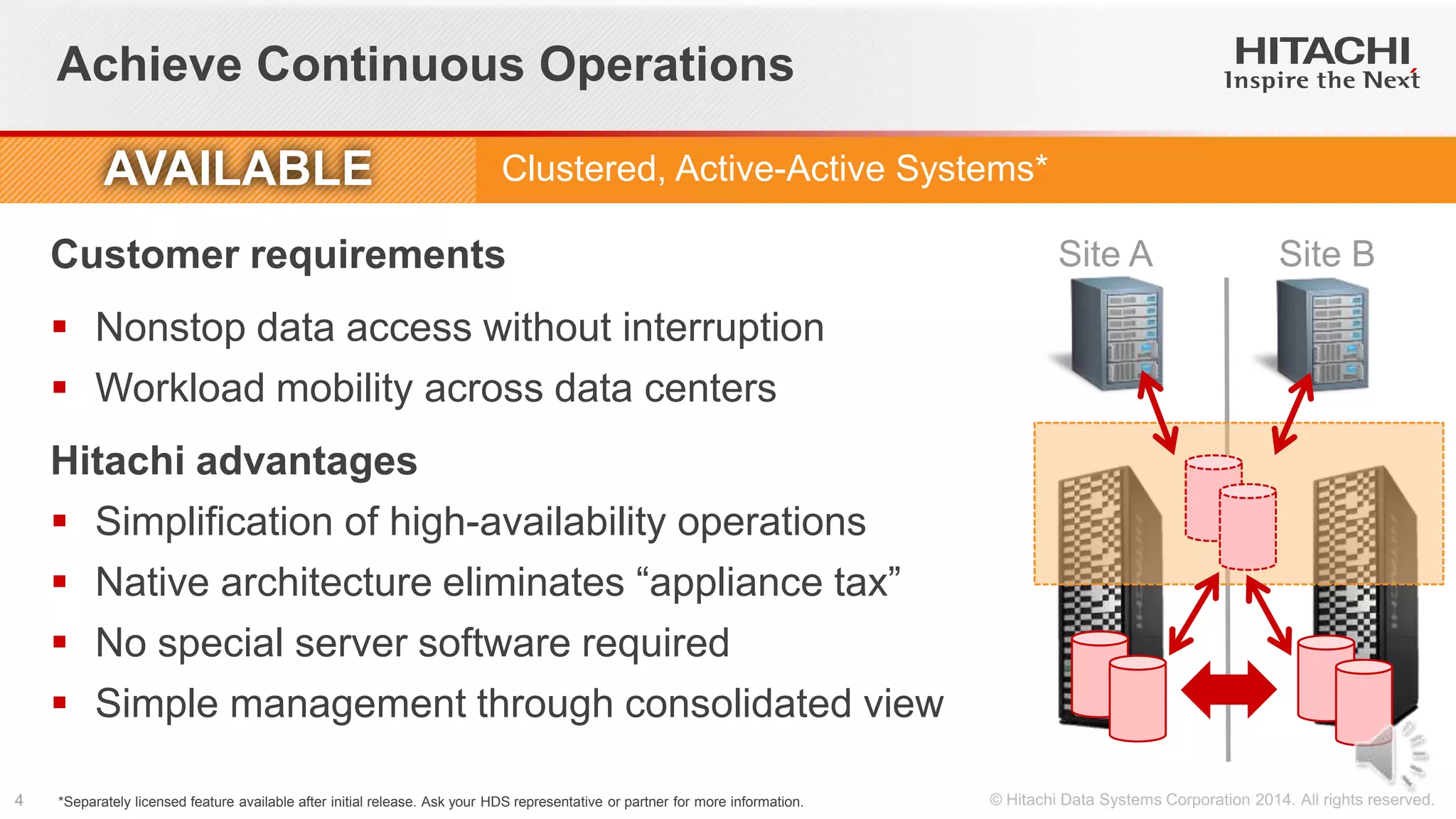

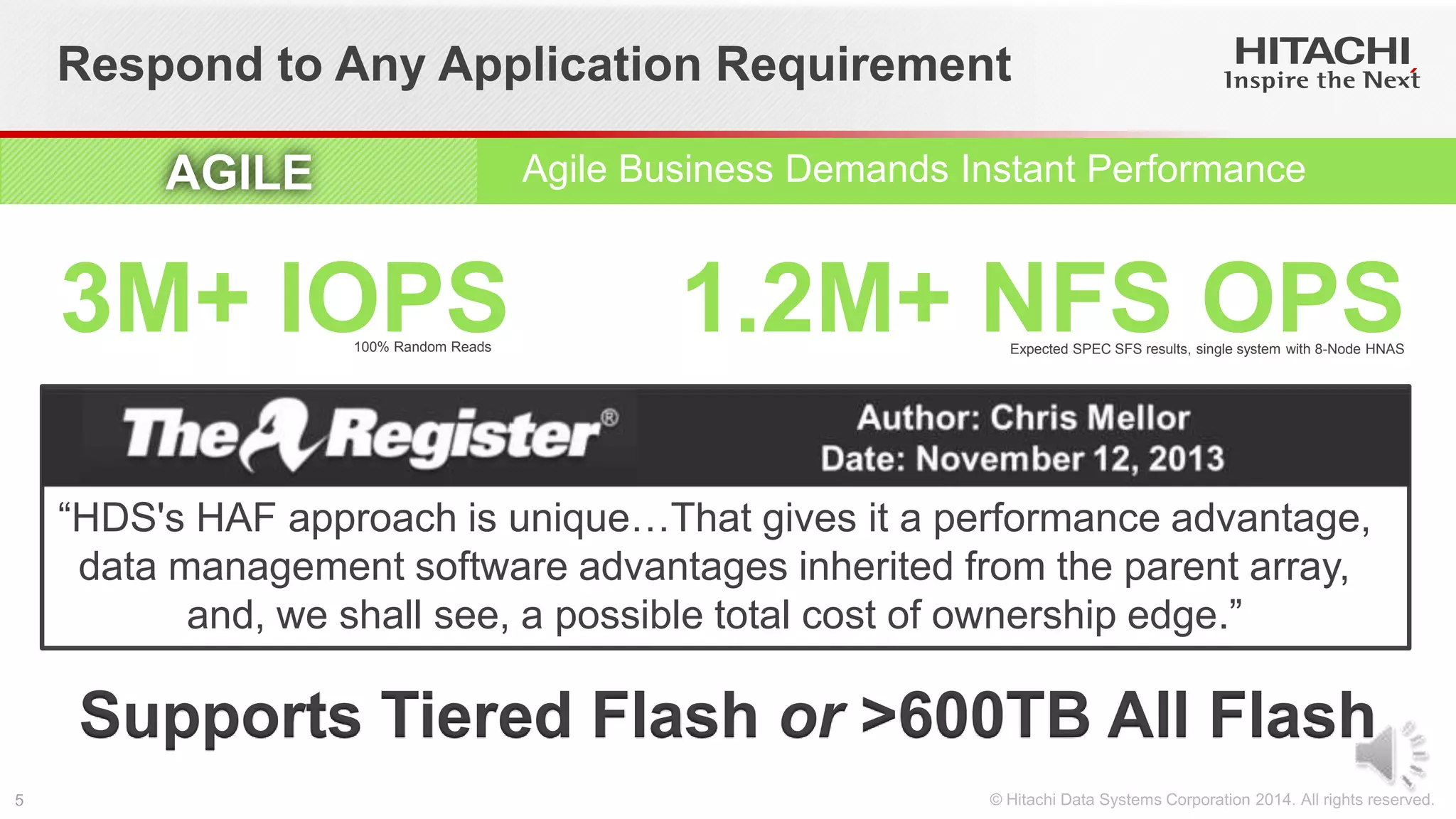

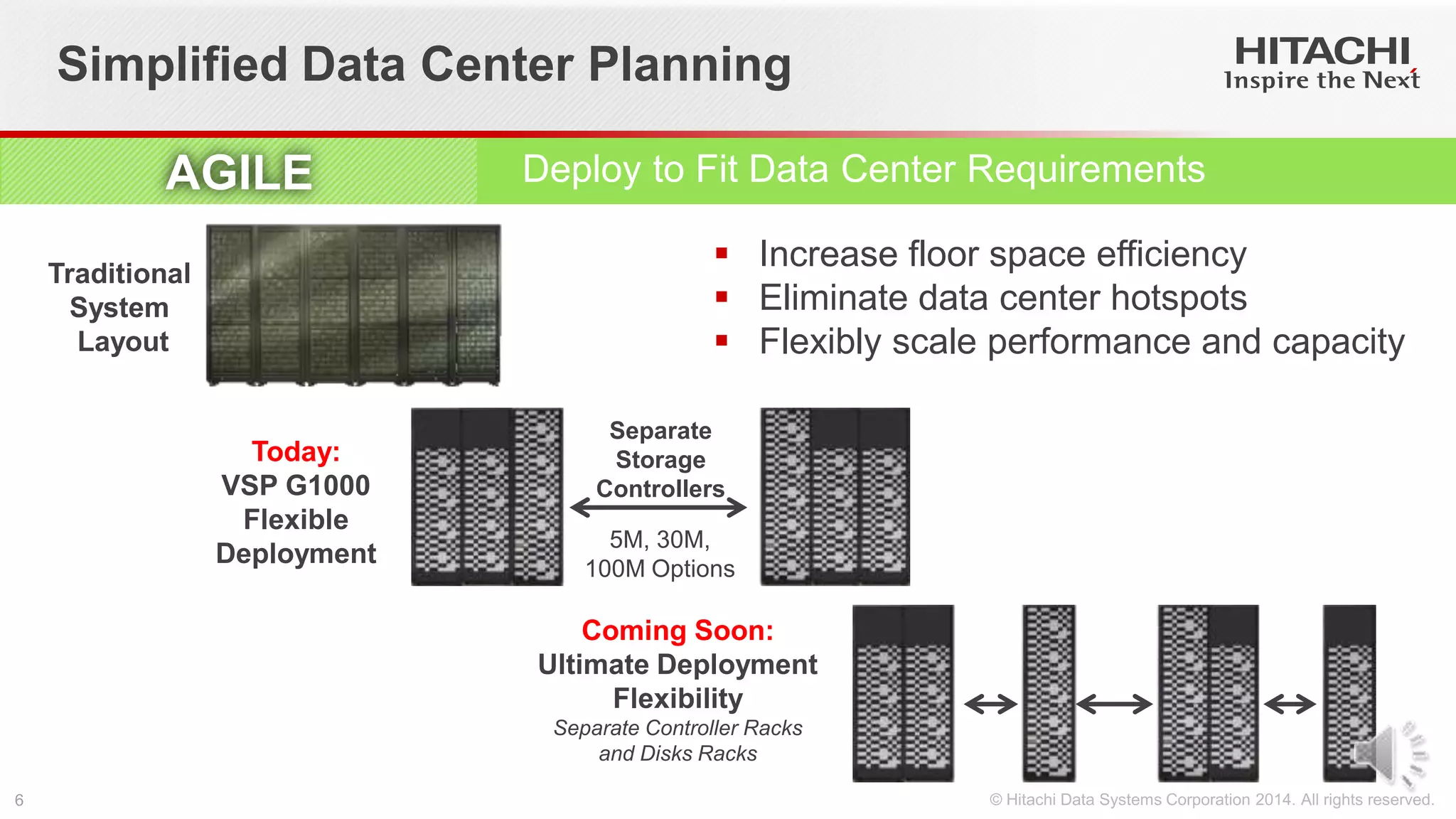

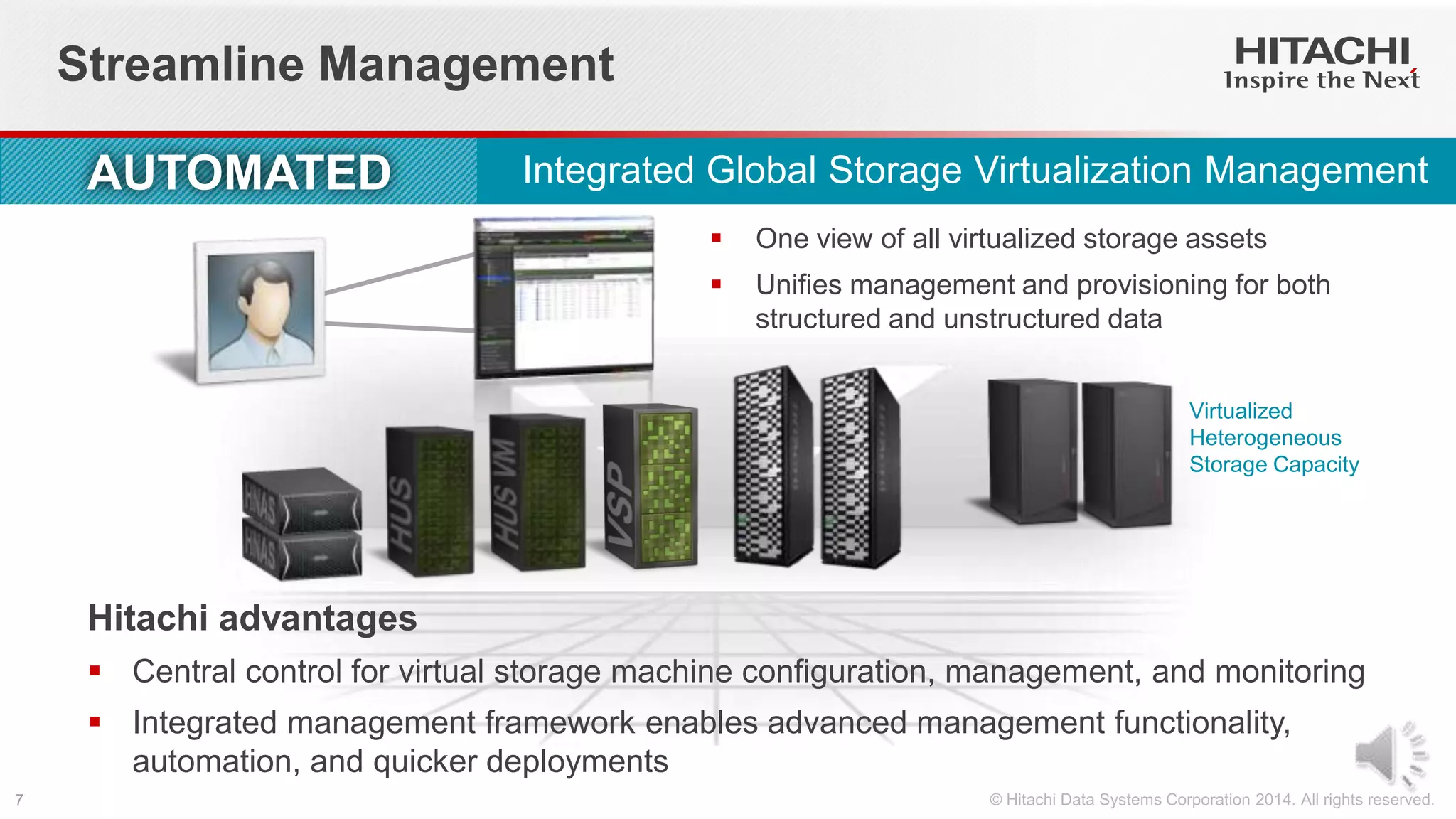

This document summarizes Hitachi's Virtual Storage Platform (VSP) G1000 and Storage Virtualization Operating System (SVOS). SVOS allows data to be accessed continuously across sites and on mobile apps. The VSP G1000 uses a virtual storage machine architecture to provide highly available, clustered systems. It supports all-flash configurations with over 600 TB of capacity as well as mixed flash and disk configurations. Management is streamlined through a single view of all virtualized storage assets. The VSP G1000 also aims to redefine technology refresh cycles: its global storage virtualization allows data and systems to be migrated nondisruptively.

![Technology Refresh Challenges Persist. Forecast Analysis: External Controller-Based Disk Storage, Worldwide 3Q13 Update, 10/31/13, Cox/Chang: “Five- to seven-year [external] disk storage infrastructure refresh cycles will become the new normal.” Why?](https://image.slidesharecdn.com/vspg1000andsvosslidecast-140422220716-phpapp01/75/Hitachi-Virtual-Storage-Platform-and-Storage-Virtualization-Operating-System-Slidecast-9-2048.jpg)