This project explores the integration of data science methodologies for improving computer forensics analysis, focusing on the visualization of digital evidence using Python and Jupyter Notebook. By addressing the limitations of existing forensic tools that lack statistical and visualization features, the project aims to enhance the clarity and comprehensibility of forensic data analysis. Ultimately, it demonstrates how visualizations can improve evidence profiling and assist investigators in understanding digital crime trends.

![VISUALIZATION OF COMPUTER FORENSICS ANALYSIS ON DIGITAL EVIDENCE

Muhd Mu’izuddin b. Hj.Muhsinon,

Nazri b. Ahmad Zamani

University Tenaga Nasional,

CyberSecurity Malaysia

muiz_din94@rocketmail.com

Abstract

This project explores the use of data science methodology to further analyze computer forensics analysis results. In computer forensics, the analysis is carried out with forensic tools such as EnCase, FTK, and XRY. These tools have powerful engines for zooming in on digital evidence and finding information pertinent to an investigation. What they lack are statistical, machine learning, and visualization features, which may be crucial for examining the evidence in its entirety. The project will explore methods to profile and visualize these computer forensics analysis findings using Python and Jupyter Notebook. EnCase CSV files from a real-life case analysis will be loaded and analyzed with Python's scikit-learn (SKLearn) statistical and pattern recognition library. The results will be plotted with Python visualization tools such as Matplotlib, Seaborn, and pandas.
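The abstract does not say which scikit-learn technique is used. The sketch below shows one illustrative possibility, which is not necessarily the authors' method: it groups file activity by hour of day with k-means (`sklearn.cluster.KMeans`). The hour values are hypothetical stand-ins for hours extracted from a timestamp column of the EnCase export. Each hour is encoded as a point on a circle so that 23:00 and 00:00 end up close together.

```python
import numpy as np
from sklearn.cluster import KMeans


def hour_features(hours):
    """Map hours of day (0-23) onto the unit circle so 23:00 and 00:00 are neighbours."""
    angles = 2 * np.pi * np.asarray(hours, dtype=float) / 24.0
    return np.column_stack([np.sin(angles), np.cos(angles)])


def cluster_activity_hours(hours, n_clusters=2, seed=0):
    """Assign each timestamp hour to one of n_clusters activity groups with k-means."""
    model = KMeans(n_clusters=n_clusters, n_init=10, random_state=seed)
    return model.fit_predict(hour_features(hours))


# Hypothetical hours of day taken from file timestamps in the export.
hours = [1, 2, 2, 3, 13, 14, 14, 15]
labels = cluster_activity_hours(hours)
print(labels)  # cluster ids are arbitrary; files with the same id share an activity period
```

The number of clusters is a choice the analyst would need to justify, for example by inspecting a histogram of the hours first.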

I. Introduction

Computer technology is an integral part of everyday life, and it is growing rapidly, as are computer crimes such as financial fraud, unauthorized intrusion, identity theft, and intellectual property theft. Computer Forensics plays a very important role in countering these computer-related crimes. Computer Forensics involves obtaining and analysing digital information for use as evidence in civil, criminal, or administrative cases [1].

A computer forensic investigation generally examines data taken from computer hard disks or other storage devices, following standard operating policies and procedures, to determine whether those devices have been compromised by unauthorized access [2]. Computer Forensics investigators work as a team to investigate the incident and conduct the forensic analysis using various methodologies (e.g., static and dynamic analysis) and tools (e.g., EnCase, whose CSV exports from a real-life case are analyzed in this project).

To help keep an organization's computer network secure, a successful computer forensic investigator must be familiar with the laws and regulations on computer crime in their country (e.g., the Malaysian Computer Crimes Act 1997, CCA 1997), with common operating systems (e.g., Windows, Linux), and with network operating systems (e.g., Windows NT). This report analyzes and visualizes these computer forensics analysis results using Python and Jupyter Notebook.](https://image.slidesharecdn.com/articlevisualization-170116124927/75/Visualization-of-Computer-Forensics-Analysis-on-Digital-Evidence-1-2048.jpg)

![The results are plotted as visualizations so that they are easier to refer to and to improve upon [2].

Digital investigations are constantly changing as new technologies are used to create, store, or transfer vital data [3]. Augmenting existing forensic platforms with innovative methods of acquiring, processing, reasoning about, and providing actionable evidence is vital. Integrating open-source Python scripts with leading-edge forensic platforms such as EnCase provides great versatility and can speed up the development of new investigative methods and processing algorithms that address these emerging technologies.

In Malaysia, law enforcement agencies now face the task of enforcing the law in a cyberspace that transcends borders and raises issues of jurisdiction. Cybercrime has surpassed drug trafficking as the most lucrative crime. Almost anybody who is an active computer or online user has likely been a cybercrime victim, and in most cases a perpetrator too. Cybercriminals typically use computers to cheat, harass, and disseminate false information for their own gain. This project aims to improve on the results of completed investigations by visualizing the computer forensics analysis results with Python and Jupyter Notebook, turning raw data into something that is easier to understand as a whole. Investigators can then see an overview of the results, which supports more accurate conclusions.

II. Problem Statement

During the analysis phase of a computer forensics crime scene investigation, the analyst may face numerous obstacles to obtaining a precise result. The analyst typically receives only raw information, which is less clear and harder to understand than a well-designed visualization. One of the problems is:

1. Computer forensics systems lack statistical and visualization tools.

The following key point needs to be considered during the investigation of the digital evidence:

1. Evidence profiling is crucial for understanding how the timeline of activity in the digital evidence relates to the timeline of the case investigation.
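A minimal way to relate the two timelines is to bucket each evidence timestamp as before, during, or after the case investigation window. This sketch makes several assumptions: it uses pandas, the timestamps come from a date column of the EnCase export, and the case start and end dates are known. The function name and the sample dates are hypothetical.

```python
import pandas as pd


def relate_to_case_window(timestamps, case_start, case_end):
    """Count evidence timestamps falling before, during, and after the case window."""
    # Unparseable values become NaT and are dropped rather than miscounted.
    ts = pd.to_datetime(pd.Series(timestamps), errors="coerce").dropna()
    start, end = pd.Timestamp(case_start), pd.Timestamp(case_end)
    phase = pd.Series("during", index=ts.index)
    phase.loc[ts < start] = "before"
    phase.loc[ts > end] = "after"  # a date-only end means midnight at the start of that day
    return phase.value_counts().reindex(["before", "during", "after"], fill_value=0)


# Hypothetical evidence timestamps and case window.
events = ["2015-01-10 09:00", "2015-03-02 14:30", "2015-03-20 08:15", "2015-06-01 22:00"]
counts = relate_to_case_window(events, "2015-03-01", "2015-03-31 23:59:59")
print(counts)
```

A large share of activity falling outside the window would prompt the analyst to check whether the case timeline or the file timestamps need a closer look.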

III. Workflow

Figure 3.: Flowchart](https://image.slidesharecdn.com/articlevisualization-170116124927/75/Visualization-of-Computer-Forensics-Analysis-on-Digital-Evidence-2-2048.jpg)

![information to perceive how security groups can accomplish their objectives.

4. Visualize

There are two aspects to visualization theory; one of them is aesthetics. There is a body of writing on how to use color, tone, density, and other aspects to create visually pleasing images for the target audience. The book [4] sets out a number of design rules, and the Graphics Press book [5] has a dedicated section on the topic. These are samples of the visualizations that could be made, with some explanation of each.

5. Feedback

This step involves continuous improvement based on feedback from stakeholders and on the availability of new data. In the reporting stage, data science results can be presented in many ways.

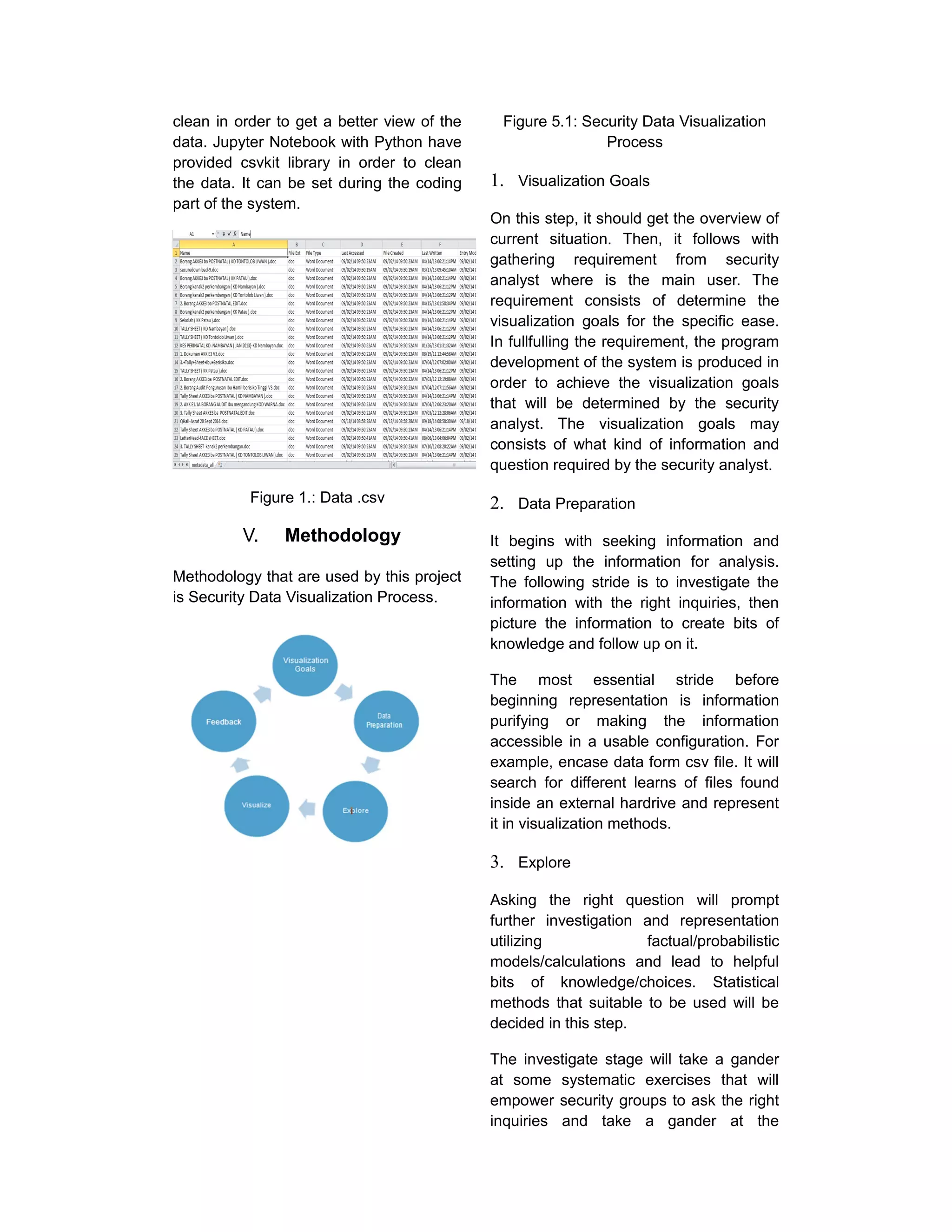

VI. Results

CyberSecurity Malaysia provided the data for this visualization. It consists of metadata from EnCase results in real forensic cases, in .csv format.

The EnCase metadata is real data provided by the Digital Forensics Department of CyberSecurity Malaysia, and the exhibit was an external hard drive. The raw data alone gives only a first impression. Visualization turns it into something that is easier to understand as a whole, so investigators can see an overview of the results and reach more accurate conclusions. In this way, analysts can make deductions from the material evidence that make it easier to identify the suspect.
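Loading the export is the first step of the pipeline. The sketch below uses pandas and assumes the CSV has a file-name column called `Name` and a date column called `Last Written`. The real headers depend on which EnCase table columns were exported, and EnCase date strings may need an explicit `format=` argument to `pd.to_datetime`. The function name and the sample rows are hypothetical.

```python
import io
from pathlib import PurePath

import pandas as pd

# Assumed column names; the actual headers depend on the exported EnCase table view.
NAME_COL = "Name"
DATE_COL = "Last Written"


def load_encase_export(source):
    """Load an EnCase CSV export and add 'extension' and 'month' columns."""
    df = pd.read_csv(source)
    # Lower-cased suffix such as ".jpg"; files without an extension get "".
    df["extension"] = df[NAME_COL].map(lambda n: PurePath(str(n)).suffix.lower())
    # Unparseable dates become NaT, and their month becomes NaN.
    df[DATE_COL] = pd.to_datetime(df[DATE_COL], errors="coerce")
    df["month"] = df[DATE_COL].dt.month_name()
    return df


# Hypothetical sample rows standing in for the real case export.
sample = io.StringIO(
    "Name,Last Written\n"
    "IMG_0001.JPG,2014-04-03 10:15:00\n"
    "budget.xls,2014-04-12 16:40:00\n"
    "report.pdf,2014-07-21 09:05:00\n"
)
df = load_encase_export(sample)
print(df[[NAME_COL, "extension", "month"]])
```

With the real data, `load_encase_export` would receive the path of the exported file instead of the in-memory sample. If the export already contains a file-extension column, that column could be used in place of the derived one.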

Figure 6.1: Overall Data Pie Chart

This pie chart shows the percentage of each data type in the metadata file. The .jpg type accounts for the largest share of the data produced or kept by the suspect, followed by .xls, .pdf, .doc, and lastly .pptx.

The suspect showed a strong interest in .jpg files: more than 50% of the data is of type .jpg. Nonetheless, .xls files are also numerous, representing 22% of the total data. The suspect likely has an overpowering interest in collecting images, but was also diligent in collecting data for calculation or analysis.
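The percentages behind Figure 6.1 can be computed from an extension column with pandas' `value_counts(normalize=True)`. The sketch below assumes the extensions have already been extracted from the file names. The sample list is hypothetical and does not reproduce the case data. `plot_extension_pie` is a hypothetical helper that draws the chart through pandas' plotting interface, so it needs matplotlib installed.

```python
import pandas as pd


def extension_shares(extensions):
    """Return the percentage of files per extension, largest first."""
    counts = pd.Series(extensions).str.lower().value_counts(normalize=True)
    return (counts * 100).round(1)


def plot_extension_pie(shares, path="extension_pie.png"):
    """Draw a pie chart of the shares (requires matplotlib) and save it to path."""
    ax = shares.plot.pie(autopct="%1.1f%%", title="Overall Data Pie Chart")
    ax.set_ylabel("")  # drop the default series-name label beside the pie
    ax.figure.savefig(path, bbox_inches="tight")
    return ax


# Hypothetical extensions; the real values would come from the EnCase metadata.
exts = [".jpg"] * 11 + [".xls"] * 4 + [".pdf"] * 2 + [".doc"] * 2 + [".pptx"]
shares = extension_shares(exts)
print(shares)
```

Calling `plot_extension_pie(shares)` writes the chart to `extension_pie.png`. Keeping the computation separate from the plotting lets the percentages be checked or reported without drawing anything.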

Figure .2: Data Compared by Month

The graph of the total number of metadata records shows that April is the suspect's most active period for producing or keeping data. If this April peak recurs in each year covered by the data, it can be predicted that April is the suspect's busiest time of year for producing or keeping data.](https://image.slidesharecdn.com/articlevisualization-170116124927/75/Visualization-of-Computer-Forensics-Analysis-on-Digital-Evidence-5-2048.jpg)
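The monthly comparison can be sketched with pandas. `counts_by_calendar_month` pools records from all years into January to December totals, which is what a single by-month graph shows. `peak_month_per_year` checks whether the busiest month is the same in each year, which is the evidence a prediction about April of each year would need. Both functions assume the timestamps come from a date column of the EnCase export. Their names and the sample dates are hypothetical.

```python
import pandas as pd


def counts_by_calendar_month(timestamps):
    """Number of records per calendar month (1-12), pooled across all years."""
    ts = pd.to_datetime(pd.Series(timestamps), errors="coerce").dropna()
    return ts.dt.month.value_counts().reindex(range(1, 13), fill_value=0)


def peak_month_per_year(timestamps):
    """Busiest calendar month within each year (first month wins on ties)."""
    ts = pd.to_datetime(pd.Series(timestamps), errors="coerce").dropna()
    per_year_month = ts.groupby(
        [ts.dt.year.rename("year"), ts.dt.month.rename("month")]
    ).size()
    # idxmax returns the (year, month) label of each year's largest count.
    return per_year_month.groupby(level="year").idxmax().map(lambda ym: ym[1])


# Hypothetical record dates spanning two years.
dates = ["2013-04-02", "2013-04-15", "2013-07-01",
         "2014-04-09", "2014-04-20", "2014-04-28", "2014-11-11"]
monthly = counts_by_calendar_month(dates)
peaks = peak_month_per_year(dates)
print(monthly)
print(peaks)
```

If `peaks` shows the same month for every year, a recurring seasonal pattern is plausible. If the pooled April total comes mostly from a single year, the prediction would not be supported.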