This document discusses using Perl stored procedures with MariaDB. Key points include:

- Perl stored procedures are implemented as Perl modules that use DBD::mysql to access the database from within the MariaDB process. This makes them easier to debug than SQL stored routines.

- Examples are provided for simple "Hello World" procedures, handling errors, reading and returning data from queries, and modifying data by inserting rows.

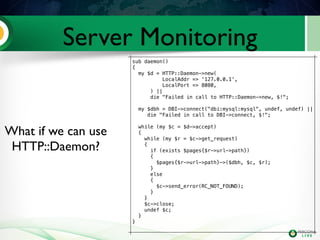

- Perl stored procedures allow extending MariaDB's functionality by leveraging thousands of modules on CPAN. As an example, the document shows how a procedure could implement a basic monitoring server using HTTP::Daemon to expose server status data via a JSON API.

![Hello World

MariaDB [Demo]> CREATE PROCEDURE PerlHello()

-> DYNAMIC RESULT SETS 1

-> NO SQL

-> LANGUAGE Perl

-> EXTERNAL NAME "HelloWorld::test";

Query OK, 0 rows affected (0.02 sec)

MariaDB [Demo]> call PerlHello();

+-----------------------+

| message |

+-----------------------+

| Hello World from Perl |

+-----------------------+

1 row in set (0.03 sec)

Query OK, 0 rows affected (0.03 sec)

package HelloWorld;

# put this file in <prefix>/lib/mysql/perl

use 5.008008;

use strict;

use warnings;

use Symbol qw(delete_package);

require Exporter;

our @ISA = qw(Exporter);

our @EXPORT_OK = qw( );

our @EXPORT = qw( test );

our $VERSION = '0.01';

sub test()

{

return {

'message' => 'Hello World from Perl',

};

}

1;](https://image.slidesharecdn.com/perlstoredprocedures2013-130425182647-phpapp01/85/Using-Perl-Stored-Procedures-for-MariaDB-4-320.jpg)

![Hello World

MariaDB [Demo]> CREATE PROCEDURE PerlHello()

-> DYNAMIC RESULT SETS 1

-> NO SQL

-> LANGUAGE Perl

-> EXTERNAL NAME "HelloWorld::test";

Query OK, 0 rows affected (0.02 sec)

MariaDB [Demo]> call PerlHello();

+-----------------------+

| message |

+-----------------------+

| Hello World from Perl |

+-----------------------+

1 row in set (0.03 sec)

Query OK, 0 rows affected (0.03 sec)

package HelloWorld;

# put this file in <prefix>/lib/mysql/perl

use 5.008008;

use strict;

use warnings;

use Symbol qw(delete_package);

require Exporter;

our @ISA = qw(Exporter);

our @EXPORT_OK = qw( );

our @EXPORT = qw( test );

our $VERSION = '0.01';

sub test()

{

return {

'message' => 'Hello World from Perl',

};

}

1;](https://image.slidesharecdn.com/perlstoredprocedures2013-130425182647-phpapp01/85/Using-Perl-Stored-Procedures-for-MariaDB-5-320.jpg)

![Error handling or die

MariaDB [Demo]> CREATE PROCEDURE PerlError()

-> DYNAMIC RESULT SETS 1

-> NO SQL

-> LANGUAGE Perl

-> EXTERNAL NAME "GoodbyeWorld::test";

Query OK, 0 rows affected (0.00 sec)

MariaDB [Demo]> call PerlError();

ERROR 1220 (HY000): Cannot open file: No

such file or directory at /usr/local/mysql/

lib/plugin/perl/GoodbyeWorld.pm line 16.

package GoodbyeWorld;

# put this file in <prefix>/lib/mysql/perl

use 5.008008;

use strict;

use warnings;

use Symbol qw(delete_package);

require Exporter;

our @ISA = qw(Exporter);

our @EXPORT_OK = qw( );

our @EXPORT = qw( test );

our $VERSION = '0.01';

sub test(

{

open FILE, "</something/nonexist"

or die "Cannot open file: $!";

return {

'message' => 'Goodbye World from Perl',

};

}

1;](https://image.slidesharecdn.com/perlstoredprocedures2013-130425182647-phpapp01/85/Using-Perl-Stored-Procedures-for-MariaDB-6-320.jpg)

![Reading Data

MariaDB [employees]> CREATE PROCEDURE employee(

-> empno INT)

-> DYNAMIC RESULT SETS 1

-> READS SQL DATA

-> LANGUAGE Perl

-> EXTERNAL NAME "ReadData::test";

Query OK, 0 rows affected (0.02 sec)

MariaDB [employees]> call employee(10034)G

*************************** 1. row

***************************

birth_date: 1962-12-29

emp_no: 10034

first_name: Bader

gender: M

hire_date: 1988-09-21

last_name: Swan

1 row in set (0.00 sec)

Query OK, 0 rows affected (0.00 sec)

package ReadData;

# put this file in <prefix>/lib/mysql/perl

use 5.008008;

use strict;

use warnings;

use Symbol qw(delete_package);

require Exporter;

our @ISA = qw(Exporter);

our @EXPORT_OK = qw( );

our @EXPORT = qw( test );

our $VERSION = '0.01';

our $DSN = ‘dbi:mysql:employees’;

use DBI;

use DBD::mysql;

sub test($)

{

my($emp_no) = @_;

my $dbh = DBI->connect($DSN, undef, undef) ||

die "Failed in call to DBI->connect, $!";

my $s = $dbh->prepare(

“SELECT * FROM employees WHERE emp_no=?”);

$s->execute(int $emp_no);

return $s->fetchrow_hashref;

}

1;](https://image.slidesharecdn.com/perlstoredprocedures2013-130425182647-phpapp01/85/Using-Perl-Stored-Procedures-for-MariaDB-7-320.jpg)

![Reading Data

MariaDB [employees]> CREATE PROCEDURE employee(

-> empno INT)

-> DYNAMIC RESULT SETS 1

-> READS SQL DATA

-> LANGUAGE Perl

-> EXTERNAL NAME "ReadData::test";

Query OK, 0 rows affected (0.02 sec)

MariaDB [employees]> call employee(10034)G

*************************** 1. row

***************************

birth_date: 1962-12-29

emp_no: 10034

first_name: Bader

gender: M

hire_date: 1988-09-21

last_name: Swan

1 row in set (0.00 sec)

Query OK, 0 rows affected (0.00 sec)

package ReadData;

# put this file in <prefix>/lib/mysql/perl

use 5.008008;

use strict;

use warnings;

use Symbol qw(delete_package);

require Exporter;

our @ISA = qw(Exporter);

our @EXPORT_OK = qw( );

our @EXPORT = qw( test );

our $VERSION = '0.01';

our $DSN = ‘dbi:mysql:employees’;

use DBI;

use DBD::mysql;

sub test($)

{

my($emp_no) = @_;

my $dbh = DBI->connect($DSN, undef, undef) ||

die "Failed in call to DBI->connect, $!";

my $s = $dbh->prepare(

“SELECT * FROM employees WHERE emp_no=?”);

$s->execute(int $emp_no);

return $s->fetchrow_hashref;

}

1;](https://image.slidesharecdn.com/perlstoredprocedures2013-130425182647-phpapp01/85/Using-Perl-Stored-Procedures-for-MariaDB-8-320.jpg)

![Modifying Data

MariaDB [employees]> CREATE PROCEDURE

-> makesummary(

-> empno INT)

-> MODIFIES SQL DATA

-> LANGUAGE Perl

-> EXTERNAL NAME "ModifyData::test";

Query OK, 0 rows affected (0.00 sec)

MariaDB [employees]> call makesummary;

sub test()

{

my $dbh = DBI->connect($DSN, undef, undef) ||

die "Failed in call to DBI->connect, $!";

$dbh->begin_work or die $!;

my $ins = $dbh->prepare("INSERT INTO summary VALUES (?,?)");

my $emps = $dbh->selectall_arrayref(

"select emp_no, max(salary) salary from employees ".

"left join salaries using (emp_no) group by emp_no",

{ Slice => {} });

foreach my $emp (@$emps)

{

$ins->execute($emp->{emp_no}, $emp->{salary}) or die $!;

}

$dbh->commit or die $!;

return undef;

}](https://image.slidesharecdn.com/perlstoredprocedures2013-130425182647-phpapp01/85/Using-Perl-Stored-Procedures-for-MariaDB-9-320.jpg)

![Modifying Data

MariaDB [employees]> CREATE PROCEDURE

-> makesummary(

-> empno INT)

-> MODIFIES SQL DATA

-> LANGUAGE Perl

-> EXTERNAL NAME "ModifyData::test";

Query OK, 0 rows affected (0.00 sec)

MariaDB [employees]> call makesummary;

Query OK, 0 rows affected (18.02 sec)

sub test()

{

my $dbh = DBI->connect($DSN, undef, undef) ||

die "Failed in call to DBI->connect, $!";

$dbh->begin_work or die $!;

my $ins = $dbh->prepare("INSERT INTO summary VALUES (?,?)");

my $emps = $dbh->selectall_arrayref(

"select emp_no, max(salary) salary from employees ".

"left join salaries using (emp_no) group by emp_no",

{ Slice => {} });

foreach my $emp (@$emps)

{

$ins->execute($emp->{emp_no}, $emp->{salary}) or die $!;

}

$dbh->commit or die $!;

return undef;

}](https://image.slidesharecdn.com/perlstoredprocedures2013-130425182647-phpapp01/85/Using-Perl-Stored-Procedures-for-MariaDB-10-320.jpg)

![Modifying Data

MariaDB [employees]> CREATE PROCEDURE

-> makesummary(

-> empno INT)

-> MODIFIES SQL DATA

-> LANGUAGE Perl

-> EXTERNAL NAME "ModifyData::test";

Query OK, 0 rows affected (0.00 sec)

MariaDB [employees]> call makesummary;

Query OK, 0 rows affected (18.02 sec)

MariaDB [employees]> select count(*)

-> from summary;

+----------+

| count(*) |

+----------+

| 300024 |

+----------+

1 row in set (0.15 sec)

sub test()

{

my $dbh = DBI->connect($DSN, undef, undef) ||

die "Failed in call to DBI->connect, $!";

$dbh->begin_work or die $!;

my $ins = $dbh->prepare("INSERT INTO summary VALUES (?,?)");

my $emps = $dbh->selectall_arrayref(

"select emp_no, max(salary) salary from employees ".

"left join salaries using (emp_no) group by emp_no",

{ Slice => {} });

foreach my $emp (@$emps)

{

$ins->execute($emp->{emp_no}, $emp->{salary}) or die $!;

}

$dbh->commit or die $!;

return undef;

}](https://image.slidesharecdn.com/perlstoredprocedures2013-130425182647-phpapp01/85/Using-Perl-Stored-Procedures-for-MariaDB-11-320.jpg)

![Modifying Data

MariaDB [employees]> CREATE PROCEDURE

-> makesummary(

-> empno INT)

-> MODIFIES SQL DATA

-> LANGUAGE Perl

-> EXTERNAL NAME "ModifyData::test";

Query OK, 0 rows affected (0.00 sec)

MariaDB [employees]> call makesummary;

Query OK, 0 rows affected (18.02 sec)

MariaDB [employees]> select count(*)

-> from summary;

+----------+

| count(*) |

+----------+

| 300024 |

+----------+

1 row in set (0.15 sec)

sub test()

{

my $dbh = DBI->connect($DSN, undef, undef) ||

die "Failed in call to DBI->connect, $!";

$dbh->begin_work or die $!;

my $ins = $dbh->prepare("INSERT INTO summary VALUES (?,?)");

my $emps = $dbh->selectall_arrayref(

"select emp_no, max(salary) salary from employees ".

"left join salaries using (emp_no) group by emp_no",

{ Slice => {} });

foreach my $emp (@$emps)

{

$ins->execute($emp->{emp_no}, $emp->{salary}) or die $!;

}

$dbh->commit or die $!;

return undef;

}

select statement - 2 seconds

300k inserts in 16 seconds

== 18k inserts per second](https://image.slidesharecdn.com/perlstoredprocedures2013-130425182647-phpapp01/85/Using-Perl-Stored-Procedures-for-MariaDB-12-320.jpg)

![Server Monitoring

'/statusz' => sub {

my ($dbh,$c,$r) = @_;

return $c->send_error(RC_FORBIDDEN)

if $r->method ne 'GET';

my $sth = $dbh->prepare_cached(q{

SELECT VARIABLE_NAME name, VARIABLE_VALUE value

FROM INFORMATION_SCHEMA.GLOBAL_STATUS

}, { Slice => {} });

$sth->execute() || die $sth->errstr;

my $response = HTTP::Response->new(200, undef,

HTTP::Headers->new(

Content_Type => "application/json",

));

my $result = {};

while (my $row = $sth->fetchrow_arrayref)

{

$result->{$row->[0]} = $row->[1];

}

$response->add_content(to_json($result,

{ ascii => 1, pretty => 1 }));

return $c->send_response($response);

},

Fetching current

server status could be

as simple as fetching

http://127.0.0.1/statusz](https://image.slidesharecdn.com/perlstoredprocedures2013-130425182647-phpapp01/85/Using-Perl-Stored-Procedures-for-MariaDB-15-320.jpg)