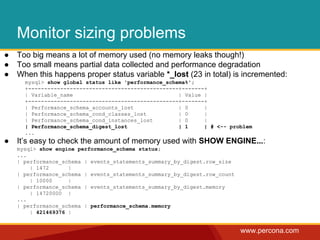

The document discusses using the PERFORMANCE_SCHEMA in MySQL 5.6 to analyze and troubleshoot performance issues. It provides an overview of the PERFORMANCE_SCHEMA, how to configure what is instrumented and collected, and examples of using it to find bottlenecks like long wait times on InnoDB mutexes. The PERFORMANCE_SCHEMA provides visibility into where time is spent and which resources are most used through its set of in-memory tables.

![www.percona.com

Extended slow query log

● Feature of Percona Server (5.1+)

● http://www.percona.com/doc/percona-server/5.5/diagnostics/slow_extended_55.html

# User@Host: mailboxer[mailboxer] @ [192.168.10.165]

# Thread_id: 11167745 Schema: board

# Query_time: 1.009400 Lock_time: 0.000190 Rows_sent: 4 Rows_examined: 1543719

Rows_affected: 0 Rows_read: 4

# Bytes_sent: 278 Tmp_tables: 0 Tmp_disk_tables: 0 Tmp_table_sizes: 0

# InnoDB_trx_id: 1500

# QC_Hit: No Full_scan: Yes Full_join: No Tmp_table: No Tmp_table_on_disk: No

# Filesort: No Filesort_on_disk: No Merge_passes: 0

# InnoDB_IO_r_ops: 6415 InnoDB_IO_r_bytes: 105103360 InnoDB_IO_r_wait: 0.001279

# InnoDB_rec_lock_wait: 0.000000 InnoDB_queue_wait: 0.000000

# InnoDB_pages_distinct: 6430

SET timestamp=1346844943;

SELECT id,title,production_year FROM title WHERE title = 'Bambi';](https://image.slidesharecdn.com/performanceschemamissingmanualflossuk-140319045153-phpapp02/85/MySQL-Performance-schema-missing_manual_flossuk-7-320.jpg)