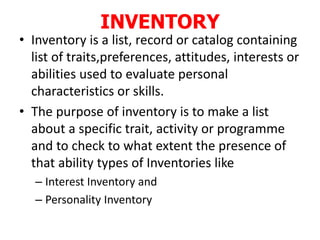

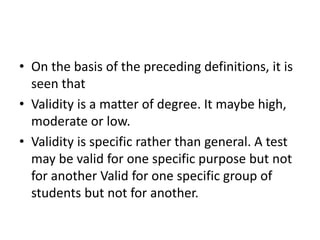

This document surveys the tools used for data collection in educational research: questionnaires, checklists, rating scales, attitude scales, interviews, inventories, and observation. For each tool it describes the purpose, characteristics, types, and effective use. It emphasizes selecting valid and reliable tools that suit the research purpose and collect the desired information.