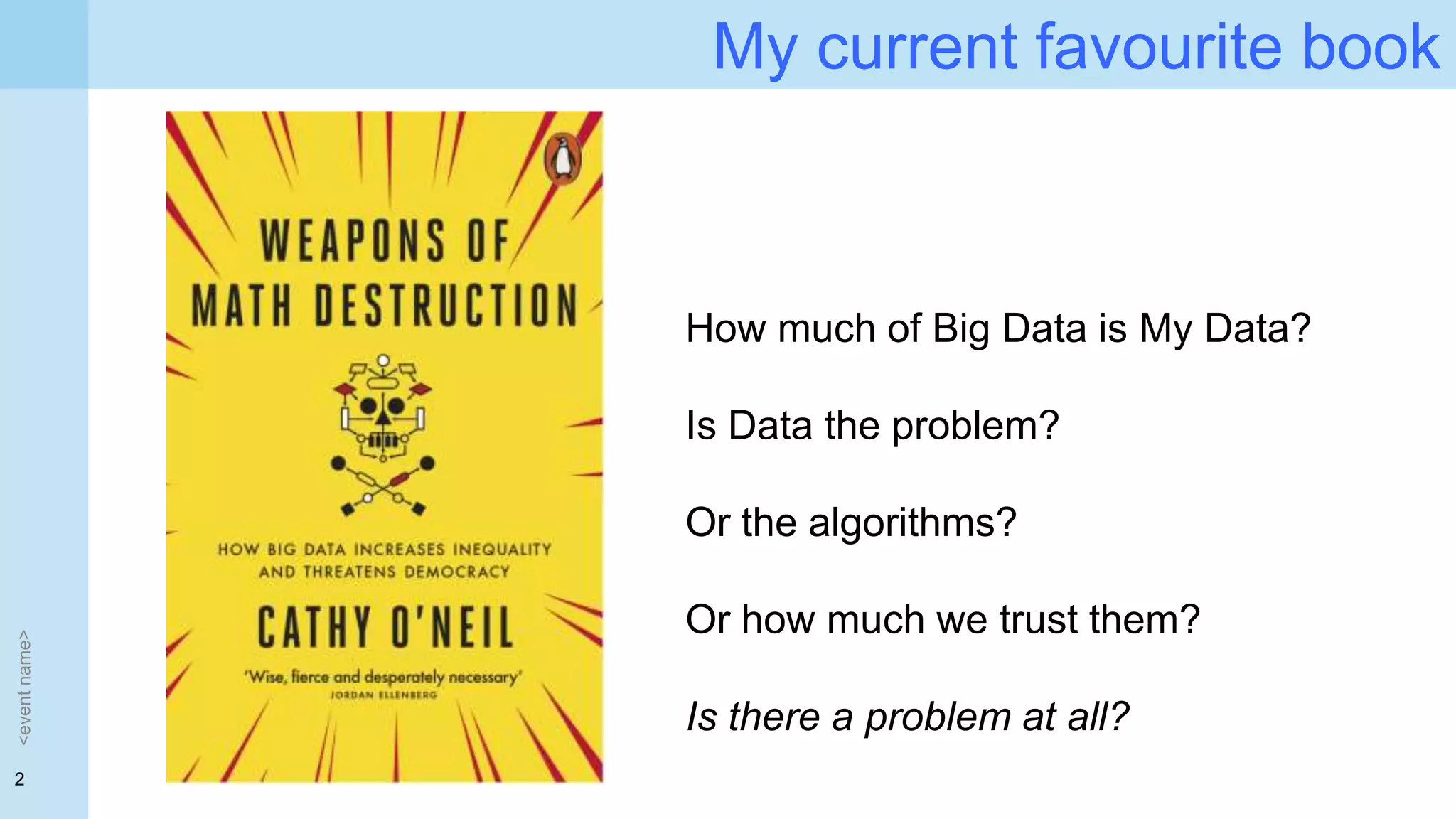

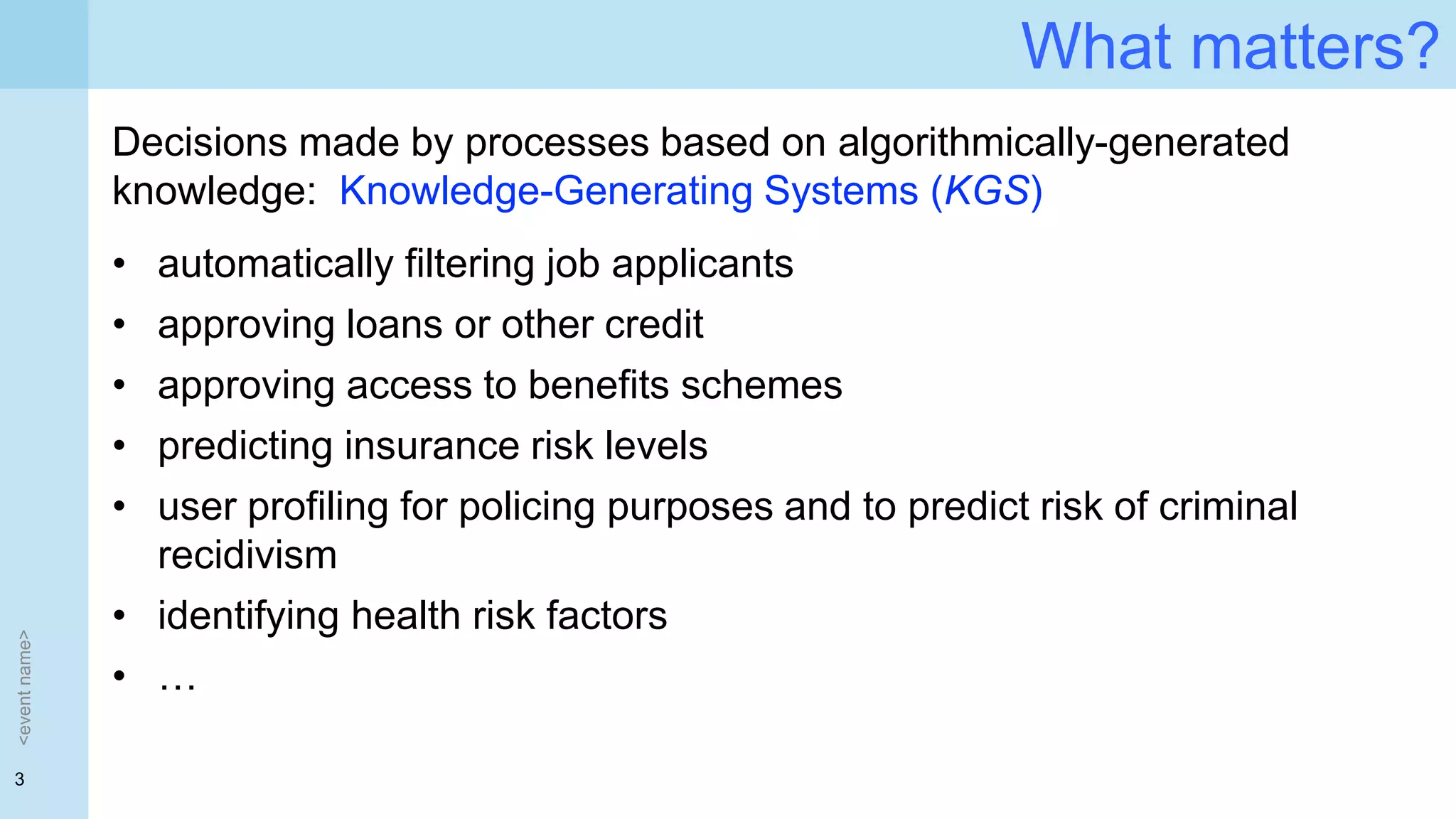

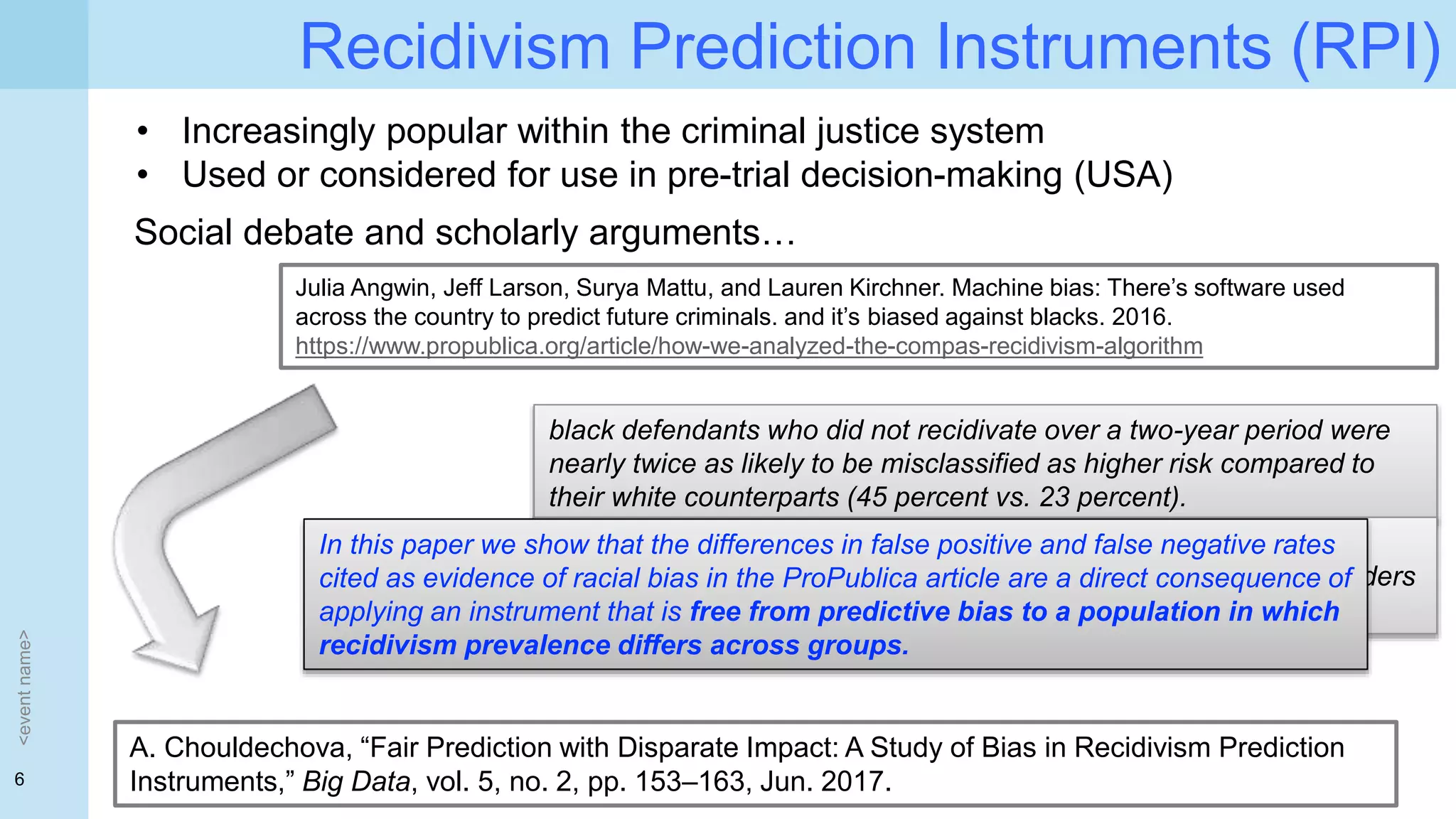

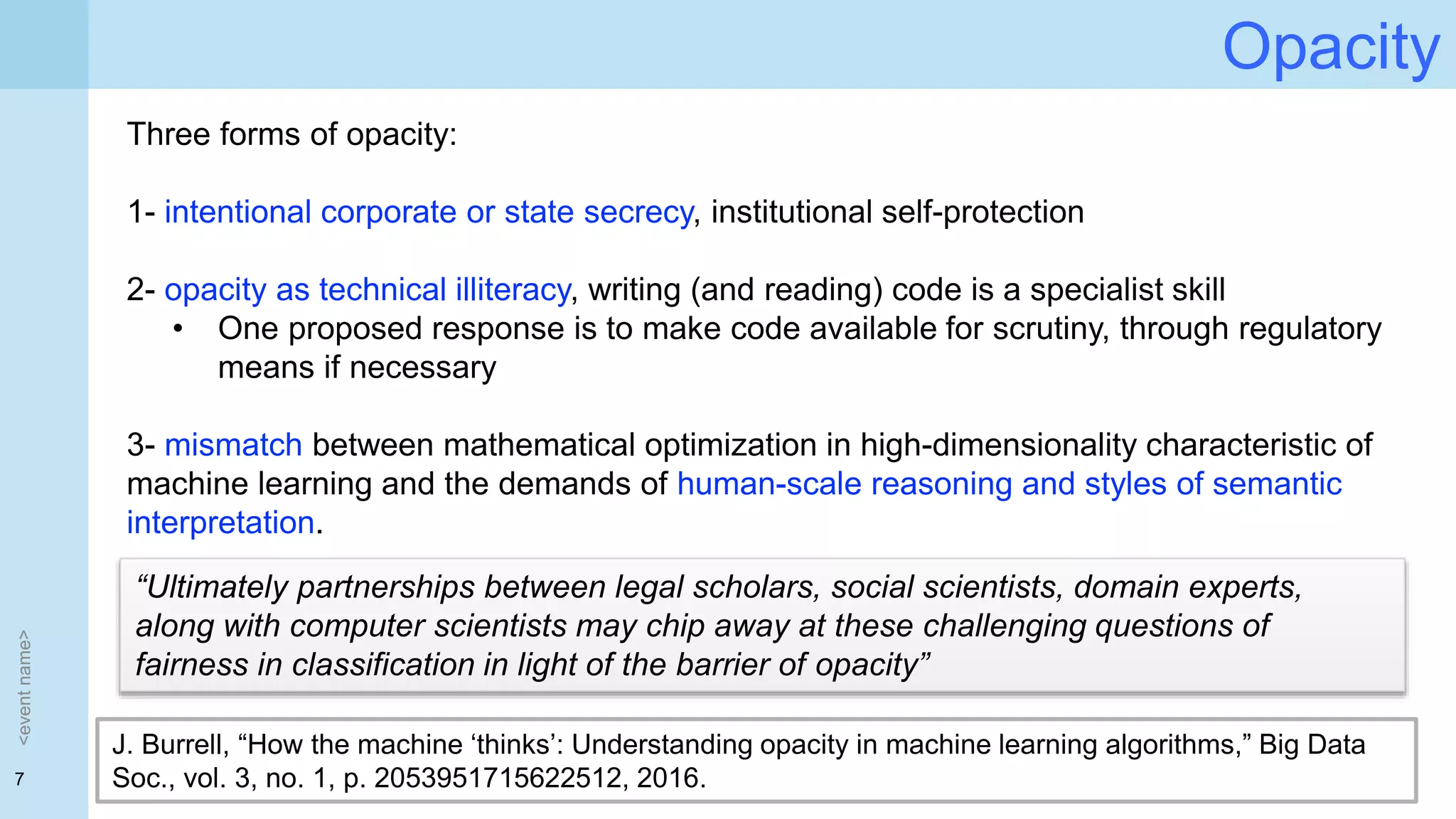

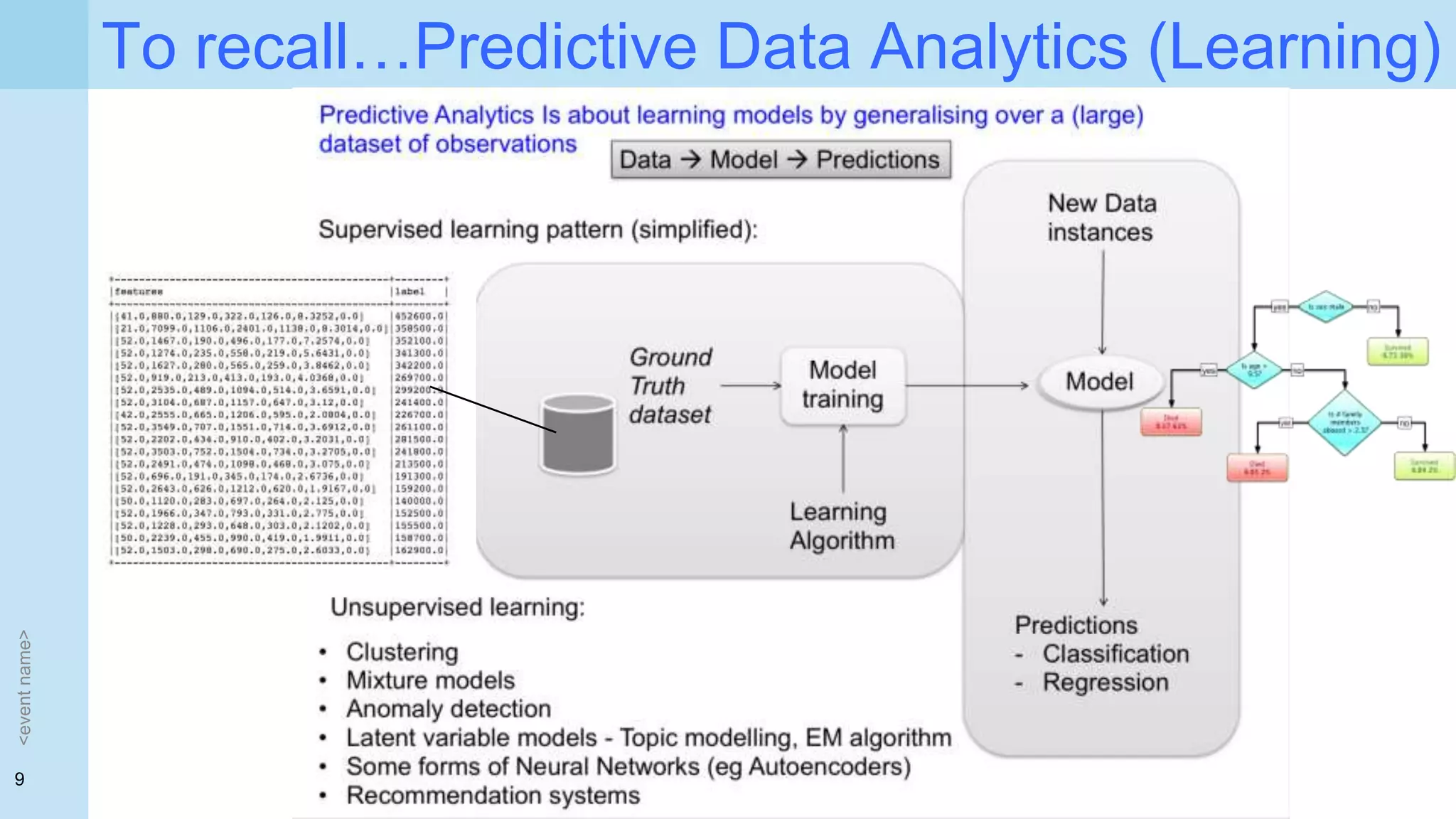

Dr. Paolo Missier's presentation discusses the implications of GDPR for algorithmic decision-making in AI and machine learning, highlighting the need for transparency and trust in these systems. It raises important questions about how individuals are educated on algorithmic decision-making, the mechanisms needed to build trust, and the inter-disciplinary research required to address these challenges. The document also examines biases in predictive analytics, particularly in criminal justice, and emphasizes the necessity for accountability and oversight in AI systems.

![5

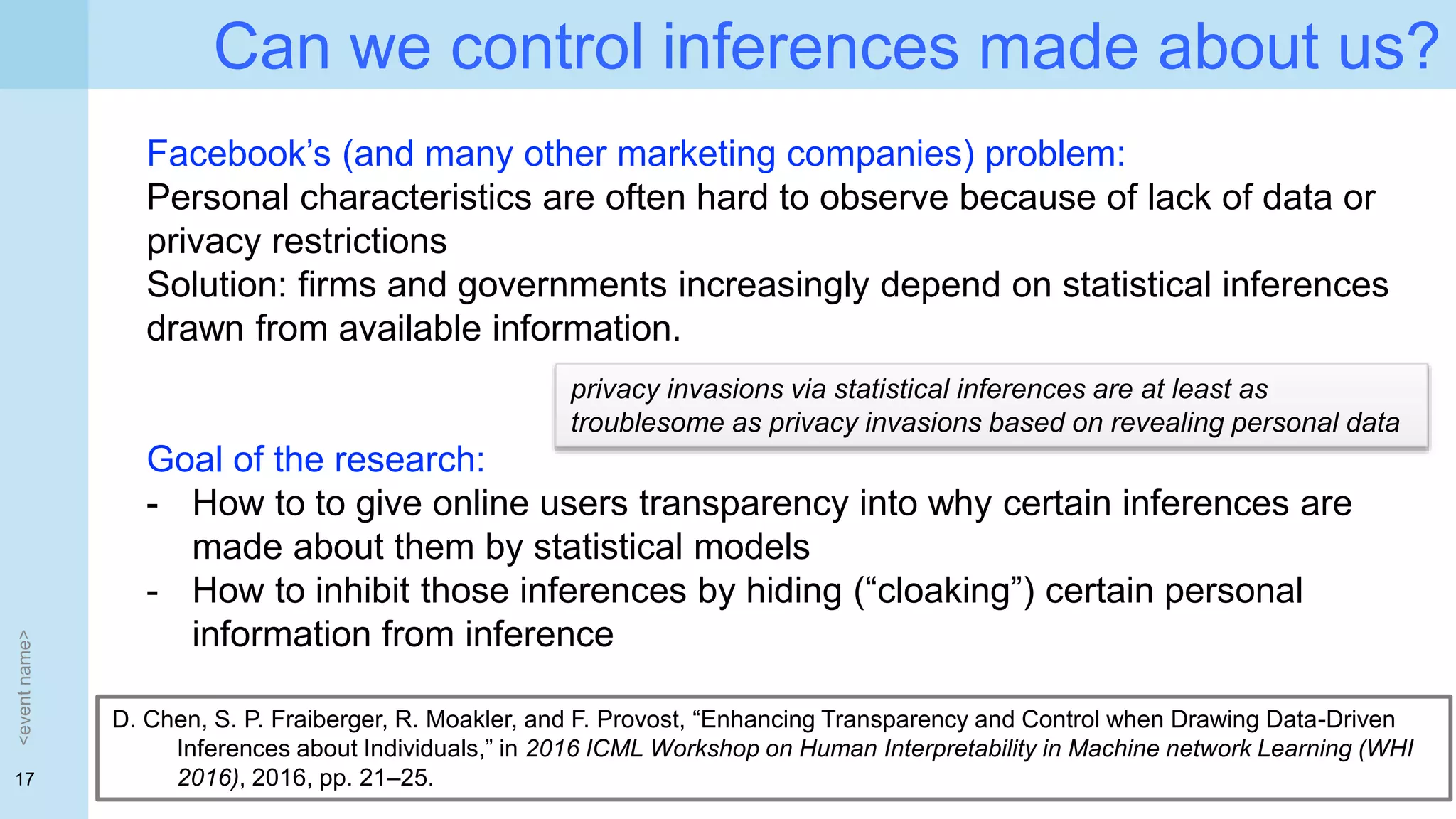

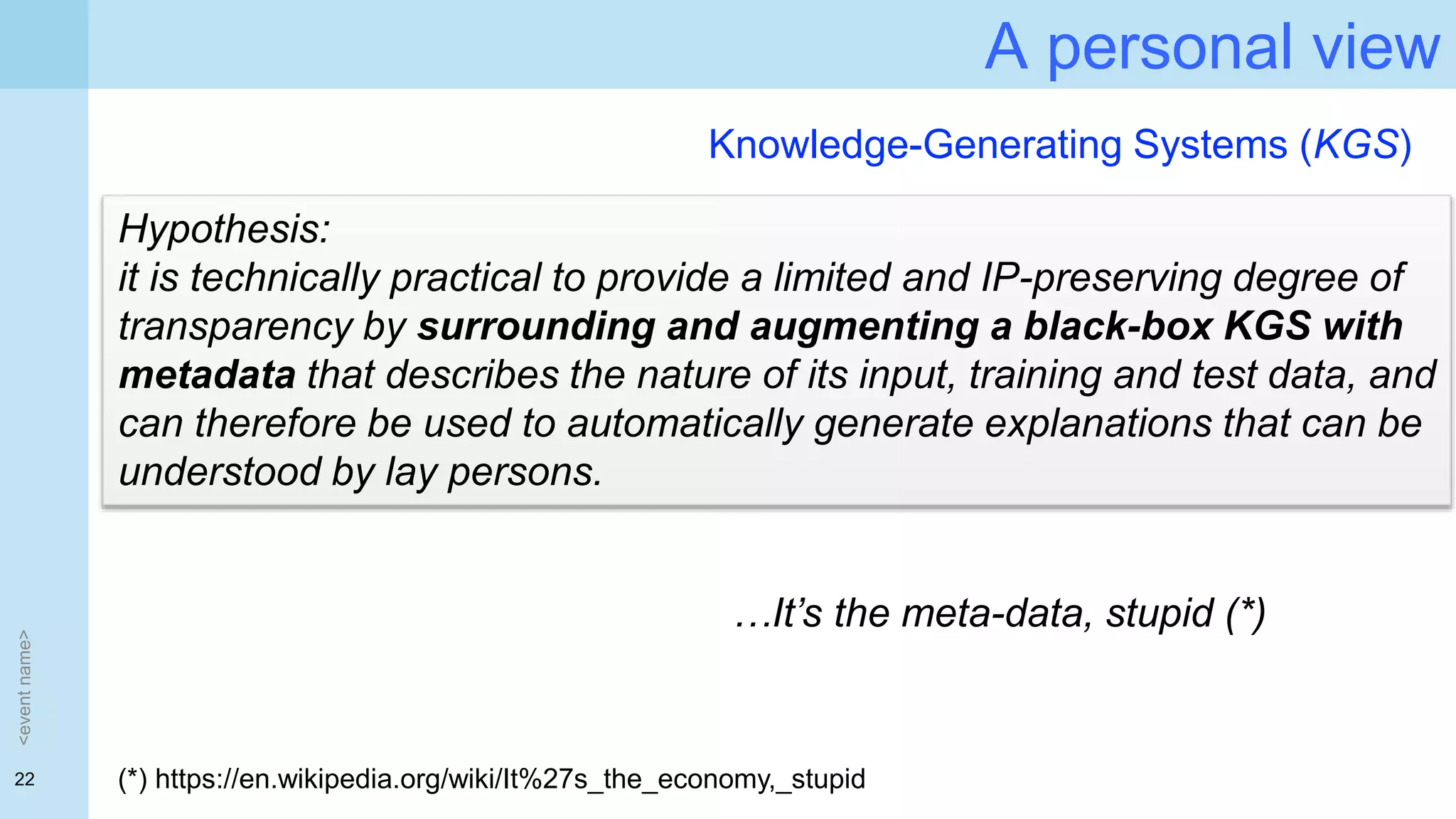

Heads up on the key questions:

• [to what extent, at what level] should lay people be educated about

algorithmic decision making?

• What mechanisms would you propose to engender trust in

algorithmic decision making?

• With regards to trust and transparency, what should Computer

Science researchers focus on?

• What kind of inter-disciplinary research do you see?

<eventname>](https://image.slidesharecdn.com/gdpr-transpatency-dc-talk-180303093911/75/Transparency-in-ML-and-AI-humble-views-from-a-concerned-academic-5-2048.jpg)

![25

Questions to you:

• [to what extent, at what level] should lay people be educated about

algorithmic decision making?

• What mechanisms would you propose to engender trust in

algorithmic decision making?

• With regards to trust and transparency, what should Computer

Science researchers focus on?

• What kind of inter-disciplinary research do you see?

<eventname>](https://image.slidesharecdn.com/gdpr-transpatency-dc-talk-180303093911/75/Transparency-in-ML-and-AI-humble-views-from-a-concerned-academic-25-2048.jpg)

![26

Scenarios

<eventname>

What kind of explanations would you request / expect / accept?

• My application for benefits has been denied but I am not sure why

• My insurance premium is higher than my partner’s, and it’s not clear

why

• My work performance has been deemed unsatisfactory, but I don’t

see why

• [can you suggest other scenarios close to your experience?]](https://image.slidesharecdn.com/gdpr-transpatency-dc-talk-180303093911/75/Transparency-in-ML-and-AI-humble-views-from-a-concerned-academic-26-2048.jpg)