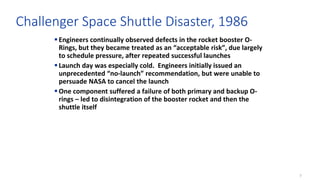

The document discusses "normalization of deviance," the gradual process by which unacceptable practices become accepted within an organization, often with disastrous consequences. It cites the Challenger disaster and other incidents in which risks were downplayed until a catastrophic failure occurred. To counter this, it emphasizes recognizing vulnerabilities, adhering to established standards, and learning from past near-misses.

![3
Definition
“The gradual process through which unacceptable practice or standards become [treated as] acceptable. As the deviant behavior is repeated without catastrophic results, it becomes the social norm for the organization.”](https://image.slidesharecdn.com/thenormalizationofdeviance-161109031414/85/The-Normalization-of-Deviance-3-320.jpg)

![9
Gulfstream Business Jet crash, 2014
Jet failed to achieve liftoff, went off the end of the runway
Gust Lock was engaged
“the pilots had neglected to perform complete flight control checks before 98% of their previous 175 takeoffs in the airplane… it is likely that they decided to skip the [flight control] check at some point in the past and that doing so had become their accepted practice.” – NTSB accident report
One source concluded the pilots likely had adopted a pattern of neglecting more and more checks over time. None of the standard checks had been performed prior to takeoff.](https://image.slidesharecdn.com/thenormalizationofdeviance-161109031414/85/The-Normalization-of-Deviance-9-320.jpg)

![10
Carbide Industries, 2011
• Manufacturing furnace explosion at Louisville, KY plant – fatalities resulted
• US Chemical Safety Board incident report included an entire section on “Normalization of Deviance” as a cause
• “…because Carbide did not thoroughly determine the root causes of the blows [over-pressure incidents that occurred in 1991 and 2004] and eliminate them, the occurrence became normalized in the day-to-day operations of the facility…CSB interviews verified that furnace blows were considered normal”](https://image.slidesharecdn.com/thenormalizationofdeviance-161109031414/85/The-Normalization-of-Deviance-10-320.jpg)