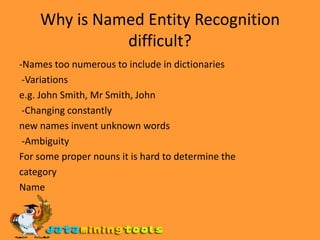

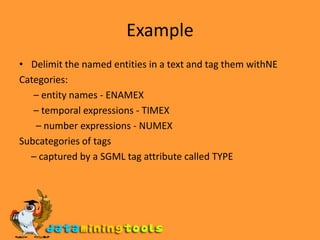

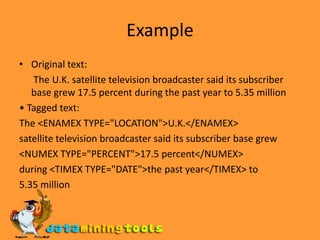

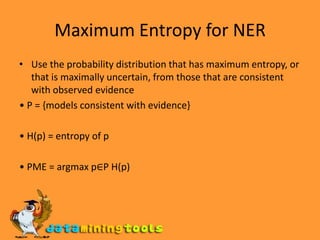

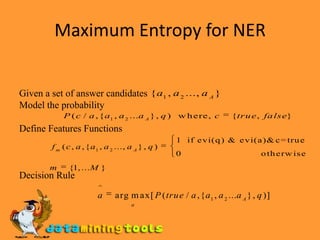

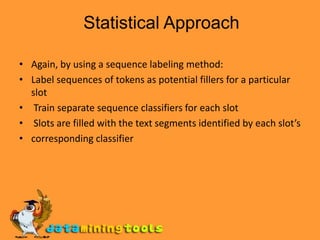

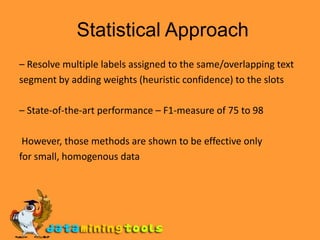

This document covers text mining and information extraction (IE). It describes the goal of IE, which is to extract structured data from unstructured text, and then discusses named entity recognition (NER), the challenges NER poses, maximum entropy methods for NER, template filling with statistical and finite-state approaches, and applications of information extraction.
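
To make the idea of turning unstructured text into structured data concrete, here is a minimal sketch of finite-state template filling using a regular expression. It is an illustration under stated assumptions, not the method used in the slides: the acquisition event type, the slot names (`acquirer`, `target`, `amount`, `scale`), the `fill_templates` helper, and the sample sentence are all hypothetical.

```python
import re

# Hypothetical pattern for one event type:
# "<Acquirer> acquired <Target> for $<amount> <scale>".
# Company names are approximated as runs of capitalized words,
# which is a simplifying assumption and will miss many real names.
ACQUISITION = re.compile(
    r"(?P<acquirer>[A-Z]\w*(?: [A-Z]\w*)*) acquired "
    r"(?P<target>[A-Z]\w*(?: [A-Z]\w*)*) for "
    r"\$(?P<amount>\d+(?:\.\d+)?) (?P<scale>million|billion)"
)


def fill_templates(text):
    """Return one filled template (a dict of slot values) per match in text."""
    return [match.groupdict() for match in ACQUISITION.finditer(text)]


text = "Last week Acme Corp acquired Widget Works for $12.5 million in cash."
records = fill_templates(text)
print(records)
```

Each match becomes one record whose keys are the template slots. A hand-written pattern like this is brittle, since it depends on exact phrasing and capitalization, which is one reason statistical approaches are used alongside finite-state ones.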