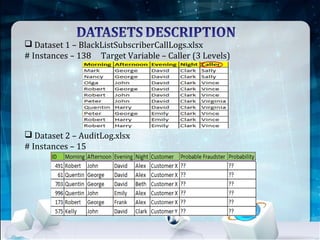

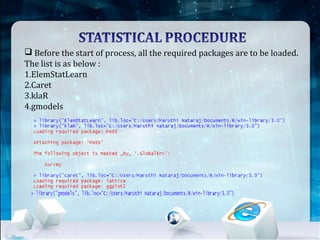

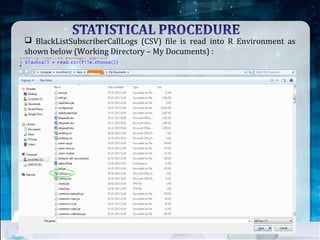

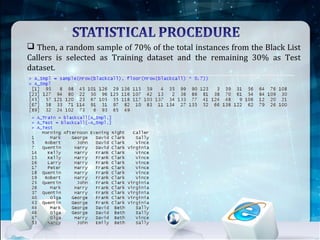

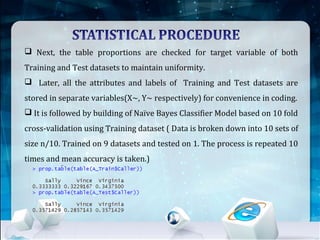

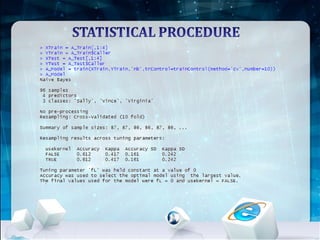

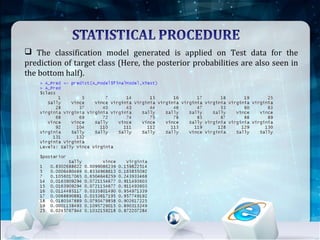

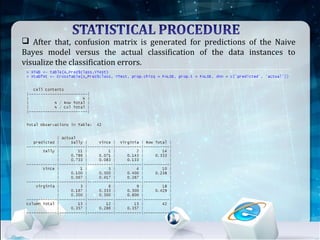

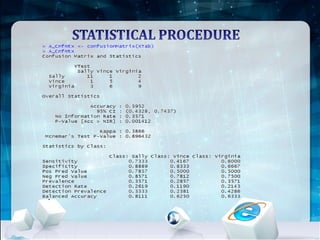

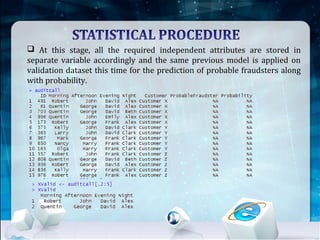

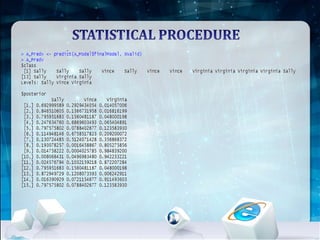

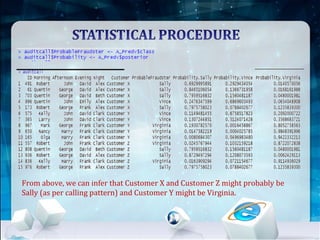

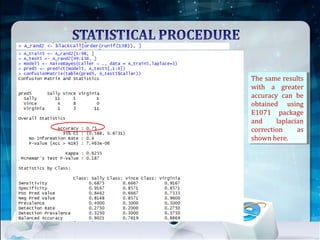

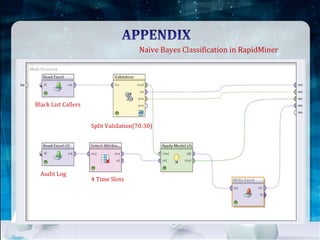

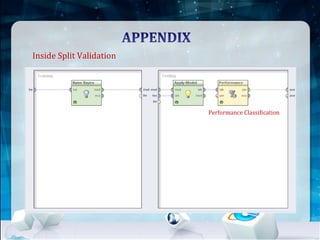

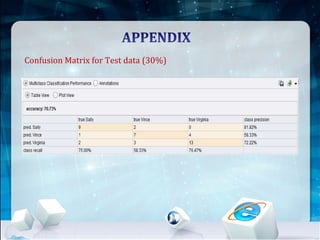

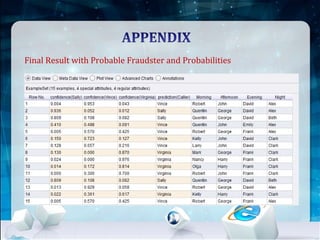

This document discusses using Naive Bayes classification to detect subscription fraud at a telecom company. It analyzes call detail records from known fraudsters to build a model that identifies fraudulent subscribers. The model is trained on 70% of the records from three known fraudsters and tested on the remaining 30%. The model is then applied to an audit log of 15 subscribers. For each subscriber it estimates how likely that subscriber is to be one of the known fraudsters (Sally, Virginia, or Vince) and reports the corresponding probabilities. The document covers:

- the datasets;
- the tools used (R and several R packages);
- the data preprocessing steps;
- model building with 10-fold cross validation;
- performance evaluation on the test data;
- the analysis of the audit log.
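The workflow summarized above has four steps: fit a categorical Naive Bayes classifier, hold out 30% of the records for testing, estimate accuracy with 10-fold cross validation, and report a probability for each known fraudster. The original analysis was done in R. The sketch below is only a standard-library Python illustration of those steps under stated assumptions:

- The feature names (`call_type`, `time_of_day`, `destination`), the placeholder class labels `A`, `B`, `C`, and the toy records are all hypothetical. None of them come from the study's call detail records.
- Laplace smoothing (`alpha=1.0`) and unstratified folds are assumptions. They are not necessarily what the R pipeline used.

```python
import math
import random
from collections import Counter


def fit(records, labels, alpha=1.0):
    """Estimate class counts and per-feature value counts for categorical Naive Bayes."""
    classes = Counter(labels)
    n_features = len(records[0])
    # counts[c][j][v]: how often feature j took value v among training records of class c
    counts = {c: [Counter() for _ in range(n_features)] for c in classes}
    values = [set() for _ in range(n_features)]  # distinct values seen per feature
    for x, y in zip(records, labels):
        for j, v in enumerate(x):
            counts[y][j][v] += 1
            values[j].add(v)
    return {"classes": classes, "counts": counts, "values": values,
            "n": len(labels), "alpha": alpha}


def predict_proba(model, x):
    """Posterior P(class | x) with Laplace smoothing, normalized to sum to 1."""
    alpha = model["alpha"]
    log_post = {}
    for c, n_c in model["classes"].items():
        lp = math.log(n_c / model["n"])  # log prior
        for j, v in enumerate(x):
            k = len(model["values"][j])
            lp += math.log((model["counts"][c][j][v] + alpha) / (n_c + alpha * k))
        log_post[c] = lp
    # Subtract the max before exponentiating to avoid underflow
    m = max(log_post.values())
    z = sum(math.exp(lp - m) for lp in log_post.values())
    return {c: math.exp(lp - m) / z for c, lp in log_post.items()}


def predict(model, x):
    """Return the class with the highest posterior probability."""
    probs = predict_proba(model, x)
    return max(probs, key=probs.get)


def train_test_split(records, labels, train_frac=0.7, seed=42):
    """Shuffle once, then take the first 70% for training and the rest for testing."""
    idx = list(range(len(records)))
    random.Random(seed).shuffle(idx)
    cut = round(train_frac * len(idx))
    tr, te = idx[:cut], idx[cut:]
    return ([records[i] for i in tr], [labels[i] for i in tr],
            [records[i] for i in te], [labels[i] for i in te])


def k_fold_accuracy(records, labels, k=10, seed=0):
    """Mean accuracy over k unstratified folds; each fold is held out once."""
    idx = list(range(len(records)))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    scores = []
    for fold in folds:
        held = set(fold)
        tr = [i for i in idx if i not in held]
        model = fit([records[i] for i in tr], [labels[i] for i in tr])
        hits = sum(predict(model, records[i]) == labels[i] for i in fold)
        scores.append(hits / len(fold))
    return sum(scores) / k


# Hypothetical toy records (NOT the study's data): (call_type, time_of_day, destination)
records = ([("intl", "night", "zone1")] * 7 + [("intl", "day", "zone1")] * 3
           + [("local", "day", "zone2")] * 7 + [("local", "evening", "zone2")] * 3
           + [("premium", "evening", "zone3")] * 7 + [("premium", "night", "zone3")] * 3)
labels = ["A"] * 10 + ["B"] * 10 + ["C"] * 10  # placeholder fraudster identities

X_tr, y_tr, X_te, y_te = train_test_split(records, labels)
model = fit(X_tr, y_tr)
test_acc = sum(predict(model, x) == y for x, y in zip(X_te, y_te)) / len(y_te)
print("held-out accuracy:", test_acc)
print("10-fold CV accuracy:", k_fold_accuracy(records, labels, k=10))
# Score one unlabeled, audit-log-style profile: a probability per known identity
print(predict_proba(model, ("intl", "night", "zone1")))
```

This sketch scores each record independently. The summary does not specify how the original analysis combined per-call scores into a single per-subscriber prediction for the 15 audit-log subscribers. One simple option would be to call `predict_proba` on each subscriber's records and rank the candidate identities by probability.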