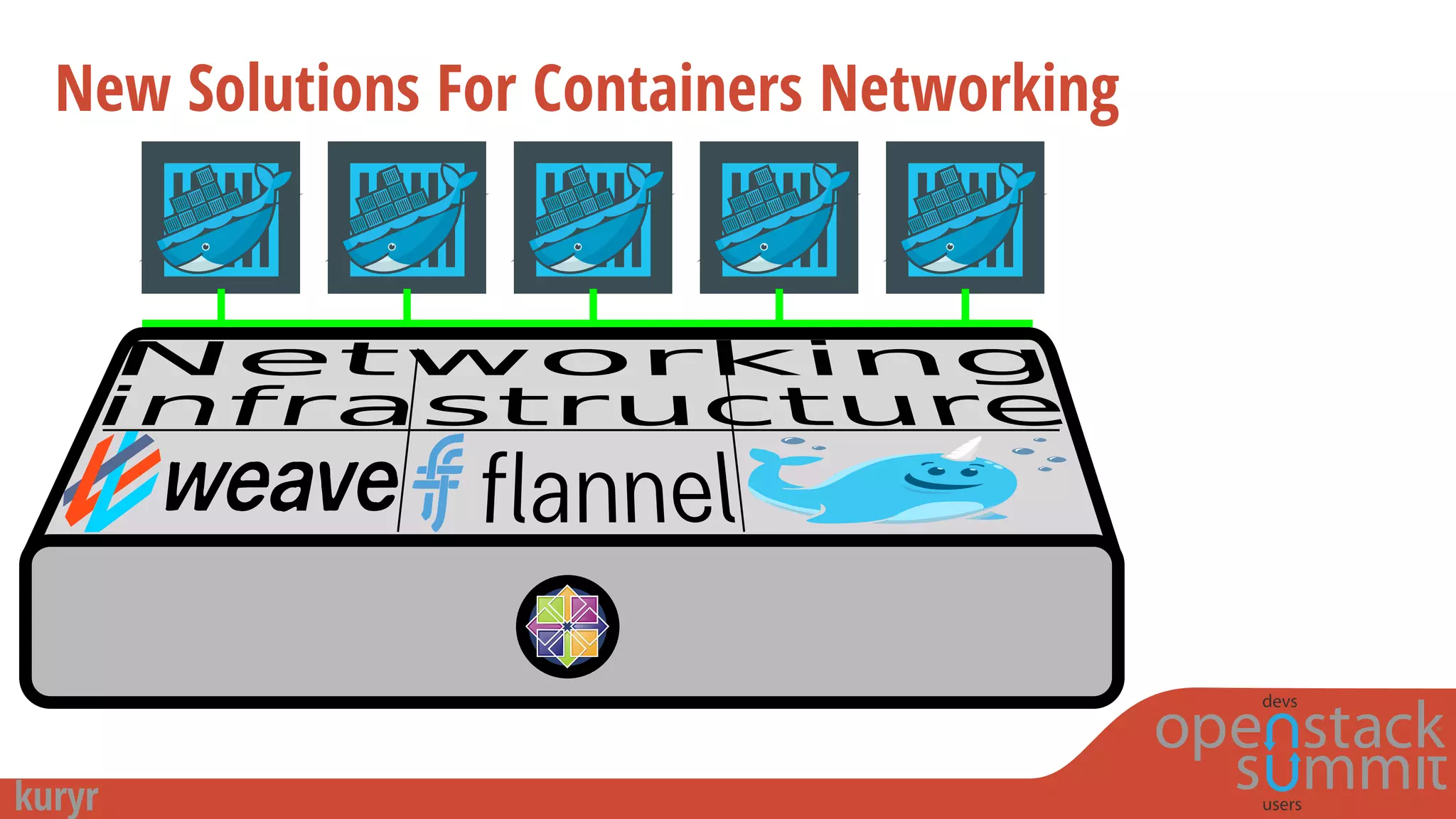

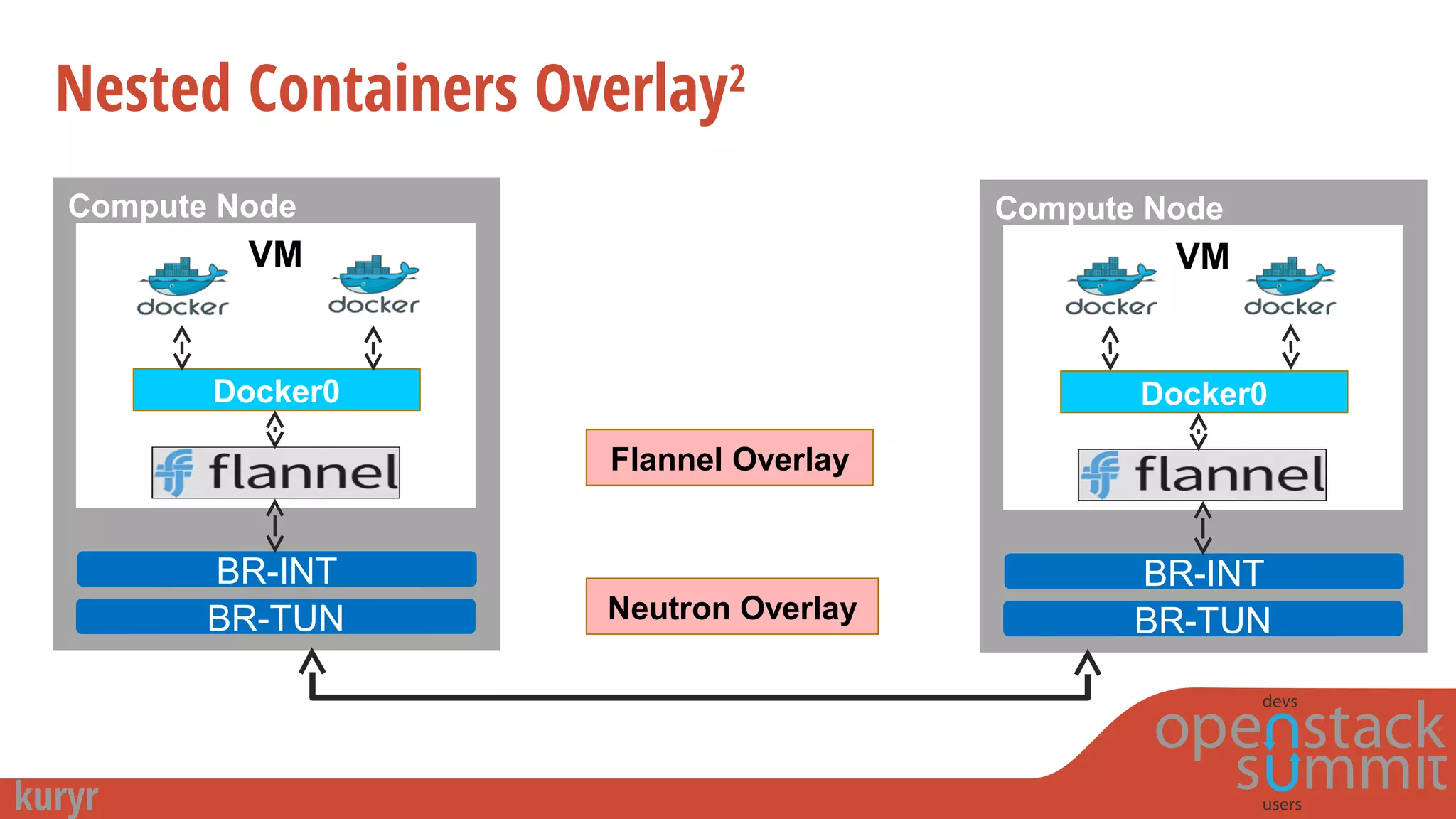

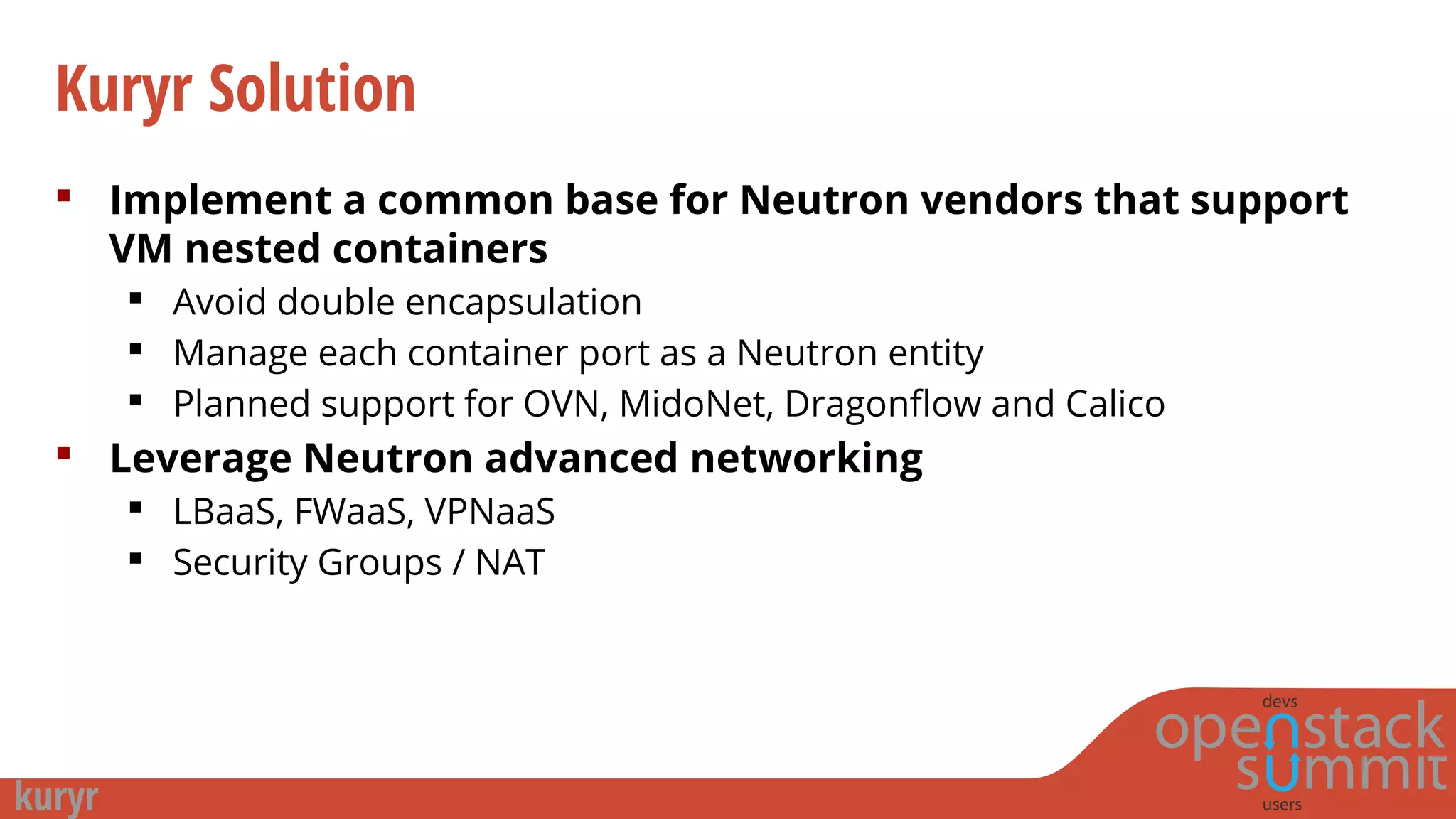

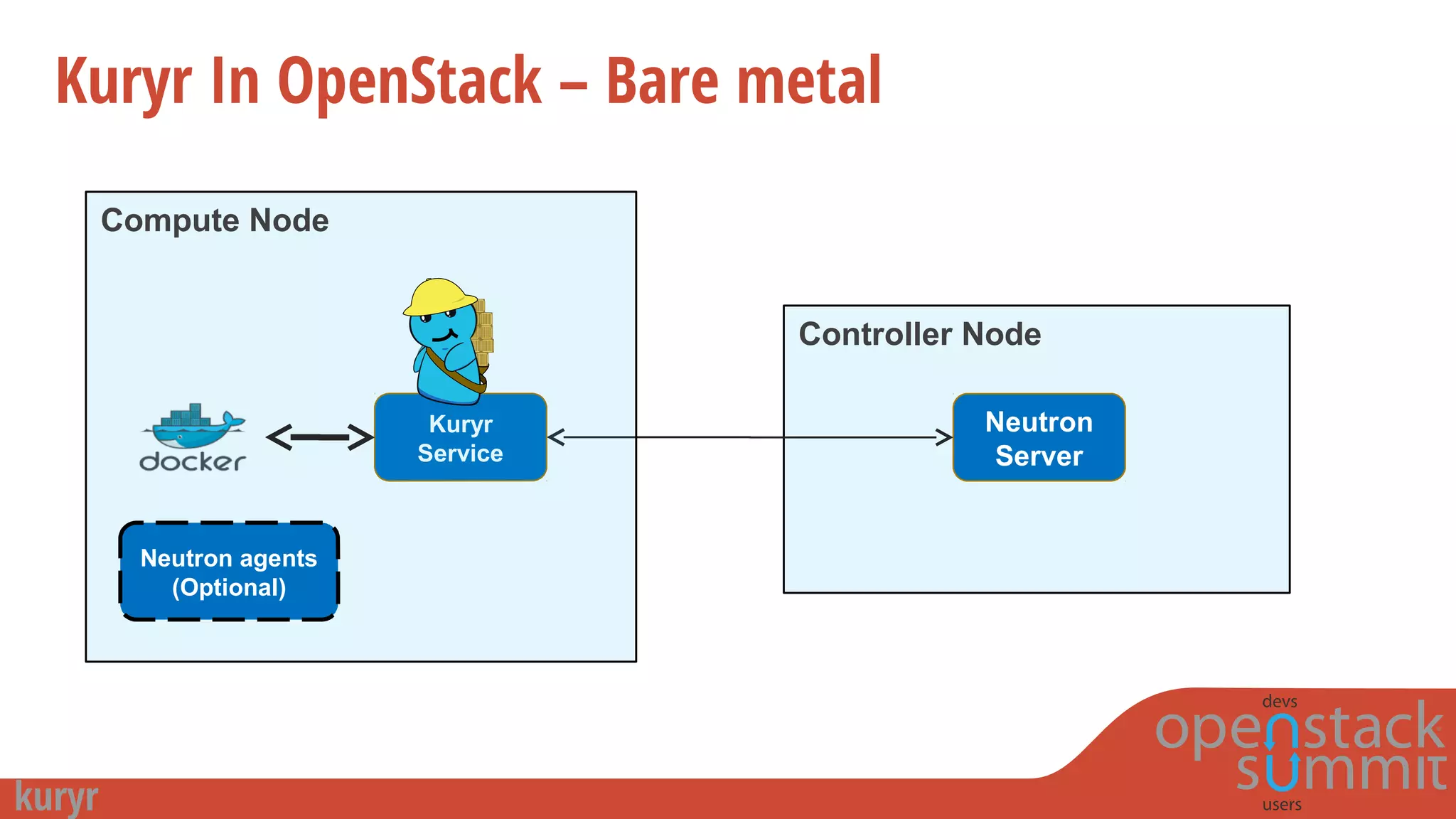

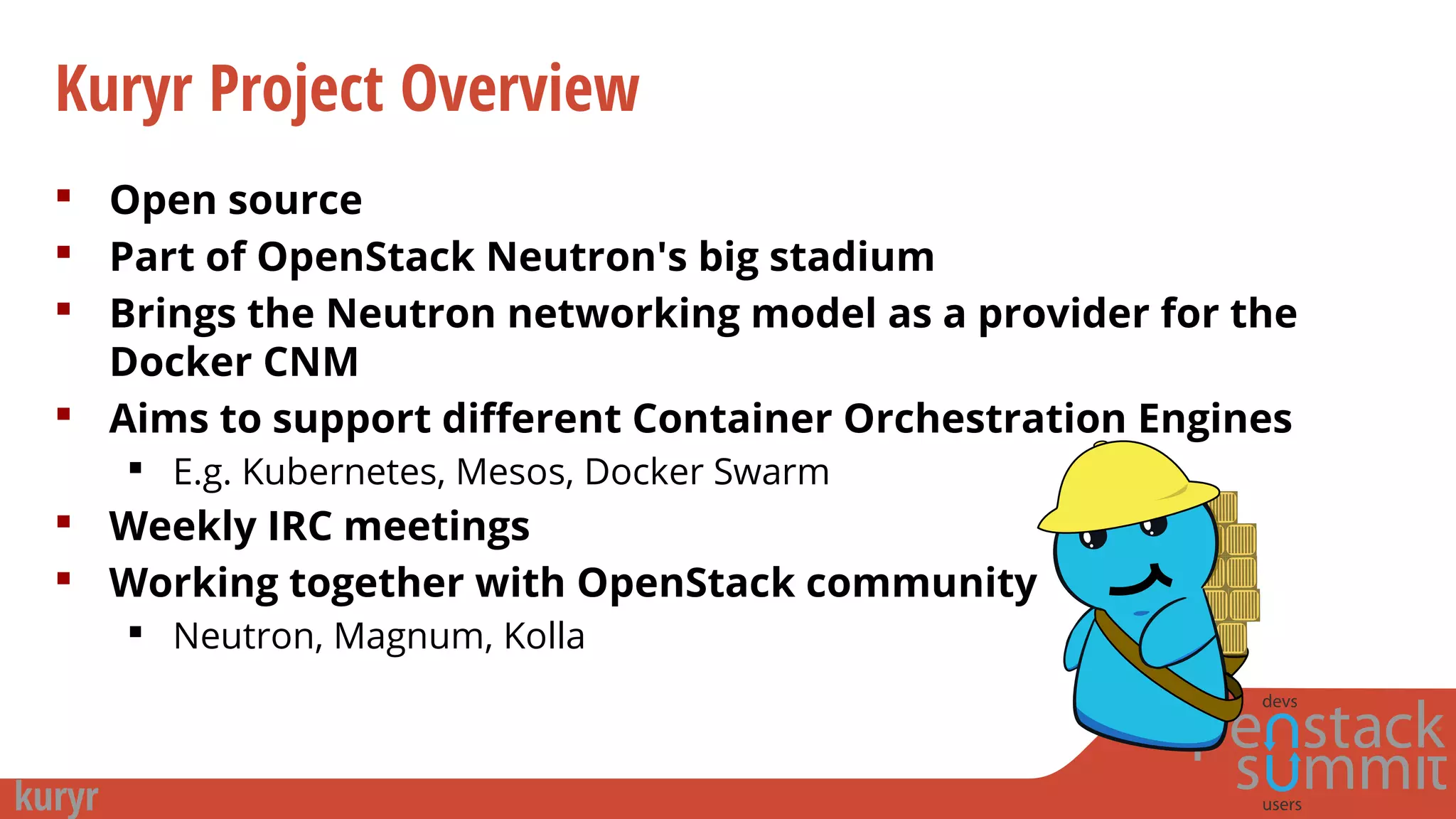

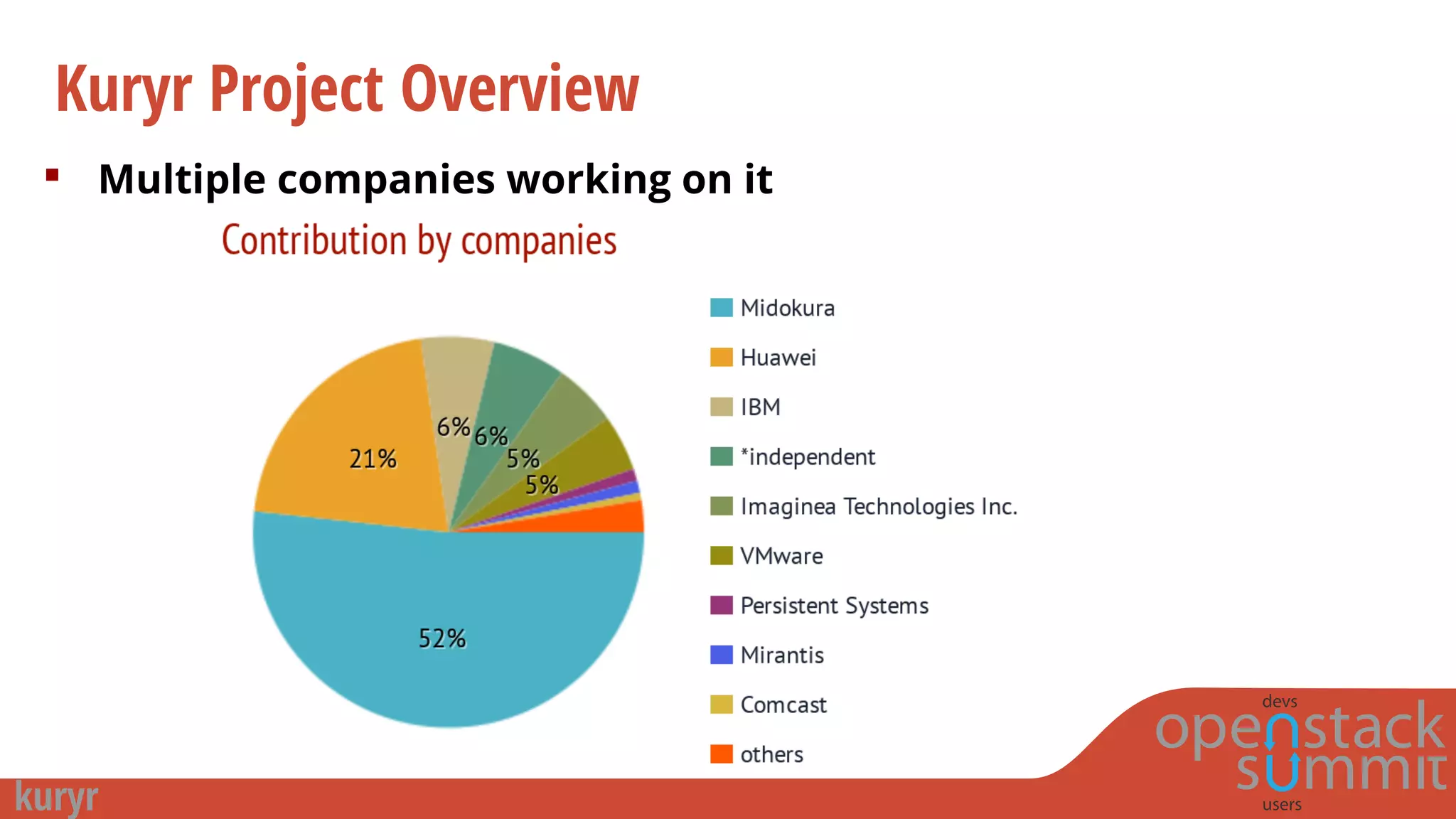

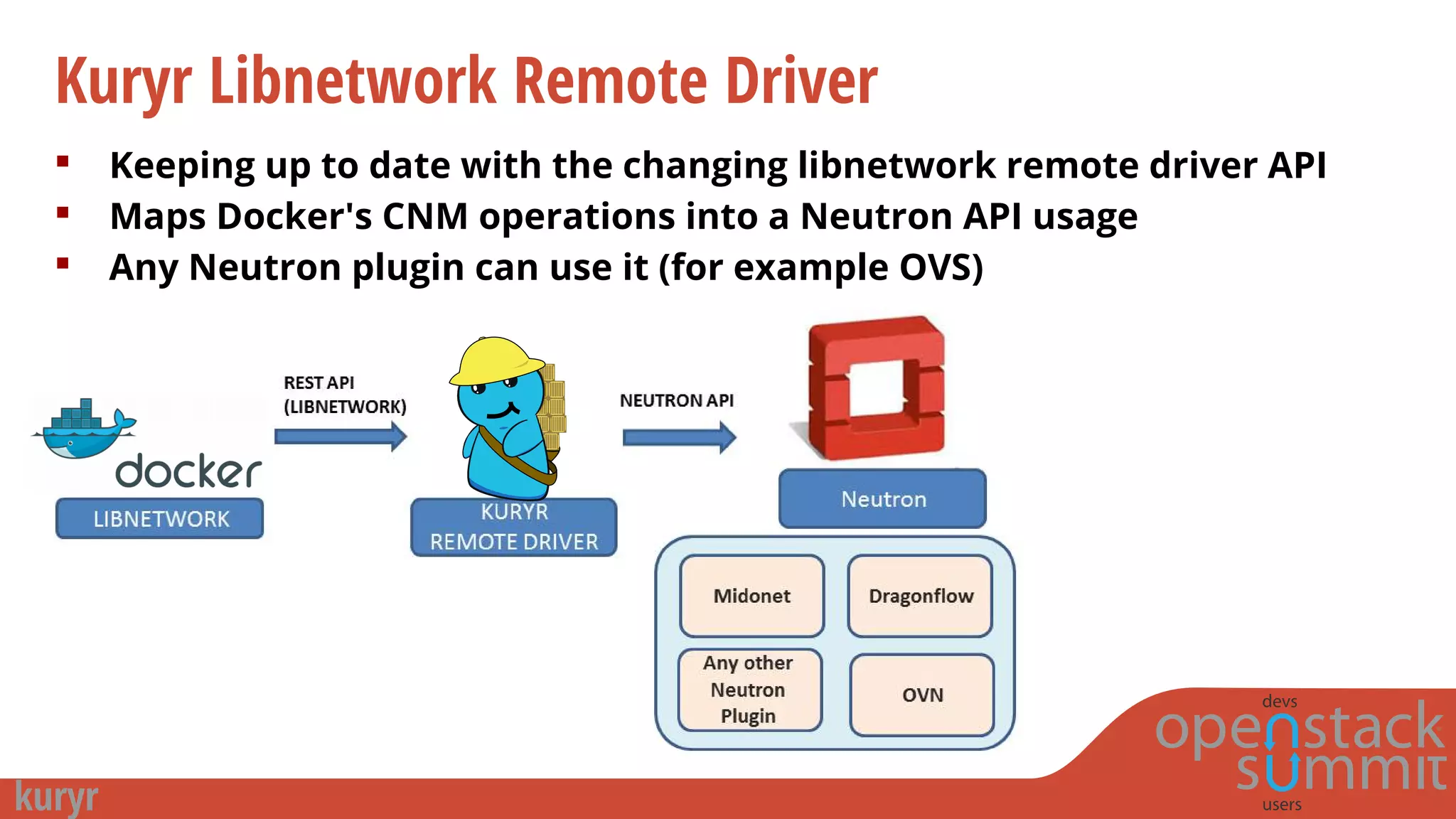

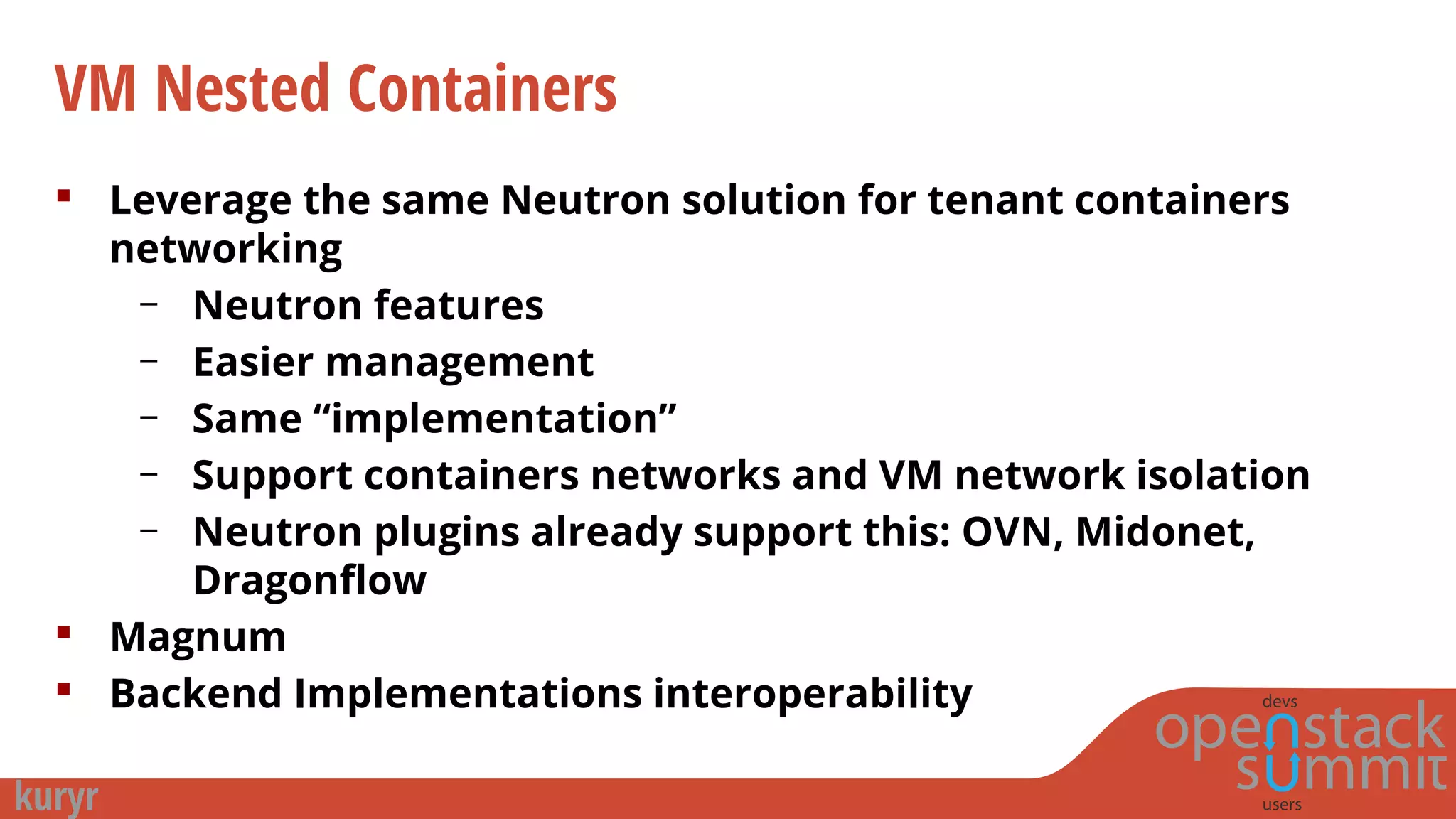

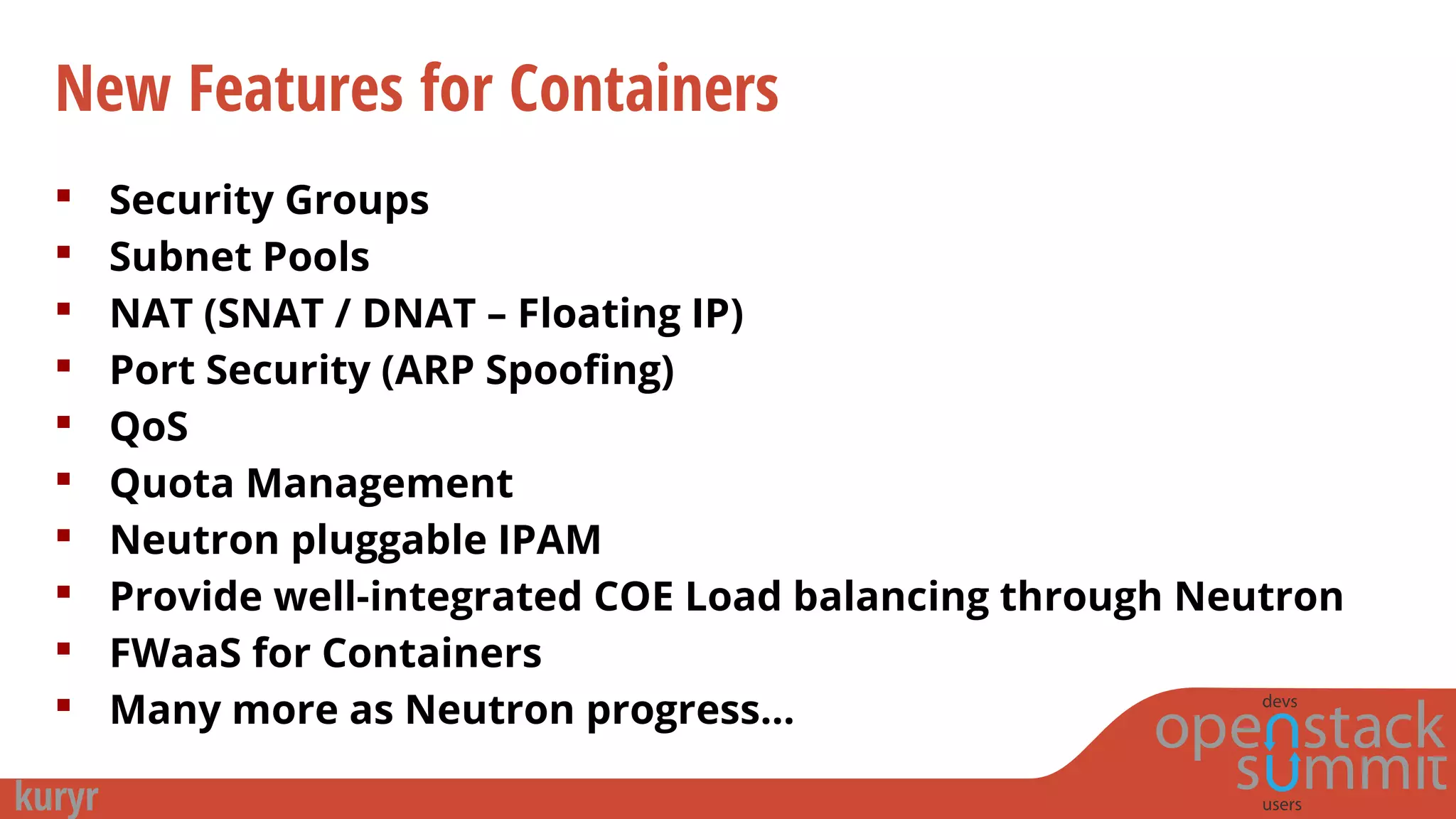

Project Kuryr aims to provide Neutron networking abstractions and APIs for container networking, avoiding vendor lock-in. It maps container networking operations to the Neutron API, so that different Neutron plugins such as OVN, MidoNet, and Calico can provide networking for containers. Containers thereby gain Neutron features such as security groups, LBaaS, and FWaaS, and avoid the performance, management, and flexibility issues of current container networking solutions. Kuryr gives Neutron vendors a common base for supporting both VM and container networking.
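The idea of mapping container networking operations onto the Neutron API can be illustrated with a small sketch. This is not Kuryr's implementation: `FakeNeutron` is a hypothetical in-memory stand-in for a Neutron client, `ContainerNetworkDriver` and its method names are invented for illustration, and the operation names only loosely follow what a container runtime might request (create a network, attach an endpoint, detach it).

```python
"""Illustrative sketch only. FakeNeutron is a hypothetical in-memory
stand-in for a Neutron client, and ContainerNetworkDriver is an invented
name; neither reflects Kuryr's actual code."""
import itertools


class FakeNeutron:
    """Hypothetical stand-in that records networks and ports in memory."""

    def __init__(self):
        self.networks = {}
        self.ports = {}
        self._ids = itertools.count(1)

    def create_network(self, name):
        net_id = f"net-{next(self._ids)}"
        self.networks[net_id] = {"id": net_id, "name": name}
        return self.networks[net_id]

    def create_port(self, network_id, security_groups=()):
        if network_id not in self.networks:
            raise KeyError(f"unknown network {network_id}")
        port_id = f"port-{next(self._ids)}"
        self.ports[port_id] = {
            "id": port_id,
            "network_id": network_id,
            "security_groups": list(security_groups),
        }
        return self.ports[port_id]

    def delete_port(self, port_id):
        del self.ports[port_id]


class ContainerNetworkDriver:
    """Translates container-side requests into Neutron-style calls."""

    def __init__(self, neutron):
        self.neutron = neutron
        self._net_by_name = {}       # container network name -> Neutron network id
        self._port_by_endpoint = {}  # container endpoint id -> Neutron port id

    def create_network(self, name):
        # A container network is backed by one Neutron network.
        net = self.neutron.create_network(name)
        self._net_by_name[name] = net["id"]
        return net

    def create_endpoint(self, network_name, endpoint_id, security_groups=()):
        # A container endpoint is backed by one Neutron port, so Neutron
        # features such as security groups apply to the container.
        port = self.neutron.create_port(
            self._net_by_name[network_name], security_groups
        )
        self._port_by_endpoint[endpoint_id] = port["id"]
        return port

    def delete_endpoint(self, endpoint_id):
        # Removing the endpoint releases the backing Neutron port.
        self.neutron.delete_port(self._port_by_endpoint.pop(endpoint_id))
```

Because the driver only talks to the Neutron-style interface, whichever plugin sits behind that interface does the actual wiring, which is the sense in which the same abstraction can serve different vendors.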

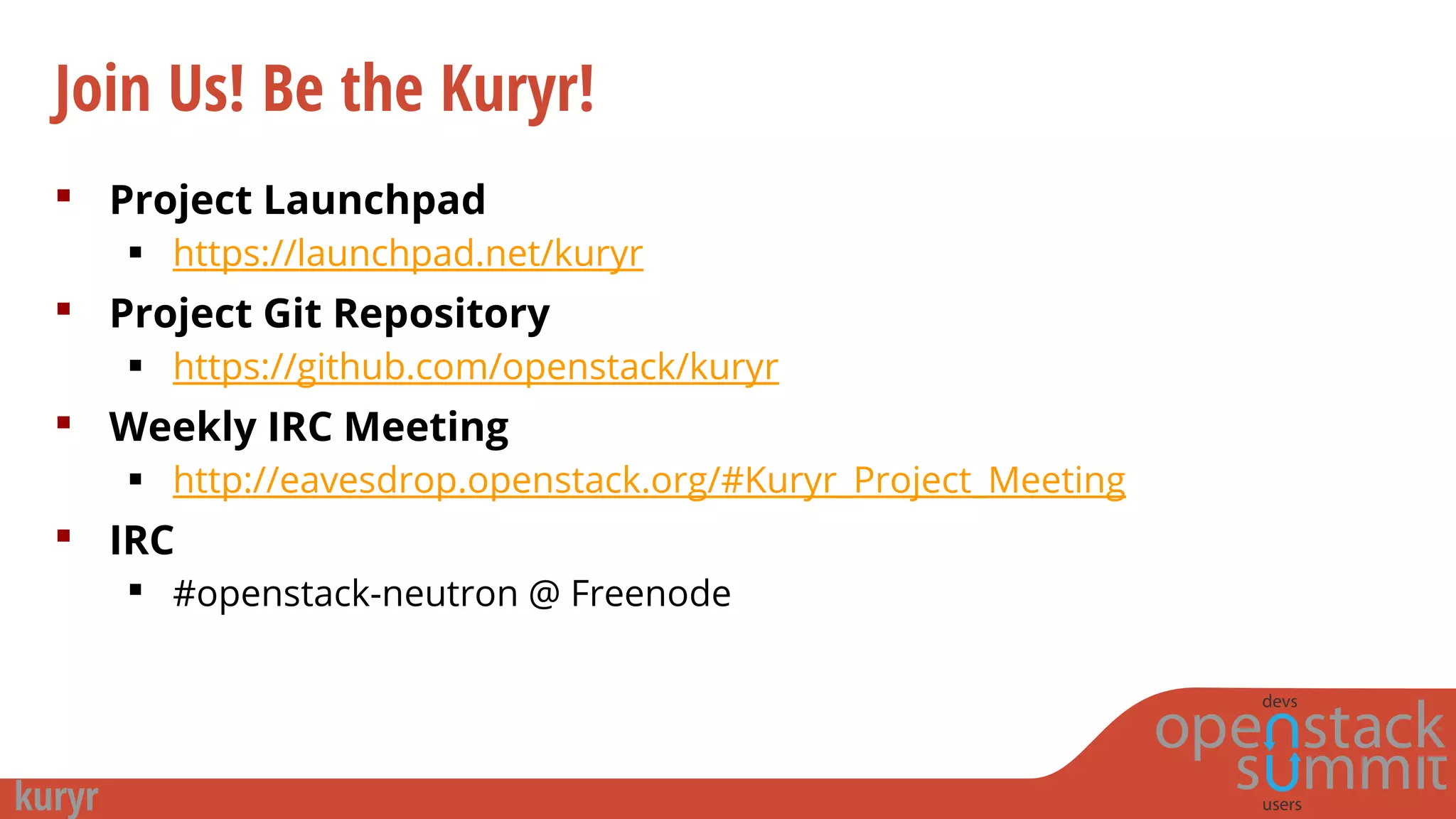

![Join Us! Be the Kuryr!](https://image.slidesharecdn.com/kuryr-tokyorc1-151118234630-lva1-app6891/75/Tech-Talk-by-Gal-Sagie-Kuryr-Connecting-containers-networking-to-OpenStack-Neutron-25-2048.jpg)

Mailing List: openstack-dev@lists.openstack.org (`[Neutron][Kuryr]`)

Trello Board: https://trello.com/b/cbIAXrQ2/project-kuryr

Documentation: http://docs.openstack.org/developer/kuryr

Getting Started Blog posts:

http://galsagie.github.io/sdn/openstack/docker/kuryr/neutron/2015/08/24/kuryr-part1/

http://galsagie.github.io/sdn/openstack/docker/kuryr/neutron/2015/10/10/kuryr-ovn/