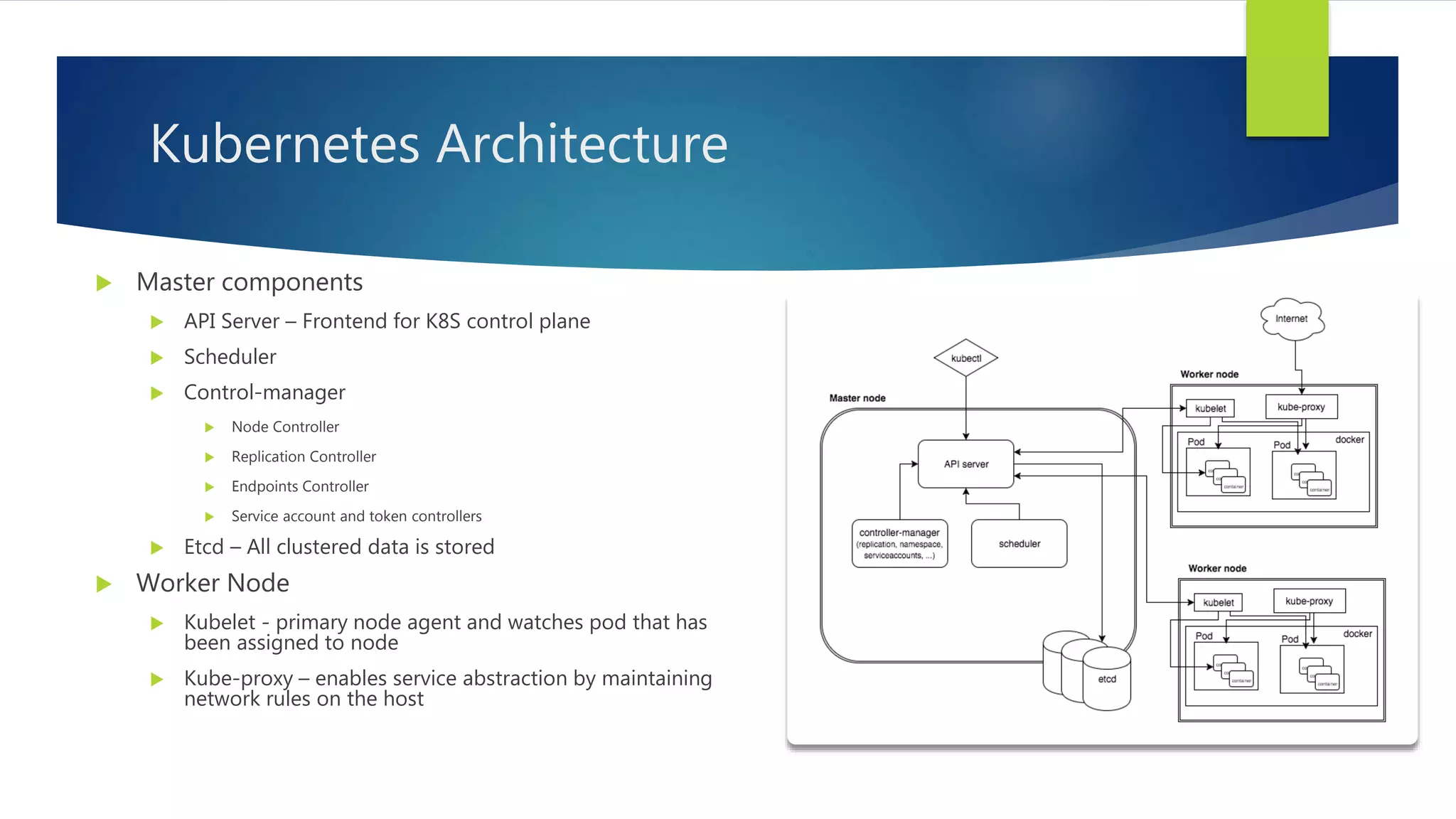

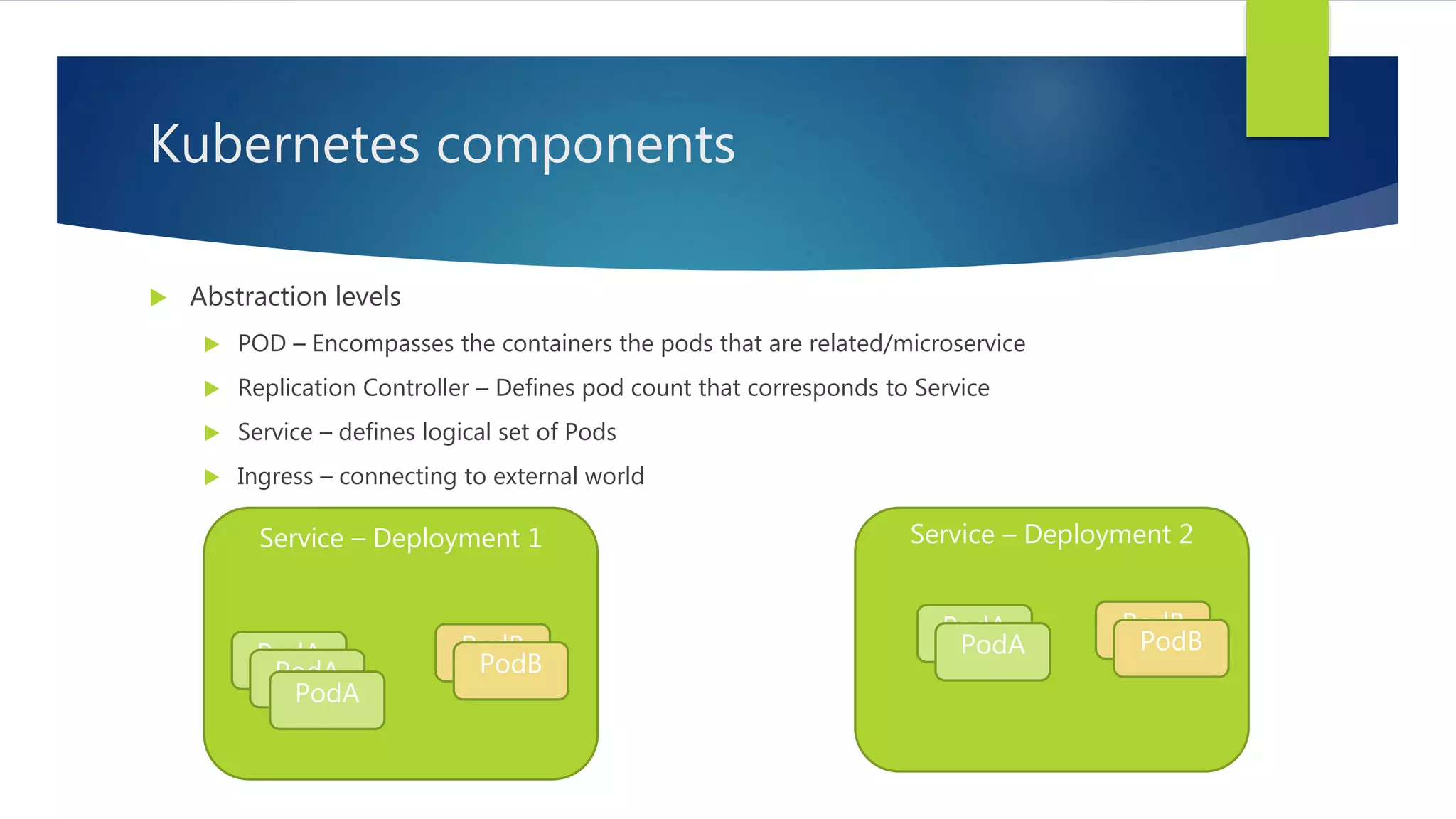

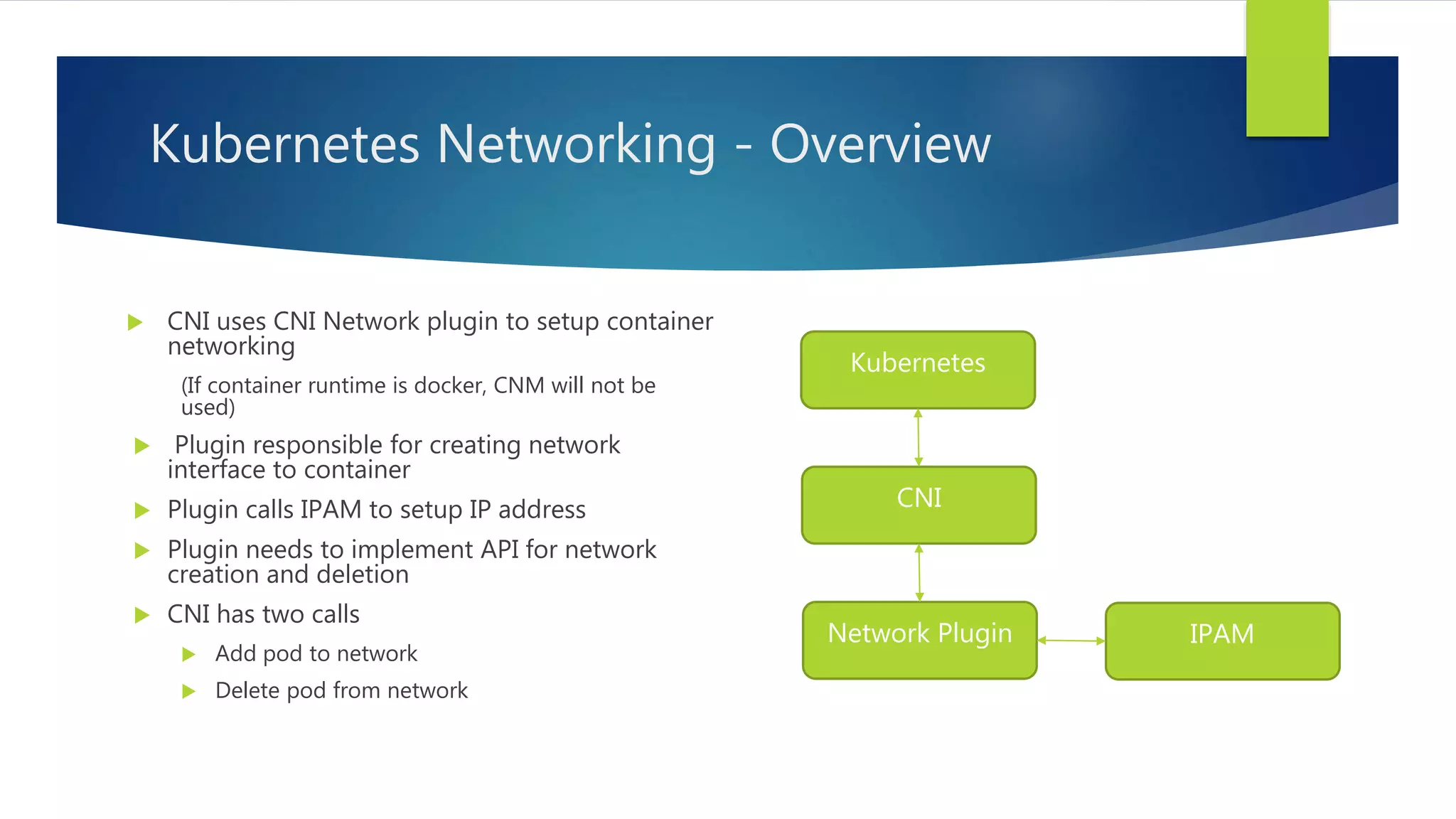

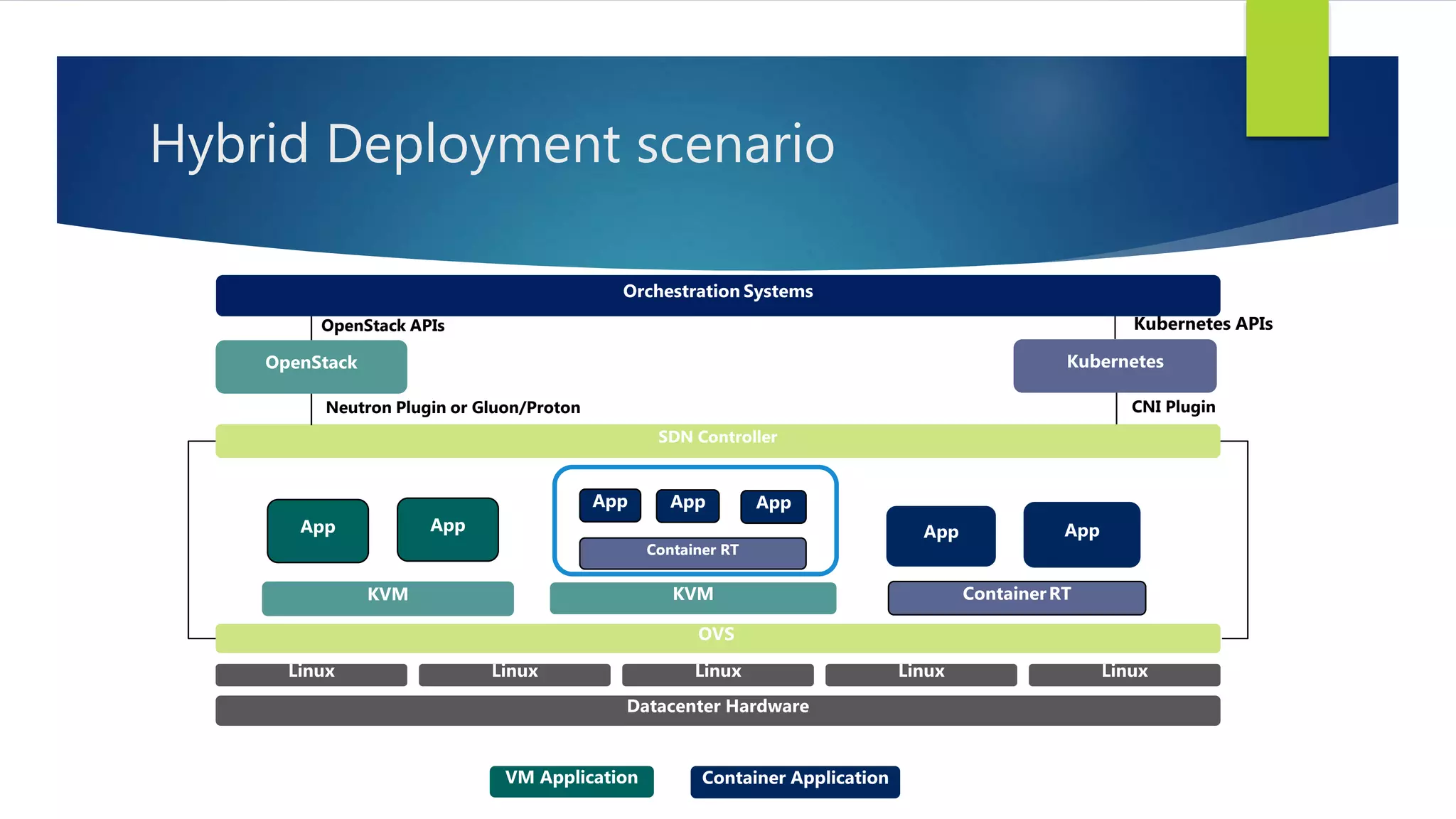

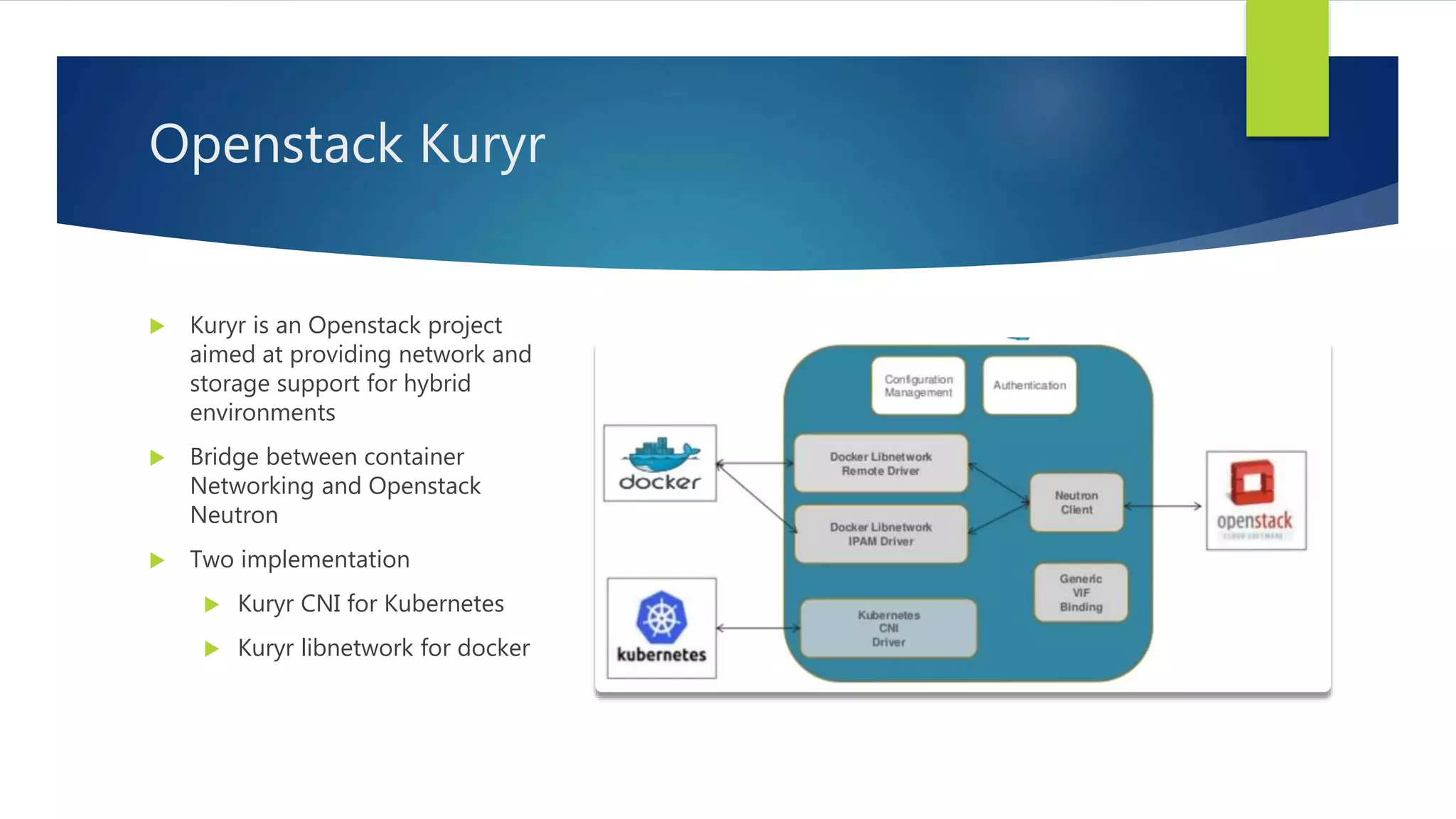

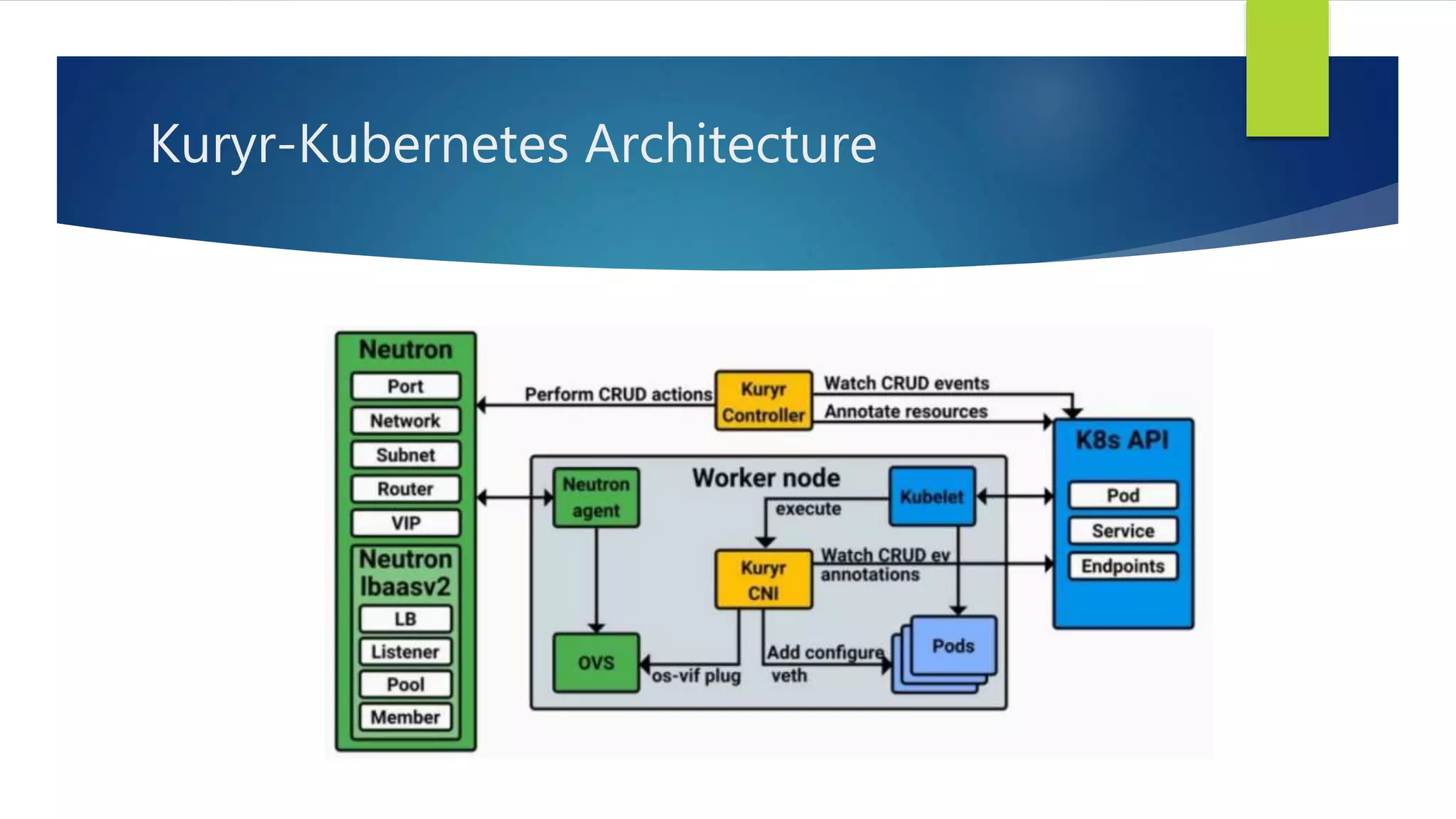

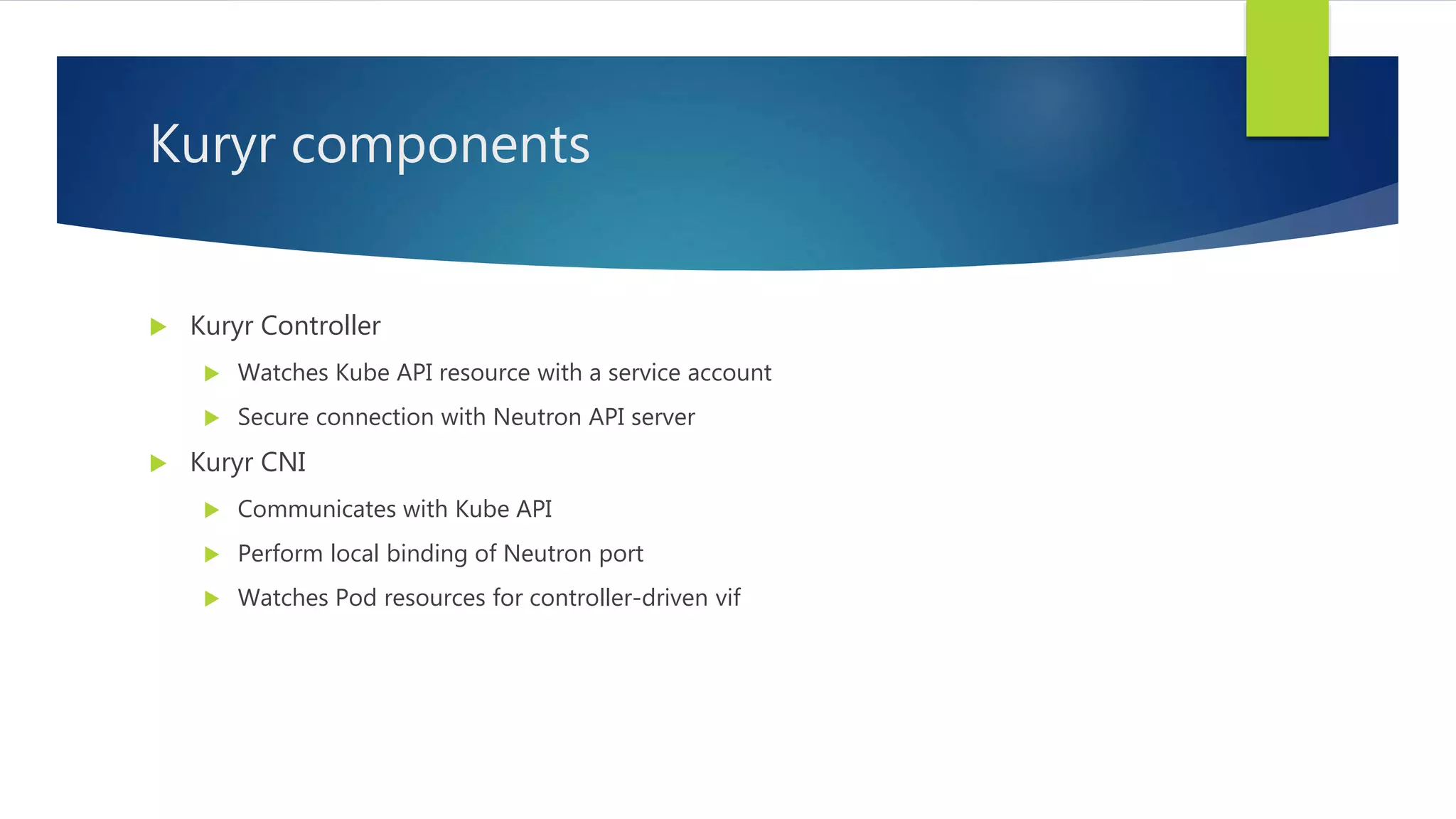

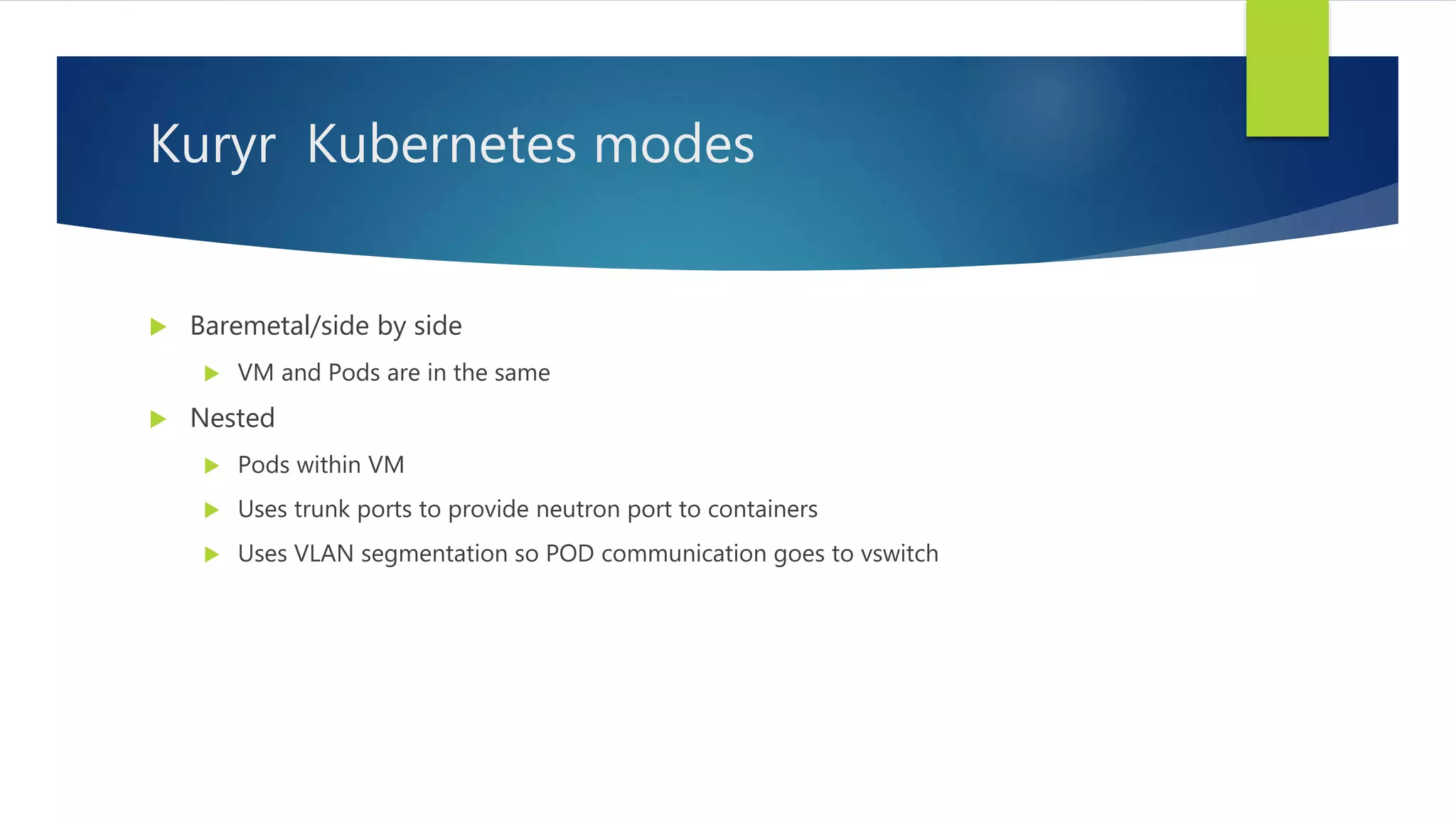

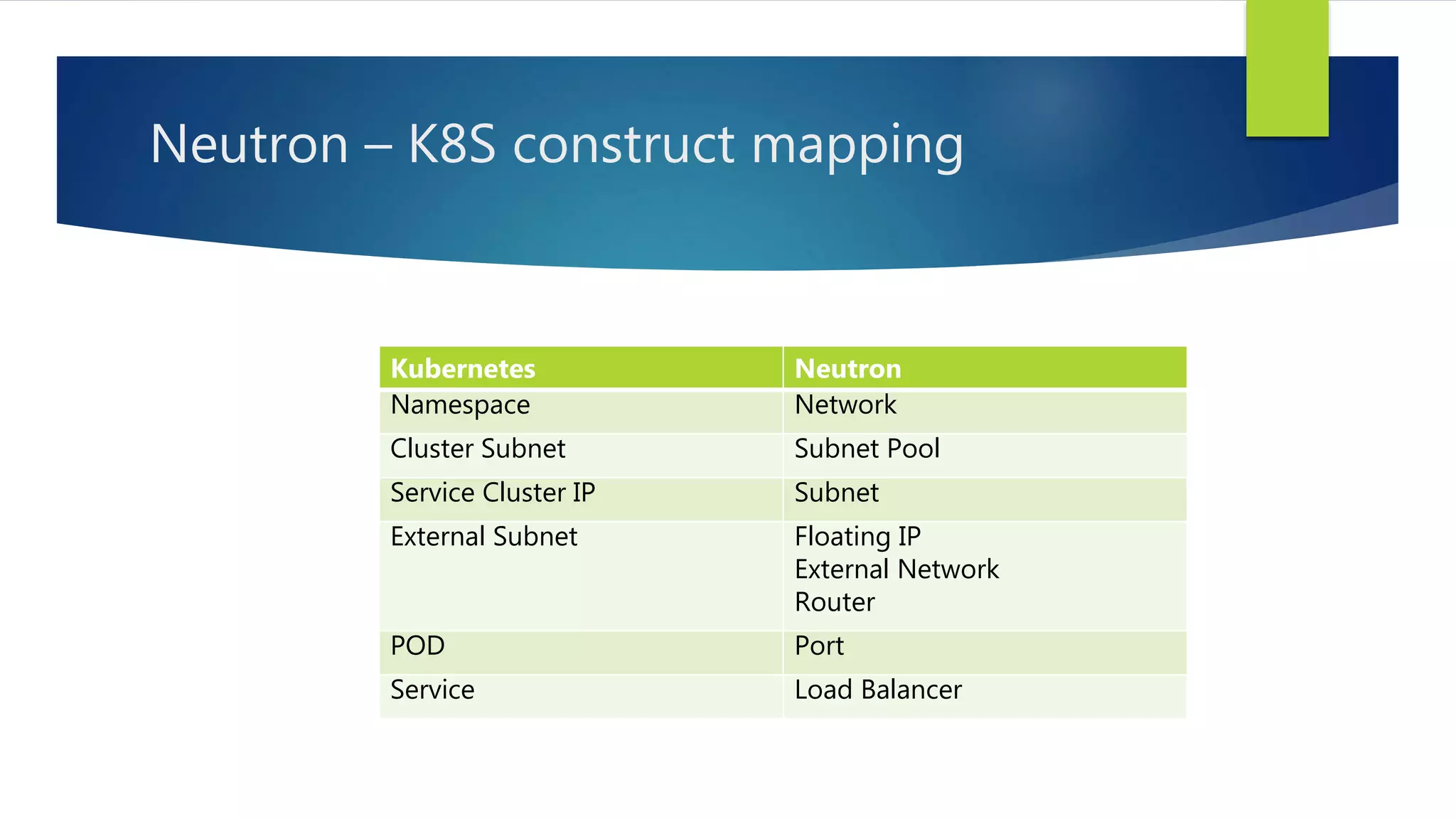

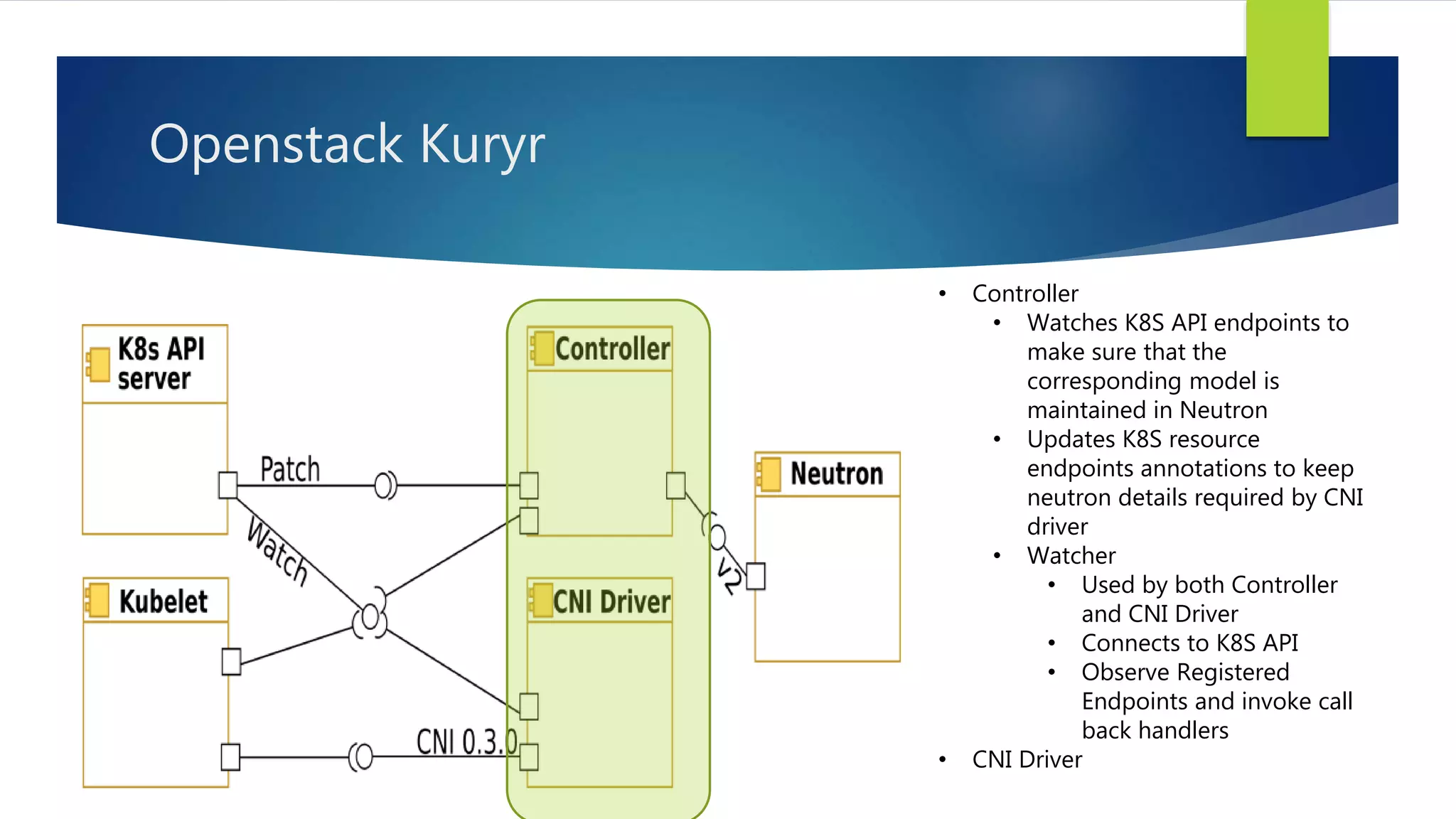

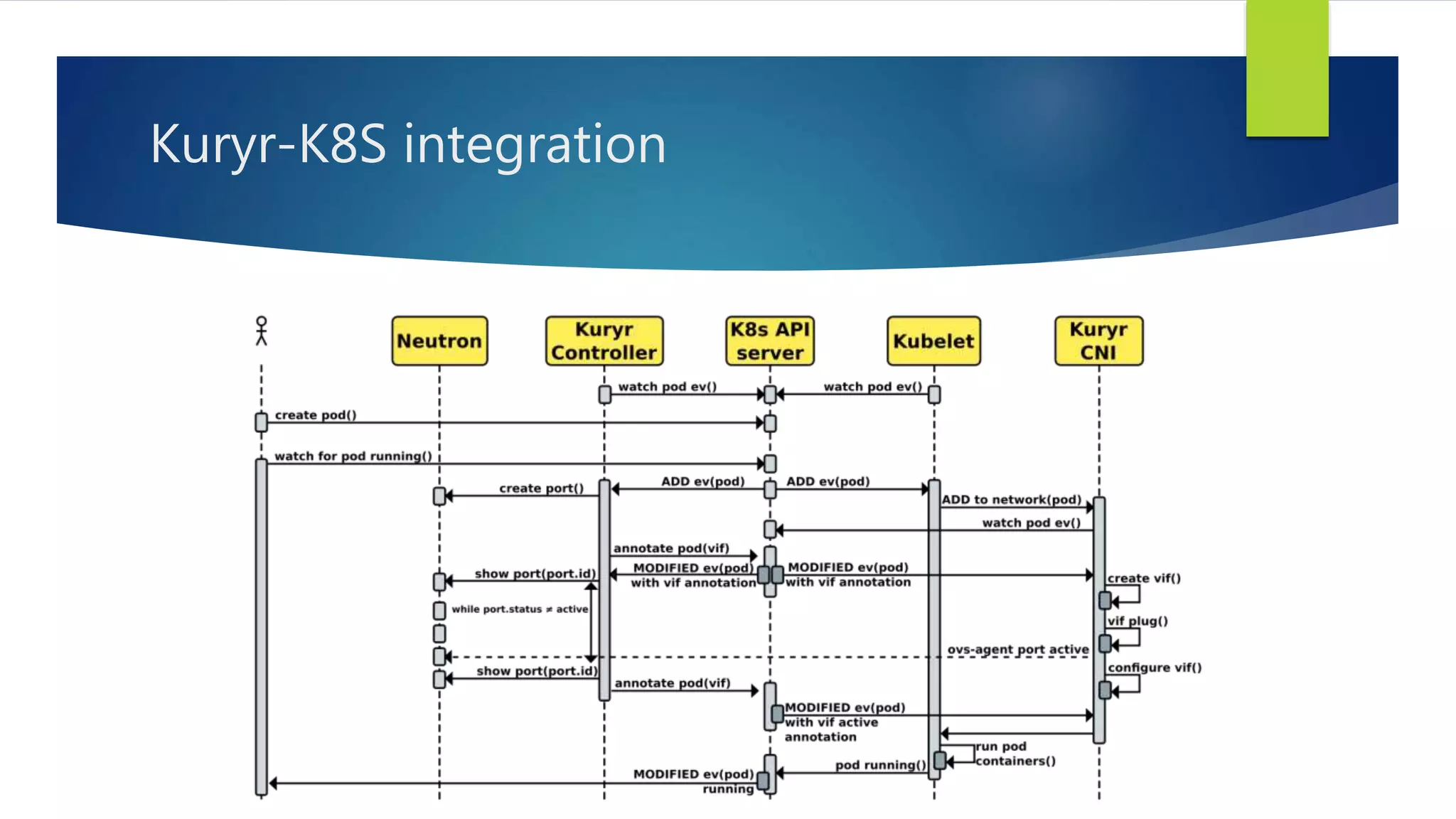

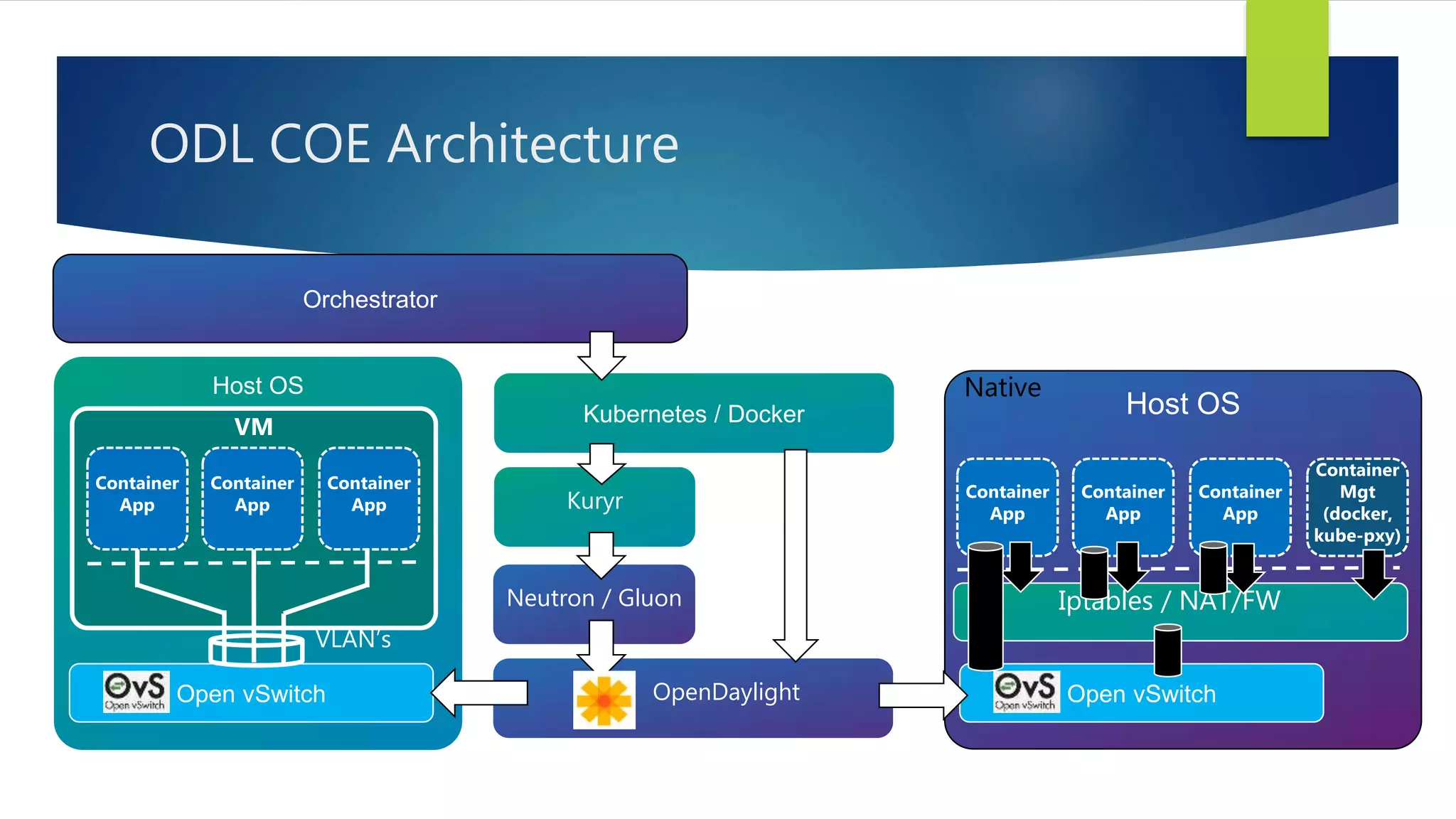

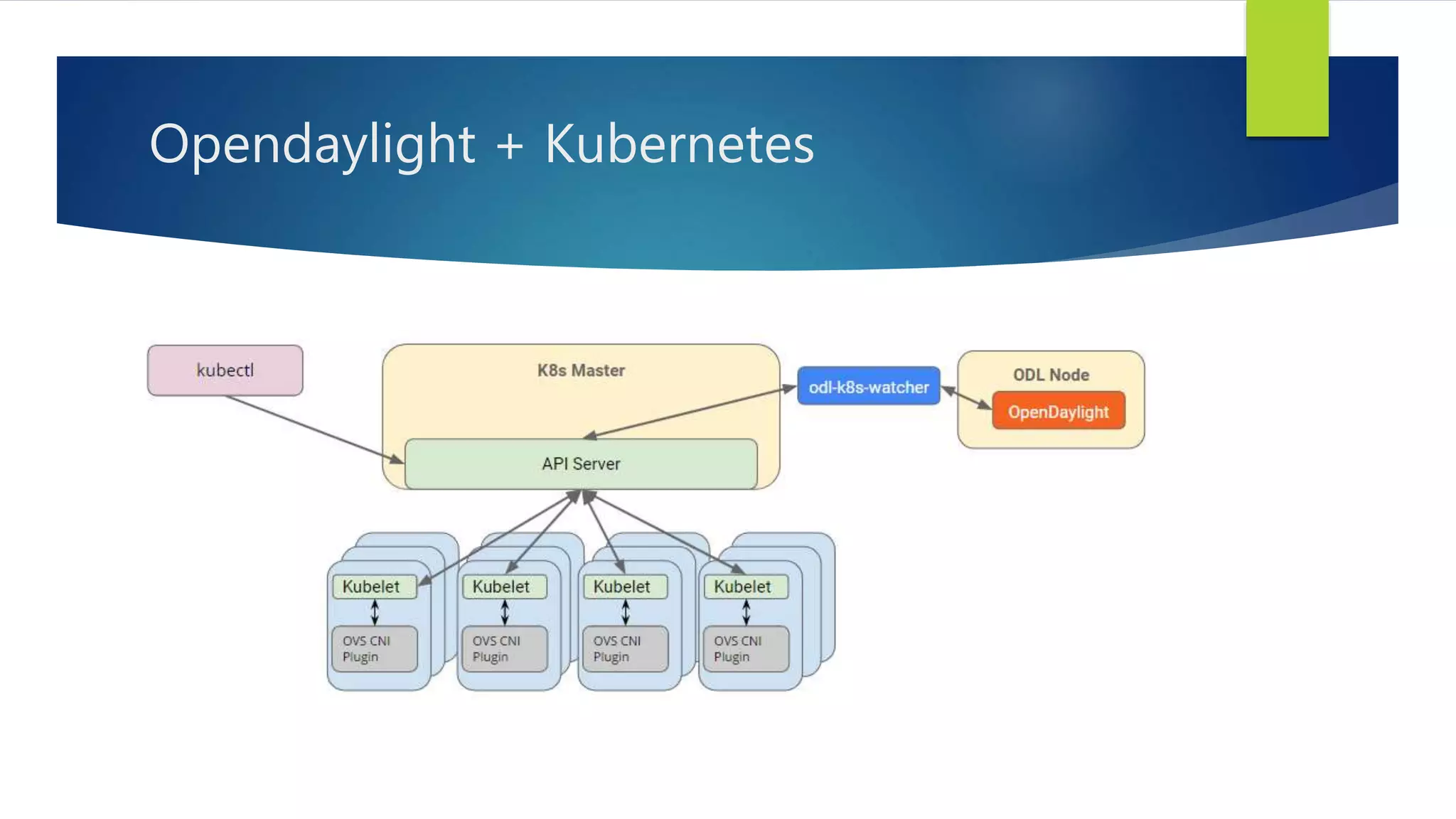

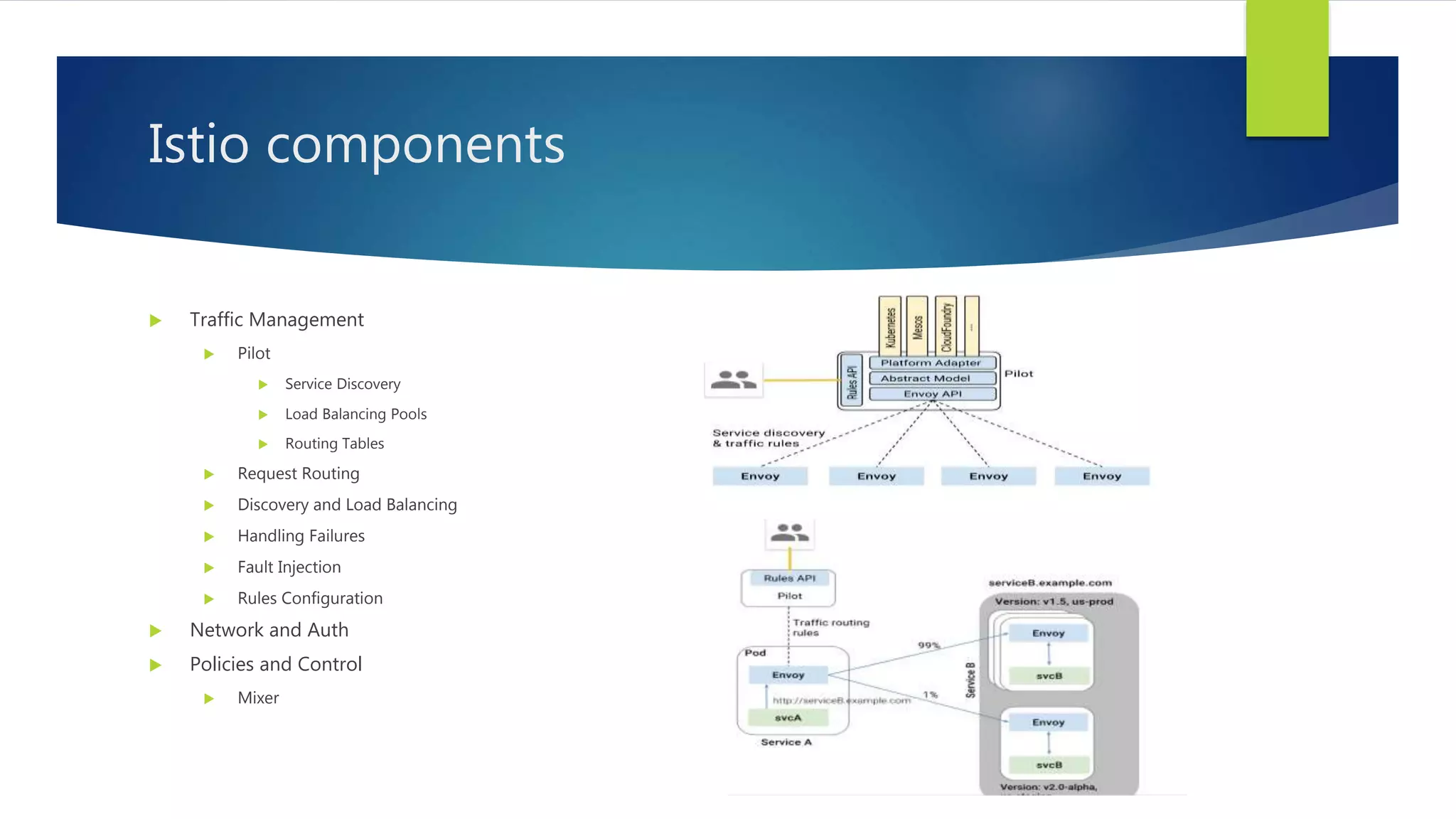

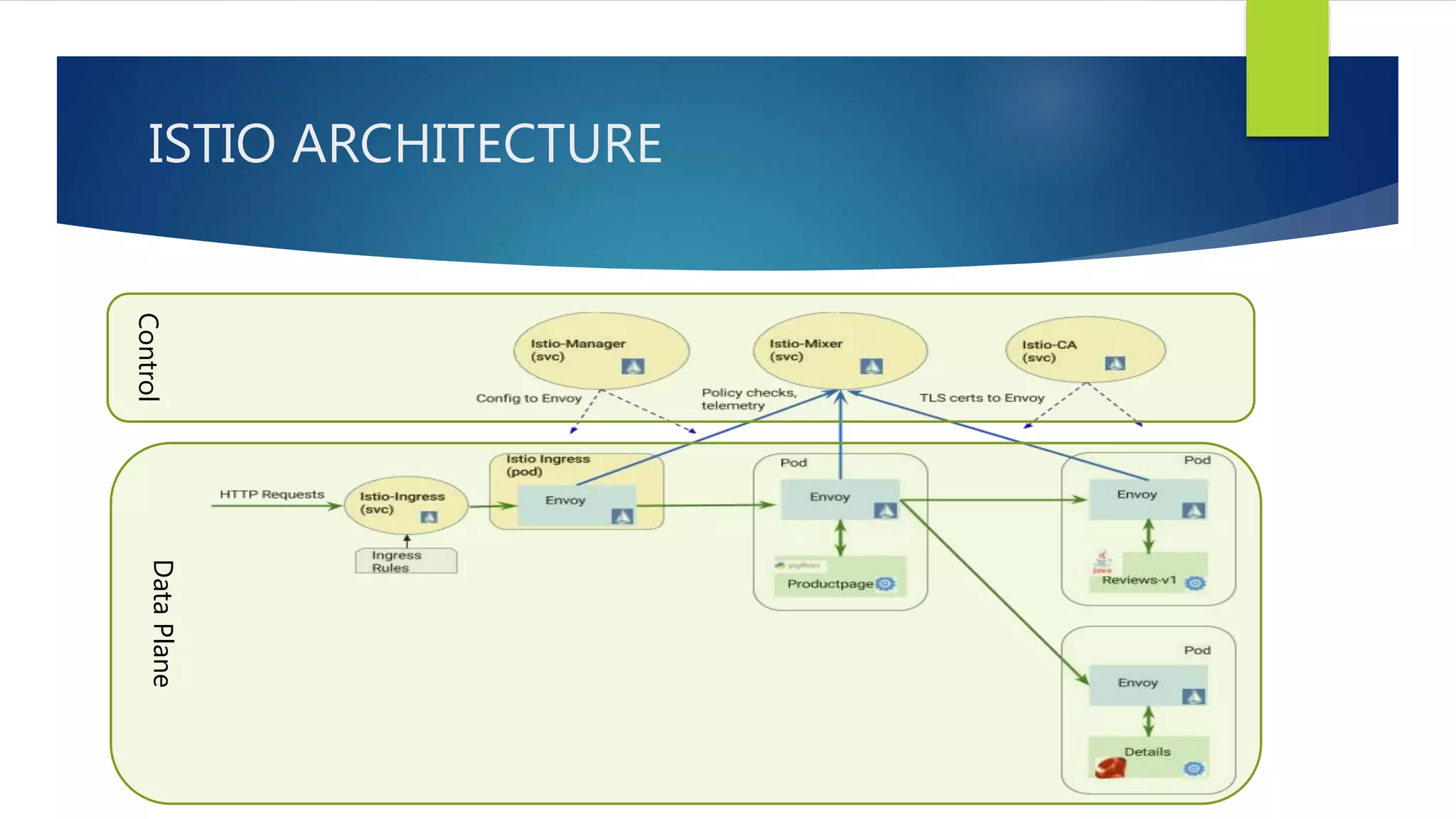

The document discusses managing containers and virtual machines in hybrid networking environments. It opens with an overview of Kubernetes networking basics and the challenges of Kubernetes and OpenStack interoperability. It then describes the OpenStack Kuryr project, which bridges container networking and OpenStack Neutron, along with Kuryr's components and modes of operation. It also briefly outlines the OpenDaylight COE architecture for integrating Kubernetes and OpenStack. Finally, it introduces the service mesh as a way to manage communication between microservices and summarizes the key components of the Istio service mesh.