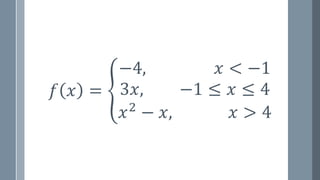

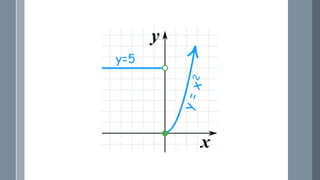

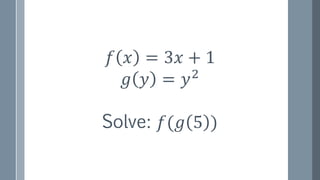

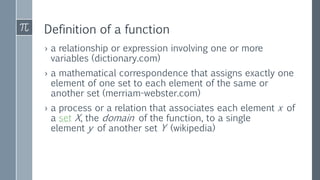

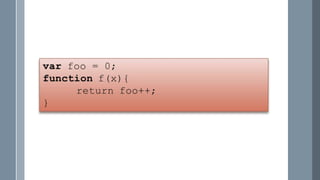

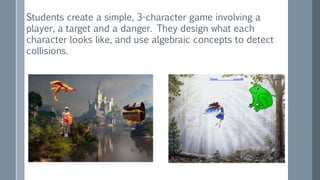

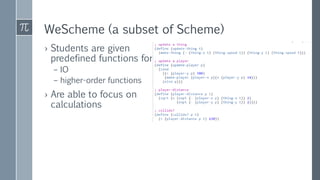

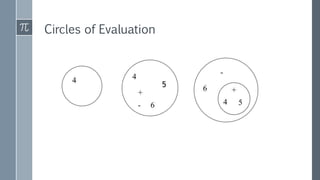

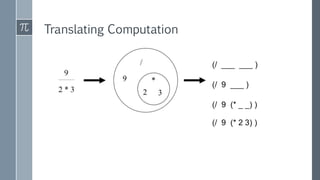

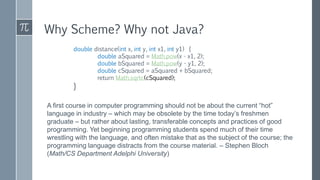

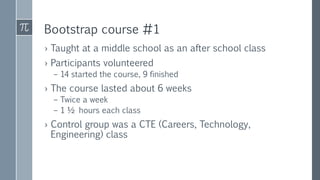

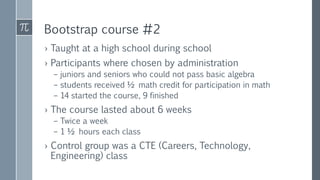

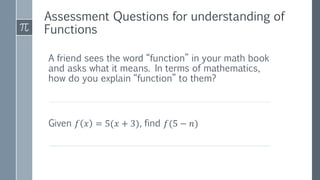

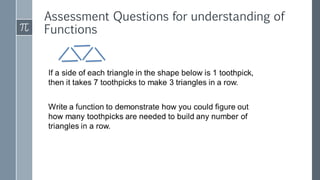

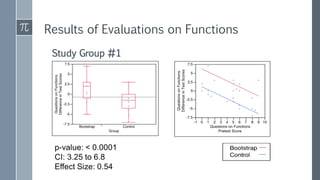

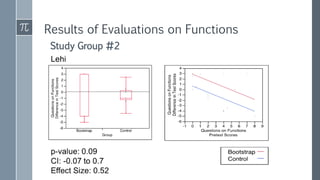

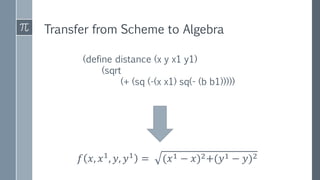

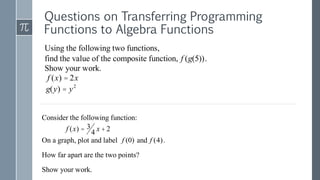

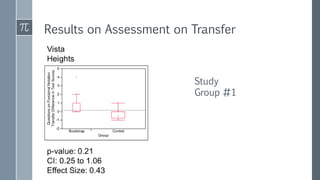

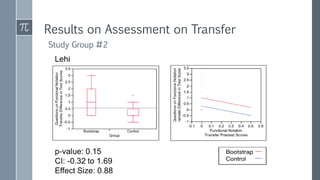

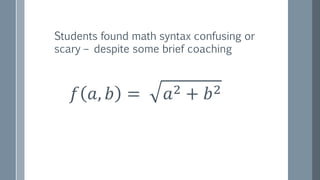

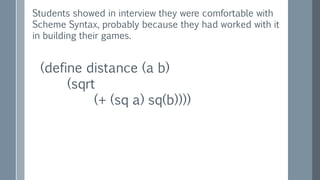

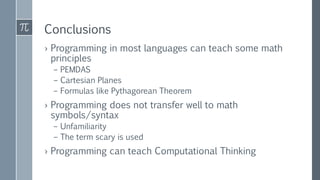

The document describes a curriculum that uses functional programming to teach algebra, piloted in the summer of 1967, and highlights how programming can give students concrete insight into mathematical concepts such as functions. It details two Bootstrap courses taught at different schools, the methods used to assess them, and how students struggled with mathematical syntax compared with programming syntax. The findings suggest that although programming can teach some mathematical principles, little of that knowledge transfers to students' understanding of traditional mathematics.