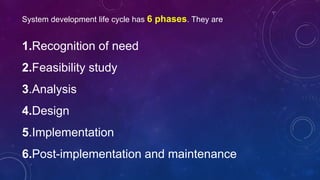

The document discusses the system development life cycle (SDLC), which consists of six phases: 1) recognition of need, 2) feasibility study, 3) analysis, 4) design, 5) implementation, and 6) post-implementation and maintenance. It gives details on each phase: analysis involves defining system boundaries and collecting data, design sets out through technical specifications how the problem will be solved, and implementation includes user training, testing, and file conversion. Overall, the SDLC gives a system project meaning and direction by keeping user needs in view from the initial recognition of need through ongoing maintenance.