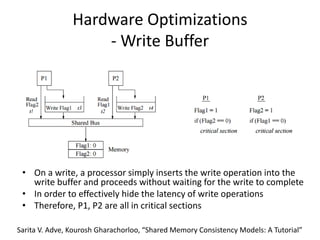

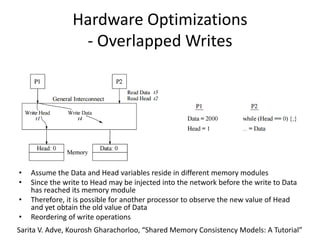

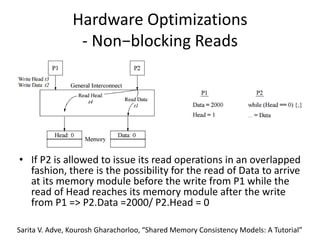

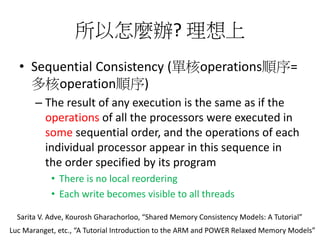

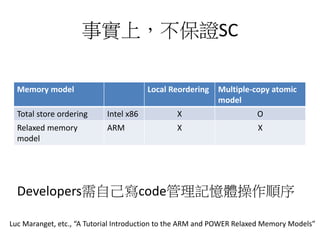

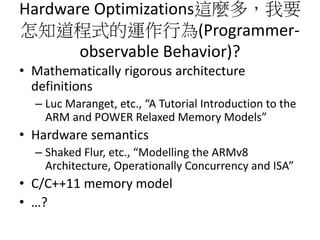

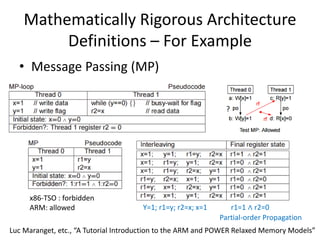

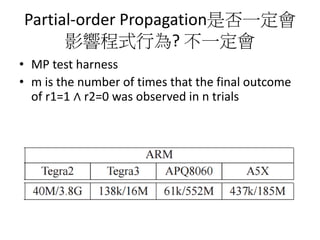

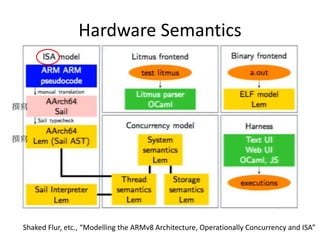

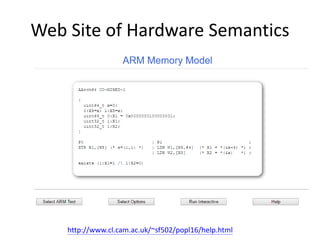

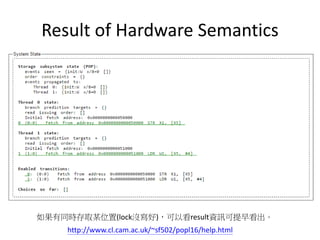

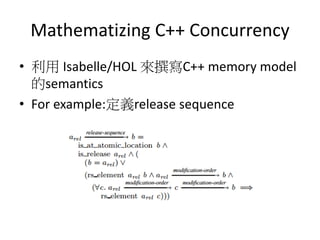

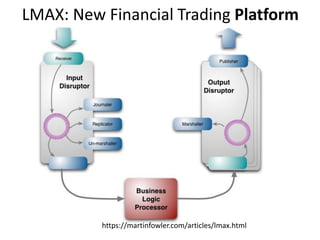

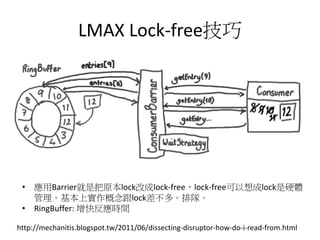

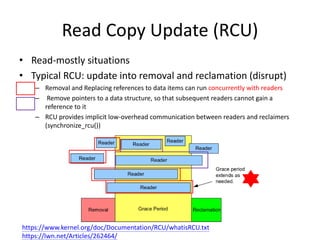

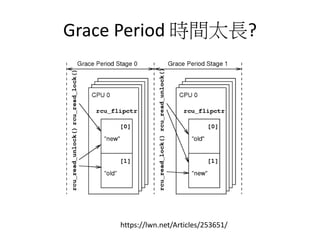

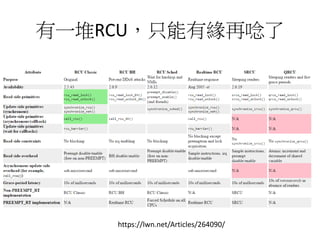

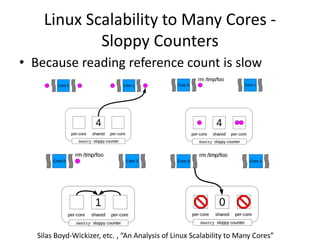

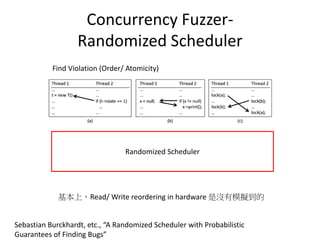

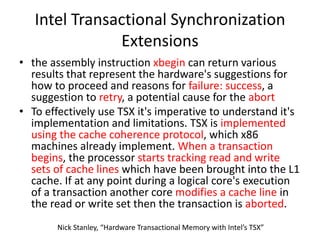

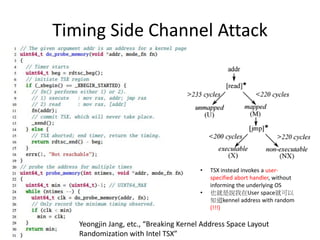

This document discusses the challenges of writing concurrent programs and gives examples of concurrency techniques. It explains how hardware and compiler optimizations can make a program behave in ways its source code does not suggest. It then covers memory model definitions, performance patterns such as LMAX and read-copy-update (RCU), and security issues such as timing side-channel attacks that use Intel TSX. The goal is to understand how to write correct and efficient concurrent code despite relaxed memory consistency models.
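
As a small illustration of why the memory model matters, the sketch below shows one common way to publish data between two threads in C++ using release/acquire ordering. It is not taken from the slides; the function name `publish_and_read` and its structure are assumptions made for illustration only. Without the release/acquire pair on the flag, the compiler or hardware would be free to reorder the two writes, and the consumer would race on `payload`.

```cpp
#include <atomic>
#include <cassert>
#include <thread>

// Hypothetical helper (not from the source): one thread publishes a value,
// another waits for the flag and reads it. Returns what the consumer saw.
int publish_and_read(int value) {
    int payload = 0;                 // plain, non-atomic data
    std::atomic<bool> ready{false};  // publication flag
    int seen = -1;

    std::thread producer([&] {
        payload = value;                               // 1. write the data
        ready.store(true, std::memory_order_release);  // 2. publish it
    });

    std::thread consumer([&] {
        // 3. Spin until the flag is visible. The acquire load pairs with the
        //    release store, so the write to payload happens-before the read.
        while (!ready.load(std::memory_order_acquire)) {
            std::this_thread::yield();
        }
        seen = payload;                                // 4. read the data
    });

    producer.join();
    consumer.join();
    return seen;
}
```

Calling `publish_and_read(42)` returns 42 under the C++ memory model. Replacing both orderings with `std::memory_order_relaxed` would remove the happens-before edge and turn the access to `payload` into a data race, even if the program appears to work on strongly ordered hardware.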