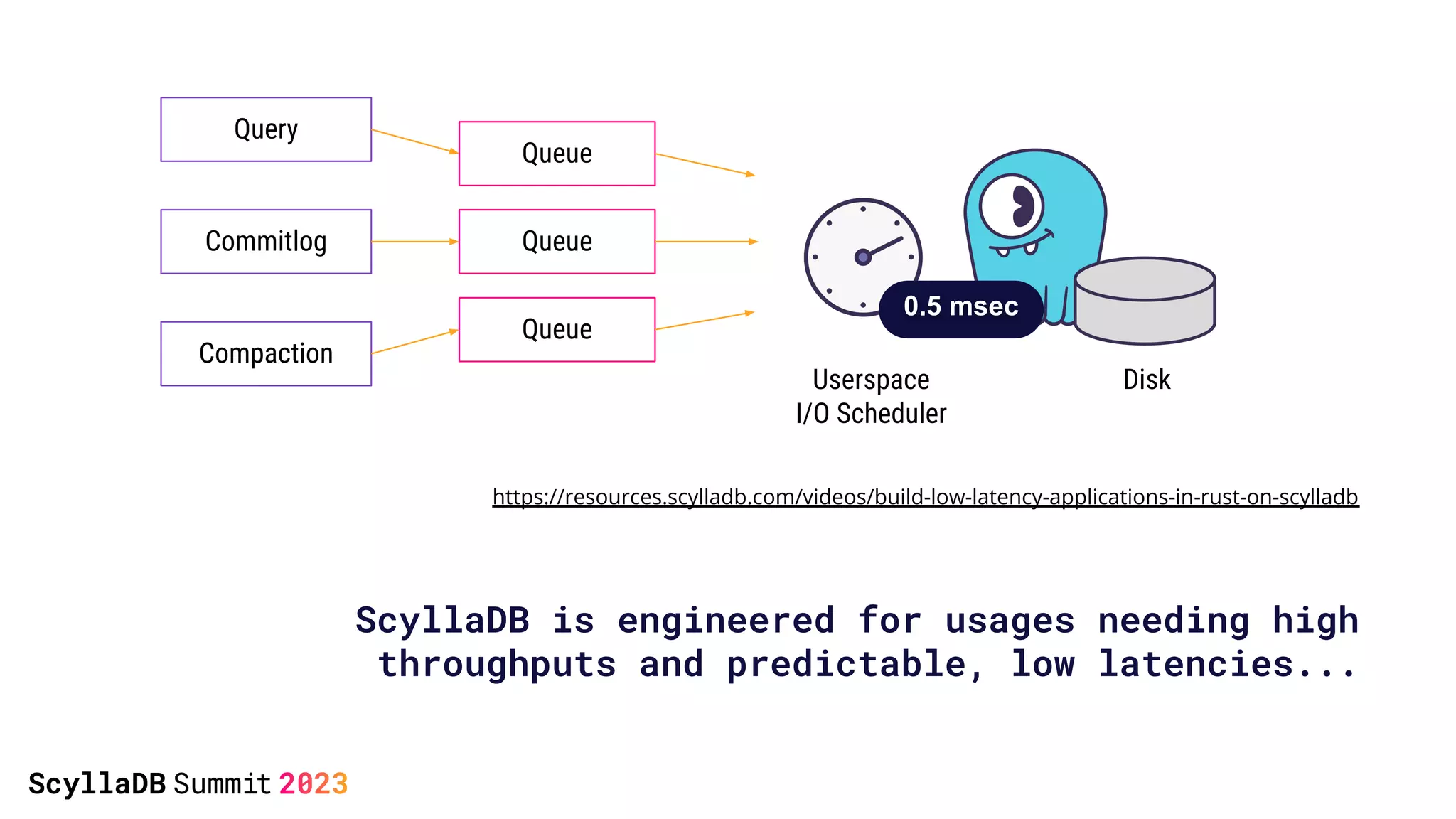

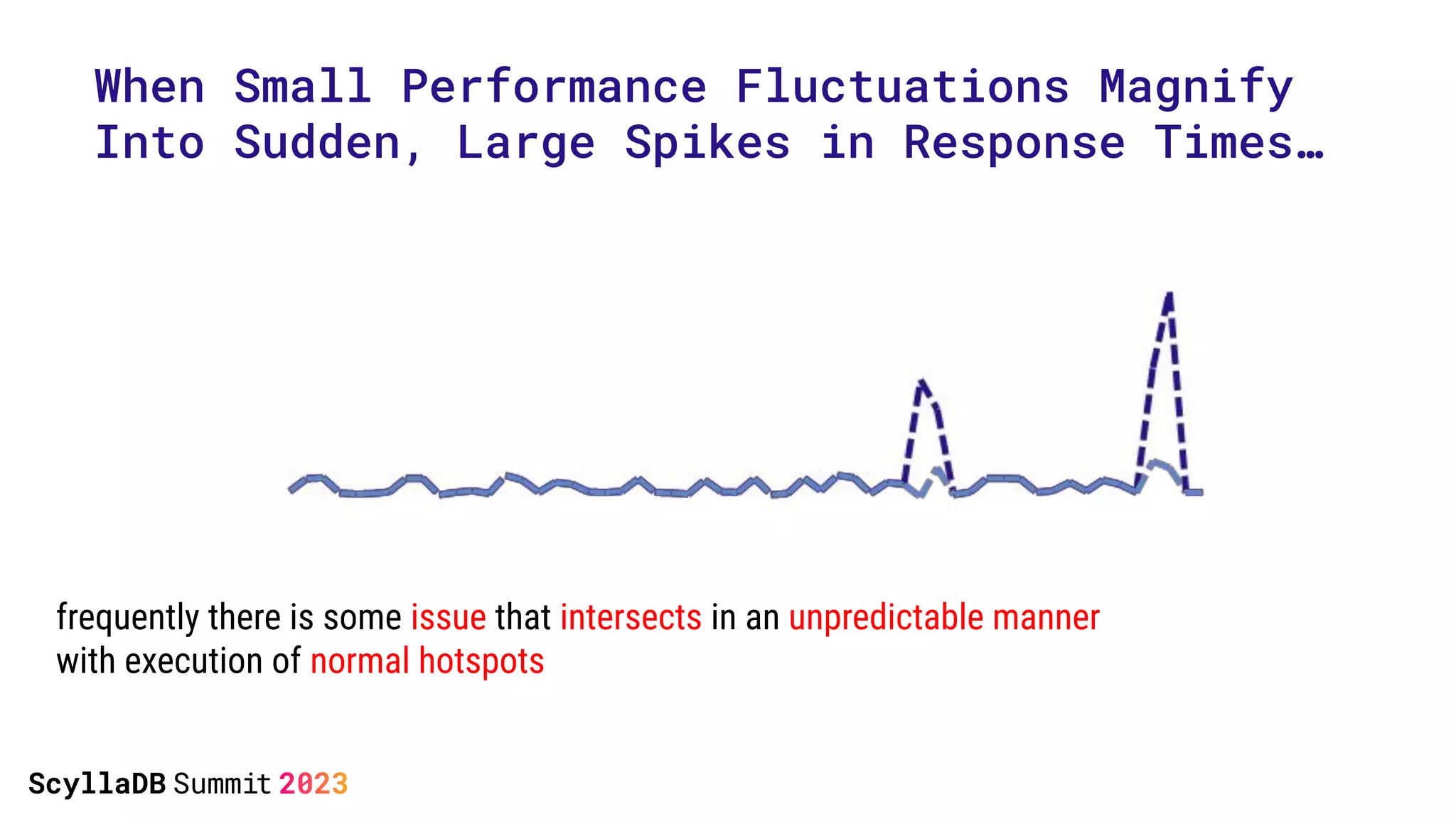

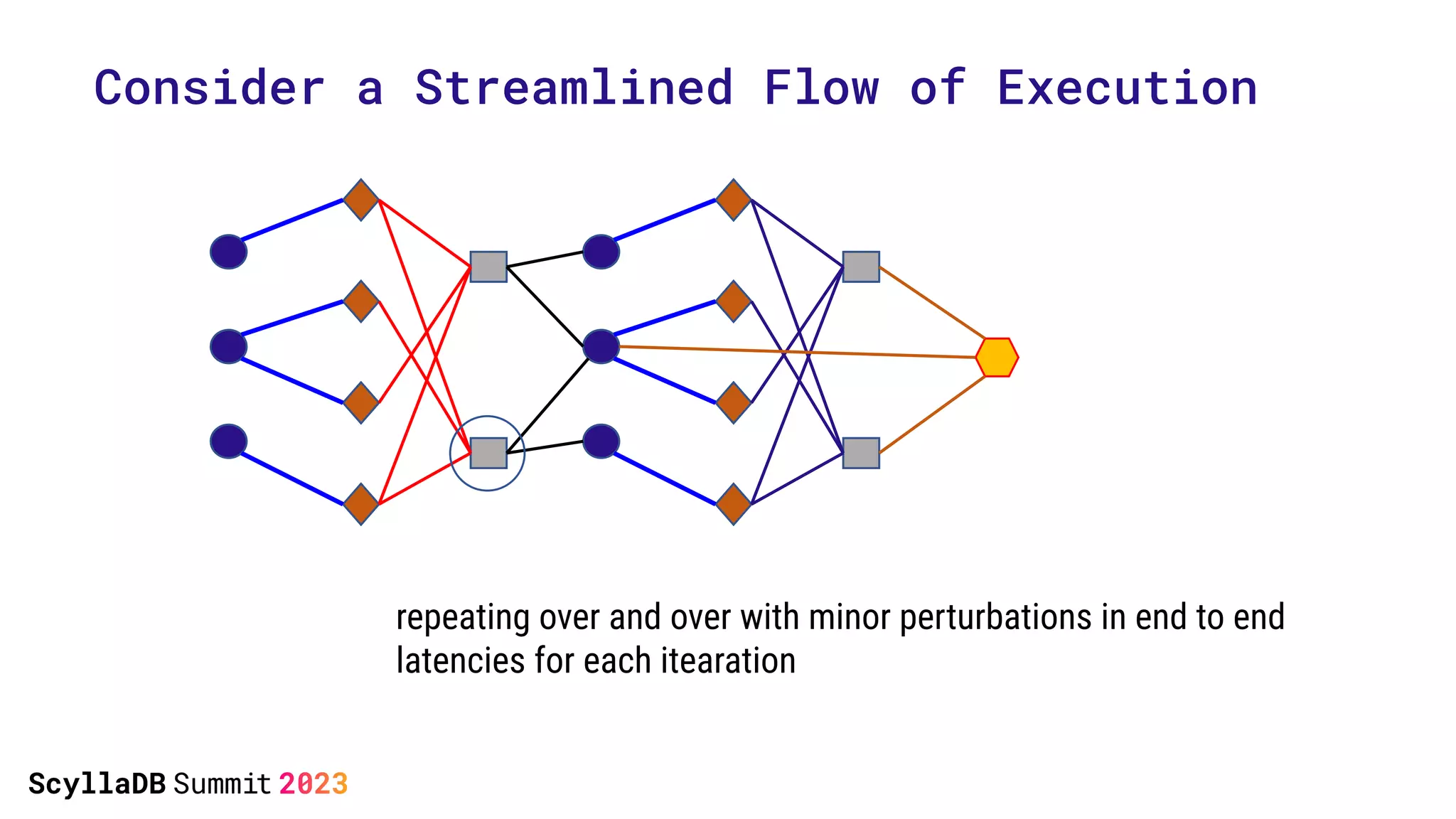

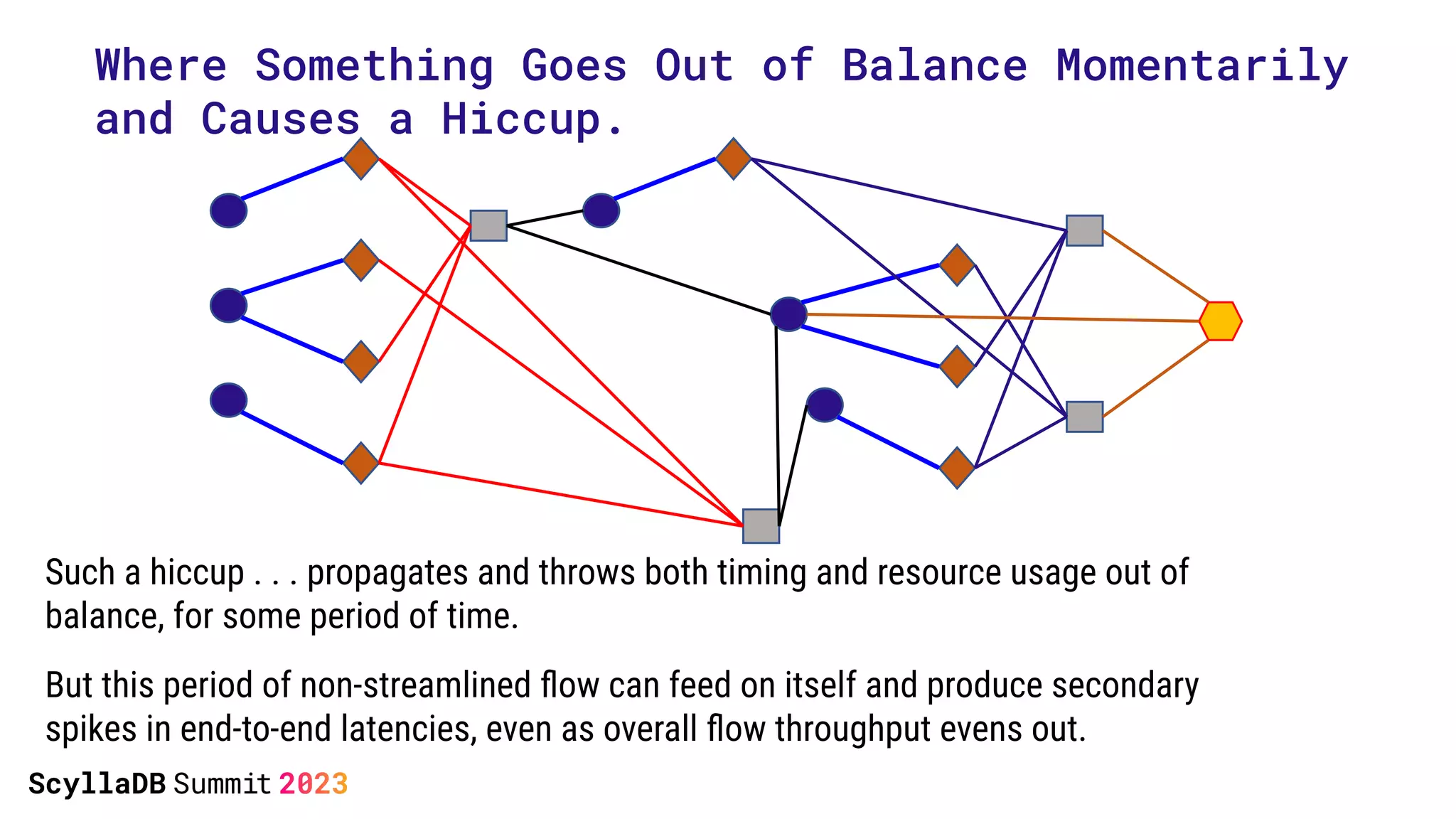

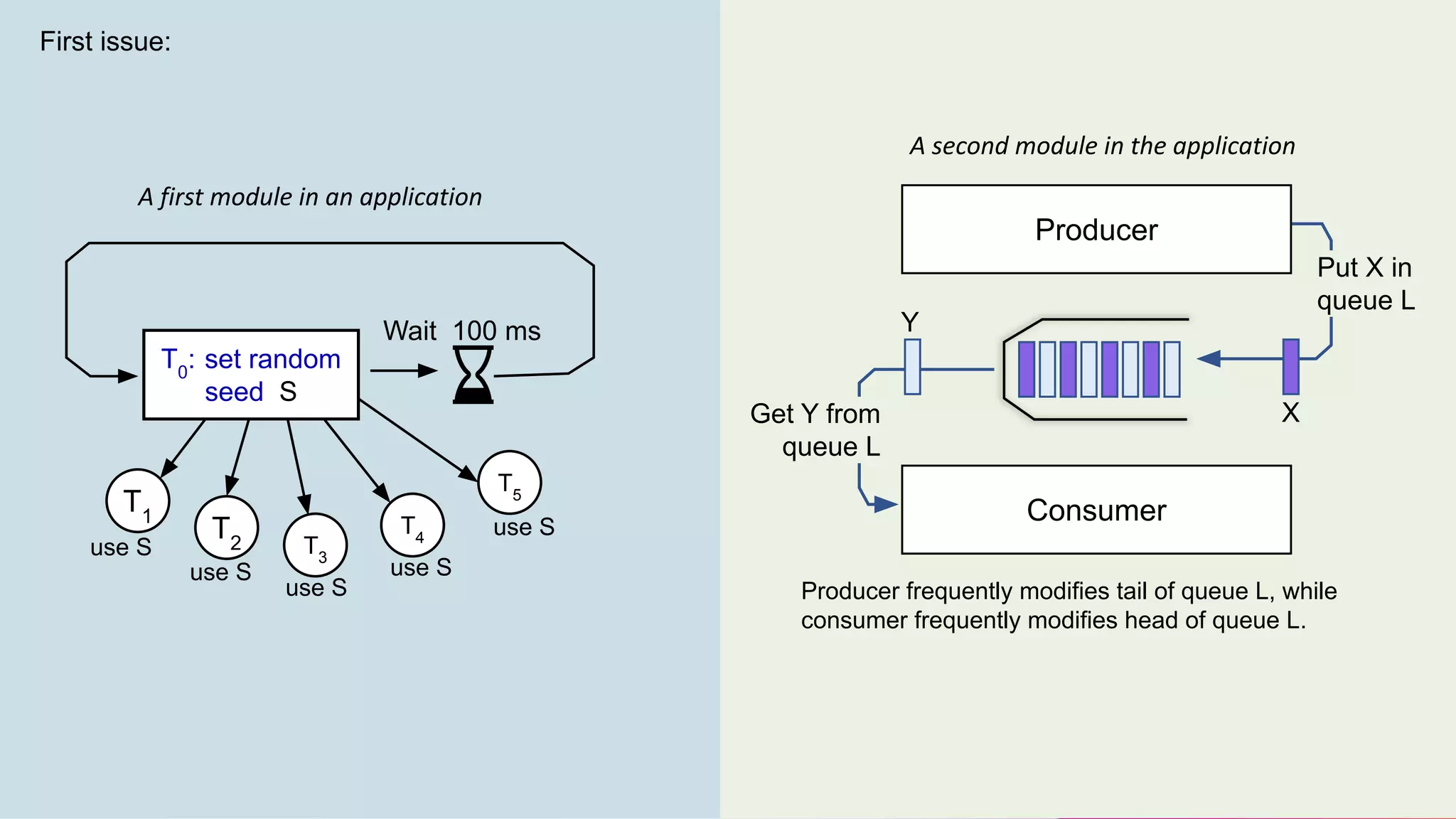

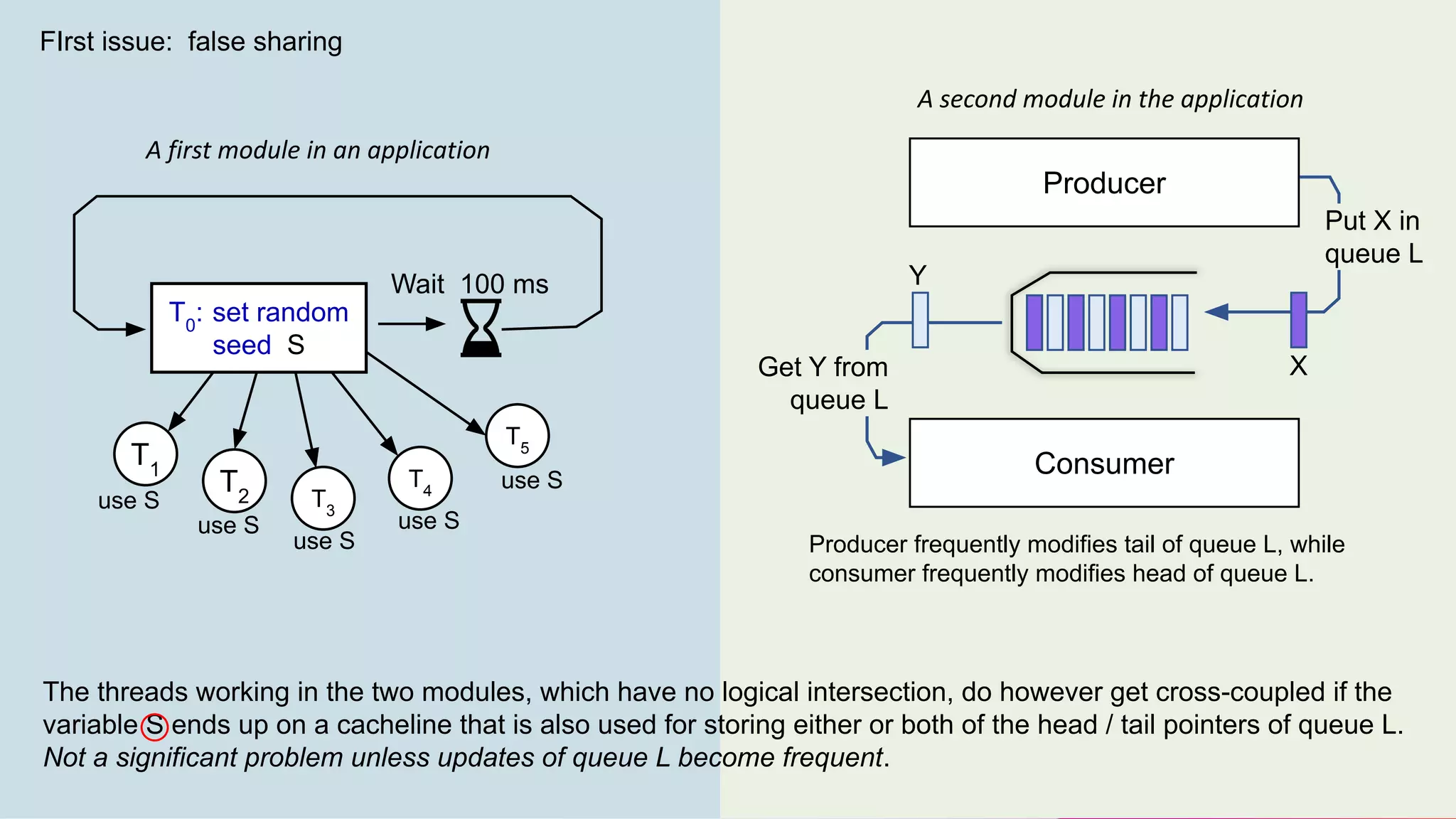

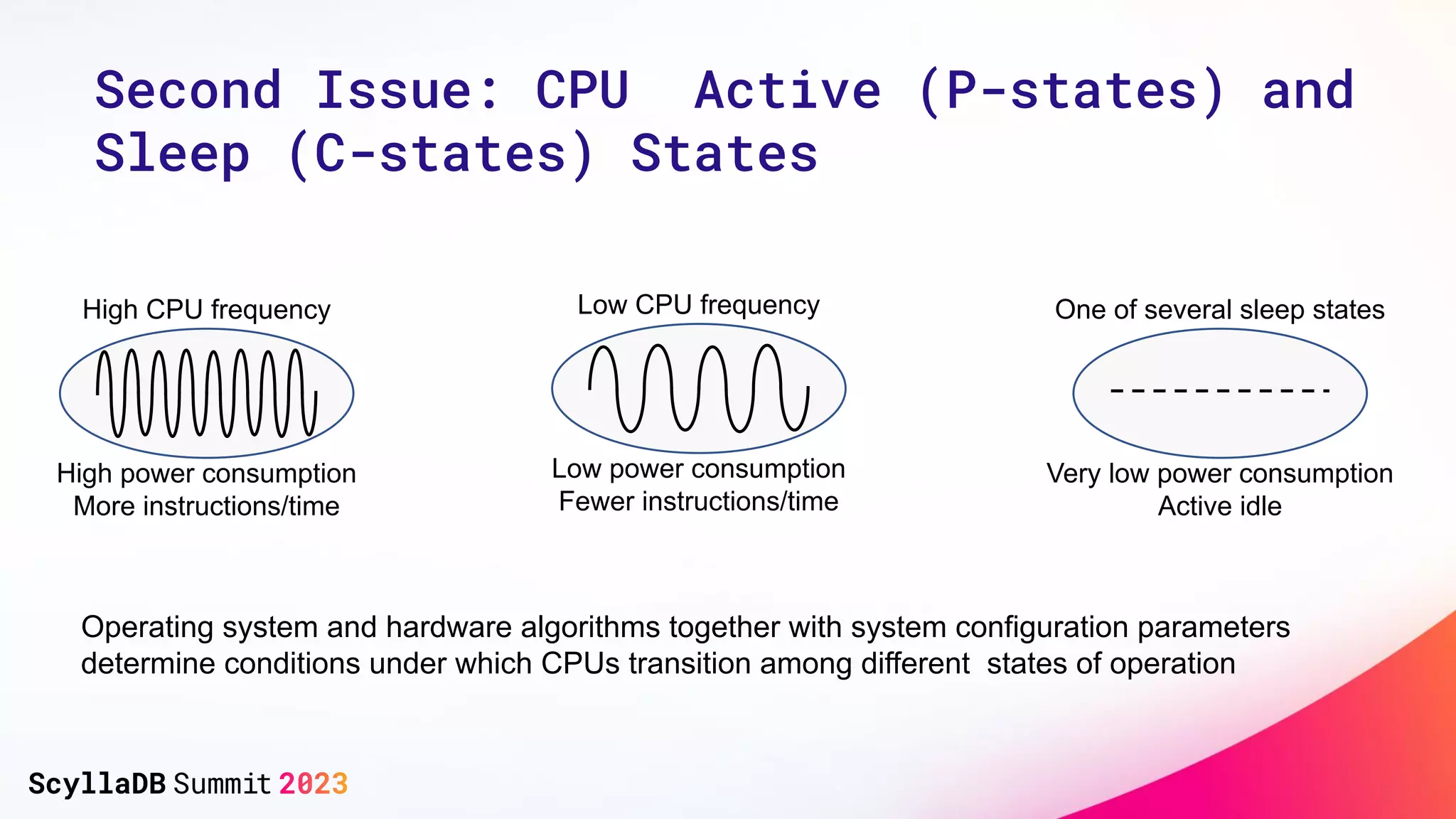

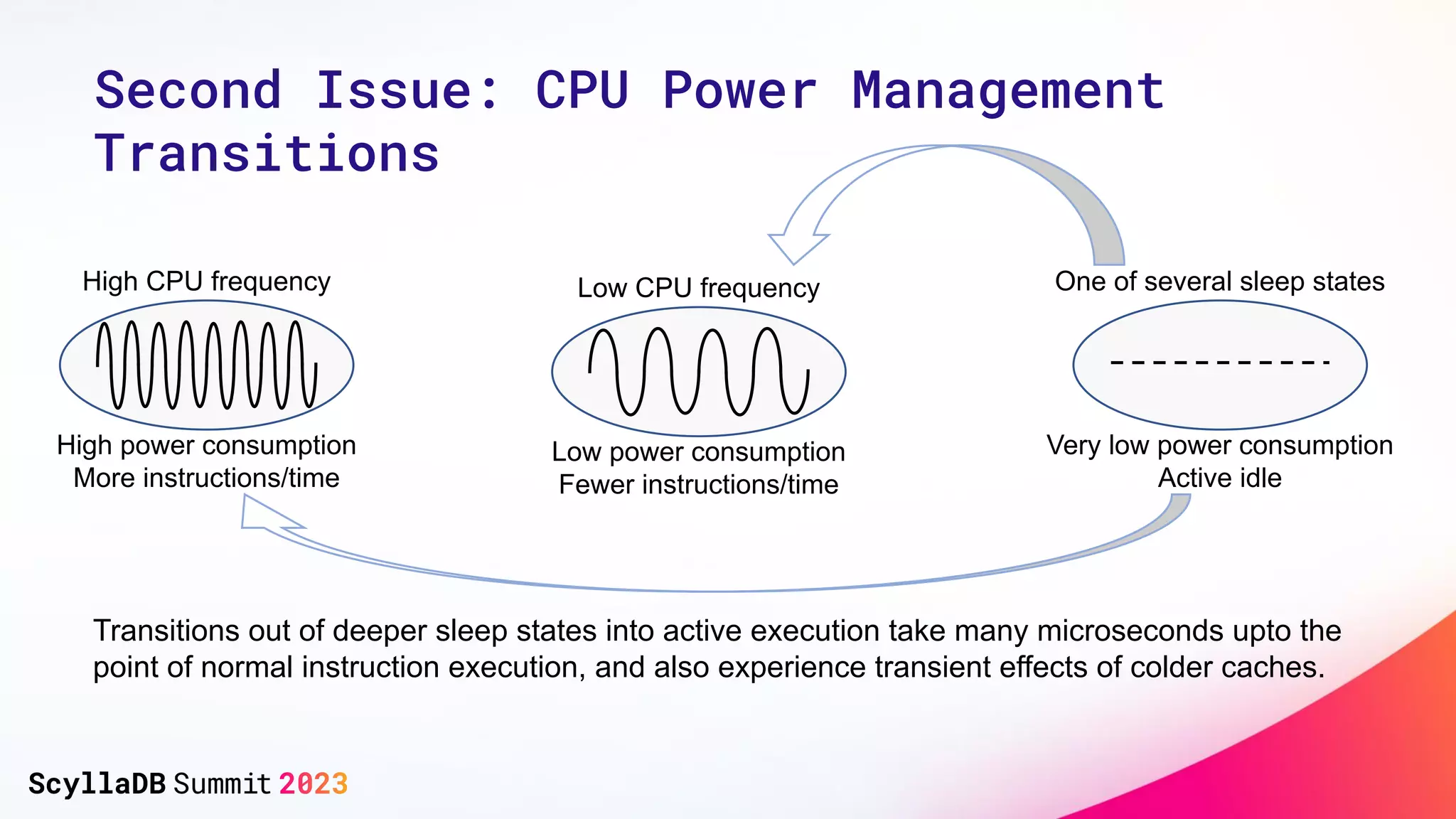

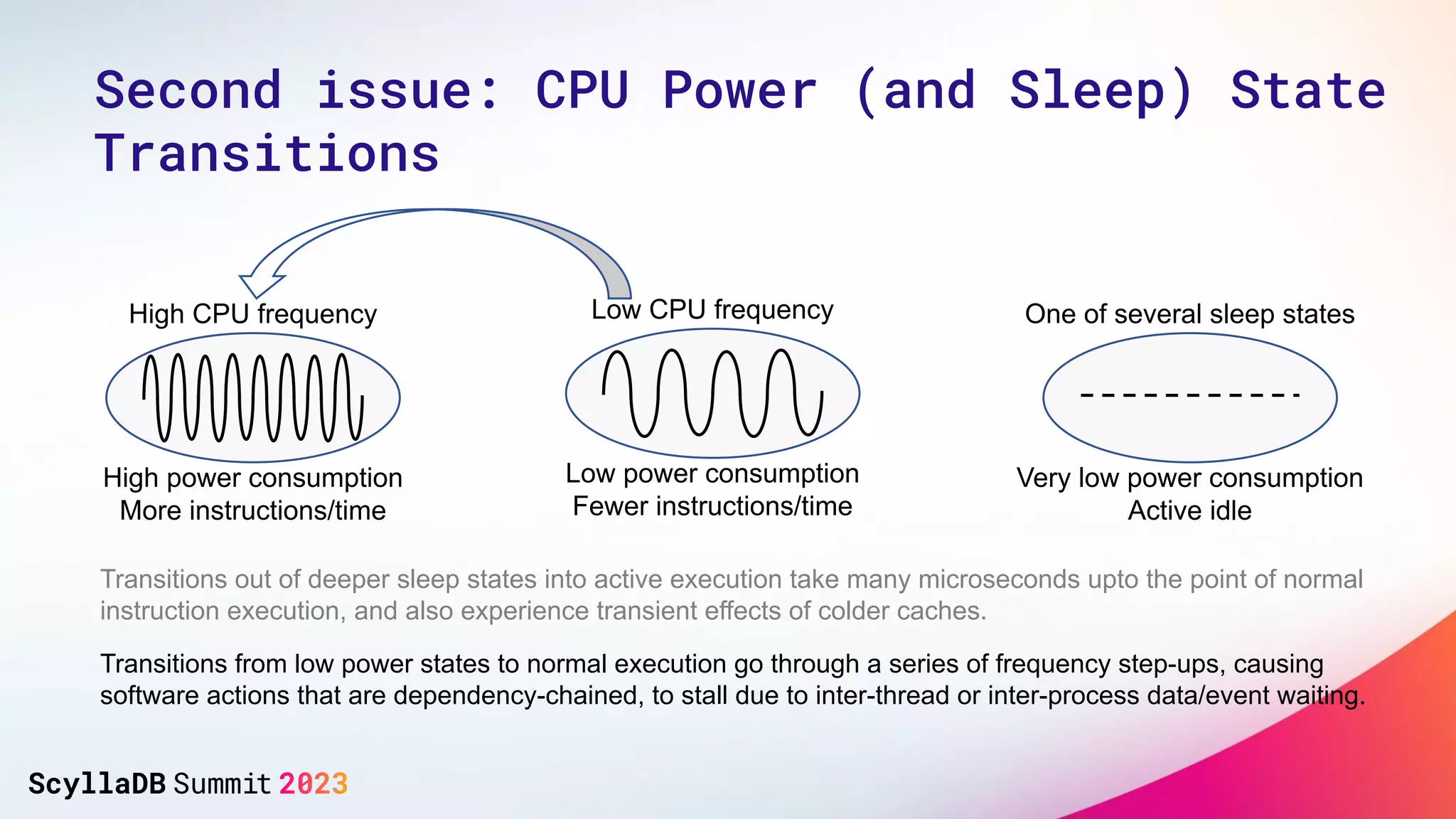

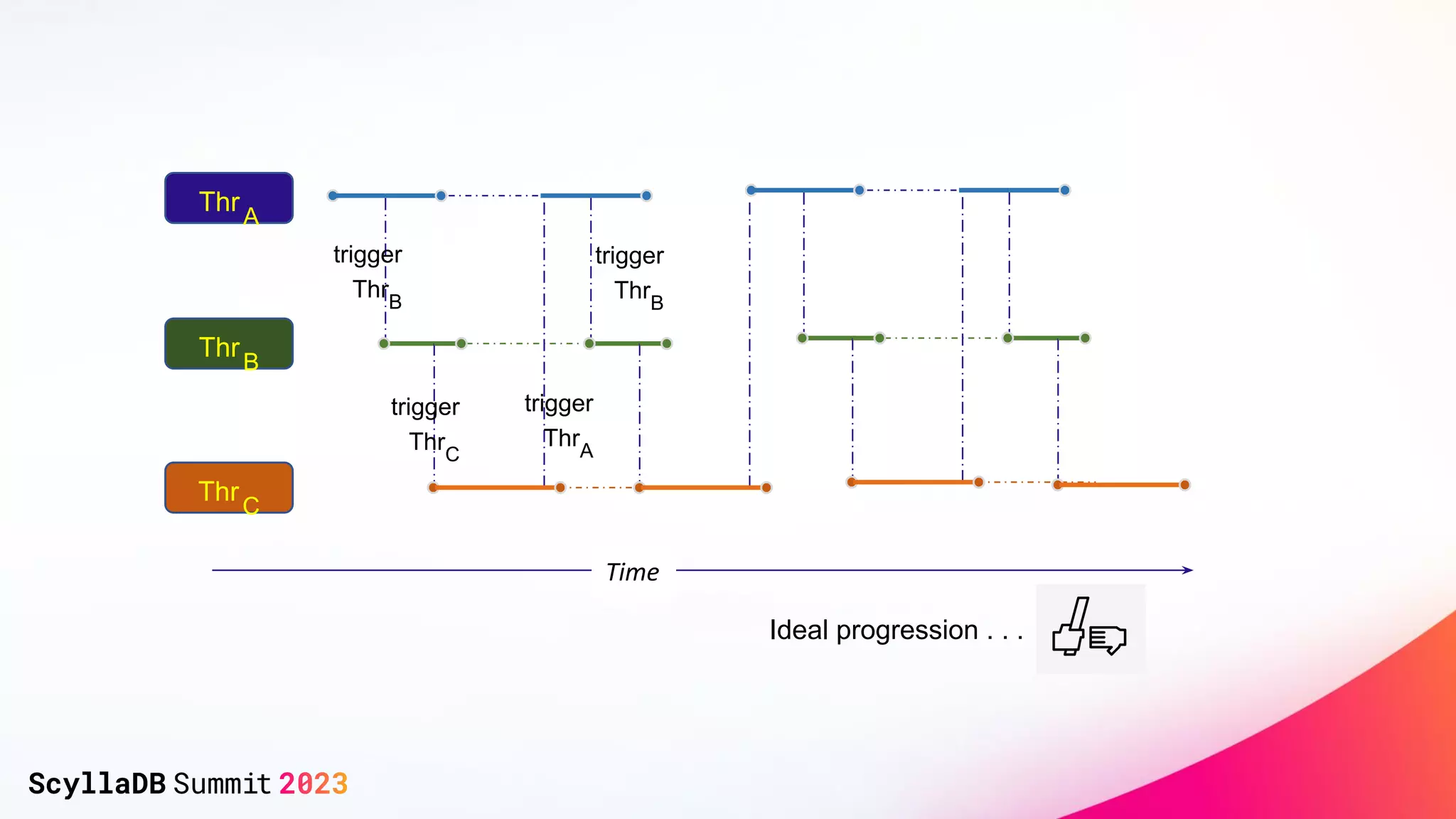

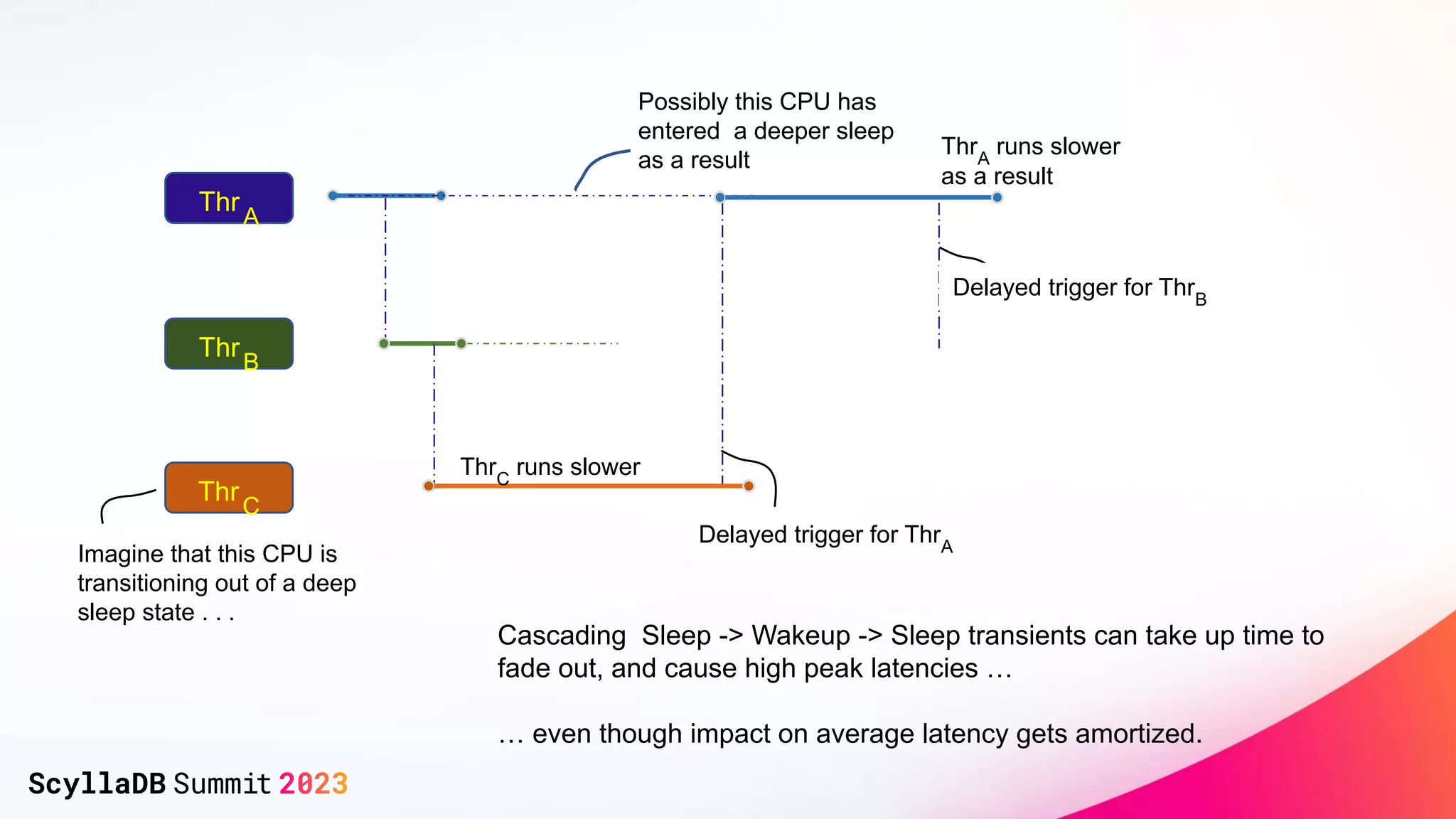

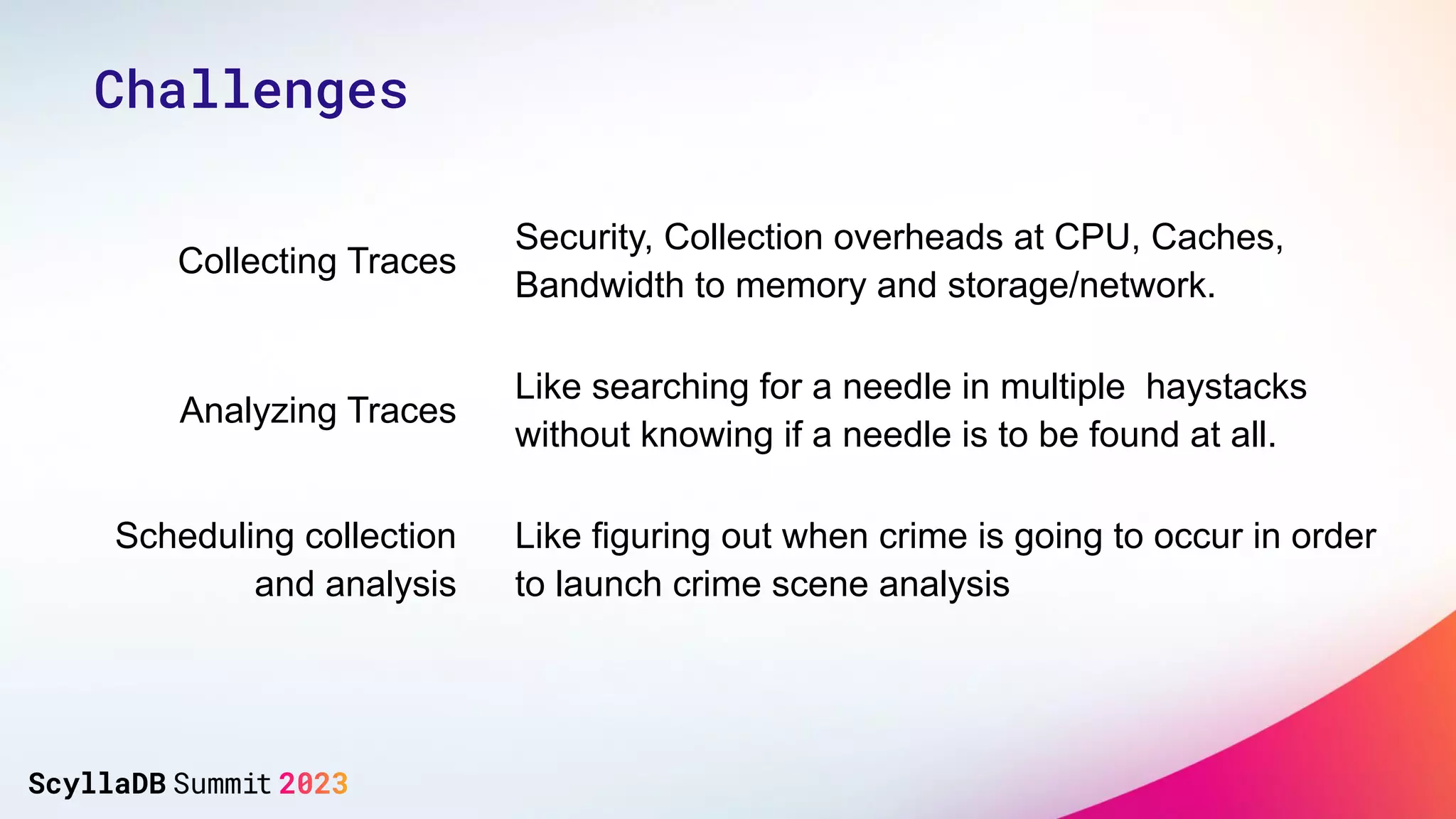

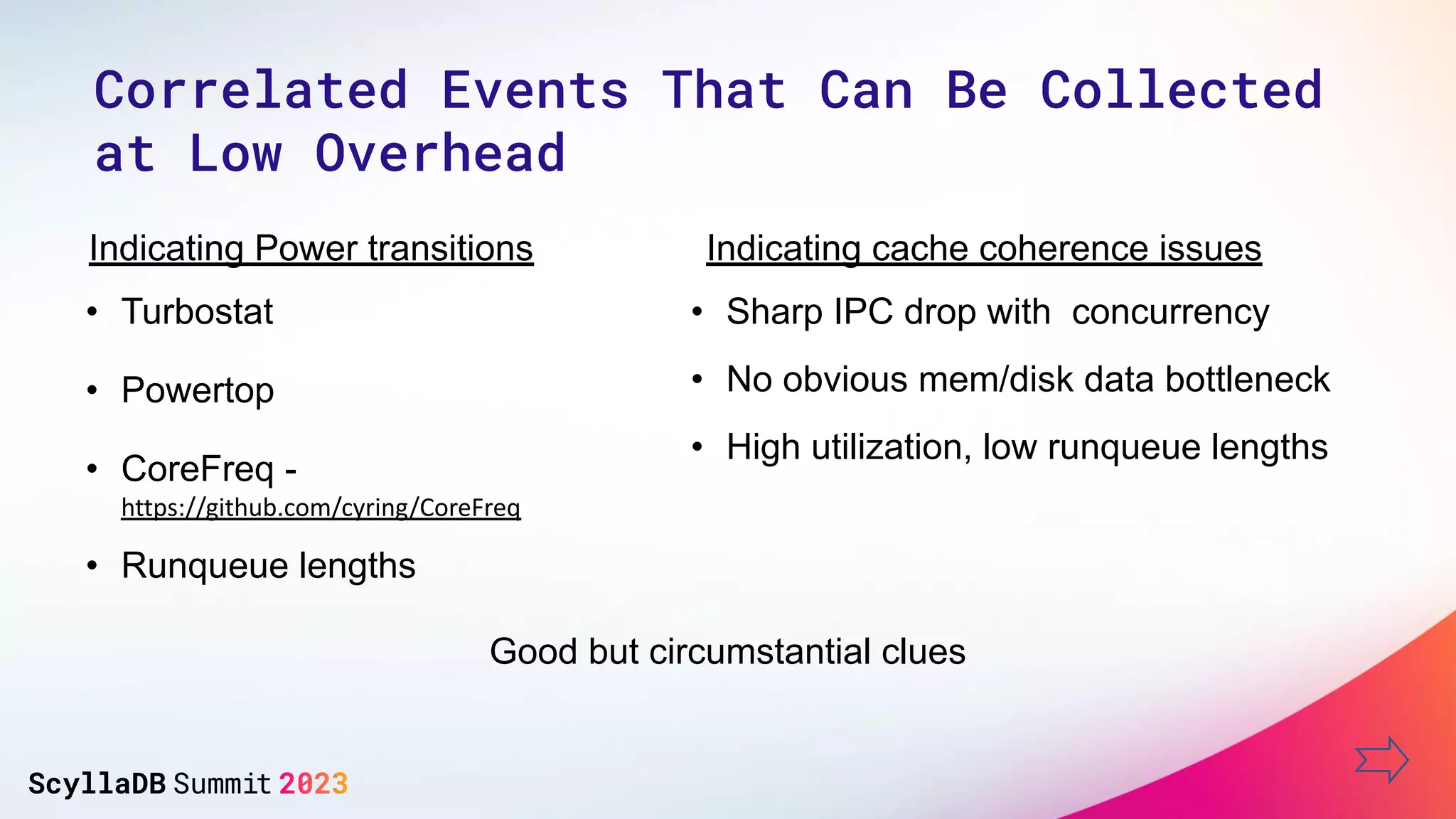

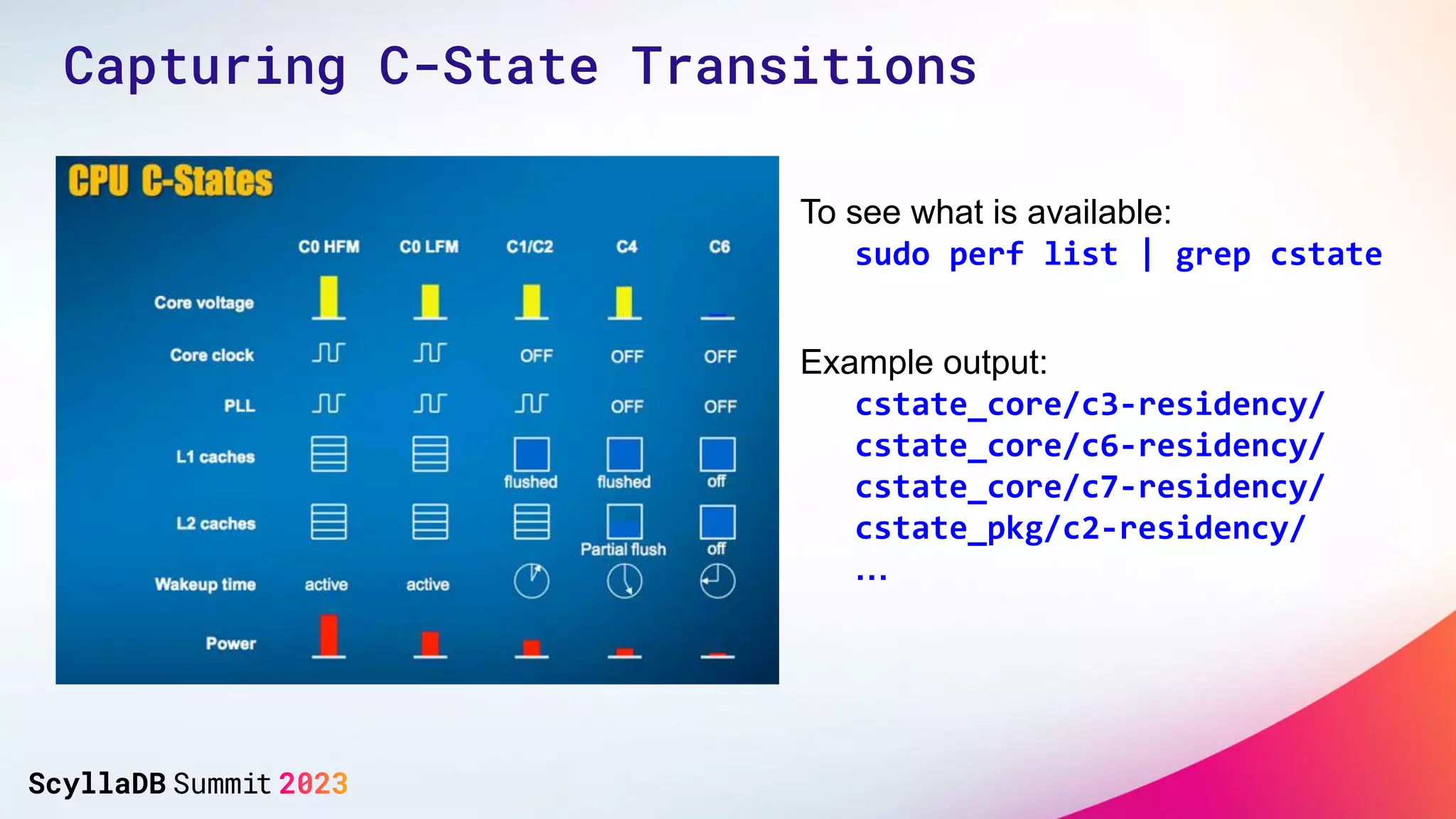

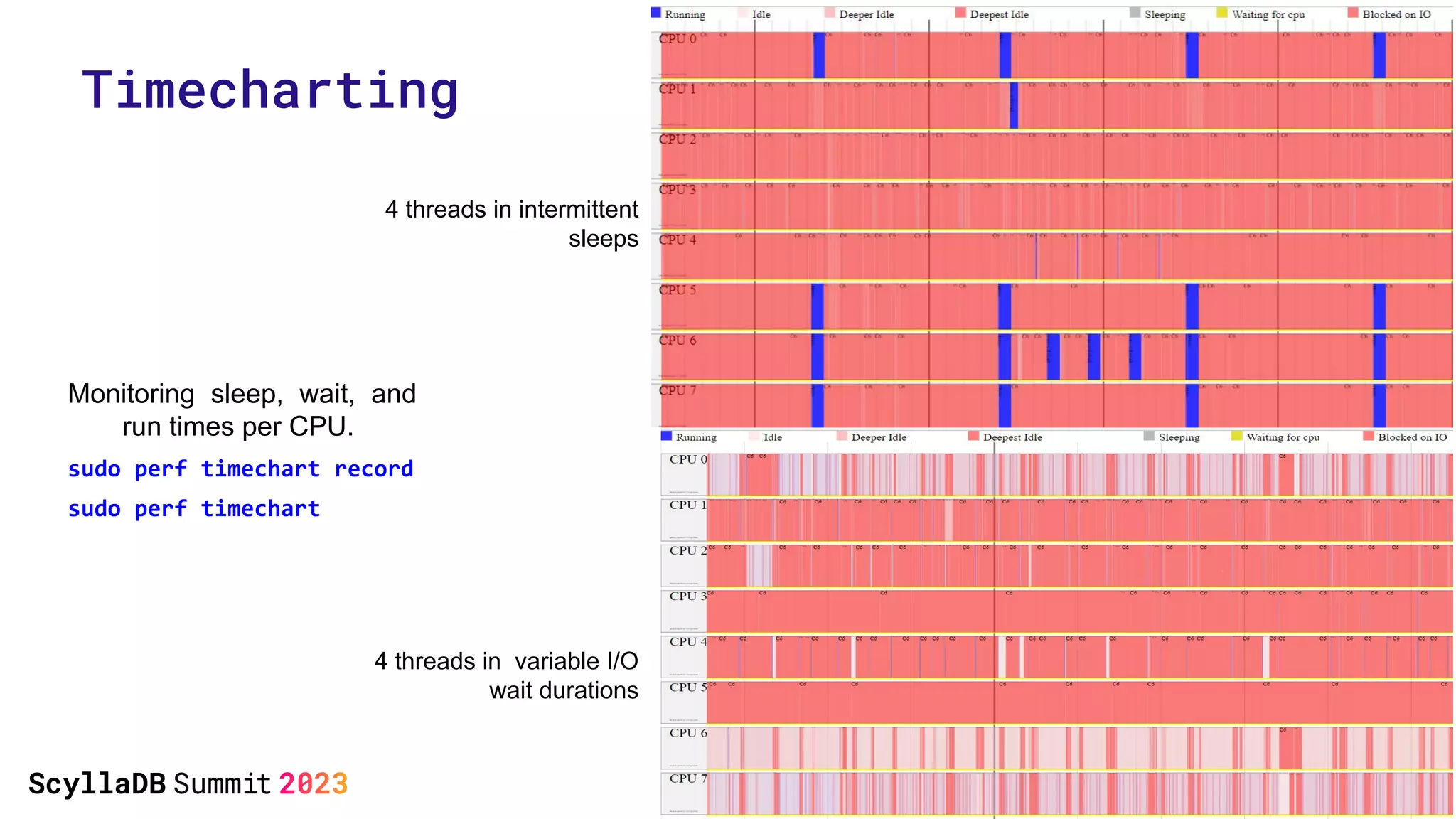

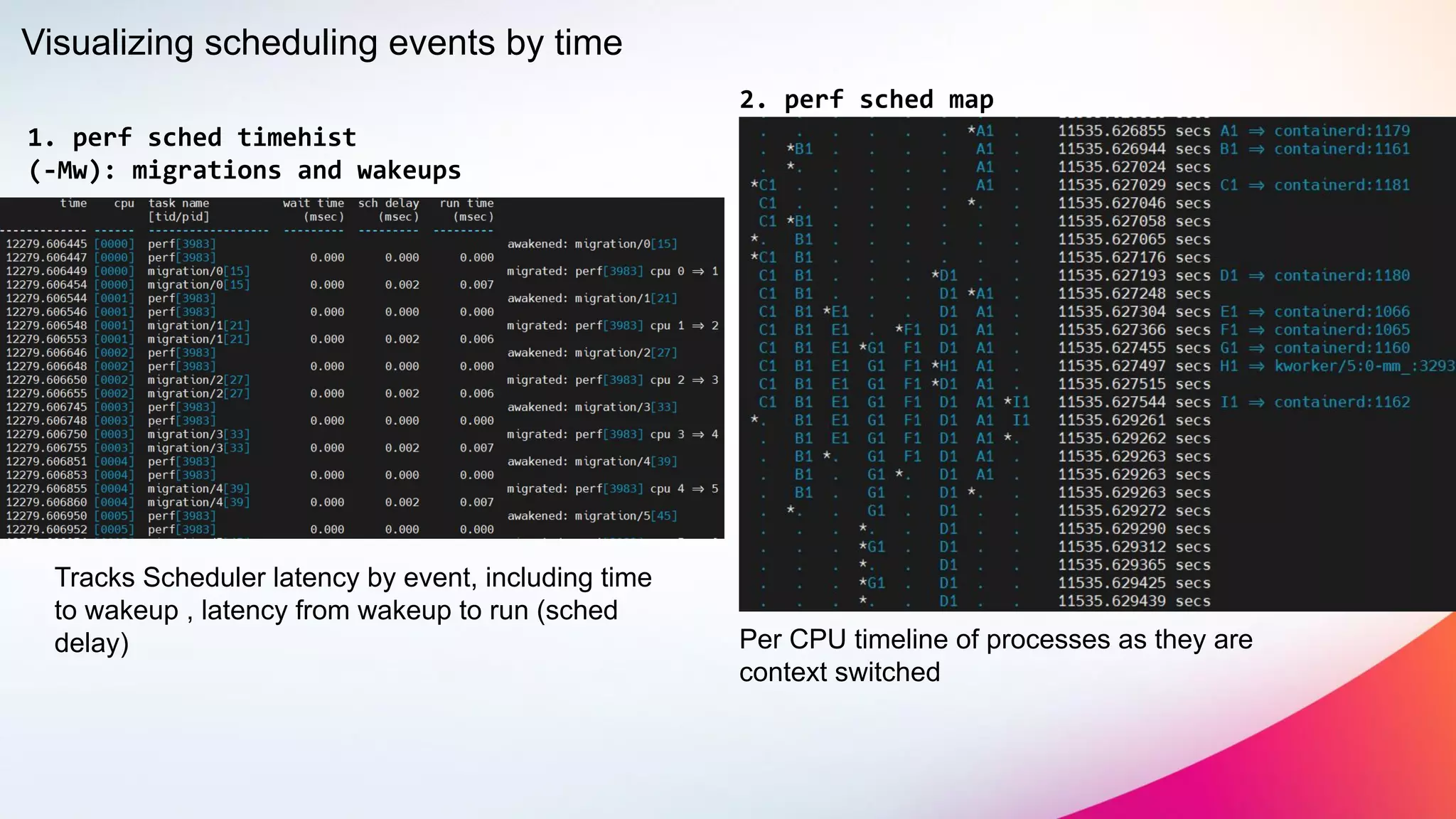

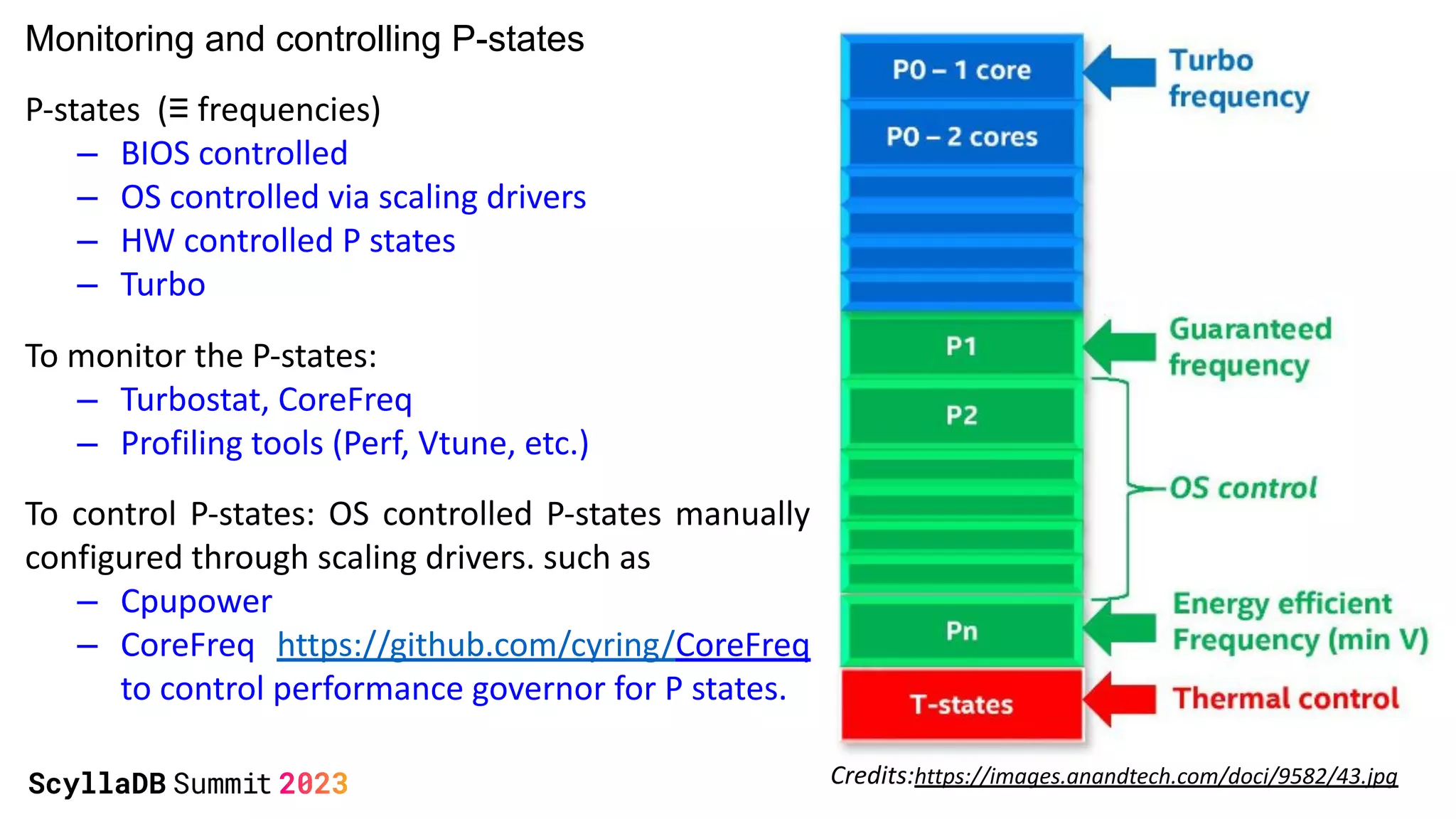

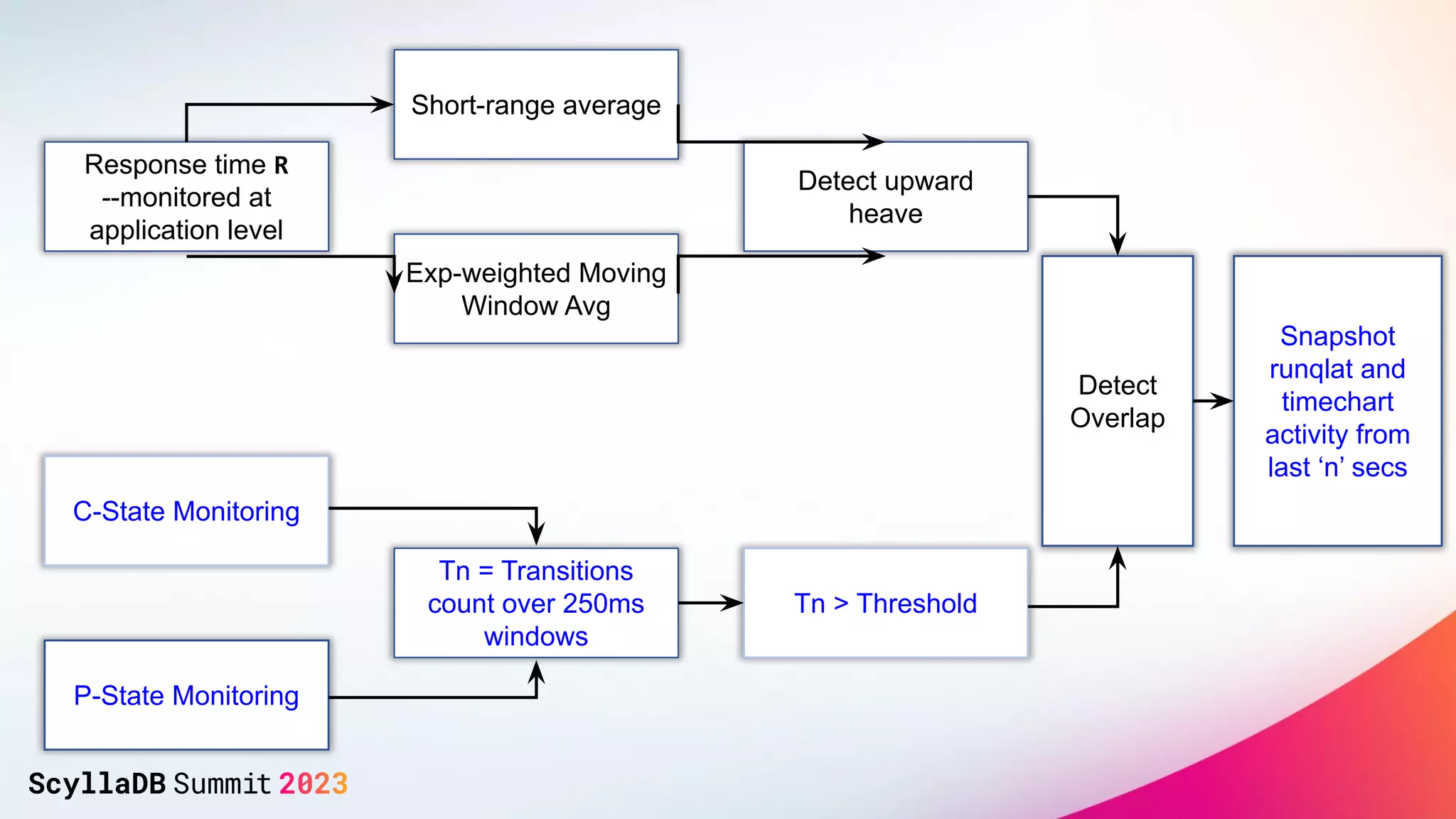

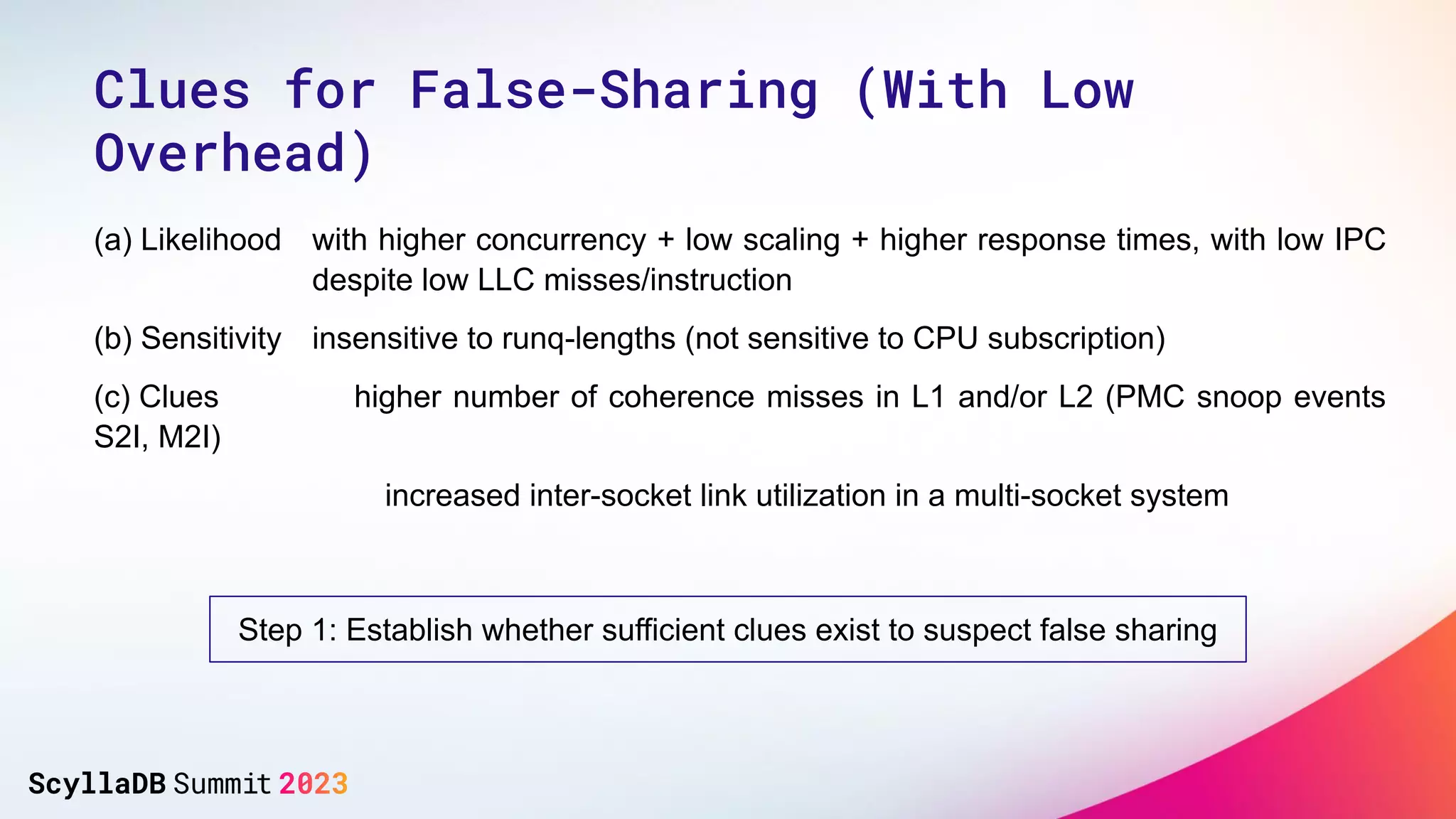

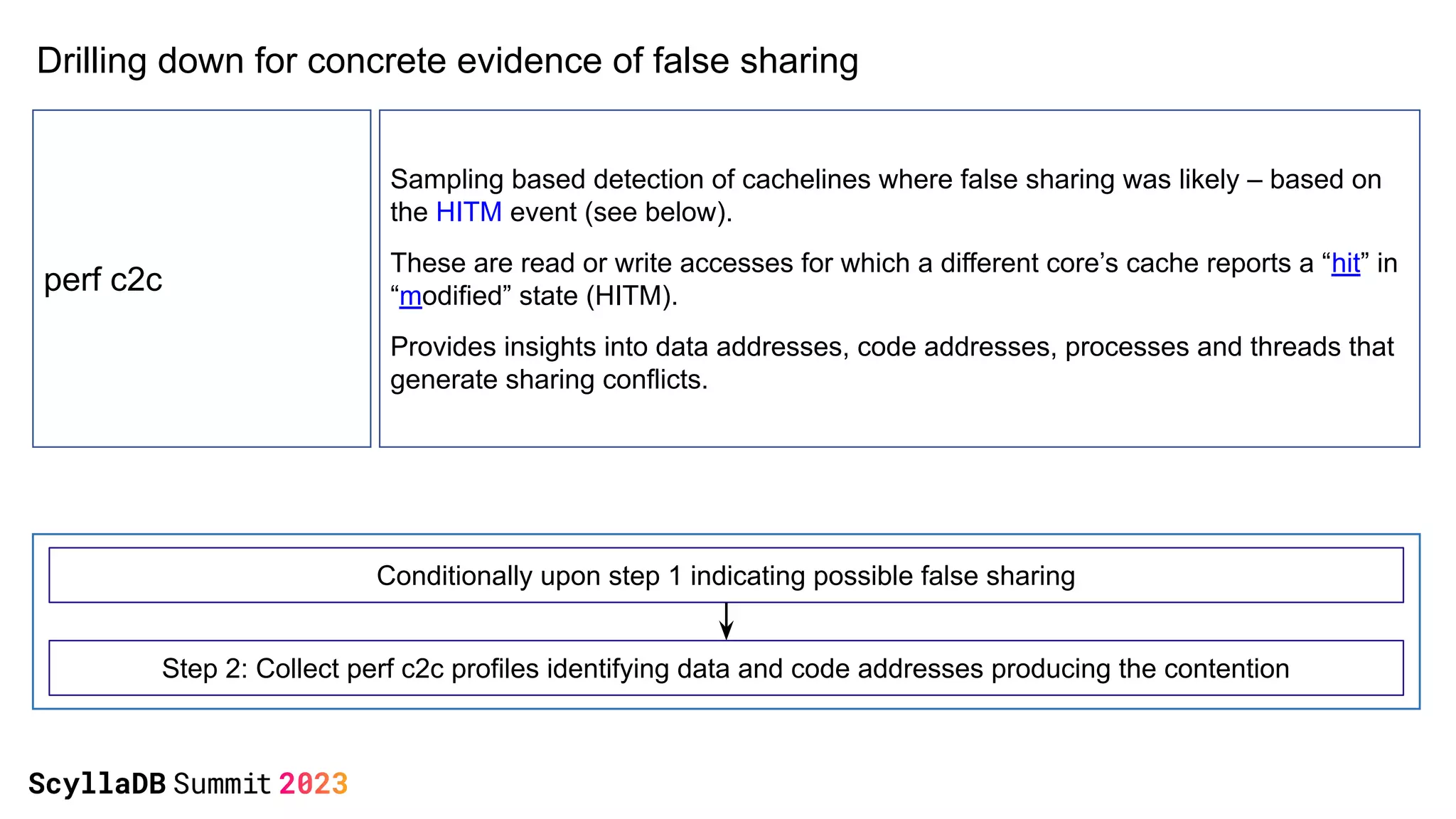

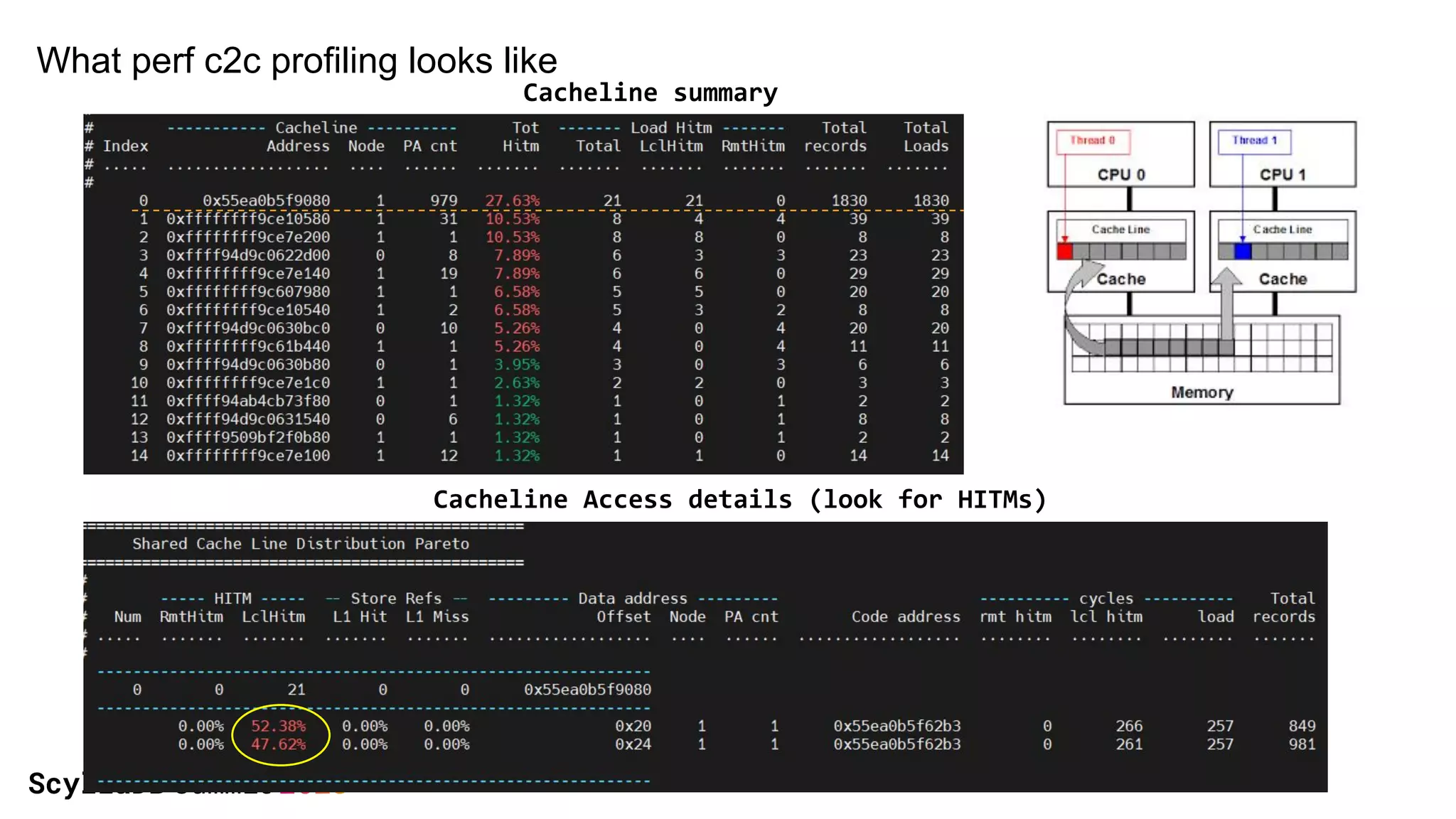

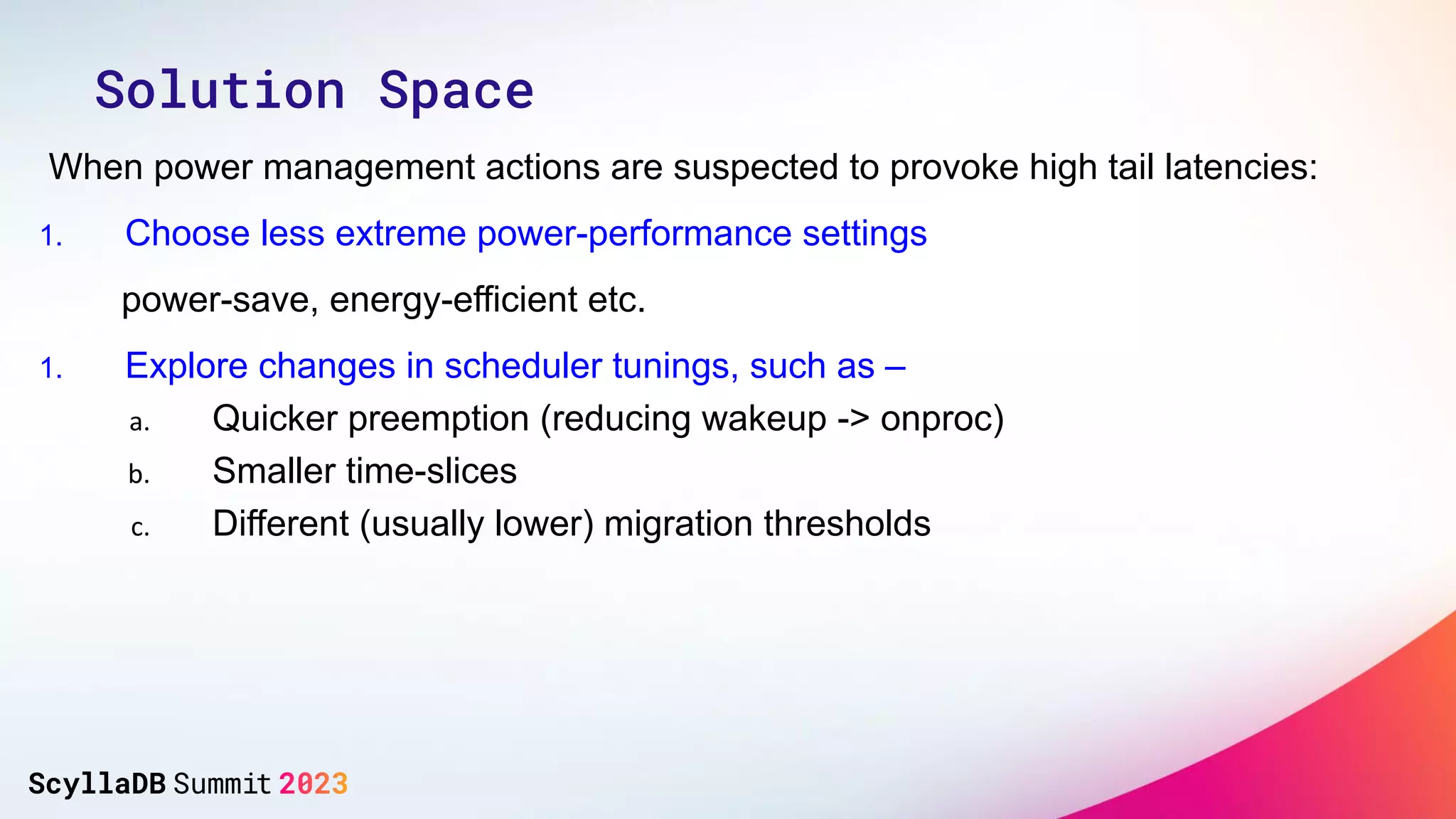

The document discusses two non-obvious sources of latency in applications: false sharing and CPU power-management transitions. Both can cause unpredictable spikes in response time. It emphasizes detecting these problems with instrumentation and profiling tools before applying mitigation strategies, and it concludes that understanding these causes is essential to improving application performance.
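
As a rough illustration of the instrumentation step mentioned above, the sketch below times repeated calls to a function and flags samples that are far slower than the median. It is a minimal example, not the method used in the slides. The helper names (`time_calls`, `summarize_latencies`, `find_spikes`) and the 10x-median threshold are assumptions chosen for illustration. It only shows that spikes exist, not whether false sharing or a power-state transition caused them.

```python
import statistics
import time


def time_calls(fn, iterations=1000):
    """Call fn repeatedly and return per-call latencies in nanoseconds."""
    samples = []
    for _ in range(iterations):
        start = time.perf_counter_ns()
        fn()
        samples.append(time.perf_counter_ns() - start)
    return samples


def summarize_latencies(samples_ns):
    """Return nearest-rank p50 and p99 plus the maximum of the samples."""
    if not samples_ns:
        raise ValueError("no samples")
    ordered = sorted(samples_ns)
    n = len(ordered)

    def percentile(p):
        # Nearest-rank method using integer arithmetic: rank = ceil(p * n / 100).
        rank = max(1, (p * n + 99) // 100)
        return ordered[rank - 1]

    return {"p50": percentile(50), "p99": percentile(99), "max": ordered[-1]}


def find_spikes(samples_ns, factor=10.0):
    """Return indices of samples slower than factor times the median.

    The factor of 10 is an arbitrary illustrative threshold (assumption).
    """
    median = statistics.median(samples_ns)
    return [i for i, s in enumerate(samples_ns) if s > factor * median]
```

A typical use would be `samples = time_calls(handle_request)`, where `handle_request` is a placeholder for the code path under test, followed by `summarize_latencies(samples)` and `find_spikes(samples)`. A large gap between `p50` and `max` suggests the tail is worth investigating with a profiler.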