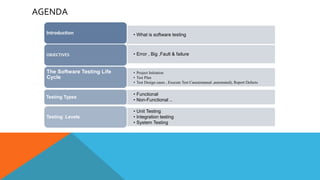

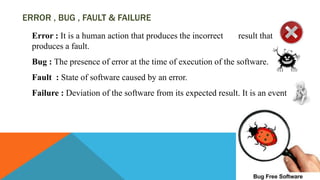

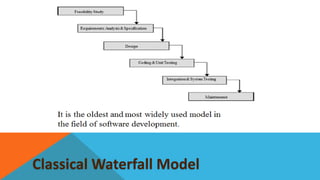

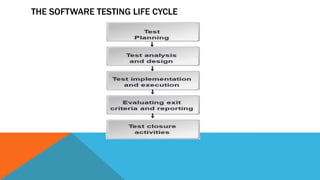

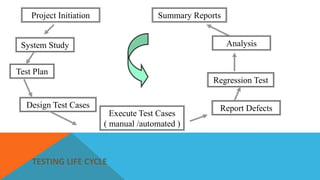

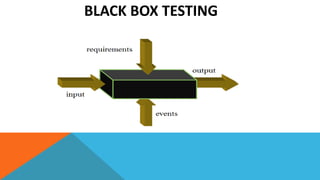

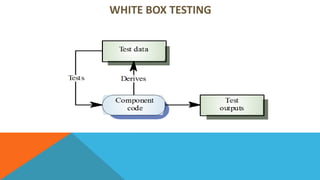

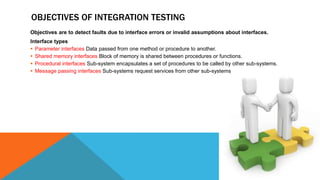

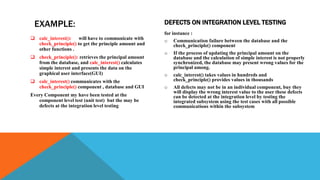

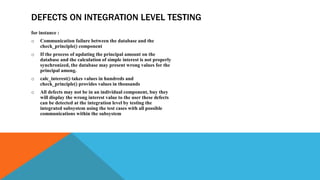

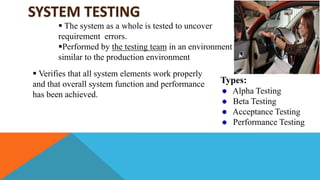

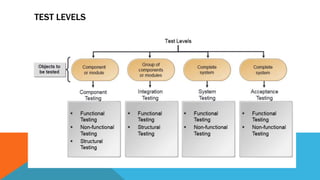

The document outlines the key aspects and processes of software testing, whose aim is to ensure the correctness, completeness, and quality of developed software. It details the software testing life cycle: project initiation, test planning, test case design, test execution (both manual and automated), defect reporting, and closure activities. It also describes testing techniques, levels, and objectives, covering both functional and non-functional testing.
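As a rough illustration of the test case design, automated execution, and defect reporting stages, the sketch below uses Python's standard `unittest` module. The function under test, `apply_discount`, and the helper `run_suite` are hypothetical names invented for this example; they do not come from the slides.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: reduce price by the given percent."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    """Designed test cases: a typical value, boundary values, invalid input."""

    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_boundary_values(self):
        self.assertEqual(apply_discount(80.0, 0), 80.0)
        self.assertEqual(apply_discount(80.0, 100), 0.0)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(80.0, 101)


def run_suite() -> unittest.TestResult:
    """Automated execution: load the test cases, run them, collect results."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTest)
    result = unittest.TestResult()
    suite.run(result)
    return result


if __name__ == "__main__":
    result = run_suite()
    # Each entry in result.failures or result.errors pairs a test with its
    # traceback, which could feed a defect report.
    print(f"run={result.testsRun} failures={len(result.failures)} "
          f"errors={len(result.errors)}")
```

Here the three test methods stand in for designed test cases, `run_suite` stands in for automated execution, and the collected failures are the raw material for defect reporting. A real project would normally rely on a test runner and a defect tracker rather than a hand-written helper.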