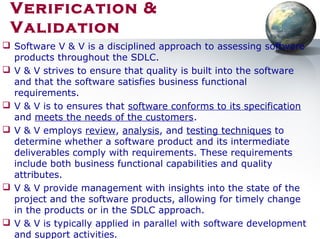

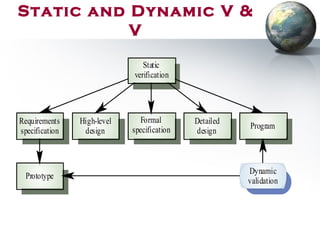

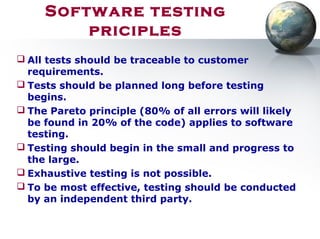

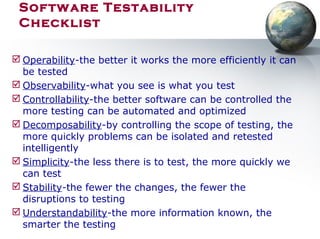

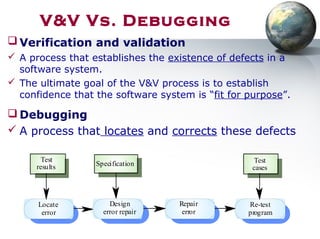

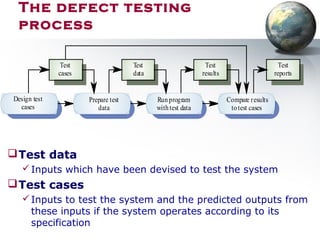

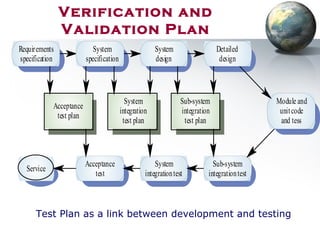

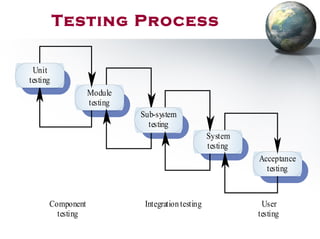

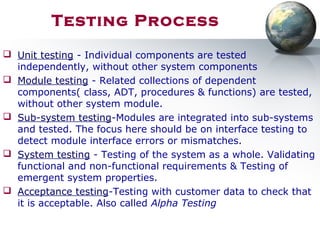

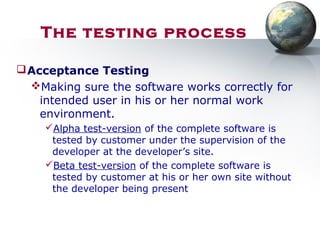

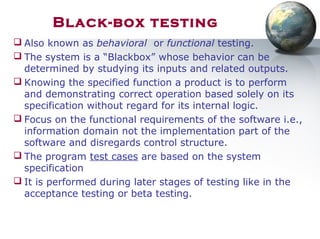

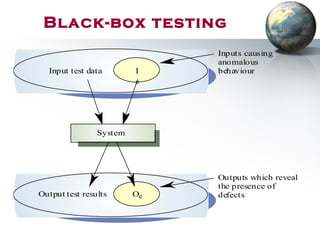

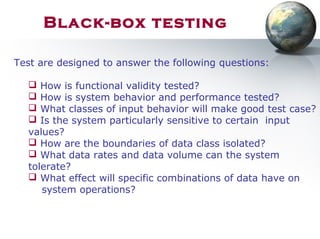

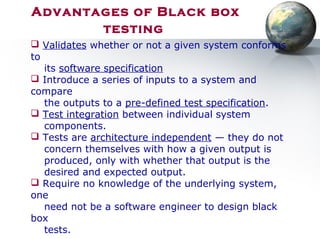

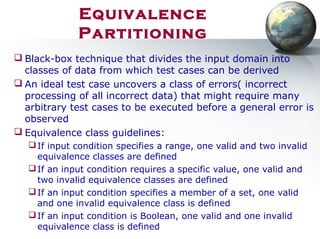

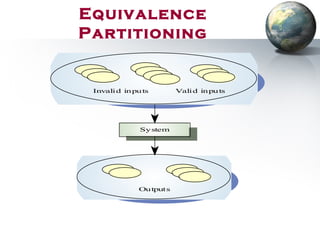

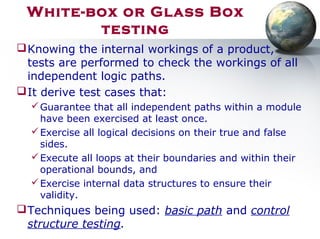

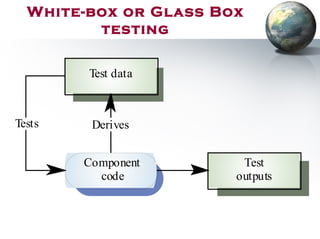

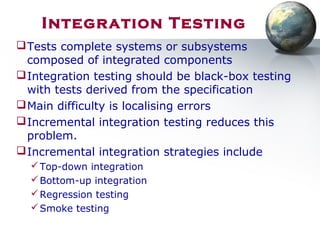

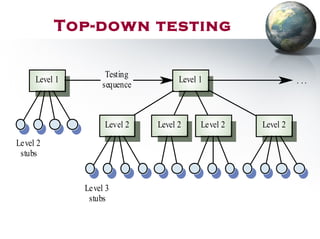

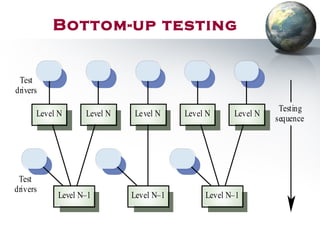

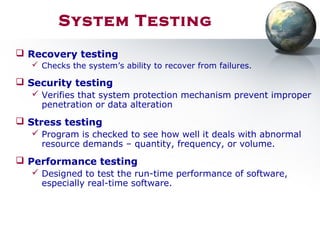

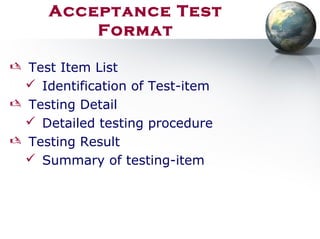

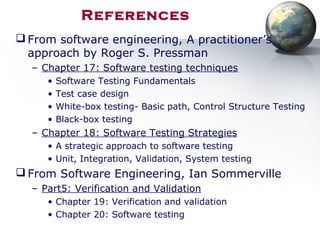

This document covers software testing and verification and validation (V&V). It begins with an overview of test plan creation and of the main types of testing: unit, integration, system, and object-oriented testing. It then defines the key differences between verification and validation. The rest of the document goes into more detail on V&V techniques, including static and dynamic verification, software inspections, and testing. It also covers testing fundamentals, principles, testability factors, and testing techniques such as black-box and white-box testing.