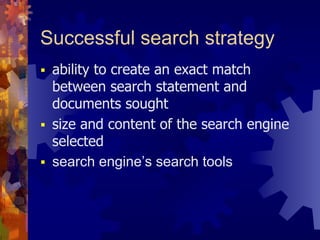

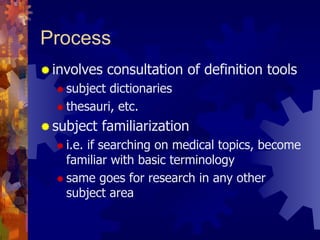

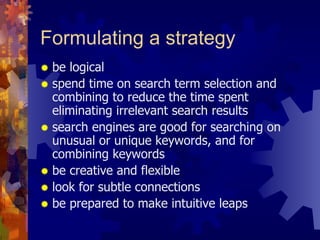

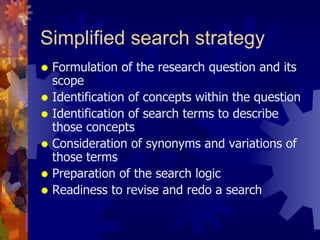

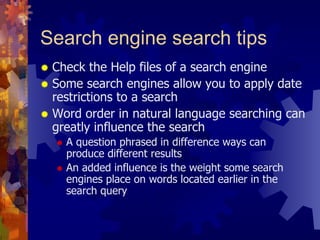

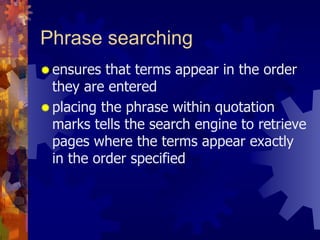

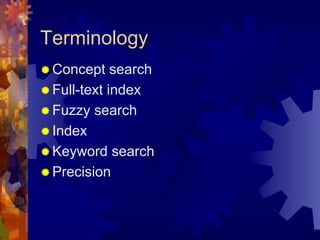

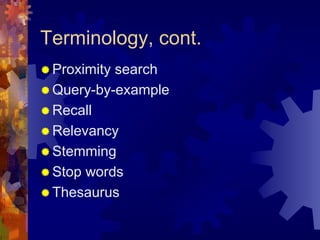

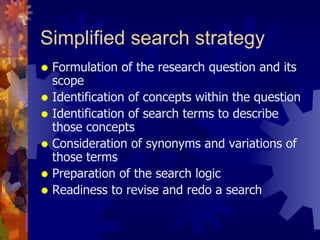

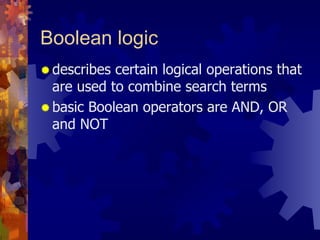

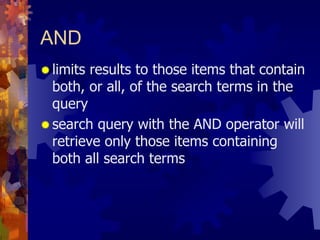

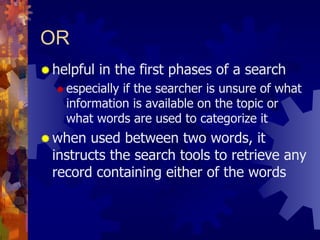

Search engines work by sending out programs called spiders or robots that crawl the web and collect pages for indexing. The engine gathers information from each page, stores it in a database (the index), and lets users find pages by searching for keywords. Results are ranked by an algorithm that weighs factors such as how many other sites link to a page. Not every web page is reachable by search engines, however: some are excluded by technical barriers, others by search engine policies. Developing an effective search strategy means balancing relevance, precision (the share of retrieved results that are relevant), and recall (the share of relevant pages that are retrieved), and using tools such as Boolean logic and phrase searching.
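To make the indexing and searching ideas above concrete, here is a toy sketch in Python. It assumes the pages have already been fetched (no real crawling happens) and that a "page" is just a string keyed by a made-up ID. It builds a positional inverted index, answers Boolean AND/OR/NOT and phrase queries, orders results by a simple in-link count (a crude stand-in for link-based ranking, not any real engine's algorithm), and computes precision and recall. All function names and the sample data are hypothetical and chosen for illustration only.

```python
import re
from collections import defaultdict

def tokenize(text):
    # Assumption: lowercase and split on anything that is not a letter or digit.
    return re.findall(r"[a-z0-9]+", text.lower())

def build_index(pages):
    """Positional inverted index: term -> {page_id: [positions of the term]}."""
    index = defaultdict(dict)
    for page_id, text in pages.items():
        for pos, term in enumerate(tokenize(text)):
            index[term].setdefault(page_id, []).append(pos)
    return index

def docs_with(index, term):
    # .get avoids creating empty entries in the defaultdict for unknown terms.
    return set(index.get(term, {}))

def boolean_and(index, *terms):
    sets = [docs_with(index, t) for t in terms]
    return set.intersection(*sets) if sets else set()

def boolean_or(index, *terms):
    return set().union(*(docs_with(index, t) for t in terms))

def boolean_not(index, all_ids, term):
    return set(all_ids) - docs_with(index, term)

def phrase_search(index, phrase):
    """Pages where the phrase's terms appear at consecutive positions."""
    terms = tokenize(phrase)
    hits = set()
    for doc in boolean_and(index, *terms):
        for start in index[terms[0]][doc]:
            if all(start + i in index[t][doc] for i, t in enumerate(terms)):
                hits.add(doc)
                break
    return hits

def rank_by_inlinks(results, links):
    """Sort results by how many other pages link to them (ties broken by ID)."""
    inlinks = defaultdict(int)
    for src, targets in links.items():
        for dst in set(targets):
            if dst != src:
                inlinks[dst] += 1
    return sorted(results, key=lambda d: (-inlinks[d], d))

def precision_recall(retrieved, relevant):
    retrieved, relevant = set(retrieved), set(relevant)
    hits = len(retrieved & relevant)
    precision = hits / len(retrieved) if retrieved else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall

# Hypothetical sample "web": three pages and the links between them.
pages = {
    "a": "Search engines use spiders to crawl the web",
    "b": "A spider crawls pages and builds an index",
    "c": "Boolean logic helps narrow a web search",
}
links = {"a": ["c"], "b": ["c"], "c": ["a"]}
index = build_index(pages)

both = boolean_and(index, "web", "search")      # {"a", "c"}
print(rank_by_inlinks(both, links))              # ['c', 'a']
print(phrase_search(index, "web search"))        # {'c'}
print(precision_recall(both, relevant={"a"}))    # (0.5, 1.0)
```

Note how the phrase query is stricter than the Boolean AND: both pages "a" and "c" contain *web* and *search*, but only "c" has them next to each other, so phrase searching trades some recall for higher precision.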