Semantic Search Component


Concept and example of a semantic solution, implemented with SQL views, that cooperates with users in querying structured data without requiring them to know the database schema or technology.
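The sketch below shows the core idea in minimal form. It assumes a hypothetical legacy table `tb_mun` with cryptic column names. A SQL view renames the physical schema into user vocabulary (`city`, `city_name`, `state`), so a query can be written without knowing the underlying table. It uses Python's built-in `sqlite3` module. The table, view, and column names are illustrative and do not come from the original deck.

```python
import sqlite3

# Hypothetical legacy schema: cryptic names a user should not need to know.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE tb_mun (cd_mun INTEGER PRIMARY KEY, nm_mun TEXT, sg_uf TEXT);
    INSERT INTO tb_mun VALUES (1, 'Belo Horizonte', 'MG'), (2, 'Contagem', 'MG');

    -- The view exposes user-level vocabulary and hides the physical schema.
    CREATE VIEW city AS
        SELECT cd_mun AS tuple_id, nm_mun AS city_name, sg_uf AS state
        FROM tb_mun;
""")


def find_city(name: str) -> list:
    """Query through the view only; callers never reference tb_mun."""
    rows = conn.execute(
        "SELECT tuple_id, city_name, state FROM city WHERE city_name = ?",
        (name,),
    )
    return rows.fetchall()


print(find_city("Belo Horizonte"))  # [(1, 'Belo Horizonte', 'MG')]
```

If the legacy table is later renamed or split, only the view definition needs to change. Queries against `city` keep working.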


Recommended

Towards the implementation of a refined data model for a Zulu machine-readabl...

© Ronell van der Merwe, Laurette Pretorius, Sonja Bosch

Some Information Retrieval Models and Our Experiments for TREC KBA

Patrice Bellot - Aix-Marseille Université / CNRS (LIS, INS2I)

Presentation of the main IR models

Presentation of our submission to TREC KBA 2014 (Entity oriented information retrieval), in partnership with Kware company (V. Bouvier, M. Benoit)

Information retrieval concept, practice and challenge

This short lecture gives a brief overview of the basic concepts of information retrieval, a practical case, and some challenges ahead.

Conceptual foundations of text mining and preprocessing steps nfaoui el_habib

These lecture slides describe the conceptual foundations of text mining and preprocessing steps.

Text mining

Text mining is an important part of data mining and is now used on a large scale. This technique finds patterns in text data collected from many online sources and draws interesting insights from the patterns observed. Because text is everywhere on the internet but is hard to obtain in a structured format, text mining plays a major role. It uses natural language processing (NLP) techniques to automate the process, and the concept is also used in machine learning.

Role of Text Mining in Search Engine

Please email me at jaimodi891@yahoo.com if you like the content of this document.

Text mining

A college-level presentation covering the following topics:

- Introduction
- Comparison of text mining with other mining
- Text mining process
- How the algorithm is derived for text mining
- Text analysis for Google Sheets
- Conclusion

Introduction to Text Mining and Semantics

Introduction to Text Mining and Semantics, presented by Seth Grimes at Nstein seminars in London, June 2009, and New York, September 2009.

2011linked science4mccuskermcguinnessfinal

Linked Science 2011 talk on the importance of modeling sources and their usage, such as PML's source usage, and how it can be generalized using FRBR

Big Data & Text Mining

Big Data & Text Mining: Finding Nuggets in Mountains of Textual Data

A large amount of information is available in textual form in databases or online sources, and for many enterprise functions (marketing, maintenance, finance, etc.) it represents a huge opportunity to improve business knowledge. For example, text mining is starting to be used in marketing, more specifically in analytical customer relationship management, to achieve the much-sought 360° view of the customer (integrating elements from inbound mails, web comments, surveys, internal notes, etc.).

Facing this new domain, I did some personal research and wrote a synthesis, which helped me clarify some ideas. The presentation below does not aim to be exhaustive, but it may bring you some useful insights.

Information_Retrieval_Models_Nfaoui_El_Habib

These lecture slides describe the information retrieval models.

Tutorial 1 (information retrieval basics)

Part of the Search Engine course given at the Technion (2011)

Keystone Summer School 2015: Mauro Dragoni, Ontologies For Information Retrieval

The presentation provides an overview of what an ontology is and how it can be used to represent information and retrieve data, with a particular focus on the linguistic resources available to support this kind of task. It also gives an overview of semantic-based retrieval approaches, highlighting the pros and cons of semantic approaches compared with classic ones. Use cases are presented and discussed.

SYNONYMS, ANTONYMS, POLYSEMY, HOMONYM, AND HOMOGRAPH

Definitions and examples of synonyms, antonyms, polysemy, homonyms, and homographs.

Viewers also liked

Seven types of ambiguity - the role of communications in the modern media soc...

Keynote address given at the conference "Shaping the digital future", TH Wildau, Berlin, 27 May 2010. The role of communications in the modern media world is analyzed, and different types of ambiguity are highlighted. The text of the keynote can also be read in the document section.

Ad polysemy

Presentation of the text "Meaning Matters: Polysemy in Advertising" by Stefano Puntoni, Jonathan E. Schroeder, and Mark Ritson

Idioms Interface between semantics & pragmatics - letras usp Elizabeth Harkot

Roberto Candido Francisco

Similar to Semantic Search Component

Semantic Web & Information Brokering: Opportunities, Commercialization and Ch...

Amit Sheth, "Semantic Web & Info. Brokering Opportunities, Commercialization and Challenges," Keynote talk at the workshop on Semantic Web: Models, Architecture and Management, September 21, 2000, Lisbon, Portugal.

This was the keynote given at what was probably the first international event with "Semantic Web" in its title (and before the well-known SciAm article). As in TBL's use of Semantic Web in his 1999 book, (semantic) metadata plays a central role. The use of Worldmodel/Ontology is consistent with our use of ontology for (Web) information integration in a 1994 CIKM paper. A summary of the talk by the event organizers and other details are at: http://knoesis.org/library/resource.php?id=735

Prof. Sheth started a Semantic Web company, Taalee, Inc., in 1999. Its product was called the MediaAnywhere A/V search engine, and this paper discusses it in the context of its use by a customer, Redband Broadcasting. The product included Semantic Web/populated-ontology-based semantic (faceted) search, semantic browsing, semantic personalization, semantic targeting (advertisement), etc., as described in U.S. Patent #6311194, 30 Oct. 2001 (filed 2000). MediaAnywhere has about 25 ontologies in News/Business, Sports, Entertainment, etc.

Taalee merged to become Voquette in 2001 (product was called SCORE), Semagix in 2004 (product was called Semagix Freedom), and then Fortent in 2006 (products included Know Your Customers).

Relationships at the Heart of Semantic Web: Modeling, Discovering, Validating...

Artificial Intelligence Institute at UofSC

Keynote at SOFSEM 2002: 29th Annual Conference on Current Trends in Theory and Practice of Informatics, Milovy, Czech Republic, 24-29 November 2002.

Intelligent Query Processing in Malayalam

The paper presents a model for developing intelligent query processing in Malayalam. For this, the investigator selected a time-enquiry system in the Malayalam language as the domain. The work discusses issues involved in natural language processing. NLQPS is a restricted-domain system that deals with natural language queries about timings for different modes of transportation. The system performs a shallow syntactic and semantic analysis of the input query. After understanding the query at the knowledge level, the system triggers a reasoning process to determine the type of query and the result slots that are required. The investigator tries to extract the hidden intelligence behind a natural language query submitted by a user.

Schema-agnostic queries over large-schema databases: a distributional semanti...

Data environments are growing in the size, complexity, dynamicity and decentralisation (SCoDD) of their schemas, and this evolution drastically impacts contemporary data management. The SCoDD trend emerges as a central data management concern in Big Data scenarios. In these scenarios, users and applications demand more complete data, produced by independent data sources under different semantic assumptions and contexts of use. Most Database Management Systems (DBMSs) today target a closed communication scenario, where the database user knows the symbolic schema of the database a priori and can interpret it unambiguously. The context in which the data is consumed and produced is well-defined and is typically the same context in which the data was created. In contrast, data management under SCoDD conditions targets an open communication scenario, where the user does not know the symbolic system of the database and multiple interpretation contexts are possible. In this case, the database may have been created in a different context from that of the database user. This new data environment demands two things: revisiting the semantic assumptions behind databases, and designing data access mechanisms that can support semantically heterogeneous (open communication) data environments.

This work aims to fill this gap by proposing a complementary semantic model for databases, based on distributional semantic models. Distributional semantics provides a perspective complementary to the formal perspective of database semantics, one that supports semantic approximation as a first-class database operation. Some models describe uncertain and incomplete data, and probabilistic databases do something similar. Distributional-relational models differ from both: they focus on building conceptual approximation approaches for databases. These approaches are supported by a comprehensive semantic model automatically built from large-scale unstructured data external to the database, which serves as a semantic/commonsense knowledge base. The semantic model can be used to support schema-agnostic queries, i.e. abstracting the data consumer from a specific conceptualization behind the data.

The proposed distributional-relational semantic model is supported by a distributional structured vector space model named τ-Space. τ-Space represents structured data under a distributional semantic model representation. In coordination with a query planning approach, it supports a schema-agnostic query mechanism for large-schema databases. The query mechanism is materialized in the Treo query engine and is evaluated using schema-agnostic natural language queries.

The evaluation of the query mechanism confirms that distributional semantics provides a high-recall, medium-high-precision, low-maintenance solution for coping with the abstraction and conceptual-level differences in schema-agnostic queries over large-schema/schema-less open-domain datasets.

The Triplex Approach for Recognizing Semantic Relations from Noun Phrases, Ap...

The Triplex Approach for Recognizing Semantic Relations from Noun Phrases, Appositions, and Adjectives

Using topic modelling frameworks for NLP and semantic search

As the volume of content continues to grow exponentially, helping search engines understand the context and topical themes within your site is increasingly important. Some of the underlying concepts are covered, along with ways to use them in your marketing strategy.

Recent Trends in Semantic Search Technologies

A talk given by Peter Mika and Thanh Tran at SemTechBiz 2013

Semantic Interoperability in Infocosm: Beyond Infrastructural and Data Intero...

Amit Sheth, Keynote: International Conference on Interoperating Geographic Systems (Interop’97), Santa Barbara, December 3-4, 1997.

Related technical paper: http://knoesis.org/library/resource.php?id=00230

Synthesys Technical Overview

This white paper provides an overview of the patented technologies and associated processes that combine to deliver a unique and highly scalable solution for unstructured text/data analytics

Leveraging Flat Files from the Canvas LMS Data Portal at K-State

A lot of data are created in an LMS instance, and much of this can be analyzed for insight. In 2016, Instructure, the makers of Canvas, made their LMS data available to their customers through a data portal (updated monthly). This portal enables access to a number of flat files related to that particular instance. This presentation showcases how this big data was analyzed on a regular laptop with basic office software, to summarize Kansas State University’s use of the LMS. Methods for analysis include the following: basic descriptive statistics, survival analysis, computational linguistic analysis, and others.

The results are reported with both numbers and data visualizations, including classic pie charts, line graphs, bar charts, mixed charts, word clouds, and others. The findings provide some insights about how to approach the data, how to use a data dictionary, and other methods for extracting the data for awareness and practical decision-making. This work also suggests next steps for more advanced analysis (using the flat files in a SQL database).

More information about this may be accessed at http://scalar.usc.edu/works/c2c-digital-magazine-spring--summer-2017/wrangling-big-data-in-a-small-tech-ecosystem.

The impact of domain-specific stop-word lists on ecommerce website search per...

Literature Based Framework for Semantic Descriptions of e-Science resources

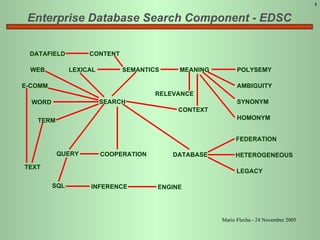

Semantic Search Component

- 1. Enterprise Database Search Component (EDSC). Keywords: datafield, content, web, lexical, semantics, meaning, relevance, context, polysemy, ambiguity, synonym, homonym, database, federation, heterogeneous, legacy, e-comm, word, term, text, SQL, query, inference, engine, cooperation, search. Mario Flecha, 24 November 2005.

- 5. Handle context to cope with ambiguity: polysemy, homonymy, synonymy. [Diagram, legend T = Term, M = Meaning. Panels: polysemy, one term with various meanings (T; M1, M2, …, Mn); synonymy, one meaning with various terms (M; T1, T2, …, Tn); homonymy by phonetic convergence (T1, T2; M1, M2; same sound); homonymy by semantic divergence (T; T1, T2; M1, M2; same sound); passage to polysemy (T1/M1, T2/M2; T with M1, M2).]

- 6. Handle context to cope with the semantic constellation (semantic field). Example field: teaching, analphabetism (illiteracy), study, student, analphabet (illiterate person), teach, knowledge, educate, education, alphabetize (teach to read and write), alphabetizing, learn, apprenticeship, apprentice.

- 11. Contextualizing Terms. Context prefix * Term = Contextualized term (lexical domain). Example (a): city*Seattle; state*WS; year*1998. Beyond the prefix, the context database keeps processing information for term treatment, such as phonetization, word breaking, etc.

- 12. Overall Search Component Architecture.

  Diagram summary:
  - **User and application.** A user (a human or software) works through the user's application. The application sends a question (a set of questions) and receives an answer (a set of tuple IDs).
  - **RIM.** The RIM has auxiliary objects, plus relations and composite views that are navigated downward and upward.
  - **Contextualized terms.** The RIM holds contextualized terms (semantic knowledge base / ontology), term 1 … term K. Each term is linked to database instances, e.g. Database X Instance 1 Term 2, Database Y Instance 5 Term 100, Database Z Instance Z Term K.
  - **Mediators.** Mediators connect the RIM to the facts databases X, Y, Z, … and their instances 1 … N.
  - **Modes of use.** The user's interactions are labeled "knowledge acquisition" and "consultation". Knowledge acquisition methods are also shown.

- 13. Examples: city name search

- 14. Examples: location search. Columns: State, City, Kind of street, Street's name, Quarter's name, Did CepDigital find?, Did Mediator find?
  - Aníbal Matos * São Pedro ** N SL
  - St Aníbal Matos São Pedro N SL
  - Street Aníbal Matos São Pedro N SL
  - Street Professor Aníbal Matos São Pedro N Y
  - Street Professor Aníbal de Matos São Pedro N Y
  - Avenue Prof.Aníbal Matos São Pedro N Y
  - MG Belo Horizonte Street Professor Aníbal de Matos Santo Antônio N Y
  - MG Belo Horizonte Street Prof Anïbal de Matos Santo Antônio N Y
  - MG Belo Horizonte St Professor Aníbal de Matos Santo Antônio Y Y
  - MG Belo Horizonte Street Professor Aníbal de Matos or S Antônio N N BL BL
  - MG Belo Horizonte Street Professor or S Antônio BL BL BL SL
  - MG Belo Horizonte Street Anïbal or S Antônio N SL

- 15. Conclusion
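Slide 5 distinguishes one term with various meanings (polysemy) from one meaning with various terms (synonymy). The minimal sketch below shows how those two relations could be indexed. It assumes a hypothetical lexicon of (term, meaning) pairs. The entries are illustrative and are not taken from the deck.

```python
from collections import defaultdict

# Hypothetical lexicon of (term, meaning) pairs, for illustration only.
LEXICON = [
    ("bank", "financial institution"),
    ("bank", "river edge"),
    ("car", "automobile"),
    ("automobile", "automobile"),
]


def build_indexes(pairs):
    """Index the lexicon in both directions: term -> meanings, meaning -> terms."""
    term_to_meanings = defaultdict(set)
    meaning_to_terms = defaultdict(set)
    for term, meaning in pairs:
        term_to_meanings[term].add(meaning)
        meaning_to_terms[meaning].add(term)
    return term_to_meanings, meaning_to_terms


def polysemous_terms(term_to_meanings):
    """One term, various meanings (T -> M1 ... Mn)."""
    return {t for t, ms in term_to_meanings.items() if len(ms) > 1}


def synonym_sets(meaning_to_terms):
    """One meaning, various terms (M -> T1 ... Tn)."""
    return {m: set(ts) for m, ts in meaning_to_terms.items() if len(ts) > 1}


t2m, m2t = build_indexes(LEXICON)
print(sorted(polysemous_terms(t2m)))  # ['bank']
print(sorted(synonym_sets(m2t)["automobile"]))  # ['automobile', 'car']
```

Homonymy cannot be detected from this table alone, because it depends on sound or word history, which the sketch does not model.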
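Slide 6 groups words into a semantic constellation (semantic field). One way such a field could support search is query expansion: a term found in a field is widened to the whole field. The minimal sketch below assumes a hypothetical in-memory field labeled `education`, built from the slide's own words in lowercase. The expansion rule is an assumption, not the deck's stated method.

```python
# Hypothetical semantic field built from the words on slide 6.
SEMANTIC_FIELDS = {
    "education": {
        "teaching", "teach", "study", "student", "knowledge", "educate",
        "education", "learn", "apprenticeship", "apprentice",
        "analphabetism", "analphabet", "alphabetize", "alphabetizing",
    },
}


def expand_query(term: str) -> set:
    """Return the term plus every member of any semantic field that contains it."""
    term = term.lower()
    expanded = {term}
    for members in SEMANTIC_FIELDS.values():
        if term in members:
            expanded |= members
    return expanded


print(len(expand_query("Student")))  # 14
print(expand_query("bicycle"))  # {'bicycle'}
```

Expanding to a whole field raises recall at the cost of precision, so a practical system would likely weight or filter the added terms.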
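Slide 11 defines a contextualized term as Context prefix * Term (e.g. `city*Seattle`) and says the context database also keeps processing information such as phonetization and word breaking. The sketch below is a minimal version of that idea. It assumes that accent folding plus lowercasing is an acceptable stand-in for real phonetization, which the deck does not specify. The function names are hypothetical.

```python
import re
import unicodedata


def fold(text: str) -> str:
    """Lowercase and strip diacritics (a crude stand-in for phonetization)."""
    decomposed = unicodedata.normalize("NFD", text.lower())
    return "".join(c for c in decomposed if not unicodedata.combining(c))


def break_words(text: str) -> list:
    """Word breaking: characters outside a-z/0-9 after folding act as separators."""
    return [w for w in re.split(r"[^0-9a-z]+", fold(text)) if w]


def contextualize(prefix: str, term: str) -> str:
    """Context prefix * term = contextualized term, e.g. 'city*seattle'."""
    return f"{fold(prefix)}*{' '.join(break_words(term))}"


print(contextualize("city", "Seattle"))  # city*seattle
print(contextualize("street", "Prof.Aníbal  de Matos"))  # street*prof anibal de matos
```

The prefix keeps identical strings in different lexical domains apart. For example, a city and a street with the same name produce different keys.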
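Slide 12 shows contextualized terms linked to database instances, with questions going in and an answer returned as a set of tuple IDs. The sketch below is a hypothetical index in that spirit. It assumes a tuple reference is a `(database, instance, tuple_id)` triple. It also treats a question as a set of contextualized terms that must all match, which is an AND interpretation assumed here rather than taken from the deck. `TermIndex`, `acquire`, and `consult` are illustrative names only.

```python
from collections import defaultdict


class TermIndex:
    """Hypothetical sketch of a term index in the spirit of the RIM on slide 12."""

    def __init__(self):
        # contextualized term -> set of (database, instance, tuple_id) references
        self._postings = defaultdict(set)

    def acquire(self, cterm, ref):
        """Knowledge acquisition: record that a tuple carries a contextualized term."""
        self._postings[cterm].add(ref)

    def consult(self, question):
        """Consultation: return the tuple references matching every term in the question."""
        matches = [self._postings.get(t, set()) for t in question]
        return set.intersection(*matches) if matches else set()


idx = TermIndex()
idx.acquire("city*belo horizonte", ("X", "1", 10))
idx.acquire("state*mg", ("X", "1", 10))
idx.acquire("state*mg", ("Y", "5", 3))
print(idx.consult({"city*belo horizonte", "state*mg"}))  # {('X', '1', 10)}
```

In the architecture on the slide, mediators would then fetch the referenced tuples from databases X, Y, Z. The sketch stops at the set of references.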
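The location-search table on slide 14 tests spelling variants of one address: "Prof" vs "Professor", "Anïbal" vs "Aníbal", with or without "de", and "S Antônio" vs "Santo Antônio". The sketch below shows one plausible way to make such variants compare equal. It is not the author's or the Mediator's method. The abbreviation table and the list of Portuguese particles are hypothetical choices.

```python
import re
import unicodedata

# Hypothetical abbreviation expansions and Portuguese particles to ignore.
ABBREVIATIONS = {"prof": "professor", "st": "street", "av": "avenue", "s": "santo"}
PARTICLES = {"de", "da", "do", "dos", "das"}


def normalize(text: str) -> tuple:
    """Fold accents, break words, drop particles, and expand abbreviations."""
    decomposed = unicodedata.normalize("NFD", text.lower())
    folded = "".join(c for c in decomposed if not unicodedata.combining(c))
    words = [w for w in re.split(r"[^0-9a-z]+", folded) if w]
    return tuple(ABBREVIATIONS.get(w, w) for w in words if w not in PARTICLES)


def same_street(a: str, b: str) -> bool:
    return normalize(a) == normalize(b)


print(same_street("Prof Anïbal de Matos", "Professor Aníbal Matos"))  # True
print(same_street("Prof.Aníbal Matos", "Aníbal Matos"))  # False: the title word differs
```

Exact equality of normalized forms is deliberately strict. Inputs missing a word, like the partial names in the table, would need a looser match such as ranking candidates.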