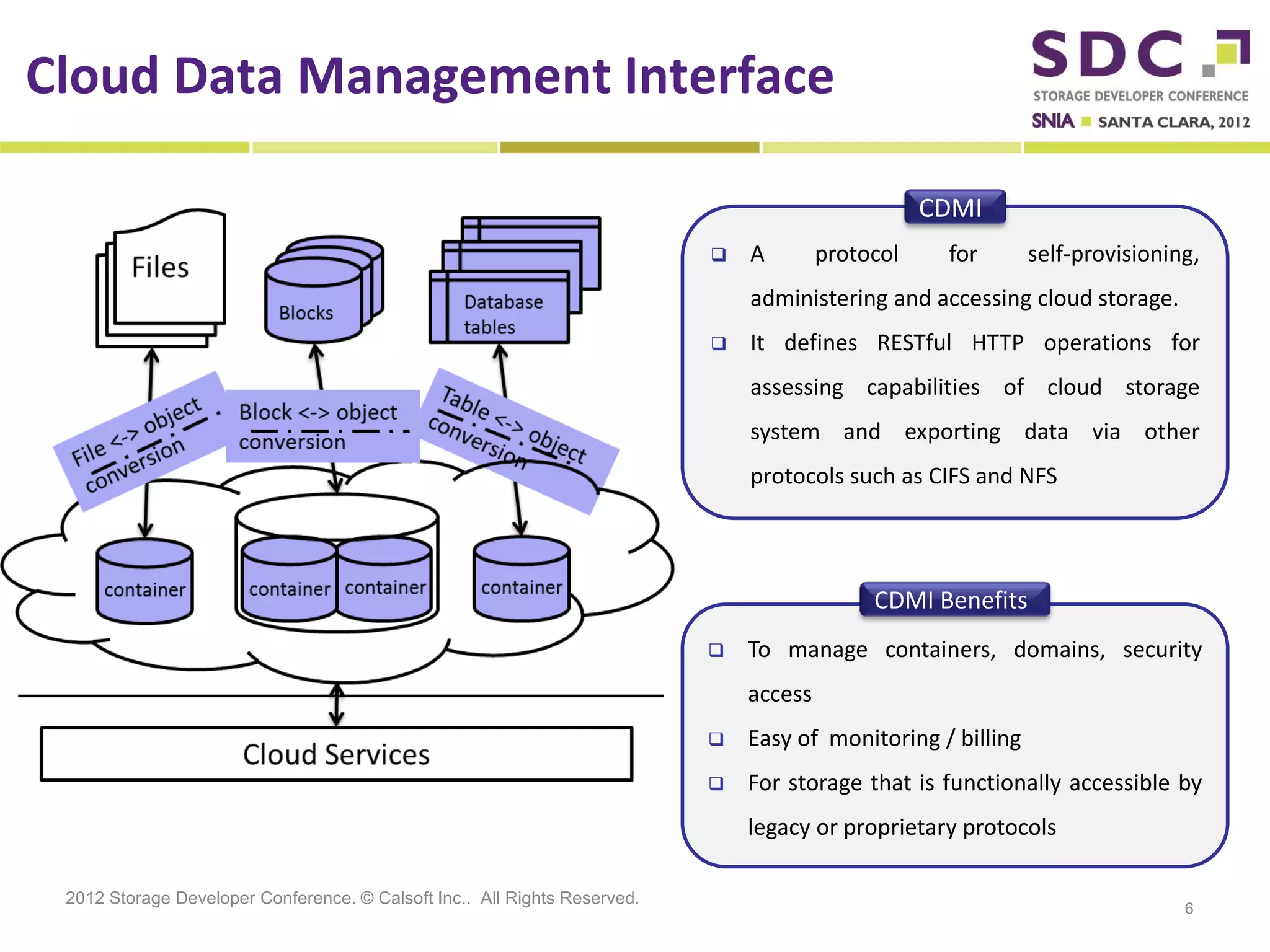

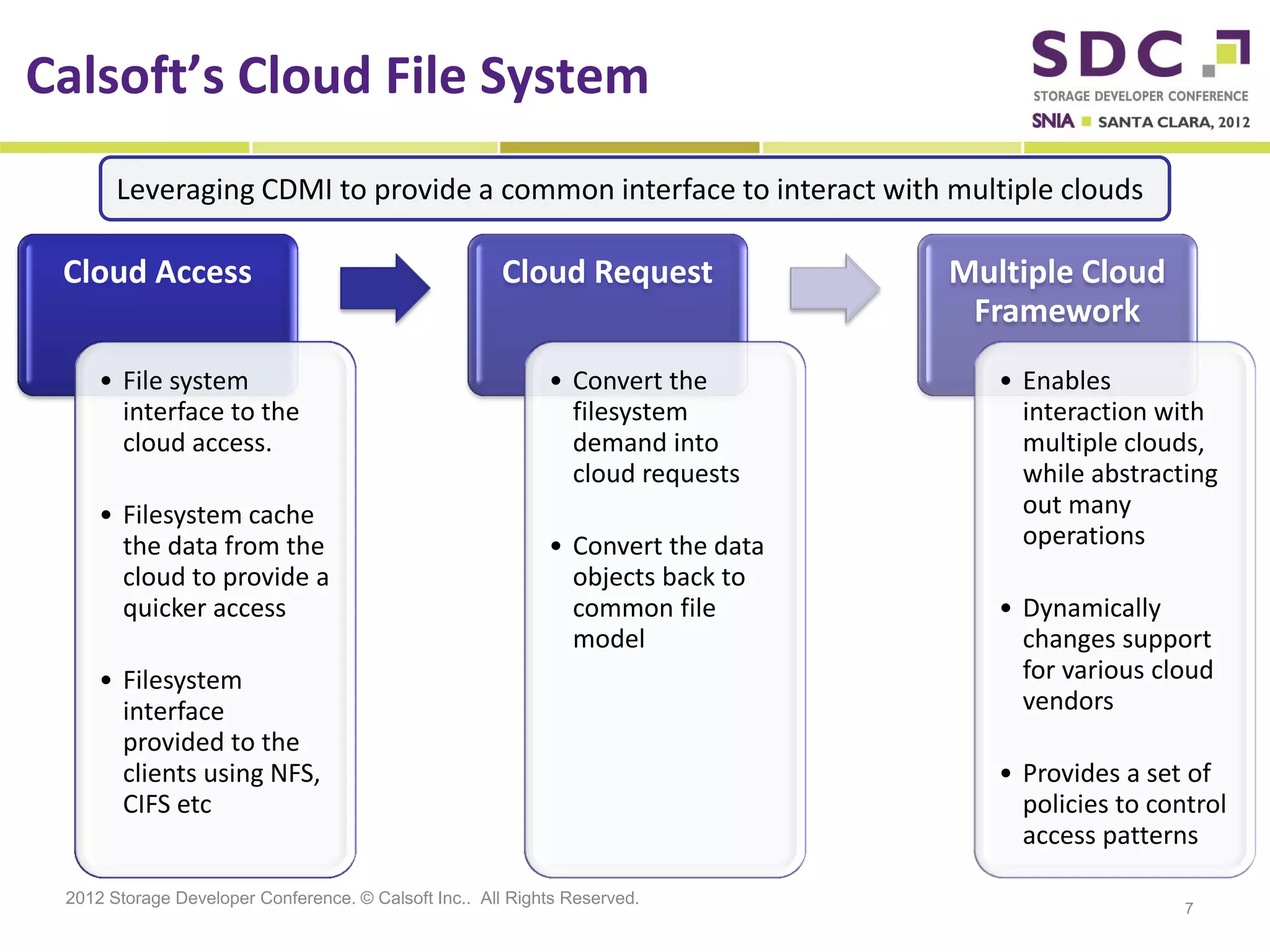

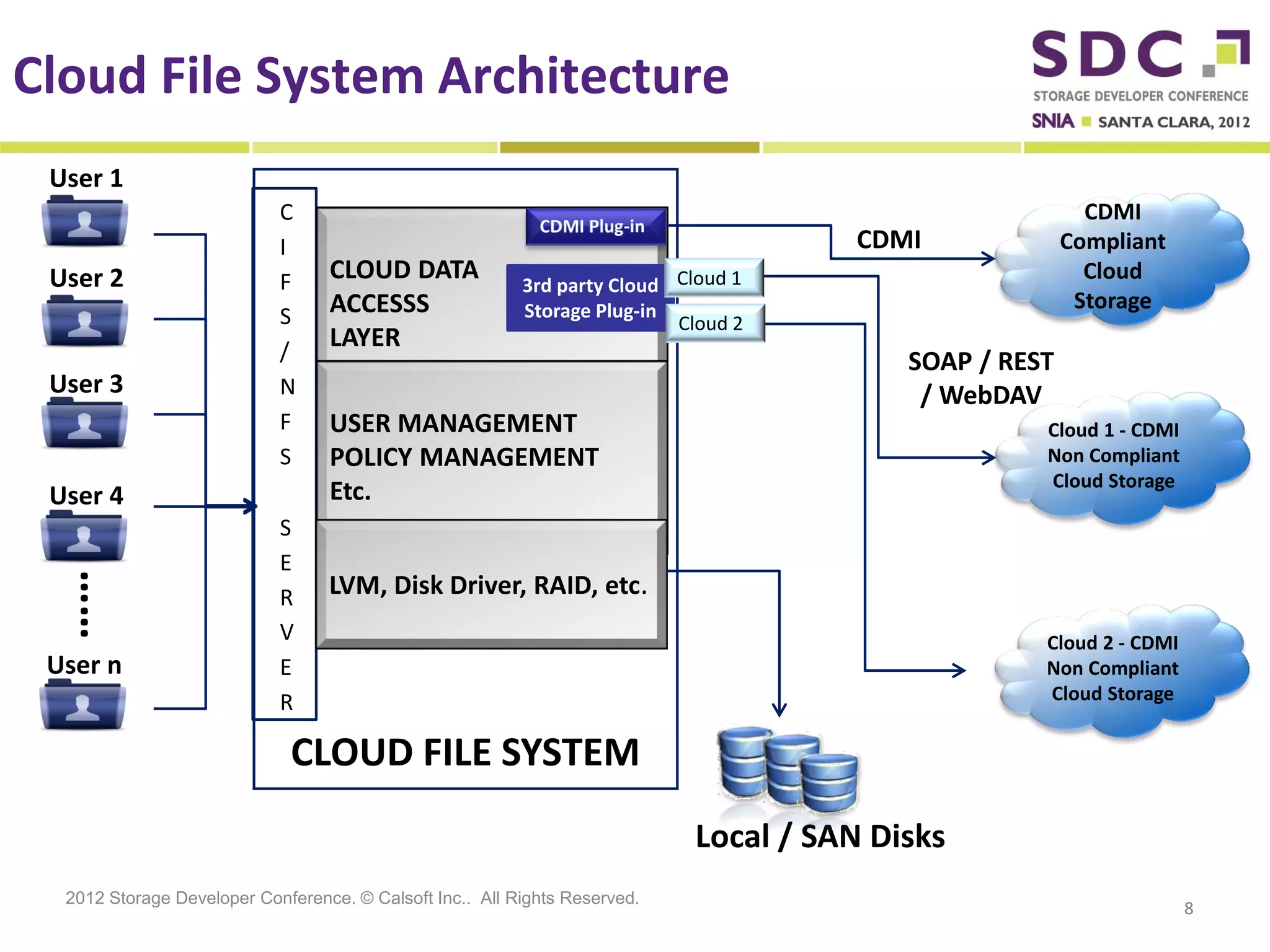

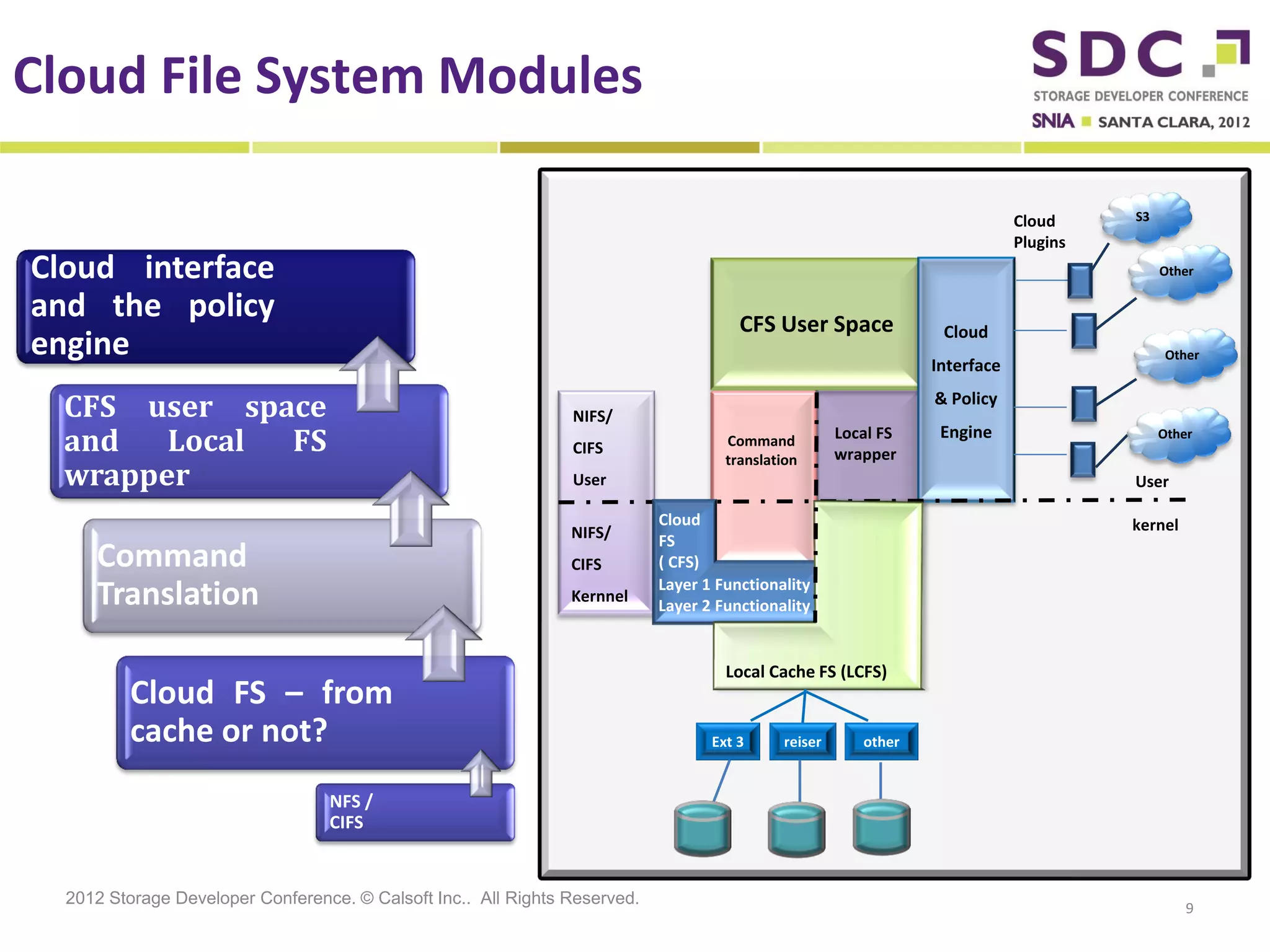

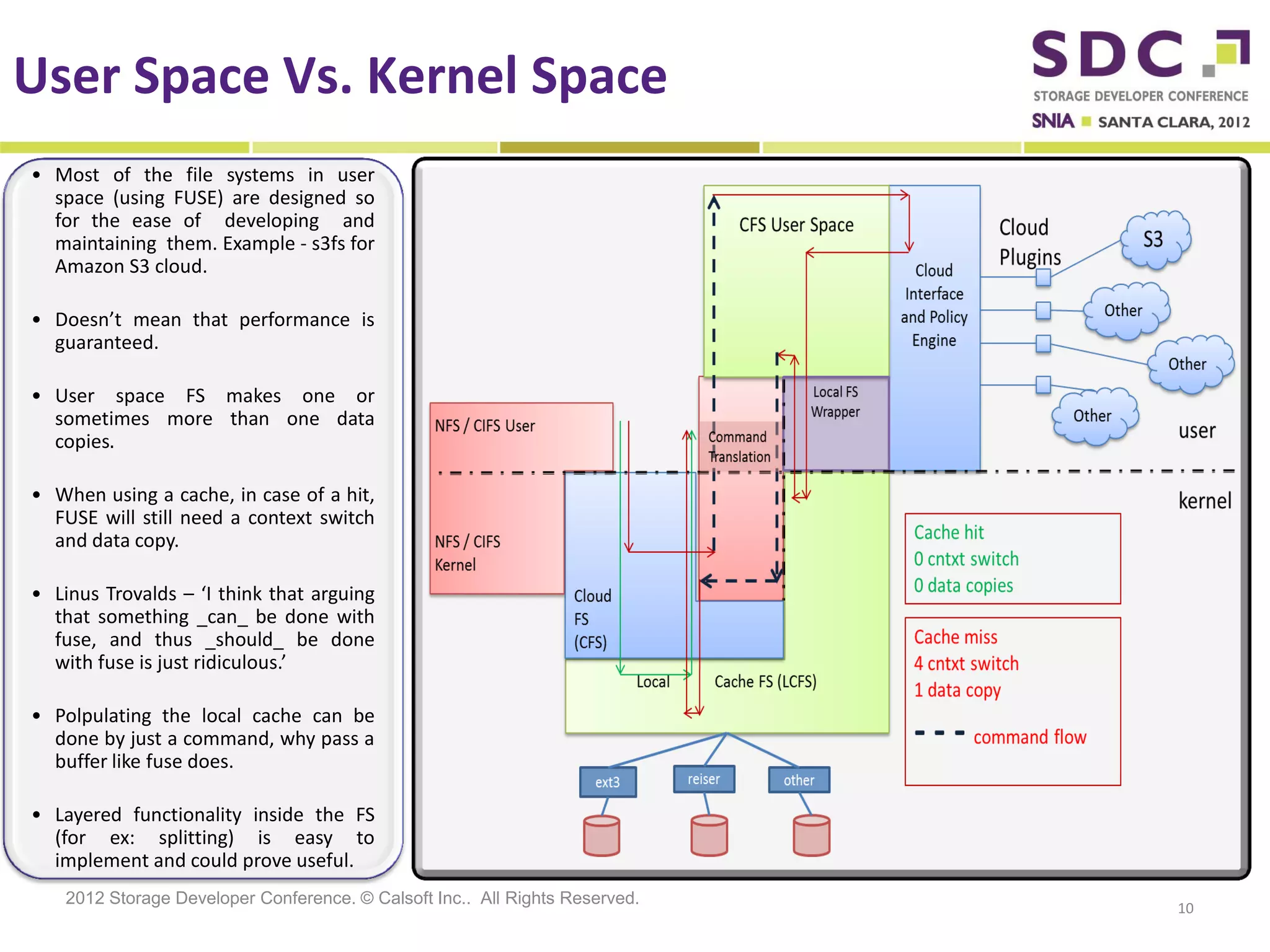

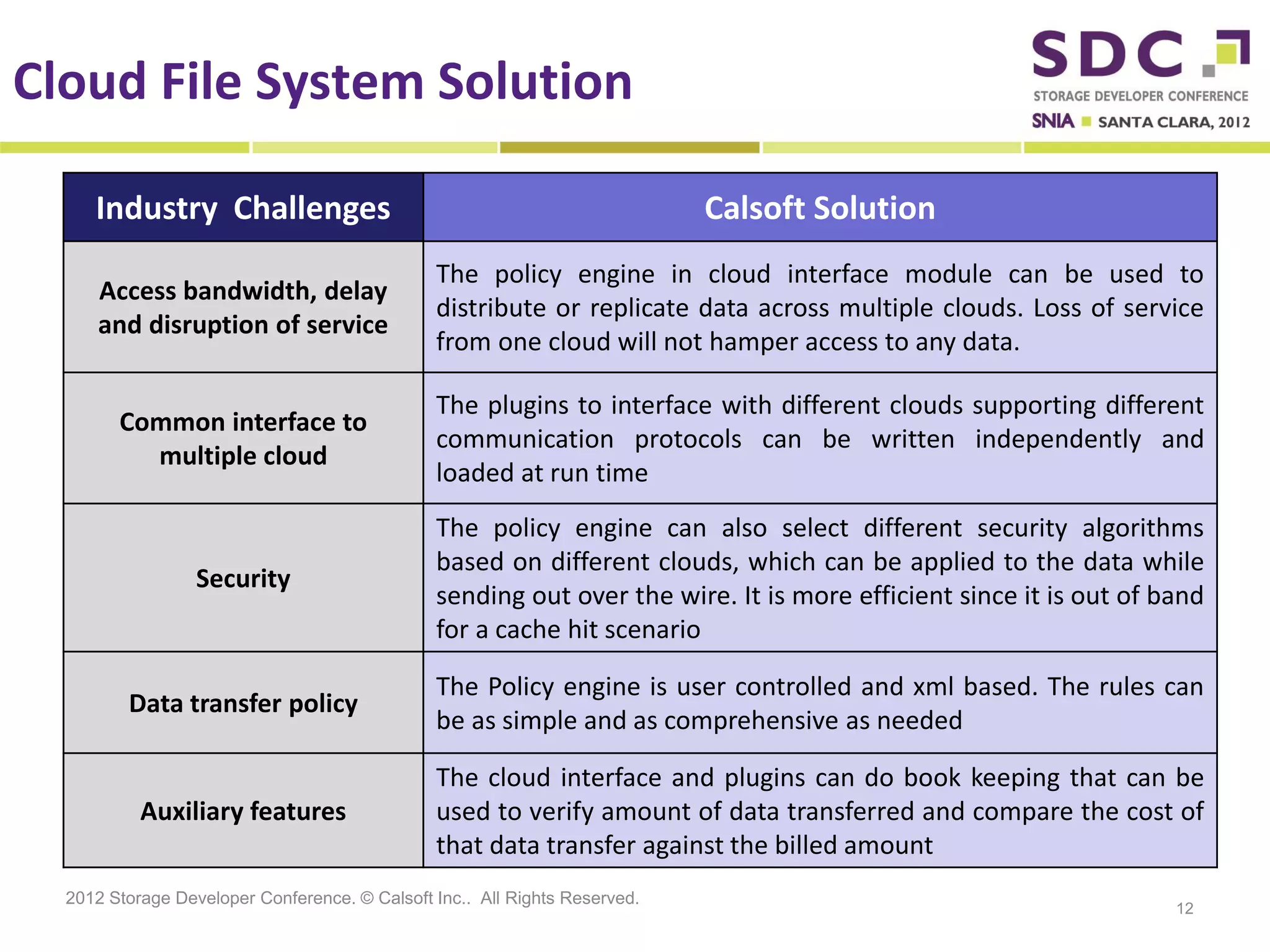

The document discusses integrating cloud storage with network-attached storage (NAS) through the Cloud Data Management Interface (CDMI) to improve data management and access. Calsoft's Cloud File System enables interaction between multiple cloud providers, supporting storage and policy management for enterprises. It covers the industry challenges involved and describes an approach to managing large-scale data storage across both CDMI-compliant and non-compliant clouds.
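
For readers unfamiliar with CDMI, the following is a minimal sketch of what a CDMI data-object write looks like at the HTTP level. It is not taken from Calsoft's Cloud File System. The helper name `build_cdmi_put`, the endpoint URL, the object path, and the choice of spec version `1.0.2` are all assumptions made for illustration. The request is only constructed, never sent.

```python
import json
import urllib.request

# Assumed CDMI specification version; a real endpoint may require another.
CDMI_VERSION = "1.0.2"


def build_cdmi_put(base_url, path, text, metadata=None):
    """Build (but do not send) a CDMI PUT request that creates a data object.

    Hypothetical helper for illustration only.
    """
    body = {
        "mimetype": "text/plain",
        # Per-object metadata is where CDMI carries storage policy hints.
        "metadata": metadata or {},
        "value": text,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/" + path.lstrip("/"),
        data=json.dumps(body).encode("utf-8"),
        method="PUT",
        headers={
            "Content-Type": "application/cdmi-object",
            "Accept": "application/cdmi-object",
            "X-CDMI-Specification-Version": CDMI_VERSION,
        },
    )


# Example: request two copies of the object via CDMI data system metadata.
request = build_cdmi_put(
    "https://cloud.example.com/cdmi",  # placeholder endpoint
    "reports/q1.txt",                  # placeholder object path
    "hello",
    metadata={"cdmi_data_redundancy": "2"},
)
```

A file system layer that targets non-compliant clouds would need to translate a request like this into each provider's native API, which is outside the scope of this sketch.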