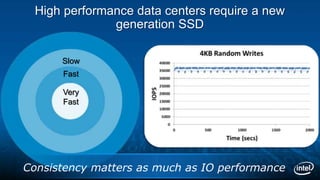

The document introduces the Intel SSD DC S3700 Series solid-state drive. It notes that high-performance data centers now require SSDs that deliver consistently fast, reliable performance. The Intel SSD DC S3700 Series is presented as combining consistently fast performance with high endurance and stress-free protection. It uses Intel's third-generation controller and continuous background cleaning to keep performance consistent. Compared with traditional HDDs, the case studies show up to a 4x speedup for database operations and support for new classes of high-performance computing applications.