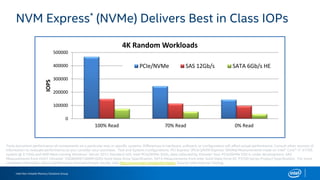

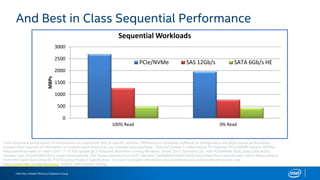

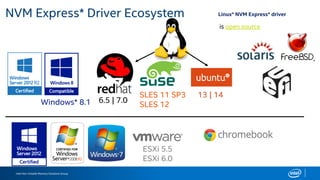

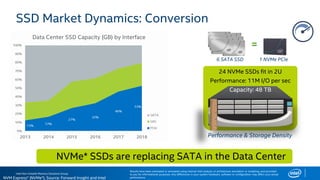

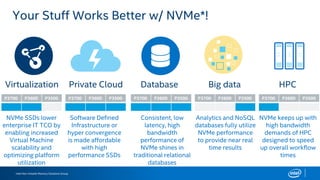

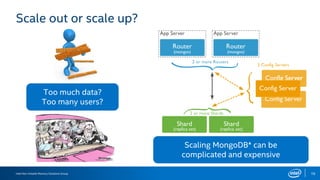

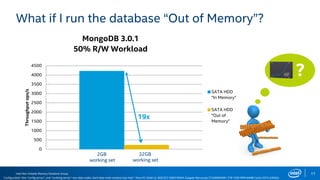

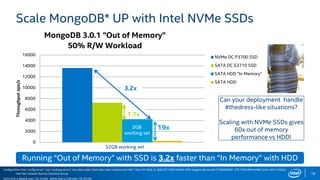

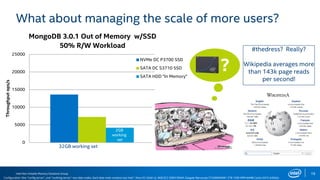

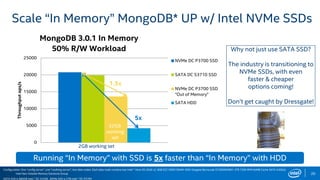

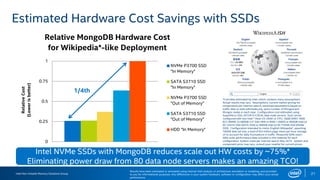

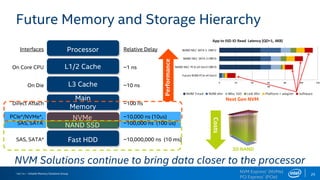

The document discusses the benefits of using NVMe SSDs to scale MongoDB applications, emphasizing gains in performance, efficiency, and cost-effectiveness over traditional SSDs and HDDs. It argues that NVMe technology can significantly increase database throughput and reduce enterprise hardware costs by improving scalability and resource utilization. It also covers market trends and expected developments in storage solutions.