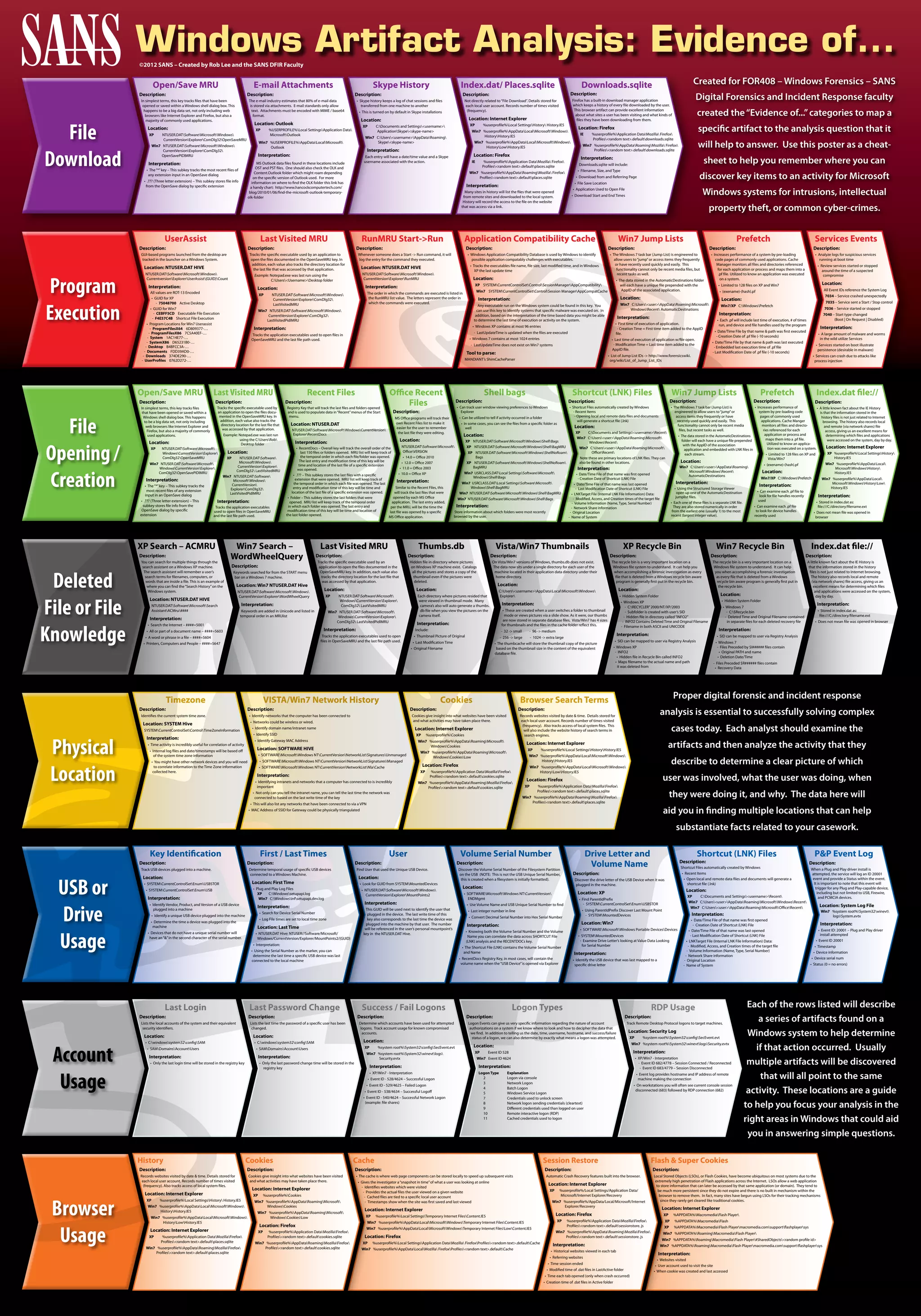

This document summarizes several Windows registry keys and related artifacts that record a user's activity on a system. It covers keys that track recently opened files, installed applications, browser history and search terms, network connections, and more. Examining these locations can reveal which files and websites a user accessed, when they were accessed, and where they came from.
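
As a rough illustration of how a recently-opened-files key might be decoded once its raw values have been exported, the sketch below parses three common pieces of data. It assumes the layout generally seen under `HKCU\Software\Microsoft\Windows\CurrentVersion\Explorer\RecentDocs`: an `MRUListEx` value holding little-endian 32-bit indexes terminated by `0xFFFFFFFF`, numbered values that begin with a null-terminated UTF-16LE file name, and timestamps stored as 64-bit FILETIME counts. The function names and the sample bytes are hypothetical and are not taken from the document.

```python
import struct
from datetime import datetime, timedelta, timezone
from typing import List


def parse_mru_list_ex(data: bytes) -> List[int]:
    """Return value indexes in most-recent-first order.

    Assumes a sequence of little-endian uint32 values ending in 0xFFFFFFFF.
    """
    order = []
    usable = len(data) - (len(data) % 4)  # ignore any trailing partial word
    for (index,) in struct.iter_unpack("<I", data[:usable]):
        if index == 0xFFFFFFFF:  # terminator
            break
        order.append(index)
    return order


def recent_doc_name(data: bytes) -> str:
    """Extract the leading null-terminated UTF-16LE file name from a value."""
    end = 0
    while end + 1 < len(data):
        if data[end:end + 2] == b"\x00\x00":  # UTF-16 null terminator
            break
        end += 2
    return data[:end].decode("utf-16-le", errors="replace")


def filetime_to_datetime(filetime: int) -> datetime:
    """Convert a FILETIME (100 ns ticks since 1601-01-01 UTC) to a datetime."""
    epoch = datetime(1601, 1, 1, tzinfo=timezone.utc)
    return epoch + timedelta(microseconds=filetime // 10)


if __name__ == "__main__":
    # Synthetic sample data, for illustration only.
    mru = struct.pack("<4I", 2, 0, 1, 0xFFFFFFFF)
    value = "report.docx".encode("utf-16-le") + b"\x00\x00" + b"\x14\x00junk"
    print(parse_mru_list_ex(mru))
    print(recent_doc_name(value))
    print(filetime_to_datetime(116444736000000000))
```

The parsers work on raw bytes rather than reading the live registry, so they can be run against exported hive data on any platform. On Windows, the bytes could be obtained with the standard-library `winreg` module.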