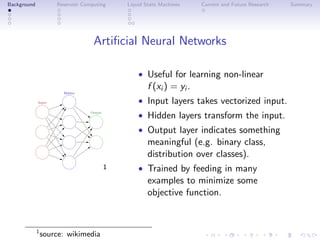

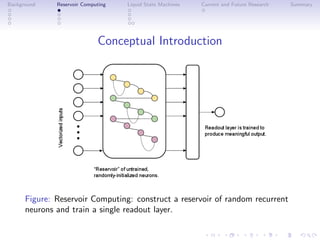

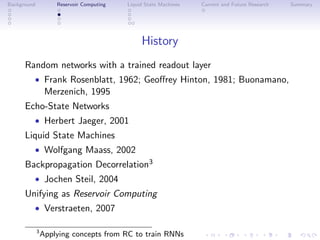

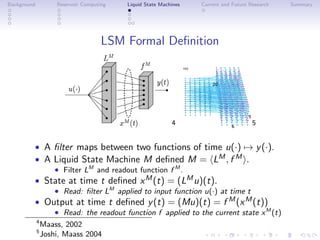

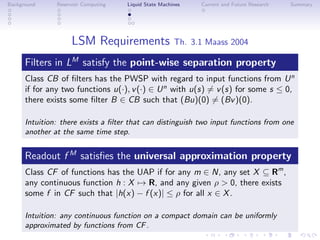

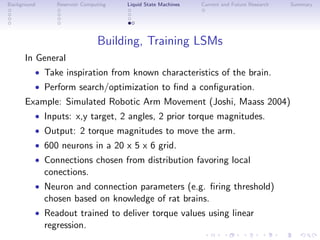

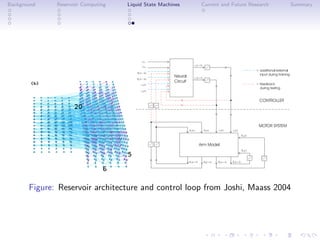

This document discusses reservoir computing with a focus on liquid state machines (LSMs), highlighting their advantages over traditional artificial neural networks and recurrent neural networks for temporal problems. It outlines key concepts, historical developments, and successful applications in several fields, and it emphasizes the need for further research on hardware implementations and reservoir optimization. For universal computation, an LSM must satisfy specific properties: the liquid needs the separation property (different input histories drive it into distinguishable states) and the readout needs the approximation property (it can map those states to the desired outputs). When both hold, LSMs are particularly well suited to tasks on temporal data.