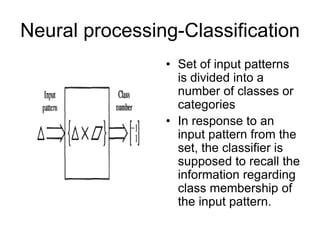

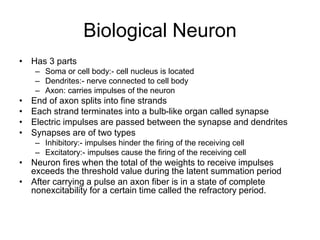

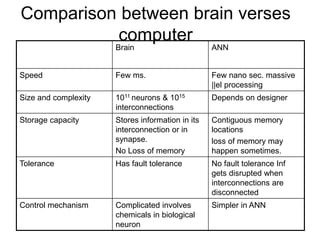

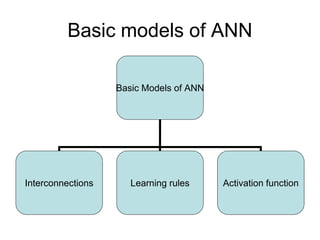

This document provides an overview of artificial neural networks (ANNs). It discusses how ANNs are constructed to model the human brain and how they perform tasks such as pattern matching and classification. The key points are:

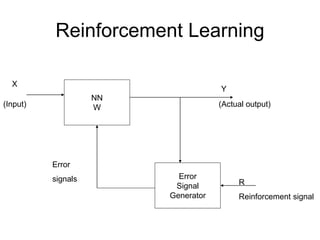

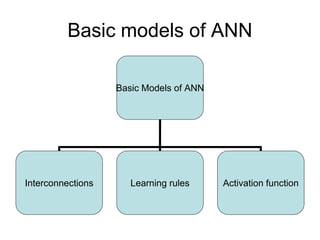

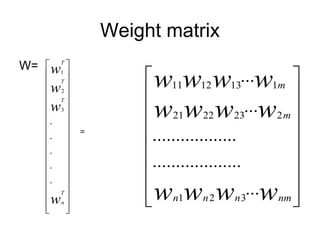

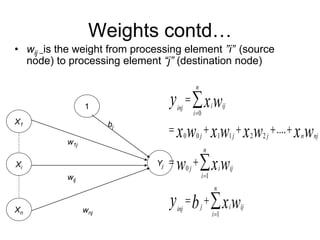

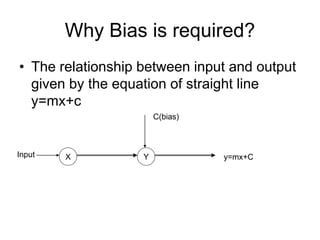

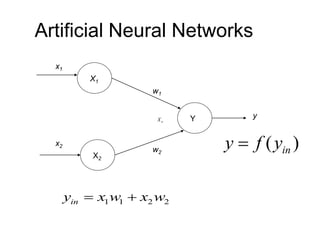

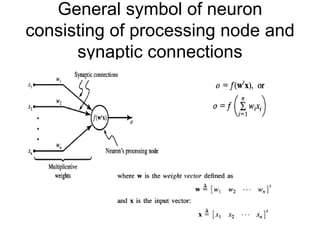

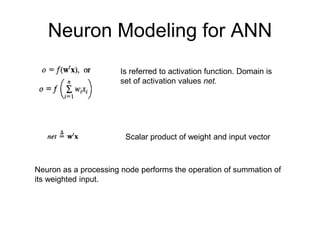

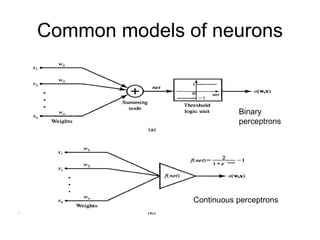

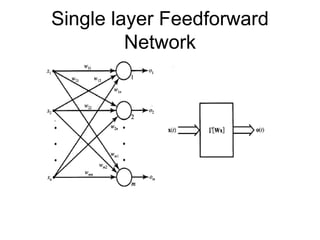

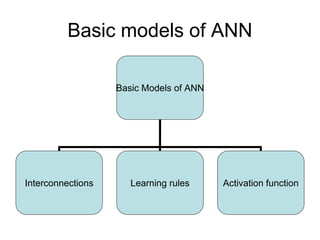

- ANNs consist of interconnected nodes that operate in parallel. Each connection between nodes carries a weight, and each node computes its activation level from the weighted inputs it receives.
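
To make the weighted-input idea concrete, here is a minimal sketch of how one node's activation level could be computed. The function names, the optional bias term, and the choice of a binary sigmoid are assumptions for illustration; the summary does not say which activation function the slides use at this point.

```python
import math


def neuron_activation(inputs, weights, bias=0.0):
    """Return the sigmoid activation of one node (hypothetical helper)."""
    # Net input: weighted sum of the incoming signals plus an optional bias.
    net = sum(x * w for x, w in zip(inputs, weights)) + bias
    # Binary sigmoid (an assumed choice) squashes the net input into (0, 1).
    return 1.0 / (1.0 + math.exp(-net))


def layer_activations(inputs, weight_rows, biases):
    """Compute every node of a layer; each row holds one node's weights."""
    # Nodes do not depend on each other, which is why they can run in parallel.
    return [neuron_activation(inputs, w, b) for w, b in zip(weight_rows, biases)]


# A net input of 0.5*1.0 + (-0.25)*2.0 = 0 gives an activation of 0.5.
print(neuron_activation([1.0, 2.0], [0.5, -0.25]))
```

Each node only needs the shared input vector and its own weights, so a real implementation would typically evaluate the whole layer as one matrix-vector product.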

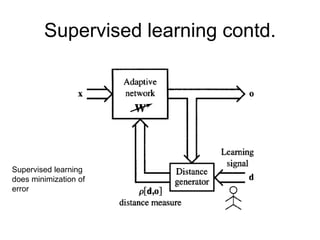

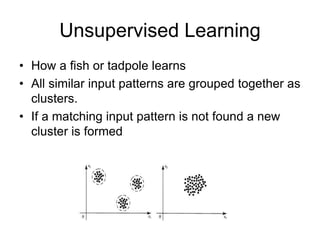

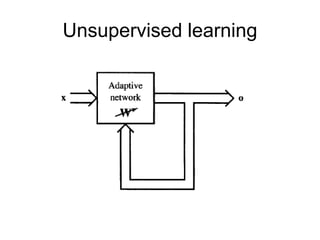

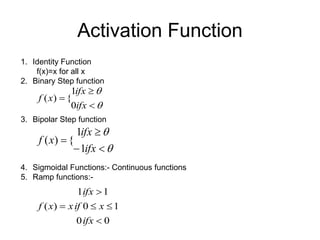

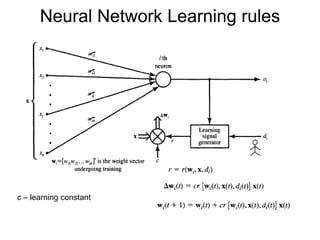

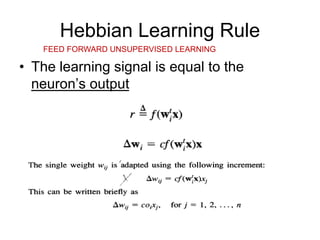

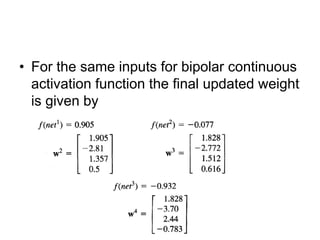

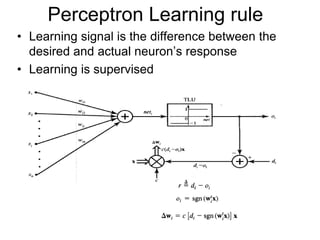

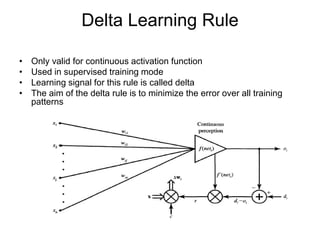

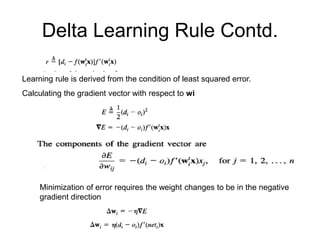

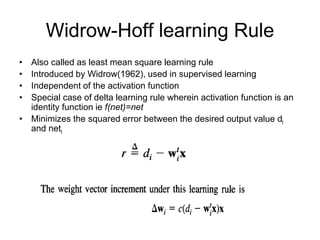

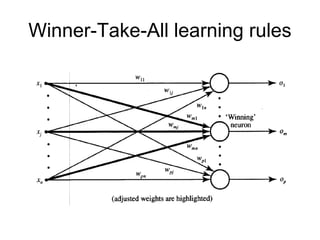

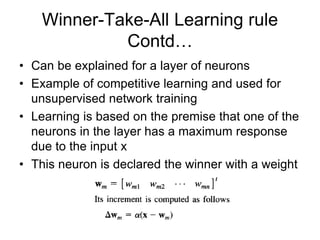

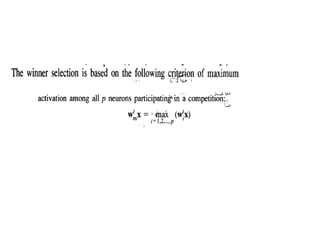

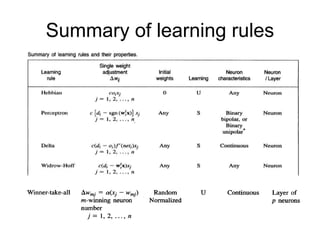

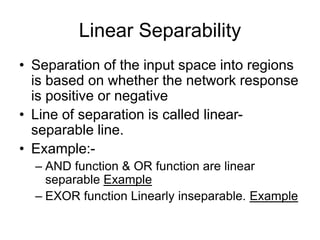

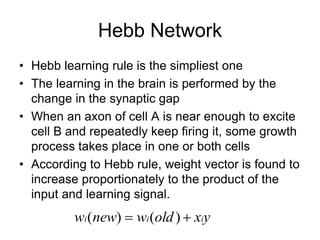

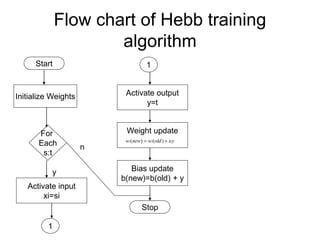

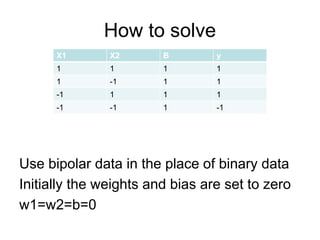

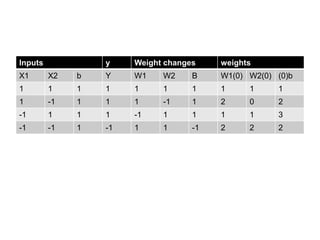

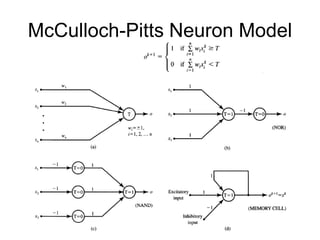

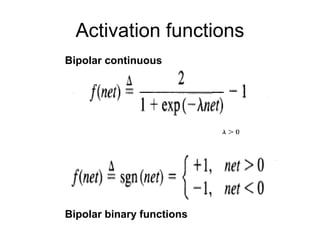

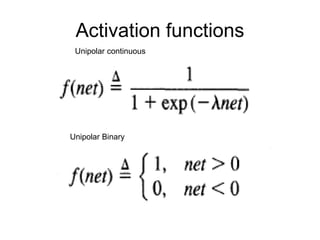

- Early models include the McCulloch-Pitts neuron and the Hebb network. Learning can be supervised, unsupervised, or reinforcement-based. The document describes common activation functions and learning rules such as backpropagation and Hebbian learning.
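
The two early models named above can be sketched in a few lines. This is an illustrative sketch under stated assumptions, not the slides' own formulation: the McCulloch-Pitts neuron is assumed to fire when its net input reaches a fixed threshold, and the Hebb network is assumed to use the textbook update `w_new = w_old + x * y` with bipolar (+1/-1) values. The function names and the AND example are hypothetical.

```python
def mcculloch_pitts(inputs, weights, threshold):
    """Binary threshold neuron: fires (1) when the net input reaches the threshold."""
    net = sum(x * w for x, w in zip(inputs, weights))
    return 1 if net >= threshold else 0


def hebb_train(samples):
    """One pass of Hebbian learning over (input vector, target) pairs."""
    weights = [0] * len(samples[0][0])
    bias = 0
    for x, y in samples:
        # Hebb rule: strengthen each weight by the product of input and target.
        weights = [w + xi * y for w, xi in zip(weights, x)]
        bias += y
    return weights, bias


# McCulloch-Pitts AND gate: both weights 1, threshold 2.
print([mcculloch_pitts(x, (1, 1), 2) for x in [(0, 0), (0, 1), (1, 0), (1, 1)]])
# → [0, 0, 0, 1]

# Hebb network trained on bipolar AND.
bipolar_and = [((1, 1), 1), ((1, -1), -1), ((-1, 1), -1), ((-1, -1), -1)]
print(hebb_train(bipolar_and))
# → ([2, 2], -2)
```

The McCulloch-Pitts weights are fixed by hand, whereas the Hebb network derives its weights from the training pairs, which is the main difference between the two models.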

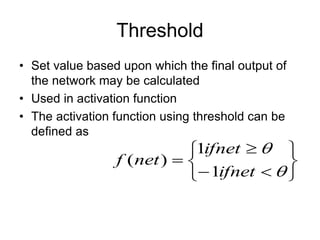

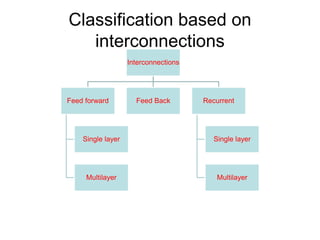

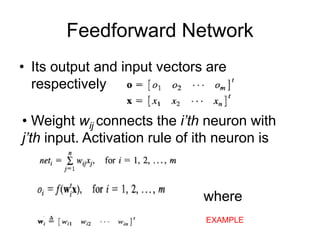

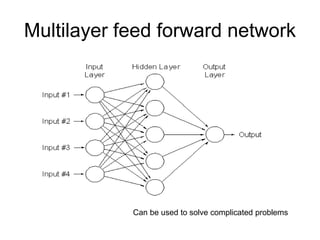

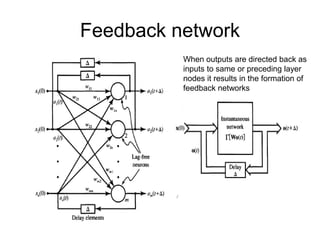

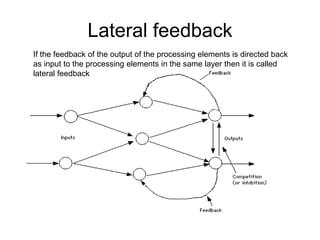

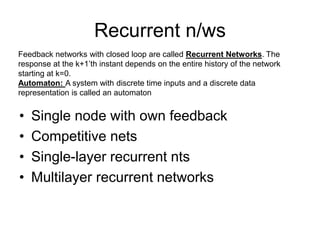

- Terminology includes weights, bias, thresholds, learning rates, and more. Different network architectures like feed

![Supervised learning: a neural network with weights W maps the input X to the actual output Y; an error signal generator compares Y with the desired output D and feeds the error signals (D - Y) back to the network](https://image.slidesharecdn.com/artificial-neural-networks-rev-230407180239-a65dd4d3/85/artificial-neural-networks-rev-ppt-32-320.jpg)

Supervised Learning

- A child learns from a teacher.
- Each input vector requires a corresponding target vector.
- Training pair = [input vector, target vector]
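
The supervised learning loop on this slide (present an input X, compare the actual output Y with the desired output D, and use the error D - Y to adjust the weights W) can be sketched as follows. The slide does not name a specific update rule, so this sketch assumes a perceptron-style update with a step activation; the function names, the learning rate, and the OR training pairs are hypothetical.

```python
def step(net):
    """Step activation: output 1 for a positive net input, otherwise 0."""
    return 1 if net > 0 else 0


def predict(x, weights, bias):
    """Actual output Y of the network for input vector X."""
    return step(sum(xi * wi for xi, wi in zip(x, weights)) + bias)


def train_supervised(pairs, learning_rate=1.0, max_epochs=20):
    """Adjust the weights from the error signal (D - Y) of each training pair."""
    weights = [0.0] * len(pairs[0][0])
    bias = 0.0
    for _ in range(max_epochs):
        total_error = 0
        for x, d in pairs:
            y = predict(x, weights, bias)
            error = d - y  # error signal from the "teacher"
            # Assumed perceptron-style update: move weights in the direction of the error.
            weights = [wi + learning_rate * error * xi for wi, xi in zip(weights, x)]
            bias += learning_rate * error
            total_error += abs(error)
        if total_error == 0:  # every training pair is reproduced, so stop early
            break
    return weights, bias


# Training pairs = [input vector, target vector] for logical OR.
or_pairs = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
weights, bias = train_supervised(or_pairs)
print([predict(x, weights, bias) for x, _ in or_pairs])
# → [0, 1, 1, 1]
```

This update only converges for linearly separable problems such as OR; multilayer networks would need a rule such as backpropagation instead.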