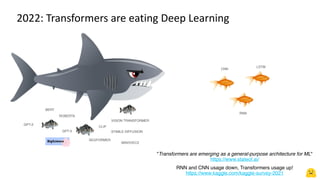

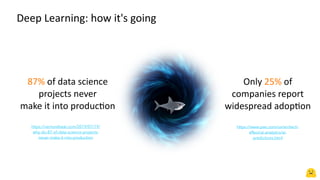

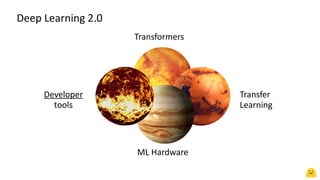

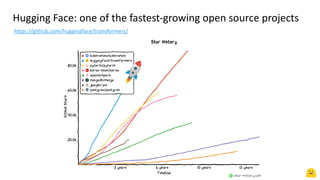

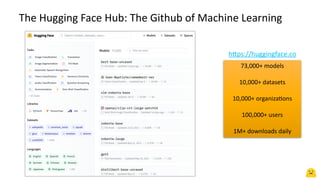

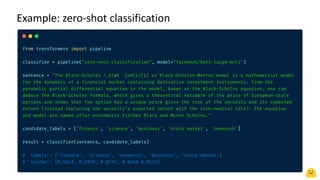

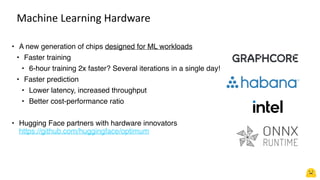

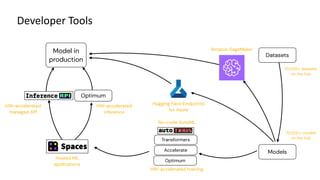

The document discusses how transformers have become a general-purpose architecture for machine learning, with models such as BERT and GPT-3 seeing widespread adoption. It introduces Hugging Face as a company working to make transformers more accessible through tools and libraries. Hugging Face has grown rapidly: its hub hosts over 73,000 models and 10,000 datasets, which are downloaded over 1 million times daily. The document outlines Hugging Face's vision of supporting the entire machine learning process, from data to production, with tools for transfer learning, hardware acceleration, and collaborative model development.