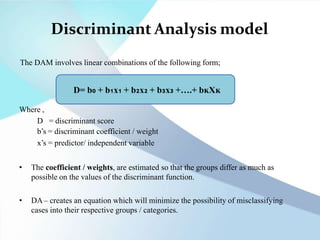

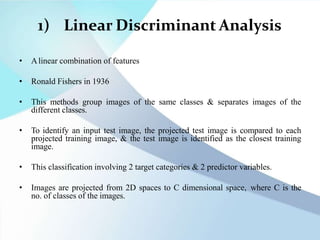

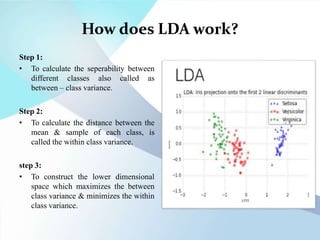

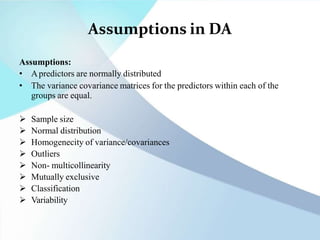

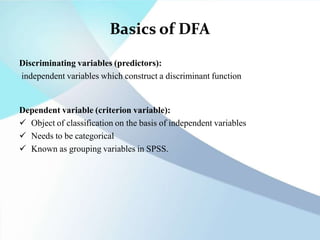

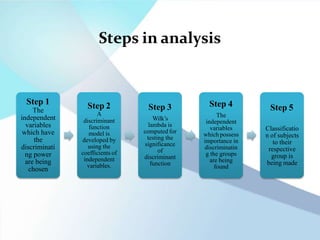

Discriminant function analysis (DFA) is a statistical technique used to determine which variables are best at predicting group membership. It creates linear combinations of predictor variables called discriminant functions that discriminate between categories of a dependent variable. DFA is similar to regression and ANOVA. It works by maximizing between-group differences and minimizing within-group differences to classify cases into groups based on predictor variables. The assumptions of DFA include normally distributed predictors and equal variance-covariance matrices within groups.

![DA in R programming

DFA (2 groups)

library(dplyr)

library(haven)

library(ggplot2)

mydat <- read_sav("C:/Users/cpflower/Dropbox (UNC

Charlotte)/RSCH8140/R/DFA/pope.sav")

View(mydat)

scatterplotMatrix(mydat[2:4])

install.packages("DFA.data")

library(DFA.data)

DFA(data=mydat, groups="gp", variables=c('wi', 'wc', 'pc'),

predictive=TRUE, prior='SIZES', verbose=TRUE)](https://image.slidesharecdn.com/rstudio-230403164848-2201243c/85/R-studio-pptx-10-320.jpg)