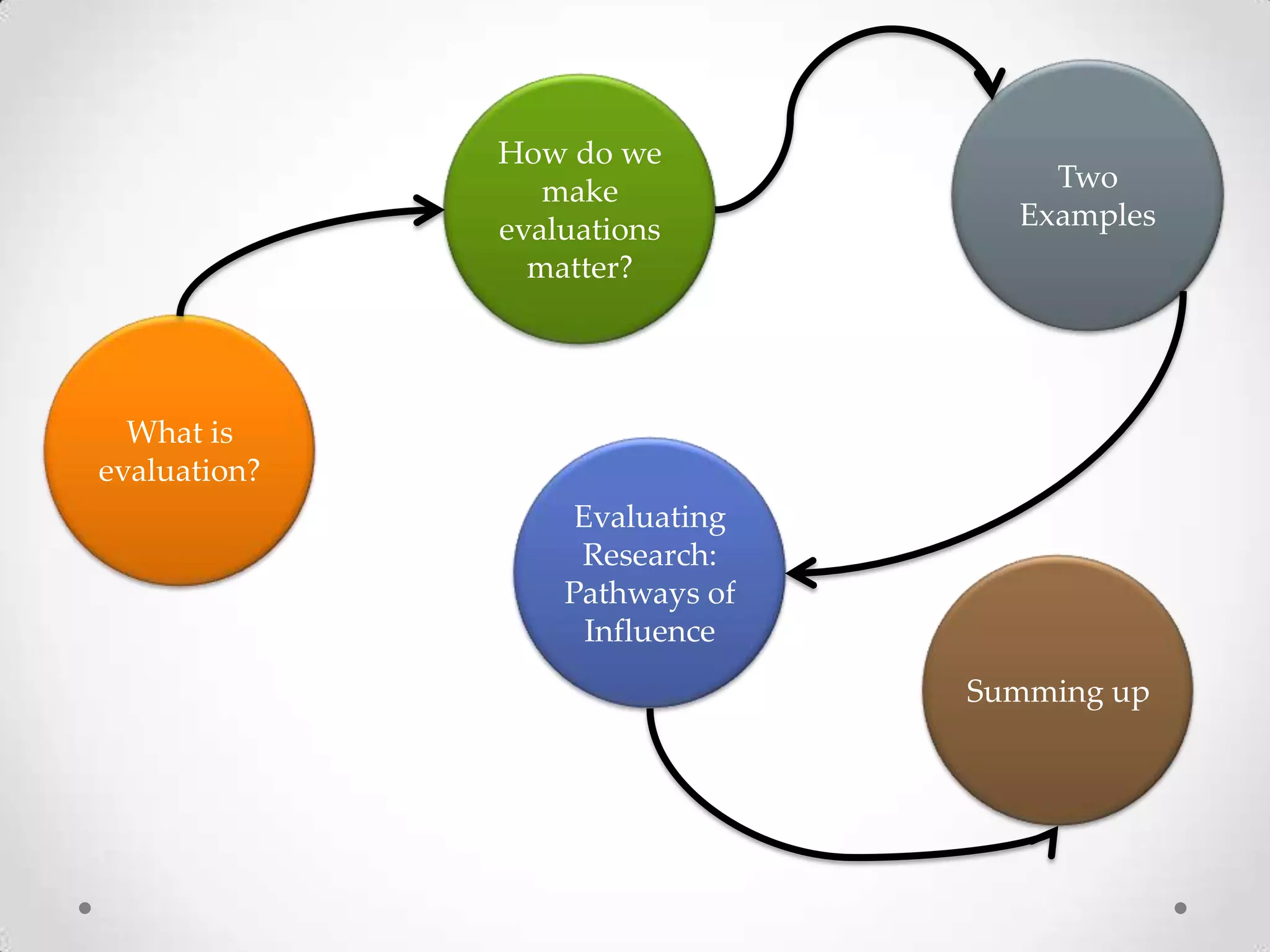

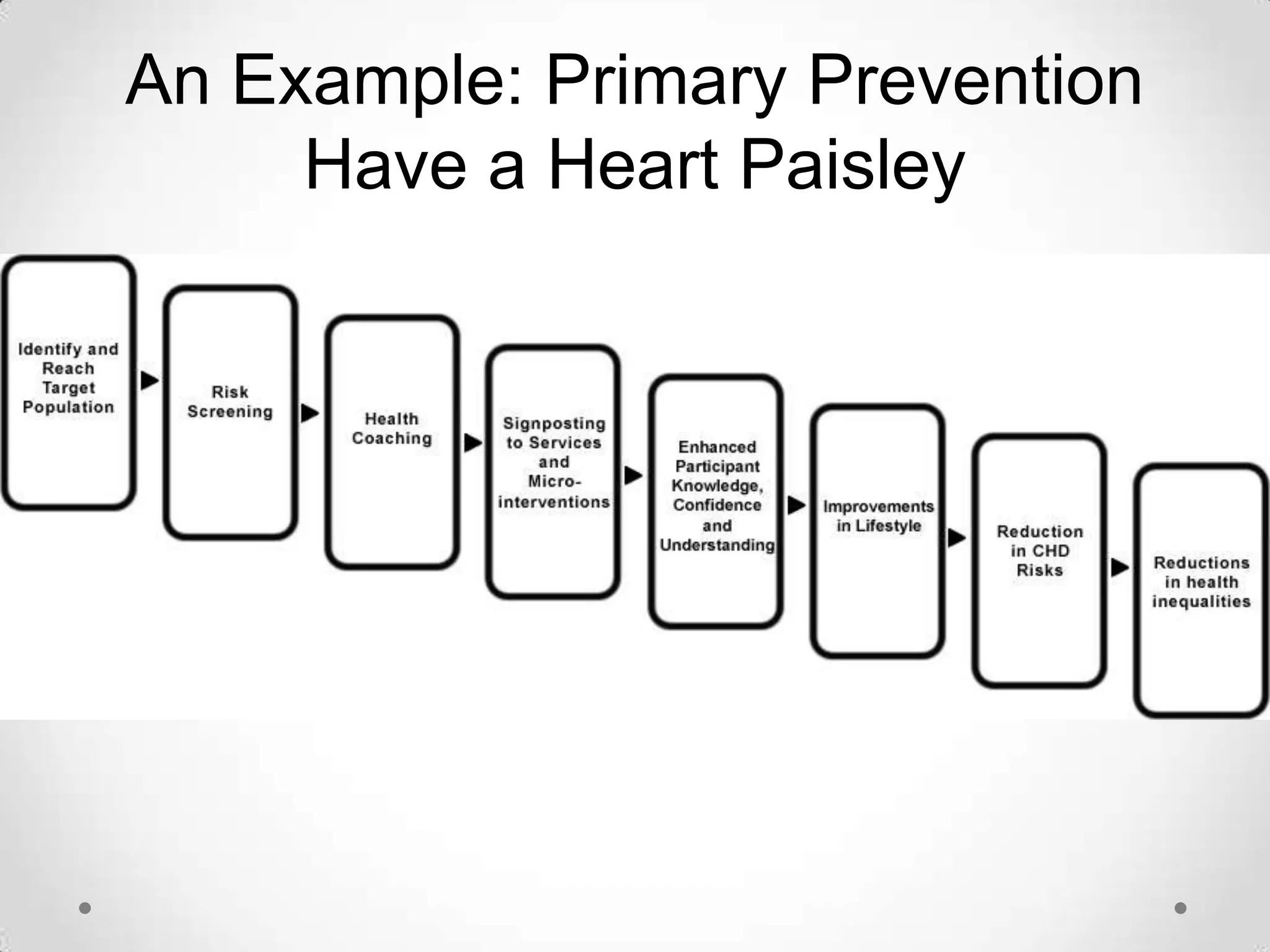

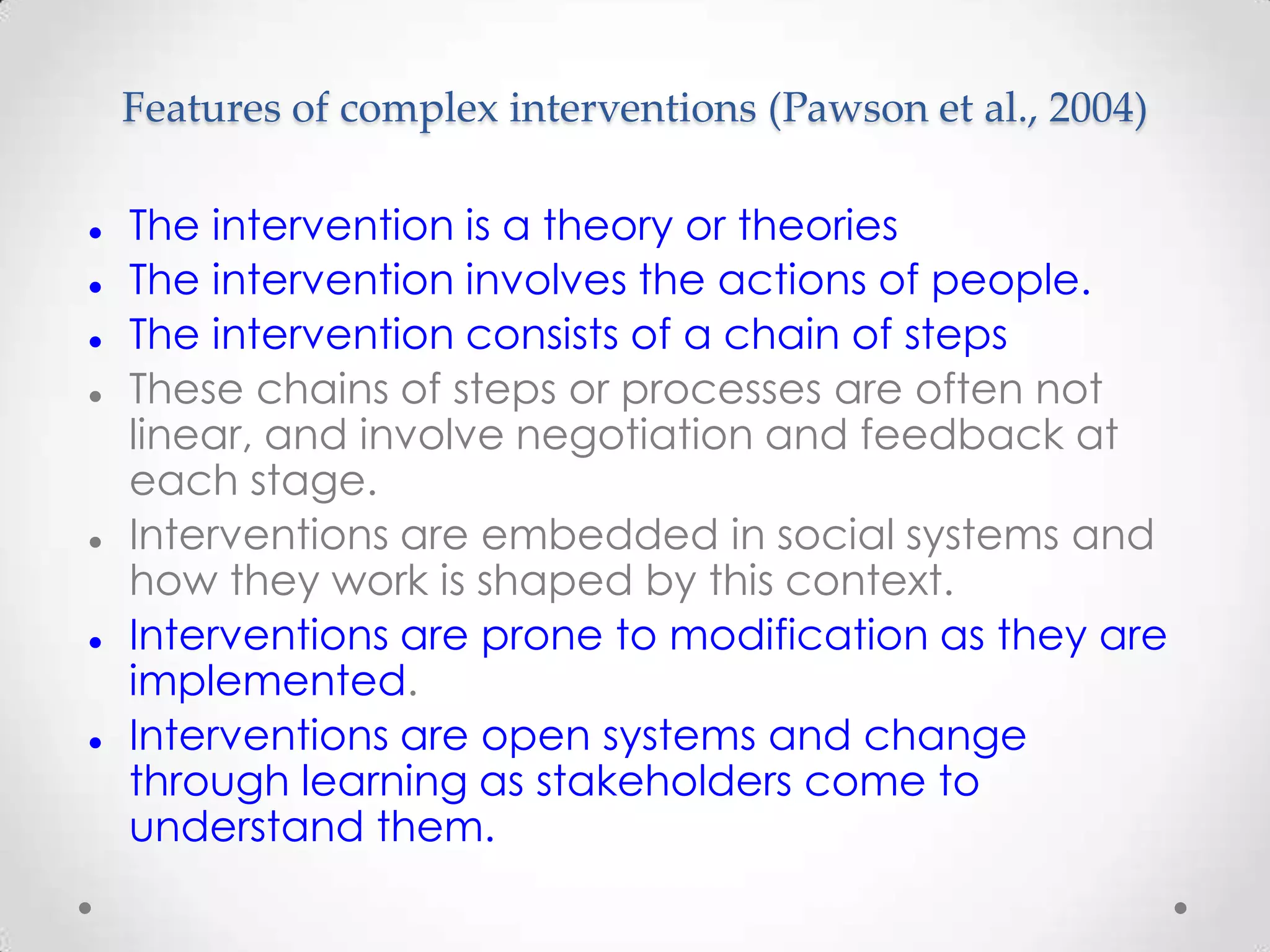

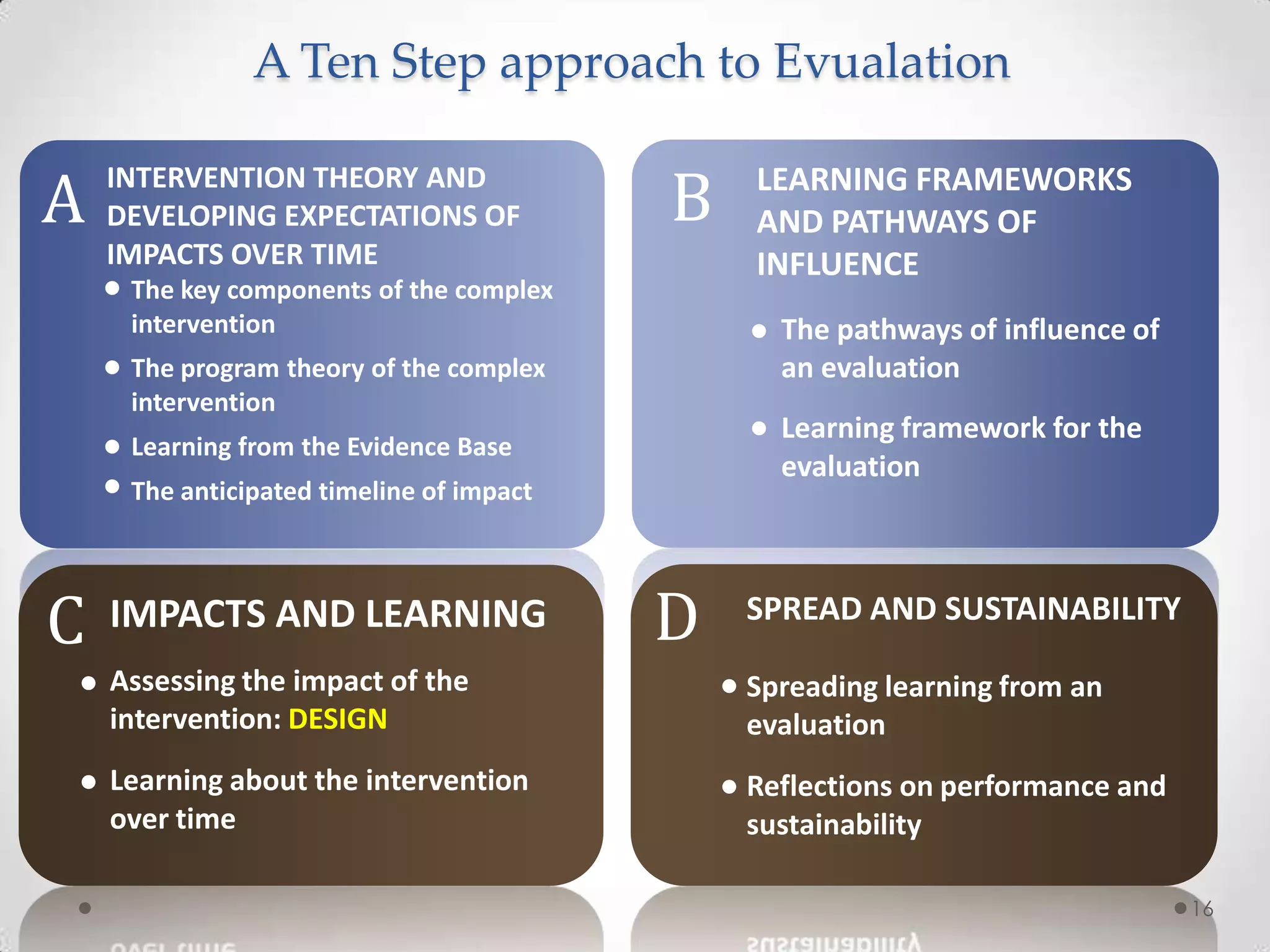

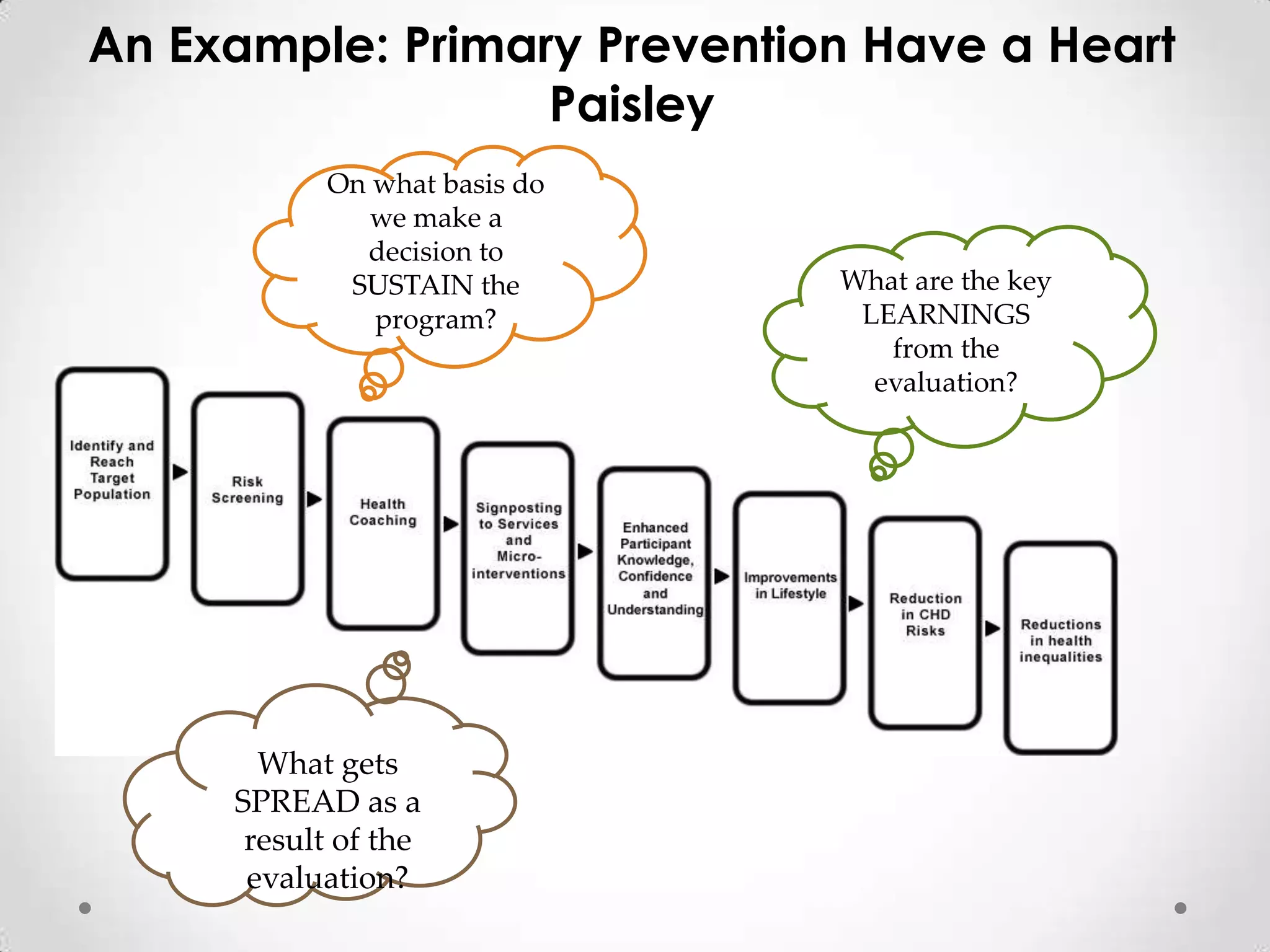

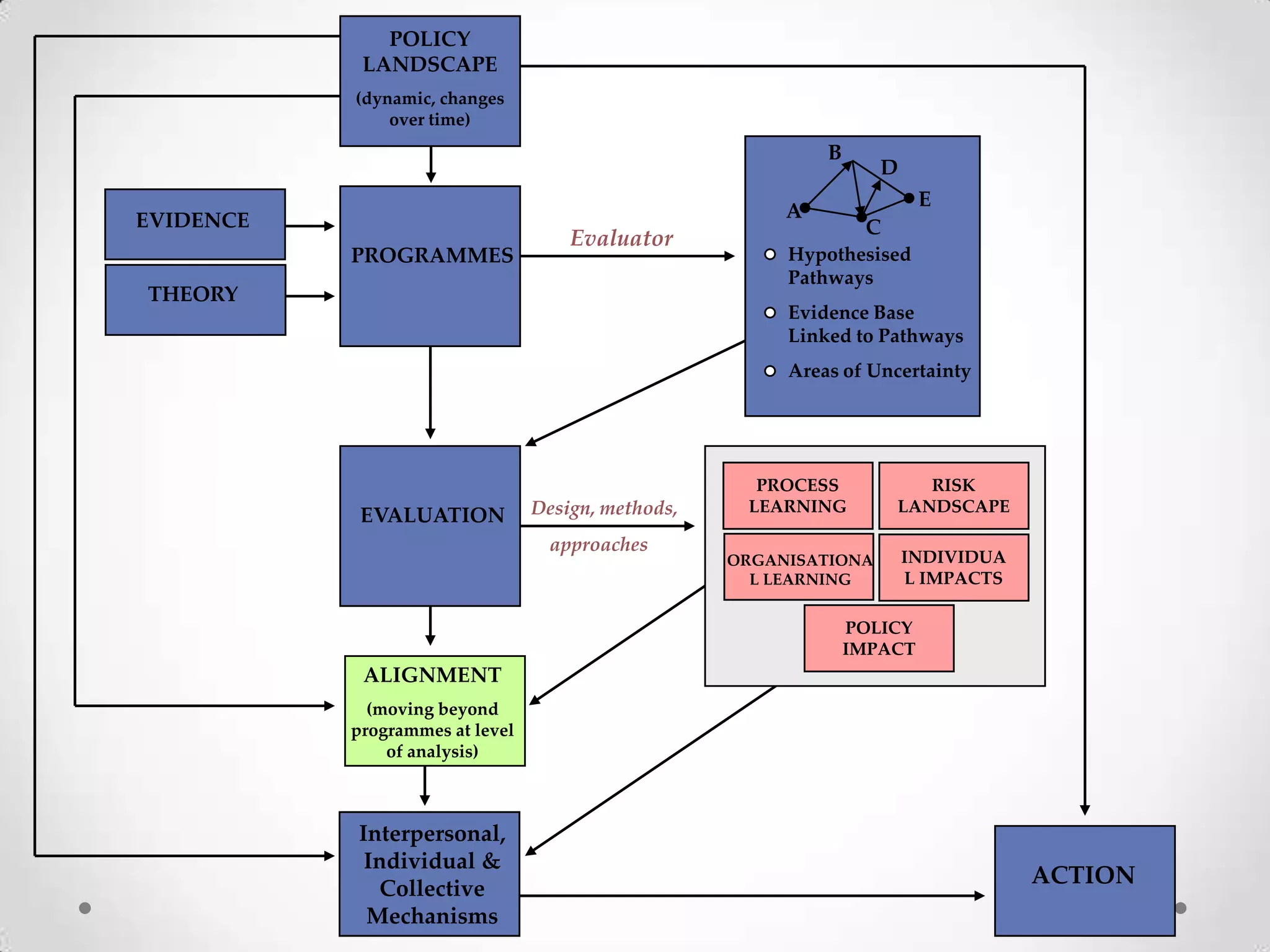

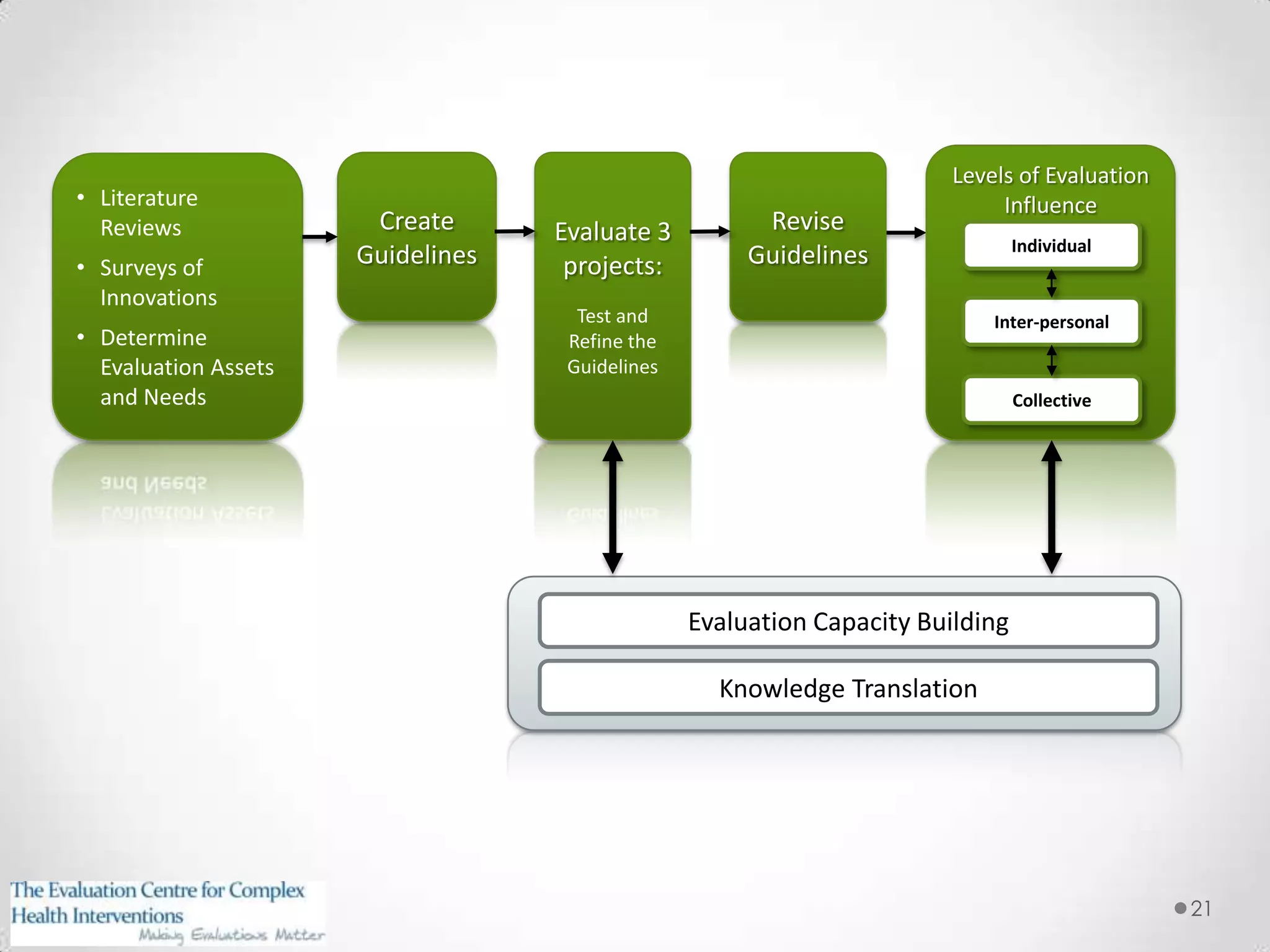

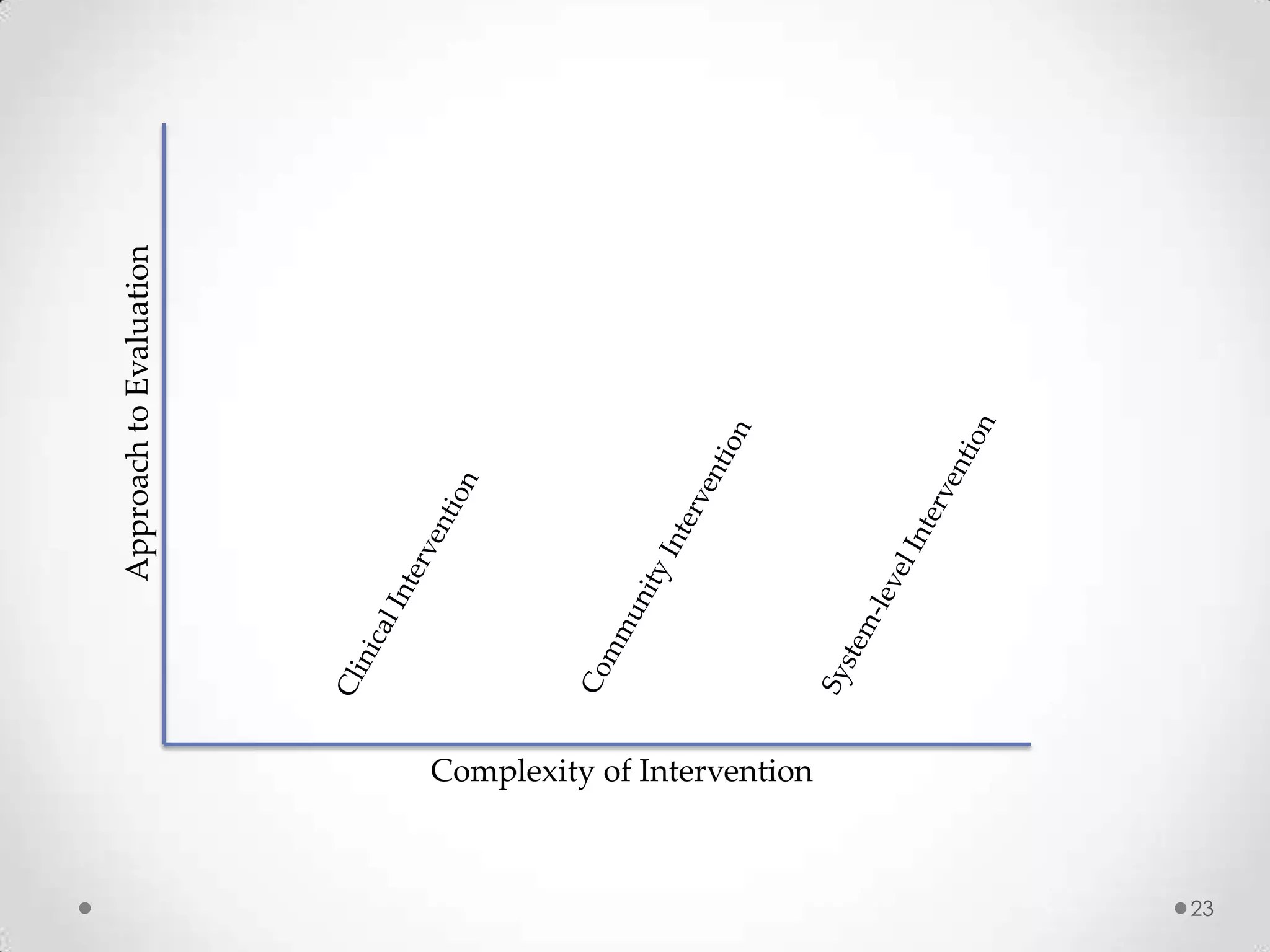

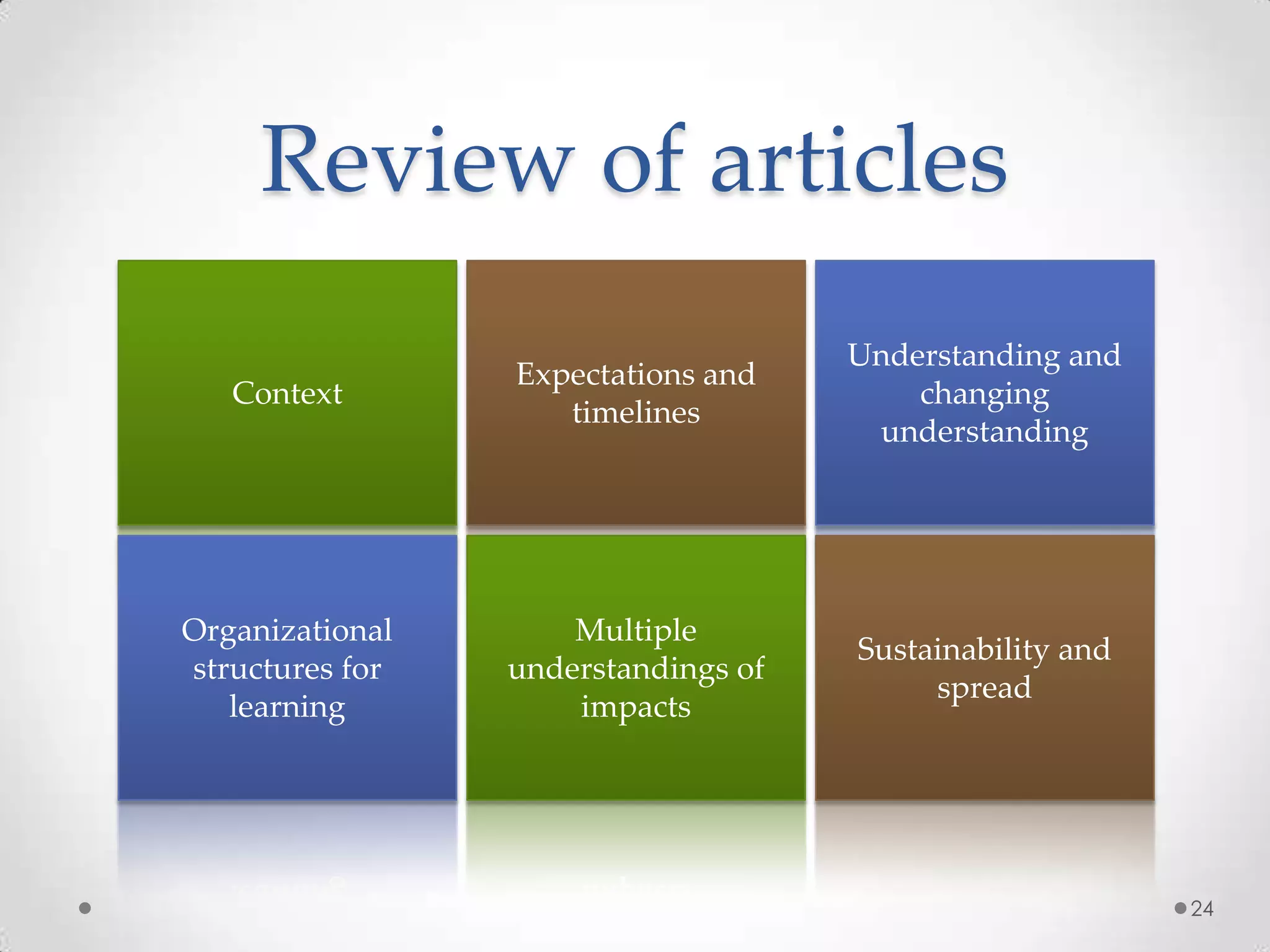

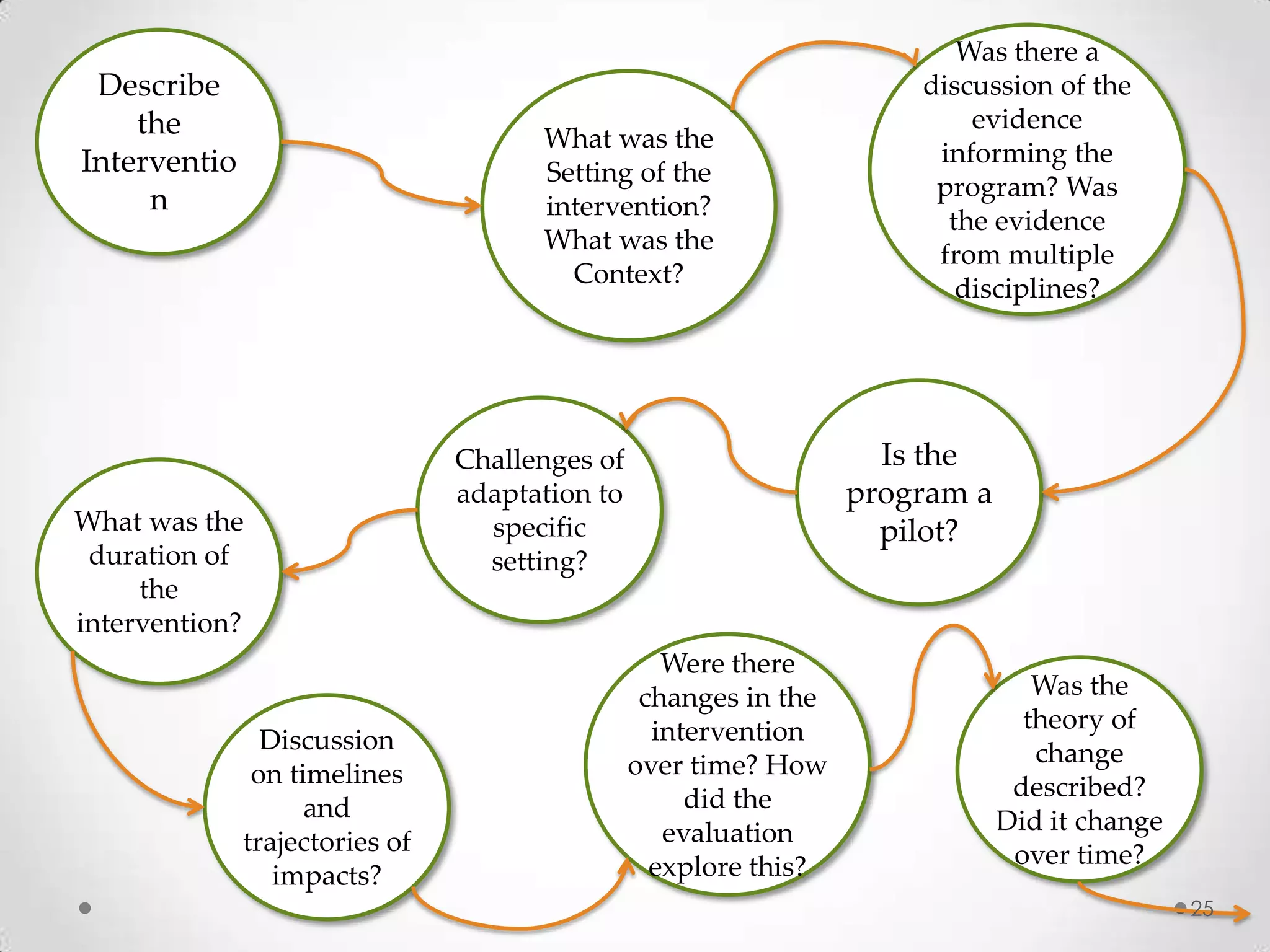

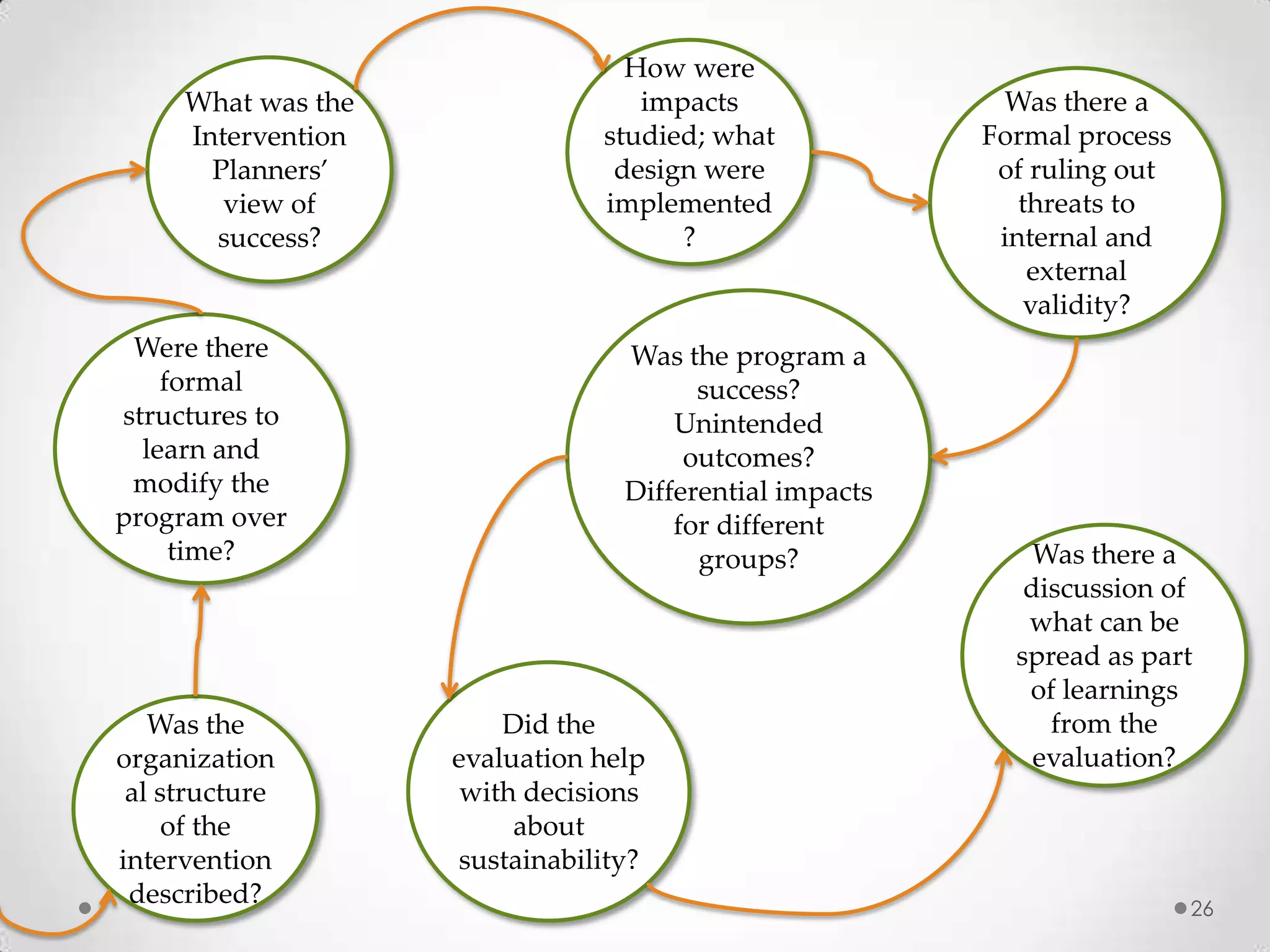

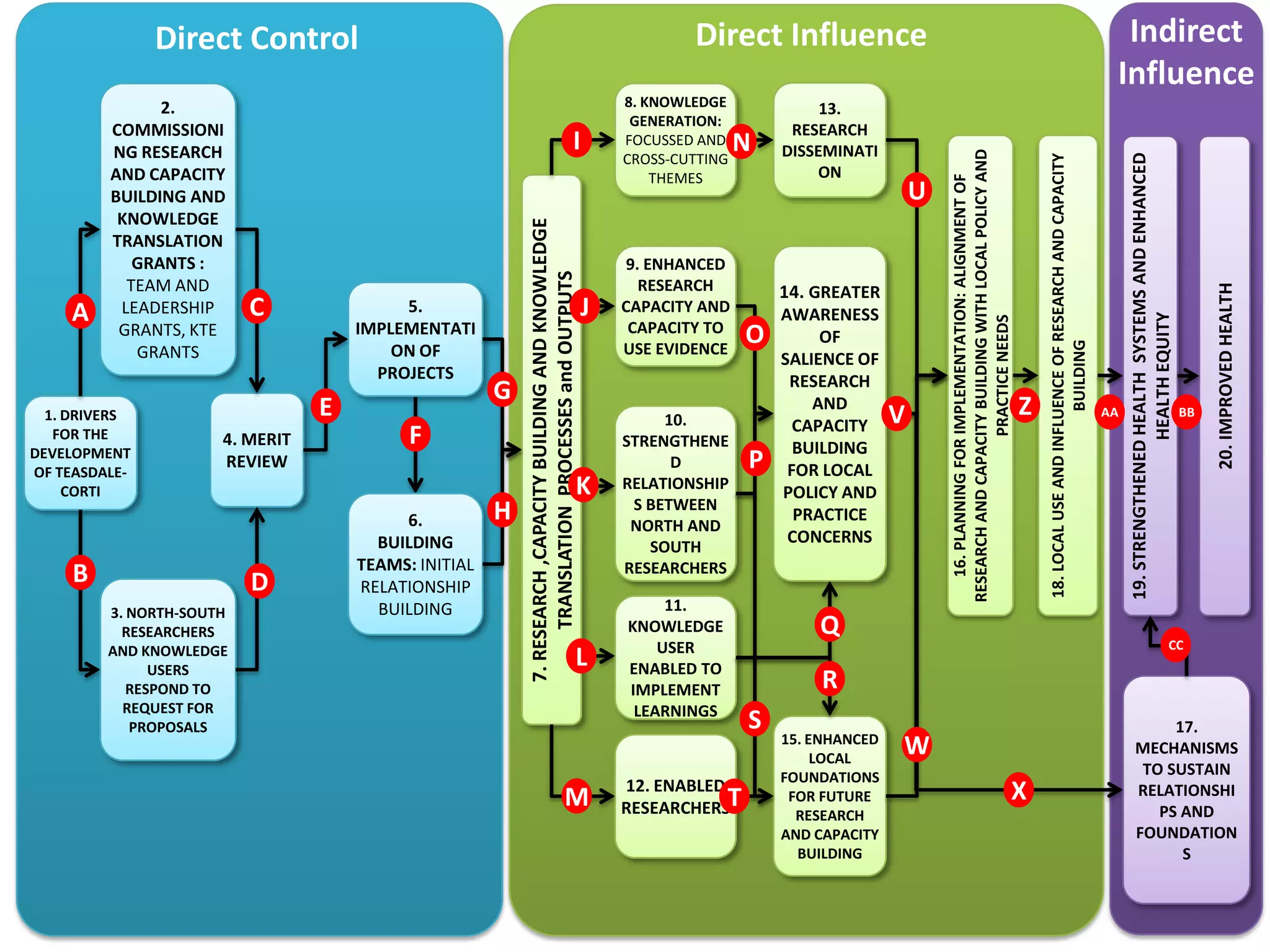

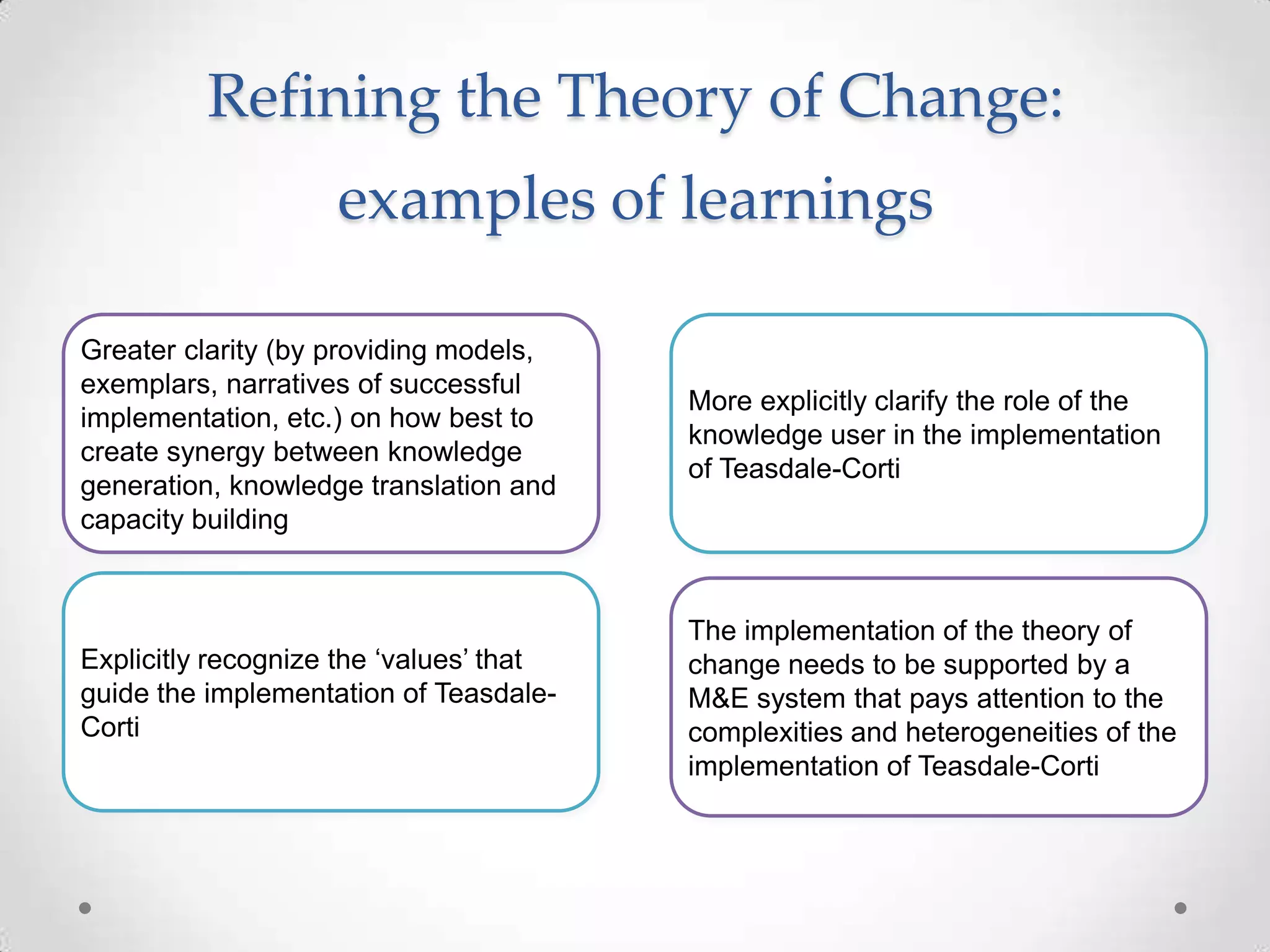

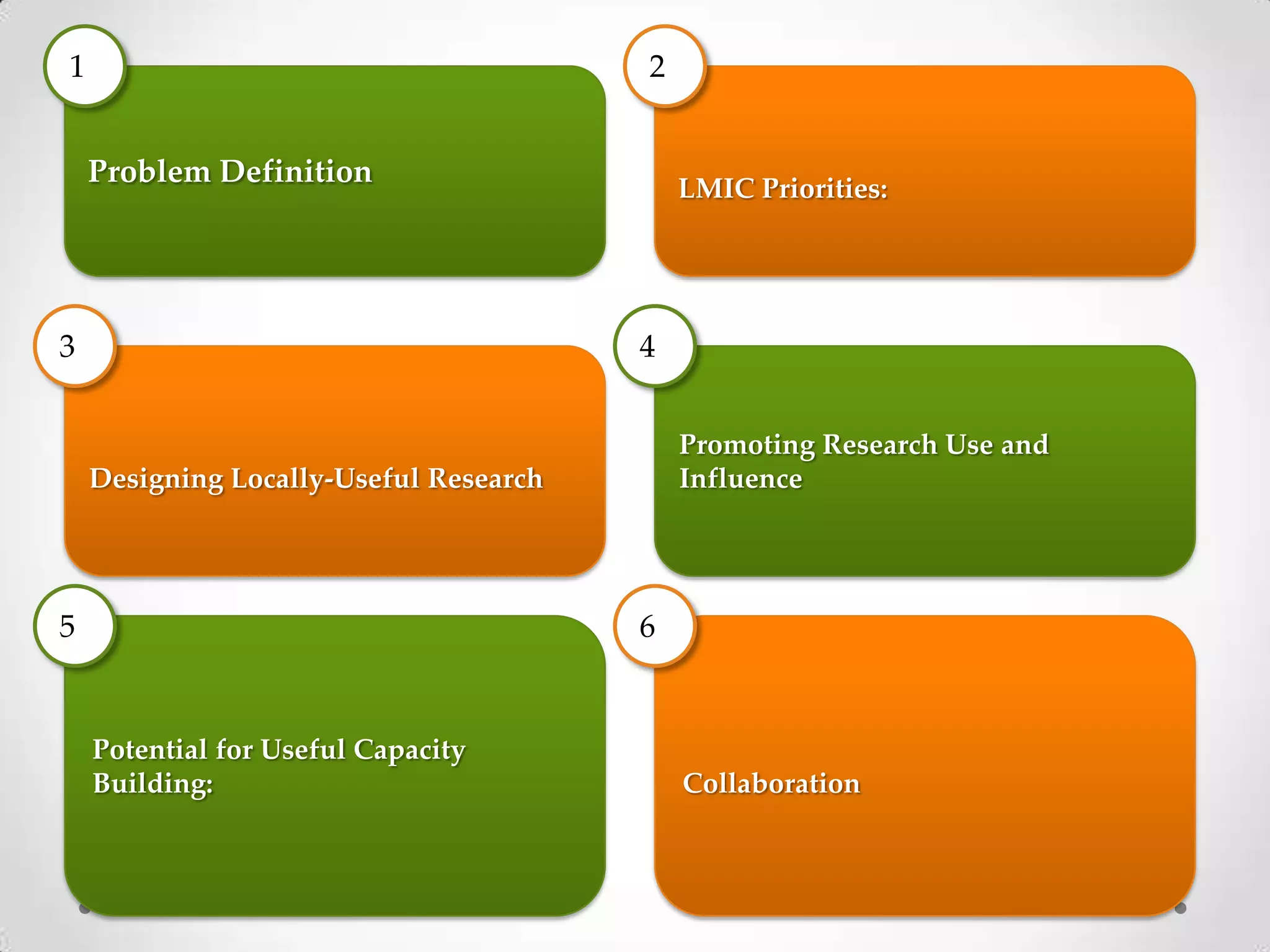

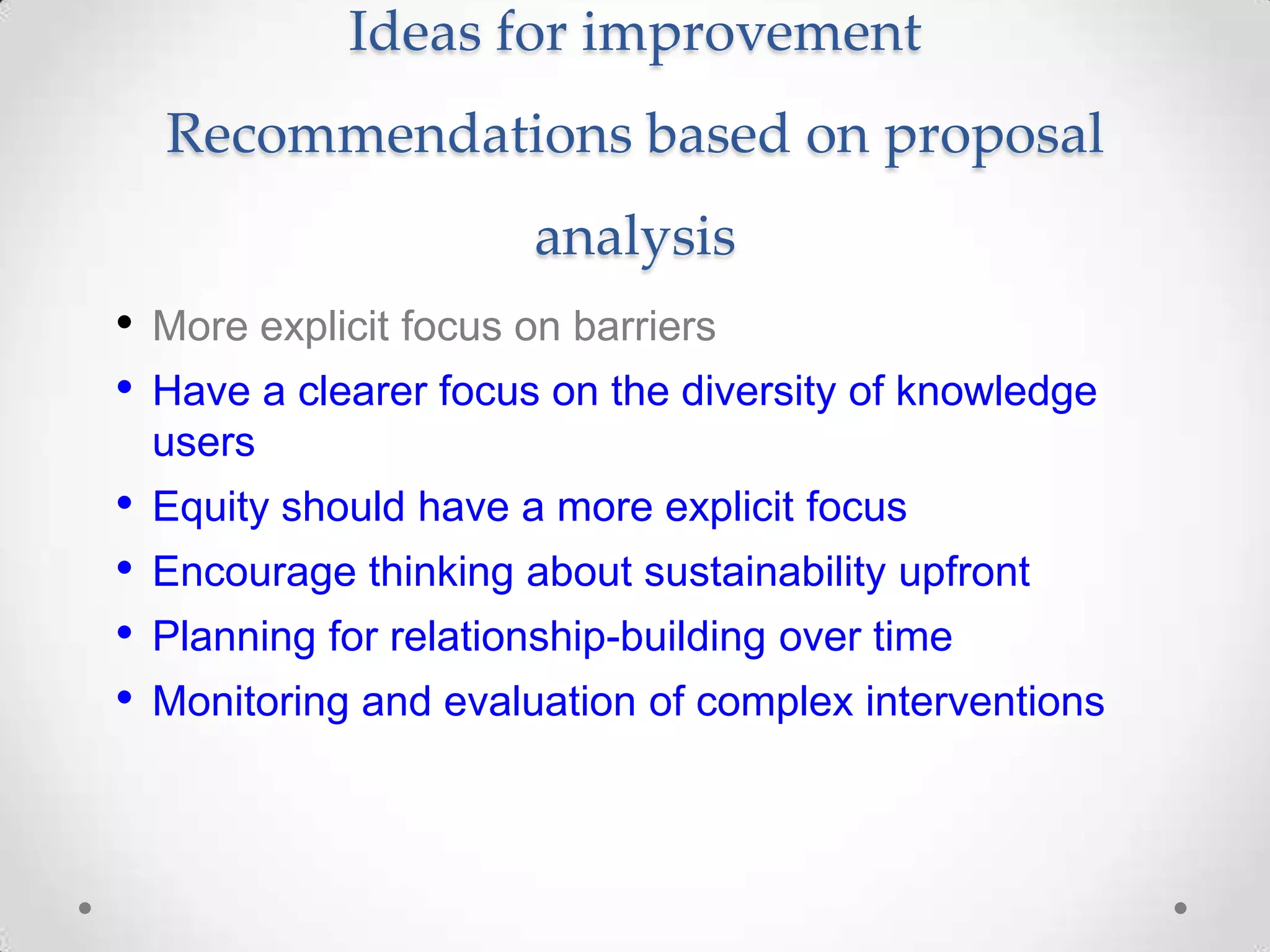

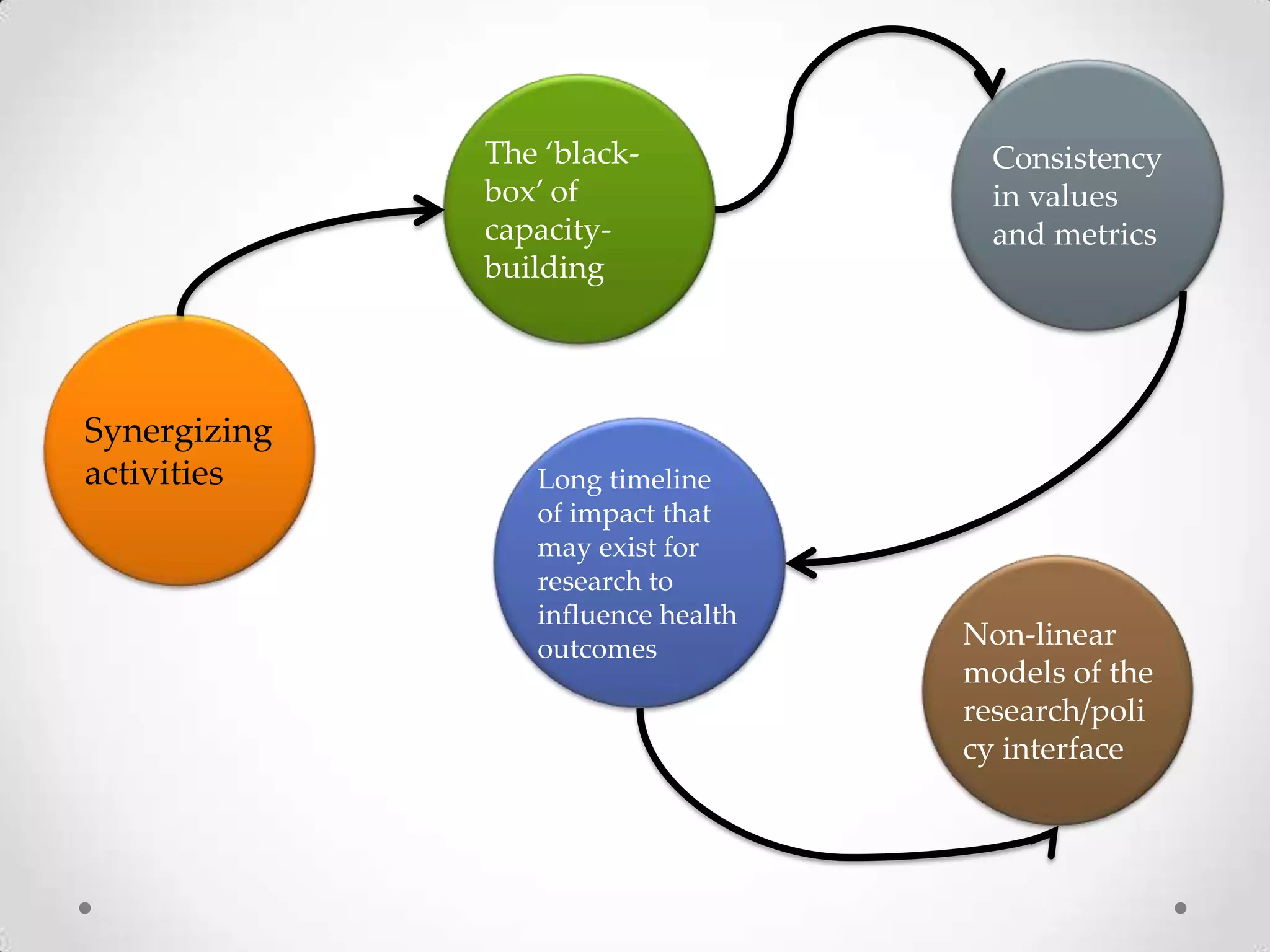

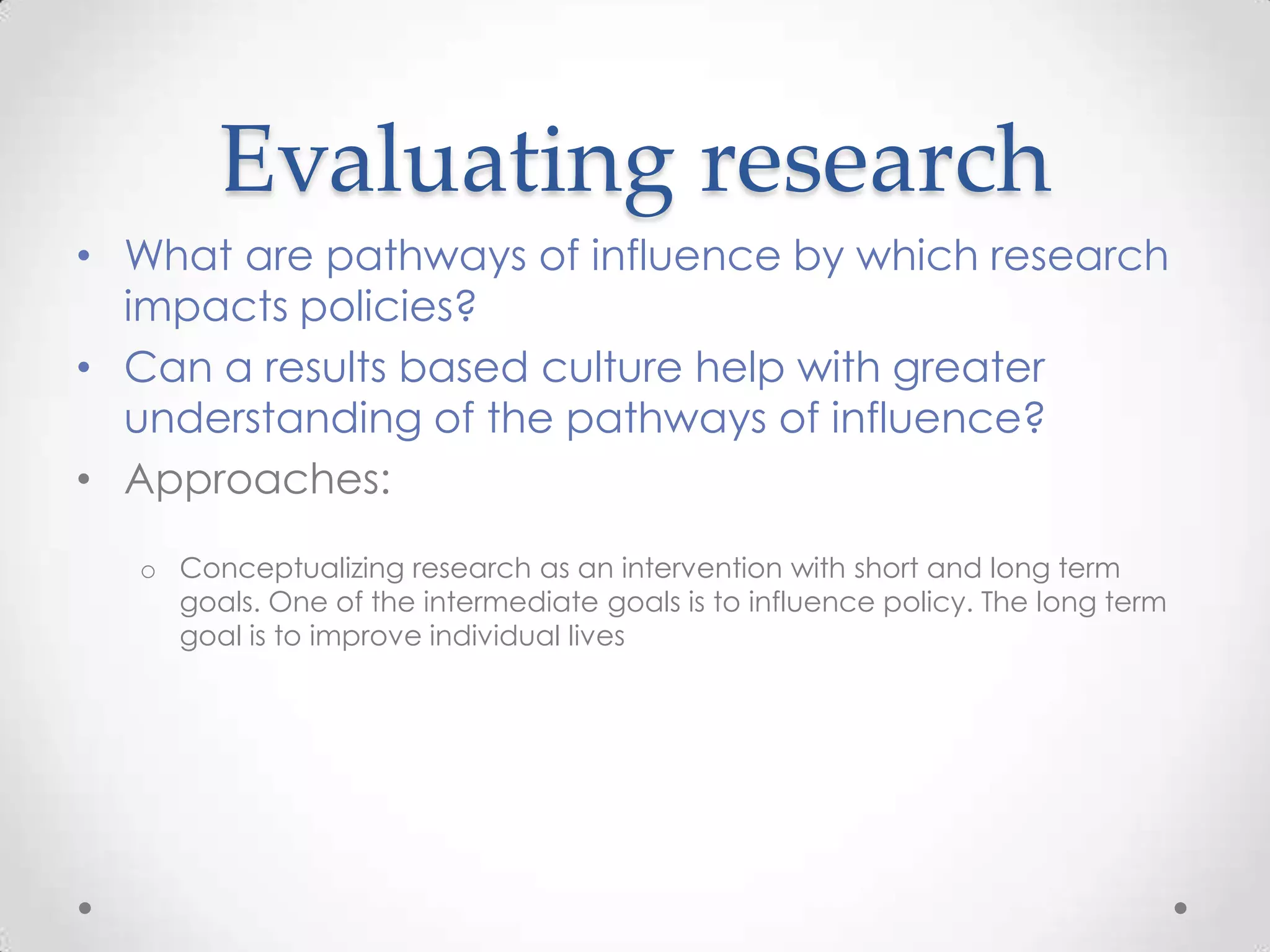

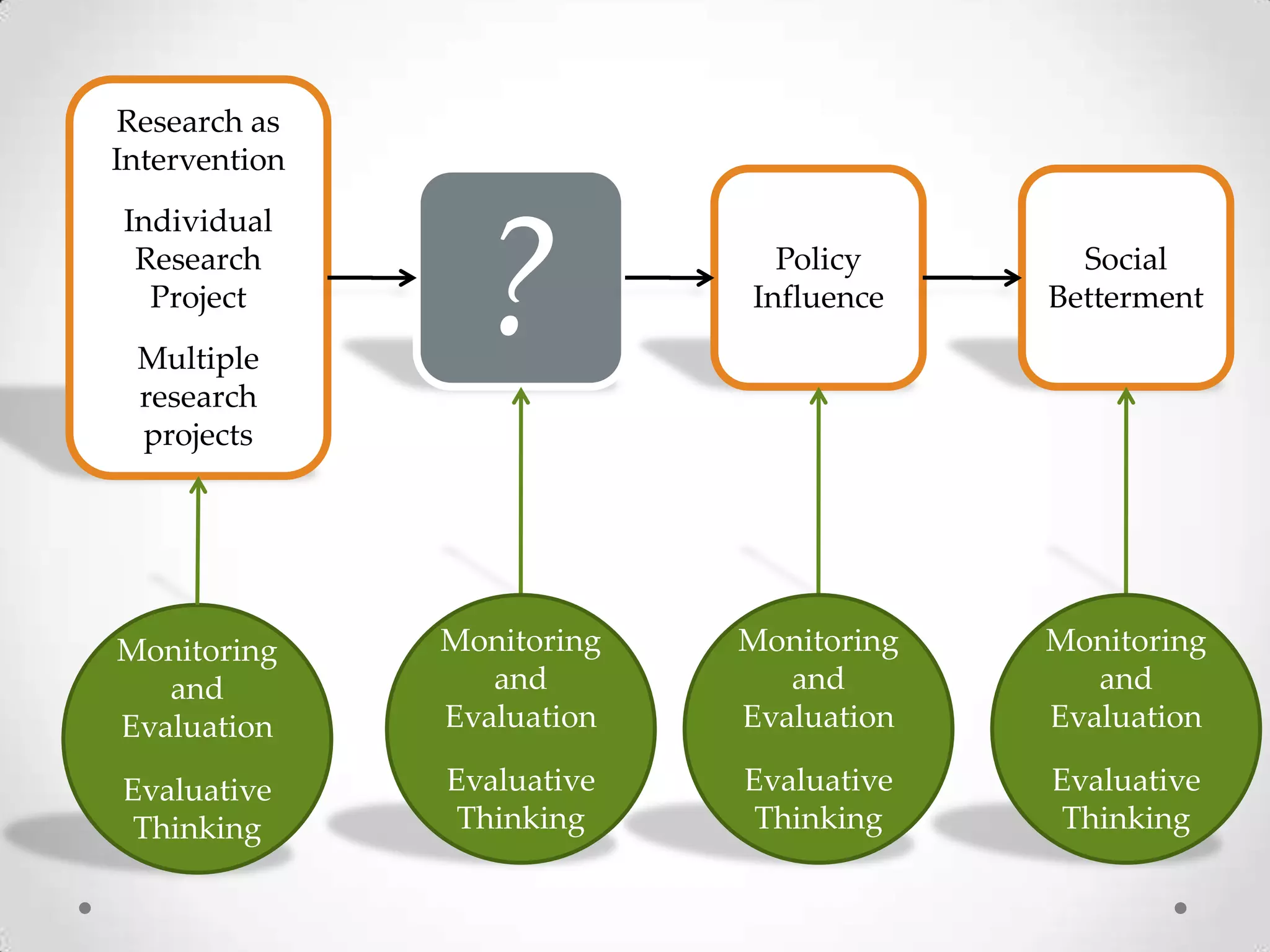

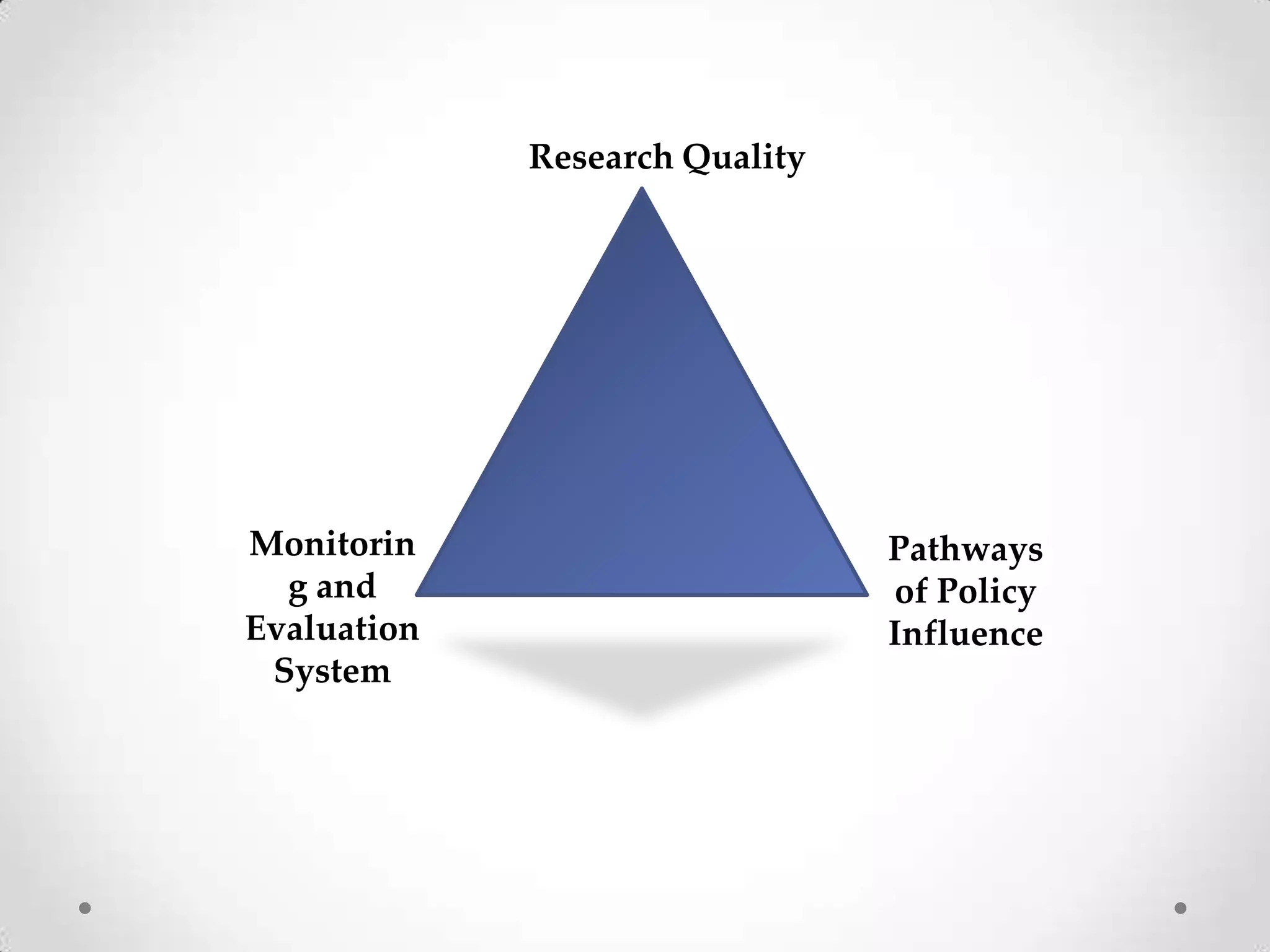

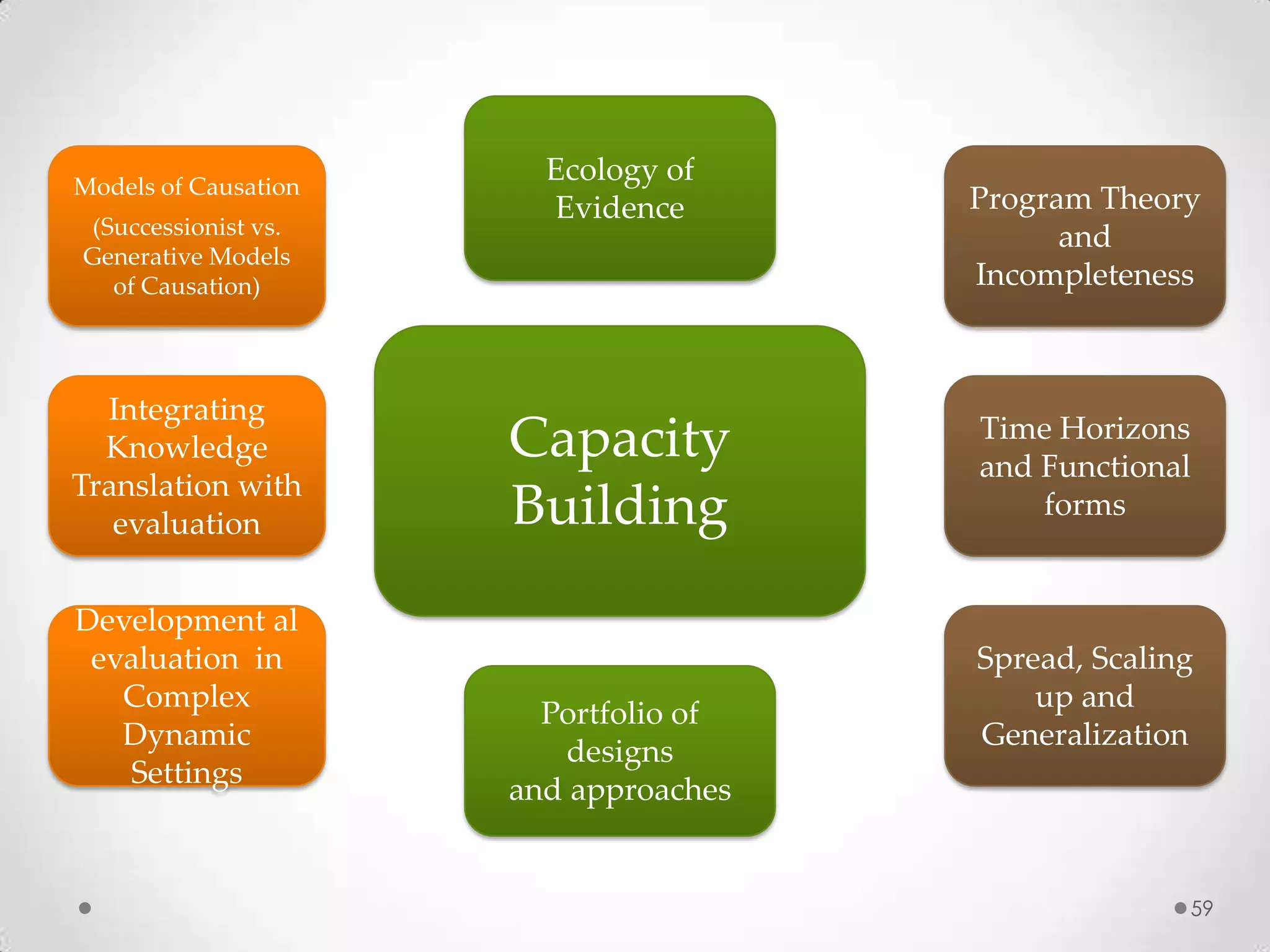

The document discusses transformative evaluation approaches for global health interventions, emphasizing the role of evaluative thinking in assessing program effectiveness. It critiques traditional evaluation methods, outlines the complexities of health interventions, and highlights the need for adaptive evaluation approaches that account for evolving contexts and stakeholder involvement. Key recommendations include focusing on sustainability, establishing clarity in partnerships, and aligning evaluation metrics with desired health outcomes.