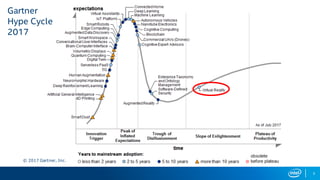

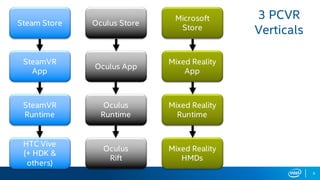

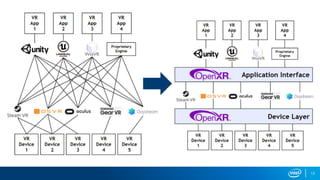

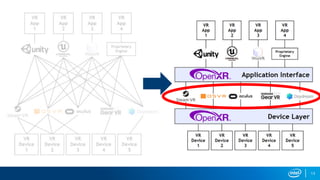

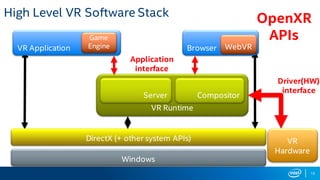

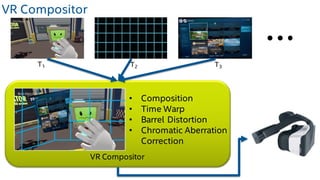

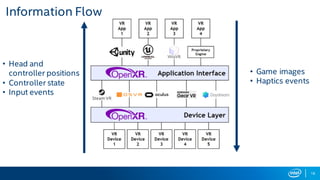

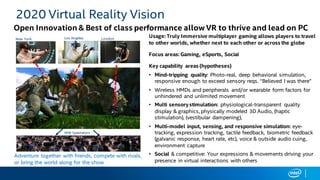

The document discusses the current state and future of virtual reality (VR) technologies, emphasizing three challenges: content availability, comfort, and cost. It outlines the main VR platforms, the software stack of VR runtimes, and the advances in user interfaces and sensory feedback that Intel envisions for immersive experiences. According to the document, the future of VR gaming will rely on innovations in multi-sensory stimulation, cross-platform capabilities, and enhanced user interaction to create more engaging experiences.