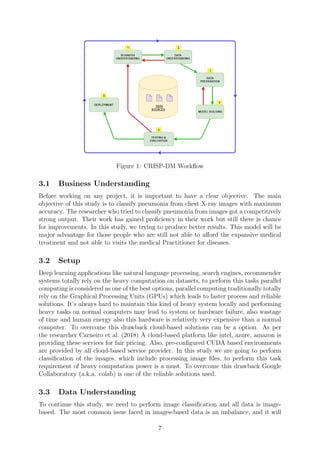

This document outlines a research project by Tushar Shailesh Dalvi on classifying pneumonia from chest X-rays using transfer learning, specifically a customized VGG19 model. The study stresses the importance of early pneumonia detection, discusses the challenges of identifying the disease, and applies data augmentation to address class imbalance in medical datasets. The model achieved a recall of 98% and a precision of 82%, which the study presents as evidence of the custom model's effectiveness relative to existing methods.
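
To make the reported metrics concrete, here is a minimal Python sketch of how precision, recall, and F1 are computed from confusion-matrix counts. The function name `precision_recall_f1` and the counts below are hypothetical. The counts were chosen only to land near the reported 98% recall and 82% precision and are not taken from the study.

```python
def precision_recall_f1(tp, fp, fn):
    """Return (precision, recall, f1) from confusion-matrix counts.

    tp: pneumonia cases correctly flagged
    fp: normal cases wrongly flagged as pneumonia
    fn: pneumonia cases the model missed
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Hypothetical counts (an assumption for illustration, not the study's data).
precision, recall, f1 = precision_recall_f1(tp=98, fp=22, fn=2)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

High recall paired with lower precision means the classifier misses few pneumonia cases but raises more false alarms. That trade-off is often considered acceptable in screening, where a missed case costs more than a follow-up check.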