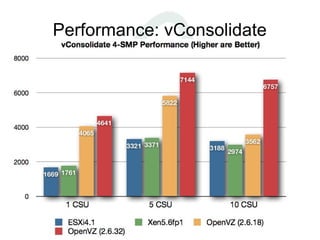

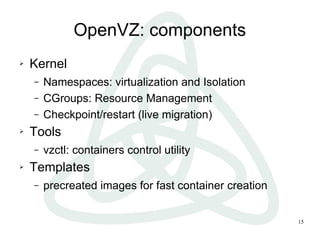

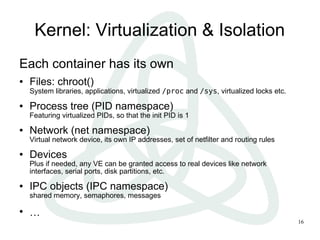

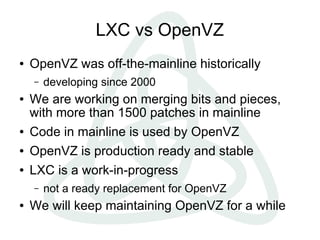

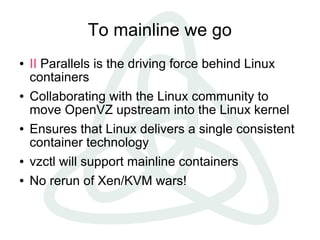

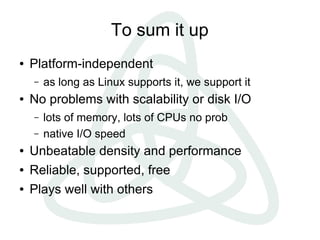

This document gives an overview of OpenVZ and Linux container virtualization and compares containers with hypervisors. It covers the main virtualization techniques, the OpenVZ architecture and its components, and the kernel features used for resource management and migration. It also highlights the advantages of container-based virtualization in performance, scalability, and density, and mentions ongoing efforts to integrate OpenVZ into the Linux kernel.

![Comparison

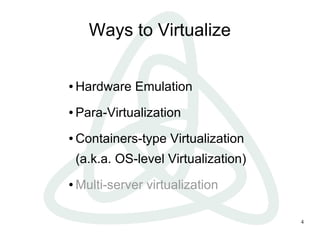

Hypervisor (VM) Containers (CT)

One real HW, many virtual One real HW (no virtual

HWs, many OSs HW), one kernel, many

High versatility – can run userspace instances

different OSs Higher density,

Lower density, natural page sharing

performance, scalability Dynamic resource

«Lowers» are mitigated by allocation

new hardware features

(such as VT-D)

Native performance:

[almost] no overhead](https://image.slidesharecdn.com/2012-04-openstack-openvz-linux-containers-120416140246-phpapp01/85/OpenVZ-Linux-Containers-7-320.jpg)