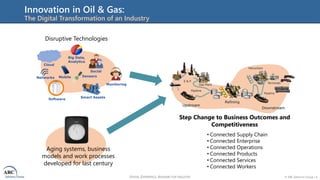

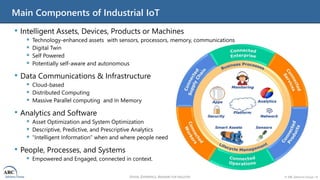

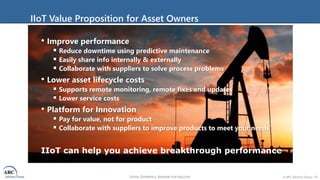

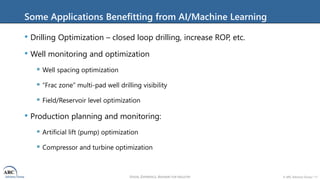

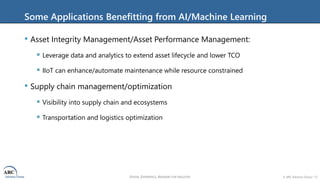

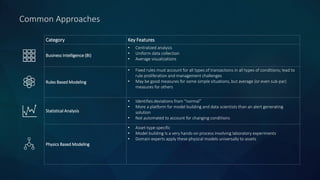

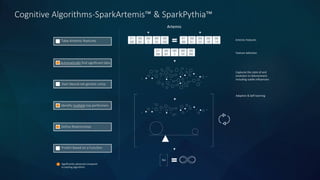

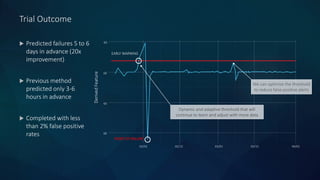

The document discusses the importance of predictive technologies and cognitive analytics in the oil and gas industry, arguing that low oil prices and disruptions make innovation necessary. It outlines how the Industrial Internet of Things (IIoT) can improve operational efficiency and reduce costs through predictive maintenance and data analytics. It also presents a case study on Flowserve, in which predictive analytics was applied to pump failure monitoring, with significant reported improvements in advance warning and accuracy.