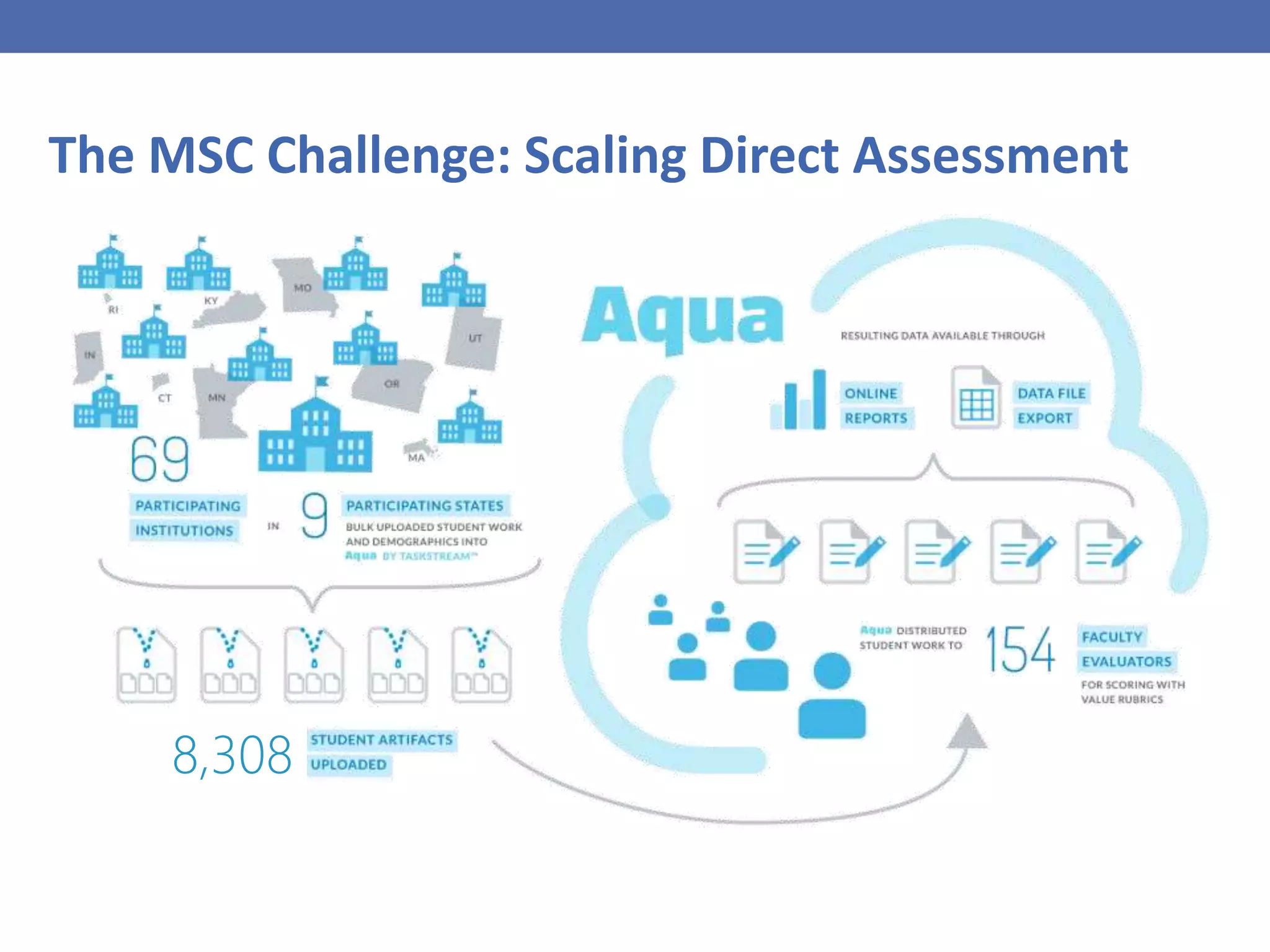

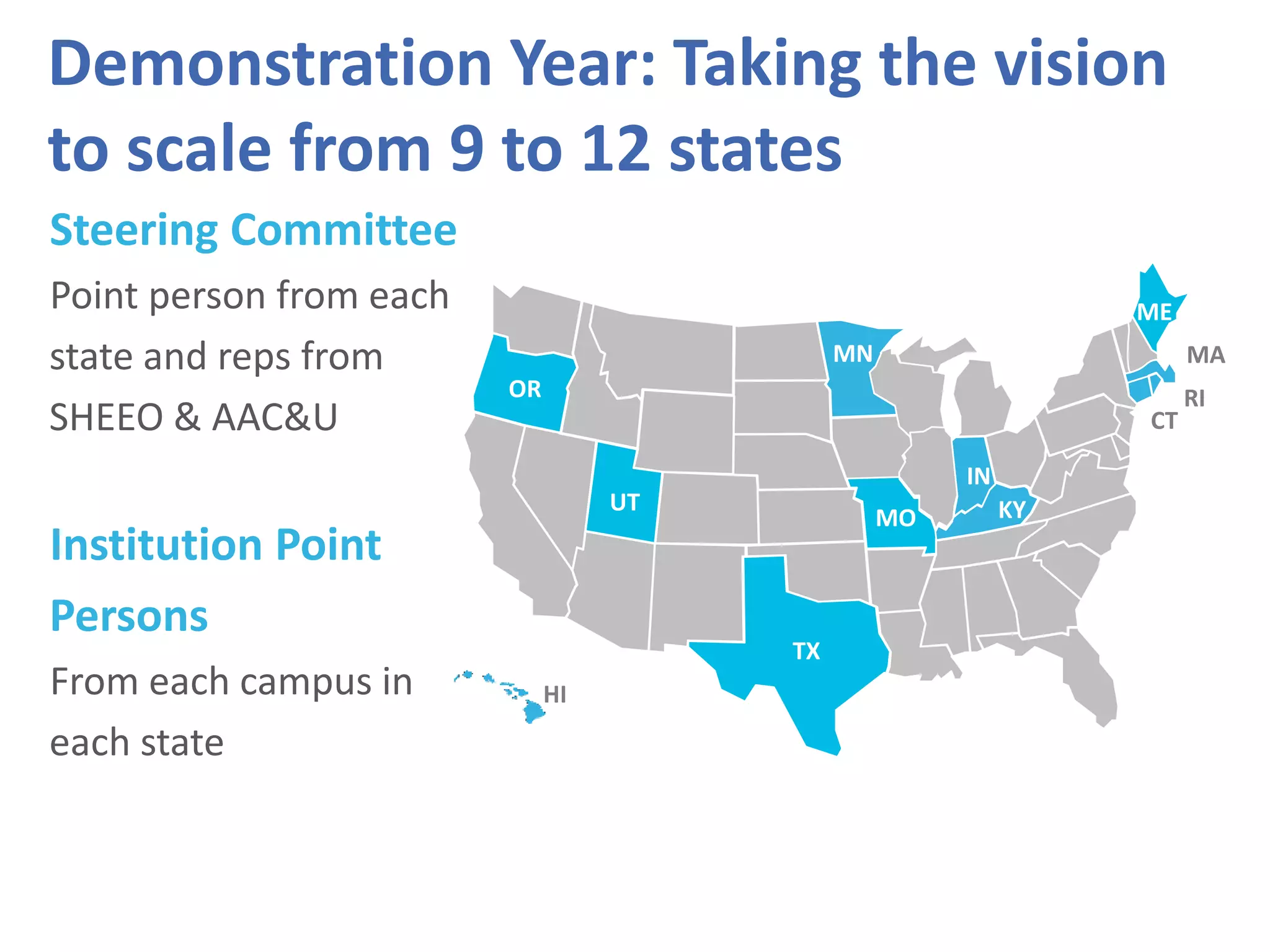

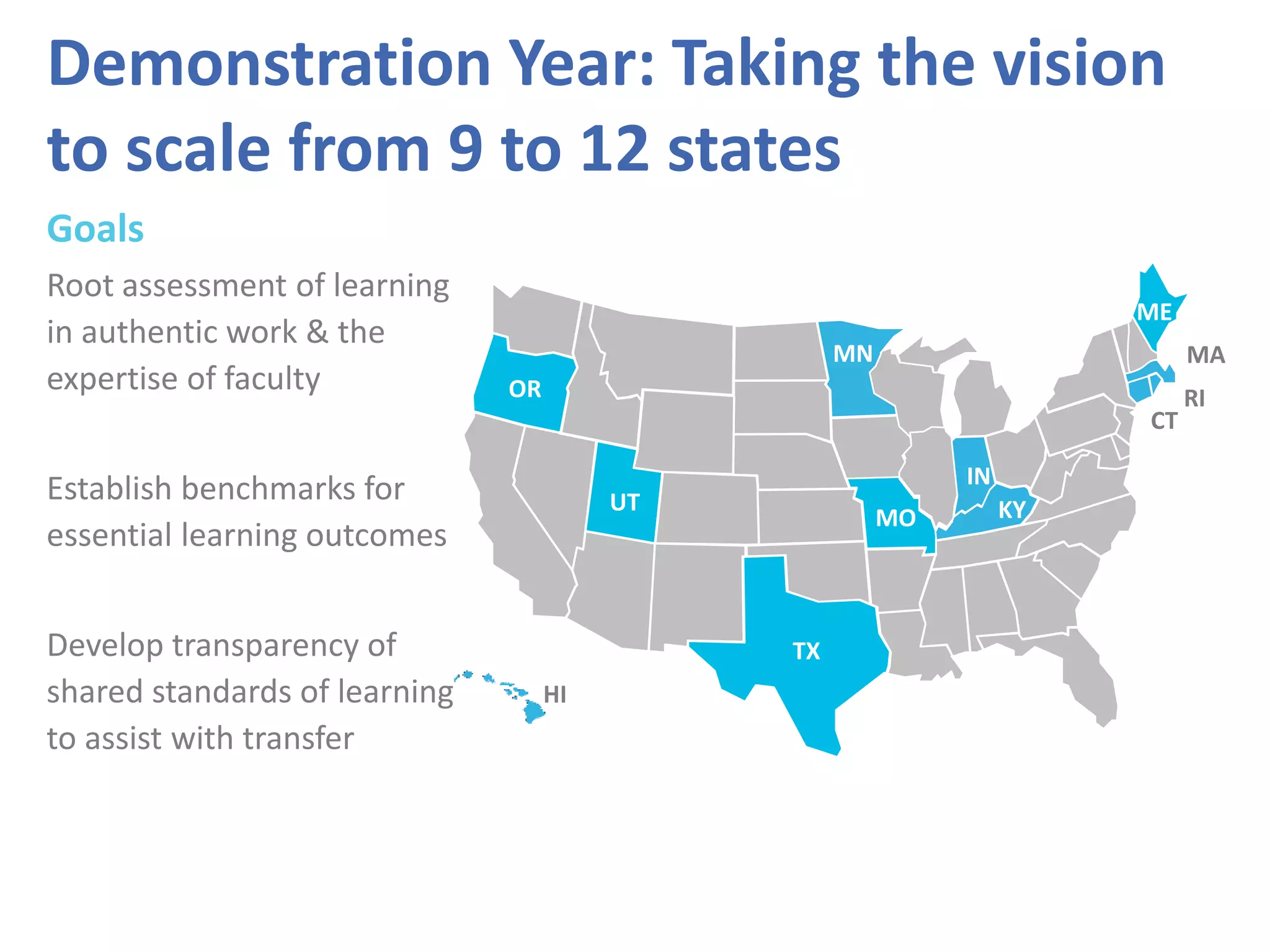

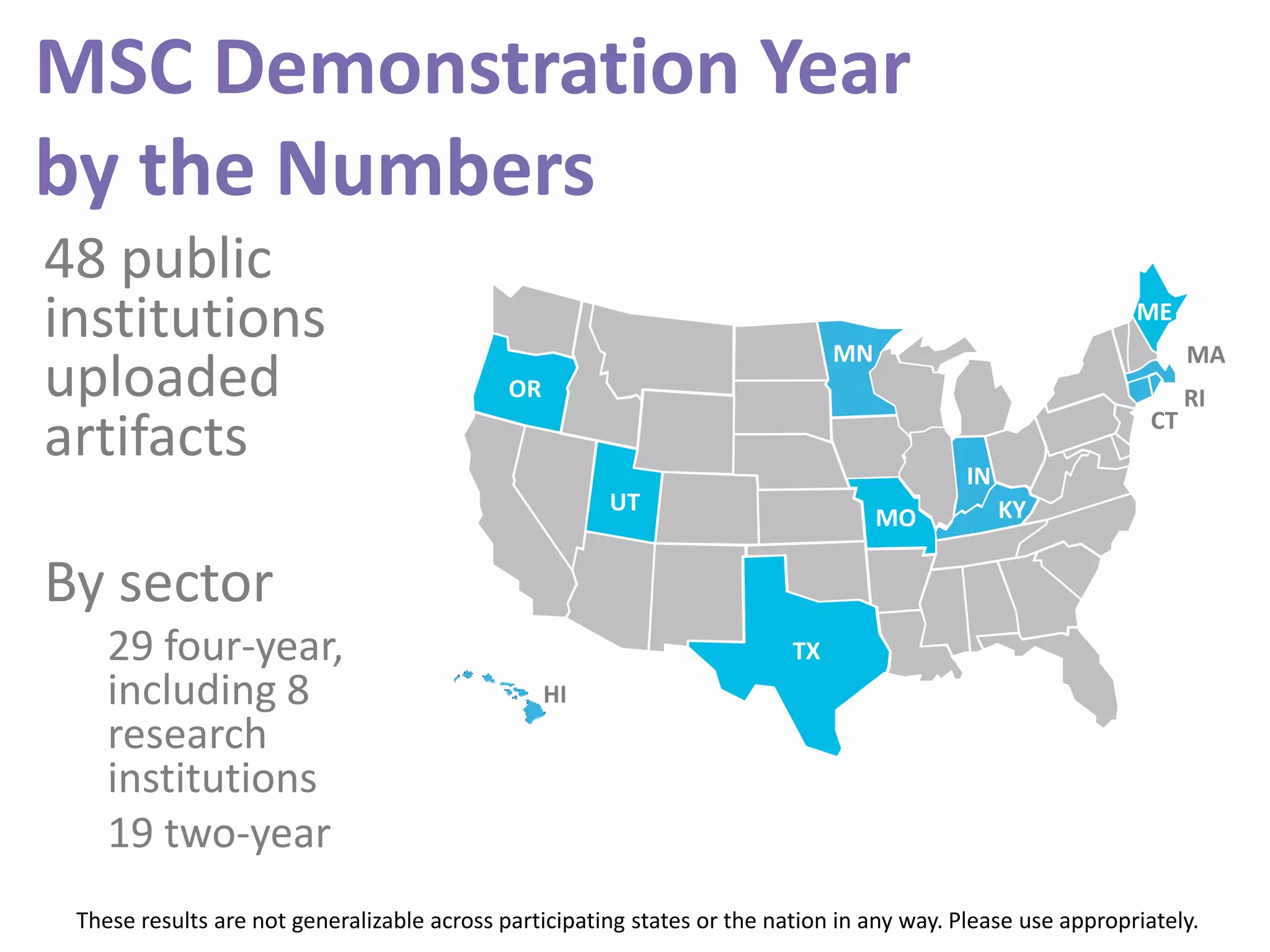

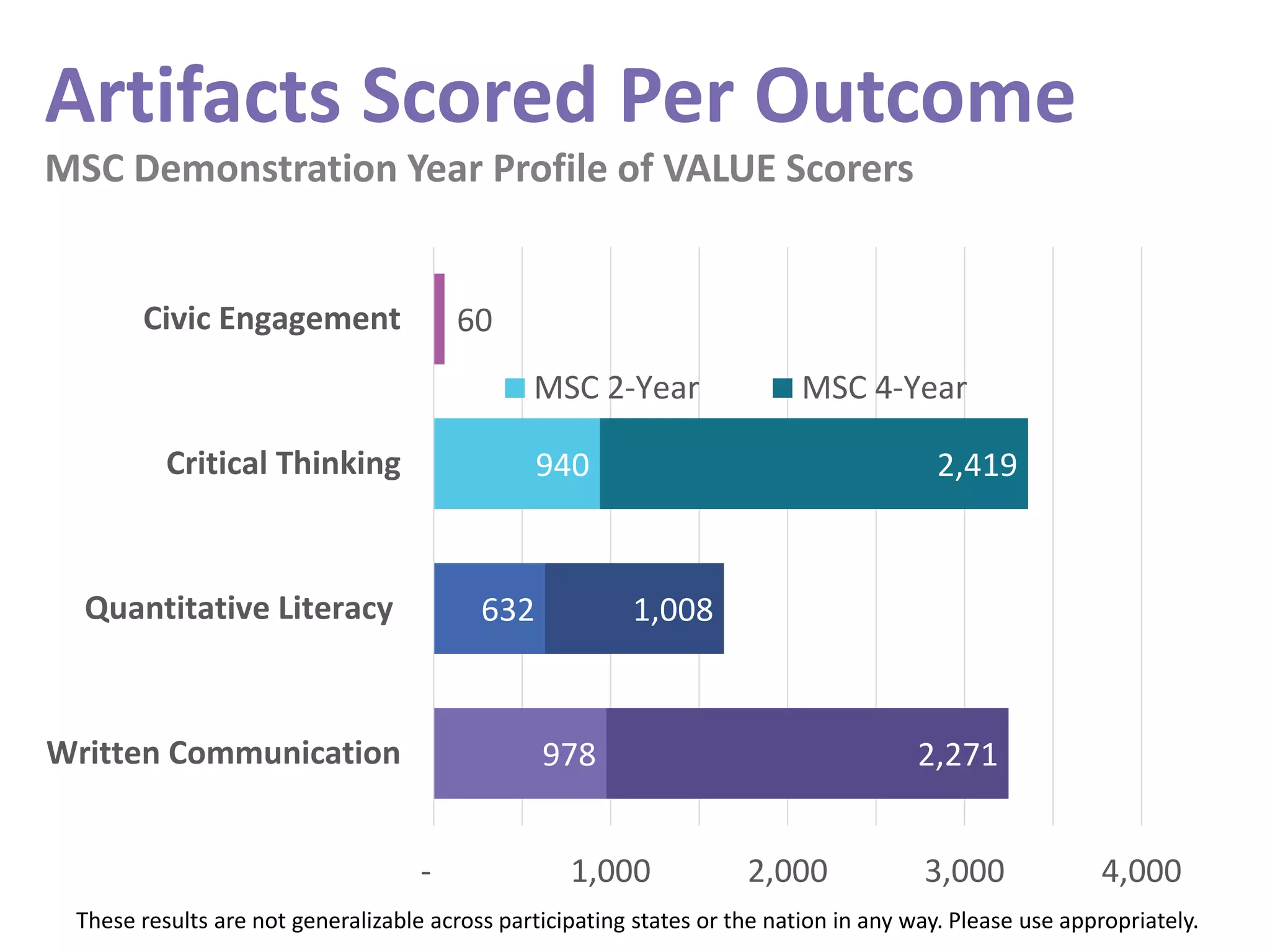

This document summarizes the results of a demonstration study in which 48 institutions across 12 states used common rubrics to assess more than 8,000 student work products. Key findings include:

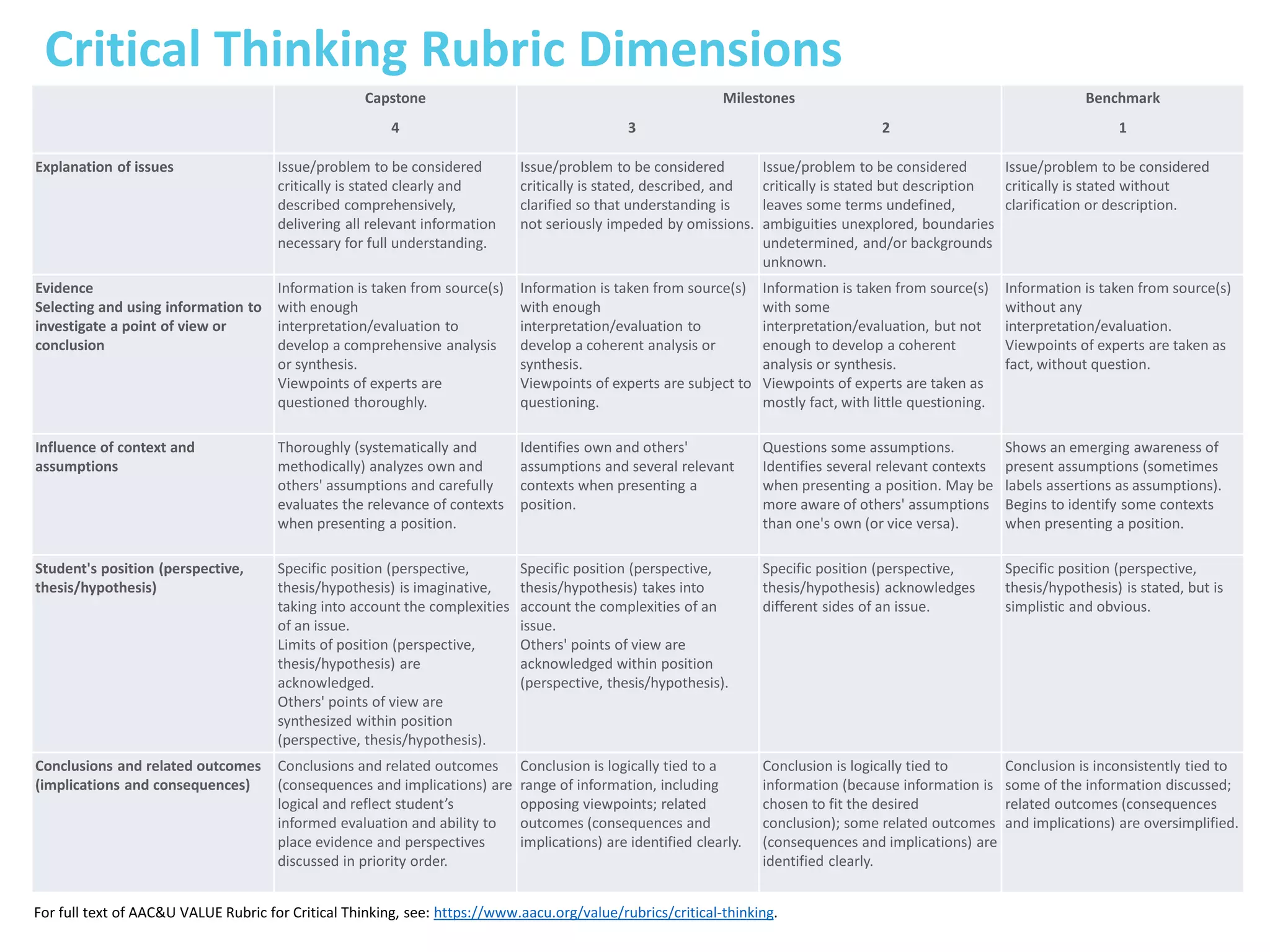

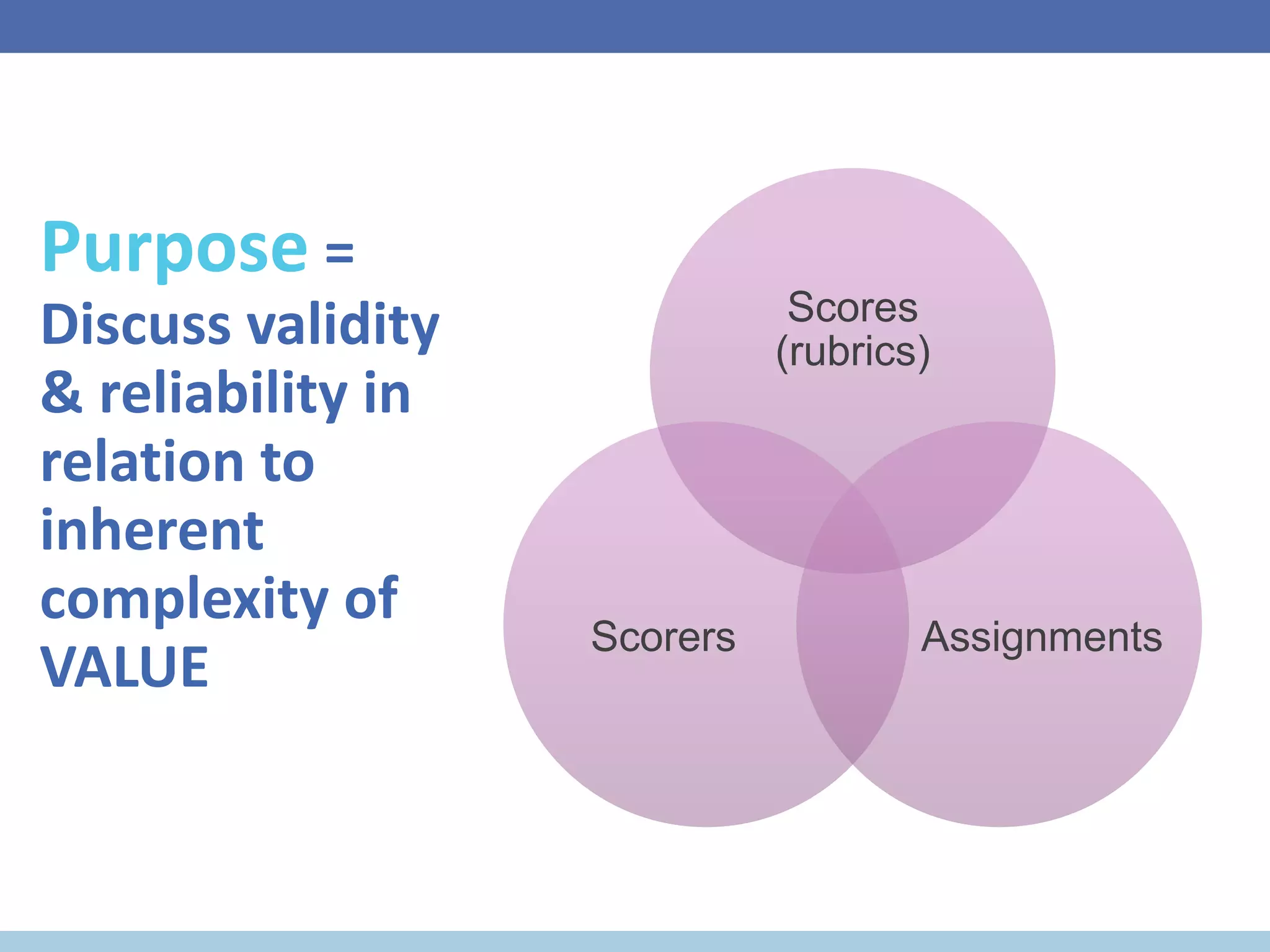

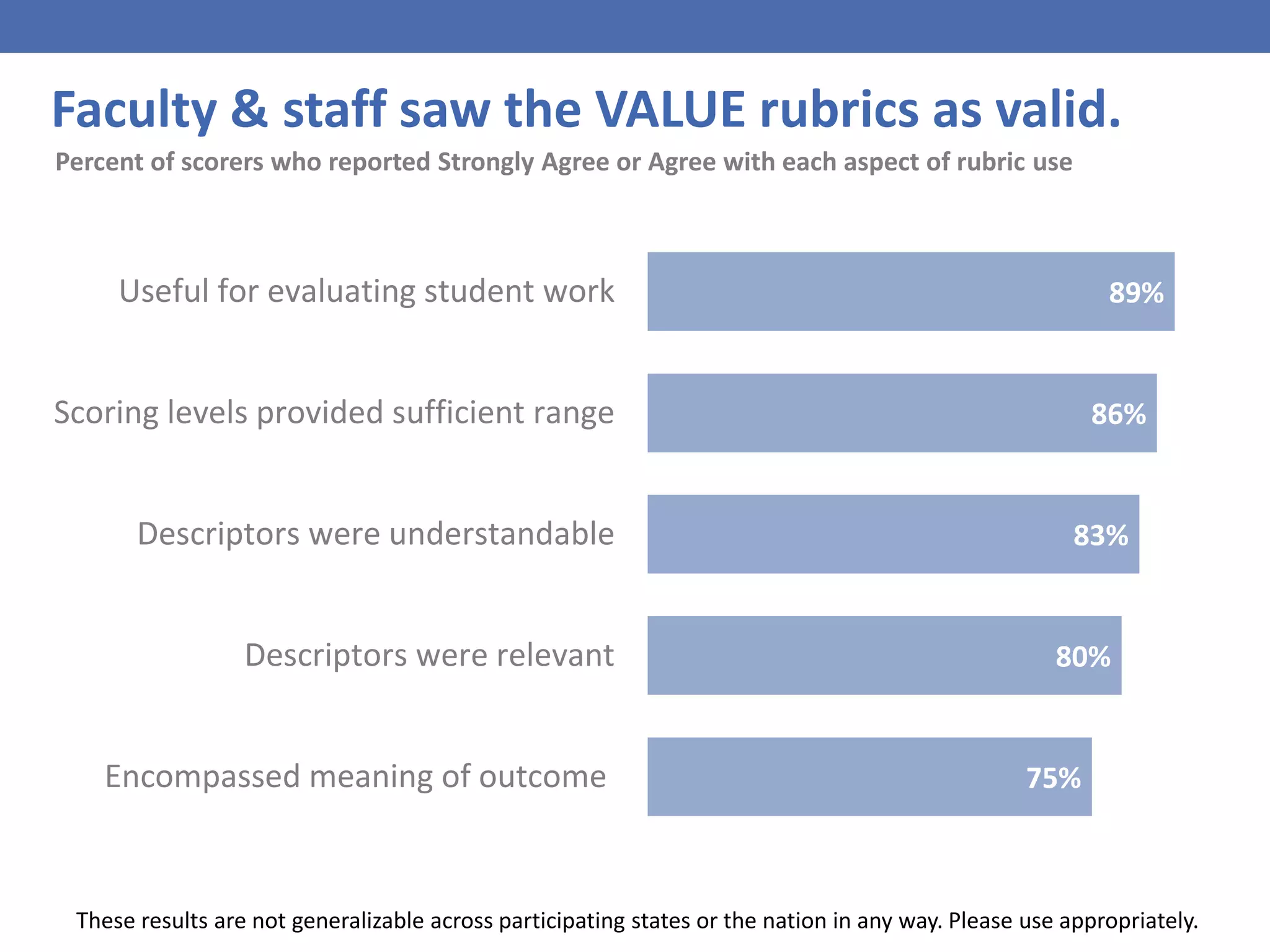

- Faculty generally found the VALUE rubrics to be valid assessments of student learning outcomes.

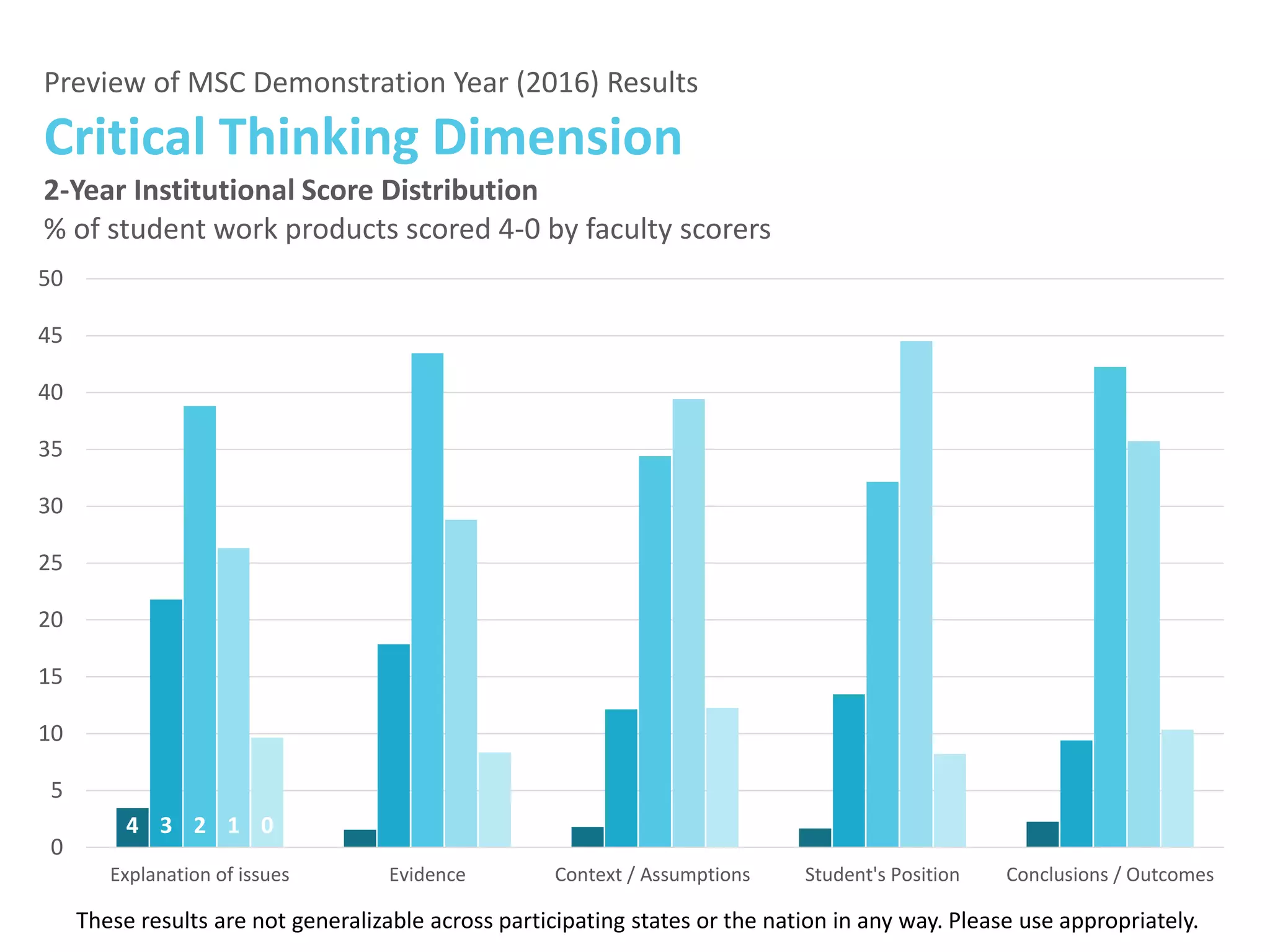

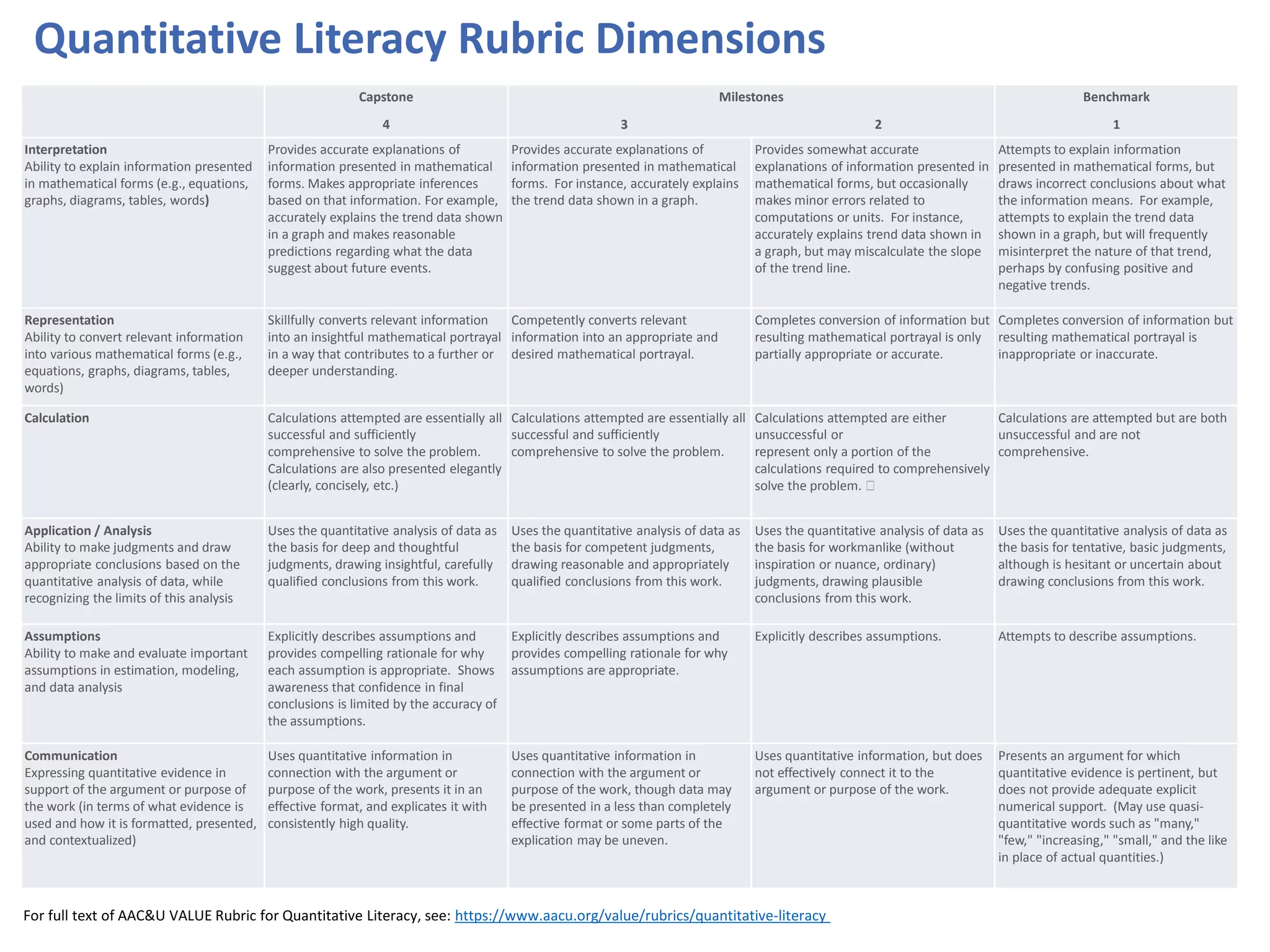

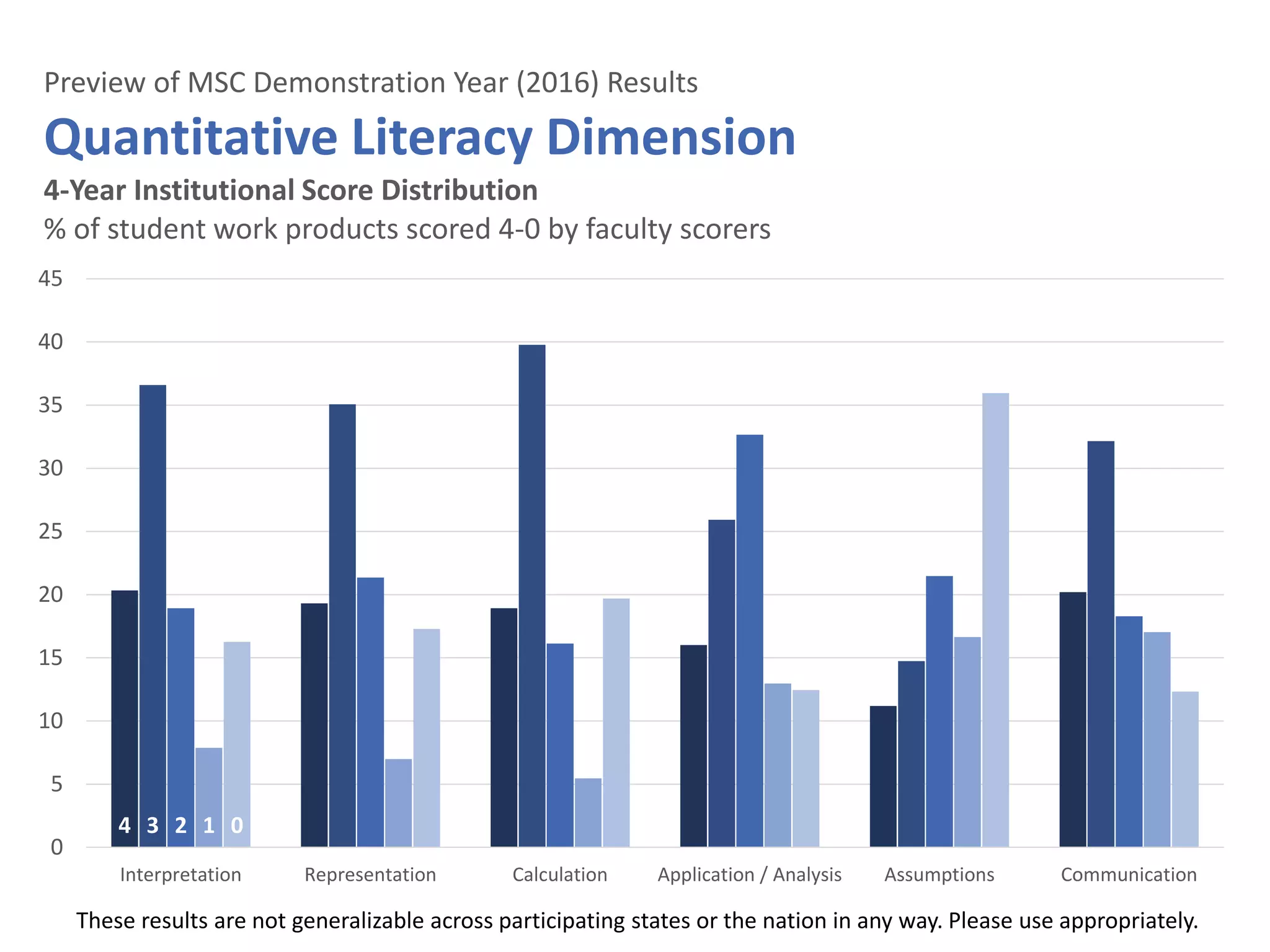

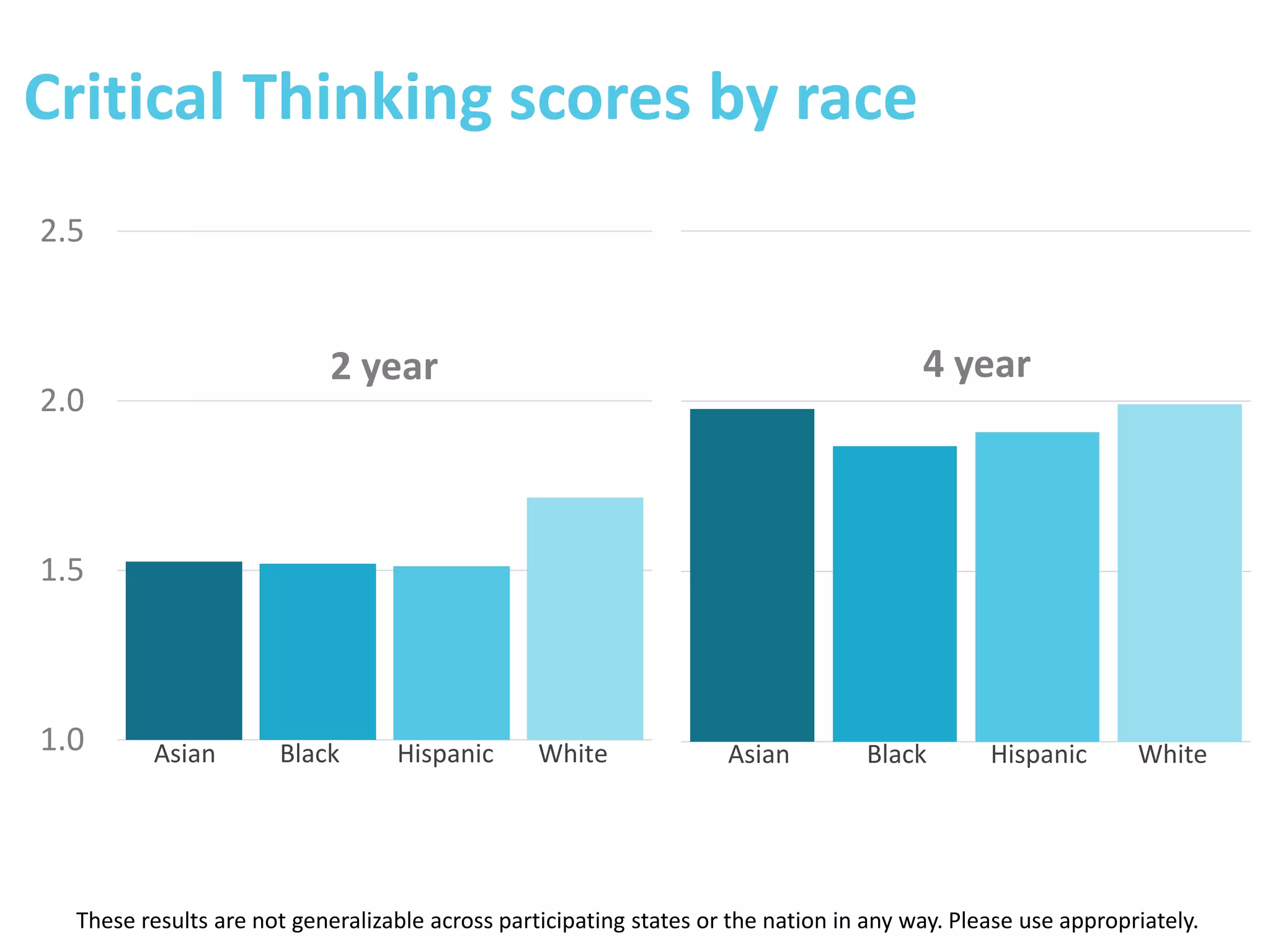

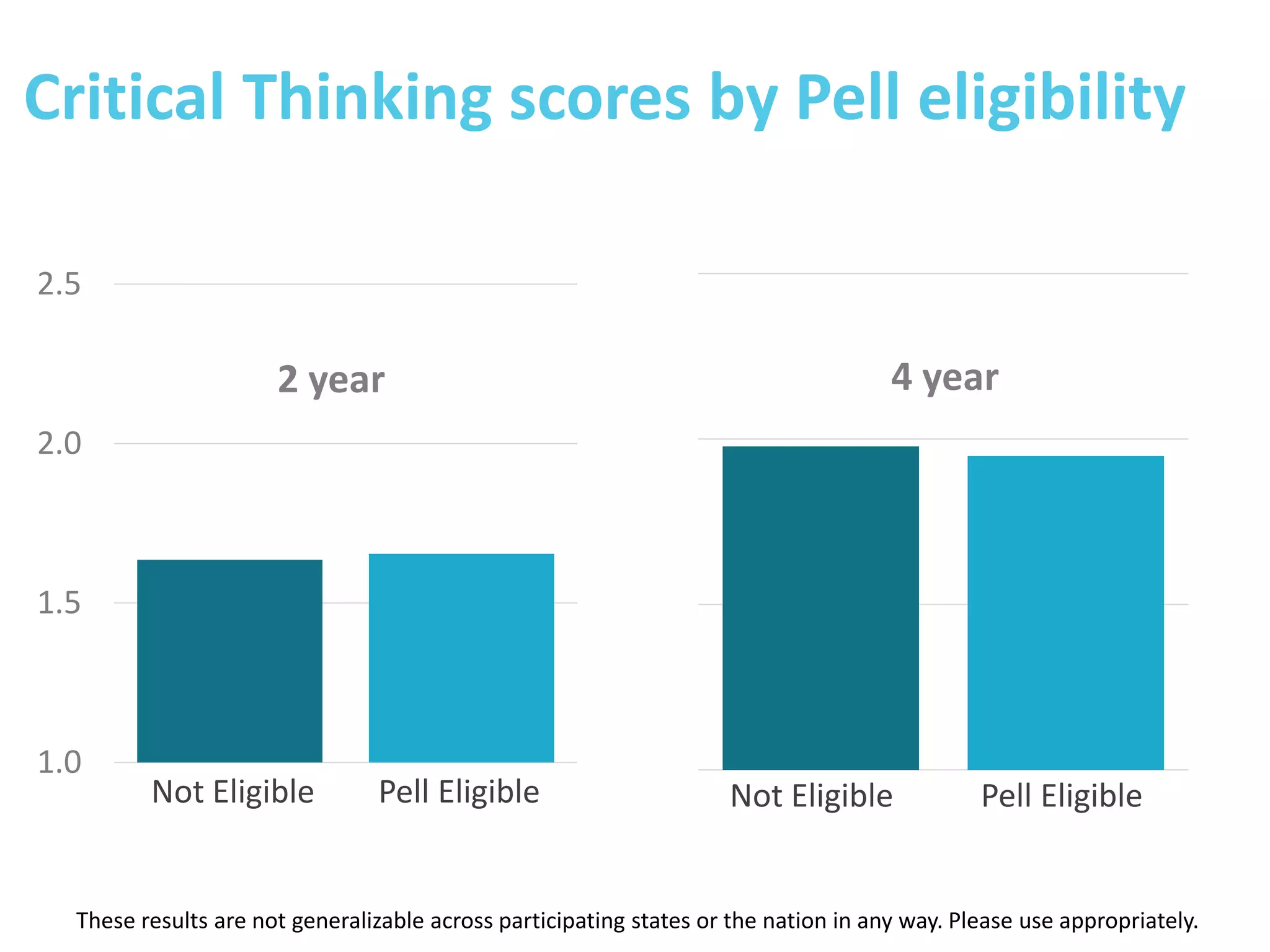

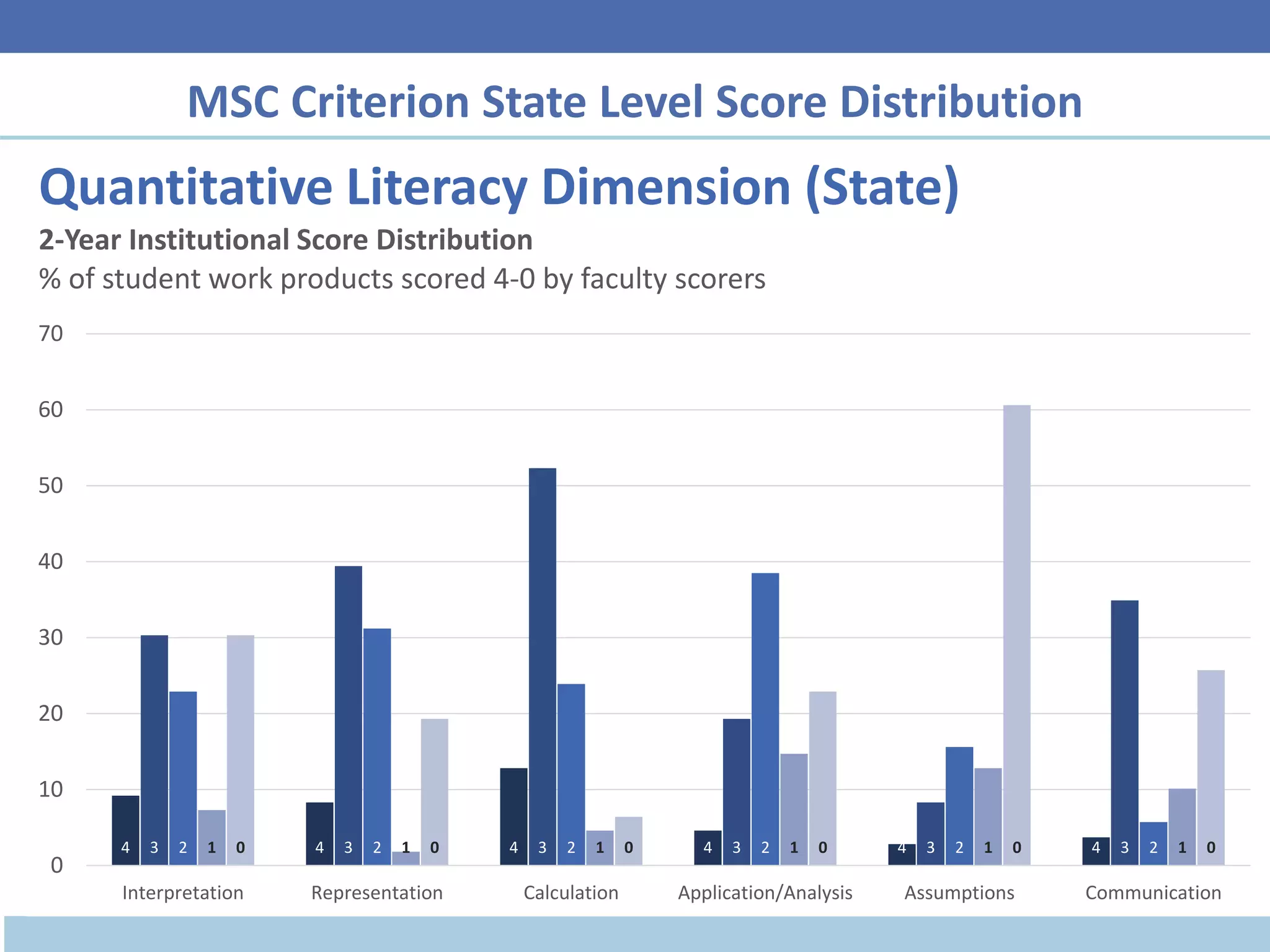

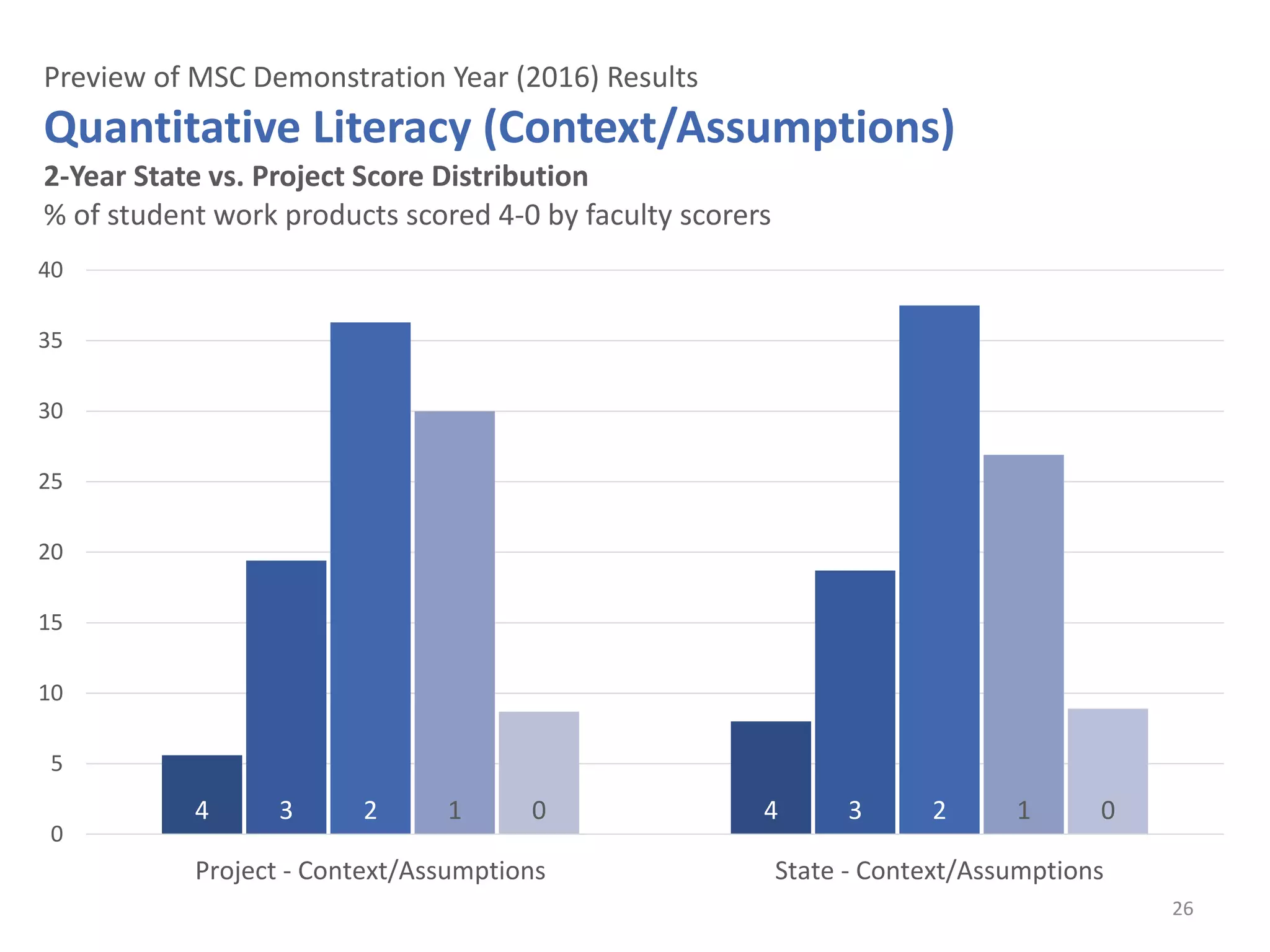

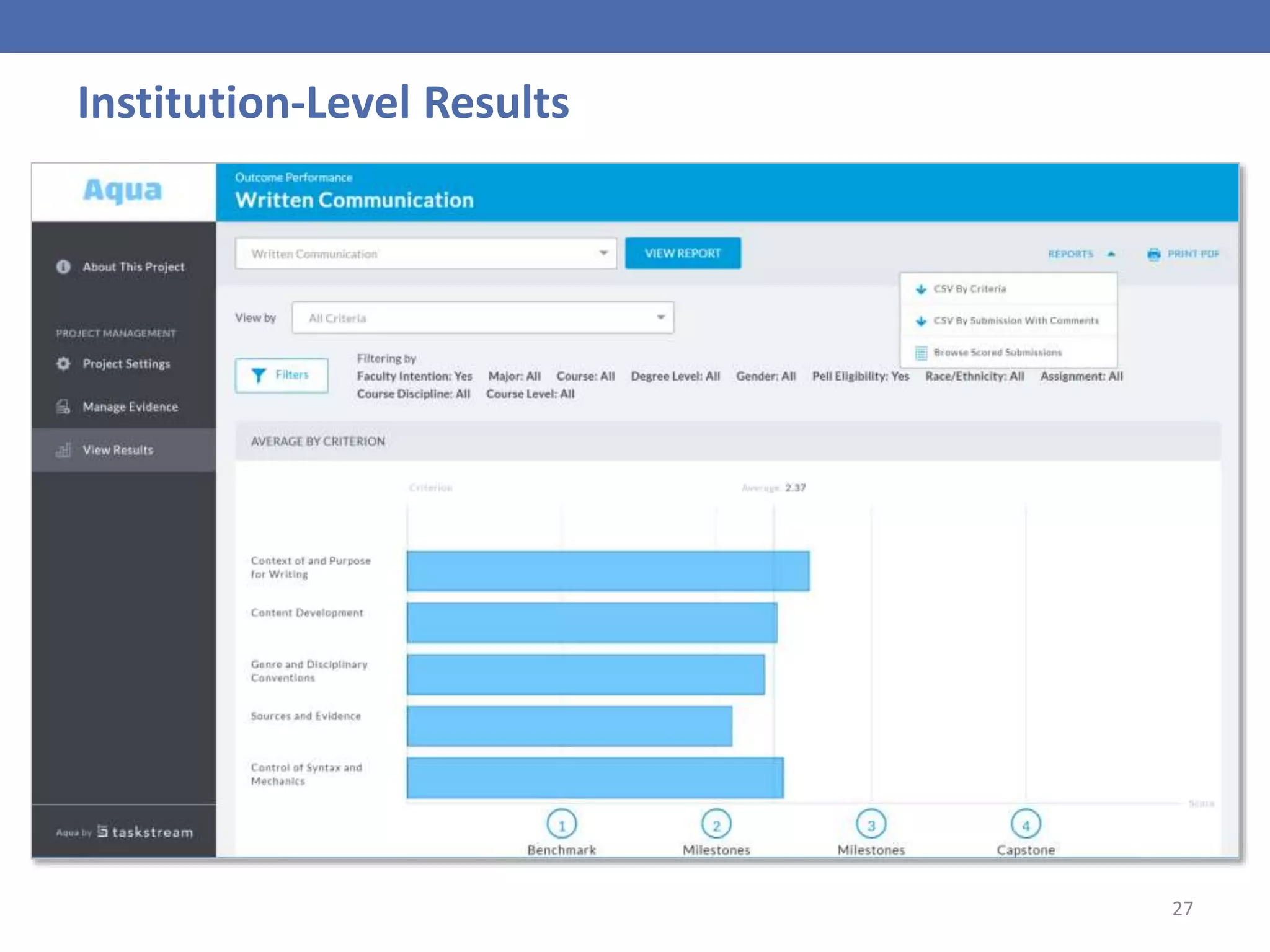

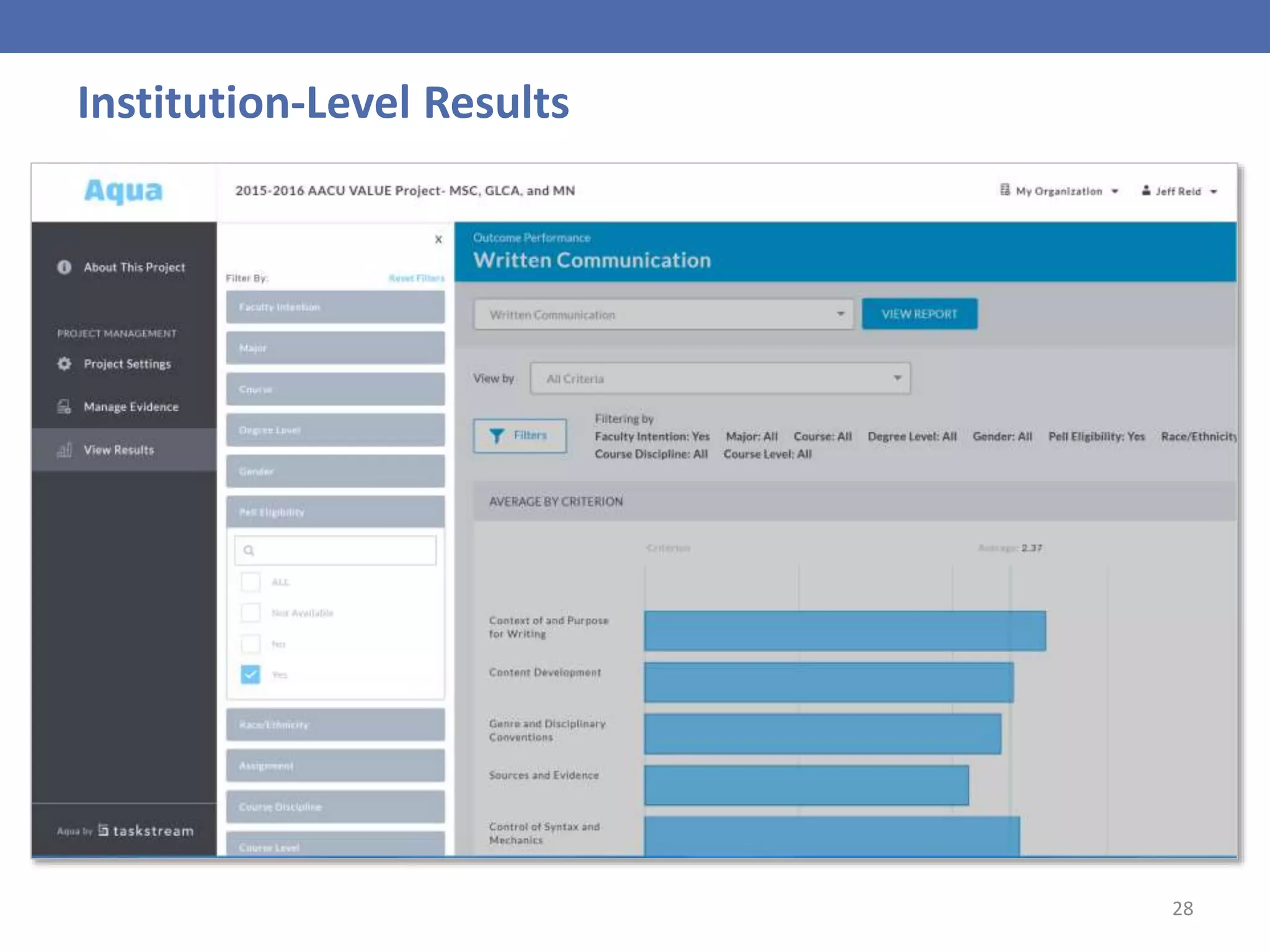

- Results provided actionable data on student achievement and areas for improvement in critical thinking, quantitative literacy, and other skills.

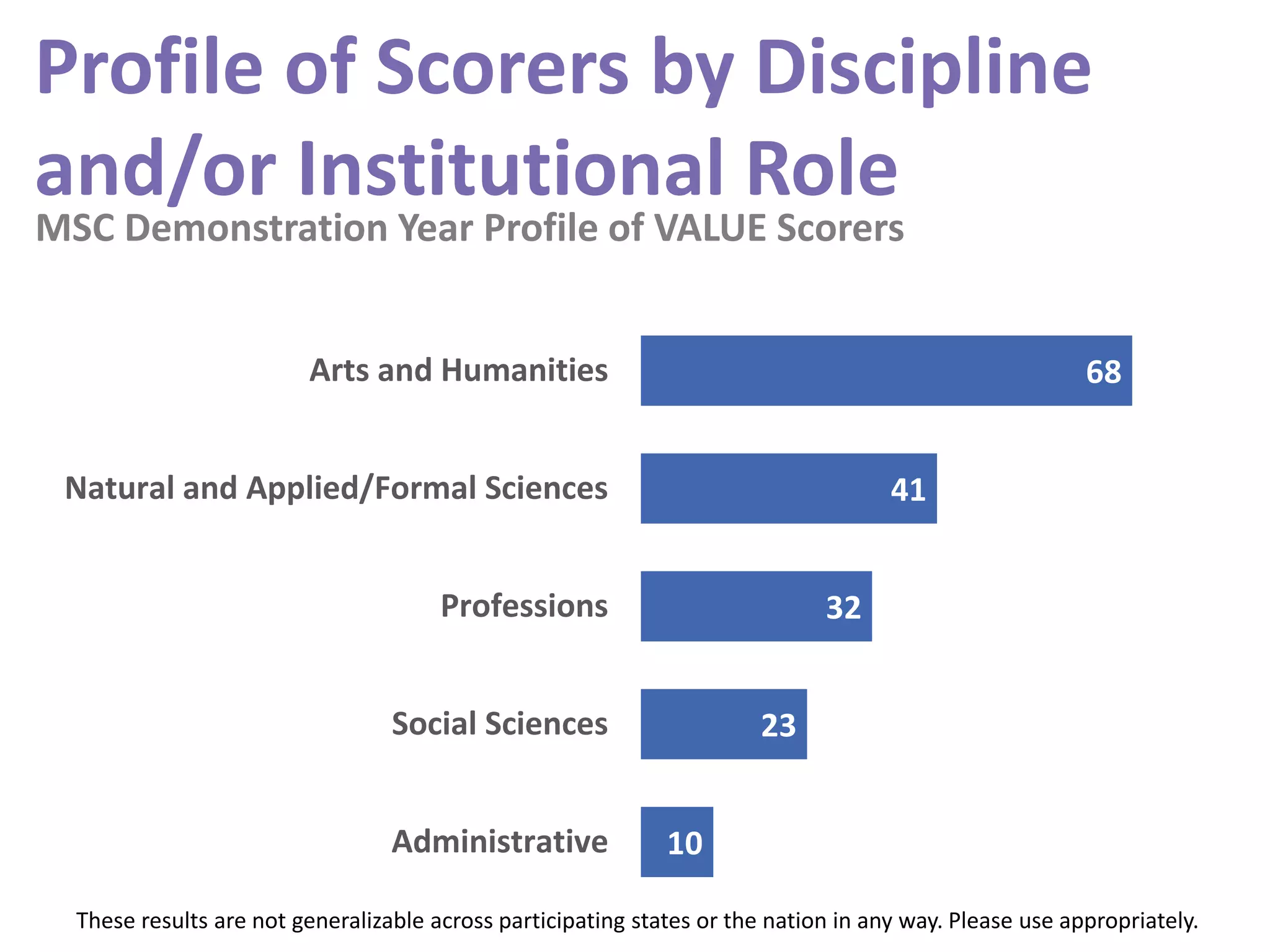

- After training, faculty reliably scored student work from other institutions and disciplines.

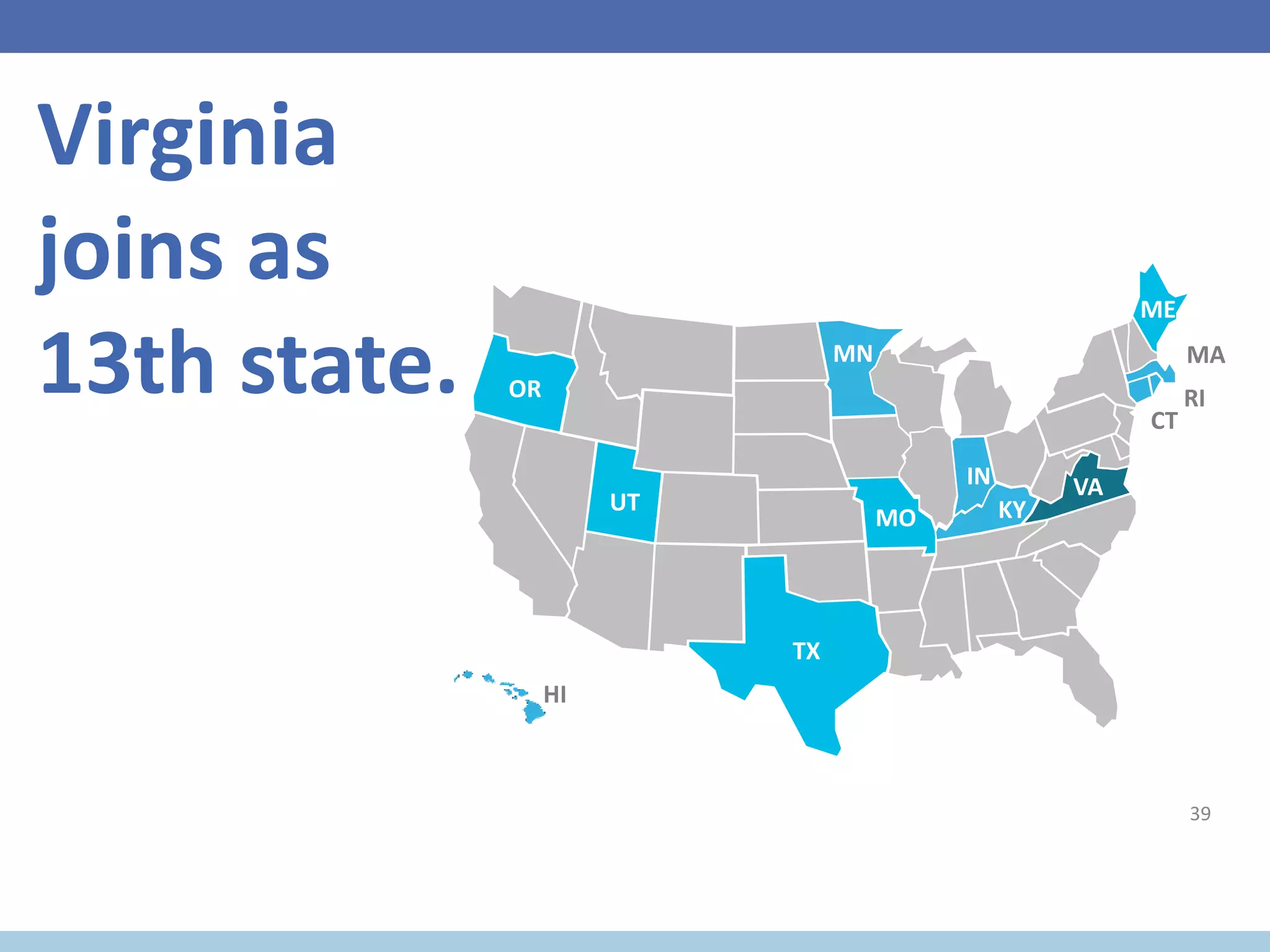

- Next steps include expanding the study to 13 states and 20,000 artifacts, both to evaluate the approach further and to focus on equity.