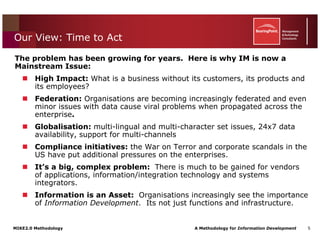

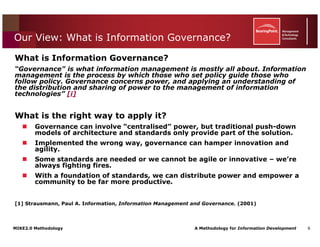

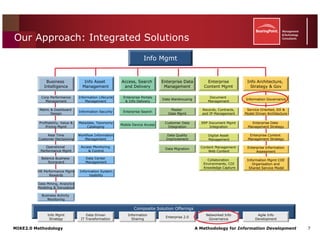

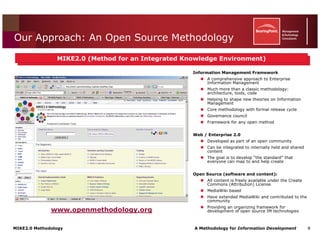

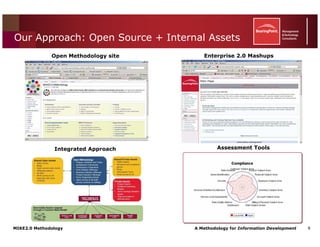

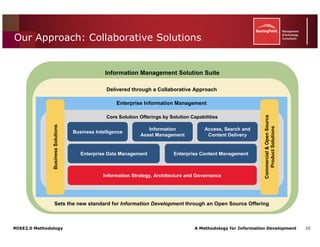

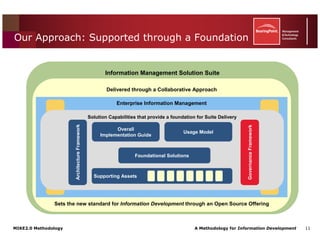

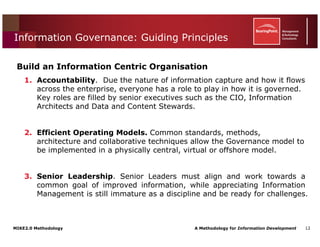

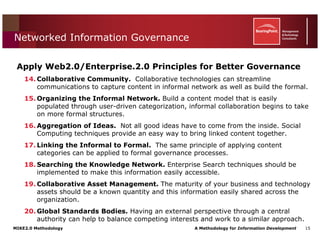

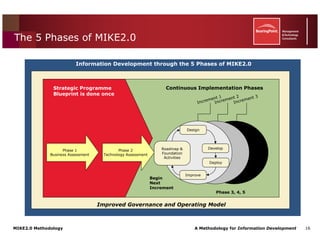

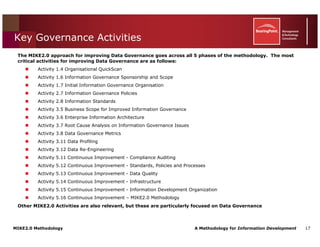

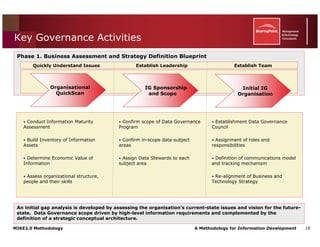

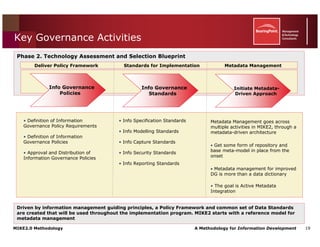

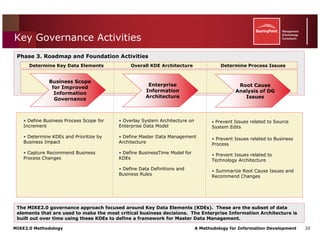

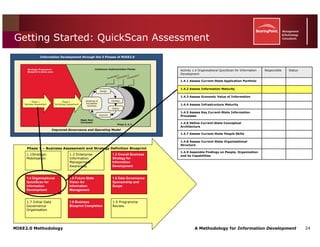

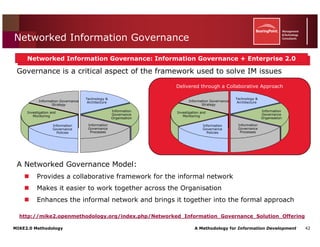

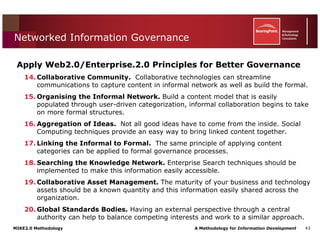

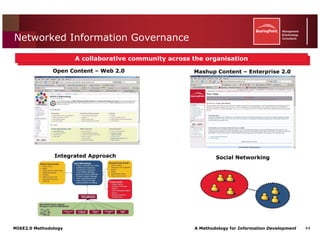

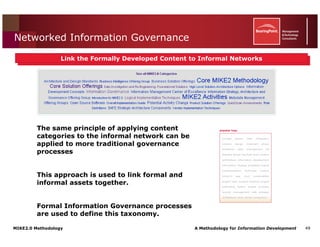

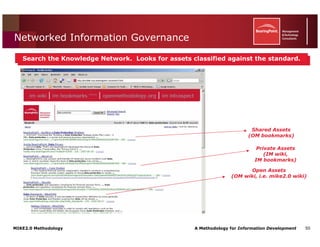

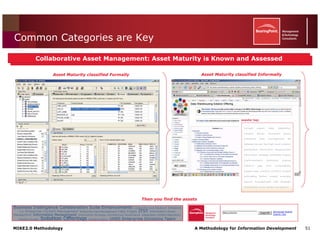

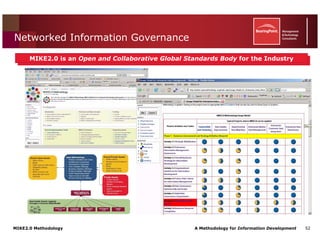

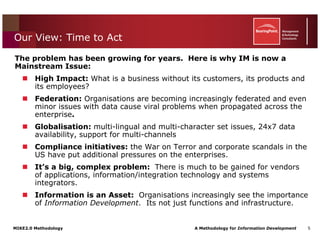

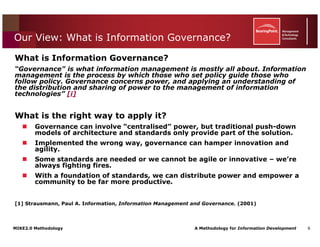

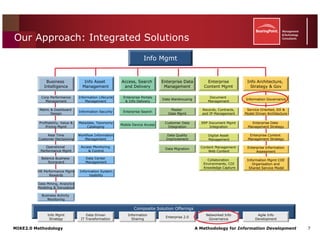

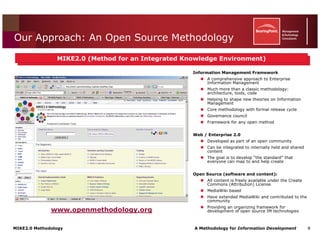

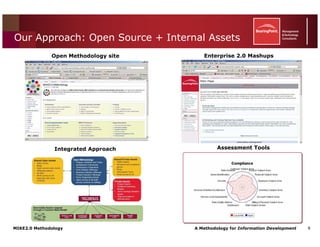

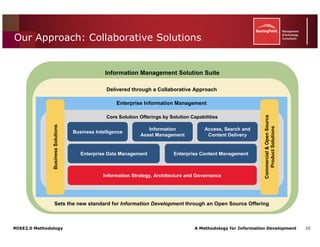

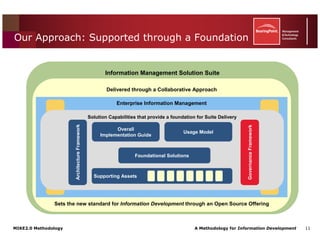

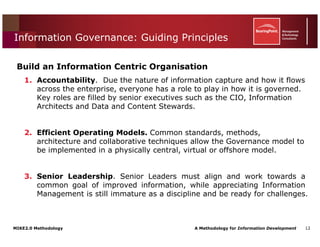

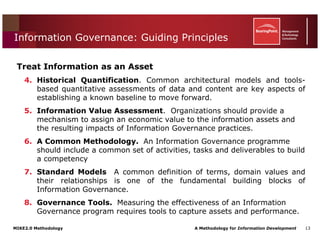

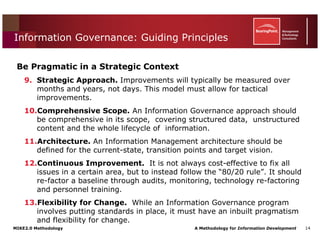

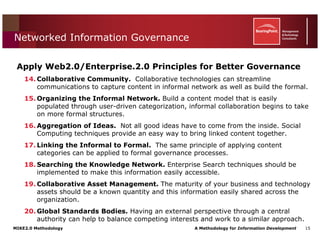

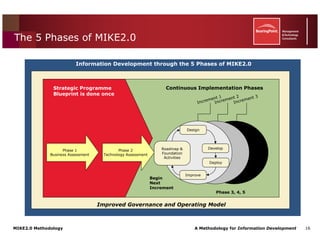

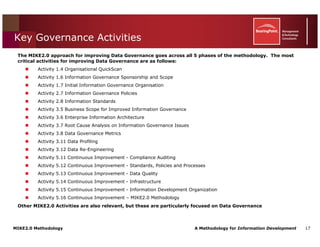

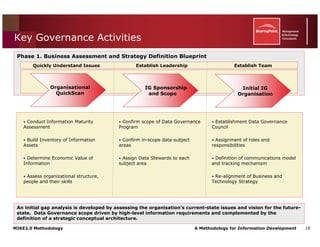

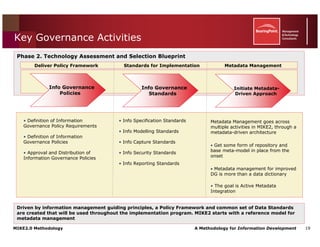

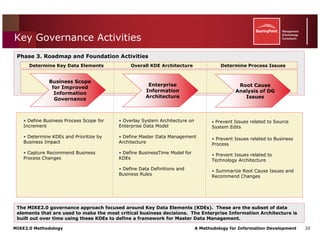

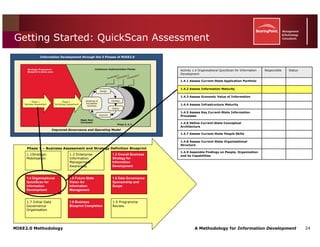

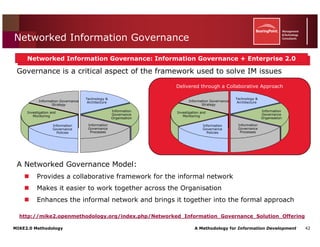

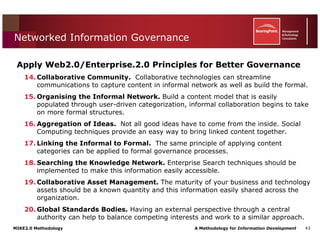

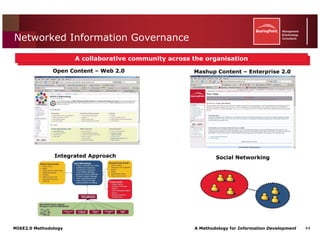

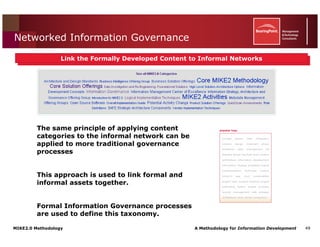

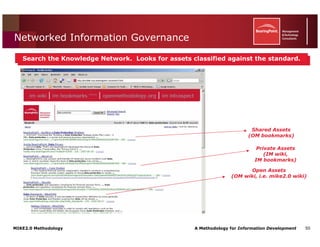

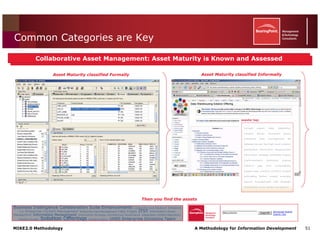

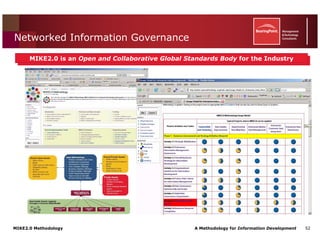

This document introduces MIKE2.0, an open-source methodology that provides a comprehensive framework for enterprise information management and information governance. It addresses the growing complexity of managing exponentially growing data across increasingly federated organizations. The methodology promotes standards and transparency to improve data quality and business insight while increasing efficiency.