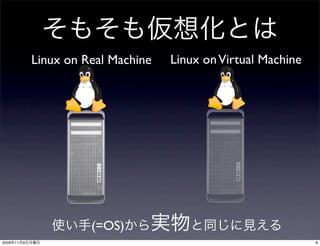

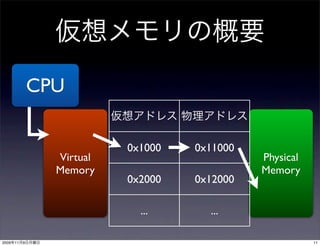

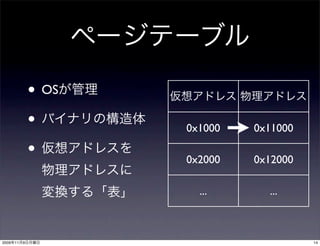

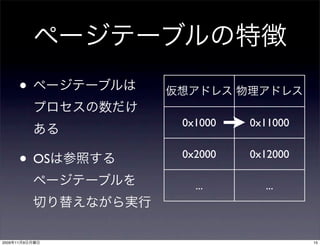

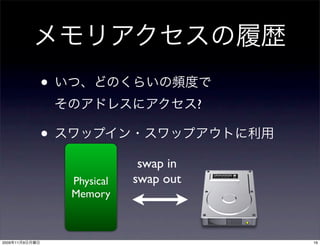

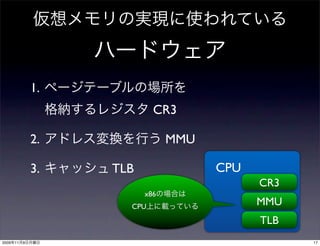

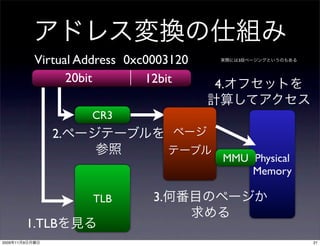

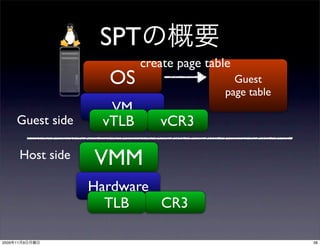

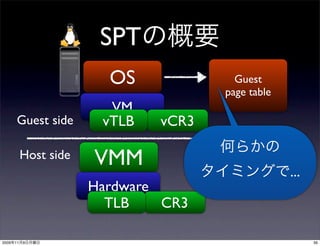

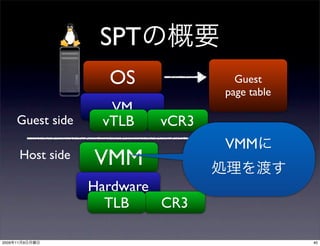

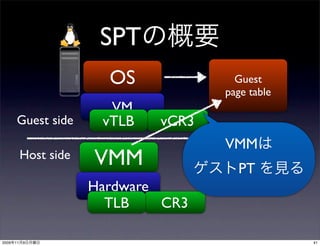

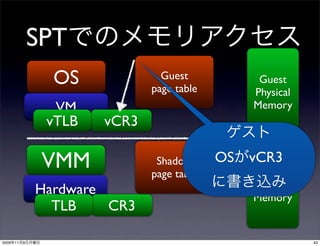

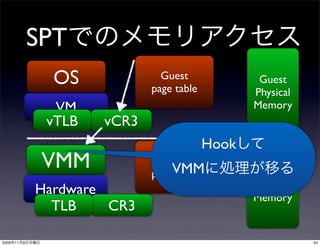

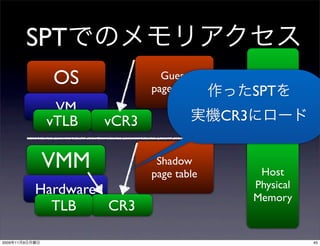

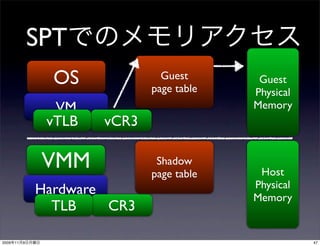

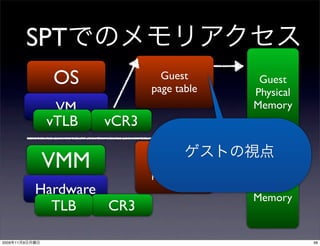

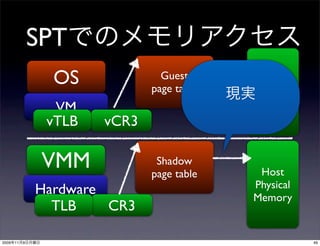

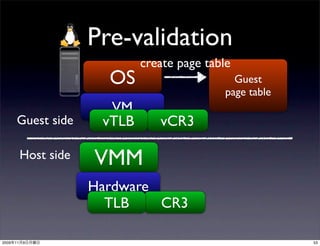

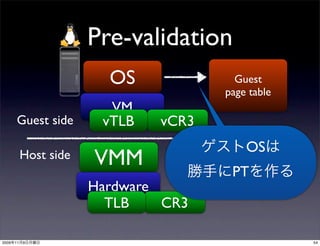

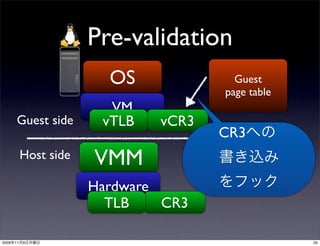

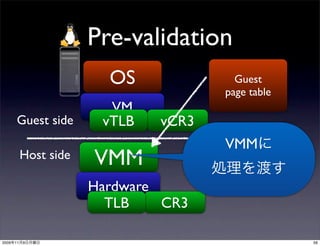

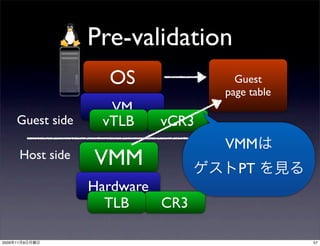

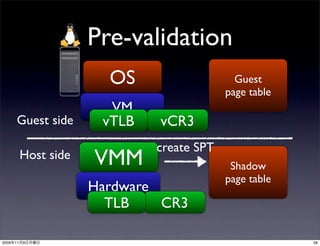

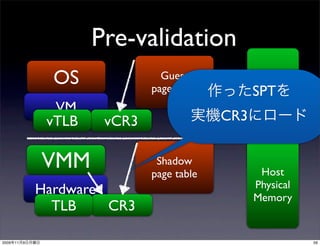

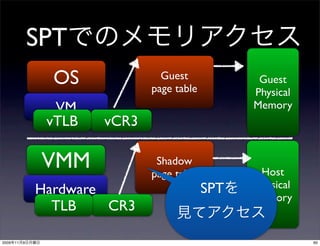

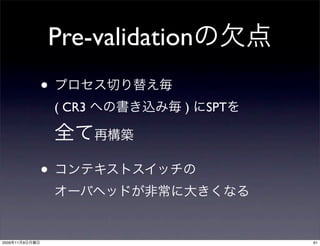

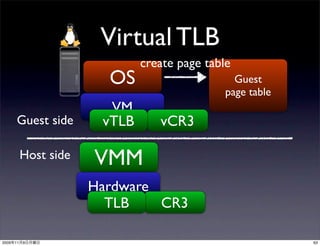

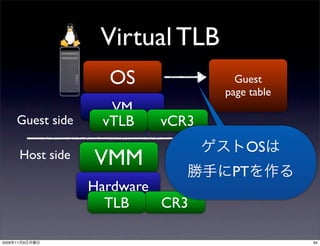

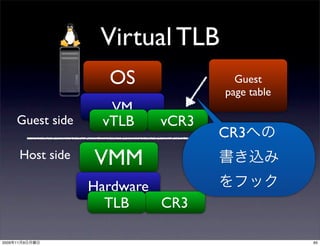

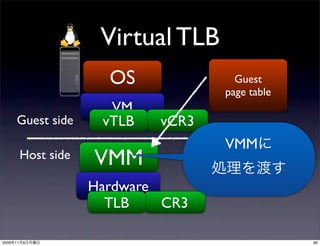

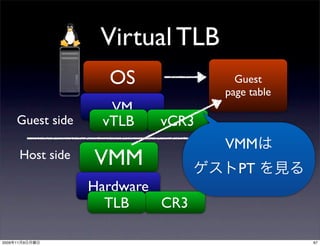

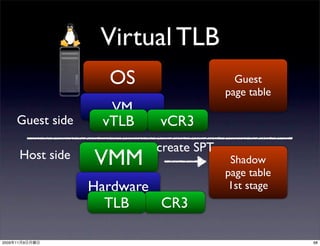

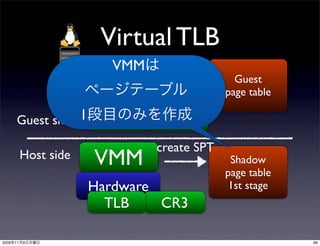

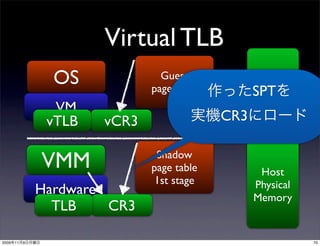

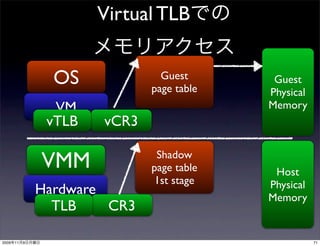

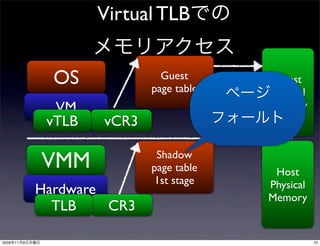

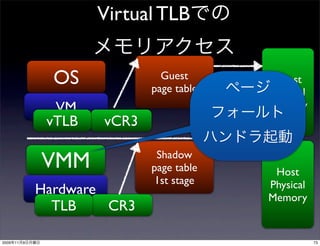

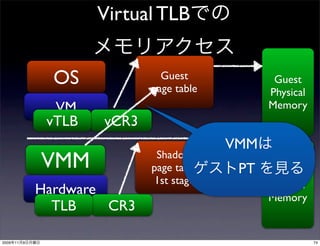

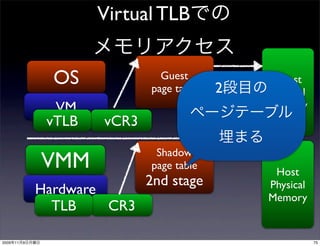

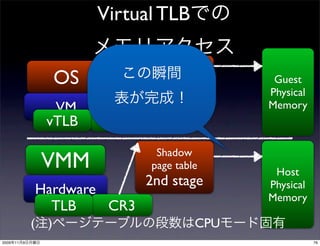

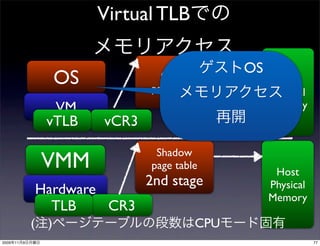

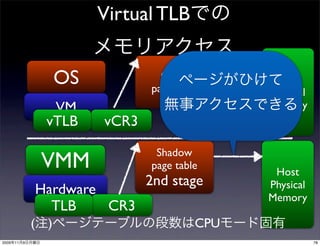

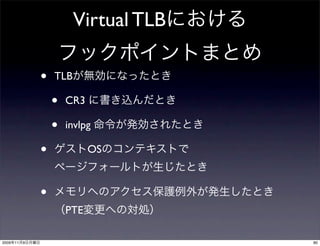

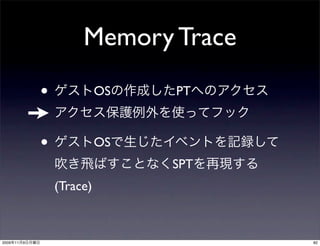

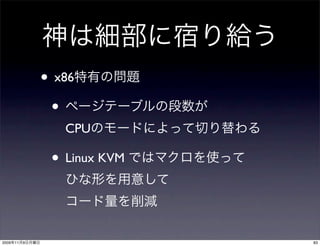

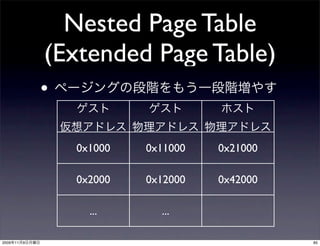

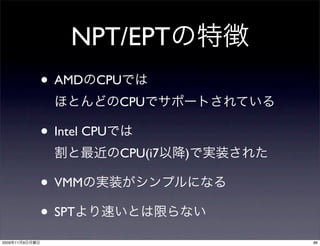

The document discusses virtualization techniques used in KVM. It describes how KVM uses shadow page tables to virtualize memory management: the shadow page tables translate the virtual addresses used by a guest OS into physical addresses on the host machine. It also describes different techniques for implementing shadow page tables, including pre-validation of guest page tables and a virtual translation lookaside buffer (TLB) that caches translations.
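
The translation path summarized above can be illustrated with a small toy model. This is only a sketch under stated assumptions, not KVM's actual code: the class `ShadowMMU`, its dictionary-based page tables, and the 4 KiB page size are all hypothetical choices made for illustration. It shows a guest virtual address being resolved to a host physical address in two steps (guest page table, then guest-to-host frame map), with the combined result cached in the way a virtual TLB would cache it.

```python
PAGE_SIZE = 4096  # assumed 4 KiB pages, for illustration only


class ShadowMMU:
    """Toy model of shadow paging with a virtual-TLB-style cache (hypothetical)."""

    def __init__(self, guest_page_table, guest_to_host):
        # guest virtual page number -> guest physical frame number
        self.guest_page_table = guest_page_table
        # guest physical frame number -> host physical frame number
        self.guest_to_host = guest_to_host
        # cached shadow entries: guest virtual page number -> host frame number
        self.shadow = {}
        self.misses = 0

    def translate(self, guest_virtual_addr):
        """Return the host physical address for a guest virtual address."""
        vpn, offset = divmod(guest_virtual_addr, PAGE_SIZE)
        host_frame = self.shadow.get(vpn)
        if host_frame is None:
            # Cache miss: walk the guest table, then map the guest frame to a host frame.
            self.misses += 1
            guest_frame = self.guest_page_table[vpn]  # KeyError models a guest page fault
            host_frame = self.guest_to_host[guest_frame]
            self.shadow[vpn] = host_frame
        return host_frame * PAGE_SIZE + offset

    def invalidate(self, vpn):
        """Drop a cached entry, e.g. after the guest changes its own mapping."""
        self.shadow.pop(vpn, None)
```

For example, with `ShadowMMU({0: 5, 1: 7}, {5: 100, 7: 42})`, translating `0x1010` twice walks both tables only once; the second lookup is served from the cached shadow entry. If the guest later remaps page 1, the cached entry has to be dropped with `invalidate(1)` before the new mapping takes effect, which is the consistency problem that any shadow page table scheme has to handle.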