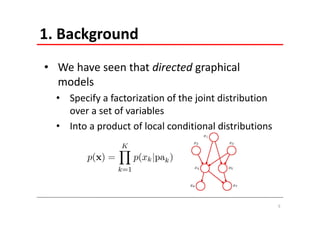

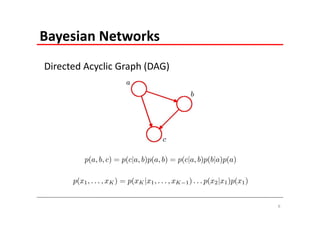

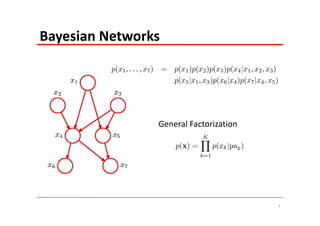

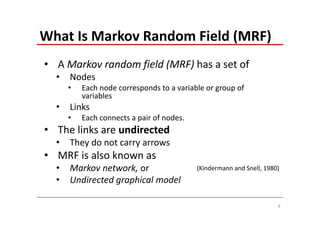

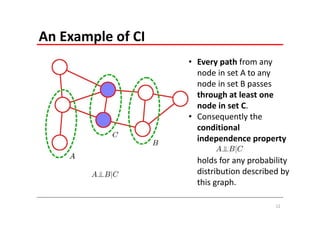

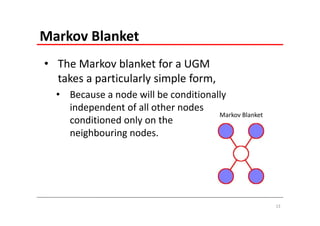

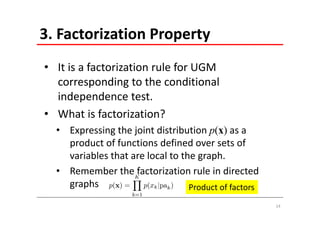

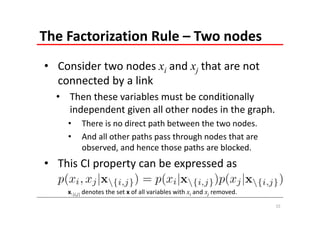

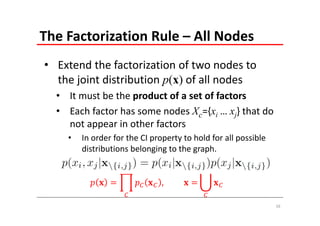

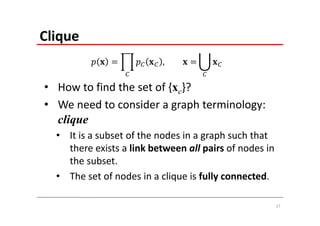

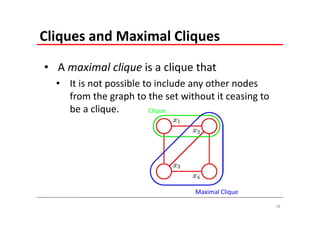

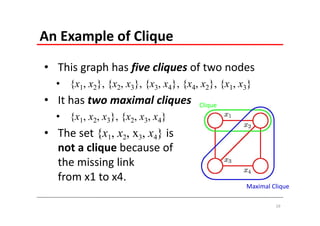

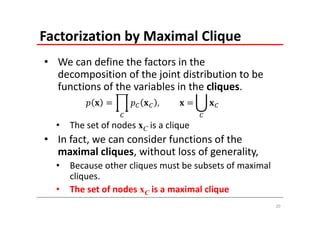

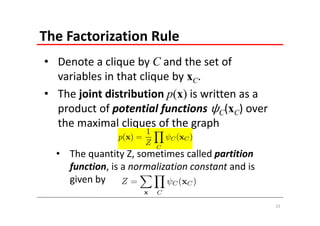

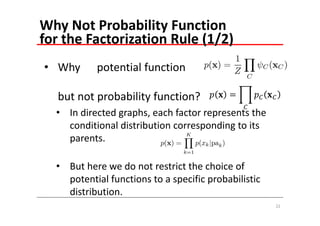

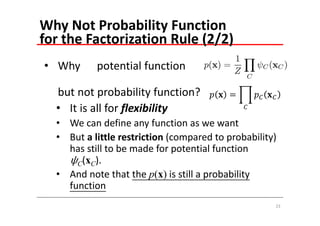

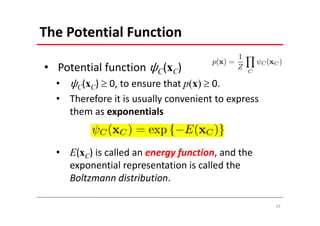

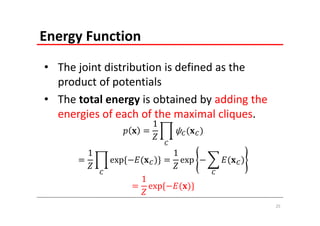

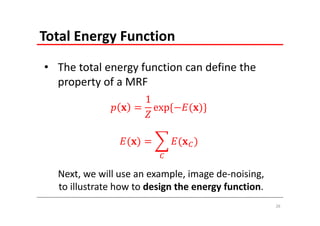

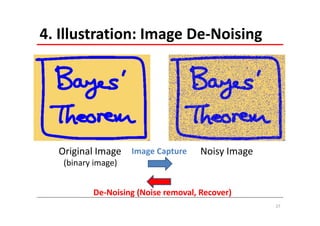

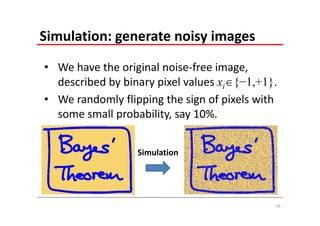

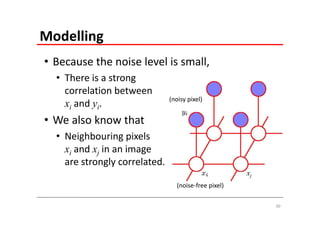

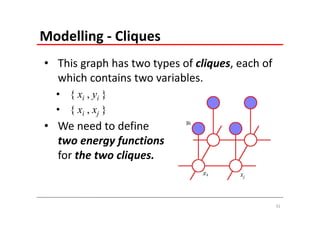

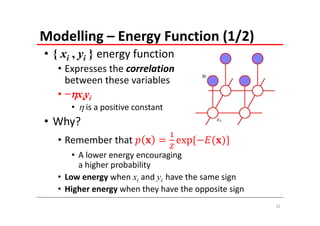

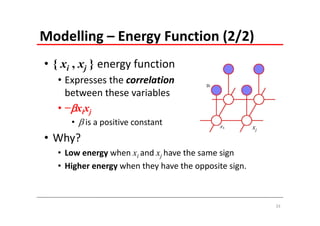

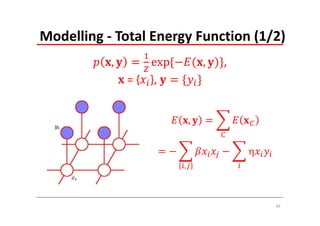

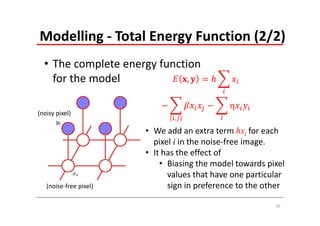

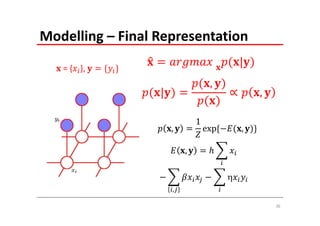

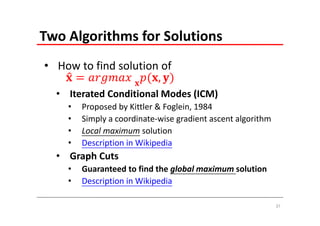

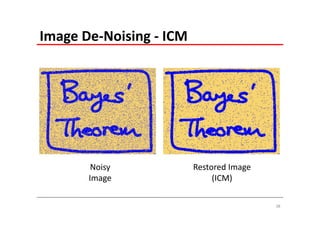

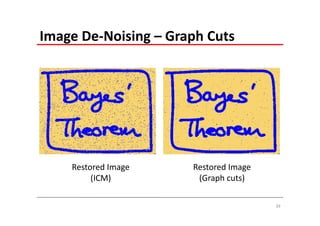

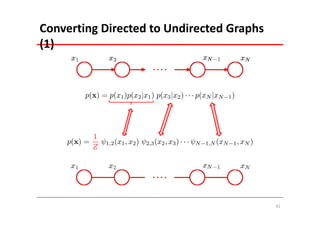

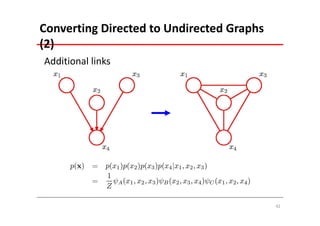

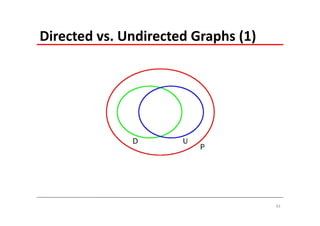

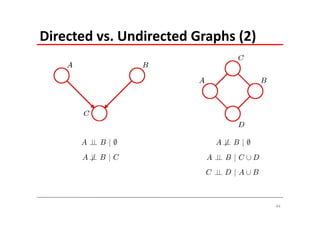

The document presents Markov random fields (MRFs), a class of undirected graphical models that represent a joint distribution together with its conditional independence properties, and contrasts them with directed graphical models such as Bayesian networks. It covers how the joint distribution factorizes over the cliques of the graph into potential functions, the role of energy functions in applications such as image de-noising, and the relationship between directed and undirected graphs. Solution algorithms are also discussed.
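
To make the link between energy functions and image de-noising concrete, here is a minimal sketch under stated assumptions, not a reproduction of the slides' own formulation. It assumes binary pixels in {-1, +1}, a 4-neighbour grid, and a pairwise energy of the form E(x, y) = h Σ x_i − β Σ x_i x_j − η Σ x_i y_i, where y is the observed noisy image and x the restored one. The minimizer used is iterated conditional modes (ICM), chosen here only because it is simple. The function names and the parameter values `h`, `beta`, `eta` are illustrative.

```python
def local_energy(x, y, i, j, value, h=0.0, beta=1.0, eta=2.1):
    """Sum of the energy terms that involve pixel (i, j) when it takes `value`."""
    rows, cols = len(x), len(x[0])
    neighbour_sum = 0
    for di, dj in ((-1, 0), (1, 0), (0, -1), (0, 1)):
        ni, nj = i + di, j + dj
        if 0 <= ni < rows and 0 <= nj < cols:
            neighbour_sum += x[ni][nj]
    # bias term, smoothness term (pairwise cliques), data term (x_i, y_i cliques)
    return h * value - beta * value * neighbour_sum - eta * value * y[i][j]


def total_energy(x, y, h=0.0, beta=1.0, eta=2.1):
    """Energy of the whole configuration; each neighbouring pair is counted once."""
    rows, cols = len(x), len(x[0])
    energy = 0.0
    for i in range(rows):
        for j in range(cols):
            energy += h * x[i][j] - eta * x[i][j] * y[i][j]
            if i + 1 < rows:
                energy -= beta * x[i][j] * x[i + 1][j]
            if j + 1 < cols:
                energy -= beta * x[i][j] * x[i][j + 1]
    return energy


def icm_denoise(y, max_sweeps=10, **params):
    """Iterated conditional modes: flip a pixel only if that strictly lowers the energy."""
    x = [row[:] for row in y]  # start from the noisy observation
    rows, cols = len(x), len(x[0])
    for _ in range(max_sweeps):
        changed = False
        for i in range(rows):
            for j in range(cols):
                current = x[i][j]
                flipped = -current
                if (local_energy(x, y, i, j, flipped, **params)
                        < local_energy(x, y, i, j, current, **params)):
                    x[i][j] = flipped
                    changed = True
        if not changed:  # local minimum of the energy reached
            break
    return x


# Usage: a uniform 5x5 image with a single corrupted pixel in the centre.
clean = [[1] * 5 for _ in range(5)]
noisy = [row[:] for row in clean]
noisy[2][2] = -1
denoised = icm_denoise(noisy)
```

Because each accepted flip strictly lowers the energy, ICM always terminates, but only at a local minimum. With this energy, lower energy corresponds to higher probability under the assumed model, since the joint distribution is proportional to exp(−E(x, y)).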