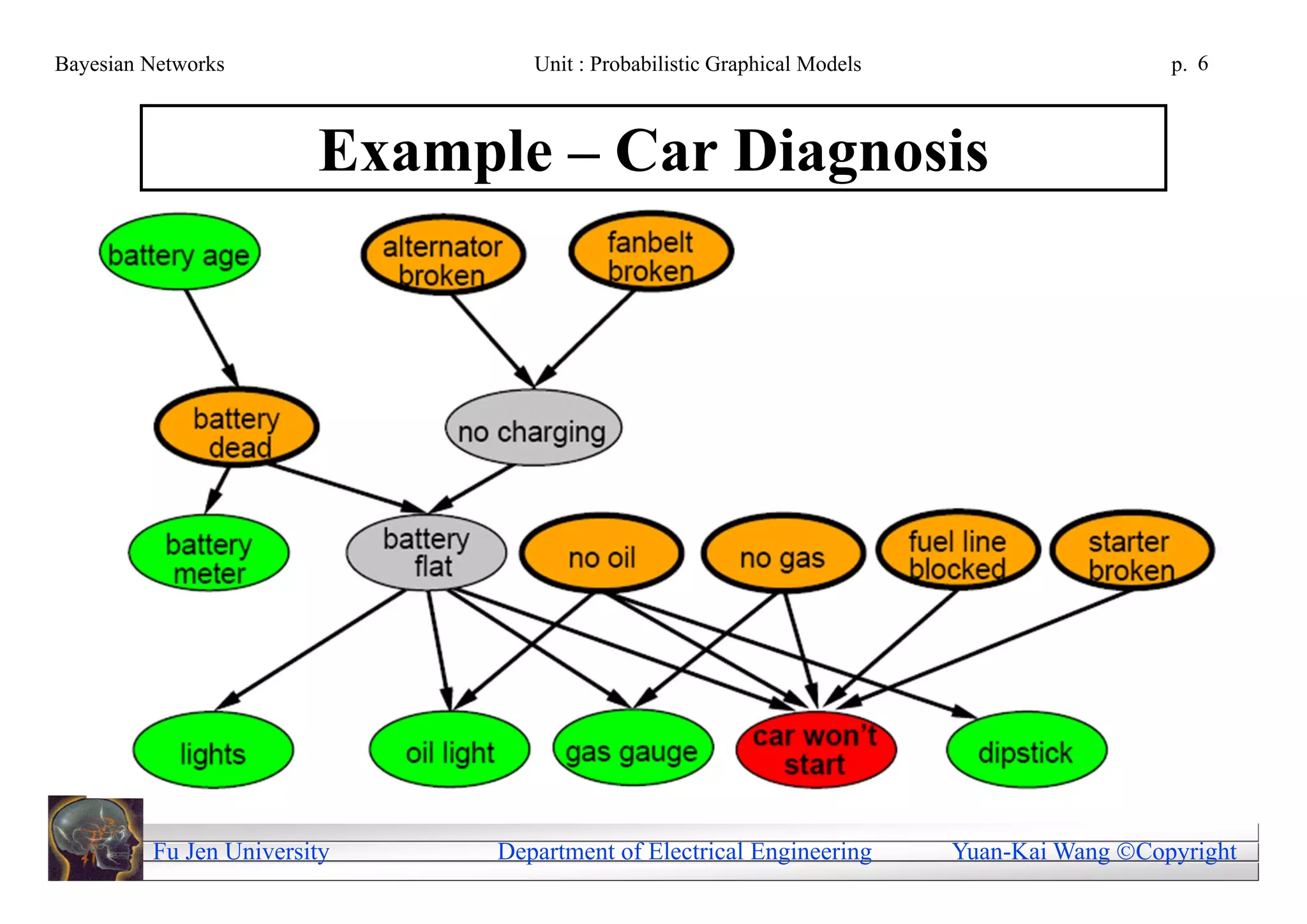

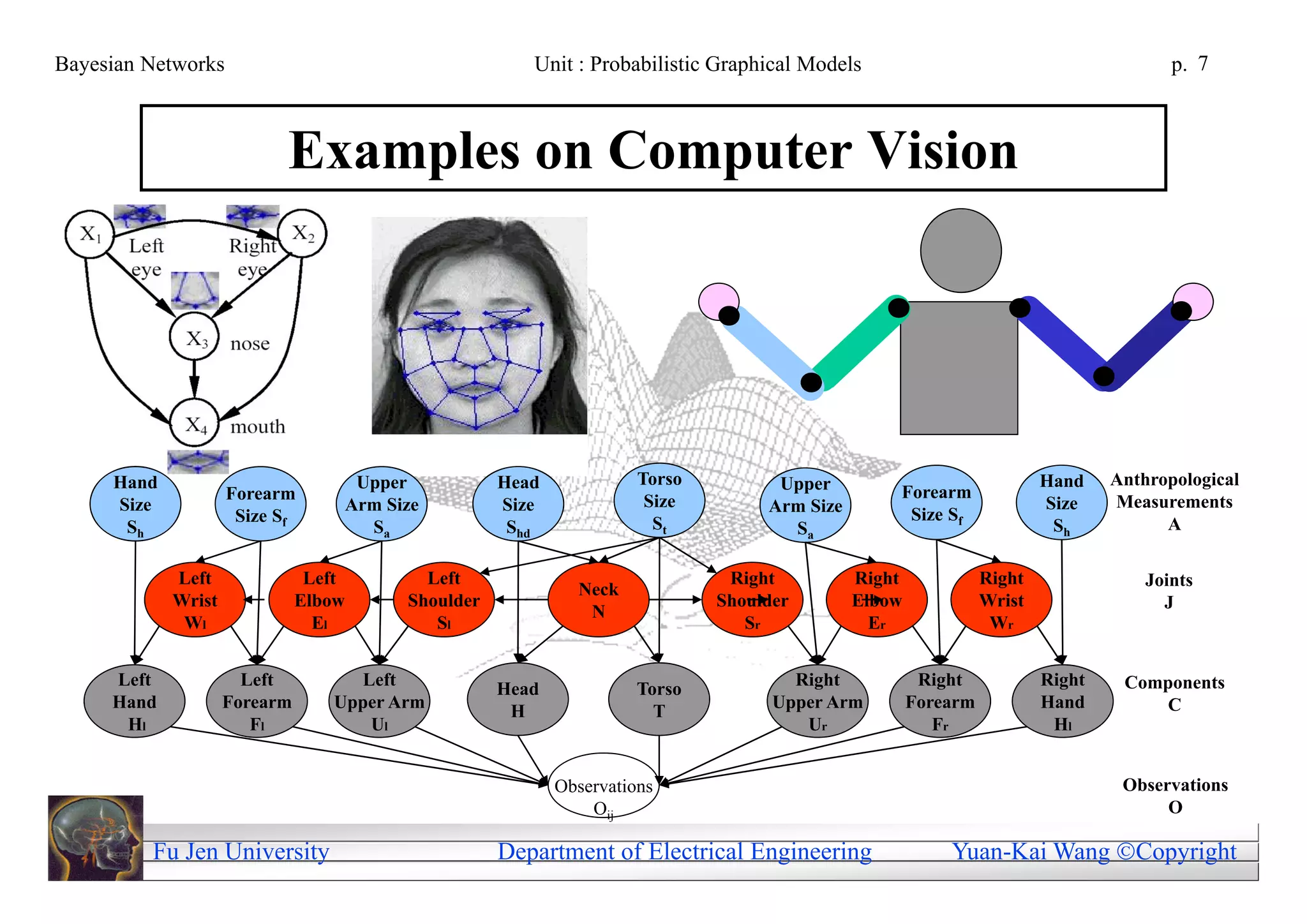

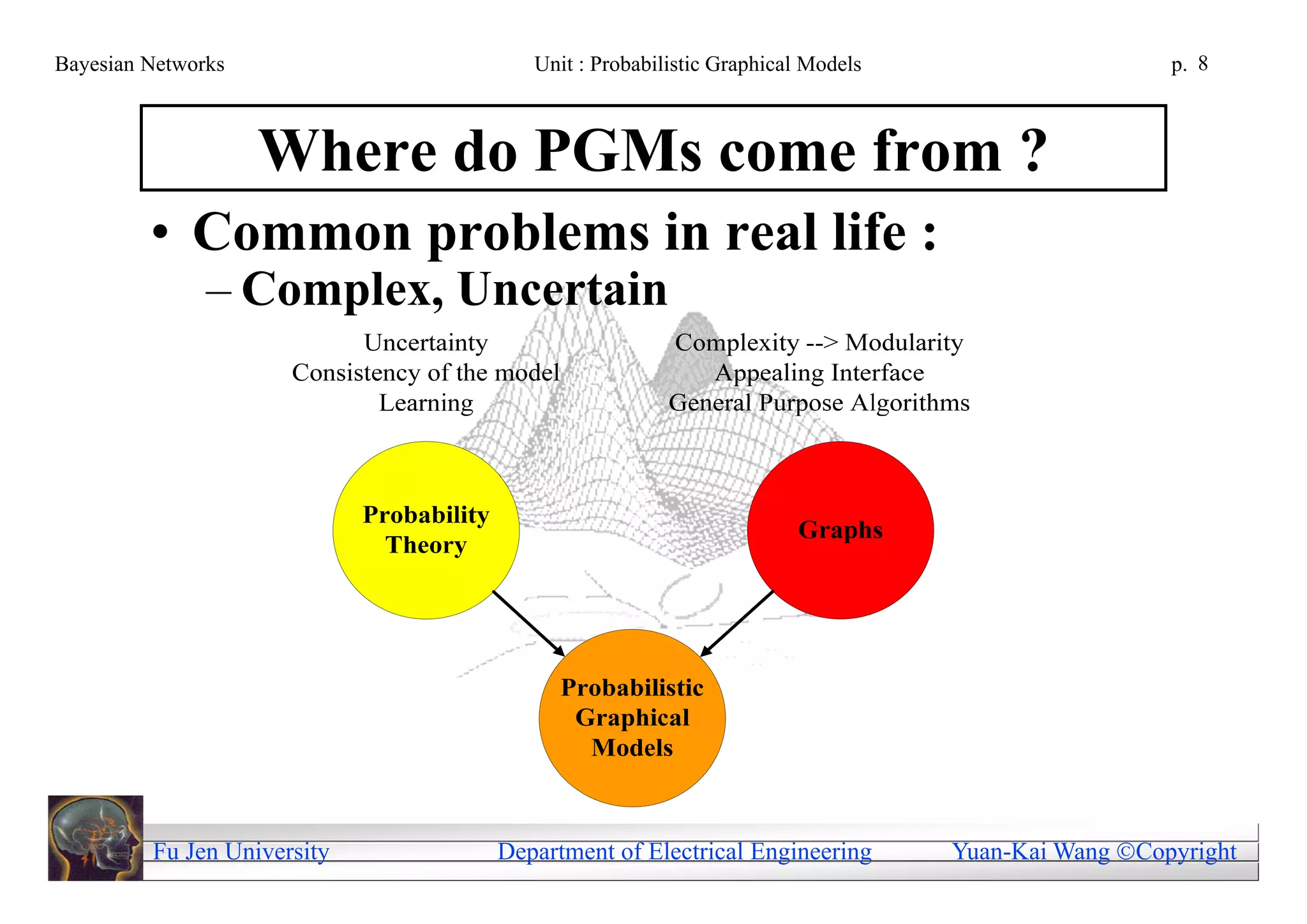

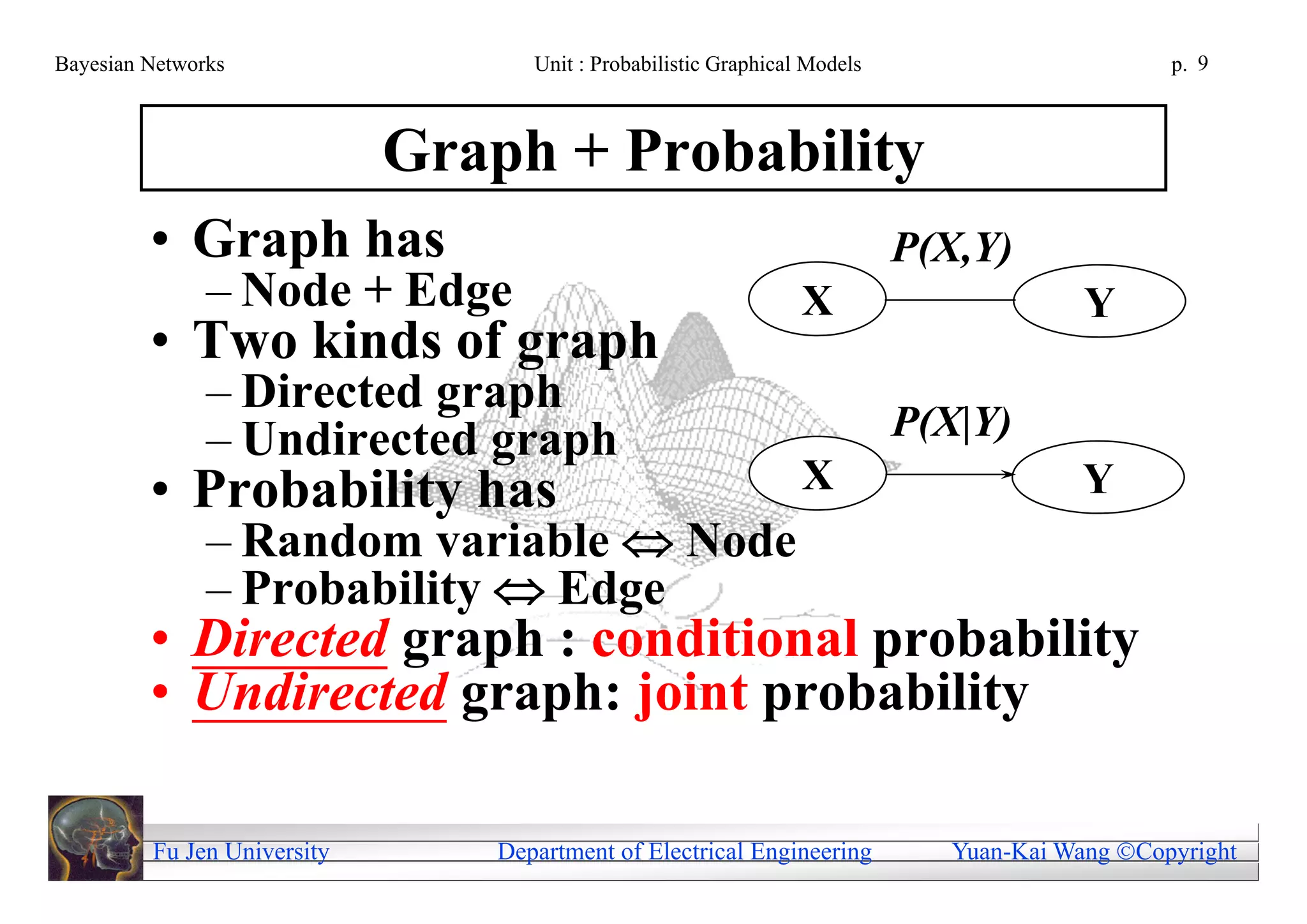

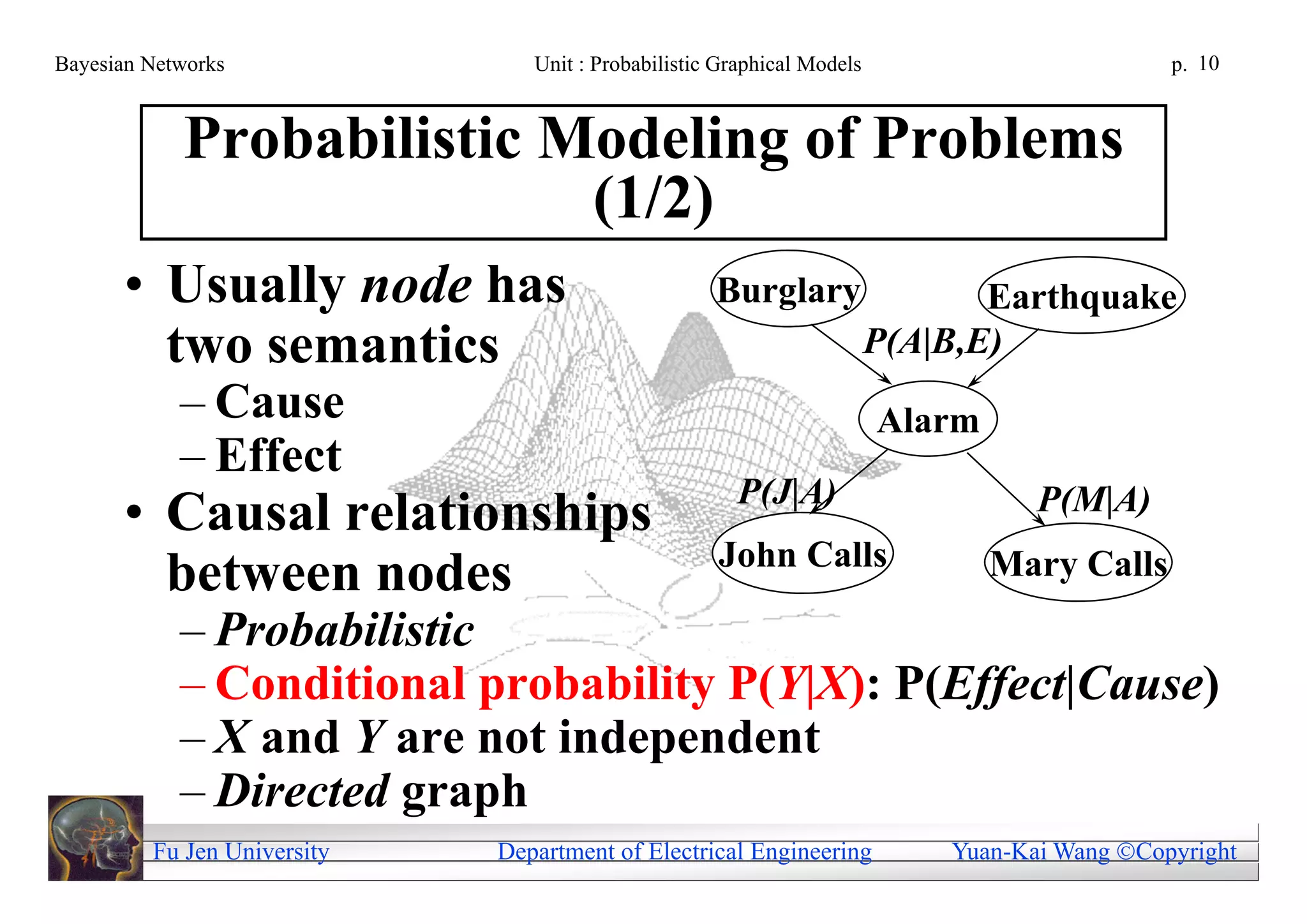

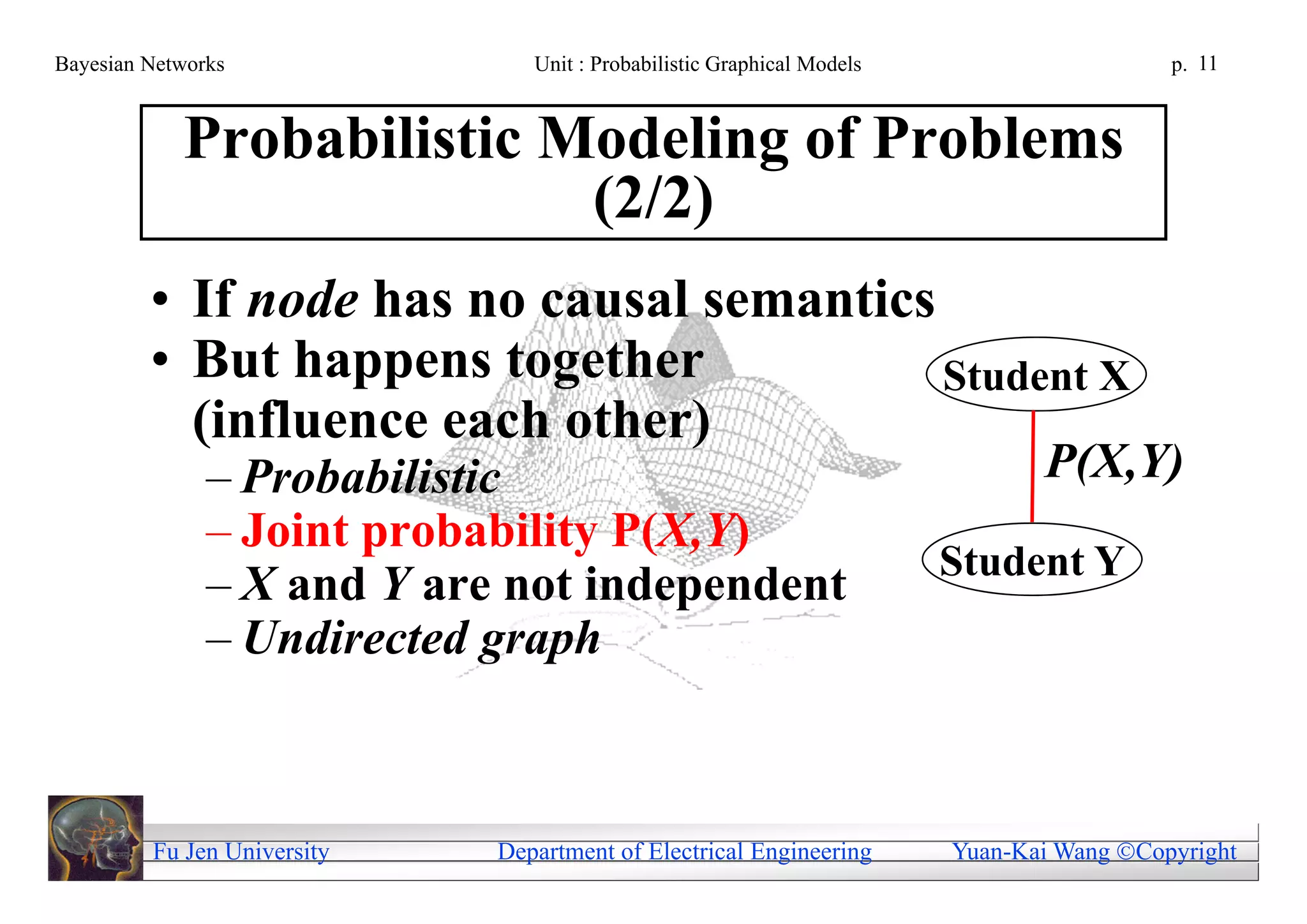

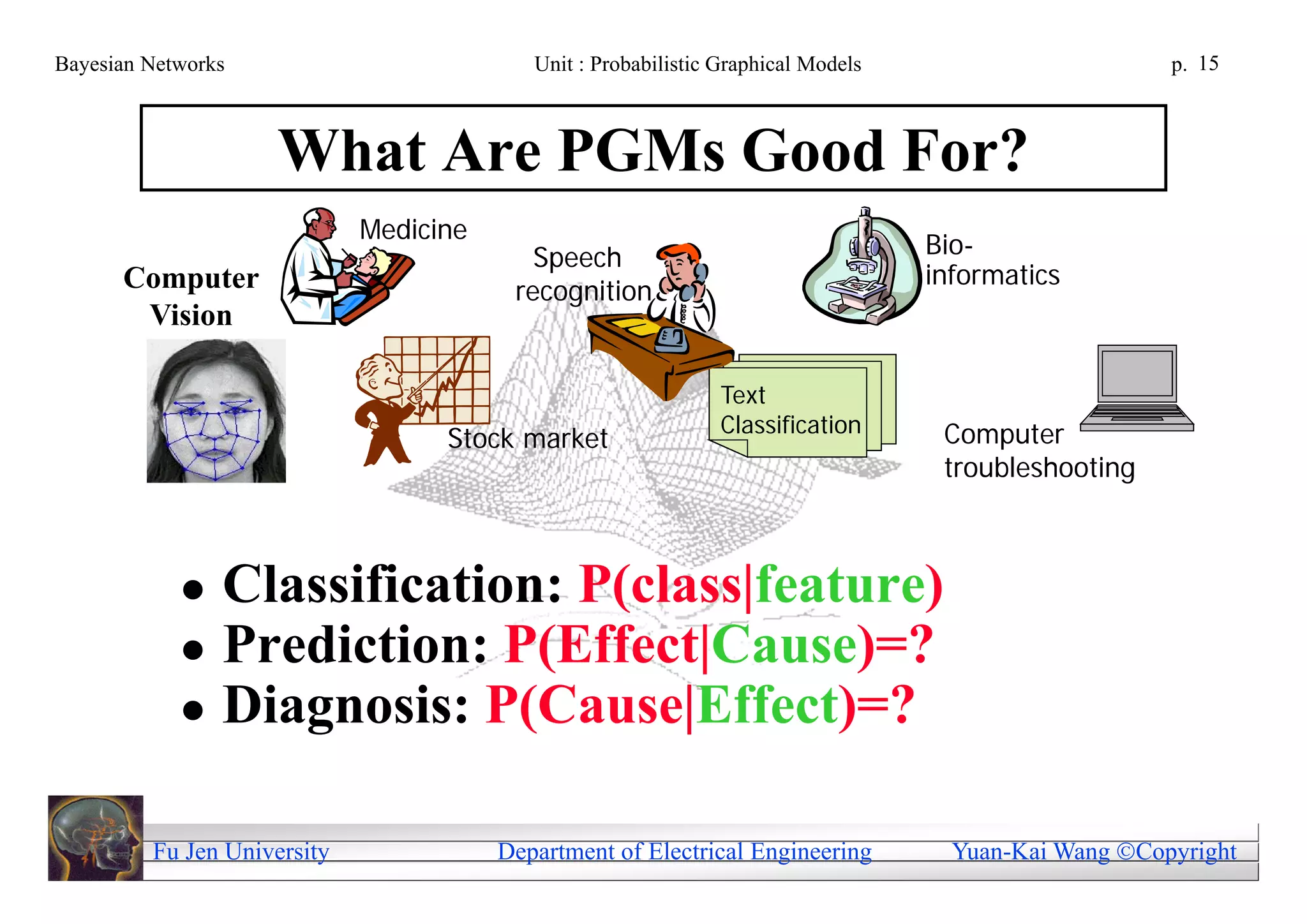

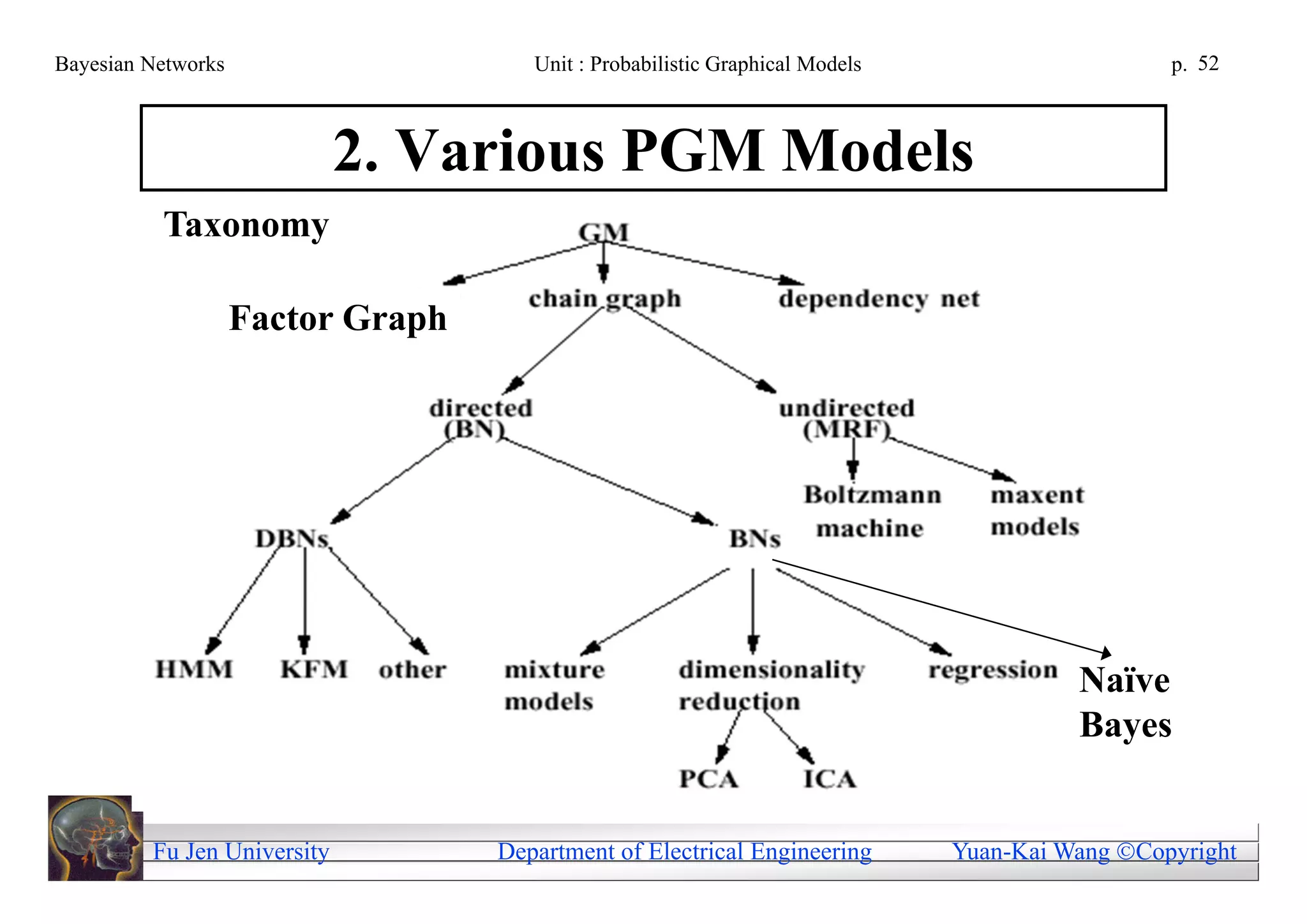

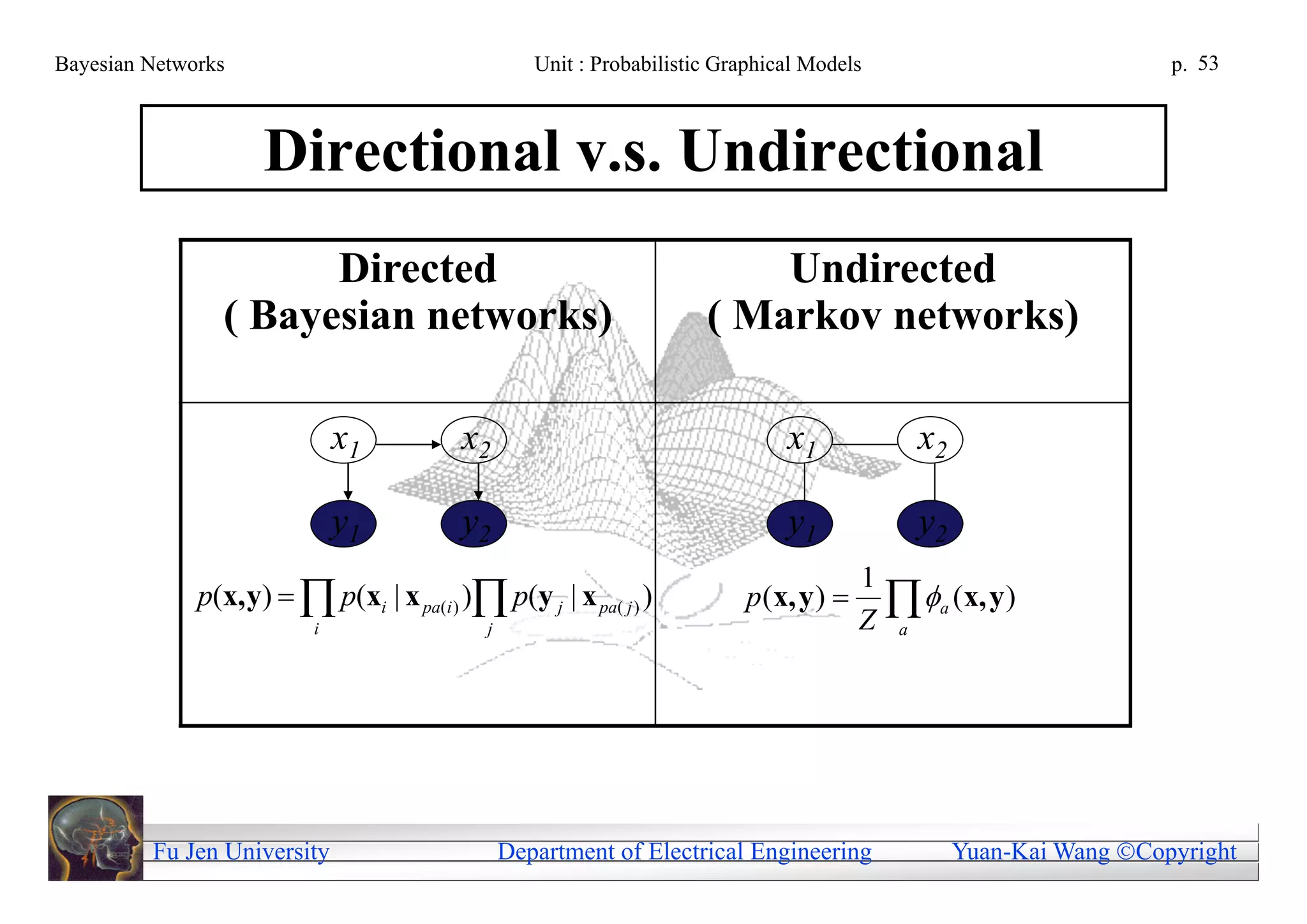

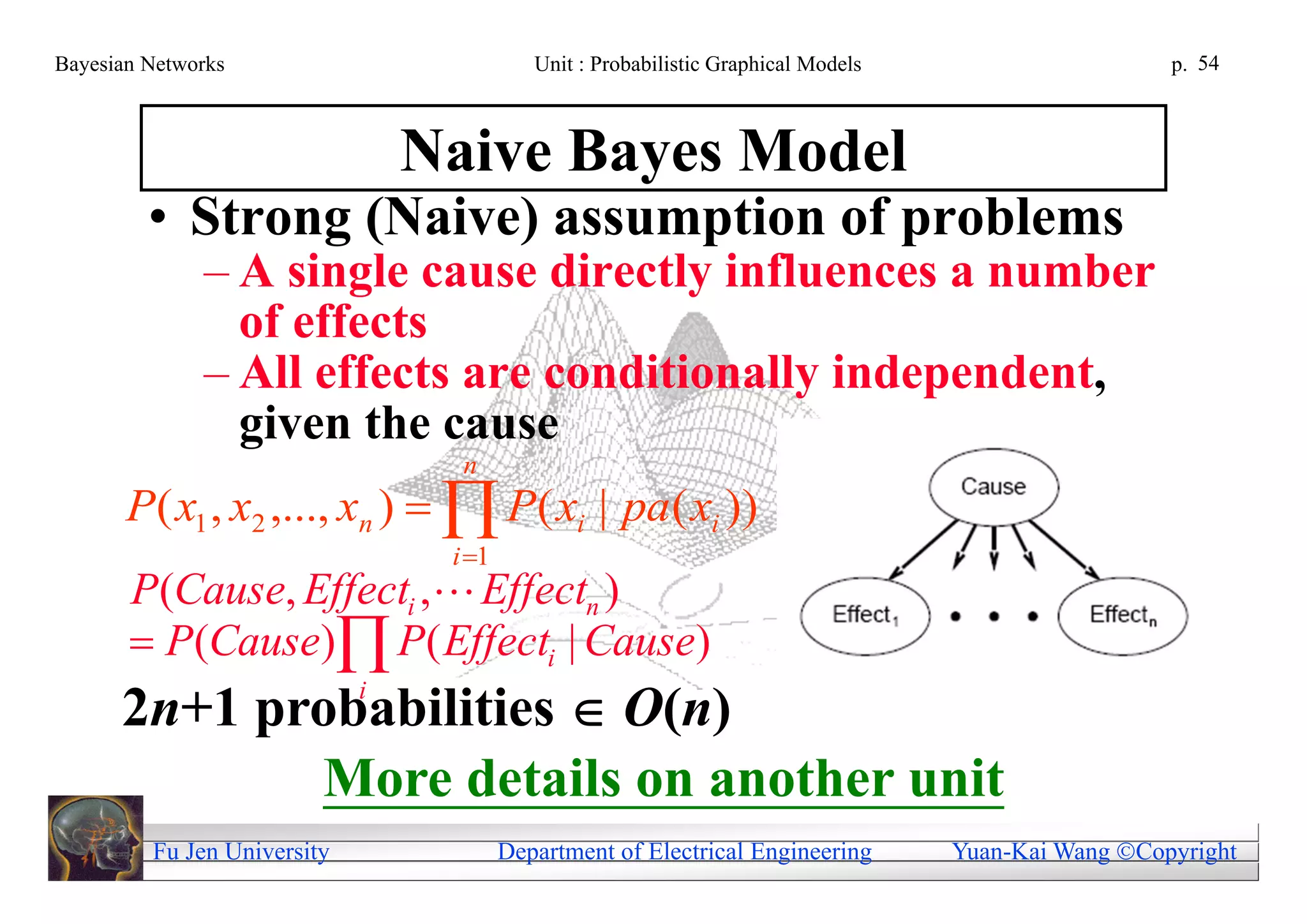

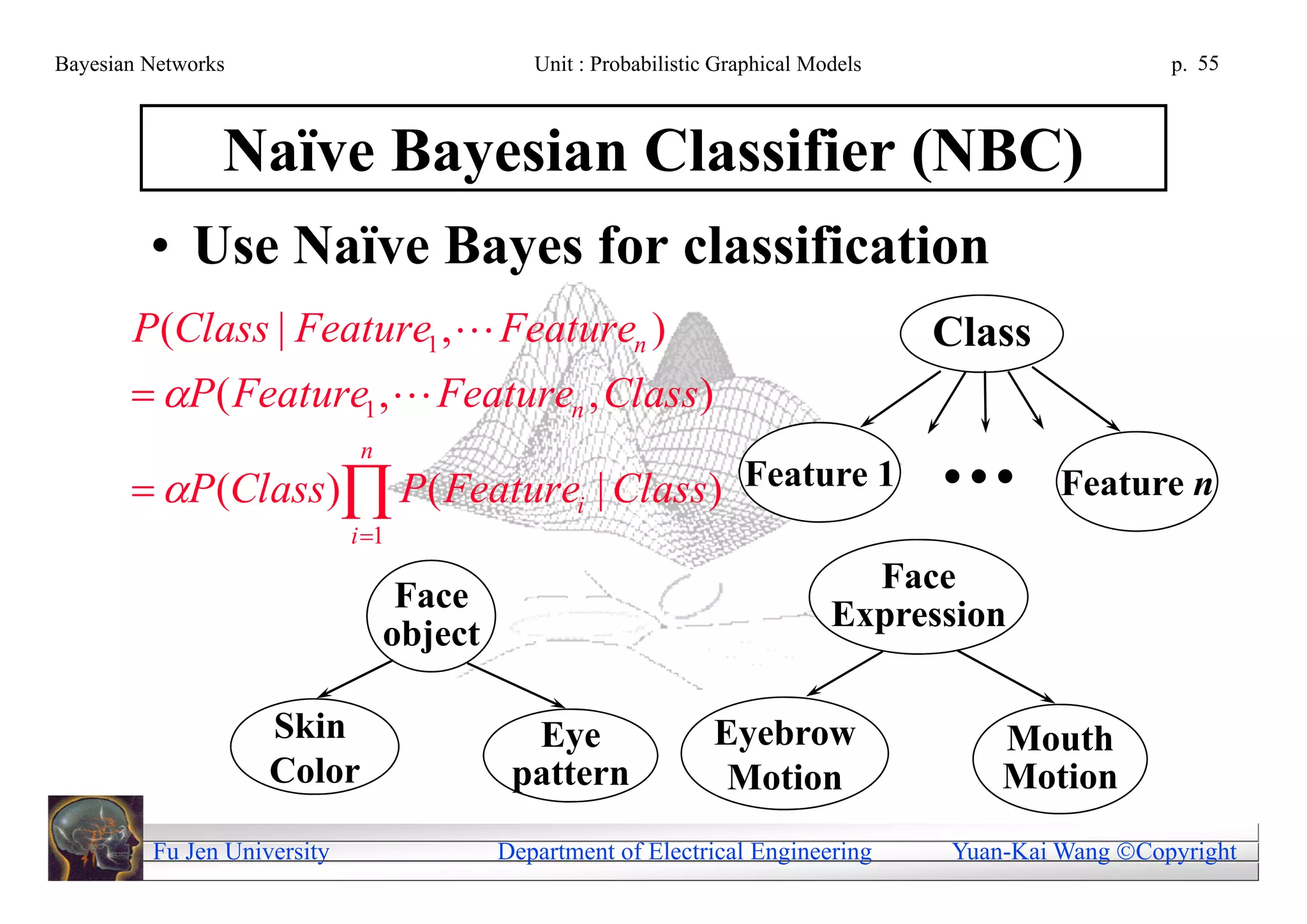

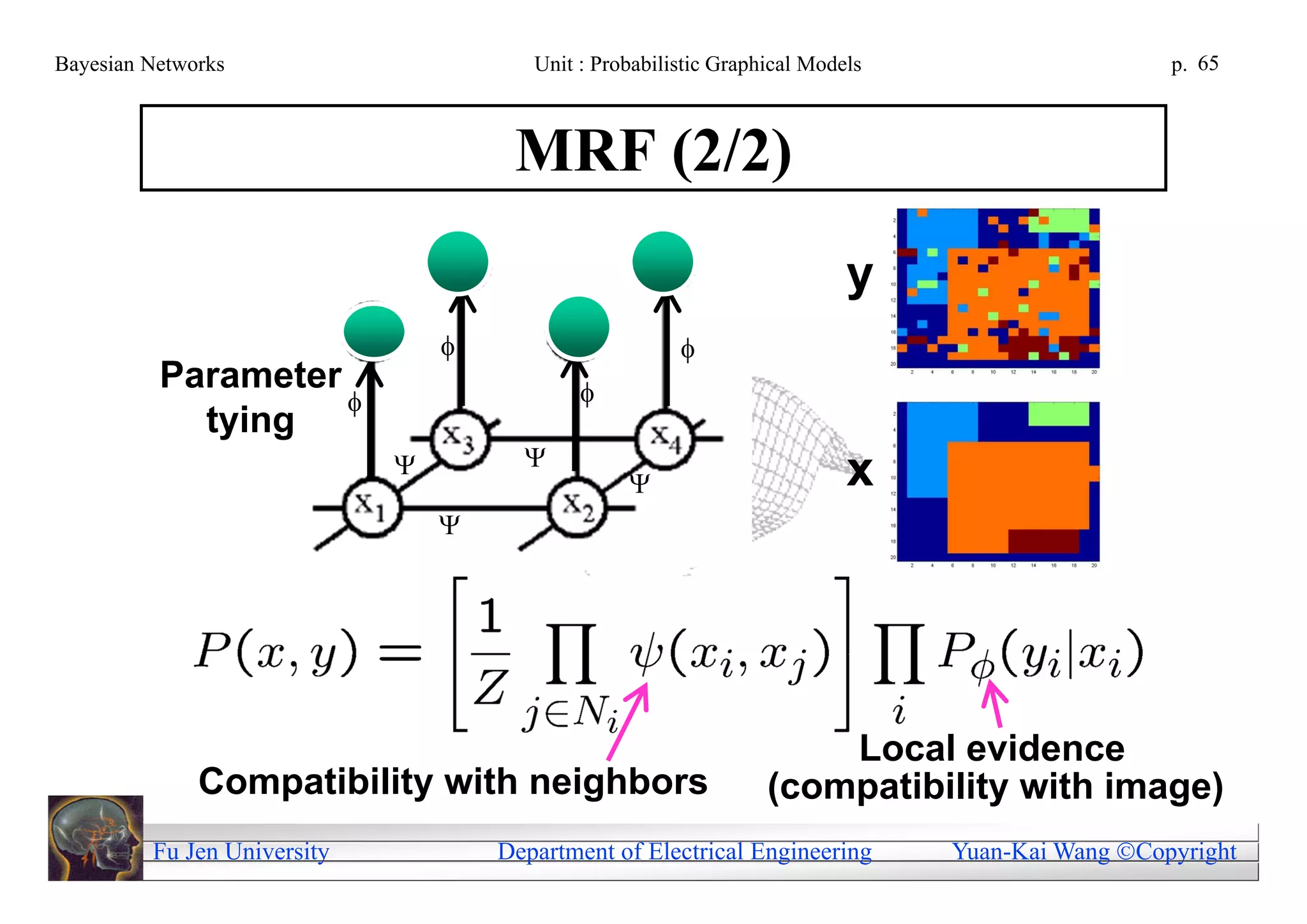

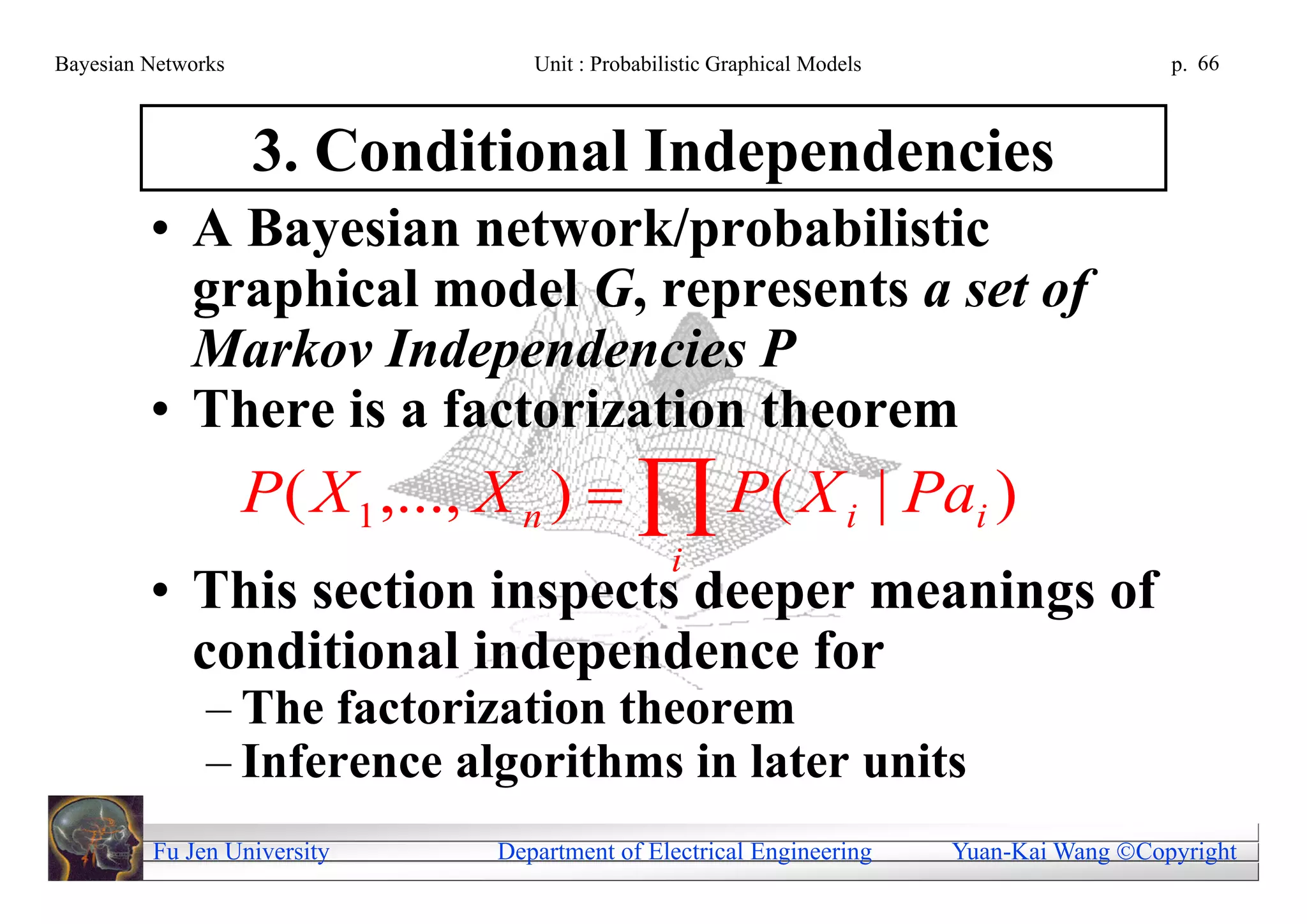

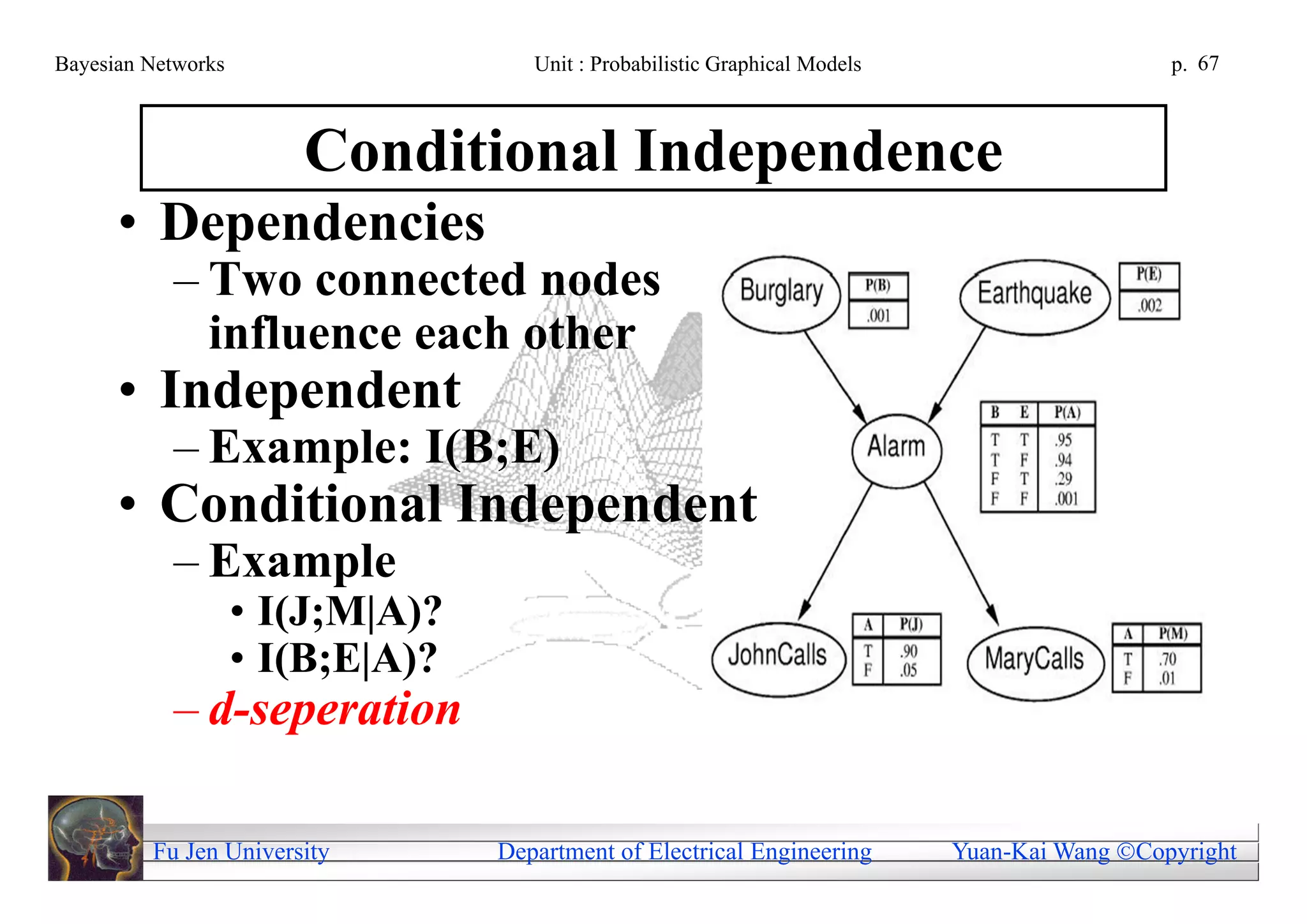

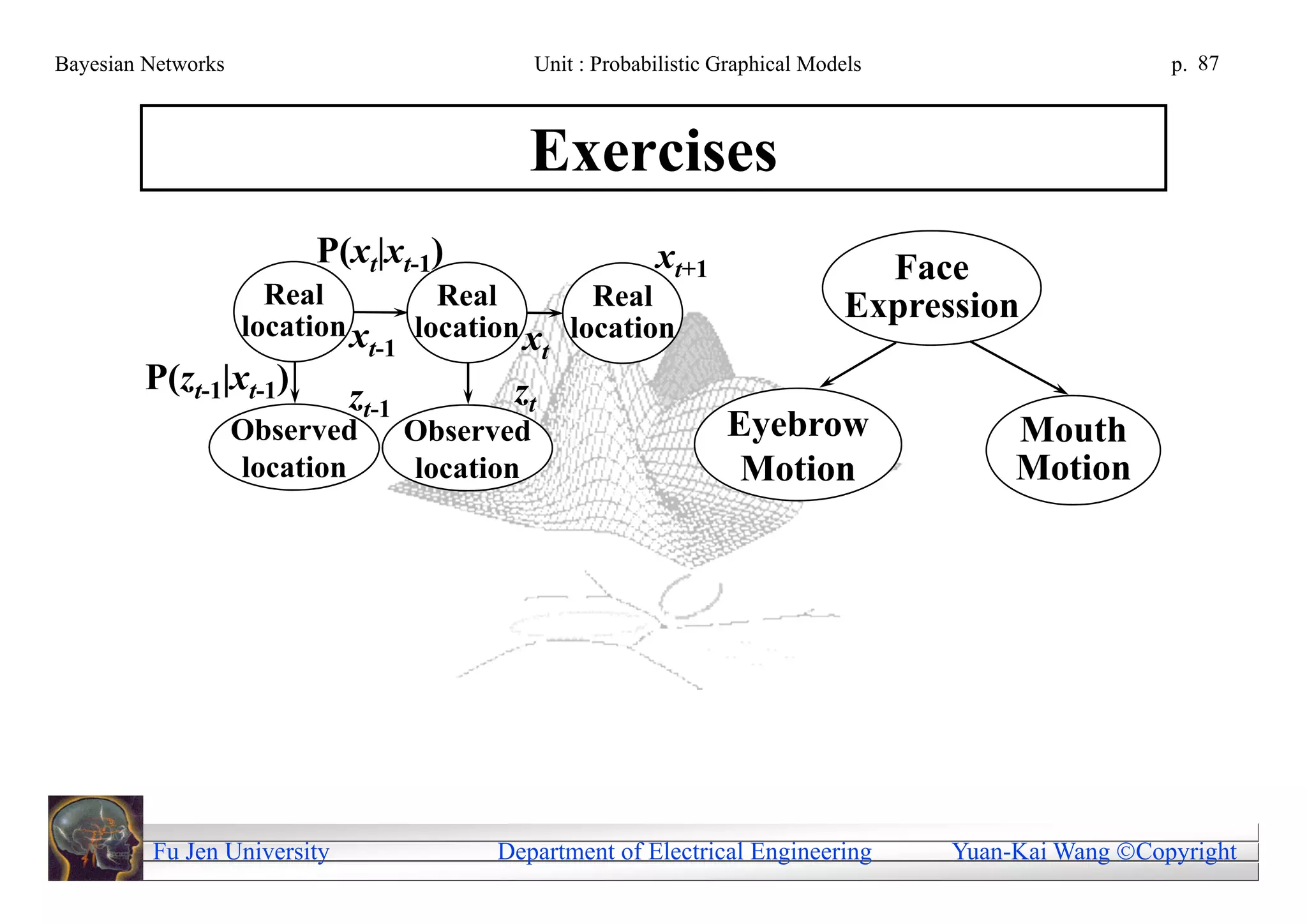

This document provides an overview of Bayesian networks and probabilistic graphical models (PGMs). It states two learning goals: building graphical models with graph theory, and performing inference under uncertainty with probability theory. It also lists example PGMs, including Markov random fields, hidden Markov models, dynamic Bayesian networks, and naive Bayes models, along with applications in computer vision. Finally, it provides a table of contents and references for further self-study on PGMs and Bayesian networks.

![Bayesian Networks, Unit: Probabilistic Graphical Models, p. 153](https://image.slidesharecdn.com/05-probabilisticgraphicalmodels-110420032755-phpapp02/75/05-probabilistic-graphical-models-153-2048.jpg)

References

• An introduction to Bayesian network theory and usage, T. A. Stephenson, IDIAP Research Report IDIAP-RR 00-03, Feb. 2000. [Available: http://www.rpi.edu/~liaow/file/Intro_BN.pdf]

• Bayesian network without tears, E. Charniak, AI Magazine, 1991. [Available: http://www.rpi.edu/~liaow/file/BNtears.pdf]

Fu Jen University, Department of Electrical Engineering, Yuan-Kai Wang. Copyright