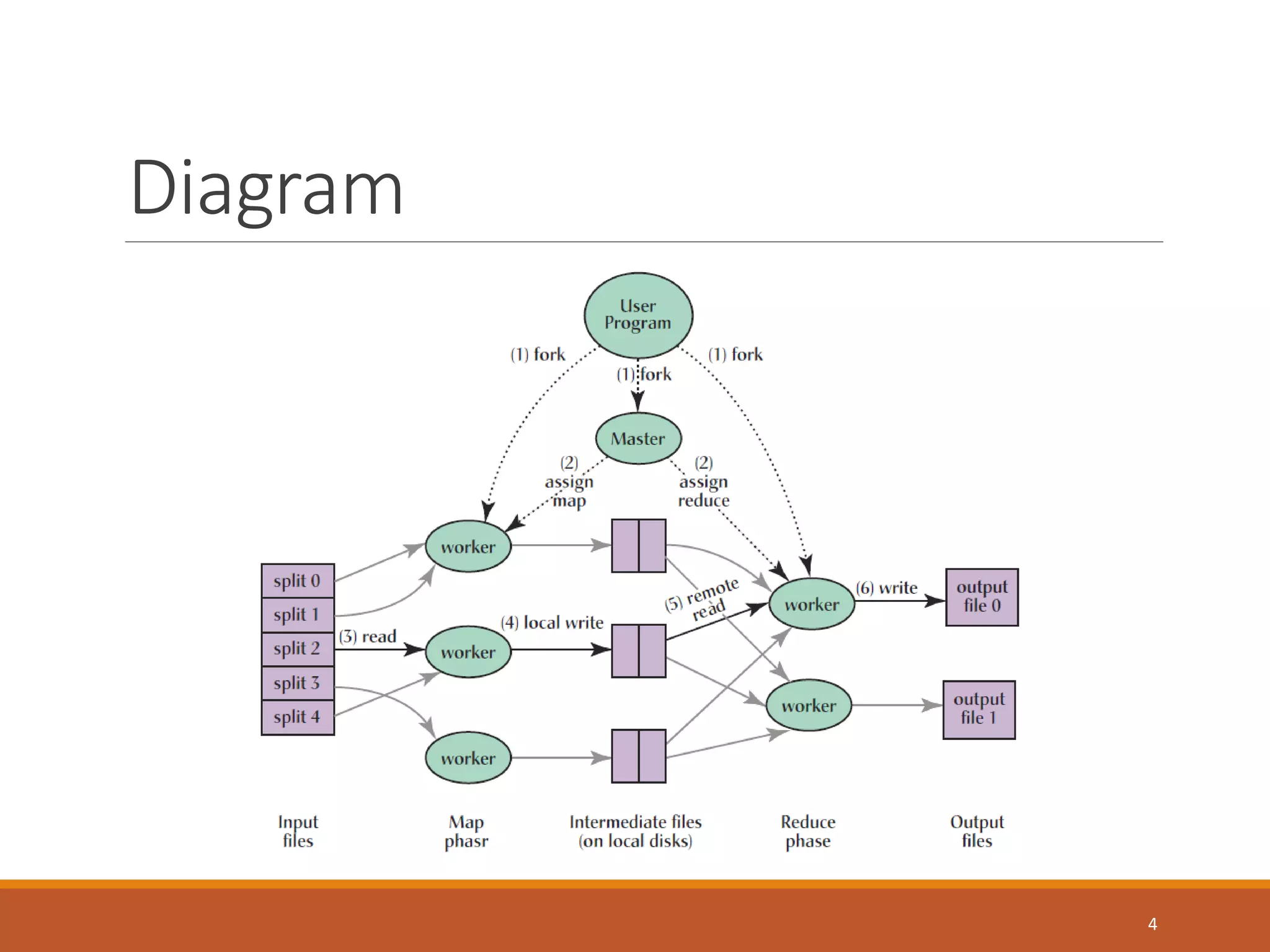

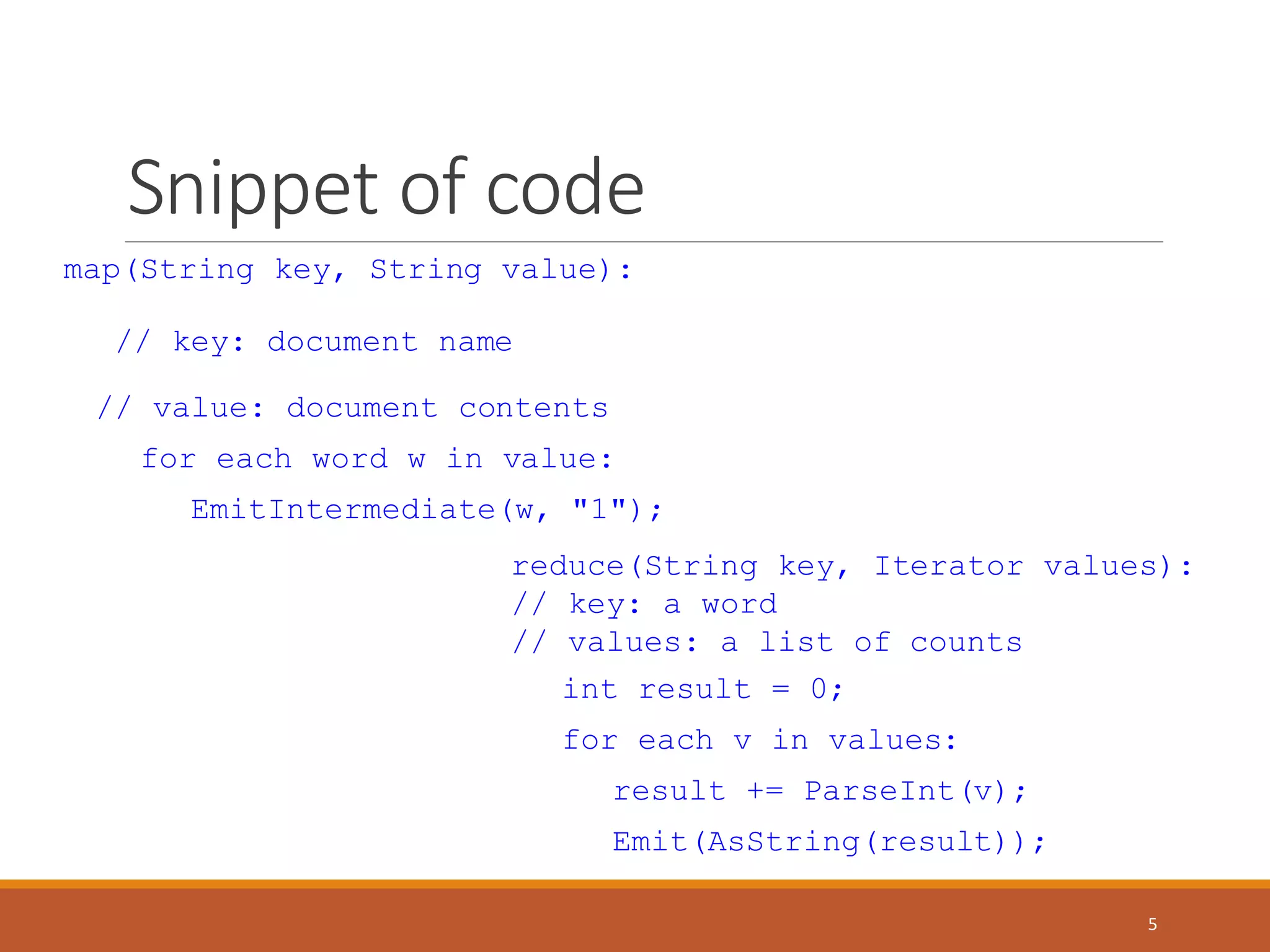

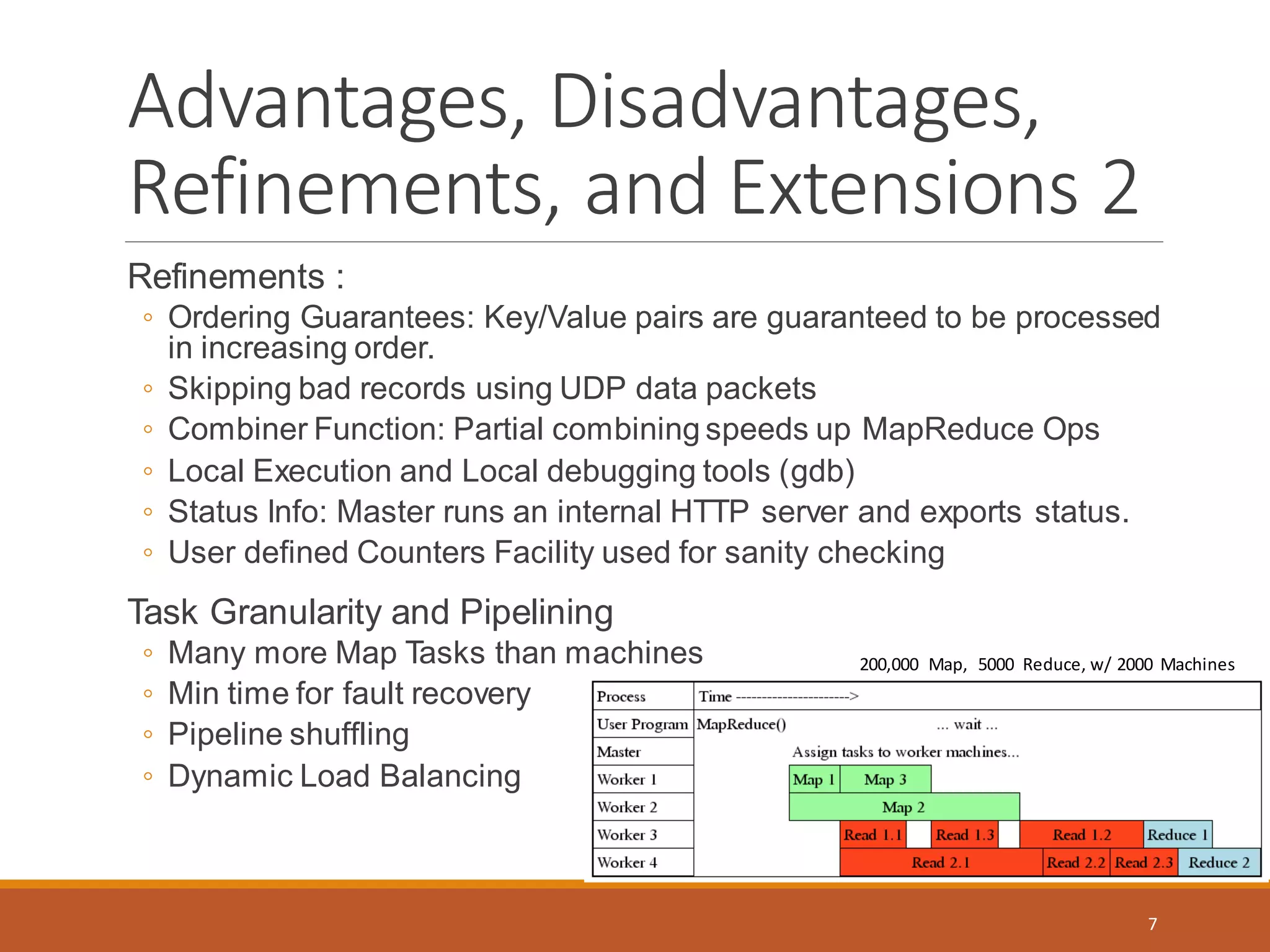

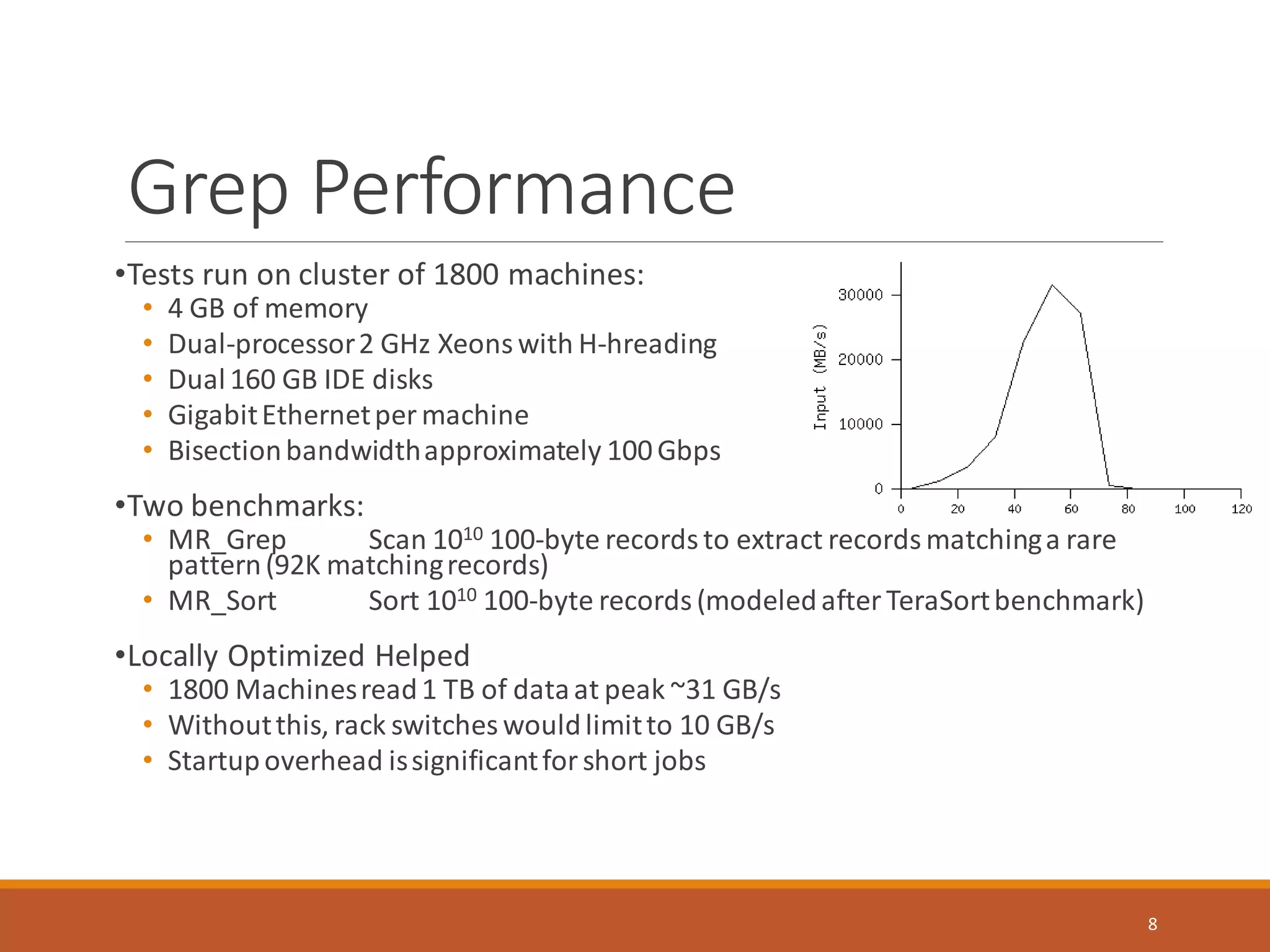

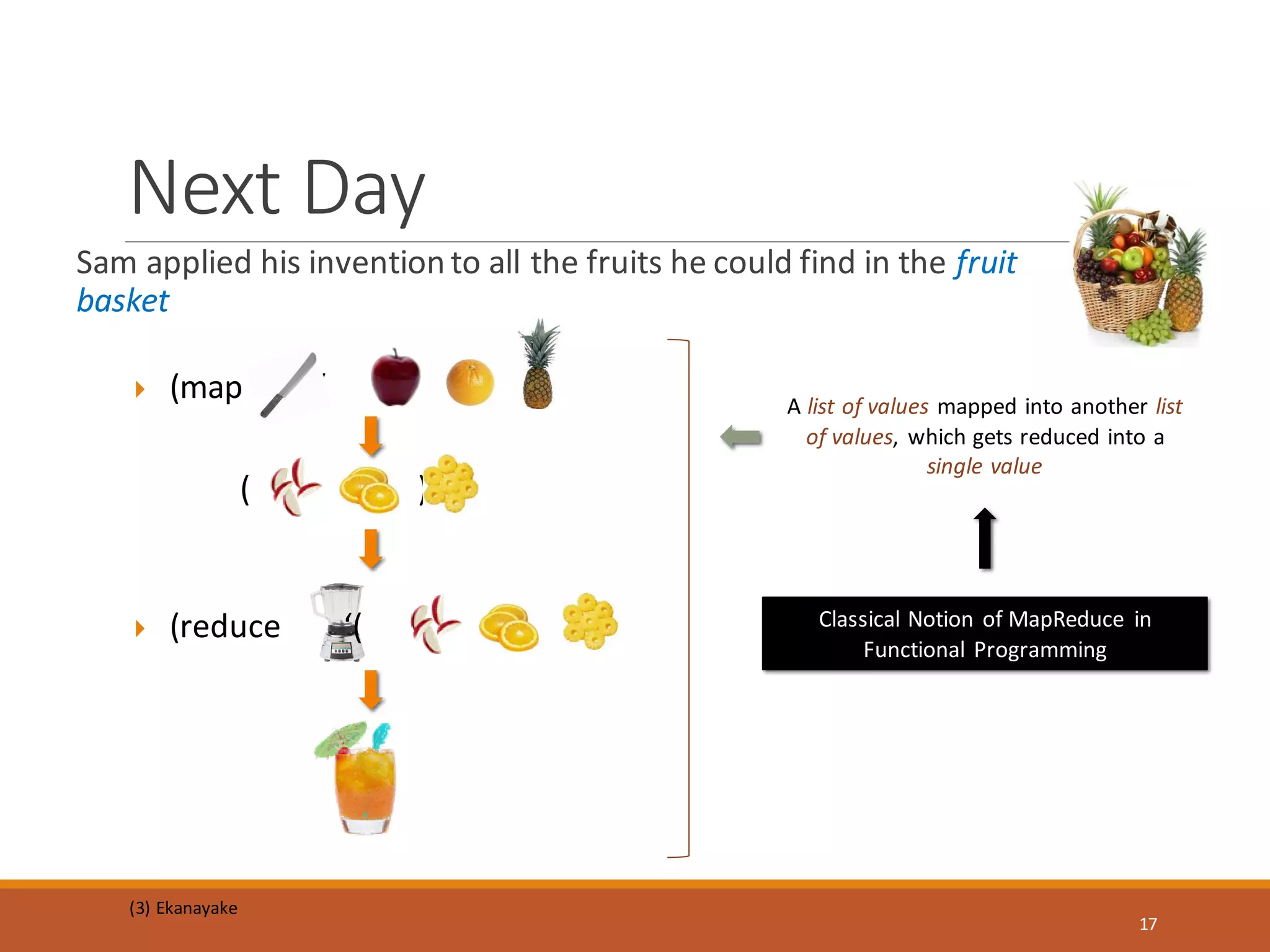

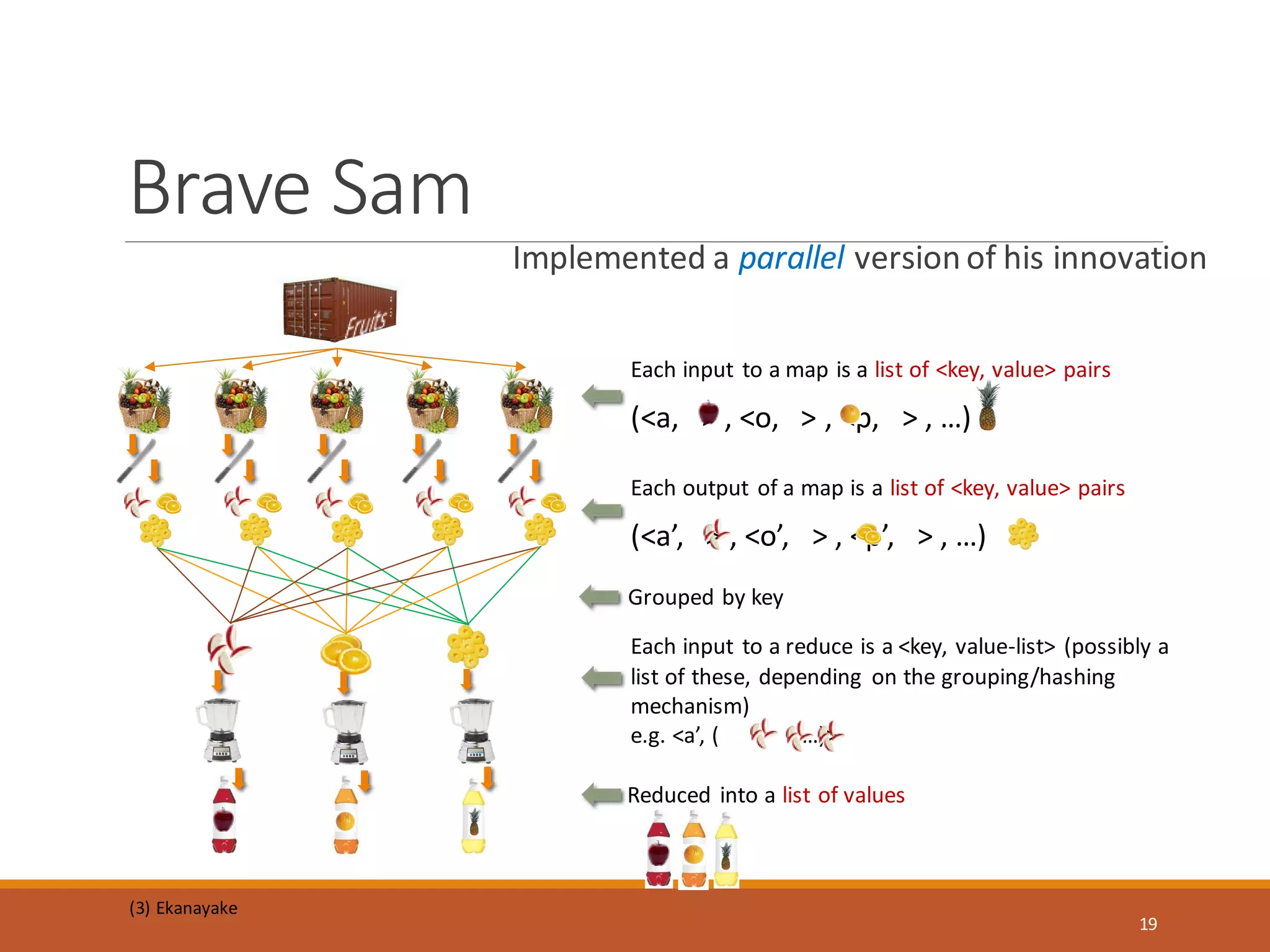

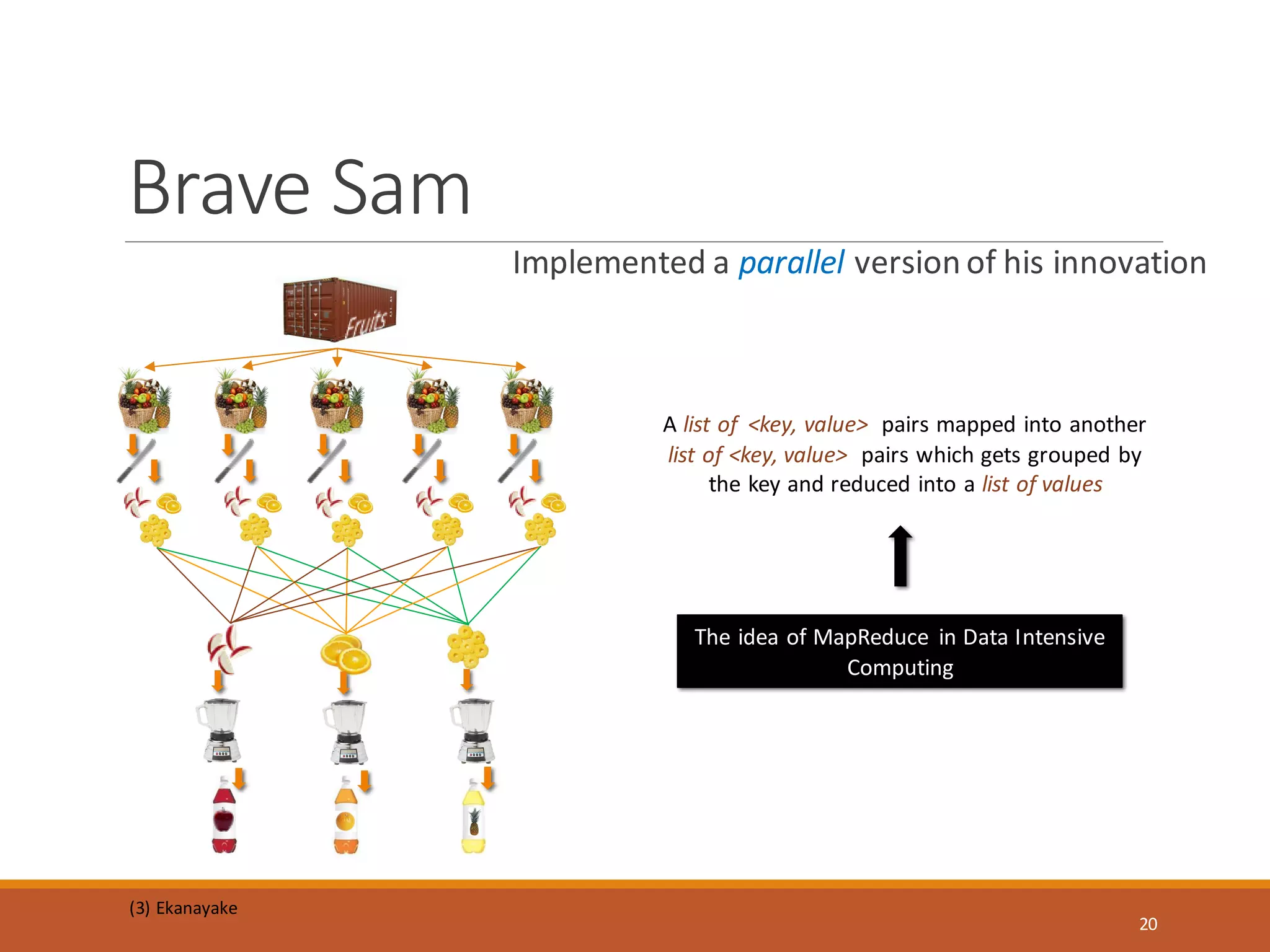

MapReduce is a programming model and software framework for processing large datasets in a distributed computing environment. It was originally developed at Google for processing web search data. The framework splits each job into many small tasks that run in parallel across large clusters of commodity servers, and it handles parallelization, scheduling, load balancing, and fault tolerance on the programmer's behalf. A MapReduce job consists of a map step, which processes input key-value pairs to generate intermediate key-value pairs, and a reduce step, which merges all intermediate values associated with the same intermediate key.
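The map and reduce steps described above can be illustrated with a small, single-process sketch of the classic word-count job. This is only a toy model of the data flow, not the distributed framework itself: the names `map_fn`, `reduce_fn`, and `run_mapreduce` and the sample documents are hypothetical, and the in-memory grouping stands in for the shuffle that a real cluster would perform across machines.

```python
from collections import defaultdict


def map_fn(doc_id, text):
    """Map step: emit one intermediate (word, 1) pair per word."""
    for word in text.lower().split():
        yield word, 1


def reduce_fn(word, counts):
    """Reduce step: merge all values that share one intermediate key."""
    return word, sum(counts)


def run_mapreduce(inputs, map_fn, reduce_fn):
    """Hypothetical single-process driver (assumption: everything fits in memory)."""
    # Group intermediate values by key; a real framework does this
    # across the cluster between the map and reduce phases.
    groups = defaultdict(list)
    for key, value in inputs:
        for inter_key, inter_value in map_fn(key, value):
            groups[inter_key].append(inter_value)
    # Apply the reduce function once per distinct intermediate key.
    return dict(reduce_fn(k, v) for k, v in sorted(groups.items()))


# Illustrative input: (document id, document text) pairs.
documents = [
    ("d1", "the quick brown fox"),
    ("d2", "the lazy dog the end"),
]
word_counts = run_mapreduce(documents, map_fn, reduce_fn)
```

Because each call to `map_fn` depends only on its own input pair, and each call to `reduce_fn` depends only on the values for one key, both phases can in principle be spread across many machines, which is the property the framework relies on.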