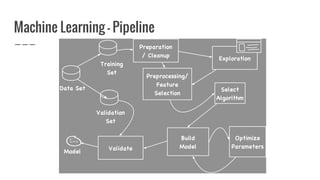

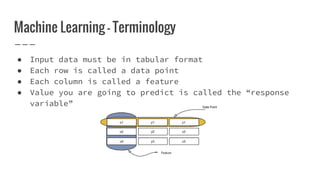

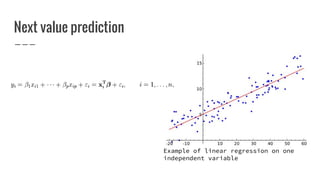

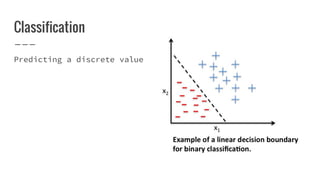

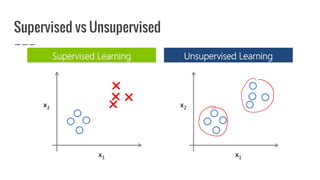

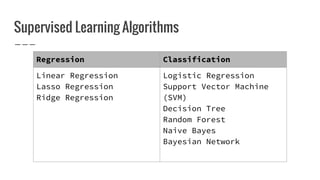

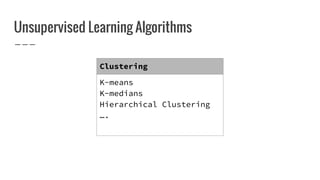

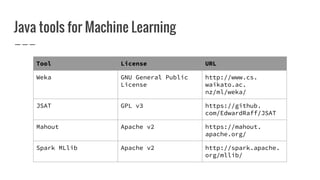

Nirmal Fernando, a technical lead at WSO2 and a graduate of the University of Moratuwa, discusses machine learning and predictive analytics. He explains that predictive analytics uses patterns in existing data to predict future outcomes, while machine learning gives computers the ability to learn without being explicitly programmed. He then demonstrates how to build a logistic regression model with Apache Spark MLlib that predicts whether individuals in the Pima Indians Diabetes dataset have diabetes.
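To make the modelling step more concrete, here is a dependency-free Python sketch of binary logistic regression trained by batch gradient descent on the log loss. It is not the talk's code and not Spark MLlib's API. MLlib ships its own distributed trainers, such as `LogisticRegressionWithLBFGS` in the RDD-based API and `LogisticRegression` in `spark.ml`. The helper names, the learning rate, the epoch count, and the six two-feature rows are all hypothetical. The rows are synthetic toy values standing in for standardized Pima attributes such as glucose and BMI, not records from the dataset.

```python
import math

def sigmoid(z: float) -> float:
    """Logistic function: maps a real-valued score to a probability in (0, 1)."""
    # Branch on the sign of z so math.exp never overflows for large |z|.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def train_logistic_regression(X, y, lr=0.1, epochs=1000):
    """Fit weights w and bias b by batch gradient descent on the average log loss.

    Hypothetical helper for illustration; lr and epochs are assumed defaults.
    """
    n_features = len(X[0])
    m = len(X)
    w = [0.0] * n_features
    b = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            p = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b)
            err = p - yi  # derivative of the log loss w.r.t. the linear score
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        # Step against the averaged gradient.
        w = [wj - lr * gj / m for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / m
    return w, b

def predict(w, b, x, threshold=0.5):
    """Return 1 (predicted diabetic) if P(y=1 | x) >= threshold, else 0."""
    p = sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)
    return 1 if p >= threshold else 0

# Synthetic toy rows (hypothetical): [standardized glucose, standardized BMI].
X = [[-1.0, -0.5], [-0.8, -1.2], [-1.5, -0.3],
     [1.2, 0.8], [0.9, 1.5], [1.4, 0.6]]
y = [0, 0, 0, 1, 1, 1]  # 1 = diabetic, 0 = not diabetic

w, b = train_logistic_regression(X, y)
print("weights:", w, "bias:", b)
print("training predictions:", [predict(w, b, x) for x in X])
```

The model outputs a probability through the sigmoid of a weighted sum of the features, and the 0.5 threshold turns that probability into a yes/no diabetes prediction. On real data you would train on one split and measure accuracy or AUC on a held-out split. A distributed library like MLlib handles the same optimization over data partitioned across a cluster.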