This document discusses whether Spark is the right choice for data analysis. It provides an overview of Spark and compares it to other tools. Some key points:

- Spark can be used to train machine learning models on large datasets, for example to detect fraud or recommend products. It is well suited to iterative algorithms, which make many passes over the same data.

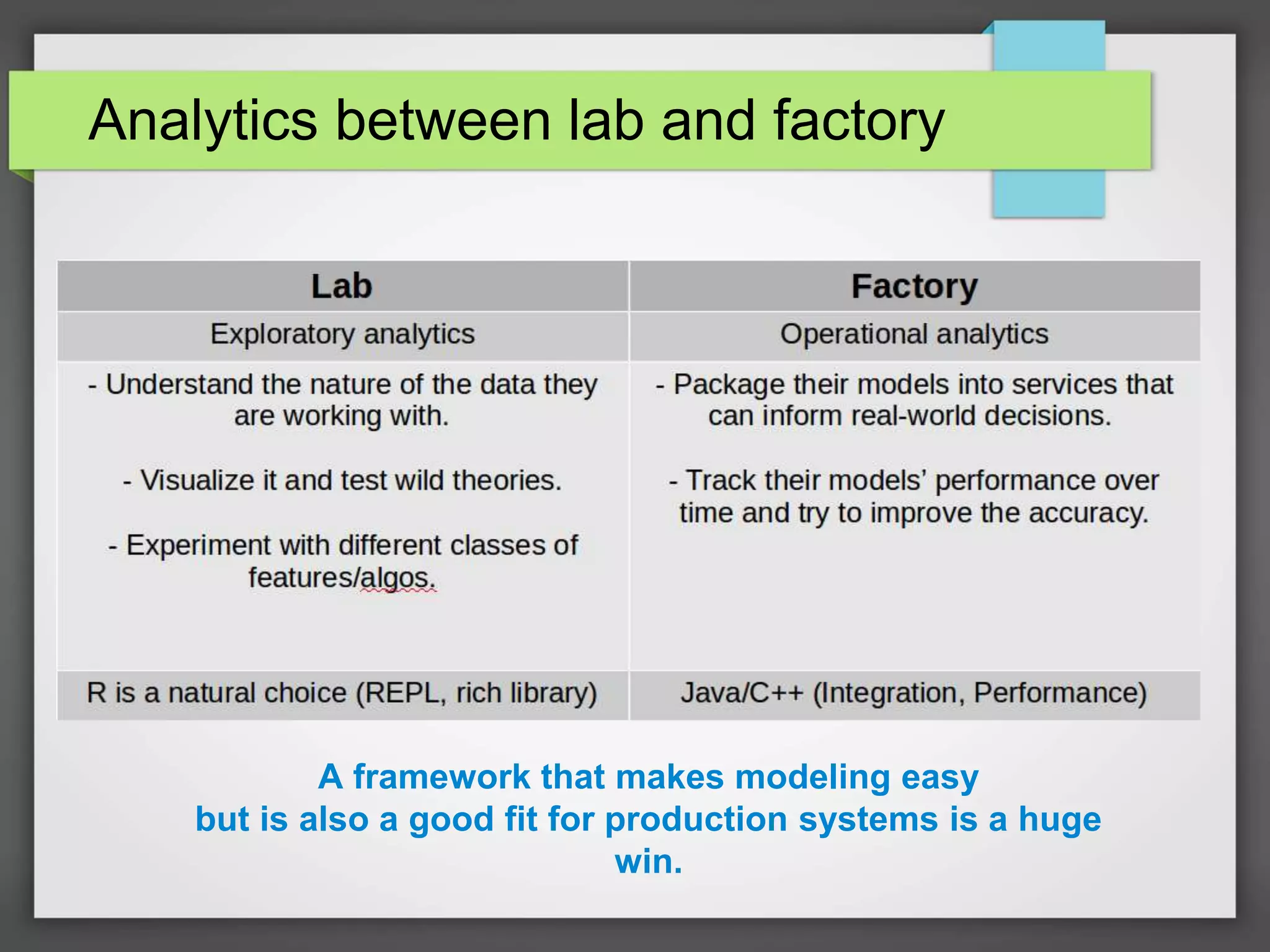
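The first point above says Spark suits iterative algorithms. Such algorithms scan the same dataset on every iteration, so keeping it partitioned in memory (in Spark, by calling `cache()` on an RDD or DataFrame) avoids re-reading it from storage on each pass. The sketch below models that pattern in plain Python rather than in Spark itself. A list of in-memory lists stands in for a cached, partitioned dataset. Logistic regression trained by batch gradient descent computes a partial gradient per partition (the "map" step) and sums those partial gradients (the "reduce" step). The toy data, function names, and hyperparameters are illustrative assumptions only.

```python
import math
import random
from functools import reduce


def make_partitions(n_points, n_partitions, seed=0):
    """Hypothetical stand-in for a cached, partitioned dataset:
    in-memory lists of (features, label) pairs."""
    rng = random.Random(seed)
    points = []
    for _ in range(n_points):
        x = [1.0, rng.uniform(-1.0, 1.0)]   # bias term + one feature
        label = 1.0 if x[1] > 0 else 0.0    # toy, linearly separable labels
        points.append((x, label))
    # Round-robin split into partitions, loosely like a distributed dataset.
    return [points[i::n_partitions] for i in range(n_partitions)]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def partial_gradient(partition, w):
    """'Map' step: log-loss gradient summed over one partition."""
    grad = [0.0] * len(w)
    for x, y in partition:
        err = sigmoid(sum(wi * xi for wi, xi in zip(w, x))) - y
        for j, xj in enumerate(x):
            grad[j] += err * xj
    return grad


def add_vectors(a, b):
    """'Reduce' step: element-wise sum of two partial gradients."""
    return [ai + bi for ai, bi in zip(a, b)]


def train(partitions, iterations=100, lr=0.5):
    n = sum(len(p) for p in partitions)
    w = [0.0, 0.0]
    for _ in range(iterations):
        # Every iteration re-reads every partition; because the partitions
        # stay in memory, no data is reloaded between passes.
        grad = reduce(add_vectors, (partial_gradient(p, w) for p in partitions))
        w = [wi - lr * gi / n for wi, gi in zip(w, grad)]
    return w


def accuracy(partitions, w):
    correct = total = 0
    for part in partitions:
        for x, y in part:
            pred = 1.0 if sum(wi * xi for wi, xi in zip(w, x)) > 0 else 0.0
            correct += pred == y
            total += 1
    return correct / total


if __name__ == "__main__":
    parts = make_partitions(1000, 4)
    weights = train(parts)
    print("weights:", weights, "accuracy:", accuracy(parts, weights))
```

Because the per-partition gradients are simply summed, the result matches a gradient over the whole dataset. That is what lets this kind of algorithm be split across machines. The cost of an iteration is then dominated by scanning the data, which is why in-memory caching matters for iterative workloads.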

- Single-machine tools such as R and Python work well for small datasets, but they do not scale beyond what one machine can handle. Spark addresses this by distributing the work across a cluster and running it efficiently through in-memory processing and an optimized execution engine.

- Spark provides a programming model that makes parallel code easier to write and encourages sound design choices for distributed systems. Compared with other frameworks, this narrows the gap between research prototypes and production systems.

- While still maturing, Spark