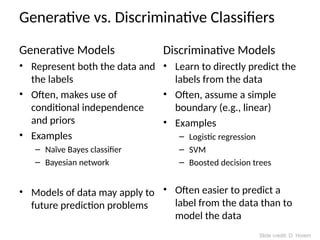

The document provides an overview of various clustering strategies, machine learning frameworks, and classifier types, emphasizing the importance of model generalization and the impact of training size on prediction accuracy. It discusses different classification methods such as nearest neighbor, linear classifiers, and support vector machines, while also addressing the bias-variance tradeoff and the need for appropriate feature representation. Additionally, it touches on the process of selecting kernels for image classification with SVMs and highlights the pros and cons of different classifiers.