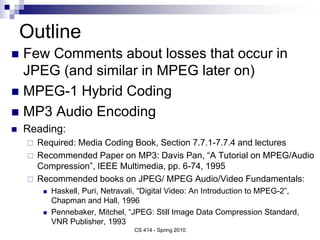

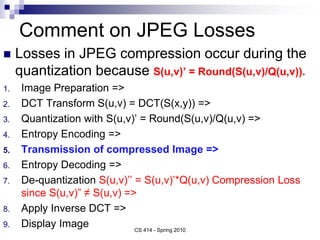

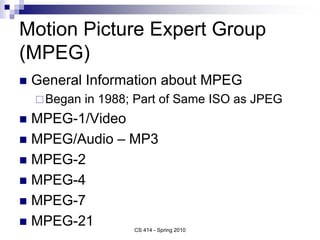

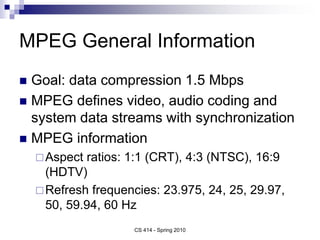

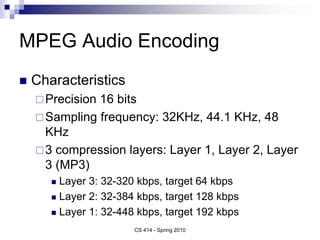

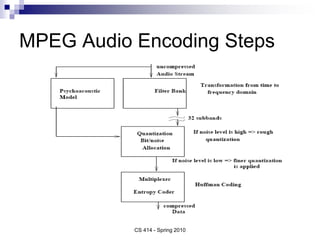

This document summarizes key points from a lecture on MPEG-1 video and MP3 audio encoding. It covers the losses JPEG incurs through quantization and introduces MPEG hybrid coding. For video, it describes the MPEG encoding steps: I, P, and B frames, motion estimation, quantization, and decoding order. For audio, it summarizes the MP3 encoding steps (filter banks, psychoacoustic modeling, and bit allocation) and the MP3 audio format.

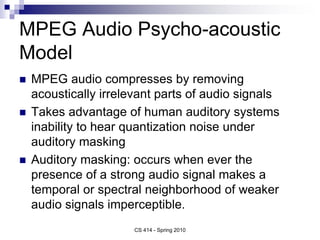

![MPEG Audio Filter Bank

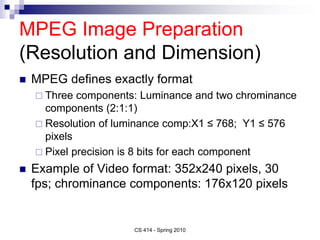

Filter bank divides input into multiple sub-bands

(32 equal frequency sub-bands)

Sub-band i defined

- filter output sample for sub-band

i at time t, C[n] – one of 512 coefficients, x[n] –

audio input sample from 512 sample buffer

CS 414 - Spring 2010

]

64

[

*

]

64

[

(

*

64

)

16

)(

1

2

(

cos(

3

]

[

7

0

7

0

j

k

x

j

k

C

k

i

i

S

j

k

t

]

[

],

31

,

0

[ i

S

i t

](https://image.slidesharecdn.com/lect10-mpeg1-230130154320-12c6af5c/85/lect10-mpeg1-ppt-24-320.jpg)
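To make the filter bank sum concrete, here is a minimal Python sketch that evaluates it directly: each of the 512 buffered samples is multiplied by its coefficient, the products are folded into 64 partial sums, and a cosine term of the form cos((2i+1)(k-16)π/64) combines them into one output per sub-band. The function name `subband_samples` and the all-ones coefficient list in the demo are assumptions made for illustration; the real 512 coefficients come from a table in the MPEG audio standard and are not reproduced here.

```python
import math

def subband_samples(x, C):
    """Return the 32 sub-band outputs S_t[i] for one 512-sample buffer.

    x: 512 audio input samples (the current buffer)
    C: 512 window coefficients (supplied by the caller)
    """
    if len(x) != 512 or len(C) != 512:
        raise ValueError("x and C must each hold 512 values")

    # Inner sum over j: fold the windowed buffer into 64 partial sums,
    # z[k] = sum_{j=0..7} C[k + 64j] * x[k + 64j]
    z = [sum(C[k + 64 * j] * x[k + 64 * j] for j in range(8))
         for k in range(64)]

    # Outer sum over k: cosine modulation, one output per sub-band i
    return [
        sum(math.cos((2 * i + 1) * (k - 16) * math.pi / 64) * z[k]
            for k in range(64))
        for i in range(32)
    ]

# Demo with placeholder data (NOT the standard's coefficient table):
# an impulse at n = 16 makes k - 16 = 0, so every cosine term is cos(0) = 1.
placeholder_C = [1.0] * 512
impulse = [0.0] * 512
impulse[16] = 1.0
demo = subband_samples(impulse, placeholder_C)
```

Splitting the double sum into a fold step and a modulation step avoids recomputing the windowed products for every sub-band; it produces the same values as evaluating the double sum term by term.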