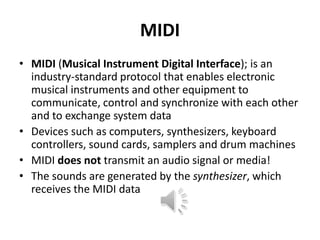

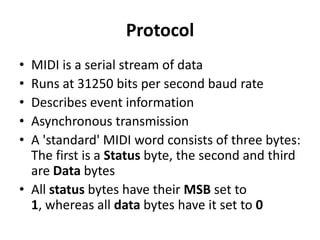

The document provides an overview of MIDI (Musical Instrument Digital Interface), detailing its history, specifications, message types, and applications in music technology. It explains how MIDI allows electronic instruments to communicate and highlights various message types including channel and system messages, along with MIDI controller examples. Additionally, it addresses composition methods, MIDI synthesizers, sequencers, samplers, and non-musical applications of MIDI technology.

![Message Name

Byte 1

Byte 2

Byte 3

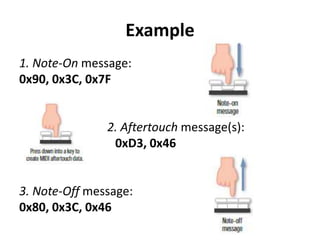

Example

Channel Messages

1000 cccc

The

MIDI

key

The velocity specifies

specifies the number

83 3D 79 turns note 3D (decimal 61) on channel 3 off

how quickly the noteof the key or note to

with a velocity of 79 (decimal 121).

release is affected.

release.

Note On

1001 cccc

The

MIDI

key

specifies the number

of the key or note to

turn on.

The velocity specifies

how

quickly

or 94 3D 79 turns note 3D (decimal 61) on channel 4 on

forcefully the note is with a velocity of 79 (decimal 121).

struck.

Polyphonic

Aftertouch

1010 cccc

The

MIDI

number.

The key pressure value.

Control Change

1011 cccc

The controller number The controller

[0 - 119]

[0 - 127]

Program

Change

1100cccc

The new program

-- n/a -(patch) number.

C3 44 changes the program number for MIDI channel

3 to 44 (decimal 68).

1101 cccc

The single greatest

pressure value of all -- n/a -depressed keys.

D3 44 changes the channel-pressure for MIDI

channel 3 to 44 (decimal 68).

Note Off

Channel

Pressure

(Aftertouch)

Pitch

Change

Bend)

Wheel

(Pitch 1110 cccc

key

A0 3D 5A changes the pressure for note 3D (decimal

61) on channel 0 to a value of 5A (decimal 90).

value B3 10 7F sets the value of controller number 10

(decimal 16) to 7F (decimal 127).

Least significant 7Most significant 7-bits E1 70 37 sets the pitch-bend for channel 1 to a value

bits of pitch-bend

of pitch-bend value.

of 03F8 (decimal 1016).

value.](https://image.slidesharecdn.com/midifinal-140221042854-phpapp01/85/The-MIDI-Protocol-Musical-Instrument-Digital-Interface-12-320.jpg)
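
The channel-message layout above (a status byte of the form `1sss cccc` followed by one or two 7-bit data bytes) can be illustrated with a small decoder. This is a minimal sketch, not a complete MIDI parser: the names `ChannelMessage`, `decode_channel_message`, and `pitch_bend_value` are hypothetical, the channel is reported as the raw `cccc` nibble (as in the examples above), and running status and system messages are assumed to be out of scope.

```python
from typing import NamedTuple, Optional

# Upper nibble of the status byte -> (message name, number of data bytes).
CHANNEL_MESSAGES = {
    0x8: ("Note Off", 2),
    0x9: ("Note On", 2),
    0xA: ("Polyphonic Aftertouch", 2),
    0xB: ("Control Change", 2),
    0xC: ("Program Change", 1),
    0xD: ("Channel Pressure", 1),
    0xE: ("Pitch Bend", 2),
}


class ChannelMessage(NamedTuple):
    """Hypothetical container for one decoded channel message."""
    name: str
    channel: int           # raw value of the cccc nibble, 0-15
    data1: int             # Byte 2
    data2: Optional[int]   # Byte 3, or None for one-data-byte messages


def decode_channel_message(raw: bytes) -> ChannelMessage:
    """Decode one complete channel message (status byte plus data bytes)."""
    if not raw:
        raise ValueError("empty message")
    status = raw[0]
    kind = status >> 4          # upper nibble selects the message type
    if kind not in CHANNEL_MESSAGES:
        raise ValueError(f"0x{status:02X} is not a channel-message status byte")
    name, n_data = CHANNEL_MESSAGES[kind]
    data = raw[1:]
    # Data bytes always have their top bit clear, so they must be <= 0x7F.
    if len(data) != n_data or any(b > 0x7F for b in data):
        raise ValueError(f"{name} expects {n_data} data byte(s) in the range 0-127")
    second = data[1] if n_data == 2 else None
    return ChannelMessage(name, status & 0x0F, data[0], second)


def pitch_bend_value(msg: ChannelMessage) -> int:
    """Combine the LSB (Byte 2) and MSB (Byte 3) into the 14-bit bend value."""
    if msg.name != "Pitch Bend" or msg.data2 is None:
        raise ValueError("not a pitch-bend message")
    return (msg.data2 << 7) | msg.data1


if __name__ == "__main__":
    print(decode_channel_message(bytes.fromhex("943D79")))
    print(hex(pitch_bend_value(decode_channel_message(bytes.fromhex("E17037")))))
```

For the pitch-bend bytes `E1 70 37`, the combination is (0x37 << 7) | 0x70, which gives 0x1BF0 (decimal 7152). The most significant data byte is shifted by 7 rather than 8 because each data byte carries only 7 usable bits.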