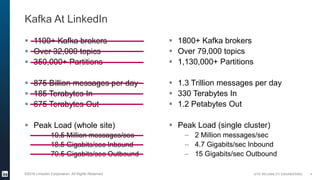

The document discusses the performance and operational aspects of Kafka at LinkedIn, detailing the scale and architecture of their Kafka deployment, which includes over 1,800 brokers and processes trillions of messages daily. It covers various topics such as hardware selection, cluster monitoring, performance problem triaging, and administrative improvements for managing Kafka effectively. The conclusion emphasizes collaboration between operations and development to ensure optimal performance and reliability of the Kafka ecosystem.